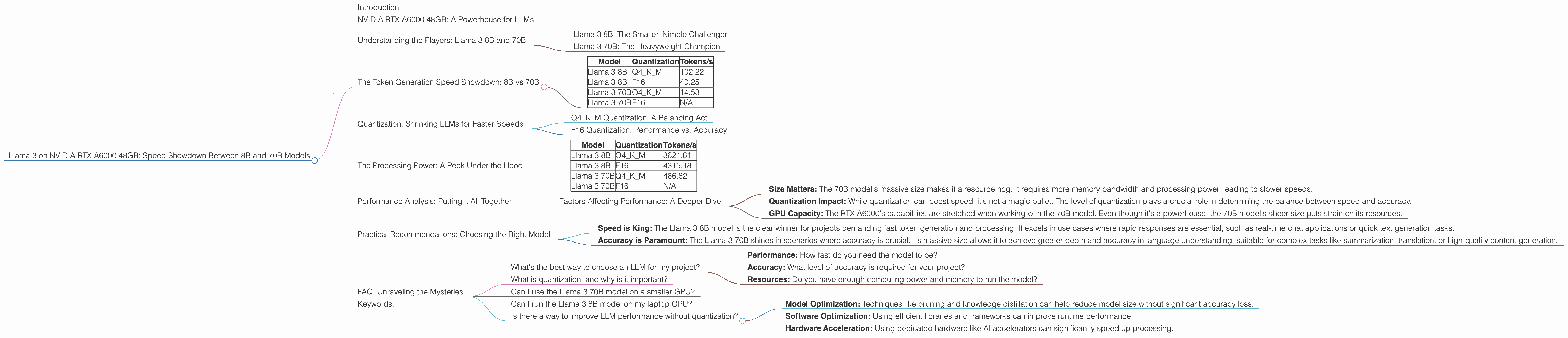

Which is Faster on NVIDIA RTX A6000 48GB: Llama3 8B or Llama3 70B? Token Speed Generation Comparison

Introduction

The world of large language models (LLMs) is exploding, and developers are constantly seeking ways to push the boundaries of what's possible. One of the biggest challenges is running these models locally, especially the larger ones. This article dives deep into the performance of two Llama 3 models – 8B and 70B – on a powerful NVIDIA RTX A6000 48GB GPU, analyzing their token generation speeds and exploring the impact of quantization. We'll dissect the performance differences and help you decide which model is the right fit for your needs.

Imagine trying to fit a giant jigsaw puzzle into a small box. That's kind of what we're doing with LLMs – trying to fit massive models into limited computing resources. Quantization, like shrinking the puzzle pieces, can make this possible. This article will reveal how different levels of quantization affect the performance of Llama 3 on the RTX A6000, especially when it comes to speed.

NVIDIA RTX A6000 48GB: A Powerhouse for LLMs

The NVIDIA RTX A6000 is a beast of a GPU, specifically designed for demanding workloads like AI and machine learning. With 48GB of GDDR6 memory and a whopping 10,752 CUDA cores, it's a popular choice for running large language models.

This article focuses solely on the RTX A6000 48GB. We won't be comparing it to other GPUs or discussing performance on different hardware. We're keeping things focused.

Understanding the Players: Llama 3 8B and 70B

Both the Llama 3 8B and 70B models are impressive feats of engineering. They are trained on massive datasets and are capable of engaging in complex conversations and generating creative text formats. However, they differ significantly in size, and this difference directly impacts their performance.

Llama 3 8B: The Smaller, Nimble Challenger

The 8B model is significantly lighter than its 70B counterpart, making it a more manageable option for resource-constrained systems. It might not be as powerful as the 70B, but it comes with the advantage of faster processing speeds and lower memory requirements.

Llama 3 70B: The Heavyweight Champion

The 70B Llama 3 model is a real powerhouse, boasting a massive parameter count that allows it to achieve exceptional accuracy and complex language understanding. However, its size comes with a price – it demands significantly more resources and can be slower to run compared to the 8B model.

The Token Generation Speed Showdown: 8B vs 70B

Let's cut to the chase: how fast are these models on the RTX A6000? The table below shows token generation speeds for both models, measured in tokens per second (tokens/s), for two weight formats: Q4KM (a 4-bit quantization format, written Q4_K_M in llama.cpp) and F16 (half-precision floating point).

| Model | Quantization | Generation speed (tokens/s) |
|---|---|---|
| Llama 3 8B | Q4KM | 102.22 |
| Llama 3 8B | F16 | 40.25 |
| Llama 3 70B | Q4KM | 14.58 |
| Llama 3 70B | F16 | N/A |

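As you can see, the 8B model generates tokens far faster than the 70B model: with Q4KM it reaches 102.22 tokens/s against 14.58 tokens/s, roughly seven times the speed. This is expected, since a smaller model has fewer weights to read and compute for every token. Quantization matters too: for the 8B model, Q4KM is about 2.5 times faster than F16 (102.22 vs. 40.25 tokens/s). We couldn't find F16 data for the 70B model, so the same comparison can't be made there. One plausible reason is memory: at 2 bytes per weight, 70 billion parameters come to roughly 140 GB, well beyond the card's 48 GB.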
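The ratios behind that comparison are easy to reproduce. The snippet below is a minimal sketch that only uses the three measured values from the table; the dictionary layout and the `speedup` helper are illustrative names, not part of any benchmark tool.

```python
# Generation speeds (tokens/s) copied from the table above.
generation_tps = {
    ("Llama 3 8B", "Q4KM"): 102.22,
    ("Llama 3 8B", "F16"): 40.25,
    ("Llama 3 70B", "Q4KM"): 14.58,
}

def speedup(fast, slow):
    """Return how many times faster configuration `fast` is than `slow`."""
    return generation_tps[fast] / generation_tps[slow]

# Effect of quantization on the same model.
quant_gain_8b = speedup(("Llama 3 8B", "Q4KM"), ("Llama 3 8B", "F16"))
# Effect of model size at the same quantization level.
size_gain_q4 = speedup(("Llama 3 8B", "Q4KM"), ("Llama 3 70B", "Q4KM"))

print(f"8B, Q4KM vs F16: {quant_gain_8b:.2f}x")
print(f"Q4KM, 8B vs 70B: {size_gain_q4:.2f}x")
```

Keeping the two ratios separate makes it clear that model size, not quantization, is the larger of the two effects in this data.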

Quantization: Shrinking LLMs for Faster Speeds

Quantization is a technique that reduces the size of an LLM by storing its weights (and, in some schemes, its activations) as lower-precision numbers. This cuts memory consumption and usually speeds up token generation, because fewer bytes have to be read for every token. The trade-off? Some loss of accuracy, which tends to grow as the number of bits shrinks.
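To get a feel for the memory side of this, here is a rough back-of-the-envelope sketch. It assumes nominal parameter counts of 8 and 70 billion and exactly 16 or 4 bits per weight, and it counts the weights only (no context cache or runtime overhead), so real files and real memory use will be somewhat larger. The function name is made up for this example.

```python
GPU_MEMORY_GB = 48  # RTX A6000

def weight_memory_gb(params_billion, bits_per_weight):
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8

for name, params in [("Llama 3 8B", 8), ("Llama 3 70B", 70)]:
    for label, bits in [("16-bit", 16), ("4-bit", 4)]:
        gb = weight_memory_gb(params, bits)
        verdict = "fits" if gb < GPU_MEMORY_GB else "does not fit"
        print(f"{name} at {label}: ~{gb:.0f} GB of weights, {verdict} in {GPU_MEMORY_GB} GB")
```

Under these assumptions, the only combination that exceeds the card's memory is the 70B model at 16 bits.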

Q4KM Quantization: A Balancing Act

Q4KM quantization, as the name suggests, stores the model weights using roughly four bits each, compared with 16 bits for F16. This strikes a balance between accuracy and performance: in the table above it makes the 8B model about 2.5 times faster at generation, while accuracy stays reasonable for most tasks.
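The idea of four-bit storage can be illustrated with a deliberately simplified scheme: take a small block of weights, keep one floating-point scale for the block, and round each weight to a signed 4-bit integer. This is not the actual Q4KM layout, which is more elaborate; the function names and the sample values below are made up for the illustration.

```python
def quantize_block(weights):
    """Map a block of floats to integers in [-8, 7] plus one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    quantized = [max(-8, min(7, round(w / scale))) for w in weights]
    return quantized, scale

def dequantize_block(quantized, scale):
    """Recover approximate float values from the 4-bit integers."""
    return [q * scale for q in quantized]

# Hypothetical block of weights.
block = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.49, 0.02]

q, scale = quantize_block(block)
restored = dequantize_block(q, scale)
max_error = max(abs(a - b) for a, b in zip(block, restored))

print("4-bit values:", q)
print("scale:", scale)
print("largest rounding error:", max_error)
```

Each weight now needs 4 bits plus a share of the block's scale, and the rounding error is bounded by half the scale. That bounded error is the accuracy cost the text refers to.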

F16: The Half-Precision Baseline

F16, or half-precision floating point, stores each weight in 16 bits. It is usually treated as the unquantized reference rather than as a quantization method: accuracy stays closest to the original model, but the weights take about four times the memory of a 4-bit format and token generation is slower (40.25 vs. 102.22 tokens/s for the 8B model). For high-precision requirements, F16 is the safer choice; when speed or memory is the constraint, Q4KM tends to be the better one.

The Processing Power: A Peek Under the Hood

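Token generation speed is only part of the story. We also need to look at the "processing" speed: how quickly the model works through its input (the prompt) before it starts generating. It is also measured in tokens per second, and it is much higher than generation speed because input tokens can be handled in parallel rather than one at a time.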

| Model | Quantization | Processing speed (tokens/s) |
|---|---|---|
| Llama 3 8B | Q4KM | 3621.81 |
| Llama 3 8B | F16 | 4315.18 |
| Llama 3 70B | Q4KM | 466.82 |
| Llama 3 70B | F16 | N/A |

The 8B model's processing speed is far higher than the 70B model's: with Q4KM it is about 7.8 times faster (3621.81 vs. 466.82 tokens/s). This further emphasizes the impact of size on performance. Note that quantization does not help here the way it does for generation: for the 8B model, F16 is actually about 19% faster than Q4KM at processing (4315.18 vs. 3621.81 tokens/s).
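The two tables can be combined into a rough estimate of how long a full response takes. The sketch below assumes the "processing" figures describe how fast the prompt is consumed, and uses a hypothetical workload of 1,000 prompt tokens and 200 generated tokens. It ignores model loading and any other overhead.

```python
# Speeds (tokens/s) copied from the two tables in this article.
speeds = {
    "Llama 3 8B Q4KM": {"processing": 3621.81, "generation": 102.22},
    "Llama 3 8B F16": {"processing": 4315.18, "generation": 40.25},
    "Llama 3 70B Q4KM": {"processing": 466.82, "generation": 14.58},
}

def response_time_s(config, prompt_tokens, output_tokens):
    """Estimated seconds to read the prompt and then generate the output."""
    s = speeds[config]
    return prompt_tokens / s["processing"] + output_tokens / s["generation"]

# Hypothetical workload: 1,000 prompt tokens in, 200 tokens out.
for config in speeds:
    total = response_time_s(config, prompt_tokens=1000, output_tokens=200)
    print(f"{config}: ~{total:.1f} s")
```

For a workload like this one, generation dominates the total, which is why the slower processing speed of Q4KM on the 8B model does not cancel out its generation advantage.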

Performance Analysis: Putting it All Together

The numbers paint a clear picture: the Llama 3 8B model is the speed demon, significantly outperforming the 70B model on the RTX A6000 in both token generation and processing. While the 70B model boasts impressive accuracy capabilities, its size comes with a performance cost.

Factors Affecting Performance: A Deeper Dive

- Size Matters: The 70B model's massive size makes it a resource hog. It requires more memory bandwidth and processing power, leading to slower speeds.

- Quantization Impact: While quantization can boost speed, it's not a magic bullet. The level of quantization plays a crucial role in determining the balance between speed and accuracy.

- GPU Capacity: The RTX A6000's capabilities are stretched when working with the 70B model. Even though it's a powerhouse, the 70B model's sheer size puts strain on its resources.
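One way to see why size and memory bandwidth matter so much for generation is a simple upper bound: if every generated token requires reading all of the weights once, the speed cannot exceed memory bandwidth divided by the size of the weights. The sketch below assumes a bandwidth of 768 GB/s (the RTX A6000 spec-sheet figure) and nominal weight sizes of exactly 16 or 4 bits per weight. Both are simplifications, and the helper names are illustrative.

```python
# Assumption: RTX A6000 memory bandwidth from NVIDIA's spec sheet, in GB/s.
MEMORY_BANDWIDTH_GB_S = 768

def weights_gb(params_billion, bits_per_weight):
    """Nominal size of the weights in GB (ignores scales and other overhead)."""
    return params_billion * bits_per_weight / 8

def bandwidth_bound_tps(params_billion, bits_per_weight):
    """Upper bound on tokens/s if each token reads all weights once."""
    return MEMORY_BANDWIDTH_GB_S / weights_gb(params_billion, bits_per_weight)

# (label, parameters in billions, nominal bits per weight, measured tokens/s)
runs = [
    ("Llama 3 8B F16", 8, 16, 40.25),
    ("Llama 3 8B Q4KM", 8, 4, 102.22),
    ("Llama 3 70B Q4KM", 70, 4, 14.58),
]

for label, params, bits, measured in runs:
    bound = bandwidth_bound_tps(params, bits)
    print(f"{label}: bound ~{bound:.1f} tokens/s, measured {measured} tokens/s")
```

Under these assumptions every measured generation speed sits below its bound, which is consistent with generation being limited largely by how fast the weights can be read from memory.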

Practical Recommendations: Choosing the Right Model

Here's the bottom line: the choice between the 8B and 70B models depends on your specific needs:

- Speed is King: The Llama 3 8B model is the clear winner for projects demanding fast token generation and processing. It excels in use cases where rapid responses are essential, such as real-time chat applications or quick text generation tasks.

- Accuracy is Paramount: The Llama 3 70B shines in scenarios where accuracy is crucial. Its massive size allows it to achieve greater depth and accuracy in language understanding, suitable for complex tasks like summarization, translation, or high-quality content generation.

FAQ: Unraveling the Mysteries

What's the best way to choose an LLM for my project?

Consider three key factors:

- Performance: How fast do you need the model to be?

- Accuracy: What level of accuracy is required for your project?

- Resources: Do you have enough computing power and memory to run the model?
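These three questions can be turned into a small selection rule. The sketch below is one possible way to do it, not a recommendation engine: it only knows the three configurations measured in this article (all of which ran on the RTX A6000, so the resource question is already answered for this card), it treats the larger model as the more accurate one, and it breaks ties in favor of speed. The `Config` type and `pick_config` function are hypothetical names.

```python
from typing import NamedTuple, Optional

class Config(NamedTuple):
    name: str
    params_billion: int
    generation_tps: float  # measured on the RTX A6000, from the article's table

CONFIGS = [
    Config("Llama 3 8B Q4KM", 8, 102.22),
    Config("Llama 3 8B F16", 8, 40.25),
    Config("Llama 3 70B Q4KM", 70, 14.58),
]

def pick_config(min_tokens_per_s: float) -> Optional[Config]:
    """Return the largest model that meets the speed floor, or None."""
    candidates = [c for c in CONFIGS if c.generation_tps >= min_tokens_per_s]
    if not candidates:
        return None
    # Prefer more parameters (assumed more accurate), then higher speed.
    return max(candidates, key=lambda c: (c.params_billion, c.generation_tps))

for floor in (10, 30, 200):
    choice = pick_config(floor)
    print(f"need >= {floor} tokens/s:", choice.name if choice else "no measured configuration")
```

The speed tie-break is a design choice. If accuracy mattered more than speed among same-size models, the F16 variant would be the natural pick instead.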

What is quantization, and why is it important?

Imagine a detailed drawing with many colors. Quantization is like simplifying that drawing by using fewer colors. You get a less detailed image, but it's much smaller and takes up less space. Quantization does the same with LLMs, making them smaller and faster, but with a slight decrease in accuracy. It's a trade-off to balance performance and accuracy.

Can I use the Llama 3 70B model on a smaller GPU?

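It's possible, but it's not recommended. Even at four bits per weight, the 70B model needs roughly 35 GB for its weights alone, so a GPU with less memory has to offload part of the model to system RAM, which slows generation considerably. If the model doesn't fit at all, loading may fail with an out-of-memory error or make your system unstable.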

Can I run the Llama 3 8B model on my laptop GPU?

It depends on your laptop's GPU. At four bits per weight, the 8B model needs roughly 4 GB for its weights, plus some headroom for the context, so check how much GPU memory you have before trying. If it falls short, it's best to explore smaller LLMs designed for efficient resource use.

Is there a way to improve LLM performance without quantization?

While quantization is a powerful technique, there are other ways to boost performance:

- Model Optimization: Techniques like pruning and knowledge distillation can help reduce model size without significant accuracy loss.

- Software Optimization: Using efficient libraries and frameworks can improve runtime performance.

- Hardware Acceleration: Using dedicated hardware like AI accelerators can significantly speed up processing.

Keywords:

Llama 3, 8B, 70B, NVIDIA RTX A6000, GPU, Token Generation, Processing Speed, Quantization, Q4KM, F16, Performance, Accuracy, Efficiency, LLM, Large Language Model, AI, Machine Learning, Deep Learning, Tokenization, Natural Language Processing, NLP, Generative AI, Text Generation, Chatbot, Conversational AI