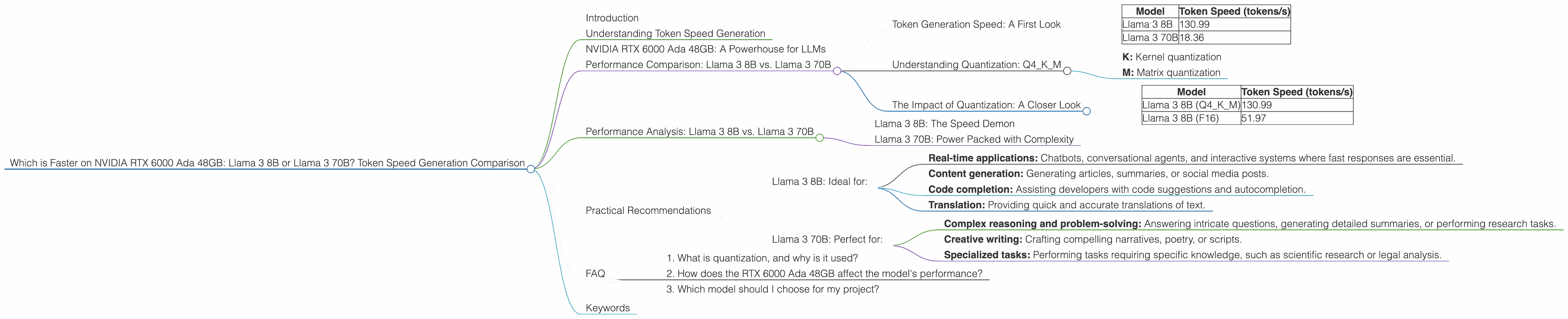

Which Is Faster on the NVIDIA RTX 6000 Ada 48GB: Llama 3 8B or Llama 3 70B? A Token Generation Speed Comparison

Introduction

The world of large language models (LLMs) is evolving rapidly, with new models appearing regularly. Two widely used options are the 8B and 70B versions of Llama 3. Choosing between them can be challenging, particularly when performance on specific hardware matters.

This article compares the token generation speed of Llama 3 8B and Llama 3 70B on an NVIDIA RTX 6000 Ada 48GB GPU, a workstation card often used for machine learning. The measurements show which model is faster on this card and which applications each one suits.

Understanding Token Generation Speed

Token generation speed is the rate at which an LLM produces tokens, the word and sub-word units that make up text, and is usually reported in tokens per second (tokens/s). For users, it determines how quickly a response appears once the model starts answering a prompt.
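To make the metric concrete, here is a minimal sketch of how a tokens/s figure can be computed from a token count and a wall-clock time. The `fake_generate` function is a hypothetical stand-in for a real model call, not part of any library, and the numbers it produces are not measurements of Llama 3.

```python
import time
from typing import Callable, List


def tokens_per_second(num_tokens: int, elapsed_seconds: float) -> float:
    """Throughput is tokens produced divided by wall-clock time."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return num_tokens / elapsed_seconds


def measure(generate: Callable[[int], List[str]], num_tokens: int) -> float:
    """Time one call to `generate` and return its throughput in tokens/s."""
    start = time.perf_counter()
    tokens = generate(num_tokens)
    elapsed = time.perf_counter() - start
    return tokens_per_second(len(tokens), elapsed)


def fake_generate(num_tokens: int) -> List[str]:
    """Hypothetical stand-in for a model: sleeps about 1 ms per token."""
    tokens = []
    for i in range(num_tokens):
        time.sleep(0.001)
        tokens.append(f"tok{i}")
    return tokens


if __name__ == "__main__":
    print(f"{measure(fake_generate, 50):.1f} tokens/s")
```

A real benchmark would typically time prompt processing and token generation separately, since the two phases behave differently.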

NVIDIA RTX 6000 Ada 48GB: A Powerhouse for LLMs

The NVIDIA RTX 6000 Ada 48GB is a high-end graphics card designed for demanding workloads, including artificial intelligence and machine learning. Its powerful Ada Lovelace architecture and generous memory make it an excellent choice for running large language models.

Performance Comparison: Llama 3 8B vs. Llama 3 70B

Token Generation Speed: A First Look

Let's start by comparing the token generation speeds of the two models on the RTX 6000 Ada 48GB. The following table shows the speed in tokens per second (tokens/s), with Q4_K_M quantization for both models:

| Model | Token Speed (tokens/s) |
|---|---|
| Llama 3 8B | 130.99 |
| Llama 3 70B | 18.36 |

As you can see, Llama 3 8B generates tokens about 7.1 times faster than Llama 3 70B (130.99 versus 18.36 tokens/s).

A quick analogy: Imagine you have two different printers. The Llama 3 8B is like a high-speed laser printer that can print documents much faster than a traditional inkjet printer, which is like the Llama 3 70B.

The difference in speed is primarily due to model size. Generating each token requires a pass over all of the model's weights, and the 70B model has nearly nine times as many parameters as the 8B model, so every token involves far more data to read from GPU memory and more computation.
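The gap is easier to judge as a ratio and as a waiting time. The sketch below uses the two measured rates from the table; the 300-token reply length is an illustrative assumption, and the timing covers token generation only, not prompt processing.

```python
# Measured rates from the table above (tokens/s, same quantization for both models).
MEASURED_TOKENS_PER_SECOND = {
    "Llama 3 8B": 130.99,
    "Llama 3 70B": 18.36,
}

REPLY_TOKENS = 300  # hypothetical reply length, chosen only for illustration


def speedup(fast_rate: float, slow_rate: float) -> float:
    """How many times faster the first rate is than the second."""
    return fast_rate / slow_rate


def seconds_for_reply(reply_tokens: int, rate: float) -> float:
    """Generation time for a reply at a steady tokens/s rate."""
    return reply_tokens / rate


if __name__ == "__main__":
    rates = MEASURED_TOKENS_PER_SECOND
    ratio = speedup(rates["Llama 3 8B"], rates["Llama 3 70B"])
    print(f"8B is {ratio:.2f}x faster than 70B")
    for name, rate in rates.items():
        print(f"{name}: {seconds_for_reply(REPLY_TOKENS, rate):.1f} s for {REPLY_TOKENS} tokens")
```

At these rates, a 300-token reply takes roughly 2.3 seconds on the 8B model and roughly 16.3 seconds on the 70B model.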

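Understanding Quantization: Q4_K_M

Both models are measured with Q4_K_M quantization. This means that most of the model's weights (the parameters learned during training) are stored using about 4 bits each, resulting in significant memory savings compared to 16-bit (F16) or 32-bit (F32) floating point. In the naming scheme used by llama.cpp and GGUF model files, the "K" and "M" describe the quantization variant:

- K: the "k-quant" family of block-wise quantization methods

- M: the medium variant of that family, which keeps some tensors at higher precision than the small (S) variant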

While quantization reduces model size and memory footprint, it may slightly affect model accuracy. However, it can significantly improve performance, particularly when dealing with large models.
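The memory savings can be estimated with simple arithmetic: parameters times bits per weight, divided by 8 to get bytes. The sketch below makes several assumptions that are not in the measurements above: nominal parameter counts of 8 and 70 billion, an average of about 4.8 bits per weight for the 4-bit quantized files (an approximation, since the format mixes precisions), and decimal gigabytes. It counts weights only and ignores the KV cache and activations.

```python
GPU_MEMORY_GB = 48.0  # capacity of the RTX 6000 Ada discussed in this article

# Assumed average bits per weight; the 4-bit figure is an approximation.
BITS_PER_WEIGHT = {"F32": 32.0, "F16": 16.0, "4-bit (approx.)": 4.8}

# Nominal parameter counts, assumed from the model names.
NUM_PARAMS = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}


def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in decimal gigabytes."""
    return num_params * bits_per_weight / 8 / 1e9


if __name__ == "__main__":
    for model, params in NUM_PARAMS.items():
        for fmt, bits in BITS_PER_WEIGHT.items():
            size = weight_memory_gb(params, bits)
            verdict = "fits" if size < GPU_MEMORY_GB else "does not fit"
            print(f"{model} at {fmt}: ~{size:.1f} GB of weights, {verdict} in {GPU_MEMORY_GB:.0f} GB")
```

Under these assumptions the 70B weights at F16 come to about 140 GB, far more than a single 48 GB card can hold, while the 4-bit version comes to about 42 GB.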

The Impact of Quantization: A Closer Look

While the table above shows Q4_K_M quantization, it's also worth examining the performance of the Llama 3 8B model when its weights are kept in F16 (half-precision floating point), without quantization:

| Model | Token Speed (tokens/s) |
|---|---|
| Llama 3 8B (Q4_K_M) | 130.99 |
| Llama 3 8B (F16) | 51.97 |

While still faster than the 70B model, the 8B model is about 2.5 times slower at F16 than at Q4_K_M. This is expected: F16 stores 16 bits per weight, so the GPU has to read several times more data from memory for every generated token.
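A rough way to sanity-check these numbers is a memory-bandwidth bound: if every generated token requires reading all weights once, tokens/s cannot exceed bandwidth divided by weight size. The sketch below is a back-of-the-envelope estimate, not a model of the actual benchmark. It assumes a memory bandwidth of 960 GB/s (NVIDIA's published figure for this card), nominal parameter counts, and about 4.8 bits per weight for the 4-bit format; none of these values come from the measurements in this article.

```python
MEMORY_BANDWIDTH_GB_S = 960.0  # assumption: published spec for the RTX 6000 Ada

# (label, assumed parameter count, assumed bits per weight, measured tokens/s from the tables)
CASES = [
    ("Llama 3 8B, 4-bit", 8e9, 4.8, 130.99),
    ("Llama 3 8B, F16", 8e9, 16.0, 51.97),
    ("Llama 3 70B, 4-bit", 70e9, 4.8, 18.36),
]


def weight_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate weight size in decimal gigabytes."""
    return num_params * bits_per_weight / 8 / 1e9


def bandwidth_bound(num_params: float, bits_per_weight: float,
                    bandwidth_gb_s: float = MEMORY_BANDWIDTH_GB_S) -> float:
    """Upper bound on tokens/s if each token reads every weight once."""
    return bandwidth_gb_s / weight_gb(num_params, bits_per_weight)


if __name__ == "__main__":
    for label, params, bits, measured in CASES:
        bound = bandwidth_bound(params, bits)
        print(f"{label}: bound ~{bound:.0f} tokens/s, measured {measured} tokens/s")
```

Under these assumptions, all three measured rates sit below their bounds, and the F16 and 70B results land fairly close to them, which is consistent with weight size being the main driver of generation speed.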

Performance Analysis: Llama 3 8B vs. Llama 3 70B

Llama 3 8B: The Speed Demon

The Llama 3 8B model emerges as the clear winner in token generation speed. On the RTX 6000 Ada 48GB, it generates about 7.1 times more tokens per second than the 70B model at the same Q4_K_M quantization, making it ideal for use cases where speed is paramount. Note that its highest throughput comes from Q4_K_M (130.99 tokens/s); at F16 it drops to 51.97 tokens/s.

Llama 3 70B: Power Packed with Complexity

Despite its slower token generation speed, the Llama 3 70B model shines in its greater capacity. With nearly nine times as many parameters, it can capture more knowledge and subtler patterns from its training data, which generally makes it more suitable for tasks requiring complex reasoning and sophisticated understanding.

Think of the 70B model as a large, intricate machine with many moving parts. It may take longer to complete a task, but it can handle more intricate and demanding processes.

Key takeaway: If you prioritize speed, and your application doesn't involve highly complex tasks, the Llama 3 8B is the better choice. But if you need a model capable of handling intricate and nuanced tasks, the Llama 3 70B model is a strong contender.

Practical Recommendations

Llama 3 8B is ideal for:

- Real-time applications: Chatbots, conversational agents, and interactive systems where fast responses are essential.

- Content generation: Generating articles, summaries, or social media posts.

- Code completion: Assisting developers with code suggestions and autocompletion.

- Translation: Providing quick and accurate translations of text.

Llama 3 70B is well suited to:

- Complex reasoning and problem-solving: Answering intricate questions, generating detailed summaries, or performing research tasks.

- Creative writing: Crafting compelling narratives, poetry, or scripts.

- Specialized tasks: Performing tasks requiring specific knowledge, such as scientific research or legal analysis.

FAQ

1. What is quantization, and why is it used?

Quantization is a technique used to reduce the size of LLMs by representing their weights (parameters) with fewer bits. It reduces memory usage and can improve generation speed, particularly on devices with limited memory. Think of it as compressing the model without losing too much of its essential information.

2. How does the RTX 6000 Ada 48GB affect the model's performance?

The RTX 6000 Ada 48GB is a powerful GPU, ideal for running large language models and accelerating their operations. Its high memory capacity and advanced architecture allow for efficient processing of model parameters, leading to better performance.

3. Which model should I choose for my project?

The best model for your project depends on your specific requirements. If you need a model that can generate responses quickly, the Llama 3 8B is a good choice. If you need a model that can handle complex tasks, the Llama 3 70B is a better option.
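One way to turn this advice into a rule is to pick the most capable configuration that still meets a minimum speed. The sketch below uses the three measured rates from this article; the capability ordering (larger model first, then less compressed weights) is an assumption for illustration, and `pick_model` is a hypothetical helper rather than part of any library.

```python
from typing import Optional

# Ordered from most to least capable (assumed ordering), with measured tokens/s.
CANDIDATES = [
    ("Llama 3 70B (Q4_K_M)", 18.36),
    ("Llama 3 8B (F16)", 51.97),
    ("Llama 3 8B (Q4_K_M)", 130.99),
]


def pick_model(min_tokens_per_second: float) -> Optional[str]:
    """Return the first candidate that meets the required speed, or None."""
    for name, rate in CANDIDATES:
        if rate >= min_tokens_per_second:
            return name
    return None


if __name__ == "__main__":
    for required in (10, 30, 100, 200):
        print(f"need {required} tokens/s -> {pick_model(required)}")
```

A requirement of 10 tokens/s selects the 70B model, while anything above about 52 tokens/s leaves only the quantized 8B model on this card.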

Keywords

Large Language Models, Llama 3, NVIDIA RTX 6000 Ada 48GB, Token Generation Speed, Quantization, Q4_K_M, F16, Performance Comparison, Model Selection, Applications, Use Cases, Practical Recommendations.