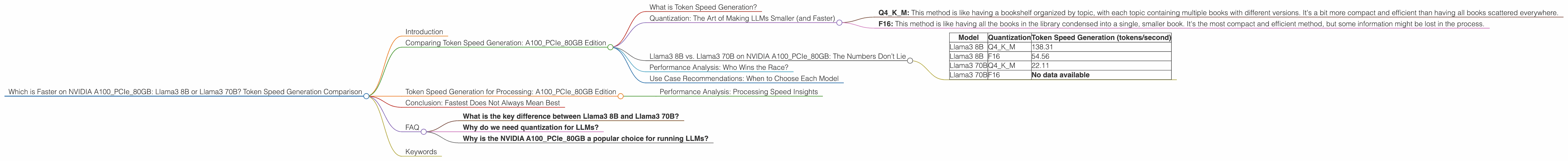

Which is Faster on NVIDIA A100 PCIe 80GB: Llama3 8B or Llama3 70B? Token Speed Generation Comparison

Introduction

Welcome, fellow AI adventurers! Today we're diving into the exhilarating world of Large Language Models (LLMs) and their performance on powerful hardware.

Specifically, we'll focus on two models from the same family: Llama3 8B and Llama3 70B. Both are open-source LLMs built on the Llama architecture, but they differ enormously in size and capability. To put them through their paces, we'll use an NVIDIA A100 PCIe 80GB, a data-center GPU known for its processing muscle.

Think of this comparison like choosing between a nimble sports car (Llama3 8B) and a luxurious limousine (Llama3 70B). Both can get you from point A to point B, but their strengths lie in different areas. Let’s see which beast reigns supreme in our token speed generation showdown.

Comparing Token Speed Generation: A100 PCIe 80GB Edition

What is Token Speed Generation?

Imagine a language model as a storyteller. It weaves a tapestry of words, one token at a time. Each token is a small unit of text, such as a word or part of a word. Token speed generation measures how quickly the LLM produces these tokens, expressed in tokens per second. It is essentially the model's storytelling pace.
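To make the metric concrete, here is a minimal sketch of how tokens per second could be measured. The `fake_generate` function is a hypothetical stand-in for a real model call (it just sleeps), so the printed number says nothing about any actual model.

```python
import time


def tokens_per_second(num_tokens: int, elapsed_seconds: float) -> float:
    """Return generation throughput in tokens per second."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return num_tokens / elapsed_seconds


def measure(generate_fn, num_tokens: int) -> float:
    """Time a token-producing callable and report its throughput.

    generate_fn is a hypothetical stand-in for a real model call; it must
    return the number of tokens it actually produced.
    """
    start = time.perf_counter()
    produced = generate_fn(num_tokens)
    elapsed = time.perf_counter() - start
    return tokens_per_second(produced, elapsed)


def fake_generate(num_tokens: int) -> int:
    """Placeholder "model" that sleeps 1 ms per token (illustration only)."""
    for _ in range(num_tokens):
        time.sleep(0.001)
    return num_tokens


if __name__ == "__main__":
    print(f"{measure(fake_generate, 50):.1f} tokens/second")
```

Real benchmarks usually average several runs and exclude model load time; this sketch does neither.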

Quantization: The Art of Making LLMs Smaller (and Faster)

Now, let's talk about quantization. It's like taking a massive library and condensing it into a smaller, more accessible format. By squeezing the model's information into fewer bits, we make it more lightweight and faster to process. This is especially useful for devices with limited memory.

In our case, we'll compare two weight formats:

- Q4_K_M: A 4-bit quantization scheme from llama.cpp. It's like condensing the library into a compact edition: the weights take up roughly a quarter to a third of the space they need in F16, at the cost of a small loss in precision.

- F16: 16-bit half-precision floating point. This is the unquantized baseline in our comparison, like keeping the full library on the shelves. It preserves the most information, but it is also the largest format and the most demanding on memory.
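A rough sketch of what these formats mean for memory, under stated assumptions: the parameter counts (about 8.03 billion and 70.6 billion) and the average of about 4.85 bits per weight for the 4-bit format (Q4_K_M in llama.cpp naming) are approximations, and the estimate covers weights only, ignoring the KV cache and activations.

```python
# Approximate parameter counts (assumption: rounded published figures).
PARAMS = {"Llama3 8B": 8.03e9, "Llama3 70B": 70.6e9}

# Average bits stored per weight. F16 is exact; the Q4_K_M value is an
# approximation, since that format mixes block scales and bit widths.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}

GPU_MEMORY_GB = 80.0  # A100 PCIe 80GB


def weight_size_gb(num_params: float, bits_per_weight: float) -> float:
    """Estimate the size of the model weights in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


def fits_on_gpu(num_params: float, bits_per_weight: float,
                gpu_memory_gb: float = GPU_MEMORY_GB) -> bool:
    """Weights-only check; real usage also needs room for the KV cache."""
    return weight_size_gb(num_params, bits_per_weight) < gpu_memory_gb


if __name__ == "__main__":
    for model, params in PARAMS.items():
        for fmt, bits in BITS_PER_WEIGHT.items():
            size = weight_size_gb(params, bits)
            verdict = "fits" if fits_on_gpu(params, bits) else "does not fit"
            print(f"{model} {fmt}: ~{size:.1f} GB ({verdict} in 80 GB)")
```

By this estimate the 70B model in F16 needs about 141 GB for its weights alone, which is more than a single 80 GB card can hold.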

Llama3 8B vs. Llama3 70B on NVIDIA A100 PCIe 80GB: The Numbers Don’t Lie

For this comparison, we're using data from the following sources:

- Performance of llama.cpp on various devices (https://github.com/ggerganov/llama.cpp/discussions/4167) by ggerganov

- GPU Benchmarks on LLM Inference (https://github.com/XiongjieDai/GPU-Benchmarks-on-LLM-Inference) by XiongjieDai

Here's a breakdown of our data:

| Model | Quantization | Token Speed Generation (tokens/second) |
|---|---|---|
| Llama3 8B | Q4_K_M | 138.31 |
| Llama3 8B | F16 | 54.56 |
| Llama3 70B | Q4_K_M | 22.11 |
| Llama3 70B | F16 | No data available |

Performance Analysis: Who Wins the Race?

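The smaller, the faster: The Llama3 8B model clearly outperforms the Llama3 70B in token speed generation. With the same 4-bit quantization, it is roughly 6 times faster (138.31 vs. 22.11 tokens/second). This makes sense, as the 8B model has far fewer weights to read and compute for every token it generates.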

Quantization matters: For Llama3 8B, Q4_K_M generates tokens about 2.5 times faster than F16 (138.31 vs. 54.56 tokens/second). We can't make the same comparison for Llama3 70B, because no F16 result is available for it on this GPU.

Trade-offs: While the Llama3 8B may be faster, it comes with a trade-off. The two models share the same tokenizer and vocabulary, but the 70B model has roughly nine times as many parameters and generally handles complex tasks better.
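The ratios behind these observations can be recomputed directly from the generation table. This is just arithmetic on the reported numbers; the dictionary keys are labels chosen for this sketch (with the 4-bit format written in llama.cpp's Q4_K_M spelling).

```python
# Token generation speeds from the table above, in tokens/second.
# The Llama3 70B F16 entry is omitted because no data is available.
GENERATION_TPS = {
    ("Llama3 8B", "Q4_K_M"): 138.31,
    ("Llama3 8B", "F16"): 54.56,
    ("Llama3 70B", "Q4_K_M"): 22.11,
}


def speedup(faster_tps: float, slower_tps: float) -> float:
    """Return how many times faster the first configuration is."""
    return faster_tps / slower_tps


# Effect of quantization on the 8B model.
quant_gain_8b = speedup(GENERATION_TPS[("Llama3 8B", "Q4_K_M")],
                        GENERATION_TPS[("Llama3 8B", "F16")])

# Effect of model size at the same quantization level.
size_gain_q4 = speedup(GENERATION_TPS[("Llama3 8B", "Q4_K_M")],
                       GENERATION_TPS[("Llama3 70B", "Q4_K_M")])

if __name__ == "__main__":
    print(f"8B: Q4_K_M vs. F16 speedup: {quant_gain_8b:.2f}x")
    print(f"Q4_K_M: 8B vs. 70B speedup: {size_gain_q4:.2f}x")
```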

Use Case Recommendations: When to Choose Each Model

Go for speed with Llama3 8B: If you need fast token generation for tasks like chatbots or simple text summarization, the Llama3 8B model is your best bet.

Get sophisticated with Llama3 70B: For more complex tasks, such as writing creative content or generating long-form articles, the Llama3 70B model is a better choice.

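Prompt Processing Speed: A100 PCIe 80GB Edition

Now, let's examine how these models perform in terms of processing speed. This refers to how quickly a model can read and process the tokens of the input prompt before it starts generating its answer. Because the prompt tokens can be processed in parallel, these numbers are much higher than the generation speeds above.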

| Model | Quantization | Processing Speed (tokens/second) |
|---|---|---|
| Llama3 8B | Q4_K_M | 5800.48 |
| Llama3 8B | F16 | 7504.24 |
| Llama3 70B | Q4_K_M | 726.65 |
| Llama3 70B | F16 | No data available |

Performance Analysis: Processing Speed Insights

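Llama3 8B shines again: The Llama3 8B model demonstrates impressive processing speed, running roughly 8 times faster than the Llama3 70B with 4-bit quantization (5800.48 vs. 726.65 tokens/second). This strong performance is likely due to its smaller size, which means far fewer calculations per token.

F16: A mixed bag: For Llama3 8B, F16 surprisingly resulted in faster processing than Q4_K_M, by about 29% (7504.24 vs. 5800.48 tokens/second). One likely explanation is that processing is limited mainly by raw compute rather than memory, so the extra work of unpacking quantized weights outweighs the benefit of their smaller size. This gap is much smaller than the 2.5x difference seen in token speed generation, and it points in the opposite direction. We need more data to draw conclusive insights about F16 processing, especially for the Llama3 70B.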

The bigger, the slower: Similar to the token speed generation, the larger Llama3 70B experiences a considerable performance drop in the processing speed department. This further emphasizes the trade-off between model size and speed.
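To see what the two kinds of speed mean together, here is a back-of-the-envelope latency estimate. It assumes the processing figures describe how fast the input prompt is read, that a single request runs at the reported steady-state rates, and that a request has a 1024-token prompt and a 256-token answer (both sizes are arbitrary choices for illustration).

```python
def request_latency_seconds(prompt_tokens: int, output_tokens: int,
                            processing_tps: float,
                            generation_tps: float) -> float:
    """Estimate total time as prompt processing time plus generation time."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# (processing tokens/s, generation tokens/s) taken from the two tables.
SPEEDS = {
    "Llama3 8B Q4_K_M": (5800.48, 138.31),
    "Llama3 8B F16": (7504.24, 54.56),
    "Llama3 70B Q4_K_M": (726.65, 22.11),
}

PROMPT_TOKENS = 1024  # illustrative prompt length
OUTPUT_TOKENS = 256   # illustrative answer length

if __name__ == "__main__":
    for name, (processing_tps, generation_tps) in SPEEDS.items():
        total = request_latency_seconds(PROMPT_TOKENS, OUTPUT_TOKENS,
                                        processing_tps, generation_tps)
        print(f"{name}: ~{total:.1f} s per request")
```

Under these assumptions generation dominates the total time, which is why the 4-bit 8B model finishes sooner than its F16 counterpart despite slower processing.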

Conclusion: Fastest Does Not Always Mean Best

Our investigation reveals that Llama3 8B, with its smaller size, clearly outpaces the Llama3 70B in both token generation and processing speed on the NVIDIA A100 PCIe 80GB, by a factor of roughly 6 to 8 with 4-bit quantization. However, this doesn't necessarily mean that the Llama3 8B is the "best" model overall. The optimal choice depends on your needs.

For applications that prioritize speed and efficiency, like basic chatbots or text summarization, the Llama3 8B might be the perfect fit. But for tasks demanding greater complexity and sophistication, the Llama3 70B could be a better option.

FAQ

What is the key difference between Llama3 8B and Llama3 70B?

The main difference between the two models is their size. Llama3 8B has about 8 billion parameters, making it more efficient and faster to run. Llama3 70B has about 70 billion parameters. Both use the same vocabulary, but the larger model is generally more capable on complex tasks.

Why do we need quantization for LLMs?

Quantization allows us to reduce the memory footprint of LLMs, making them lighter and more efficient to run on devices with limited resources. This is especially important for running LLMs on mobile devices or edge devices with limited memory and processing power.

Why is the NVIDIA A100 PCIe 80GB a popular choice for running LLMs?

The NVIDIA A100 PCIe 80GB is a powerful GPU designed for high-performance computing tasks, including machine learning and deep learning. Its large memory capacity and high processing speed make it ideal for running large language models.

Keywords

Llama3, Llama 8B, Llama 70B, NVIDIA A100 PCIe 80GB, Token Speed Generation, Quantization, Q4_K_M, F16, LLM, Large Language Model, AI, Machine Learning, Deep Learning, Performance Comparison.