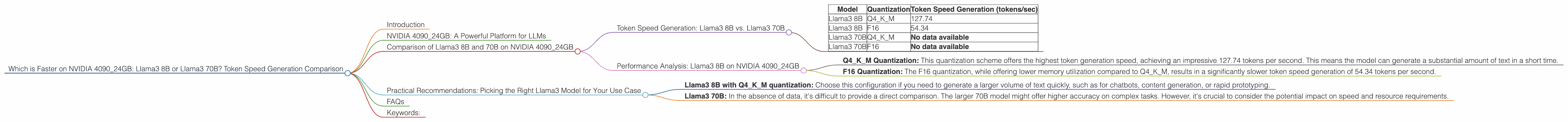

Which is Faster on NVIDIA 4090 24GB: Llama3 8B or Llama3 70B? Token Speed Generation Comparison

Introduction

In the fast-paced world of large language models (LLMs), choosing the right hardware is crucial for good performance. For developers and enthusiasts who want to run LLMs locally, the NVIDIA 4090_24GB is a powerful contender. But how do different models perform on this beast of a graphics card? This article looks at the token generation speed of two popular Llama3 models, the 8B and the 70B, on the NVIDIA 4090_24GB.

Imagine running a marathon. You could run with a 5kg backpack or with a 50kg backpack. The 5kg backpack lets you move faster, while the 50kg backpack carries more gear for whatever the course throws at you. Similarly, smaller LLMs like the Llama3 8B are faster and lighter on resources but may lack the depth of the larger Llama3 70B. This article explores the trade-offs between these models and highlights their strengths and weaknesses.

NVIDIA 4090_24GB: A Powerful Platform for LLMs

The NVIDIA 4090_24GB is a top-tier consumer graphics card known for its exceptional processing power. Its 24 GB of VRAM lets it hold small and mid-sized language models entirely in GPU memory, making it a popular choice for local LLM development and experimentation.

Comparison of Llama3 8B and 70B on NVIDIA 4090_24GB

This comparison focuses on token generation speed, that is, how quickly each model produces text. Performance is measured in tokens per second (tokens/sec).
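As a rough illustration of how a tokens/sec figure can be obtained, the sketch below times a single generation call and divides the number of tokens produced by the elapsed time. The `fake_generate` function is a hypothetical stand-in for a real model call, and this is not the procedure used to produce the numbers in this article.

```python
import time
from typing import Callable, List


def measure_tokens_per_second(generate: Callable[[int], List[str]], n_tokens: int) -> float:
    """Time one generation call and return throughput in tokens/sec."""
    start = time.perf_counter()
    tokens = generate(n_tokens)
    elapsed = time.perf_counter() - start
    return len(tokens) / elapsed


def fake_generate(n_tokens: int) -> List[str]:
    # Hypothetical stand-in for a real model: sleeps briefly, then returns dummy tokens.
    time.sleep(0.01)
    return ["tok"] * n_tokens


rate = measure_tokens_per_second(fake_generate, 50)
print(f"{rate:.1f} tokens/sec")
```

In practice you would replace `fake_generate` with a call into your inference library and average over several runs, since a single run can be noisy.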

Token Speed Generation: Llama3 8B vs. Llama3 70B

| Model | Quantization | Token generation speed (tokens/sec) |
|---|---|---|
| Llama3 8B | Q4_K_M | 127.74 |
| Llama3 8B | F16 | 54.34 |
| Llama3 70B | Q4_K_M | No data available |
| Llama3 70B | F16 | No data available |

As the table shows, no measurements are available for the Llama3 70B on the NVIDIA 4090_24GB, so the speeds of the Llama3 8B and Llama3 70B cannot be compared directly on this device.
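One plausible reason for the missing 70B figures is memory: the weights alone may not fit in 24 GB. The back-of-the-envelope sketch below estimates weight size as parameters times bits per weight. The value of roughly 4.85 bits per weight for Q4_K_M is an approximation assumed here for illustration, and the estimate ignores the KV cache and runtime overhead.

```python
def weight_memory_gb(n_params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights alone, in GB (10^9 bytes)."""
    return n_params_billion * bits_per_weight / 8


VRAM_GB = 24.0
Q4_K_M_BITS = 4.85  # assumption: approximate average bits per weight for Q4_K_M

for name, params in [("Llama3 8B", 8.0), ("Llama3 70B", 70.0)]:
    for quant, bits in [("Q4_K_M", Q4_K_M_BITS), ("F16", 16.0)]:
        size = weight_memory_gb(params, bits)
        verdict = "fits" if size < VRAM_GB else "does not fit"
        print(f"{name} {quant}: ~{size:.1f} GB of weights, {verdict} in {VRAM_GB:.0f} GB")
```

Under these assumptions both 8B variants fit on the card, while both 70B variants exceed 24 GB and would need offloading to system RAM or multiple GPUs.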

Performance Analysis: Llama3 8B on NVIDIA 4090_24GB

Let's break down the performance of the Llama3 8B on the NVIDIA 4090_24GB:

- Q4_K_M quantization: This 4-bit scheme delivers the highest token generation speed, at 127.74 tokens per second. The model can produce a substantial amount of text in a short time.

- F16 (half precision): F16 stores the weights as 16-bit floating-point values, so it uses considerably more memory than Q4_K_M. It is also significantly slower, at 54.34 tokens per second, most likely because more data has to be read from memory for every generated token.

The gap between the two formats highlights the trade-off between speed and accuracy. Q4_K_M prioritizes speed and a small memory footprint at the cost of some precision, whereas F16 keeps the weights at higher precision and may produce slightly more accurate results.
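To put the two measured speeds in perspective, the short calculation below derives the speedup and the time needed for a fixed-length response. The 500-token response length is an arbitrary value chosen for illustration.

```python
Q4_K_M_TPS = 127.74  # tokens/sec, from the table above
F16_TPS = 54.34      # tokens/sec, from the table above


def seconds_for(n_tokens: int, tokens_per_second: float) -> float:
    """Time to generate n_tokens at a constant generation speed."""
    return n_tokens / tokens_per_second


speedup = Q4_K_M_TPS / F16_TPS
print(f"Q4_K_M is about {speedup:.2f}x faster than F16")

n_tokens = 500  # illustrative response length
print(f"Q4_K_M: {seconds_for(n_tokens, Q4_K_M_TPS):.1f} s for {n_tokens} tokens")
print(f"F16:    {seconds_for(n_tokens, F16_TPS):.1f} s for {n_tokens} tokens")
```

The quantized model is roughly 2.35 times faster, which for a 500-token reply is the difference between about 4 seconds and about 9 seconds.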

Practical Recommendations: Picking the Right Llama3 Model for Your Use Case

For faster text generation:

- Llama3 8B with Q4_K_M quantization: Choose this configuration if you need to generate a large volume of text quickly, such as for chatbots, content generation, or rapid prototyping.

For higher accuracy or specific tasks requiring more context:

- Llama3 70B: With no measurements available for this GPU, no speed figure can be given. The larger 70B model might offer higher accuracy on complex tasks, but expect it to be slower and to need far more memory than the 8B model.

FAQs

1. What is Token Speed Generation?

Token speed generation refers to the rate at which an LLM can produce tokens, which are the smallest units of text that the model processes. A higher token speed generation means the model can generate text more quickly.

2. What is Quantization?

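Quantization is a technique that stores a model's weights at lower numerical precision, which shrinks the model and can improve speed and memory utilization. Q4_K_M is a 4-bit quantization scheme, while F16 is the half-precision (16-bit floating-point) format, which is larger and slower but closer to the original weights.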
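The idea can be illustrated with a deliberately simplified sketch: symmetric 4-bit quantization with a single scale factor for a handful of weights. This is not the actual Q4_K_M algorithm, which is more elaborate; the function names and sample weights are made up for illustration.

```python
from typing import List, Tuple


def quantize_4bit(weights: List[float]) -> Tuple[List[int], float]:
    """Map floats to integers in [-7, 7] using one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    return [round(w / scale) for w in weights], scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from the integers and the scale."""
    return [q * scale for q in quantized]


weights = [0.70, -0.33, 0.12, -0.02]  # illustrative values only
quantized, scale = quantize_4bit(weights)
restored = dequantize(quantized, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("quantized:", quantized)
print("restored: ", [round(r, 3) for r in restored])
print("max error:", round(max_error, 3))
```

Each weight now needs only 4 bits instead of 16, at the price of a small rounding error per weight. That rounding error is where the accuracy loss of quantized models comes from.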

3. What is the difference between the Llama3 8B and Llama3 70B models?

The Llama3 8B is a smaller model with a quicker response time and lower resource consumption, while the Llama3 70B is larger and potentially more accurate, but might require more processing power and memory.

4. Can I run these models on my laptop or desktop?

It depends on your hardware. Powerful GPUs like the NVIDIA 4090_24GB are ideal for running these larger LLMs locally. However, smaller models like Llama3 8B might be feasible on some gaming laptops or desktops with dedicated GPUs.

5. What if I don't have a powerful GPU?

Cloud-based platforms like Google Colab or Amazon SageMaker offer access to high-performance GPUs, allowing you to experiment with these models without requiring expensive hardware.

Keywords:

Llama3 8B, Llama3 70B, NVIDIA 4090_24GB, token speed generation, LLM performance, quantization, Q4_K_M, F16, GPU, text generation, cloud computing, local models, AI, machine learning, natural language processing.