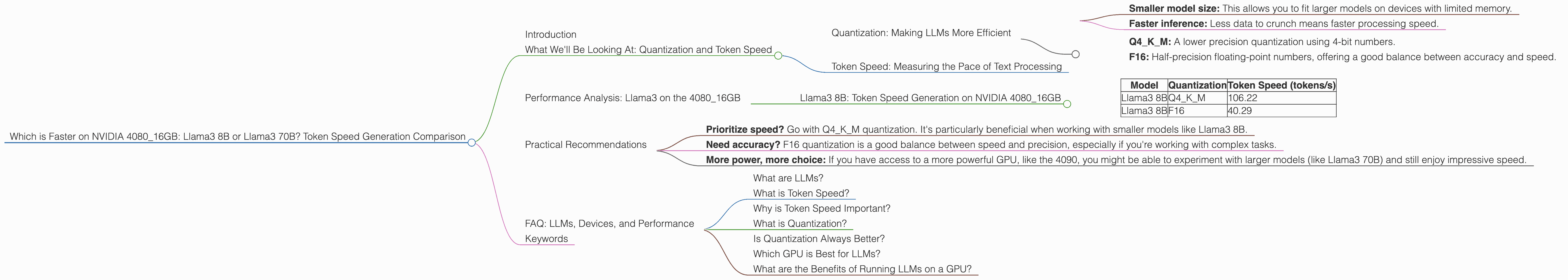

Which Is Faster on NVIDIA 4080 16GB: Llama3 8B or Llama3 70B? Token Generation Speed Comparison

Introduction

The world of large language models (LLMs) is exploding, with new models and advancements appearing every day. But with so many options, it can be tough to pick the right one for your needs. One key factor to consider is performance. How fast can your chosen LLM process text and generate responses?

In this article, we're going to dive into the performance of the Llama3 8B and Llama3 70B models on the powerful NVIDIA 4080_16GB GPU. We'll compare their token generation speed and look at how the choice of quantization format affects performance.

What We'll Be Looking At: Quantization and Token Speed

To get a good grasp of the comparison, let's understand two critical concepts:

Quantization: Making LLMs More Efficient

Quantization is a technique that shrinks LLMs, making them faster and more memory-efficient. Think of it like compressing a high-resolution picture for faster web browsing. A model's weights are normally stored as 16- or 32-bit floating-point numbers, which take up a lot of space. Quantization stores each weight with fewer bits, resulting in:

- Smaller model size: This allows you to fit larger models on devices with limited memory.

- Faster inference: Generating each token requires reading the model's weights from memory, so fewer bytes per weight means less data to move and faster generation.

We will be looking at two common quantization levels:

- Q4_K_M: A lower-precision format that stores most weights in roughly 4 bits each.

- F16: Half-precision (16-bit) floating point, essentially the unquantized baseline. It preserves accuracy but needs more than three times the memory of Q4_K_M and generates tokens more slowly.
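As a rough illustration of what "reducing precision" means, the sketch below quantizes a small block of made-up weights to 8-bit and 4-bit integers that share one scale factor, then converts them back. It is a simplified, hypothetical scheme (`quantize_block` and `dequantize_block` are names invented here), not the actual Q4_K_M format, which is more elaborate: it splits weights into many small blocks with their own scales and keeps some tensors at higher precision.

```python
# Simplified symmetric block quantization (illustrative only; NOT the real Q4_K_M format).

def quantize_block(weights, bits=4):
    """Map floats to signed ints in [-(2**(bits-1)), 2**(bits-1) - 1] with one shared scale."""
    qmax = 2 ** (bits - 1) - 1                 # 7 for 4-bit, 127 for 8-bit
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round each weight to the nearest grid point and clamp to the integer range.
    q = [max(-qmax - 1, min(qmax, round(w / scale))) for w in weights]
    return q, scale

def dequantize_block(q, scale):
    """Recover approximate float weights from the integers and the shared scale."""
    return [v * scale for v in q]

weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.45, 0.02]  # made-up example weights

for bits in (8, 4):
    q, scale = quantize_block(weights, bits)
    restored = dequantize_block(q, scale)
    err = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"{bits}-bit: ints={q}, max round-trip error={err:.4f}")
```

Fewer bits mean a coarser grid of representable values: each weight's round-trip error is at most half the scale, and the 4-bit scale is much larger than the 8-bit one, so the error bound grows as precision drops.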

Token Speed: Measuring the Pace of Text Processing

Token speed, measured in tokens per second (tokens/s), indicates how many tokens an LLM can process or generate each second. Tokens are the units a model reads and writes: usually whole words or pieces of words, sometimes single characters or punctuation marks. The higher the token speed, the faster your LLM can generate responses.
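Measuring generation speed comes down to counting generated tokens and dividing by the elapsed wall-clock time. The sketch below assumes a generator that yields one token at a time; `fake_generate` is a hypothetical stand-in that sleeps instead of running a model, so the number it prints says nothing about real hardware.

```python
import time
from typing import Callable, Iterable

def measure_tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Run a token generator to completion and return generated tokens per second."""
    start = time.perf_counter()
    n_tokens = sum(1 for _ in generate())    # consume every token, counting as we go
    elapsed = time.perf_counter() - start
    return n_tokens / elapsed

def fake_generate(n: int = 50, delay: float = 0.001):
    """Hypothetical stand-in for a model: yields one token after each short delay."""
    for i in range(n):
        time.sleep(delay)
        yield f"tok{i}"

print(f"{measure_tokens_per_second(fake_generate):.1f} tokens/s")
```

With a real model you would pass a function that streams its output tokens; the timing logic stays the same.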

Performance Analysis: Llama3 on the 4080_16GB

So, how do the Llama3 8B and 70B stack up on the 4080_16GB?

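Unfortunately, there's no data available for the Llama3 70B model on the 4080_16GB; we only have results for Llama3 8B. That is not surprising: even at roughly 4 bits per weight, the 70B model's weights alone take about 40 GB, well beyond the card's 16 GB of VRAM.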
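A weight-only memory estimate shows why the 70B model is out of reach for a 16 GB card. The sketch assumes approximate parameter counts (about 8.03 billion and 70.6 billion), roughly 4.85 bits per weight for Q4_K_M (an approximation; the exact figure varies by model) and 16 bits for F16. It ignores the KV cache and runtime overhead, which only add to the total.

```python
# Weight-only memory estimate; ignores the KV cache, activations and runtime overhead.
PARAMS = {"Llama3 8B": 8.03e9, "Llama3 70B": 70.6e9}  # approximate parameter counts
BITS_PER_WEIGHT = {"Q4_K_M": 4.85, "F16": 16.0}       # Q4_K_M figure is an approximation
VRAM_BYTES = 16 * 1024**3                              # 16 GB card, treated as 16 GiB

def weight_bytes(params: float, bits: float) -> float:
    """Bytes needed to store `params` weights at `bits` bits each."""
    return params * bits / 8

def fits_in_vram(params: float, bits: float, vram_bytes: int = VRAM_BYTES) -> bool:
    """True if the weights alone fit in VRAM (a necessary, not sufficient, condition)."""
    return weight_bytes(params, bits) < vram_bytes

for model, n in PARAMS.items():
    for fmt, bits in BITS_PER_WEIGHT.items():
        gib = weight_bytes(n, bits) / 1024**3
        print(f"{model:<11} {fmt:<7} {gib:6.1f} GiB  fits: {fits_in_vram(n, bits)}")
```

Under these assumptions Llama3 8B in F16 fits only barely (about 15 GiB of weights), while Llama3 70B needs roughly 40 GiB even at Q4_K_M.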

Let's break down what we know:

Llama3 8B: Token Generation Speed on NVIDIA 4080_16GB

| Model | Quantization | Token generation speed (tokens/s) |
|---|---|---|
| Llama3 8B | Q4_K_M | 106.22 |
| Llama3 8B | F16 | 40.29 |

Analysis:

On the 4080_16GB, the Llama3 8B model generates text about 2.6 times faster with Q4_K_M quantization than with F16 (106.22 vs. 40.29 tokens/s).

Why is this?

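When generating text one token at a time, the GPU has to read essentially all of the model's weights from memory for every new token, so speed is limited mostly by memory bandwidth rather than raw compute. Q4_K_M weights take up roughly a third of the space of F16 weights, so each token requires far less data to move, even though the weights are stored at lower precision.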
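A back-of-the-envelope check of this explanation: if every weight is read once per token, generation speed cannot exceed memory bandwidth divided by the size of the weights. The sketch assumes a memory bandwidth of about 716.8 GB/s for the RTX 4080 (its commonly quoted spec), about 8.03 billion parameters for Llama3 8B, and roughly 4.85 bits per weight for Q4_K_M; the measured speeds come from the table above.

```python
# Bandwidth-bound ceiling: tokens/s <= memory bandwidth / bytes read per token.
BANDWIDTH_GBPS = 716.8                      # assumed RTX 4080 memory bandwidth, GB/s
PARAMS = 8.03e9                             # approximate Llama3 8B parameter count
BITS = {"Q4_K_M": 4.85, "F16": 16.0}        # Q4_K_M bits/weight is an approximation
MEASURED = {"Q4_K_M": 106.22, "F16": 40.29} # tokens/s measured on the 4080_16GB

def ceiling_tokens_per_s(bits: float) -> float:
    """Upper bound on tokens/s if every weight is read once per generated token."""
    bytes_per_token = PARAMS * bits / 8
    return BANDWIDTH_GBPS * 1e9 / bytes_per_token

for fmt, bits in BITS.items():
    ceiling = ceiling_tokens_per_s(bits)
    print(f"{fmt:<7} ceiling ~{ceiling:5.1f} tok/s, measured {MEASURED[fmt]:6.2f} "
          f"({MEASURED[fmt] / ceiling:.0%} of ceiling)")

print(f"measured speedup: {MEASURED['Q4_K_M'] / MEASURED['F16']:.2f}x")  # → measured speedup: 2.64x
```

Both measurements land below their estimated ceilings (roughly 90% for F16 and 70% for Q4_K_M under these assumptions). The quantized run plausibly sits further from its ceiling because fixed costs, such as dequantizing weights and reading the KV cache, weigh more when the weights themselves are small. Treat this as a rough model, not a profile.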

How To Choose:

If you prioritize speed, Q4_K_M is the clear winner for Llama3 8B on this card. If you need the highest output quality, for example for creative writing or translation, F16 keeps the model closer to its original precision, at the cost of speed.

Practical Recommendations

Here's how to apply this information to your own projects:

- Prioritize speed? Go with Q4_K_M quantization. For Llama3 8B on the 4080_16GB, it generates about 2.6 times as many tokens per second as F16.

- Need accuracy? F16 keeps the weights at 16-bit precision, trading speed and memory for fidelity, which can matter for complex tasks.

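- More memory, more choice: Llama3 70B needs roughly 40 GB for its weights even with Q4_K_M, more than a 16 GB or even a 24 GB card (such as the 4090) can hold. Running it on a single consumer GPU means offloading part of the model to system RAM, which is much slower; otherwise you need multiple GPUs or a card with more memory.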

FAQ: LLMs, Devices, and Performance

Here are some common questions you might have:

What are LLMs?

LLMs are computer programs trained on massive amounts of text data. Think of them as incredibly clever AI assistants that can generate text, translate languages, write different kinds of creative content, and answer your questions in a way that feels natural.

What is Token Speed?

Token speed is a way to measure how fast an LLM can process text. It's measured in tokens per second (tokens/s).

Why is Token Speed Important?

Higher token speed means faster responses. It's critical for interactive applications, such as chatbots and real-time content generation.
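To put the two measured speeds in everyday terms, here is the arithmetic for a 500-token reply (an arbitrary, illustrative length) at the two Llama3 8B speeds measured on the 4080_16GB. It counts generation time only and ignores prompt processing, so real waits would be somewhat longer.

```python
# Time to generate a reply of a given length at a given generation speed.
# Ignores prompt processing and time-to-first-token.
def reply_seconds(n_tokens: int, tokens_per_s: float) -> float:
    return n_tokens / tokens_per_s

for label, speed in [("Q4_K_M", 106.22), ("F16", 40.29)]:
    print(f"{label}: 500-token reply in ~{reply_seconds(500, speed):.1f} s")
# → Q4_K_M: 500-token reply in ~4.7 s
# → F16: 500-token reply in ~12.4 s
```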

What is Quantization?

Quantization is a technique to shrink the size of LLMs, making them faster and more memory-efficient. It does this by storing the model's weights with fewer bits of precision.

Is Quantization Always Better?

No, not always. While lower precision quantization can make models faster, it can also lead to a loss of accuracy. The optimal level of quantization depends on your specific use case.

Which GPU is Best for LLMs?

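The best GPU for LLMs depends on your budget, the size of the model you're using, and your performance requirements. VRAM capacity decides which models and quantization formats fit at all, and memory bandwidth largely determines token generation speed.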

What are the Benefits of Running LLMs on a GPU?

GPUs are designed for massively parallel processing and have much higher memory bandwidth than typical CPUs, both of which suit the large matrix operations involved in running LLMs. This results in significantly faster performance compared to running them on a CPU.

Keywords

LLMs, Llama3, 8B, 70B, NVIDIA 4080_16GB, GPU, token speed, performance, quantization, Q4_K_M, F16, inference, processing, efficiency, speed, accuracy, model size, memory, parallel processing, deep learning, AI, natural language processing, NLP, chatbots, content generation, translation, language models, AI assistant, technology, computer science.