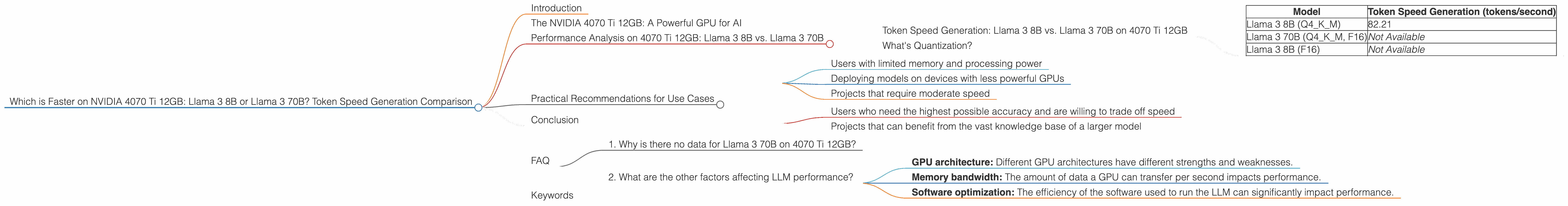

Which Is Faster on the NVIDIA 4070 Ti 12GB: Llama 3 8B or Llama 3 70B? Token Generation Speed Comparison

Introduction

The world of Large Language Models (LLMs) keeps growing, and new models like Llama 3 offer strong capabilities in natural language processing. But with these models comes a crucial question: how do they perform on different hardware?

In this article, we'll look at how Llama 3 8B and Llama 3 70B perform on an NVIDIA 4070 Ti 12GB graphics card: the battle of the titans!

We'll compare their token generation speed using published benchmark data. This data shows how the two models differ in speed on this specific GPU, so you can make an informed decision about which model fits your needs. So, fasten your seatbelts and get ready for a deep dive into the world of LLM performance!

The NVIDIA 4070 Ti 12GB: A Powerful GPU for AI

The NVIDIA 4070 Ti 12GB is a powerful GPU designed for gamers and creators, but it's also a great choice for running LLMs locally.

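Its 12GB of GDDR6X memory and processing power can handle the demanding computations involved in LLMs, as long as the model fits. In practice, the 12GB of video memory is the main limit on which models and precisions can run entirely on the card.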

Performance Analysis on 4070 Ti 12GB: Llama 3 8B vs. Llama 3 70B

Token Generation Speed: Llama 3 8B vs. Llama 3 70B on 4070 Ti 12GB

Let's dive into the numbers! We'll focus on token generation speed, measured in tokens per second, which is a key metric for developers and users who want to run these models locally. To make things clear, we'll use a simple analogy:

Imagine you're building a house. Every brick is a token. Token speed generation is how fast you can lay those bricks to finish the house.
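To make the metric concrete, here is a minimal sketch of how tokens per second can be measured: count the tokens a model emits and divide by the elapsed time. The `fake_generate` function is a hypothetical stand-in for a real model's streaming output, and the `clock` parameter is only there so the timing can be controlled in a test. Real benchmarks usually report prompt processing and generation separately, which this sketch does not do.

```python
import time
from typing import Callable, Iterable


def measure_tokens_per_second(
    generate: Callable[[], Iterable[str]],
    clock: Callable[[], float] = time.perf_counter,
) -> float:
    """Return the number of generated tokens divided by elapsed seconds."""
    start = clock()
    n_tokens = sum(1 for _ in generate())  # consume the stream, counting tokens
    elapsed = clock() - start
    if elapsed <= 0:
        raise ValueError("elapsed time must be positive")
    return n_tokens / elapsed


def fake_generate() -> Iterable[str]:
    """Hypothetical stand-in for a model that streams tokens one at a time."""
    for word in "every brick is a token".split():
        yield word


if __name__ == "__main__":
    rate = measure_tokens_per_second(fake_generate)
    print(f"{rate:.0f} tokens/second (toy generator, not a real model)")
```

With a real model, `generate` would wrap whatever call streams tokens from your inference library.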

Here's a table showcasing the speed comparison of Llama 3 models on a 4070 Ti 12GB:

| Model | Token Generation Speed (tokens/second) |
|---|---|
| Llama 3 8B (Q4_K_M) | 82.21 |
| Llama 3 70B (Q4_K_M, F16) | Not Available |
| Llama 3 8B (F16) | Not Available |

Analysis:

- Llama 3 8B (Q4_K_M): This model achieved a token generation speed of 82.21 tokens per second, which works out to roughly 12 milliseconds per token. That is comfortably fast for interactive use.

- Llama 3 70B: Unfortunately, we have no data for Llama 3 70B on this GPU in either configuration, nor for Llama 3 8B in F16. This highlights the need for more diverse benchmarks across different models and hardware to get a complete picture.
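To get a feel for what 82.21 tokens per second means in practice, the small sketch below converts the rate into per-token latency and into the time needed for a reply of a given length. The 500-token reply length is an arbitrary example, and the calculation covers generation only, not the time spent processing the prompt.

```python
def seconds_per_token(tokens_per_second: float) -> float:
    """Average time spent generating one token."""
    return 1.0 / tokens_per_second


def time_to_generate(n_tokens: int, tokens_per_second: float) -> float:
    """Seconds needed to generate n_tokens at a steady rate."""
    return n_tokens / tokens_per_second


RATE = 82.21  # tokens/second for Llama 3 8B (Q4_K_M), from the table above

if __name__ == "__main__":
    print(f"{seconds_per_token(RATE) * 1000:.1f} ms per token")           # 12.2 ms per token
    print(f"{time_to_generate(500, RATE):.1f} s for a 500-token reply")   # 6.1 s for a 500-token reply
```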

What's Quantization?

Let's quickly define quantization for non-techies: It's like creating a smaller version of the model, using less space and processing power, but with a tiny bit of compromise on accuracy. It's like carrying a lighter backpack on a hiking trip. You might have to leave a few things behind, but you're faster and can go farther!

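In this table, "Q4_K_M" is a 4-bit quantization format (the name comes from llama.cpp). "F16" is not a quantization technique in the same sense: it means the weights are stored as 16-bit floating-point numbers. An F16 model takes roughly three times as much memory as its Q4_K_M counterpart, which affects both whether it fits on the GPU and how fast it runs.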
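The idea behind quantization can be shown with a toy example. The sketch below maps a handful of weights to 4-bit integers (values from -8 to 7) using a single shared scale, then converts them back. This is a deliberately simplified scheme for illustration and is not the actual Q4_K_M algorithm, which works on blocks of weights and uses a more elaborate layout.

```python
from typing import List, Tuple


def quantize_4bit(weights: List[float]) -> Tuple[List[int], float]:
    """Map floats to integers in [-8, 7] using one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    quantized = [max(-8, min(7, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate floats from the 4-bit integers."""
    return [q * scale for q in quantized]


weights = [0.70, -0.42, 0.10, 0.02]
quantized, scale = quantize_4bit(weights)   # quantized is [7, -4, 1, 0]
restored = dequantize(quantized, scale)

if __name__ == "__main__":
    for original, approx in zip(weights, restored):
        print(f"{original:+.2f} -> {approx:+.2f} (error {abs(original - approx):.2f})")
```

Each weight now needs 4 bits instead of 16, at the cost of a small rounding error. That error is the "few things left behind" in the backpack analogy.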

Practical Recommendations for Use Cases

Based on the data we have, the Llama 3 8B model (Q4_K_M) is a good choice for users looking for a fast and efficient model on a 4070 Ti 12GB.

However, the absence of data for Llama 3 70B is not a measurement of how it performs; it just means we haven't found results for those configurations. Keep in mind, though, that a 70B model's weights are far larger than 12GB even at 4-bit precision, so running it on this card would mean keeping part of the model in system memory, which typically costs a lot of speed.

Here's a quick breakdown:

Llama 3 8B (Q4_K_M) is great for:

- Users with limited memory and processing power

- Deploying models on devices with less powerful GPUs

- Projects that require moderate speed

Llama 3 70B (Q4_K_M, F16) is potentially good for:

- Users who need the highest possible accuracy and are willing to trade off speed

- Projects that can benefit from the vast knowledge base of a larger model

Keep in mind: The best model choice depends on your specific needs and constraints.

Conclusion

While our data is limited, it sheds light on the performance of Llama 3 models on an NVIDIA 4070 Ti 12GB. Hopefully, in the future, we'll have more data to provide a more comprehensive comparison of different models and configurations. This will empower developers to make well-informed choices about the right LLM for their projects.

FAQ

1. Why is there no data for Llama 3 70B on 4070 Ti 12GB?

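This can be due to a few reasons. It could be that the model has not yet been tested on this specific hardware, or that the results are not publicly available. The most likely obstacle, though, is memory: at 16 bits per weight a 70B model needs about 140GB for its weights alone, and even at around 4 bits per weight it needs roughly 35GB or more, far beyond the 12GB on this card. The same reasoning applies to Llama 3 8B in F16, whose weights take about 16GB.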
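A rough back-of-the-envelope check makes the memory argument concrete. The sketch below estimates weight size as parameters times bits per weight. The parameter counts are the nominal 8 and 70 billion, and the 4.85 bits per weight used for Q4_K_M is an approximate assumption, since that format mixes several bit widths. The estimate covers weights only; the context cache and working buffers need additional memory on top.

```python
VRAM_GB = 12.0  # memory on the 4070 Ti 12GB

# Assumption: approximate average bits per weight for each format.
BITS_PER_WEIGHT = {"Q4_K_M": 4.85, "F16": 16.0}

# Assumption: nominal parameter counts taken from the model names.
PARAMS = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}


def weight_size_gb(n_params: float, bits_per_weight: float) -> float:
    """Estimated size of the weights alone, in gigabytes (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


if __name__ == "__main__":
    for model, n_params in PARAMS.items():
        for fmt, bits in BITS_PER_WEIGHT.items():
            size = weight_size_gb(n_params, bits)
            verdict = "fits" if size < VRAM_GB else "does not fit"
            print(f"{model} {fmt}: ~{size:.1f} GB, {verdict} in {VRAM_GB:.0f} GB")
```

Under these assumptions only Llama 3 8B in Q4_K_M fits, which matches the one configuration that has a result in the table.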

2. What are the other factors affecting LLM performance?

Besides the model size and quantization techniques, other crucial factors include:

- GPU architecture: Different GPU architectures have different strengths and weaknesses.

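- Memory bandwidth: How quickly the GPU can read data from its own memory. Generating each token requires reading the model's weights, so bandwidth often limits generation speed more than raw compute does.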

- Software optimization: The efficiency of the software used to run the LLM can significantly impact performance.
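The memory bandwidth point can be turned into a rough ceiling on generation speed. If every generated token requires reading all of the model's weights once, then tokens per second cannot exceed bandwidth divided by model size. The sketch below applies this rule of thumb. Both inputs are assumptions that do not come from the benchmark data: 504 GB/s is the commonly quoted spec-sheet bandwidth for this card, and 4.9 GB is an approximate size for an 8B Q4_K_M weight file.

```python
def max_decode_tokens_per_second(bandwidth_gb_s: float, model_size_gb: float) -> float:
    """Rule-of-thumb ceiling: one full read of the weights per generated token."""
    return bandwidth_gb_s / model_size_gb


BANDWIDTH_GB_S = 504.0  # assumption: spec-sheet memory bandwidth of the 4070 Ti
MODEL_SIZE_GB = 4.9     # assumption: approximate size of 8B Q4_K_M weights
MEASURED = 82.21        # tokens/second, the measured result for Llama 3 8B (Q4_K_M)

ceiling = max_decode_tokens_per_second(BANDWIDTH_GB_S, MODEL_SIZE_GB)

if __name__ == "__main__":
    print(f"ceiling: ~{ceiling:.0f} tokens/s")                  # ceiling: ~103 tokens/s
    print(f"measured: {MEASURED} ({MEASURED / ceiling:.0%})")   # measured: 82.21 (80%)
```

Under these assumptions the measured speed sits at about 80% of the ceiling, which is consistent with generation being largely bandwidth-bound. The estimate ignores the context cache, compute time, and software overhead, so treat it as a sanity check and not as a prediction.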

Keywords

Llama 3, 8B, 70B, NVIDIA 4070 Ti 12GB, token generation speed, LLM performance, quantization, GPU, Q4_K_M, F16, benchmarks, natural language processing, AI