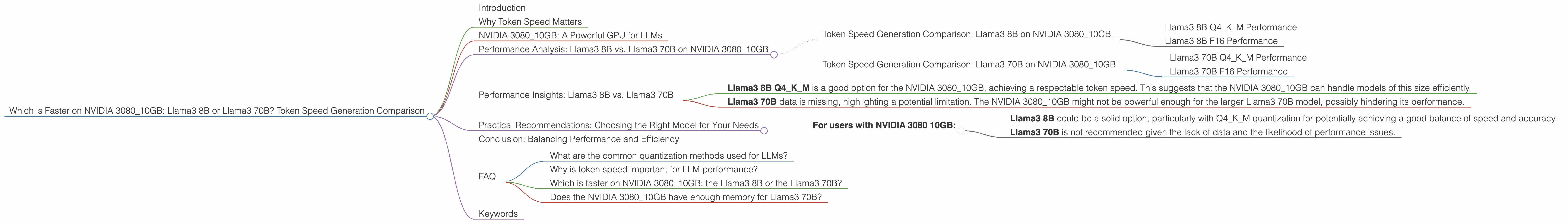

Which is Faster on NVIDIA 3080 10GB: Llama3 8B or Llama3 70B? Token Speed Generation Comparison

Introduction

The world of large language models (LLMs) is evolving rapidly, with new models and advancements emerging constantly. One of the major challenges in harnessing the power of these models is their computational demands. Running LLMs locally on your own machine can offer significant benefits, such as faster response times, increased privacy, and the ability to customize models for specific applications. However, this also requires powerful hardware, particularly a capable GPU.

This article focuses on the performance of two popular Llama3 models, Llama3 8B and Llama3 70B, on a specific GPU: the NVIDIA 3080 10GB. We'll look at their token generation speed and compare the results across different quantization formats.

Why Token Speed Matters

Token speed is a crucial metric for evaluating LLM performance. It measures how many tokens a model can generate per second. Imagine a fast typist versus a slow one - the fast typist finishes the same page sooner, and that's what token speed represents for an LLM. Higher token speed means faster responses and smoother interactions, and the difference grows with the length of the output.
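To make the metric concrete, here is a minimal sketch that converts a token speed into an approximate wait time. It assumes a steady generation rate and ignores prompt processing time; the function name is illustrative, and 106.4 tokens per second is the Llama3 8B figure reported later in this article.

```python
def generation_time_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Approximate time to generate num_tokens at a steady decoding rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


# 106.4 tokens/s is the Llama3 8B figure reported in this article.
for length in (100, 500, 2000):
    seconds = generation_time_seconds(length, 106.4)
    print(f"{length:>5} tokens -> {seconds:.1f} s")
```

The same function can be used to compare any two measured speeds for a response length that is typical of your workload.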

NVIDIA 3080 10GB: A Powerful GPU for LLMs

The NVIDIA 3080 10GB is a powerful graphics card that is commonly used for gaming and professional applications. It's also a popular choice for running LLMs locally thanks to its strong compute performance, although its 10 GB of VRAM limits how large a model it can hold. So how does it handle the demands of the Llama3 models? Let's dive into the data.

Performance Analysis: Llama3 8B vs. Llama3 70B on NVIDIA 3080 10GB

Token Speed Generation Comparison: Llama3 8B on NVIDIA 3080 10GB

Two weight formats are considered for the Llama3 8B model on the NVIDIA 3080 10GB:

- Q4_K_M: A 4-bit quantization format that stores weights in roughly 4 to 5 bits each on average instead of 16. This shrinks the model to roughly a third of its F16 size and is generally considered a good compromise between model size and accuracy.

- F16: Half-precision floating point, which uses 16 bits per weight. Strictly speaking this is the unquantized baseline rather than a quantization method; it preserves more accuracy than Q4_K_M but results in a much larger model.
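The size difference between the two formats can be estimated with simple arithmetic: parameter count times bits per weight. The sketch below does this under stated assumptions: nominal parameter counts of 8 and 70 billion, an average of about 4.8 bits per weight for Q4_K_M (real files vary), and weights only, with no allowance for the KV cache or activations.

```python
GIB = 1024 ** 3  # bytes per GiB

# Assumed values, for illustration only.
PARAMS = {"Llama3 8B": 8e9, "Llama3 70B": 70e9}  # nominal parameter counts
BITS_PER_WEIGHT = {"Q4_K_M": 4.8, "F16": 16.0}   # Q4_K_M average is approximate
VRAM_GIB = 10.0                                   # NVIDIA 3080 10GB


def weight_memory_gib(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory needed for the weights alone, in GiB."""
    return num_params * bits_per_weight / 8 / GIB


def fits_in_vram(num_params: float, bits_per_weight: float,
                 vram_gib: float = VRAM_GIB) -> bool:
    """True if the weights alone fit; real usage needs extra headroom."""
    return weight_memory_gib(num_params, bits_per_weight) < vram_gib


for model, params in PARAMS.items():
    for fmt, bits in BITS_PER_WEIGHT.items():
        size = weight_memory_gib(params, bits)
        verdict = "fits" if fits_in_vram(params, bits) else "does not fit"
        print(f"{model} {fmt}: ~{size:.1f} GiB of weights, "
              f"{verdict} in {VRAM_GIB:.0f} GiB")
```

Under these assumptions only the 8B model in Q4_K_M stays under 10 GiB; the other three combinations exceed it on weights alone.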

Llama3 8B Q4_K_M Performance

The Llama3 8B model with Q4_K_M quantization achieved a generation speed of 106.4 tokens per second. That is far faster than a person can read, so responses feel effectively instant in interactive use.
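The article does not describe how this figure was measured. As a rough illustration of what a tokens-per-second number means, the sketch below counts generated tokens and divides by wall-clock time. `fake_generate` is a hypothetical stand-in for a real model's streaming output, not part of any library.

```python
import time
from typing import Callable, Iterable


def measure_tokens_per_second(
    generate: Callable[[], Iterable[str]],
    clock: Callable[[], float] = time.perf_counter,
) -> float:
    """Count the tokens yielded by generate() and divide by elapsed time."""
    start = clock()
    num_tokens = sum(1 for _ in generate())
    elapsed = clock() - start
    if elapsed <= 0:
        raise ValueError("elapsed time must be positive")
    return num_tokens / elapsed


def fake_generate() -> Iterable[str]:
    """Hypothetical stand-in for a model: yields 20 tokens, about 5 ms apart."""
    for i in range(20):
        time.sleep(0.005)
        yield f"token{i}"


if __name__ == "__main__":
    print(f"{measure_tokens_per_second(fake_generate):.1f} tokens/s")
```

In practice you would replace `fake_generate` with a call into your inference runtime and average over several runs, since a single short run is noisy.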

Llama3 8B F16 Performance

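Unfortunately, no result is available for the Llama3 8B model in F16 on the NVIDIA 3080 10GB. One likely reason is memory: at 2 bytes per weight, 8 billion parameters need about 16 GB for the weights alone, which exceeds the card's 10 GB of VRAM.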

Token Speed Generation Comparison: Llama3 70B on NVIDIA 3080 10GB

Let's see how the larger Llama3 70B model fares on the same GPU:

Llama3 70B Q4_K_M Performance

Unfortunately, no result is available for the Llama3 70B model with Q4_K_M quantization on the NVIDIA 3080 10GB.

Llama3 70B F16 Performance

Unfortunately, no result is available for the Llama3 70B model in F16 on the NVIDIA 3080 10GB either.

Performance Insights: Llama3 8B vs. Llama3 70B

While we don't have direct comparison data for both models on the NVIDIA 3080 10GB, a few observations still hold:

- Llama3 8B Q4_K_M is a good option for the NVIDIA 3080 10GB, reaching 106.4 tokens per second. This suggests that the card handles quantized models of this size efficiently.

- Llama3 70B data is missing, which points to a likely limitation. With 70 billion parameters, the weights alone take roughly 35 to 40 GB even at about 4 bits per weight, so the model cannot fit in 10 GB of VRAM without offloading most of it to system memory.

Practical Recommendations: Choosing the Right Model for Your Needs

Choosing between Llama3 8B and Llama3 70B depends on your specific use cases and hardware capabilities:

- For users with NVIDIA 3080 10GB:

  - Llama3 8B is a solid option, particularly with Q4_K_M quantization, which offers a good balance of speed and accuracy.

  - Llama3 70B is not recommended, given the lack of data and the likelihood that it will not fit in the card's memory.

Conclusion: Balancing Performance and Efficiency

The NVIDIA 3080 10GB is a capable GPU, but it has clear limits. It handles the Llama3 8B model well with Q4_K_M quantization, while further testing is needed to understand how, or whether, Llama3 70B can run on this hardware.

FAQ

What are the common quantization methods used for LLMs?

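Quantization is like simplifying a complex recipe into more manageable steps. It stores each model weight with fewer bits, which reduces the size of an LLM and makes it easier to store and run on less powerful hardware. Many formats exist; the two covered in this article are F16 (the 16-bit baseline) and Q4_K_M (a 4-bit format).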

Why is token speed important for LLM performance?

Think of it as the typing speed on your keyboard. The faster tokens are generated, the shorter the wait for each response, which matters most for long outputs and interactive use.

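Which is faster on NVIDIA 3080 10GB: the Llama3 8B or the Llama3 70B?

Llama3 8B with Q4_K_M quantization is the only configuration with a measured result on the NVIDIA 3080 10GB: 106.4 tokens per second. Larger models generally generate tokens more slowly on the same hardware, so the 8B model is expected to be faster, but without Llama3 70B data a definitive comparison is not possible.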

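Does the NVIDIA 3080 10GB have enough memory for Llama3 70B?

Not for running the model entirely on the GPU. Even at about 4 bits per weight, 70 billion parameters need roughly 35 to 40 GB for the weights alone, well beyond the card's 10 GB of VRAM. Running it would mean offloading most of the model to system memory, which typically reduces token speed considerably, and no measurements for that setup are available here.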

Keywords

NVIDIA 3080 10GB, Llama3, Llama3 8B, Llama3 70B, Token Speed, Generation Speed, Quantization, Q4_K_M, F16, GPU, GPU Performance, LLM Model, Local LLM, LLM Inference, Performance Comparison, LLM Benchmark.