Which is Faster on NVIDIA 3070 8GB: Llama3 8B or Llama3 70B? Token Speed Generation Comparison

Introduction

The world of large language models (LLMs) is buzzing with excitement, with new models and advancements emerging constantly. One of the hottest topics is running these models locally on your own hardware. This allows for greater privacy and control over your data. While powerful LLMs like Llama3 70B are impressive, running them locally can be a challenge for less powerful hardware.

This article explores the performance of the Llama3 8B and Llama3 70B models on a popular mid-range GPU, the NVIDIA 3070_8GB, focusing on token generation speed, a key metric for LLM performance. We'll look at the available numbers, analyze the strengths and weaknesses of each model, and provide practical recommendations for choosing the best option for your needs.

Let's get started!

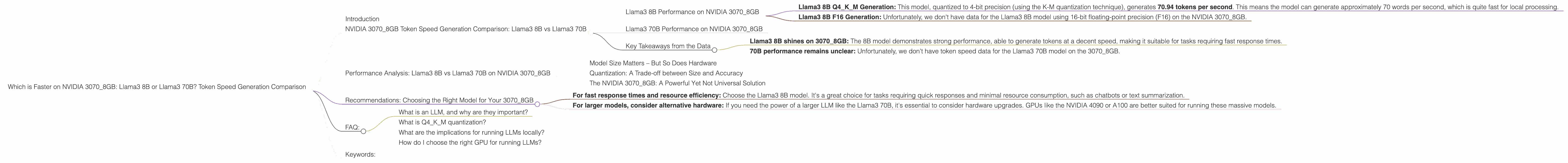

NVIDIA 3070_8GB Token Speed Generation Comparison: Llama3 8B vs Llama3 70B

Llama3 8B Performance on NVIDIA 3070_8GB

The Llama3 8B model, which is far smaller than the 70B model, performs well on the NVIDIA 3070_8GB. Here's a breakdown:

- Llama3 8B Q4_K_M Generation: This model, quantized to roughly 4-bit precision (Q4_K_M, the medium variant of the "k-quant" scheme), generates 70.94 tokens per second. A token is usually a bit shorter than a word, so this works out to somewhat fewer than 70 English words per second, which is still quite fast for local processing.

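- Llama3 8B F16 Generation: Unfortunately, we don't have data for the Llama3 8B model using 16-bit floating-point precision (F16) on the NVIDIA 3070_8GB. The most likely reason is memory: 8 billion weights at 2 bytes each come to about 16 GB, twice the card's 8 GB of VRAM.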
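To put the 70.94 tokens per second figure in practical terms, the arithmetic can be sketched as follows. The `WORDS_PER_TOKEN` value of 0.75 is an assumption (a common rule of thumb for English text, not a measurement from this benchmark), and the function names are illustrative only.

```python
# Measured rate from the benchmark above.
TOKENS_PER_SECOND = 70.94
# Assumption: rough rule of thumb for English; the real ratio depends on the
# tokenizer and the text being generated.
WORDS_PER_TOKEN = 0.75


def seconds_to_generate(num_tokens: int,
                        tokens_per_second: float = TOKENS_PER_SECOND) -> float:
    """Return the time needed to generate num_tokens at a steady rate."""
    return num_tokens / tokens_per_second


def words_per_second(tokens_per_second: float = TOKENS_PER_SECOND,
                     words_per_token: float = WORDS_PER_TOKEN) -> float:
    """Convert a token rate into an approximate word rate."""
    return tokens_per_second * words_per_token


if __name__ == "__main__":
    print(f"500-token reply: {seconds_to_generate(500):.1f} s")
    print(f"Approximate words per second: {words_per_second():.0f}")
```

Under these assumptions, a 500-token reply takes about 7 seconds, and the word rate is closer to 53 words per second than 70.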

Llama3 70B Performance on NVIDIA 3070_8GB

We have no performance data for the much larger Llama3 70B model on the NVIDIA 3070_8GB in either the Q4_K_M or the F16 configuration. This is likely due to the hardware limitations of the 3070_8GB: even at roughly 4 bits per weight, 70 billion weights need far more than 8 GB of memory.

Key Takeaways from the Data

- Llama3 8B shines on 3070_8GB: The 8B model demonstrates strong performance, generating about 71 tokens per second with Q4_K_M quantization, which makes it suitable for tasks requiring fast response times.

- 70B performance remains unclear: Unfortunately, we don't have token speed data for the Llama3 70B model on the 3070_8GB.
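For readers who want to collect their own numbers, tokens per second is simply the count of generated tokens divided by the wall-clock time spent generating them. The sketch below assumes a hypothetical `generate` callable that yields tokens one at a time; `fake_generate` is a stand-in so the example runs without a model, and neither name comes from any real library. How the benchmark figures in this article were actually measured is not stated in the source.

```python
import time
from typing import Callable, Iterable


def measure_tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Time a token stream and return generated tokens per second."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())  # consume the stream, counting tokens
    elapsed = time.perf_counter() - start
    return count / elapsed if elapsed > 0 else float("inf")


def fake_generate(num_tokens: int = 50, delay_s: float = 0.001):
    """Hypothetical stand-in for a model: yields one token per delay_s."""
    for i in range(num_tokens):
        time.sleep(delay_s)
        yield f"tok{i}"


if __name__ == "__main__":
    rate = measure_tokens_per_second(fake_generate)
    print(f"{rate:.1f} tokens/s")
```

With a real model, you would replace `fake_generate` with your own streaming call and decide whether prompt processing time should be included in the measurement.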

Performance Analysis: Llama3 8B vs Llama3 70B on NVIDIA 3070_8GB

Model Size Matters – But So Does Hardware

The Llama3 8B model's success on the 3070_8GB can be attributed to its smaller size. Smaller models demand less memory and compute, so they run more efficiently on less powerful hardware. The 70B model, on the other hand, has nearly nine times as many parameters and needs far more memory than the 3070_8GB's 8 GB can provide.
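A back-of-the-envelope estimate makes the size argument concrete: the memory needed for the weights alone is the parameter count times the bits per weight. In the sketch below, the 4.8 bits per weight for Q4_K_M is an assumed average (the exact figure varies by model), the parameter counts are rounded, and the estimate ignores the KV cache and other runtime overhead, so real usage will be higher.

```python
GIB = 1024 ** 3   # bytes per GiB
VRAM_GIB = 8.0    # memory on the 3070_8GB

# Rounded parameter counts.
MODELS = {"Llama3 8B": 8e9, "Llama3 70B": 70e9}
# Bits per weight; the Q4_K_M value is an assumed average, not an exact figure.
FORMATS = {"F16": 16.0, "Q4_K_M": 4.8}


def weight_memory_gib(num_params: float, bits_per_weight: float) -> float:
    """Memory needed for the weights alone, in GiB."""
    return num_params * bits_per_weight / 8 / GIB


def fits_in_vram(num_params: float, bits_per_weight: float,
                 vram_gib: float = VRAM_GIB) -> bool:
    """True if the weights alone fit in VRAM (ignores KV cache and overhead)."""
    return weight_memory_gib(num_params, bits_per_weight) <= vram_gib


if __name__ == "__main__":
    for model, params in MODELS.items():
        for fmt, bits in FORMATS.items():
            size = weight_memory_gib(params, bits)
            verdict = "fits" if fits_in_vram(params, bits) else "does not fit"
            print(f"{model} {fmt}: {size:.1f} GiB, {verdict} in {VRAM_GIB:.0f} GiB")
```

Under these assumptions, only the 8B model at Q4_K_M fits on the card, which matches the one configuration for which benchmark data exists.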

Quantization: A Trade-off between Size and Accuracy

The use of Q4_K_M quantization helps to reduce the memory footprint of the Llama3 8B model, allowing it to run effectively on the 3070_8GB. However, quantization can lead to a slight decrease in accuracy compared to using F16 precision.

The NVIDIA 3070_8GB: A Powerful Yet Not Universal Solution

The NVIDIA 3070_8GB is a popular and capable GPU, but it's important to understand its limitations. While it can handle smaller LLMs like the Llama3 8B effectively, its 8 GB of VRAM is not enough for larger models like the Llama3 70B.

Recommendations: Choosing the Right Model for Your 3070_8GB

- For fast response times and resource efficiency: Choose the Llama3 8B model. It's a great choice for tasks requiring quick responses and minimal resource consumption, such as chatbots or text summarization.

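- For larger models, consider alternative hardware: If you need the power of a larger LLM like the Llama3 70B, it's essential to consider hardware upgrades. A 4-bit 70B model needs roughly 40 GB for its weights, so look at GPUs with much more memory, such as an 80 GB NVIDIA A100, or a multi-GPU setup. Even a 24 GB NVIDIA 4090 cannot hold the whole model on its own.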

FAQ:

What is an LLM, and why are they important?

LLMs are powerful Artificial Intelligence (AI) models trained on massive datasets of text and code, enabling them to understand and generate human-like language. LLMs are revolutionizing numerous fields, including language translation, content creation, and code generation.

What is Q4_K_M quantization?

Quantization is a technique used to reduce the size of AI models. Instead of storing each weight as a 16-bit or 32-bit floating-point value, Q4_K_M stores most weights in roughly 4 bits each, using the "k-quant" scheme (the "M" stands for the medium variant). This significantly decreases memory usage without compromising accuracy too much.
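The general idea can be illustrated with a toy example. The sketch below is a deliberately simplified block-wise 4-bit quantizer, not the actual Q4_K_M algorithm, which uses a more elaborate layout; the function names and sample weights are made up for illustration. Each block of weights is mapped to integer codes from 0 to 15 plus a scale and an offset, and the round trip shows the small error that quantization introduces.

```python
from typing import List, Tuple

LEVELS = 15  # 4 bits give 16 levels, coded as integers 0..15


def quantize_block(values: List[float]) -> Tuple[List[int], float, float]:
    """Map a block of floats to 4-bit codes plus a scale and an offset."""
    lo, hi = min(values), max(values)
    # Fall back to 1.0 for a constant block to avoid dividing by zero.
    scale = (hi - lo) / LEVELS or 1.0
    codes = [min(LEVELS, max(0, round((v - lo) / scale))) for v in values]
    return codes, scale, lo


def dequantize_block(codes: List[int], scale: float, lo: float) -> List[float]:
    """Reconstruct approximate floats from the 4-bit codes."""
    return [c * scale + lo for c in codes]


if __name__ == "__main__":
    weights = [0.12, -0.48, 0.33, 0.05, -0.07, 0.41, -0.29, 0.18]  # made-up values
    codes, scale, lo = quantize_block(weights)
    restored = dequantize_block(codes, scale, lo)
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print("codes:", codes)
    print(f"worst-case error: {worst:.4f} (bounded by scale/2 = {scale / 2:.4f})")
```

The reconstruction error for each weight is at most half the scale, which is why smaller blocks with their own scale tend to preserve accuracy better at the cost of a little extra storage.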

What are the implications for running LLMs locally?

Running LLMs locally on personal devices opens exciting possibilities for privacy and control over data. It allows for on-device processing without relying on cloud services, which removes network round trips and keeps your data on your own machine.

How do I choose the right GPU for running LLMs?

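The ideal GPU for running LLMs depends on the specific model and your desired performance, and the first thing to check is whether the model's weights fit in the GPU's memory. For smaller models, a mid-range GPU like the 3070_8GB can be sufficient. However, very large models require GPUs with far more memory, such as an 80 GB A100, or several GPUs working together.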

Keywords:

Llama3 8B, Llama3 70B, NVIDIA 3070_8GB, Token Speed Generation, LLM, Large Language Model, GPU, Performance Comparison, Quantization, Q4_K_M, F16, Local Processing, On-Device Inference, AI, Machine Learning, Deep Learning