Which is Faster on Apple M1 Max: Llama3 8B or Llama2 7B? Token Speed Generation Comparison

Introduction

The world of large language models (LLMs) is buzzing with excitement, and for good reason! These powerful AI models can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But having all this power at your fingertips is only half the story. To truly unleash the potential of LLMs, you need the right hardware to run them efficiently. This is where the Apple M1 Max chip enters the picture. Its powerful GPU and blazing-fast memory make it an ideal candidate for running LLMs locally.

In this article, we'll dive deep into the performance of two popular LLMs, Llama3 8B and Llama2 7B, running on the Apple M1 Max chip. We'll compare their token generation speeds across different quantization levels (F16, Q8_0, and Q4_0 for Llama2 7B; F16 and Q4_K_M for Llama3 8B) and explore which model reigns supreme. Buckle up, geeks, as we embark on this thrilling journey!

Apple M1 Max Token Speed Generation: A Detailed Look at Llama3 8B and Llama2 7B

The Apple M1 Max chip, with its impressive GPU and memory, is a strong contender for running LLMs locally. But which model, Llama3 8B or Llama2 7B, reigns supreme in terms of token generation speed on this powerhouse device? Let's break down the numbers and explore the performance of each model across different quantization levels.

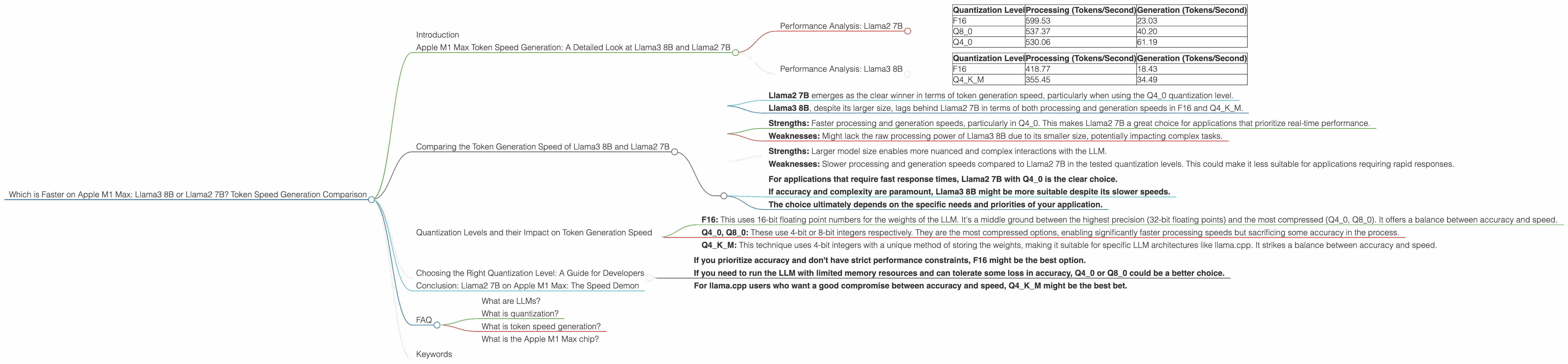

Performance Analysis: Llama2 7B

Let's first take a closer look at the performance of the Llama2 7B model on the Apple M1 Max at different quantization levels:

| Quantization Level | Processing (Tokens/Second) | Generation (Tokens/Second) |
|---|---|---|
| F16 | 599.53 | 23.03 |
| Q8_0 | 537.37 | 40.20 |
| Q4_0 | 530.06 | 61.19 |

Key Observations:

- Llama2 7B processes prompts at roughly 530 to 600 tokens/second at every quantization level tested. F16 is the fastest for processing, about 13% ahead of Q4_0.

- Notably, the Q4_0 quantization level delivers the fastest generation speed at 61.19 tokens/second, about 2.7 times the F16 rate of 23.03 tokens/second.

- This suggests that moving to lower-bit quantization sacrifices some precision but brings a significant gain in generation speed for Llama2 7B on the M1 Max.
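To put numbers on these observations, here is a minimal sketch that expresses each generation speed as a multiple of the F16 baseline. The figures are copied from the Llama2 7B table above; the function name `speedup_over_f16` is just an illustrative choice.

```python
# Generation speeds (tokens/second) copied from the Llama2 7B table above.
llama2_generation = {"F16": 23.03, "Q8_0": 40.20, "Q4_0": 61.19}


def speedup_over_f16(speeds):
    """Return each level's generation speed as a multiple of the F16 speed."""
    baseline = speeds["F16"]
    return {level: tps / baseline for level, tps in speeds.items()}


if __name__ == "__main__":
    for level, factor in speedup_over_f16(llama2_generation).items():
        print(f"{level}: {factor:.2f}x the F16 generation speed")
```

On this data, Q8_0 generates roughly 1.7 times as fast as F16 and Q4_0 roughly 2.7 times as fast.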

Performance Analysis: Llama3 8B

Now, let's shift our focus to the Llama3 8B model and its performance on the Apple M1 Max:

| Quantization Level | Processing (Tokens/Second) | Generation (Tokens/Second) |
|---|---|---|
| F16 | 418.77 | 18.43 |
| Q4_K_M | 355.45 | 34.49 |

Key Observations:

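- Llama3 8B is slower than Llama2 7B at prompt processing in F16 (418.77 vs. 599.53 tokens/second, about 30% slower), and its Q4_K_M processing speed of 355.45 tokens/second trails Llama2 7B's Q4_0 figure of 530.06 by about a third.

- Llama3 8B achieves a generation speed of 34.49 tokens/second using Q4_K_M quantization, which falls well short of Llama2 7B's Q4_0 generation speed of 61.19 tokens/second.

- It's important to note that this data covers only F16 and Q4_K_M for Llama3 8B, while Llama2 7B was measured at F16, Q8_0, and Q4_0. Q4_K_M and Q4_0 are different 4-bit formats, so the 4-bit comparison is not strictly like for like.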

Comparing the Token Generation Speed of Llama3 8B and Llama2 7B

Now, let's put these two contenders head-to-head and compare their token generation speeds on the Apple M1 Max.

Overall Performance:

- Llama2 7B emerges as the clear winner in terms of token generation speed, particularly when using the Q4_0 quantization level.

- Llama3 8B lags behind Llama2 7B in both processing and generation speed, at F16 and at 4-bit (Q4_K_M vs. Q4_0). This is consistent with its larger parameter count: more weights have to be read for every token.
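The size of the gap can be worked out directly from the two tables. The sketch below divides each Llama3 8B figure by the matching Llama2 7B figure; the "4-bit" label pairs Q4_K_M with Q4_0, which is an approximation since they are different formats.

```python
# Tokens/second from the two tables above, stored as (processing, generation).
llama2 = {"F16": (599.53, 23.03), "4-bit": (530.06, 61.19)}  # 4-bit = Q4_0
llama3 = {"F16": (418.77, 18.43), "4-bit": (355.45, 34.49)}  # 4-bit = Q4_K_M


def relative_speed(model, reference):
    """Return (processing ratio, generation ratio) of model vs. reference per level."""
    return {
        level: (model[level][0] / reference[level][0],
                model[level][1] / reference[level][1])
        for level in model
    }


ratios = relative_speed(llama3, llama2)

if __name__ == "__main__":
    for level, (proc, gen) in ratios.items():
        print(f"{level}: processing {proc:.0%}, generation {gen:.0%} of Llama2 7B")
```

Llama3 8B reaches about 80% of Llama2 7B's generation speed at F16 but only about 56% in the 4-bit comparison, so the gap is widest exactly where Llama2 7B is fastest.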

Strengths and Weaknesses:

Llama2 7B:

- Strengths: Faster processing and generation speeds, particularly in Q4_0. This makes Llama2 7B a great choice for applications that prioritize real-time performance.

- Weaknesses: As a smaller and older model, it may produce lower-quality output than Llama3 8B on complex tasks. Note that this benchmark measures speed only, not output quality.

Llama3 8B:

- Strengths: Its larger parameter count may allow more nuanced responses on complex tasks, although output quality was not measured here.

- Weaknesses: Slower processing and generation speeds compared to Llama2 7B in the tested quantization levels. This could make it less suitable for applications requiring rapid responses.

Practical Recommendations:

- For applications that require fast response times, Llama2 7B with Q4_0 is the clear choice.

- If accuracy and complexity are paramount, Llama3 8B might be more suitable despite its slower speeds.

- The choice ultimately depends on the specific needs and priorities of your application.
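To see what these speeds mean for response time, here is a rough sketch that estimates end-to-end latency as prompt tokens divided by processing speed plus output tokens divided by generation speed. The 512-token prompt and 256-token reply are a hypothetical workload, and the model is deliberately simplified: it ignores model load time, sampling overhead, and any slowdown at longer contexts.

```python
def estimated_latency(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Rough seconds per request: time to read the prompt plus time to write the reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Hypothetical workload (an assumption, not part of the benchmark).
PROMPT_TOKENS = 512
OUTPUT_TOKENS = 256

# (processing, generation) tokens/second from the tables above.
llama2_q4_0 = estimated_latency(PROMPT_TOKENS, OUTPUT_TOKENS, 530.06, 61.19)
llama3_q4_k_m = estimated_latency(PROMPT_TOKENS, OUTPUT_TOKENS, 355.45, 34.49)

if __name__ == "__main__":
    print(f"Llama2 7B Q4_0:   {llama2_q4_0:.1f} s")
    print(f"Llama3 8B Q4_K_M: {llama3_q4_k_m:.1f} s")
```

For this workload the estimate comes to roughly 5 seconds for Llama2 7B and roughly 9 seconds for Llama3 8B, with generation time dominating in both cases.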

Quantization Levels and their Impact on Token Generation Speed

Quantization is a technique used to reduce the size of LLMs while maintaining a reasonable level of accuracy. Think of it like compressing a video file – you reduce the file size, but you might lose some visual quality. The same principle applies here. Let's break down different quantization levels and how they impact the performance of our two LLMs:

- F16: This uses 16-bit floating-point numbers for the weights of the LLM. It sits between full 32-bit precision and the integer-quantized formats (Q8_0, Q4_0, Q4_K_M). It preserves the most accuracy of the levels tested but had the slowest generation speed.

- Q8_0, Q4_0: These store weights as 8-bit and 4-bit integers respectively. They shrink the model substantially and, in the results above, raised generation speed considerably at the cost of some accuracy. Prompt processing, by contrast, was slightly slower than with F16.

- Q4_K_M: This is a 4-bit format available in llama.cpp (an inference runtime, not a model architecture) that stores weights with a different block scheme than Q4_0. It aims for better accuracy than plain 4-bit quantization at a similar size.
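The idea behind integer quantization can be sketched in a few lines. The example below is a simplified illustration, not llama.cpp's exact storage layout: it assumes blocks of 32 weights, keeps one floating-point scale per block, and rounds each weight to a small signed integer. The names `quantize_block`, `dequantize_block`, and `max_error` are made up for this sketch.

```python
import math

BLOCK_SIZE = 32  # assumed block length for this illustration


def quantize_block(weights, bits):
    """Symmetric quantization of one block: one float scale plus small signed integers."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8-bit, 7 for 4-bit
    amax = max(abs(w) for w in weights)
    scale = amax / qmax if amax else 1.0
    quants = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate weights from the scale and the stored integers."""
    return [scale * q for q in quants]


def max_error(weights, bits):
    """Largest absolute difference between original and restored weights."""
    scale, quants = quantize_block(weights, bits)
    restored = dequantize_block(scale, quants)
    return max(abs(w - r) for w, r in zip(weights, restored))


# Synthetic example weights; real model weights would come from a checkpoint.
weights = [math.sin(i) for i in range(BLOCK_SIZE)]

if __name__ == "__main__":
    for bits in (8, 4):
        print(f"{bits}-bit max rounding error: {max_error(weights, bits):.4f}")
```

Fewer bits mean fewer representable values per block, so the 4-bit rounding error is larger than the 8-bit one. That is the accuracy cost paid for the smaller, faster model.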

Choosing the Right Quantization Level: A Guide for Developers

So how do you choose the right quantization level for your application? It boils down to a careful balancing act between accuracy, speed, and memory footprint. Here's a quick guide:

- If you prioritize accuracy and don't have strict performance constraints, F16 might be the best option.

- If you need to run the LLM with limited memory resources and can tolerate some loss in accuracy, Q8_0 or Q4_0 could be a better choice.

- For llama.cpp users who want a good compromise between accuracy and speed, Q4_K_M might be the best bet.
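Memory footprint is the easiest of the three factors to estimate up front. The sketch below multiplies a parameter count by the bits stored per weight. Two things here are assumptions rather than measurements: the nominal parameter counts (7 billion and 8 billion, taken from the model names) and the bits-per-weight figures for Q8_0 and Q4_0, which assume 32-weight blocks that each carry one 16-bit scale. Q4_K_M is left out because its mixed layout does not reduce to a single simple figure.

```python
# Bits per weight, ASSUMING 32-weight blocks with one 16-bit scale per block.
BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q8_0": (32 * 8 + 16) / 32,  # 8.5 bits per weight
    "Q4_0": (32 * 4 + 16) / 32,  # 4.5 bits per weight
}

# Nominal parameter counts implied by the model names (an assumption).
NOMINAL_PARAMS = {"Llama2 7B": 7e9, "Llama3 8B": 8e9}


def model_size_gb(n_params, bits_per_weight):
    """Approximate weight storage in gigabytes (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


if __name__ == "__main__":
    for model, n_params in NOMINAL_PARAMS.items():
        for level, bpw in BITS_PER_WEIGHT.items():
            print(f"{model} {level}: ~{model_size_gb(n_params, bpw):.1f} GB")
```

Under these assumptions a 7B model shrinks from about 14 GB at F16 to about 4 GB at Q4_0. The estimate covers weights only; the context cache and runtime buffers need memory on top of that.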

Conclusion: Llama2 7B on Apple M1 Max: The Speed Demon

Our analysis has shown that Llama2 7B with Q4_0 quantization is the clear winner in terms of token generation speed on the Apple M1 Max, reaching 61.19 tokens/second. It's a blazing-fast option for real-time applications. While Llama3 8B offers a larger model size, it was slower at both prompt processing and generation in these tests.

Remember, the best choice for you ultimately depends on the specific requirements of your application. If you're looking for a speed demon, Llama2 7B on the Apple M1 Max is hard to beat!

FAQ

What are LLMs?

LLMs are AI models that have been trained on massive amounts of text data. They can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way.

What is quantization?

Quantization is a technique used to reduce the size of LLMs, which helps to improve speed and reduce memory usage. It works by reducing the number of bits used to represent each weight in a language model. Think of it like compressing a video file - you reduce the file size, but you might lose some visual quality. The same principle applies here. The fewer bits you use, the more the model is compressed and the faster it tends to generate tokens, but the less accurate it might be.

What is token speed generation?

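Token speed generation refers to the speed at which an LLM can generate tokens (pieces of text that represent words or parts of words), measured in tokens per second. In the tables above, "Processing" is the rate at which the model reads the prompt and "Generation" is the rate at which it produces new tokens. It's an important metric for evaluating LLM performance, especially in applications where fast response times are critical.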
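If you want to measure this yourself, the basic recipe is to count tokens and divide by elapsed time. Here is a minimal sketch that times any iterable of tokens. The `fake_token_stream` generator is a hypothetical stand-in for a real model's streaming output, so the numbers it produces say nothing about any actual model.

```python
import time


def measure_tokens_per_second(token_stream):
    """Consume an iterable of tokens and return (token_count, tokens_per_second)."""
    start = time.perf_counter()
    count = 0
    for _ in token_stream:
        count += 1
    elapsed = time.perf_counter() - start
    rate = count / elapsed if elapsed > 0 else float("inf")
    return count, rate


def fake_token_stream(n_tokens, delay_s):
    """Hypothetical stand-in for a model: yields one token every delay_s seconds."""
    for i in range(n_tokens):
        time.sleep(delay_s)
        yield f"tok{i}"


if __name__ == "__main__":
    count, rate = measure_tokens_per_second(fake_token_stream(20, 0.01))
    print(f"{count} tokens at {rate:.1f} tokens/second")
```

To benchmark a real model, you would pass its streaming output in place of `fake_token_stream` and average over several runs.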

What is the Apple M1 Max chip?

The Apple M1 Max is a powerful chip designed by Apple for its high-end MacBook Pro laptops and the Mac Studio desktop. It features a powerful GPU and unified memory with up to 400 GB/s of bandwidth, making it a capable choice for running computationally intensive tasks like LLM inference.

Keywords

LLMs, Llama2, Llama3, Apple M1 Max, token speed, generation, quantization, F16, Q4_0, Q8_0, Q4_K_M, performance, comparison, benchmark, developer, AI, machine learning, natural language processing, NLP