# Which is Faster on Apple M1 Max: Llama3 70B or Llama2 7B? Token Speed Generation Comparison

## Introduction

The world of large language models (LLMs) is exploding, with new models popping up almost daily. This opens up a world of possibilities for developers and researchers, but it can also be overwhelming to keep track of all the options. One decision you'll need to make is choosing the right model for your needs and how to get the most out of it with the available hardware.

This article compares the speed of two popular LLMs, Llama2 7B and Llama3 70B, on the Apple M1 Max chip. We'll analyze their token generation speed under different quantization settings (F16, Q8_0, and Q4_0 for Llama2 7B; Q4_K_M for Llama3 70B) and discuss their strengths and weaknesses. Buckle up, it's gonna be an exciting ride!

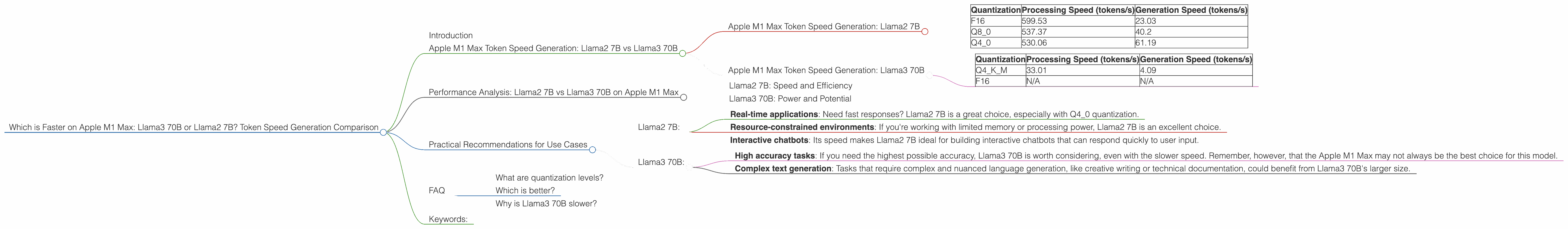

## Apple M1 Max Token Speed Generation: Llama2 7B vs Llama3 70B

The Apple M1 Max chip is a beast when it comes to local AI processing, and it can run both of these models. The numbers below come from llama.cpp and the GPU Benchmarks on LLM Inference project, and all of them are in tokens per second. Processing speed is how fast the model reads the prompt; generation speed is how fast it produces new tokens.

### Apple M1 Max Token Speed Generation: Llama2 7B

Let's start with the smaller but potentially faster model—Llama2 7B. This model is known for its efficiency and balance between quality and resource requirements.

| Quantization | Processing Speed (tokens/s) | Generation Speed (tokens/s) |
|---|---|---|
| F16 | 599.53 | 23.03 |
| Q8_0 | 537.37 | 40.2 |
| Q4_0 | 530.06 | 61.19 |

As you can see, Llama2 7B generates tokens noticeably faster at lower-precision quantization. This makes sense: producing each token means reading the model's weights from memory, so smaller weights mean less data to move. The tradeoff is a possible slight reduction in accuracy. Prompt processing, by contrast, gets slightly slower as precision drops (from 599.53 to 530.06 tokens/s).

For example, Q4_0 quantization offers the fastest generation speed (61.19 tokens/s), roughly 2.7 times that of F16 (23.03 tokens/s), but with lower precision. This is like trading some image quality for a file that loads much faster.
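The speedups implied by the table can be checked with a few lines of Python. This is only a minimal sketch: the numbers are copied from the table above, and the names `generation_tps` and `speedup_over` are made up for illustration.

```python
# Generation speeds (tokens/s) for Llama2 7B on the M1 Max, copied from the table above.
generation_tps = {"F16": 23.03, "Q8_0": 40.2, "Q4_0": 61.19}


def speedup_over(baseline: str, speeds: dict[str, float]) -> dict[str, float]:
    """Return each entry's speed as a multiple of the baseline entry."""
    base = speeds[baseline]
    return {name: tps / base for name, tps in speeds.items()}


for name, factor in speedup_over("F16", generation_tps).items():
    print(f"{name}: {factor:.2f}x the F16 generation speed")
```

Swapping in the processing-speed column instead shows the opposite trend, since prompt processing is slightly faster at F16.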

### Apple M1 Max Token Speed Generation: Llama3 70B

Now, let's move on to the heavyweight champion, Llama3 70B. This model is a powerhouse, boasting a significantly larger parameter count and potential for higher accuracy.

| Quantization | Processing Speed (tokens/s) | Generation Speed (tokens/s) |
|---|---|---|
| Q4_K_M | 33.01 | 4.09 |
| F16 | N/A | N/A |

Unfortunately, there is no data available for Llama3 70B at F16 on the Apple M1 Max. A likely reason is memory: 70 billion parameters at 16 bits each come to roughly 140 GB of weights, more than the 64 GB of unified memory the M1 Max tops out at. This highlights a key point: not every combination of model, precision, and device will have readily available performance data, or even be able to run.

While Llama3 70B with Q4_K_M quantization generates tokens far more slowly than Llama2 7B (4.09 vs. 61.19 tokens/s at Q4_0, roughly 15 times slower), it's important to consider that it has ten times as many parameters. It's like comparing a compact sports car to a luxury SUV: they both move, but their intended roles are different.
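To see why some model and precision combinations are out of reach, here is a rough back-of-the-envelope sketch of weight memory. It rests on assumptions that are not from the benchmark data: the parameter counts are the nominal 7 and 70 billion, the Q4_K_M bits-per-weight figure is an approximation, and the estimate ignores the KV cache and everything else that also needs memory.

```python
# Rough weight-memory estimate: parameters x bits per weight / 8.
# Q8_0 (8.5) and Q4_0 (4.5) include the per-block scale factor; the Q4_K_M
# value (~4.85) is an approximation assumed here for illustration.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5, "Q4_K_M": 4.85}
M1_MAX_MAX_MEMORY_GB = 64  # largest unified-memory configuration of the M1 Max


def weight_size_gb(n_params: float, quant: str) -> float:
    """Approximate size of the weights alone, in gigabytes (10^9 bytes)."""
    return n_params * BITS_PER_WEIGHT[quant] / 8 / 1e9


for model, n_params in [("Llama2 7B", 7e9), ("Llama3 70B", 70e9)]:
    for quant in BITS_PER_WEIGHT:
        size = weight_size_gb(n_params, quant)
        verdict = "fits" if size < M1_MAX_MAX_MEMORY_GB else "does not fit"
        print(f"{model} {quant}: ~{size:.1f} GB ({verdict} in {M1_MAX_MAX_MEMORY_GB} GB)")
```

Under these assumptions, only the roughly 4-bit variants of the 70B model leave any headroom on a 64 GB machine.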

## Performance Analysis: Llama2 7B vs Llama3 70B on Apple M1 Max

Both Llama2 7B and Llama3 70B run on the Apple M1 Max, which says a lot about this chip, but the results are very different.

### Llama2 7B: Speed and Efficiency

Llama2 7B shines when it comes to speed. It processes prompts at 530 to 600 tokens/s at every precision tested, against 33.01 tokens/s for Llama3 70B, and its generation speed peaks at 61.19 tokens/s with Q4_0 quantization. This makes it a great option for applications that require fast responses and real-time interaction.

### Llama3 70B: Power and Potential

Llama3 70B, despite its impressive size, can't keep up with Llama2 7B on the Apple M1 Max: 4.09 tokens/s of generation at Q4_K_M is slow for interactive use. Its size is the main culprit, since ten times as many parameters means far more data to move and compute for every token.
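What these speeds mean for a single request can be estimated by adding prompt-processing time to generation time. The sketch below uses the measured speeds from the tables; the request size (a 512-token prompt and a 256-token reply) is a hypothetical example, and `response_seconds` is an illustrative helper rather than part of any library.

```python
# Measured speeds (tokens/s) copied from the tables above.
SPEEDS = {
    "Llama2 7B Q4_0": {"prompt": 530.06, "generation": 61.19},
    "Llama3 70B Q4_K_M": {"prompt": 33.01, "generation": 4.09},
}


def response_seconds(model: str, prompt_tokens: int, output_tokens: int) -> float:
    """Estimate total latency as prompt-processing time plus generation time."""
    speeds = SPEEDS[model]
    return prompt_tokens / speeds["prompt"] + output_tokens / speeds["generation"]


# Hypothetical request: a 512-token prompt and a 256-token reply.
for model in SPEEDS:
    print(f"{model}: ~{response_seconds(model, 512, 256):.1f} s")
```

For this request the estimate is about five seconds for Llama2 7B and well over a minute for Llama3 70B, which is the gap to weigh when picking a model for interactive use.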

## Practical Recommendations for Use Cases

Llama2 7B:

- Real-time applications: Need fast responses? Llama2 7B is a great choice, especially with Q4_0 quantization.

- Resource-constrained environments: If you're working with limited memory or processing power, Llama2 7B is an excellent choice.

- Interactive chatbots: Its speed makes Llama2 7B ideal for building interactive chatbots that can respond quickly to user input.

Llama3 70B:

- High accuracy tasks: If you need the highest possible accuracy, Llama3 70B is worth considering, even with the slower speed. Remember, however, that the Apple M1 Max may not always be the best choice for this model.

- Complex text generation: Tasks that require complex and nuanced language generation, like creative writing or technical documentation, could benefit from Llama3 70B's larger size.

## FAQ

### What are quantization levels?

Quantization is like compressing a digital image to a smaller file size: it reduces the amount of information stored, which makes the model smaller and faster to work with. In our case, "F16" stores each weight in 16 bits, "Q8_0" uses about 8 bits, and "Q4_0" and "Q4_K_M" use about 4 bits. Imagine painting with a broader brush: you cover the canvas faster, but you lose some fine detail.
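To make the idea concrete, here is a simplified sketch of 8-bit block quantization, where a group of weights shares one scale factor and each weight is stored as a small integer. It is in the spirit of formats like Q8_0 but is not llama.cpp's actual implementation: the block here holds only 8 made-up values for readability, and the function names are illustrative.

```python
def quantize_block(values: list[float]) -> tuple[float, list[int]]:
    """Map floats to signed 8-bit integers that share one scale factor."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    return scale, [round(v / scale) for v in values]


def dequantize_block(scale: float, quants: list[int]) -> list[float]:
    """Recover approximate floats from the stored integers and the scale."""
    return [q * scale for q in quants]


weights = [0.12, -0.53, 0.98, -0.07, 0.31, 0.0, -0.88, 0.45]  # made-up values
scale, quants = quantize_block(weights)
restored = dequantize_block(scale, quants)
worst_error = max(abs(w - r) for w, r in zip(weights, restored))

print(f"scale={scale:.5f} quants={quants}")
print(f"largest round-trip error: {worst_error:.5f}")
```

The round-trip error is never larger than half the scale factor. A 4-bit scheme follows the same idea with far fewer integer levels, so the error per weight grows while the storage shrinks.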

### Which is better?

There is no single “better” LLM. It all depends on your specific needs. If you need speed, Llama2 7B is faster, but if you need highly accurate results, Llama3 70B might be the way to go.

### Why is Llama3 70B slower?

The primary reason for Llama3 70B's slower performance on the M1 Max is its size. The sheer number of parameters in this model puts a significant strain on the chip's resources, resulting in slower processing and generation speeds.
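One way to reason about the size effect is a simple bandwidth ceiling: if generating each token requires reading every weight once, tokens per second cannot exceed memory bandwidth divided by model size. The sketch below rests on assumptions rather than measurements: 400 GB/s is Apple's quoted peak memory bandwidth for the M1 Max, the parameter counts are nominal, and the bits-per-weight values are approximate.

```python
# Assumptions, not measurements: 400 GB/s is Apple's quoted peak memory
# bandwidth for the M1 Max, and the bits-per-weight values are approximate.
PEAK_BANDWIDTH_GB_PER_S = 400.0


def ceiling_tokens_per_s(n_params: float, bits_per_weight: float) -> float:
    """Upper bound on generation speed if each token reads all weights once."""
    weight_gb = n_params * bits_per_weight / 8 / 1e9
    return PEAK_BANDWIDTH_GB_PER_S / weight_gb


# (name, nominal parameter count, approx. bits per weight, measured tokens/s)
cases = [
    ("Llama2 7B Q4_0", 7e9, 4.5, 61.19),
    ("Llama3 70B Q4_K_M", 70e9, 4.85, 4.09),
]
for name, n_params, bpw, measured in cases:
    ceiling = ceiling_tokens_per_s(n_params, bpw)
    print(f"{name}: ceiling ~{ceiling:.1f} tokens/s, measured {measured} tokens/s")
```

Both measured speeds sit below their ceilings, and the ceiling itself shrinks roughly in proportion to model size, which is consistent with the 70B model being an order of magnitude slower.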

Keywords:

Llama2, Llama3, LLM, Apple M1 Max, Token Speed, Generation Speed, Quantization, F16, Q8_0, Q4_0, Q4_K_M, Performance, Comparison, Device, Inference, AI, Natural Language Processing, NLP, Deep Learning.