Which is Better for Running LLMs Locally: NVIDIA RTX A6000 48GB or NVIDIA A100 PCIe 80GB? Ultimate Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is exploding, and the ability to run them locally is becoming increasingly important for developers and researchers. But with so many different GPUs available, it can be hard to know which one is best for your needs.

This article compares the performance of two popular GPUs, the NVIDIA RTX A6000 48GB and the NVIDIA A100 PCIe 80GB, on the Llama 3 8B and 70B models. We present the data in an accessible way to help you understand the strengths and weaknesses of each GPU and get the most out of your LLM development.

Performance Analysis of RTX A6000 48GB vs A100 PCIe 80GB

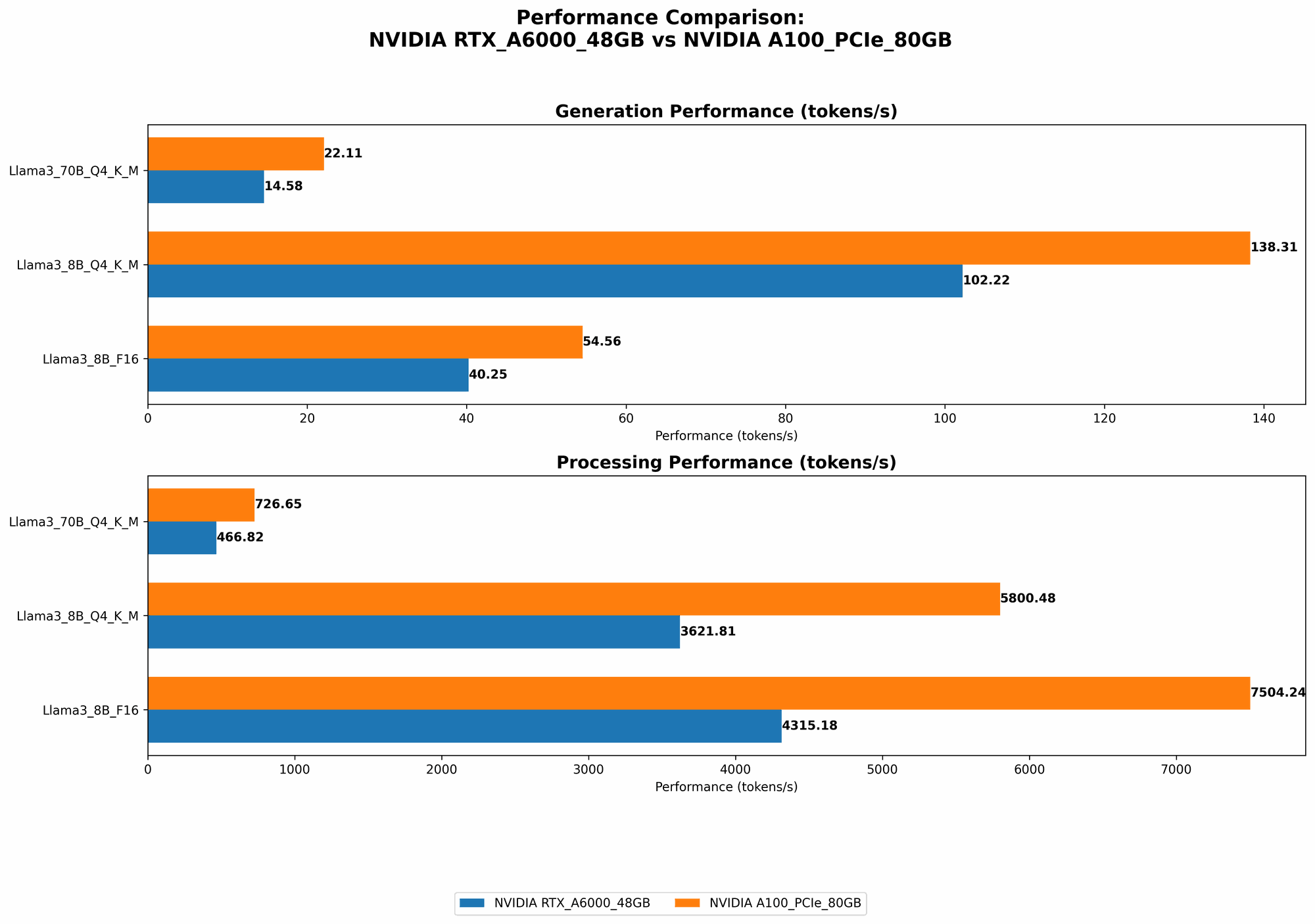

Comparison of NVIDIA RTX A6000 48GB and NVIDIA A100 PCIe 80GB on Llama 3 8B and 70B models

Let's dive into the performance data and analyze the differences between the two GPUs:

| Model | GPU | Generation (tokens/second) | Processing (tokens/second) |
|---|---|---|---|
| Llama 3 8B | RTX A6000 48GB | 102.22 | 3621.81 |
| Llama 3 8B | A100 PCIe 80GB | 138.31 | 5800.48 |
| Llama 3 70B | RTX A6000 48GB | 14.58 | 466.82 |
| Llama 3 70B | A100 PCIe 80GB | 22.11 | 726.65 |

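The A100 PCIe 80GB outperforms the RTX A6000 48GB on every measurement. On Llama 3 8B it is about 35% faster at generation and about 60% faster at processing; on Llama 3 70B it is about 52% and 56% faster, respectively. Both cards are built on NVIDIA's Ampere architecture, so the gap comes mainly from the A100's data-center-class chip and its HBM2e memory, which offers far higher bandwidth than the A6000's GDDR6.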
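The size of the gap can be computed directly from the table. The following is a minimal sketch that only divides the A100 figures by the RTX A6000 figures; the `BENCHMARKS` dictionary and the `speedup` helper are illustrative names, not part of any benchmark tool.

```python
# Throughput figures copied from the table above (tokens/second).
BENCHMARKS = {
    ("8B", "RTX A6000 48GB"): {"generation": 102.22, "processing": 3621.81},
    ("8B", "A100 PCIe 80GB"): {"generation": 138.31, "processing": 5800.48},
    ("70B", "RTX A6000 48GB"): {"generation": 14.58, "processing": 466.82},
    ("70B", "A100 PCIe 80GB"): {"generation": 22.11, "processing": 726.65},
}


def speedup(model: str, metric: str) -> float:
    """Return how many times faster the A100 is than the RTX A6000."""
    a100 = BENCHMARKS[(model, "A100 PCIe 80GB")][metric]
    a6000 = BENCHMARKS[(model, "RTX A6000 48GB")][metric]
    return a100 / a6000


for model in ("8B", "70B"):
    for metric in ("generation", "processing"):
        print(f"Llama 3 {model} {metric}: {speedup(model, metric):.2f}x")
```

The ratios come out between roughly 1.35x and 1.60x, so the A100's advantage is consistent but well short of a doubling on these workloads.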

Remember: These figures come from one specific configuration. Your results may differ depending on software versions, drivers, and the rest of your hardware.

NVIDIA RTX A6000 48GB: Strengths and Weaknesses

The RTX A6000 48GB is a powerful GPU designed for professional workloads like 3D rendering and deep learning. It features a large 48GB of GDDR6 memory, which is beneficial for handling large datasets and models. Here's a breakdown of its strengths and weaknesses:

Strengths

- Large Memory: Its 48GB of GDDR6 memory is enough to hold large models on a single card, including the Llama 3 70B configuration benchmarked above.

- Good Overall Performance: While not as fast as the A100, it still delivers 102.22 tokens/second of generation on Llama 3 8B, making it a solid option for smaller models.

- More Affordable: The RTX A6000 generally comes at a lower price tag compared to the A100, making it a more cost-effective choice for some.

Weaknesses

- Lower Performance: It trails the A100 on every benchmark above, and the generation gap widens with model size: the A100 is about 35% faster on Llama 3 8B and about 52% faster on Llama 3 70B.

- Limited Availability: These GPUs are in high demand, and it can be challenging to obtain one, especially during periods of increased demand.

NVIDIA A100 PCIe 80GB: Strengths and Weaknesses

The NVIDIA A100 PCIe 80GB is a top-tier GPU designed for high-performance computing and AI applications. It boasts a massive 80GB of HBM2e memory and a powerful Ampere architecture, delivering exceptional performance for even the most demanding LLM workloads.

Strengths

- Exceptional Performance: It outperforms the RTX A6000 in both token generation and processing. Its generation lead grows from about 35% on Llama 3 8B to about 52% on Llama 3 70B.

- High Memory Bandwidth: Its 80GB of HBM2e memory delivers roughly 1,935 GB/s of bandwidth, about 2.5 times the 768 GB/s of the RTX A6000's GDDR6. Token generation depends heavily on how fast weights can be read from memory, so the extra bandwidth helps generation speed.

- Tensor Core Acceleration: Both cards have third-generation Tensor Cores for accelerating matrix multiplication, but the A100 offers higher tensor throughput, which helps most in compute-heavy prompt processing.
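One rough way to see why memory bandwidth matters for generation: producing each token requires reading approximately all of the model's weights once, so bandwidth divided by model size gives a ceiling on tokens per second. The sketch below uses NVIDIA's published peak bandwidth figures (768 GB/s and 1,935 GB/s). The 35 GB model size is an assumption (70 billion parameters at a nominal 4 bits per weight), and `generation_ceiling` is a hypothetical helper.

```python
# Published peak memory bandwidth, in GB/s.
BANDWIDTH_GB_S = {"RTX A6000 48GB": 768.0, "A100 PCIe 80GB": 1935.0}

# Assumption: a 70B-parameter model at a nominal 4 bits per weight (about 35 GB).
ASSUMED_MODEL_SIZE_GB = 35.0

# Measured Llama 3 70B generation rates from the table above (tokens/second).
MEASURED_70B = {"RTX A6000 48GB": 14.58, "A100 PCIe 80GB": 22.11}


def generation_ceiling(bandwidth_gb_s: float, model_size_gb: float) -> float:
    """Upper bound on tokens/second if every token reads all weights once."""
    return bandwidth_gb_s / model_size_gb


for gpu, bandwidth in BANDWIDTH_GB_S.items():
    ceiling = generation_ceiling(bandwidth, ASSUMED_MODEL_SIZE_GB)
    measured = MEASURED_70B[gpu]
    print(f"{gpu}: ceiling {ceiling:.1f} tok/s, measured {measured:.2f} tok/s")
```

Both measured rates sit below their ceilings, as expected for an upper bound. Real throughput also depends on kernel efficiency and other overheads, which is one reason the measured gap is smaller than the raw bandwidth ratio.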

Weaknesses

- High Cost: The A100 PCIe 80GB comes with a high price tag, making it a significant investment for anyone interested in LLM development.

- Limited Availability: Like the RTX A6000, finding an A100, especially the PCIe version, might be challenging due to high demand.

Practical Recommendations

To help you decide which GPU is right for you, let's consider some real-world scenarios:

- Limited Budget, Smaller Models: If you are working with smaller models like Llama 3 8B and have a limited budget, the RTX A6000 48GB is a viable option. At 102.22 tokens/second of generation, it is fast enough for interactive use.

- High Performance, Large Models: If you need the best possible performance for large models like Llama 3 70B and have the budget, the A100 PCIe 80GB is the clear winner: 22.11 tokens/second of generation versus 14.58 on the RTX A6000.

- Cost-Efficiency and Flexibility: If you need to handle both small and large models, consider using the RTX A6000 for smaller models and cloud-based services like Google Colab or Amazon SageMaker for larger ones.
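The budget trade-off in the scenarios above can be framed as throughput per unit of price. The sketch below is one possible way to compare the two cards. The `better_value` helper is hypothetical, and the prices in the example are placeholders in relative units, not real quotes; substitute the prices you are actually offered.

```python
def better_value(price_a6000: float, price_a100: float,
                 speed_a6000: float, speed_a100: float) -> str:
    """Return the GPU that delivers more tokens/second per unit of price."""
    if price_a6000 <= 0 or price_a100 <= 0:
        raise ValueError("prices must be positive")
    a6000_value = speed_a6000 / price_a6000
    a100_value = speed_a100 / price_a100
    return "A100 PCIe 80GB" if a100_value > a6000_value else "RTX A6000 48GB"


# Llama 3 70B generation rates from the table (tokens/second).
A6000_70B, A100_70B = 14.58, 22.11

# Placeholder prices in relative units (the RTX A6000 price is set to 1.0).
print(better_value(1.0, 2.0, A6000_70B, A100_70B))  # A100 at twice the price
print(better_value(1.0, 1.4, A6000_70B, A100_70B))  # A100 at 1.4x the price
```

On this metric the break-even point is the speed ratio itself: for Llama 3 70B generation, the A100 is the better value only if it costs less than about 1.52 times the RTX A6000.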

Quantization: Understanding the Impact on Performance

Quantization reduces a model's memory footprint and increases its inference speed by using fewer bits to represent the model's weights and, in some schemes, its activations. It is often used to deploy large LLMs on devices with limited memory and processing power.
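To make the idea concrete, here is a toy sketch of symmetric 4-bit quantization applied to a handful of weights. It is a simplified illustration only: production formats such as those in llama.cpp use more elaborate block-wise schemes, and the function names and sample weights here are made up for the example.

```python
def quantize_4bit(weights):
    """Map floats to signed 4-bit integer codes in [-8, 7] with one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs else 1.0
    codes = [max(-8, min(7, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate float weights from the integer codes."""
    return [c * scale for c in codes]


weights = [0.12, -0.53, 0.98, -0.05, 0.40]  # made-up sample weights
codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)

for w, c, r in zip(weights, codes, restored):
    print(f"original {w:+.2f} -> code {c:+d} -> restored {r:+.2f}")
```

Each weight is stored as a small integer plus a shared scale, which is where the memory saving comes from. The difference between the original and restored values is the rounding error that can cost some accuracy.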

In our data, "Q4" indicates that the model has been quantized using 4 bits per value. This leads to a significant reduction in model size and memory usage but can result in a slight decrease in accuracy.
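The effect on memory can be estimated with simple arithmetic: parameters times bits per weight, divided by eight to get bytes. The sketch below uses nominal parameter counts (8 billion and 70 billion) as an assumption and counts weights only; the KV cache and runtime buffers need additional memory, so treat the results as lower bounds. `weight_memory_gb` is a hypothetical helper.

```python
GPU_MEMORY_GB = {"RTX A6000 48GB": 48, "A100 PCIe 80GB": 80}

# Nominal parameter counts (assumption; exact counts differ slightly).
MODELS = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}


def weight_memory_gb(num_parameters: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_parameters * bits_per_weight / 8 / 1e9


for name, params in MODELS.items():
    for bits in (16, 4):
        size = weight_memory_gb(params, bits)
        fits = [gpu for gpu, vram in GPU_MEMORY_GB.items() if size <= vram]
        print(f"{name} at {bits}-bit: ~{size:.0f} GB, fits on: {fits or 'neither card'}")
```

Under these assumptions, a 70B model at 16 bits exceeds the memory of either card, while the 4-bit version fits on both, which is why quantization matters so much for running large models on a single GPU.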

FAQs

What are LLMs?

LLMs are machine learning models trained on vast amounts of text data. They can understand, generate, and translate text, making them incredibly versatile in applications like text summarization, chatbots, and code generation.

What is the difference between token generation and processing?

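- Token generation: The speed at which the LLM produces new tokens (words or sub-words) in response to its input. Tokens are generated one at a time, so this figure determines how quickly a response appears.

- Token processing: The speed at which the LLM reads the tokens of the input prompt before it starts generating. Prompt tokens can be processed in parallel, which is why the processing figures in the table are much higher than the generation figures.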
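The two rates combine into a rough estimate of how long a single request takes: time to process the prompt plus time to generate the reply. The sketch below applies the Llama 3 70B figures from the table to a hypothetical request of 1,000 prompt tokens and 200 output tokens; the workload and the `request_seconds` helper are assumptions for illustration.

```python
def request_seconds(prompt_tokens: int, output_tokens: int,
                    processing_tps: float, generation_tps: float) -> float:
    """Estimate request time as prompt-processing time plus generation time."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Llama 3 70B rates from the table: (processing, generation) in tokens/second.
RATES_70B = {
    "RTX A6000 48GB": (466.82, 14.58),
    "A100 PCIe 80GB": (726.65, 22.11),
}

# Hypothetical workload: 1,000 prompt tokens in, 200 tokens out.
for gpu, (processing_tps, generation_tps) in RATES_70B.items():
    seconds = request_seconds(1000, 200, processing_tps, generation_tps)
    print(f"{gpu}: about {seconds:.1f} s per request")
```

For a request of this shape, generation dominates the total even though the prompt is five times longer than the reply, so the generation rate is usually the number to watch for chat-style workloads.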

How can I run LLMs locally?

To run LLMs locally, you need a GPU with enough memory for your model and a software framework such as llama.cpp or Hugging Face Transformers. The llama.cpp repository and the Hugging Face documentation both provide setup guides and tutorials.
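As one possible starting point, the sketch below uses the llama-cpp-python bindings for llama.cpp. It assumes you have installed the package with GPU support and downloaded a model in GGUF format; the `generate` wrapper and the model path in the usage note are hypothetical.

```python
from pathlib import Path


def generate(model_path: str, prompt: str, max_tokens: int = 64) -> str:
    """Load a GGUF model with llama-cpp-python and return the completion text."""
    if not Path(model_path).is_file():
        raise FileNotFoundError(f"GGUF model not found: {model_path}")

    # Imported here so the missing-file check works without the package installed.
    from llama_cpp import Llama

    llm = Llama(
        model_path=model_path,
        n_gpu_layers=-1,  # offload all layers to the GPU
        n_ctx=2048,       # context window size in tokens
        verbose=False,
    )
    result = llm(prompt, max_tokens=max_tokens)
    return result["choices"][0]["text"]
```

A call might look like `generate("models/llama-3-8b-q4.gguf", "Explain quantization in one sentence.")`, where the file name is a placeholder for whichever GGUF file you downloaded.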

Keywords

Large Language Models, LLMs, NVIDIA, RTX A6000, A100, PCIe, GPU, Performance, Benchmark, Llama, Token Generation, Token Processing, Quantization, Local Deployment, Inference, Deep Learning, AI, GPU Memory, Cost, Availability, Development, Research, Tokenization, Hugging Face, llama.cpp.