Which is Better for Running LLMs Locally: NVIDIA RTX 5000 Ada 32GB or NVIDIA L40S 48GB? Ultimate Benchmark Analysis

Introduction

The world of large language models (LLMs) is exploding, and the need for powerful hardware to run them locally is growing. For developers, researchers, and enthusiasts, the ability to experiment with and deploy LLMs on their own machines is a powerful tool. But with so many GPUs on the market, it's hard to know which one is right for you.

This article dives deep into the performance of two GPUs that are common choices for running LLMs: the NVIDIA RTX 5000 Ada 32GB and the NVIDIA L40S 48GB. Which one comes out on top? We'll compare their performance on Llama 3 models, analyze their strengths and weaknesses, and offer practical recommendations for choosing the right device.

Comparison of NVIDIA RTX 5000 Ada 32GB and NVIDIA L40S 48GB

Let's break down the key specs and compare the two GPUs in terms of their performance:

- NVIDIA RTX 5000 Ada 32GB: This workstation card features 32GB of GDDR6 memory and 12,800 CUDA cores (the building blocks for parallel processing in NVIDIA GPUs). NVIDIA lists its memory bandwidth at 576 GB/s.

- NVIDIA L40S 48GB: With 48GB of GDDR6 memory, the L40S has half again as much capacity as the RTX 5000 Ada. It has 18,176 CUDA cores, and NVIDIA lists its memory bandwidth at 864 GB/s. However, while the L40S is a powerhouse for professional workloads, it is a data center card and may be overkill for hobbyist use.

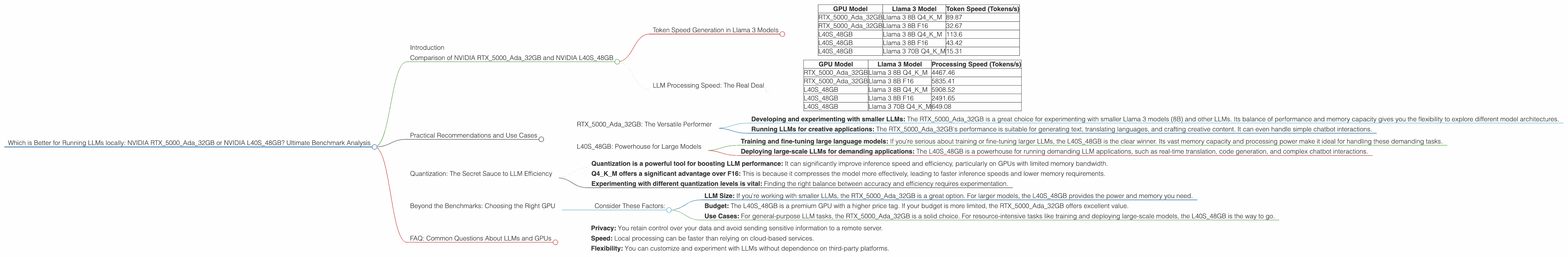

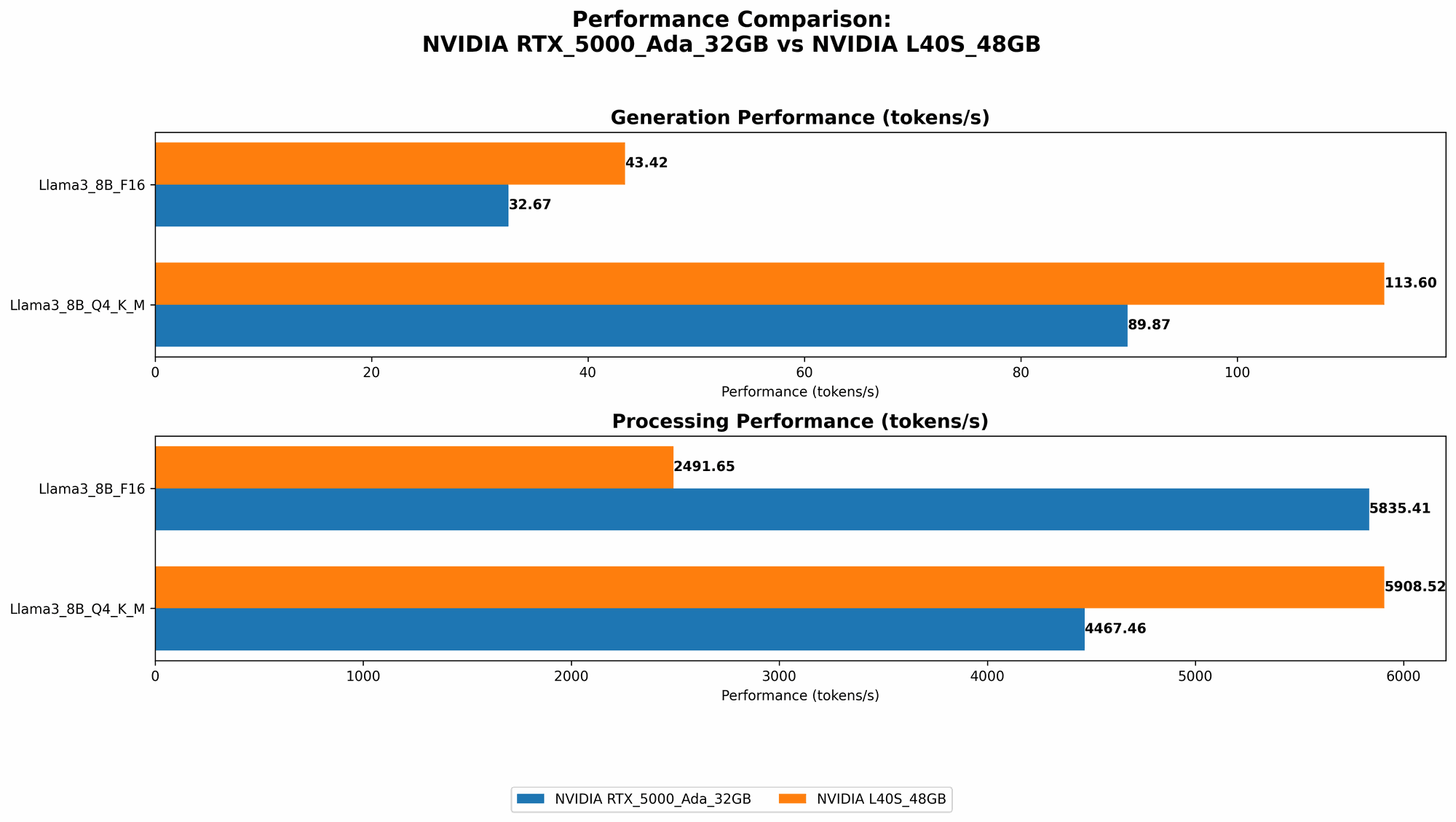

Token Speed Generation in Llama 3 Models

The table below summarizes the token generation performance of each GPU on different configurations of the Llama 3 model. The higher the number, the more tokens the GPU can generate per second, which translates to faster inference speeds.

| GPU Model | Llama 3 Model | Token Speed (tokens/s) |
|---|---|---|
| RTX 5000 Ada 32GB | Llama 3 8B Q4_K_M | 89.87 |
| RTX 5000 Ada 32GB | Llama 3 8B F16 | 32.67 |
| L40S 48GB | Llama 3 8B Q4_K_M | 113.6 |
| L40S 48GB | Llama 3 8B F16 | 43.42 |
| L40S 48GB | Llama 3 70B Q4_K_M | 15.31 |

Observations:

- L40S 48GB leads in token generation: On Llama 3 8B, the L40S 48GB is about 26% faster at Q4_K_M (113.6 vs. 89.87 tokens/s) and about 33% faster at F16 (43.42 vs. 32.67 tokens/s). This is likely due to its higher memory bandwidth. The table has no 70B result for the RTX 5000 Ada 32GB, most likely because the model does not fit in 32GB, so the 70B row cannot be compared directly.

- Q4_K_M quantization speeds up generation: Q4_K_M (a quantization format that reduces model size and memory footprint) generates roughly 2.6 to 2.75 times as many tokens per second as F16 (half precision) on both GPUs. Generating each token requires reading the model's weights from memory, so a smaller model means less data to move per token.
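The ratios behind these observations can be reproduced from the table. The snippet below is a minimal sketch: the numbers are copied from the table above, and the function names `gpu_speedup` and `quantization_gain` are made up for this example.

```python
# Token generation rates (tokens/s) copied from the table above.
GENERATION_TPS = {
    ("RTX 5000 Ada 32GB", "8B Q4_K_M"): 89.87,
    ("RTX 5000 Ada 32GB", "8B F16"): 32.67,
    ("L40S 48GB", "8B Q4_K_M"): 113.6,
    ("L40S 48GB", "8B F16"): 43.42,
}


def gpu_speedup(config: str) -> float:
    """How many times faster the L40S generates than the RTX 5000 Ada."""
    return (GENERATION_TPS[("L40S 48GB", config)]
            / GENERATION_TPS[("RTX 5000 Ada 32GB", config)])


def quantization_gain(gpu: str) -> float:
    """How many times faster Q4_K_M generates than F16 on one GPU."""
    return GENERATION_TPS[(gpu, "8B Q4_K_M")] / GENERATION_TPS[(gpu, "8B F16")]


for config in ("8B Q4_K_M", "8B F16"):
    print(f"{config}: L40S is {gpu_speedup(config):.2f}x the RTX 5000 Ada")

for gpu in ("RTX 5000 Ada 32GB", "L40S 48GB"):
    print(f"{gpu}: Q4_K_M is {quantization_gain(gpu):.2f}x F16")
```

The gap between the two cards is much smaller than the gap between the two precisions, which suggests that the choice of quantization matters more for generation speed than the choice between these two GPUs.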

LLM Processing Speed: The Real Deal

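Token generation is only part of the picture. Before a model can produce its first output token, it has to process the input prompt, and that stage runs much faster per token because the whole prompt can be handled in parallel. The figures below are far higher than the generation rates above, which is consistent with prompt processing throughput rather than end-to-end speed. Below, we compare the processing speed of each GPU on different Llama 3 model configurations: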

| GPU Model | Llama 3 Model | Processing Speed (tokens/s) |
|---|---|---|
| RTX 5000 Ada 32GB | Llama 3 8B Q4_K_M | 4467.46 |
| RTX 5000 Ada 32GB | Llama 3 8B F16 | 5835.41 |
| L40S 48GB | Llama 3 8B Q4_K_M | 5908.52 |
| L40S 48GB | Llama 3 8B F16 | 2491.65 |
| L40S 48GB | Llama 3 70B Q4_K_M | 649.08 |

Observations:

- RTX 5000 Ada 32GB leads in F16 processing: For Llama 3 8B at F16, the RTX 5000 Ada 32GB reports 5835.41 tokens/s against 2491.65 tokens/s for the L40S 48GB, despite having fewer CUDA cores. The L40S 48GB's F16 figure is also less than half of its own Q4_K_M figure, so this gap may reflect the benchmark configuration or software stack rather than a hardware advantage. It is worth re-measuring on your own setup before relying on it.

- L40S 48GB is the only card here with a 70B result: The L40S 48GB processes the 70B Llama 3 model at 649.08 tokens/s. Its 48GB of memory is the deciding factor, since a 70B model at Q4_K_M needs more memory than the RTX 5000 Ada 32GB provides.
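To see how the two tables combine, the sketch below estimates the time to answer one request as prompt time plus generation time. It assumes the processing speed column measures prompt throughput, and the 2,000-token prompt and 500-token reply are arbitrary example sizes, not benchmark settings. The function name `response_time_s` is made up for this example.

```python
def response_time_s(prompt_tokens: int, output_tokens: int,
                    processing_tps: float, generation_tps: float) -> float:
    """Rough time for one request: read the prompt, then generate the reply."""
    prompt_time = prompt_tokens / processing_tps
    generation_time = output_tokens / generation_tps
    return prompt_time + generation_time


# (processing tokens/s, generation tokens/s) for Llama 3 8B Q4_K_M,
# copied from the two tables in this article.
LLAMA3_8B_Q4 = {
    "RTX 5000 Ada 32GB": (4467.46, 89.87),
    "L40S 48GB": (5908.52, 113.6),
}

for gpu, (processing_tps, generation_tps) in LLAMA3_8B_Q4.items():
    seconds = response_time_s(2000, 500, processing_tps, generation_tps)
    print(f"{gpu}: about {seconds:.2f} s for a 2000-token prompt and 500-token reply")
```

With these example sizes, generation accounts for most of the total on both cards, so the generation table is usually the better guide for chat-style workloads, while the processing table matters more when prompts are long and replies are short.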

Practical Recommendations and Use Cases

RTX 5000 Ada 32GB: The Versatile Performer

The RTX 5000 Ada 32GB is ideal for developers and researchers who are working with small to medium-sized LLMs. Its versatility makes it suitable for a wide range of tasks, including:

- Developing and experimenting with smaller LLMs: The RTX 5000 Ada 32GB is a great choice for experimenting with smaller Llama 3 models (8B) and other LLMs of similar size. At 32GB, it has room for an 8B model at full F16 precision as well as quantized variants.

- Running LLMs for creative applications: At roughly 90 tokens/s on Llama 3 8B Q4_K_M, the RTX 5000 Ada 32GB is fast enough for generating text, translating languages, crafting creative content, and interactive chatbot use.

L40S 48GB: Powerhouse for Large Models

The L40S 48GB is a beast for working with massive LLMs. Its processing power and 48GB of memory are well suited to pushing the boundaries of language modeling:

- Training and fine-tuning large language models: The benchmarks above cover inference only, but if you're serious about training or fine-tuning larger LLMs, the L40S 48GB is the stronger candidate of the two. Its larger memory capacity and higher core count leave more room for these demanding tasks.

- Deploying large-scale LLMs for demanding applications: The L40S 48GB is the only card in this comparison with a Llama 3 70B result, which makes it the choice for demanding LLM applications such as real-time translation, code generation, and complex chatbot interactions.

Quantization: The Secret Sauce to LLM Efficiency

Think of quantization as a diet for LLMs! It's a technique that reduces the size of the LLM without sacrificing too much accuracy. Imagine cramming a 10-course meal into a single tiny sandwich! That's essentially what quantization does for LLMs - it compresses the information into a smaller package, making them more efficient.

The Q4_K_M quantization mentioned in the tables stores most of the model's weights in 4 bits, plus some extra data such as per-block scales, so it averages somewhere between 4 and 5 bits per weight, compared to the 16 bits used in F16. This dramatically reduces the memory footprint of the LLM, which lets it generate tokens faster and fit on smaller GPUs.
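The arithmetic is simple enough to check by hand. The sketch below assumes an average of 4.85 bits per weight for Q4_K_M, which is an approximation rather than a figure from the benchmarks, and uses the nominal 8 and 70 billion parameter counts. The function name `weight_size_gb` is made up for this example.

```python
F16_BITS = 16.0
Q4_K_M_BITS = 4.85  # assumed average bits per weight, including block scales


def weight_size_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in gigabytes (10^9 bytes)."""
    return params_billion * bits_per_weight / 8


for params_billion in (8, 70):
    f16_gb = weight_size_gb(params_billion, F16_BITS)
    q4_gb = weight_size_gb(params_billion, Q4_K_M_BITS)
    print(f"Llama 3 {params_billion}B: F16 ~{f16_gb:.1f} GB, Q4_K_M ~{q4_gb:.1f} GB")
```

Under these assumptions a 70B model shrinks from about 140 GB to a little over 42 GB, which is the difference between needing several GPUs and fitting on a single 48GB card.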

Quantization is particularly beneficial for GPUs with lower memory bandwidth, like the RTX 5000 Ada 32GB. Shrinking the weights reduces how much data has to be read for each generated token, which partly offsets the lower bandwidth.

Key Takeaways:

- Quantization is a powerful tool for boosting LLM performance: It can significantly improve inference speed and efficiency, particularly on GPUs with limited memory bandwidth.

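- Q4_K_M offers a significant advantage over F16 for generation: It compresses the model to a fraction of its F16 size, leading to faster token generation and lower memory requirements. The processing speed table is less clear-cut, since the RTX 5000 Ada 32GB was faster at F16 there.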

- Experimenting with different quantization levels is vital: Finding the right balance between accuracy and efficiency requires experimentation.

Beyond the Benchmarks: Choosing the Right GPU

Ultimately, the choice between the RTX 5000 Ada 32GB and the L40S 48GB comes down to your specific needs and budget.

Consider These Factors:

- LLM Size: If you're working with smaller LLMs such as Llama 3 8B, the RTX 5000 Ada 32GB is a great option. For larger models such as Llama 3 70B at Q4_K_M, the L40S 48GB provides the memory you need.

- Budget: The L40S 48GB is a premium GPU with a higher price tag. If your budget is more limited, the RTX 5000 Ada 32GB delivers most of the L40S 48GB's generation speed on 8B models.

- Use Cases: For general-purpose LLM tasks, the RTX 5000 Ada 32GB is a solid choice. For resource-intensive tasks like training and deploying large-scale models, the L40S 48GB is the way to go.
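For the model size factor, a rough fit check can help before buying. The sketch below adds the weight size to an estimate of the key-value cache that grows with context length. Several things here are assumptions rather than benchmark data: the 4.85 bits per weight for Q4_K_M, the 8,192-token context, the 16-bit cache, and the layer and head counts, which follow the published Llama 3 configurations. It ignores activation buffers and framework overhead, so treat a narrow pass as a maybe. The names `ModelShape`, `kv_cache_gb`, and `fits` are made up for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelShape:
    params_billion: float
    n_layers: int
    n_kv_heads: int
    head_dim: int


# Assumed shapes, based on the published Llama 3 model configurations.
LLAMA3_8B = ModelShape(params_billion=8.0, n_layers=32, n_kv_heads=8, head_dim=128)
LLAMA3_70B = ModelShape(params_billion=70.0, n_layers=80, n_kv_heads=8, head_dim=128)


def weights_gb(model: ModelShape, bits_per_weight: float) -> float:
    """Approximate size of the weights in gigabytes (10^9 bytes)."""
    return model.params_billion * bits_per_weight / 8


def kv_cache_gb(model: ModelShape, context_tokens: int, bytes_per_value: int = 2) -> float:
    """Keys and values (the factor 2) stored per layer, KV head, and position."""
    values = 2 * model.n_layers * model.n_kv_heads * model.head_dim * context_tokens
    return values * bytes_per_value / 1e9


def fits(model: ModelShape, bits_per_weight: float, vram_gb: float,
         context_tokens: int = 8192) -> bool:
    """True if weights plus KV cache fit in the given amount of GPU memory."""
    needed = weights_gb(model, bits_per_weight) + kv_cache_gb(model, context_tokens)
    return needed <= vram_gb


for vram_gb in (32, 48):
    print(f"{vram_gb} GB card: "
          f"8B F16 fits={fits(LLAMA3_8B, 16.0, vram_gb)}, "
          f"70B Q4_K_M fits={fits(LLAMA3_70B, 4.85, vram_gb)}")
```

Under these assumptions the 70B model at Q4_K_M fits in 48GB but not in 32GB, which matches the benchmark tables having a 70B row only for the L40S 48GB.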

FAQ: Common Questions About LLMs and GPUs

1. What are Large Language Models (LLMs)?

LLMs are a type of artificial intelligence that excel at understanding and generating human-like text. They are trained on massive datasets of text and code, enabling them to perform a wide range of tasks, from writing stories to translating languages. Popular examples include GPT-3, LaMDA, and Llama.

2. Why run LLMs locally?

Running LLMs locally offers several advantages, including:

- Privacy: You retain control over your data and avoid sending sensitive information to a remote server.

- Speed: Local processing avoids network round trips and shared queues, so it can respond faster than cloud-based services, depending on your hardware.

- Flexibility: You can customize and experiment with LLMs without dependence on third-party platforms.

3. What are CUDA cores and what are they used for?

CUDA cores are the processing units found on NVIDIA GPUs. They enable parallel processing, which is crucial for tackling the complex computations involved in running LLMs. Think of them as tiny workers cooperating to complete a massive task.

4. What is memory bandwidth?

Memory bandwidth refers to the speed at which data can be transferred between the GPU's memory and the processing units (CUDA cores). Higher memory bandwidth generally means faster performance. It's like a highway for data flowing to and from the GPU's brain.
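One way to see why bandwidth matters is a back-of-the-envelope ceiling: if generating each token requires reading every weight once, tokens per second cannot exceed bandwidth divided by model size. The sketch below applies that to Llama 3 8B at F16. The bandwidth figures are not from the benchmarks; they are assumed from NVIDIA's published specifications, and the 16 GB model size assumes 8 billion weights at 2 bytes each. The function name `generation_ceiling_tps` is made up for this example.

```python
# Memory bandwidth in GB/s. NOT from the benchmark tables; assumed from
# NVIDIA's published specifications for each card.
BANDWIDTH_GB_S = {"RTX 5000 Ada 32GB": 576.0, "L40S 48GB": 864.0}

# Measured generation rates (tokens/s) for Llama 3 8B F16, from the article.
MEASURED_F16_TPS = {"RTX 5000 Ada 32GB": 32.67, "L40S 48GB": 43.42}

MODEL_SIZE_GB = 16.0  # assumed: 8 billion weights at 2 bytes each


def generation_ceiling_tps(bandwidth_gb_s: float, model_size_gb: float) -> float:
    """Upper bound on tokens/s if each token needs one full read of the weights."""
    return bandwidth_gb_s / model_size_gb


for gpu, bandwidth in BANDWIDTH_GB_S.items():
    ceiling = generation_ceiling_tps(bandwidth, MODEL_SIZE_GB)
    measured = MEASURED_F16_TPS[gpu]
    print(f"{gpu}: ceiling {ceiling:.1f} tokens/s, measured {measured} tokens/s "
          f"({measured / ceiling:.0%} of ceiling)")
```

The measured rates land close to, but below, this simple ceiling on both cards, which supports the idea that token generation on these GPUs is limited mainly by memory bandwidth rather than by the number of CUDA cores.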

5. What is quantization and how does it benefit LLM performance?

Quantization is a technique that reduces the size of a model by representing its numbers using fewer bits. Imagine using a smaller coin to represent a dollar – you can fit more coins in your pocket! This makes the model faster and more efficient, particularly on GPUs with limited memory bandwidth.
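A toy example makes the idea concrete. The sketch below quantizes one small block of weights to 4-bit signed integers with a single shared scale, then converts them back. This is a simplified illustration and not the actual Q4_K_M scheme, which is more elaborate. The function names are made up for this example.

```python
def quantize_block(values: list[float], bits: int = 4) -> tuple[float, list[int]]:
    """Symmetric quantization: one float scale plus one small integer per value."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit signed integers
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round each value to the nearest step and clamp to the representable range.
    quants = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, quants


def dequantize_block(scale: float, quants: list[int]) -> list[float]:
    """Recover approximate weights from the scale and the small integers."""
    return [q * scale for q in quants]


weights = [1.75, -0.75, 0.6, 0.0]
scale, quants = quantize_block(weights)
restored = dequantize_block(scale, quants)

print("scale:   ", scale)
print("quants:  ", quants)
print("restored:", restored)
```

Here 0.6 comes back as 0.5: the block now needs far fewer bits, at the cost of a small rounding error. That trade between size and accuracy is the one quantization makes.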

Keywords:

NVIDIA RTX 5000 Ada 32GB, NVIDIA L40S 48GB, LLM, Llama 3, Token Speed, Processing Speed, Quantization, Q4_K_M, F16, GPU, CUDA Cores, Memory Bandwidth, Inference, Local Deployment, GPU Benchmark, Performance Analysis, Development, Research, Use Cases, Applications, Large Language Models, AI, Deep Learning