Which Is Better for Running LLMs Locally: NVIDIA RTX 4000 Ada 20GB x4 or NVIDIA A100 SXM 80GB? Ultimate Benchmark Analysis

Introduction

The world of large language models (LLMs) is evolving rapidly, with new models released constantly. These models can generate human-like text, translate languages, write creative content, and answer questions. Running them locally, on your own computer, can be a challenge, however: LLMs need a lot of processing power and, above all, a lot of GPU memory, which puts them out of reach of older or budget hardware.

This article is your guide to choosing the right hardware for running LLMs locally. We'll compare two popular options: a machine with four NVIDIA RTX 4000 Ada 20GB cards (RTX 4000 Ada 20GB x4) and a single NVIDIA A100 SXM 80GB. We'll look at their performance, strengths, and weaknesses, and offer recommendations for different use cases, so you can make an informed decision about which hardware best suits your needs.

Understanding the Basics: LLM Hardware Requirements

Let's break this down. Imagine an LLM as a massive encyclopedia, packed with information and intricate connections between concepts. To access this knowledge and process information, the LLM needs a powerful "brain" – that's where your GPU comes in.

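Think of a GPU as a team of highly skilled specialists, working together to perform trillions of calculations per second. The more powerful the GPU, the faster it can process information and return responses from the LLM. Raw compute is only part of the story, though: the model has to fit in the GPU's memory (VRAM), and when generating text the GPU must read the model's weights from that memory for every new token, so memory capacity and memory bandwidth matter as much as calculation speed.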
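A quick way to check whether a model fits in GPU memory is to multiply its parameter count by the number of bits stored per weight. The Python sketch below is a rough estimate only: the parameter counts (about 8.03 billion and 70.6 billion) and the ~4.85 bits-per-weight average for Q4_K_M are approximations assumed here, and real memory use is higher because of the KV cache and runtime buffers.

```python
# Rough weight-memory estimate: params * bits_per_weight / 8 bytes.
# Parameter counts and the Q4_K_M bits-per-weight value are approximations
# (assumptions for illustration, not measured file sizes).

PARAMS = {
    "Llama 3 8B": 8.03e9,
    "Llama 3 70B": 70.6e9,
}

BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q4_K_M": 4.85,  # assumed average; Q4_K_M mixes block formats
}


def weight_gb(params: float, bits_per_weight: float) -> float:
    """Return approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


if __name__ == "__main__":
    for model, params in PARAMS.items():
        for fmt, bpw in BITS_PER_WEIGHT.items():
            print(f"{model} {fmt}: ~{weight_gb(params, bpw):.1f} GB")
```

By this estimate, Llama 3 70B at F16 (about 141GB) exceeds the 80GB available on either setup, while the Q4_K_M version (about 43GB) fits in 80GB, though on the RTX setup it has to be spread over several 20GB cards.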

Comparison of NVIDIA RTX 4000 Ada 20GB x4 and NVIDIA A100 SXM 80GB for LLM Inference

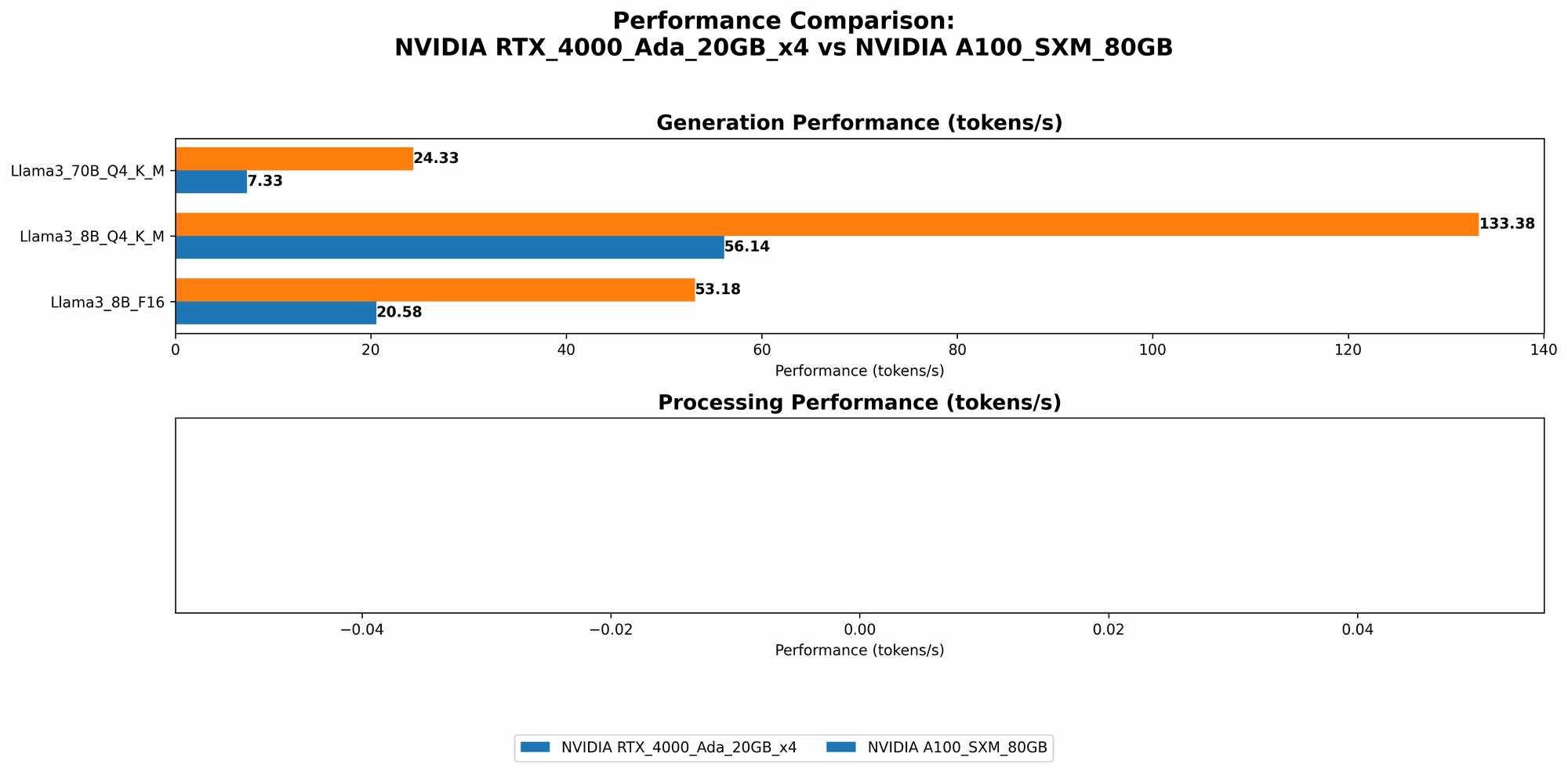

Performance Analysis: Token Generation Speed

We're diving into the heart of performance: how quickly a GPU can generate tokens, which are the fundamental units of text. Imagine tokens as individual Lego bricks – the more bricks you build per second, the faster you can construct a structure (in this case, a response from the LLM).

The data shows a clear winner:

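The A100 SXM 80GB is faster at token generation in every configuration tested: Llama 3 8B at Q4_K_M and F16, and Llama 3 70B at Q4_K_M. Q4_K_M is a 4-bit quantization format (used in llama.cpp's GGUF files) that compresses the model so it needs less memory and runs faster; F16 is the half-precision (16-bit floating-point) version of the model, without quantization.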

Here's a breakdown of the numbers:

| Model | RTX 4000 Ada 20GB x4 (tokens/second) | A100 SXM 80GB (tokens/second) |
|---|---|---|
| Llama 3 8B Q4_K_M | 56.14 | 133.38 |
| Llama 3 8B F16 | 20.58 | 53.18 |
| Llama 3 70B Q4_K_M | 7.33 | 24.33 |

Key takeaway: The A100 SXM 80GB generates tokens roughly 2.4x to 3.3x faster than the RTX 4000 Ada 20GB x4 setup, making it more suitable for real-time applications or any scenario where quick responses are crucial.
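To put the table in more concrete terms, this short Python snippet converts the measured tokens/second into a speedup ratio and an average time per token. It uses only the numbers in the table; the per-token latency is simply 1000 / tokens-per-second and ignores prompt processing.

```python
# Token-generation figures from the table (tokens/second):
# model -> (RTX 4000 Ada 20GB x4, A100 SXM 80GB)
RESULTS = {
    "Llama 3 8B Q4_K_M": (56.14, 133.38),
    "Llama 3 8B F16": (20.58, 53.18),
    "Llama 3 70B Q4_K_M": (7.33, 24.33),
}


def ms_per_token(tokens_per_second: float) -> float:
    """Average time to generate one token, in milliseconds."""
    return 1000.0 / tokens_per_second


for model, (rtx, a100) in RESULTS.items():
    speedup = a100 / rtx
    print(
        f"{model}: A100 is {speedup:.2f}x faster "
        f"({ms_per_token(rtx):.1f} ms vs {ms_per_token(a100):.1f} ms per token)"
    )
```

The gap ranges from roughly 2.4x (Llama 3 8B Q4_K_M) to 3.3x (Llama 3 70B Q4_K_M), so in these results the A100's advantage grows with model size.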

Performance Analysis: Token Processing Speed

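Now, let's look at how quickly each GPU reads the input prompt before it starts generating, often called prompt processing or prefill. Because all prompt tokens can be processed in parallel rather than one at a time, these speeds are far higher than token generation speeds.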

| Model | RTX 4000 Ada 20GB x4 (tokens/second) | A100 SXM 80GB (tokens/second) |
|---|---|---|
| Llama 3 8B Q4_K_M | 3369.24 | No data |
| Llama 3 8B F16 | 4366.64 | No data |
| Llama 3 70B Q4_K_M | 306.44 | No data |
| Llama 3 70B F16 | No data | No data |

Key takeaway: No prompt-processing figures are available for the A100 SXM 80GB, so the two cannot be compared on this metric. On its own, the RTX 4000 Ada 20GB x4 setup processes prompts at more than 3,000 tokens/second for both Llama 3 8B variants and at about 306 tokens/second for Llama 3 70B Q4_K_M.
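Combining the two tables gives a rough feel for how long a full response takes on the RTX 4000 Ada 20GB x4 setup. The sketch below assumes a hypothetical request of 1,000 prompt tokens and 300 generated tokens, and treats both speeds as constant, which is a simplification; there is no A100 line because its prompt-processing figures are missing.

```python
# RTX 4000 Ada 20GB x4 figures from the tables (tokens/second):
# model -> (prompt processing, token generation)
RTX_X4 = {
    "Llama 3 8B Q4_K_M": (3369.24, 56.14),
    "Llama 3 8B F16": (4366.64, 20.58),
    "Llama 3 70B Q4_K_M": (306.44, 7.33),
}


def response_seconds(prompt_tokens: int, output_tokens: int,
                     pp_speed: float, tg_speed: float) -> float:
    """Rough end-to-end time: read the prompt, then generate the reply.

    Assumes both speeds stay constant; in practice they tend to drop
    as the context grows, so this is only an approximation.
    """
    prefill = prompt_tokens / pp_speed
    decode = output_tokens / tg_speed
    return prefill + decode


# Hypothetical request: a 1,000-token prompt and a 300-token answer.
for model, (pp, tg) in RTX_X4.items():
    total = response_seconds(1000, 300, pp, tg)
    print(f"{model}: ~{total:.1f} s")
```

For Llama 3 70B Q4_K_M, reading the prompt takes about 3 seconds while generating the answer takes about 41, so for interactive use the generation speed dominates the wait.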

Strengths and Weaknesses: RTX 4000 Ada 20GB x4

Strengths:

- Affordable: Even four RTX 4000 Ada cards generally cost considerably less than a single A100 SXM 80GB, which makes this setup attractive on a tighter budget.

- Power Efficiency: Each RTX 4000 Ada card is rated at just 130 W, compared with 400 W for the A100 SXM 80GB, although four cards together can draw up to 520 W.

- Availability: The RTX 4000 Ada is a standard PCIe card that fits ordinary workstations and is generally easier to buy.

Weaknesses:

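- Limited Memory per GPU: Each card has only 20GB, so the setup's 80GB total is split across four GPUs. Models larger than 20GB, such as Llama 3 70B Q4_K_M, must be divided between the cards, which communicate over PCIe rather than a faster GPU-to-GPU link. Neither this setup nor the A100 can hold Llama 3 70B at F16, which needs roughly 140GB for its weights alone.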

- Lower Performance: In these benchmarks, token generation is roughly 2.4x to 3.3x slower than on the A100 SXM 80GB, with the largest gap on Llama 3 70B, which means noticeably slower responses.

Strengths and Weaknesses: A100 SXM 80GB

Strengths:

- Superior Performance: The A100 SXM 80GB generates tokens roughly 2.4x to 3.3x faster in these benchmarks, helped by its HBM2e memory with about 2 TB/s of bandwidth (versus about 360 GB/s per RTX 4000 Ada card).

- Large Memory: All 80GB sit on a single GPU, so a model such as Llama 3 70B Q4_K_M fits on one device without being split. Models that need more than 80GB, such as Llama 3 70B at F16, still do not fit.

- Scalability: A100 SXM modules are designed for multi-GPU servers (such as NVIDIA HGX A100 systems), where they connect over NVLink and can be combined into clusters for more demanding LLM workloads.

Weaknesses:

- Expensive: The A100 SXM 80GB costs far more than the RTX 4000 Ada 20GB x4 setup, putting it out of reach for many individuals and small teams.

- Power Consumption: A single A100 SXM 80GB is rated at 400 W and runs inside a server that adds its own power draw, which can translate into higher electricity bills.

- Availability: The A100 SXM 80GB is not a PCIe card; the SXM module needs a compatible server board, and it can be hard to acquire, especially in regions affected by export restrictions.
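Why is the A100 so much faster at generation? A common rule of thumb is that single-request generation is limited by memory bandwidth, because each new token requires reading roughly all of the model's weights. The sketch below turns that into an upper bound. The bandwidth values (about 2,039 GB/s for the A100 SXM 80GB and 360 GB/s per RTX 4000 Ada), the weight sizes, and the assumption that a layer split makes the four RTX cards take turns are all assumptions for illustration, not measurements from this benchmark.

```python
# Rough ceiling on single-request generation speed for memory-bound decoding:
# each new token requires reading (roughly) every weight once.
# Bandwidth figures are approximate spec-sheet values (assumptions).

A100_SXM_80GB_GBPS = 2039.0   # HBM2e
RTX_4000_ADA_GBPS = 360.0     # GDDR6, per card


def max_tokens_per_second(weights_gb: float, bandwidth_gbps: float) -> float:
    """Upper bound: bandwidth divided by bytes read per token.

    Ignores KV-cache reads, compute time, and inter-GPU transfers,
    so real throughput is lower.
    """
    return bandwidth_gbps / weights_gb


# Assumption: with a layer split, the four RTX cards process their layers
# one after another for a single request, so the effective bandwidth is
# about one card's. Weight sizes are rough estimates in GB.
for label, weights_gb in [("Llama 3 8B Q4_K_M", 4.9), ("Llama 3 70B Q4_K_M", 42.8)]:
    a100 = max_tokens_per_second(weights_gb, A100_SXM_80GB_GBPS)
    rtx = max_tokens_per_second(weights_gb, RTX_4000_ADA_GBPS)
    print(f"{label}: A100 <= {a100:.0f} tok/s, RTX x4 <= {rtx:.0f} tok/s")
```

The measured results (133.38 vs 56.14 tokens/second for 8B, 24.33 vs 7.33 for 70B) stay below these ceilings, and the A100's roughly 5.7x bandwidth advantage is a plausible main reason it leads on generation.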

Practical Recommendations

- Budget-conscious developers: If budget is the main concern, the RTX 4000 Ada 20GB x4 setup is a good starting point, particularly for experimenting with smaller models or with quantized models that need less memory.

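- Power Efficiency: If per-card power draw matters, for example in a workstation with a limited power supply, each RTX 4000 Ada card draws far less than an A100 SXM 80GB. Because the A100 generates tokens much faster, though, compare energy per generated token rather than per-card ratings before deciding.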

- High-Performance Workloads: If you need the fastest generation and have the budget and a suitable server, the A100 SXM 80GB is the clear winner, especially for large models and latency-sensitive applications.

- Scaling Capabilities: If you expect to expand your LLM deployments, the A100 SXM 80GB scales well through NVLink-connected multi-GPU servers and clusters.
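Power ratings alone do not tell you the energy cost of a response; throughput matters too. The sketch below divides an assumed worst-case power (each board running at its power limit: 400 W for the A100 SXM 80GB and 4 x 130 W for the RTX cards) by the measured generation speed to get joules per token. These power figures are assumptions, not measured draw, so treat the output as a rough upper bound.

```python
# Worst-case energy per generated token, using board power limits rather
# than measured draw (assumption): real consumption is usually lower.

A100_WATTS = 400.0          # A100 SXM 80GB power limit (approximate)
RTX_X4_WATTS = 4 * 130.0    # four RTX 4000 Ada cards at 130 W each

GENERATION_TPS = {
    # model -> (RTX 4000 Ada 20GB x4, A100 SXM 80GB), from the generation table
    "Llama 3 8B Q4_K_M": (56.14, 133.38),
    "Llama 3 8B F16": (20.58, 53.18),
    "Llama 3 70B Q4_K_M": (7.33, 24.33),
}


def joules_per_token(watts: float, tokens_per_second: float) -> float:
    """Energy per token = power (J/s) divided by throughput (tokens/s)."""
    return watts / tokens_per_second


for model, (rtx_tps, a100_tps) in GENERATION_TPS.items():
    rtx = joules_per_token(RTX_X4_WATTS, rtx_tps)
    a100 = joules_per_token(A100_WATTS, a100_tps)
    print(f"{model}: RTX x4 <= {rtx:.1f} J/token, A100 <= {a100:.1f} J/token")
```

Under these worst-case assumptions, the A100's higher speed more than offsets its higher per-card power limit, so a lower per-card rating does not automatically mean lower energy per token. Measuring real power draw during inference (for example with `nvidia-smi`) would give a fairer comparison.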

Conclusion

Choosing the right hardware for running LLMs locally means weighing your budget, performance needs, and future scalability requirements. The A100 SXM 80GB delivers superior performance but comes with a hefty price tag, while the RTX 4000 Ada 20GB x4 setup is a more affordable option with decent performance, especially for smaller models. Ultimately, the best choice depends on your specific use case and priorities.

FAQ

What are LLMs?

LLMs are complex artificial intelligence models trained on massive datasets, allowing them to understand and generate human-like text.

What does "quantization" mean in this context?

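Quantization is like a diet for LLMs: it stores the model's weights with fewer bits (for example, about 4 bits per weight instead of 16), shrinking the model so it is easier to store and run on hardware with limited memory, such as the 20GB RTX 4000 Ada cards. The trade-off is usually a small loss in output quality.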
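To make the idea concrete, here is a deliberately simplified Python sketch of 4-bit quantization: one block of weights shares a single scale, and each weight is stored as a small integer. This is an illustrative scheme chosen for clarity, not the actual Q4_K_M algorithm, which uses nested blocks with their own scales and minimums.

```python
# Simplified symmetric 4-bit quantization of one block of weights.
# NOT the exact Q4_K_M scheme; it only illustrates the core idea.

def quantize_block(weights: list[float]) -> tuple[float, list[int]]:
    """Map floats to 4-bit integers in [-8, 7] with one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale: float, codes: list[int]) -> list[float]:
    """Recover approximate floats from the 4-bit codes."""
    return [c * scale for c in codes]


weights = [0.12, -0.53, 0.07, 0.91, -0.28, 0.44, -0.95, 0.0]
scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print("codes:", codes)
print(f"max reconstruction error: {max_error:.3f}")
```

Each weight now takes 4 bits instead of 16 (plus one shared scale per block), and the small gap between `weights` and `restored` is where quantization's slight quality cost comes from.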

How do I choose the right LLM for my needs?

The choice of LLM depends on your specific use case. Consider factors like the model's size, its ability to handle specific tasks (translation, text generation, etc.), and its availability.

Can I run an LLM on my laptop?

While some smaller LLMs can run on high-end laptops, especially when quantized, larger models usually need more GPU memory (VRAM) and system RAM than a laptop offers.
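Whether a model runs on a given machine depends on both its weights and the KV cache, which grows with context length. The sketch below uses the published Llama 3 layer counts, key/value head counts, and head dimensions; the weight sizes (4.9GB and 42.8GB for Q4_K_M) are rough estimates, the 16GB figure is a hypothetical laptop GPU, and runtime buffers are ignored.

```python
# Estimate whether a model fits in a given amount of memory:
# weights + key/value (KV) cache. Weight sizes are rough estimates;
# runtime buffers and activations are ignored.

from dataclasses import dataclass


@dataclass
class ModelConfig:
    name: str
    layers: int
    kv_heads: int
    head_dim: int
    weights_gb: float  # approximate, depends on quantization


def kv_cache_gb(cfg: ModelConfig, context_tokens: int, bytes_per_value: int = 2) -> float:
    """K and V per layer: 2 * kv_heads * head_dim values per token (F16 = 2 bytes)."""
    per_token = 2 * cfg.layers * cfg.kv_heads * cfg.head_dim * bytes_per_value
    return per_token * context_tokens / 1e9


def fits(cfg: ModelConfig, memory_gb: float, context_tokens: int) -> bool:
    """True if weights plus KV cache fit within memory_gb."""
    return cfg.weights_gb + kv_cache_gb(cfg, context_tokens) <= memory_gb


llama3_8b_q4 = ModelConfig("Llama 3 8B Q4_K_M", 32, 8, 128, 4.9)
llama3_70b_q4 = ModelConfig("Llama 3 70B Q4_K_M", 80, 8, 128, 42.8)

for cfg in (llama3_8b_q4, llama3_70b_q4):
    for mem in (16.0, 80.0):  # hypothetical 16GB laptop GPU vs. an 80GB A100
        ok = fits(cfg, mem, context_tokens=8192)
        print(f"{cfg.name} @ 8K context in {mem:.0f} GB: {'fits' if ok else 'does not fit'}")
```

At an 8K context, the 8B model's KV cache adds only about 1GB, so it fits comfortably on a 16GB laptop GPU, while the 70B model needs far more memory than a laptop typically provides.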

Where can I find more information about LLMs and hardware?

You can find extensive resources online, including documentation from model creators, developer communities, and dedicated LLM hardware forums.

Keywords

LLM, Large Language Model, NVIDIA, RTX4000Ada20GBx4, A100SXM80GB, GPU, Token Generation, Token Processing, Quantization, Inference, Performance, Comparison, Cost, Power Consumption, Availability, Benchmark, Hardware, Software, Development, Machine Learning, AI, Artificial Intelligence.