Which Is Better for Running LLMs Locally: NVIDIA RTX 4000 Ada 20GB x4 or NVIDIA A100 PCIe 80GB? Ultimate Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is rapidly evolving, and running these powerful AI models locally is becoming increasingly popular. Whether you're a researcher, developer, or simply someone who wants to experiment with the cutting edge of AI, the choice of hardware is crucial.

In this article, we'll dive deep into the performance of two popular GPU options: four NVIDIA RTX 4000 Ada 20GB cards (RTX 4000 x4) and a single NVIDIA A100 PCIe 80GB, comparing their capabilities for running LLMs locally. We'll analyze their strengths and weaknesses, providing a comprehensive understanding of which device is better suited for different use cases and models.

Think of this as a GPU showdown for the ultimate LLM champion! 🥊

Performance Analysis: A100 vs. RTX 4000 x4

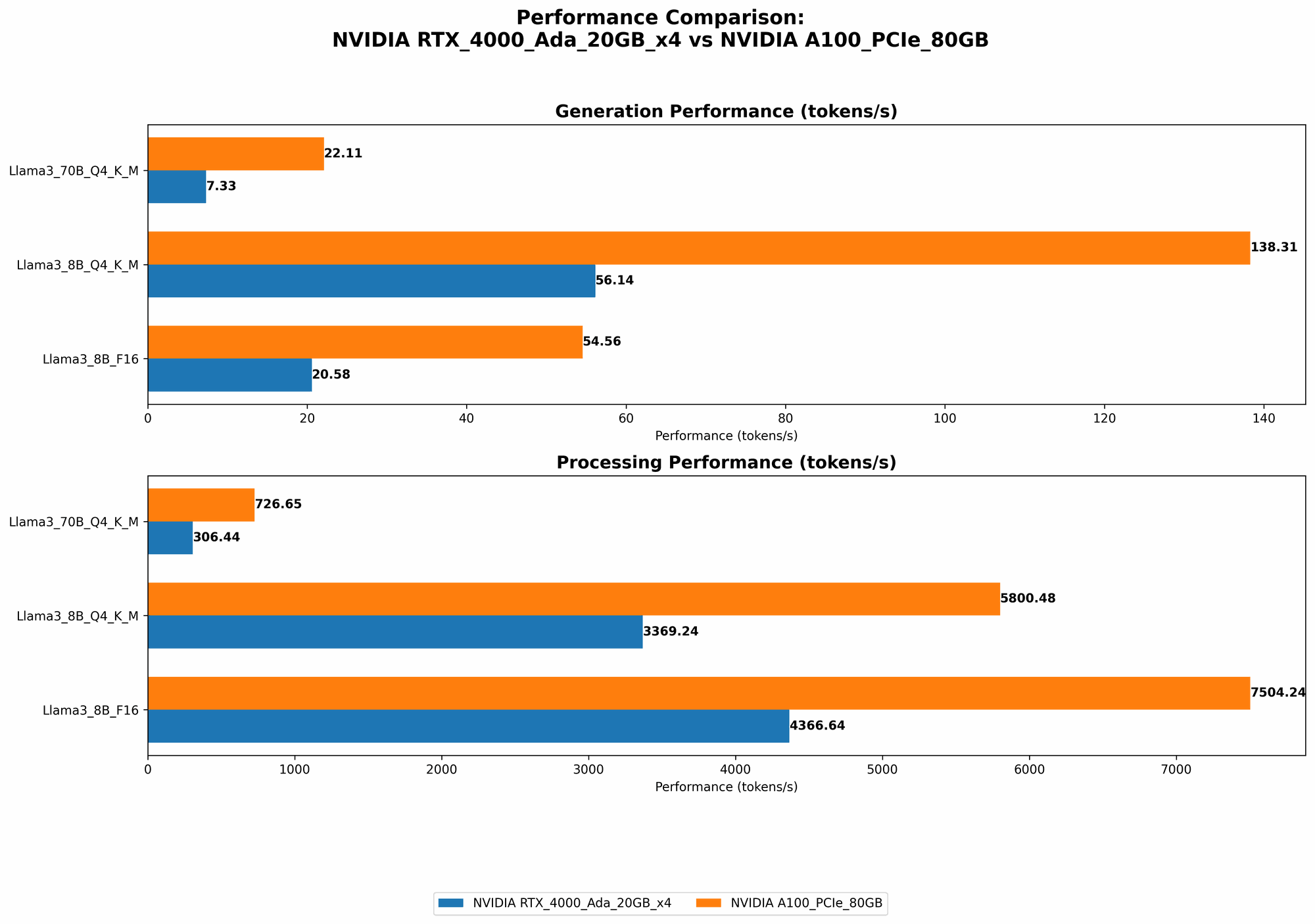

Let's start by comparing the raw performance of these two beasts on various Llama 3 models, with a focus on token generation (how fast they can create text) and processing (how fast they can read and process the input prompt):

Comparison of NVIDIA A100 PCIe 80GB and NVIDIA RTX 4000 Ada 20GB x4 on Llama 3 Models

| Model | Device | Token Generation (Tokens/Second) | Processing (Tokens/Second) |
|---|---|---|---|
| Llama 3 8B Q4_K_M | NVIDIA RTX 4000 Ada 20GB x4 | 56.14 | 3369.24 |
| Llama 3 8B F16 | NVIDIA RTX 4000 Ada 20GB x4 | 20.58 | 4366.64 |
| Llama 3 70B Q4_K_M | NVIDIA RTX 4000 Ada 20GB x4 | 7.33 | 306.44 |
| Llama 3 8B Q4_K_M | NVIDIA A100 PCIe 80GB | 138.31 | 5800.48 |
| Llama 3 8B F16 | NVIDIA A100 PCIe 80GB | 54.56 | 7504.24 |
| Llama 3 70B Q4_K_M | NVIDIA A100 PCIe 80GB | 22.11 | 726.65 |

Note: We currently lack data for the Llama 3 70B F16 generation on both devices.

Key Observations:

- A100 Dominates in Token Generation and Processing: The A100 outperforms the RTX 4000 x4 in every row of the table, by roughly 2.5x to 3x in token generation and 1.7x to 2.4x in processing. Its much higher memory bandwidth is the most likely reason, and it also avoids the overhead of splitting a model across four cards, making it a powerhouse for LLM applications.

- Quantization Matters: Both devices generate tokens much faster with the quantized model (Q4_K_M) than with F16: about 2.5x faster on the A100 (138.31 vs. 54.56 tokens/second) and about 2.7x faster on the RTX 4000 x4 (56.14 vs. 20.58). Quantization is a technique that reduces the size of the model by storing its weights as smaller numbers, which cuts the amount of data read per token without significantly impacting output quality. Processing, by contrast, is somewhat slower with Q4_K_M than with F16 on both devices.

- RTX 4000 x4 Still Holds Value: While the A100 is clearly the winner in terms of raw performance, the RTX 4000 x4 isn't a slouch. For smaller LLMs and simpler tasks, the RTX 4000 x4 can be a viable option, offering a good balance between performance and cost.
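To see how big the gap really is, here is a minimal sketch that turns the table into speedup ratios. The figures are copied from the table; the dictionary layout and the `speedups` helper are just illustrative names.

```python
# Benchmark figures from the comparison table: (generation, processing) in tokens/second.
BENCHMARKS = {
    "Llama 3 8B Q4_K_M": {"rtx": (56.14, 3369.24), "a100": (138.31, 5800.48)},
    "Llama 3 8B F16": {"rtx": (20.58, 4366.64), "a100": (54.56, 7504.24)},
    "Llama 3 70B Q4_K_M": {"rtx": (7.33, 306.44), "a100": (22.11, 726.65)},
}


def speedups(benchmarks):
    """Return {model: (generation_ratio, processing_ratio)} for the A100 over the RTX 4000 x4."""
    result = {}
    for model, devices in benchmarks.items():
        rtx_gen, rtx_proc = devices["rtx"]
        a100_gen, a100_proc = devices["a100"]
        result[model] = (round(a100_gen / rtx_gen, 2), round(a100_proc / rtx_proc, 2))
    return result


for model, (gen, proc) in speedups(BENCHMARKS).items():
    print(f"{model}: generation {gen}x, processing {proc}x")
```

The generation gap is widest on the 70B model, which is where a faster GPU is most noticeable in day-to-day use.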

Practical Considerations

So, which device is the right choice for you?

- For research and development: If you frequently work with large LLMs, the A100 is the clear leader. It generated tokens about three times faster than the RTX 4000 x4 on Llama 3 70B Q4_K_M, and its 80GB of memory sits on a single card.

- For smaller LLMs and simpler tasks: The RTX 4000 x4 can be a cost-effective alternative. It still offers good performance for lighter models and tasks like text summarization or basic text generation.

Understanding the Numbers: Token Generation and Processing

To fully appreciate the performance differences, let's delve into the meaning of token generation and processing:

Token Generation: The Speed of Thought

Token generation measures how quickly a GPU can produce new text. Think of it as the speed at which the LLM can 'think' and create output.

Higher token generation speed means faster response times, allowing you to quickly generate text, translate languages, or run other LLM tasks. It's like having a super-fast brain that can churn out ideas and words at lightning speed.

Processing: The Power of Calculation

Processing, in this context, measures how quickly a GPU can read and process the input prompt before it starts generating output, again in tokens per second. Because the tokens of a prompt can be handled in parallel, these figures are far higher than the generation figures.

Higher processing speed means a shorter wait before the first word of the response appears, which matters most for long prompts such as documents to summarize or long chat histories. It's like having a powerful engine that gets up to speed without breaking a sweat.
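To make the two numbers concrete, here is a rough sketch that combines them into an end-to-end response time. It assumes the processing figure applies to the prompt and the generation figure to the output, and it ignores model loading and other overhead. The 2,000-token prompt and 500-token answer are hypothetical; the rates are the Llama 3 70B Q4_K_M figures from the table.

```python
def estimate_latency(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Rough response time in seconds: prompt processing time plus generation time."""
    prompt_seconds = prompt_tokens / processing_tps
    generation_seconds = output_tokens / generation_tps
    return prompt_seconds + generation_seconds


# Hypothetical request: 2,000-token prompt, 500-token answer.
a100 = estimate_latency(2000, 500, processing_tps=726.65, generation_tps=22.11)
rtx = estimate_latency(2000, 500, processing_tps=306.44, generation_tps=7.33)
print(f"A100: {a100:.1f} s, RTX 4000 x4: {rtx:.1f} s")
```

Under these assumptions the A100 answers in about 25 seconds and the RTX 4000 x4 in about 75 seconds, with generation time dominating in both cases.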

Understanding Quantization: A Smaller Package, Faster Performance

Quantization is a way of optimizing LLM models for faster inference. It's a bit like putting your LLM on a diet, making it smaller and more efficient.

Instead of storing each weight as a large number (like a 16-bit or 32-bit floating-point value), quantization stores it as a smaller number (like a 4-bit or 8-bit integer). This reduces the memory footprint of the model and the amount of data the GPU has to read for every generated token.

Think of it like compressing a video file without losing too much quality. The file becomes smaller, but it still plays smoothly.
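Here is a toy sketch of the idea: a block of weights is mapped to 4-bit integers that share a single scale factor, then mapped back. This is a deliberately simplified scheme for illustration, not the actual Q4_K_M format, and the weight values are made up.

```python
def quantize_block(values, bits=4):
    """Map floats to signed integers in [-qmax, qmax] using one shared scale."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs else 1.0
    quantized = [round(v / scale) for v in values]
    return quantized, scale


def dequantize_block(quantized, scale):
    """Recover approximate floats from the integers and the shared scale."""
    return [q * scale for q in quantized]


# Made-up weights, purely for illustration.
weights = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.45, 0.02]
q, scale = quantize_block(weights)
restored = dequantize_block(q, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print("integers:", q)
print("scale:", round(scale, 4), "max error:", round(max_error, 4))
```

Each restored weight is off by at most half a quantization step, which is the "loss of quality" the video analogy refers to.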

By using quantization, you can run LLMs on devices with less memory and still achieve impressive performance gains. In the benchmarks above, both GPUs generate tokens at least 2.5x faster with the Q4_K_M version of Llama 3 8B than with the F16 version, because far less data has to be read from memory for every token.
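A quick back-of-the-envelope sketch shows what quantization means for memory. It assumes nominal parameter counts (8 billion and 70 billion) and a nominal 4 bits per weight for the quantized case; real Q4_K_M files are somewhat larger because they also store scale factors and keep some tensors at higher precision. Only the weights are counted, not the KV cache or activations.

```python
def weight_memory_gb(num_params, bits_per_weight):
    """Approximate weight storage in GB (1 GB = 1e9 bytes), weights only."""
    return num_params * bits_per_weight / 8 / 1e9


TOTAL_VRAM_GB = 80  # 1 x 80 GB (A100) or 4 x 20 GB (RTX 4000 x4)

# (label, assumed parameter count, assumed bits per weight)
CONFIGS = [
    ("Llama 3 8B F16", 8e9, 16),
    ("Llama 3 8B 4-bit", 8e9, 4),
    ("Llama 3 70B F16", 70e9, 16),
    ("Llama 3 70B 4-bit", 70e9, 4),
]

for name, params, bits in CONFIGS:
    gb = weight_memory_gb(params, bits)
    verdict = "fits" if gb < TOTAL_VRAM_GB else "does not fit"
    print(f"{name}: ~{gb:.0f} GB of weights, {verdict} in {TOTAL_VRAM_GB} GB")
```

Under these assumptions, Llama 3 70B in F16 needs about 140 GB for weights alone, more than the 80 GB available in either setup, which may be why no figures for that configuration appear in the table.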

The A100 Advantage: A Closer Look

So, why is the A100 such a beast for LLMs? Let's break down its key strengths:

Tensor Cores: The Powerhouse of Deep Learning

The A100 is equipped with specialized hardware called Tensor Cores, specifically designed to accelerate matrix multiplications, the heart of deep learning algorithms. These cores matter most during prompt processing, where many tokens are pushed through the model at once. The RTX 4000 Ada has Tensor Cores too, so they do not explain the whole gap on their own.

Memory Bandwidth: Getting to the Data Quickly

The A100 boasts high memory bandwidth, meaning it can move data between its onboard memory and its compute units at blazing speeds. This is crucial for LLMs, because generating each token requires reading the model's weights from memory. With faster data access, the A100 can generate tokens much more quickly.
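You can get a feel for this from the benchmark numbers themselves. The sketch below estimates how much weight data each setup must be reading per second to hit its measured generation rate. It assumes a nominal 8 billion parameters at 2 bytes each for Llama 3 8B F16, and that every weight is read once per generated token, so treat the result as a rough lower bound rather than a measurement.

```python
def implied_read_bandwidth_gbs(num_params, bytes_per_weight, tokens_per_second):
    """GB/s of weight reads implied by a generation rate (each weight read once per token)."""
    model_bytes = num_params * bytes_per_weight
    return model_bytes * tokens_per_second / 1e9


# Generation rates for Llama 3 8B F16 from the comparison table.
a100_gbs = implied_read_bandwidth_gbs(8e9, 2, 54.56)
rtx_gbs = implied_read_bandwidth_gbs(8e9, 2, 20.58)
print(f"A100: ~{a100_gbs:.0f} GB/s, RTX 4000 x4: ~{rtx_gbs:.0f} GB/s")
```

Under these assumptions the A100 is streaming on the order of 870 GB/s of weights and the RTX 4000 x4 on the order of 330 GB/s, which lines up with the generation gap in the table.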

PCIe: A Faster Connection

The A100 PCIe connects to the host system over the PCIe (Peripheral Component Interconnect Express) bus, which carries data between system memory and the GPU, for example when model weights are loaded. Because an entire model fits in the card's own 80GB, no traffic between GPUs is needed during inference, whereas a model split across four cards has to pass intermediate results from one card to the next.

The RTX 4000 x4: A Worthwhile Alternative

Despite being outperformed by the A100, the RTX 4000 x4 still holds its own, particularly for smaller LLMs and specific use cases:

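Scalability: Four GPUs, Pooled Memory

The RTX 4000 x4 is a multi-GPU setup: four 20GB cards that together provide 80GB of VRAM, the same total as the single A100. That pooled memory is what lets it load a model as large as Llama 3 70B Q4_K_M at all. Four cards do not deliver four times the speed, though: in the benchmarks above the setup reaches between roughly one third and three fifths of the A100's throughput. The real benefit is flexibility, since you can start with fewer cards and add more as your models grow.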
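One common way to use several GPUs is to give each card a contiguous slice of the model's layers. The sketch below shows that bookkeeping for Llama 3 70B, which has 80 transformer layers. It is an illustration only: the benchmark source does not say how the model was actually distributed, and the weight size assumes a nominal 4 bits per weight.

```python
def split_layers(num_layers, num_gpus):
    """Assign contiguous (start, end) layer ranges to GPUs as evenly as possible."""
    base, extra = divmod(num_layers, num_gpus)
    ranges, start = [], 0
    for gpu in range(num_gpus):
        count = base + (1 if gpu < extra else 0)
        ranges.append((start, start + count))
        start += count
    return ranges


NUM_LAYERS = 80                      # Llama 3 70B
weights_gb = 70e9 * 4 / 8 / 1e9      # ~35 GB at an assumed nominal 4 bits/weight

for gpu, (start, end) in enumerate(split_layers(NUM_LAYERS, 4)):
    share_gb = weights_gb * (end - start) / NUM_LAYERS
    print(f"GPU {gpu}: layers {start}-{end - 1}, ~{share_gb:.2f} GB of weights")
```

With a split like this, each token passes through the cards one after another, so for a single request the GPUs largely take turns instead of working at the same time. That is one plausible reason four cards do not come close to four times the speed of one.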

Cost-Effectiveness: A Balanced Approach

The RTX 4000 x4 offers a good balance between performance and cost. While the A100 might be the king of the hill, it also comes with a hefty price tag. The RTX 4000 x4 provides a more affordable option for those who don't need the absolute top performance.

Conclusion: The Right GPU for the Job

The choice between the NVIDIA A100 PCIe 80GB and NVIDIA RTX 4000 Ada 20GB x4 boils down to your needs and budget:

- A100: The A100 is the ultimate choice for large-scale LLM applications, research, and development.

- RTX 4000 x4: The RTX 4000 x4 is a cost-effective option for smaller LLMs, simpler tasks, and those who value scalability.

FAQ

What are LLMs?

LLMs are large language models, powerful Artificial Intelligence systems trained on massive amounts of text data. They can understand and generate human-like language, and they're used in a wide variety of applications, including chatbots, translation services, and AI-powered writing assistants.

What does "Q4_K_M" mean?

"Q4_K_M" is the name of a quantization format used by llama.cpp to shrink models and speed up inference. "Q4" indicates that most weights are stored with roughly 4 bits each, "K" refers to the "k-quant" family of block-wise quantization methods, and "M" stands for the medium variant (alongside small and large variants), which keeps some tensors at higher precision to limit the loss of accuracy.

What are the differences between FP16 and Q4?

FP16 (16-bit floating-point) is the standard data type used in many ML models. Q4 (4-bit integer) is a quantized version that uses smaller numbers, resulting in a smaller model size and faster processing, but potentially with some loss of accuracy.

What are other popular GPUs for running LLMs?

Besides the A100 and RTX 4000, other popular GPUs for running LLMs include:

- NVIDIA A40: A data center GPU from the same Ampere generation as the A100, with 48GB of GDDR6 memory; it offers less memory and lower memory bandwidth than the A100 80GB

- NVIDIA RTX 4090: A high-end gaming GPU with 24GB of VRAM and excellent performance on models that fit in that memory, but it cannot hold models as large as those a single A100 80GB can

- AMD MI250: A powerful GPU designed for AI applications, particularly in the datacenter space

What about CPU-based LLM inference?

While GPUs are generally more powerful for running LLMs, you can also run them on CPUs. However, the performance will be significantly slower. CPUs are typically better suited for smaller LLMs or tasks that don't require massive computational power.

Keywords

LLM, Large Language Model, GPU, NVIDIA, A100, RTX 4000, Ada, Token Generation, Processing, Quantization, Q4 K_M, F16, Performance, Benchmark, Comparison, Local Inference, Llama 3, AI, Deep Learning, Tensor Cores, Memory Bandwidth, PCIe, Scalability, Efficiency, Cost-Effectiveness, Use Cases