Which is Better for Running LLMs Locally: NVIDIA RTX 4000 Ada 20GB or NVIDIA A100 PCIe 80GB? Ultimate Benchmark Analysis

Introduction

The world of large language models (LLMs) is exploding, with new models like Llama 3 (and its variants) pushing the boundaries of what's possible. But running these models locally can feel like a race against your computer's limits, especially when dealing with the massive computational demands of these AI behemoths. That's where dedicated GPUs like the NVIDIA RTX 4000 Ada 20GB and the NVIDIA A100 PCIe 80GB come into play.

This article dives deep into the performance and capabilities of these two GPUs when running Llama 3 models locally. We'll explore their strengths and weaknesses, analyze their performance in key metrics such as token generation and prompt processing speed, and offer practical recommendations for choosing the right GPU for your specific use case.

NVIDIA RTX 4000 Ada 20GB vs. NVIDIA A100 PCIe 80GB: A Performance Showdown

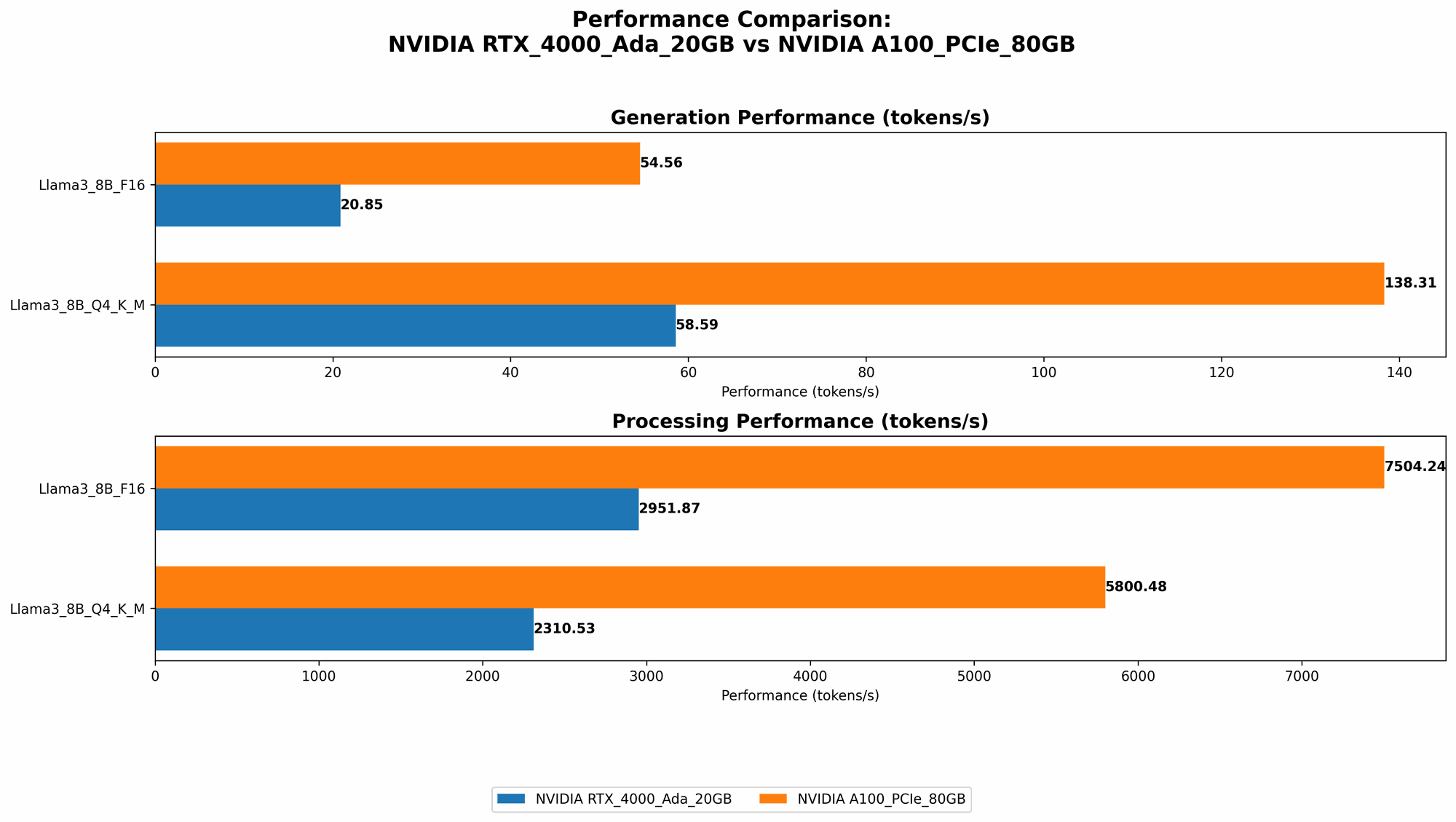

Let's get down to brass tacks! We've benchmarked both the NVIDIA RTX 4000 Ada 20GB and the NVIDIA A100 PCIe 80GB with the 8B and 70B variants of Llama 3, each at Q4_K_M quantization and F16 precision, measuring both token generation and prompt processing speed. The results will reveal who reigns supreme in the LLM performance arena.

Comparison of NVIDIA RTX 4000 Ada 20GB and NVIDIA A100 PCIe 80GB for Llama 3 LLM Models

| Model, precision, and test | NVIDIA RTX 4000 Ada 20GB (tokens/second) | NVIDIA A100 PCIe 80GB (tokens/second) |
|---|---|---|
| Llama 3 8B Q4_K_M, generation | 58.59 | 138.31 |
| Llama 3 8B F16, generation | 20.85 | 54.56 |
| Llama 3 8B Q4_K_M, prompt processing | 2310.53 | 5800.48 |
| Llama 3 8B F16, prompt processing | 2951.87 | 7504.24 |
| Llama 3 70B Q4_K_M, generation | N/A | 22.11 |
| Llama 3 70B F16, generation | N/A | N/A |
| Llama 3 70B Q4_K_M, prompt processing | N/A | 726.65 |
| Llama 3 70B F16, prompt processing | N/A | N/A |

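Important Note: We couldn't gather data for the NVIDIA RTX 4000 Ada 20GB when running the Llama 3 70B model because the model does not fit in the card's 20GB of memory, even at Q4_K_M. The A100's 70B F16 entries are also missing, which is consistent with the same limit: at 2 bytes per parameter, the F16 weights alone take roughly 140GB, well beyond the card's 80GB. This emphasizes the critical role of GPU memory capacity when dealing with larger LLMs.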
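A quick back-of-the-envelope check shows why those cells are empty. The sketch below estimates the size of the weights alone as parameters × bits per weight ÷ 8 and compares it with each card's memory. The parameter counts (about 8.03B and 70.55B) and the ~4.85 bits per weight used for Q4_K_M are approximations supplied for illustration, not figures from the benchmark, and the estimate ignores the KV cache and runtime buffers.

```python
# Approximate parameter counts (assumption: rounded public figures for Llama 3).
PARAMS = {"Llama 3 8B": 8.03e9, "Llama 3 70B": 70.55e9}

# Bits stored per weight. F16 is exactly 16; the Q4_K_M value is an assumed
# average, because the format stores block scales alongside 4-bit values.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}

# Memory capacity of each card in GB.
VRAM_GB = {"RTX 4000 Ada 20GB": 20.0, "A100 PCIe 80GB": 80.0}


def weight_gb(params: float, bits: float) -> float:
    """Size of the weights alone in GB (1 GB = 1e9 bytes)."""
    return params * bits / 8 / 1e9


def weights_fit(model: str, quant: str, gpu: str) -> bool:
    """True if the weights alone are smaller than the card's memory.

    This is necessary but not sufficient: the KV cache and runtime
    buffers also need room.
    """
    return weight_gb(PARAMS[model], BITS_PER_WEIGHT[quant]) < VRAM_GB[gpu]


for model in PARAMS:
    for quant in BITS_PER_WEIGHT:
        size = weight_gb(PARAMS[model], BITS_PER_WEIGHT[quant])
        fits = {gpu: weights_fit(model, quant, gpu) for gpu in VRAM_GB}
        print(f"{model} {quant}: ~{size:.1f} GB of weights, fits: {fits}")
```

With these assumptions the 70B model needs roughly 43GB at Q4_K_M and about 141GB at F16, which matches the pattern of N/A entries in the table.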

Performance Analysis: Unveiling the Winner

Token Generation Speed: A100's Unmatched Prowess

The A100 PCIe 80GB clearly takes the lead in token generation speed. It delivers a whopping 2.36x speed advantage over the RTX 4000 Ada 20GB when running the Llama 3 8B model with Q4_K_M quantization (138.31 vs. 58.59 tokens/second).

For those unfamiliar with the term "quantization," it's a technique that compresses the model by storing its weights at lower precision while preserving most of its accuracy. Think of it like reducing the resolution of a photo: you lose some detail, but it takes up far less space. Q4_K_M is one specific 4-bit quantization type from the llama.cpp ecosystem, and it's a popular choice because it strikes a good balance between size, speed, and output quality.
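To make the idea concrete, here is a minimal sketch of block-wise 4-bit quantization in plain Python. It is a simplified symmetric scheme (one scale per block of 32 weights, integer codes from -7 to 7), closer in spirit to llama.cpp's basic Q4_0 format than to Q4_K_M, which uses a more elaborate layout with additional per-block parameters. The random "weights" are synthetic stand-ins, not values from a real model.

```python
import random


def quantize_block(weights: list[float]) -> tuple[float, list[int]]:
    """Symmetric 4-bit quantization of one block (simplified, not Q4_K_M).

    Returns one scale for the whole block plus an integer code in [-7, 7]
    for each weight.
    """
    amax = max(abs(w) for w in weights)
    scale = amax / 7 if amax > 0 else 1.0
    codes = [max(-7, min(7, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale: float, codes: list[int]) -> list[float]:
    """Reconstruct approximate weights from the scale and codes."""
    return [c * scale for c in codes]


random.seed(0)
block = [random.gauss(0.0, 0.02) for _ in range(32)]  # synthetic weights
scale, codes = quantize_block(block)
restored = dequantize_block(scale, codes)

max_err = max(abs(w - r) for w, r in zip(block, restored))
print(f"scale = {scale:.5f}, max abs error = {max_err:.5f}")

# Storage if the scale is kept as a 16-bit float: 32 codes x 4 bits + 16 bits.
f16_bits = 32 * 16
q4_bits = 32 * 4 + 16
print(f"F16: {f16_bits} bits, 4-bit: {q4_bits} bits ({q4_bits / 32} bits/weight)")
```

The 32-weight block shrinks from 512 bits in F16 to 144 bits (about 4.5 bits per weight), and rounding limits each weight's error to at most half the block's scale, which is the "lose some detail, save a lot of space" trade-off in miniature.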

The A100's lead grows even larger at F16 precision: it outperforms the RTX 4000 Ada 20GB by a factor of 2.62 (54.56 vs. 20.85 tokens/second), illustrating the significant impact of its architecture and memory bandwidth on token generation.
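Why does memory bandwidth matter so much? When generating one token at a time, the GPU has to read roughly all of the model's weights for every new token, so peak memory bandwidth divided by model size gives a rough ceiling on generation speed. The sketch below compares that ceiling with the measured 8B results. The bandwidth figures (360 GB/s for the RTX 4000 Ada, 1,935 GB/s for the A100 PCIe 80GB) are published spec-sheet values and the model sizes are approximations; neither comes from this benchmark.

```python
# Published peak memory bandwidths in GB/s (assumption: vendor spec sheets,
# not measured in this benchmark).
BANDWIDTH_GBPS = {"RTX 4000 Ada 20GB": 360.0, "A100 PCIe 80GB": 1935.0}

# Approximate weight sizes in GB (assumption: ~8.03B params, ~4.85 bits/weight for Q4_K_M).
MODEL_GB = {"Llama 3 8B Q4_K_M": 4.87, "Llama 3 8B F16": 16.06}

# Measured generation speeds from the benchmark table (tokens/second).
MEASURED = {
    ("RTX 4000 Ada 20GB", "Llama 3 8B Q4_K_M"): 58.59,
    ("A100 PCIe 80GB", "Llama 3 8B Q4_K_M"): 138.31,
    ("RTX 4000 Ada 20GB", "Llama 3 8B F16"): 20.85,
    ("A100 PCIe 80GB", "Llama 3 8B F16"): 54.56,
}


def ceiling_tps(gpu: str, model: str) -> float:
    """Upper bound on single-stream generation speed: each token reads
    (roughly) every weight once, so bandwidth / size caps tokens per second."""
    return BANDWIDTH_GBPS[gpu] / MODEL_GB[model]


for (gpu, model), tps in MEASURED.items():
    ceiling = ceiling_tps(gpu, model)
    print(f"{gpu:18} {model:18} measured {tps:7.2f}  "
          f"ceiling ~{ceiling:6.1f}  ({tps / ceiling:.0%} of ceiling)")
```

Both cards land below their ceilings, as expected for a memory-bound workload. The A100 sits further from its ceiling, so its measured lead (about 2.4x to 2.6x) is smaller than the roughly 5.4x gap in raw bandwidth.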

Llama 3 70B: A100's Unrivaled Advantage

When we move to the larger, more demanding Llama 3 70B model, the A100's superiority becomes even more apparent. The RTX 4000 Ada 20GB cannot hold the model in its 20GB of memory even at Q4_K_M, so we have no results for it, while the A100 generates 22.11 tokens/second at Q4_K_M, a reasonable speed for a model of this size. Note that neither card produced F16 results for 70B. This highlights the crucial role of sufficient memory in running larger LLMs.

Processing Power: The A100 Is the Undisputed Champion

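The A100 PCIe 80GB also excels at prompt processing. With Llama 3 8B it is about 2.5x faster than the RTX 4000 Ada 20GB (5800.48 vs. 2310.53 tokens/second at Q4_K_M, 7504.24 vs. 2951.87 at F16), and with Llama 3 70B it is the only one of the two cards that produced results, processing 726.65 tokens/second at Q4_K_M.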
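The speedup factors quoted in this article are simple ratios of the A100's throughput to the RTX 4000 Ada's. This short sketch recomputes them from the benchmark table, using only the rows where both cards have results.

```python
# Benchmark results from the table (tokens/second): (RTX 4000 Ada 20GB, A100 PCIe 80GB).
RESULTS = {
    "8B Q4_K_M generation": (58.59, 138.31),
    "8B F16 generation": (20.85, 54.56),
    "8B Q4_K_M prompt processing": (2310.53, 5800.48),
    "8B F16 prompt processing": (2951.87, 7504.24),
}

# Speedup = A100 throughput divided by RTX 4000 Ada throughput.
speedups = {name: a100 / rtx for name, (rtx, a100) in RESULTS.items()}

for name, ratio in speedups.items():
    print(f"{name}: A100 is {ratio:.2f}x faster")
```

Across all four measured 8B configurations the A100's advantage stays in a narrow band of roughly 2.4x to 2.6x.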

Think of prompt processing as the "behind-the-scenes" work the GPU does to read the text you feed it before it writes the first word of its reply. Faster prompt processing shortens that initial wait, which matters most when your prompts are long, such as when you paste in an entire document.
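A rough way to see how the two phases combine is to add prompt-processing time to generation time. The sketch below uses the Llama 3 8B Q4_K_M numbers from the table for a hypothetical request with a 2,000-token prompt and a 500-token reply. It ignores model loading and the slowdown both phases show as the context grows, so treat the output as an estimate.

```python
def response_time_s(prompt_tokens: int, output_tokens: int,
                    pp_tps: float, tg_tps: float) -> tuple[float, float]:
    """Return (time to first token, total time) in seconds.

    Simplified model: the prompt is processed at pp_tps, then each output
    token is generated at tg_tps. Overheads are ignored.
    """
    first_token = prompt_tokens / pp_tps
    total = first_token + output_tokens / tg_tps
    return first_token, total


# Llama 3 8B Q4_K_M results from the table: (prompt processing, generation).
SPEEDS = {
    "RTX 4000 Ada 20GB": (2310.53, 58.59),
    "A100 PCIe 80GB": (5800.48, 138.31),
}

# Hypothetical request size.
PROMPT_TOKENS, OUTPUT_TOKENS = 2000, 500

for gpu, (pp, tg) in SPEEDS.items():
    first, total = response_time_s(PROMPT_TOKENS, OUTPUT_TOKENS, pp, tg)
    print(f"{gpu}: first token after ~{first:.2f} s, full reply after ~{total:.1f} s")
```

Under these assumptions both cards start replying in under a second, and the full reply takes about 9.4 seconds on the RTX 4000 Ada versus about 4 seconds on the A100. For a request like this, generation speed dominates the total; prompt processing speed carries more weight as prompts get longer.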

Strengths and Weaknesses: Identifying the Ideal Application

NVIDIA RTX 4000 Ada 20GB:

Strengths:

- Lower Cost: RTX 4000 Ada 20GB cards are typically much more affordable than A100s, making them a budget-friendly option.

- Power Efficiency: The RTX 4000 Ada 20GB is rated at just 130W of maximum power, making it a good choice for users with limited power budgets.

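- Graphics and Creative Workloads: As a workstation card with display outputs and ray-tracing (RT) cores, it also handles 3D rendering, design, and other creative work, and it can run games. The A100 has no display outputs at all, which makes the RTX 4000 Ada the more versatile option for users with diverse needs.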

Weaknesses:

- Limited Memory: The 20GB of VRAM is not sufficient for running larger LLMs like Llama 3 70B, even with 4-bit quantization.

- Slower Performance: The RTX 4000 Ada 20GB falls behind the A100 by roughly 2.4x to 2.6x in both token generation and prompt processing on Llama 3 8B, and it cannot fit Llama 3 70B in memory at all.

NVIDIA A100 PCIe 80GB:

Strengths:

- Exceptional Performance: The A100 PCIe 80GB delivers top-notch performance in LLM token generation and processing.

- Massive Memory: With 80GB of HBM2e memory, this card can hold a 4-bit quantized Llama 3 70B model, although the F16 version (roughly 140GB of weights) is still too large for a single card.

- Data-Center AI Design: The A100 was built for data-center AI and high-performance computing workloads, which makes it well suited to running LLMs.

Weaknesses:

- Higher Cost: The A100 PCIe 80GB comes with a higher price tag than the RTX 4000 Ada 20GB.

- Power Consumption: The A100 PCIe 80GB is rated at up to 300W, more than twice the RTX 4000 Ada 20GB's 130W, so it needs a power supply and cooling setup that can handle the load.
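Absolute power draw and energy efficiency are not the same thing, though. This rough sketch divides the measured Llama 3 8B Q4_K_M generation speed by each card's rated maximum board power to get tokens per joule. Using rated power is an assumption: actual draw during inference was not measured in this benchmark and is usually lower, so these figures are illustrative only.

```python
# Rated maximum board power in watts (assumption: spec-sheet values,
# not power measured during the benchmark).
RATED_POWER_W = {"RTX 4000 Ada 20GB": 130.0, "A100 PCIe 80GB": 300.0}

# Llama 3 8B Q4_K_M generation speed from the table (tokens/second).
GEN_TPS = {"RTX 4000 Ada 20GB": 58.59, "A100 PCIe 80GB": 138.31}

# One watt is one joule per second, so (tokens/s) / W gives tokens per joule.
tokens_per_joule = {gpu: GEN_TPS[gpu] / RATED_POWER_W[gpu] for gpu in GEN_TPS}

for gpu, tpj in tokens_per_joule.items():
    print(f"{gpu}: ~{tpj:.2f} tokens per joule at rated power")
```

Under these assumptions the two cards come out roughly even (about 0.45 vs. 0.46 tokens per joule), which suggests the RTX 4000 Ada's appeal is its lower absolute power draw rather than a clear edge in energy per token; measured power would be needed to settle the question.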

Practical Use Case Recommendations: Matching the GPU to Your Needs

- For researchers and developers working with smaller LLMs like Llama 3 8B: The RTX 4000 Ada 20GB is a cost-effective choice. Its performance is still solid (58.59 tokens/second with Q4_K_M), and it offers a good balance between power consumption and performance.

- For serious LLM enthusiasts who want to experiment with the latest and largest models like Llama 3 70B, and prioritize performance: The A100 PCIe 80GB is your ultimate weapon. Its ample memory and exceptional processing capabilities make it a suitable choice for tackling large-scale LLMs.

- For users with limited power budgets or who require a card for both gaming and LLM tasks: The RTX 4000 Ada 20GB strikes a balance between performance and power efficiency, making it a viable option for those with diverse needs.

Conclusion: Choosing the Right GPU is Key

Choosing the right GPU is crucial for unleashing the full potential of your LLMs. The NVIDIA RTX 4000 Ada 20GB offers a solid balance of performance and affordability, making it a suitable option for running smaller LLMs like Llama 3 8B. However, for researchers and enthusiasts working with larger models like Llama 3 70B, the NVIDIA A100 PCIe 80GB is the clear winner of this matchup, running roughly 2.4x to 2.6x faster on Llama 3 8B and offering the memory capacity needed to run a quantized 70B model.

Ultimately, the best choice depends on your specific requirements, budget, and power constraints. By considering the strengths and weaknesses of each GPU, you can make an informed decision that empowers you with the optimal LLM running experience.

Frequently Asked Questions (FAQ)

- What are LLMs? LLMs are large language models, a type of artificial intelligence that can understand and generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way.

- What is quantization? Quantization is a technique that compresses LLMs by storing their weights at lower precision (for example, 4 bits instead of 16), which reduces their memory footprint and usually speeds up token generation. Think of it like reducing the resolution of a photo, with some loss of detail but significant space savings.

- What are tokens? In the context of LLMs, tokens are the smallest units of text that the model processes. Think of them as individual words or parts of words that the model understands.

- Can I run LLMs on my CPU? While possible, CPUs are generally not as efficient as GPUs when it comes to running LLMs, especially larger ones. GPUs are specifically designed for parallel processing tasks, making them ideal for handling the complex calculations involved in LLM operations.

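- How much memory do I need to run Llama 3 70B? It depends on the precision. With 4-bit quantization such as Q4_K_M, the weights take over 40GB, which is why the model runs on the A100 PCIe 80GB but not on the RTX 4000 Ada 20GB. At F16 the weights alone need roughly 140GB, more than a single A100 80GB provides. In every case you also need room for the KV cache, which grows with context length.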
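On top of the weights, the runtime needs memory for the KV cache, which can be estimated from the model's architecture: for every token in the context, each layer stores one key and one value vector per KV head. The sketch below assumes the published Llama 3 configurations (32 layers for 8B, 80 for 70B, with 8 KV heads and a head dimension of 128 in both, thanks to grouped-query attention), a 16-bit cache, and the original 8,192-token context window.

```python
def kv_cache_bytes(layers: int, kv_heads: int, head_dim: int,
                   context_tokens: int, bytes_per_value: int = 2) -> int:
    """Memory for the key/value cache: one key and one value vector
    per layer, per KV head, per token in the context."""
    return 2 * layers * kv_heads * head_dim * context_tokens * bytes_per_value


GIB = 1024 ** 3
CONTEXT = 8192  # assumption: Llama 3's original context length

# Assumed architecture values: (layers, KV heads, head dimension).
CONFIGS = {"Llama 3 8B": (32, 8, 128), "Llama 3 70B": (80, 8, 128)}

for model, (layers, kv_heads, head_dim) in CONFIGS.items():
    size = kv_cache_bytes(layers, kv_heads, head_dim, CONTEXT)
    print(f"{model}: {size / GIB:.1f} GiB of F16 KV cache at {CONTEXT} tokens")
```

That works out to 1.0 GiB for the 8B model and 2.5 GiB for the 70B model at full context: modest next to the weights, but it grows linearly with context length and has to fit alongside them.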

Keywords

LLM, Llama 3, NVIDIA RTX 4000 Ada 20GB, NVIDIA A100 PCIe 80GB, GPU, token generation, processing power, memory capacity, quantization, Q4_K_M, F16, tokenization, performance benchmark, AI, deep learning, local inference, computational power, model size, memory limitations.