Which Is Better for Running LLMs Locally: NVIDIA L40S 48GB or NVIDIA RTX 4000 Ada 20GB x4? Ultimate Benchmark Analysis

Introduction

Large Language Models (LLMs) are revolutionizing the way we interact with technology. These powerful AI systems can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way, like a human would. But running LLMs—especially the larger ones—can require immense computational power.

This article dives deep into the performance of two hardware options for running LLMs locally: the mighty NVIDIA L40S 48GB and the more affordable but still powerful NVIDIA RTX 4000 Ada 20GB x4 configuration (four cards working together). We'll compare their performance on Llama 3 models using published benchmark data. Let's see which one emerges as the champion for your local LLM endeavors!

The Contenders: NVIDIA L40S 48GB vs. NVIDIA RTX 4000 Ada 20GB x4

The NVIDIA L40S 48GB: A Heavyweight Champion

The NVIDIA L40S 48GB packs a punch with its 48GB of GDDR6 memory, enough to hold large quantized models on a single card. Its memory bandwidth and compute power deliver strong performance across a range of scenarios. Think of it as the LeBron James of GPUs, built to take on demanding workloads on its own.

The NVIDIA RTX 4000 Ada 20GB x4 Configuration: A Four-Pronged Attack

Instead of relying on a single high-end card, the RTX 4000 Ada 20GB x4 configuration uses four RTX 4000 Ada 20GB GPUs, for 80GB of memory in total. A multi-GPU setup can be advantageous for certain tasks, especially if you can distribute the workload effectively across the four cards. Think of it as a well-coordinated basketball team: each player contributes to the overall success, but no single player is as strong as the dominant L40S 48GB.
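To make "distributing the workload" a bit more concrete, here is a minimal sketch of layer-wise splitting, where each card holds a contiguous slice of the model's layers in proportion to its memory. The function name `split_layers` is hypothetical and written for illustration only; it is not the benchmark's code or any library's API. The layer counts (32 for Llama 3 8B, 80 for Llama 3 70B) are the published architecture sizes.

```python
def split_layers(n_layers: int, vram_gb: list[float]) -> list[int]:
    """Assign layer counts to GPUs in proportion to each GPU's VRAM."""
    total = sum(vram_gb)
    # Ideal (fractional) share of layers for each GPU.
    shares = [n_layers * v / total for v in vram_gb]
    counts = [int(s) for s in shares]
    # Hand out the layers lost to rounding, largest remainder first.
    leftovers = n_layers - sum(counts)
    by_remainder = sorted(
        range(len(vram_gb)),
        key=lambda i: shares[i] - counts[i],
        reverse=True,
    )
    for i in by_remainder[:leftovers]:
        counts[i] += 1
    return counts


# Llama 3 70B has 80 transformer layers.
four_cards = split_layers(80, [20, 20, 20, 20])
single_card = split_layers(80, [48])
print("4x 20GB cards:", four_cards)
print("1x 48GB card: ", single_card)
```

With a split like this, a single request still passes through the cards one after another, which is one plausible reason why four cards do not automatically mean four times the generation speed.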

Benchmark Breakdown: Unveiling the Performance Champions

We'll analyze the performance of these two configurations on the popular Llama 3 model in various settings:

- Llama 3 8B: This is a smaller model, making it more suitable for experimentation and lighter workloads.

- Llama 3 70B: This is a larger model requiring more resources and suitable for demanding applications.

The tests are conducted with the following parameters:

- Quantization: Q4_K_M (llama.cpp's 4-bit "k-quant" format, medium variant) and F16 (16-bit floating point, unquantized). Quantization reduces the memory footprint and usually speeds up generation, because fewer bytes per weight have to be read from GPU memory.

- Task: Token generation (producing new output tokens one at a time) and token processing (evaluating the input prompt before generation begins).
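The memory savings from quantization can be estimated with simple arithmetic. The sketch below is a rough estimate, not a measurement: it assumes roughly 4.8 bits per weight for Q4_K_M (an approximation, since the format mixes block types and stores scales alongside the weights) and it counts only the weights, ignoring the KV cache and runtime buffers. The helper name `weight_size_gb` is hypothetical.

```python
# Approximate storage cost per weight. The Q4_K_M figure is an assumed
# average; the real value varies slightly by model.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.8}


def weight_size_gb(n_params_billion: float, quant: str) -> float:
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    bits = n_params_billion * 1e9 * BITS_PER_WEIGHT[quant]
    return bits / 8 / 1e9


for params in (8, 70):
    for quant in ("F16", "Q4_K_M"):
        size = weight_size_gb(params, quant)
        print(f"Llama 3 {params}B {quant}: ~{size:.1f} GB of weights")
```

Under these assumptions, a 70B model in F16 needs far more memory than either configuration offers, while the Q4_K_M version comes in under 48GB.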

Data Source: The benchmark data is sourced from the llama.cpp repository and the GPU Benchmarks on LLM Inference project.

Performance Analysis: Unveiling the Champions

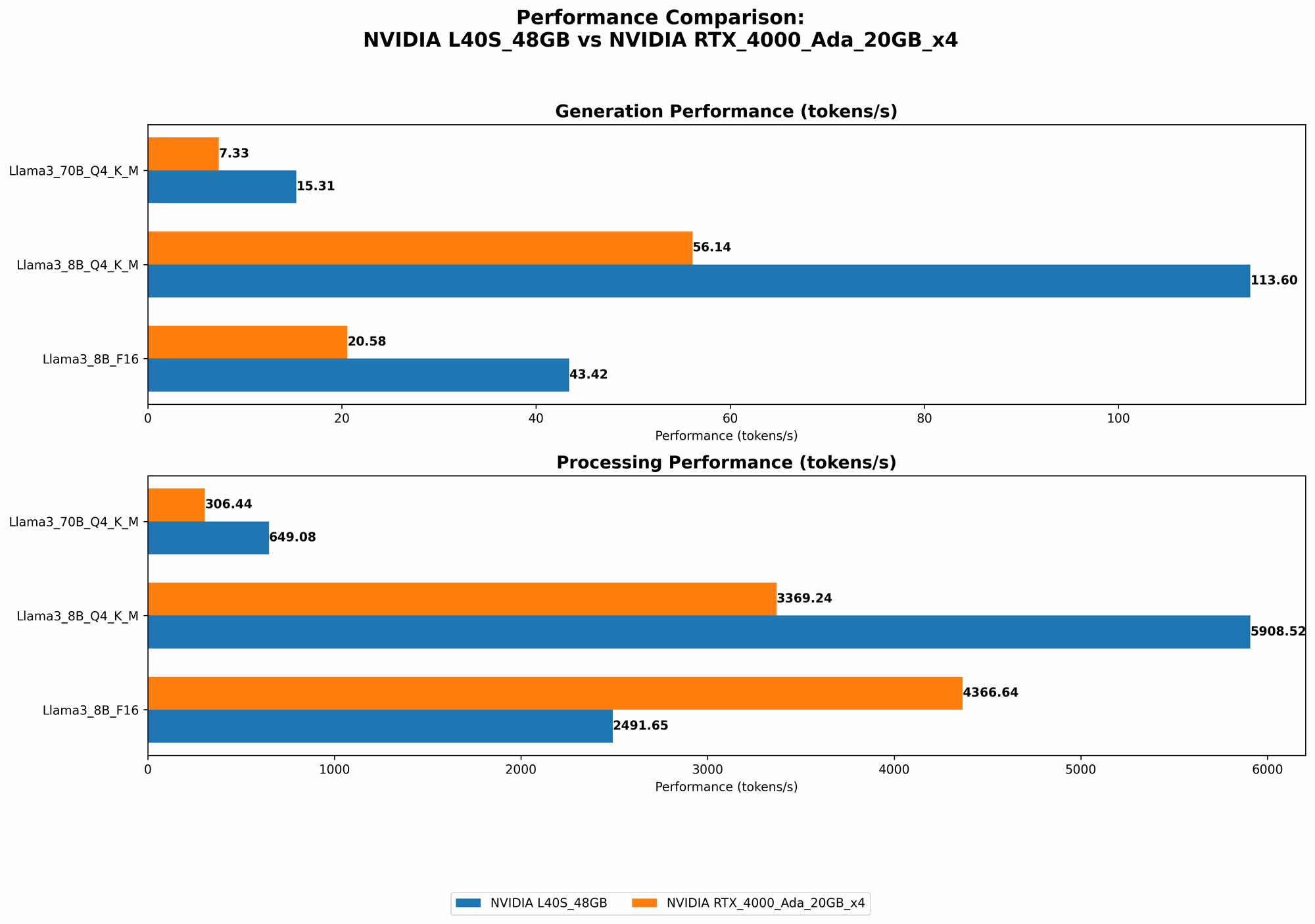

Comparison of NVIDIA L40S 48GB and NVIDIA RTX 4000 Ada 20GB x4 for Llama 3 8B

| Configuration | Q4_K_M Token Generation (tokens/sec) | F16 Token Generation (tokens/sec) | Q4_K_M Token Processing (tokens/sec) | F16 Token Processing (tokens/sec) |
|---|---|---|---|---|
| NVIDIA L40S 48GB | 113.6 | 43.42 | 5908.52 | 2491.65 |
| NVIDIA RTX 4000 Ada 20GB x4 | 56.14 | 20.58 | 3369.24 | 4366.64 |

Analysis:

- Token Generation: As you can see, the L40S 48GB reigns supreme, generating tokens 2.02x faster with Q4_K_M quantization and 2.11x faster with F16. This translates to noticeably faster response times and a smoother user experience for text generation.

- Token Processing: The results are split. With Q4_K_M, the L40S 48GB has a 1.75x advantage. With F16, the RTX 4000 Ada 20GB x4 configuration surprises: it surpasses the L40S 48GB by 1.75x.

Conclusion: For the Llama 3 8B model, the L40S 48GB is the clear winner for token generation in both formats and for token processing with Q4_K_M. However, the RTX 4000 Ada 20GB x4 is surprisingly strong in F16 token processing, making it a contender if you want to run the unquantized F16 model and your workload is dominated by long prompts.
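The ratios quoted in this section are each just one throughput divided by the other. Here is a small sketch that recomputes them from the Llama 3 8B table; the dictionary keys and the helper name `l40s_speedup` are made up for this example.

```python
# Llama 3 8B results from the table above, in tokens/sec.
L40S = {
    "Q4_K_M generation": 113.6,
    "F16 generation": 43.42,
    "Q4_K_M processing": 5908.52,
    "F16 processing": 2491.65,
}
RTX_X4 = {
    "Q4_K_M generation": 56.14,
    "F16 generation": 20.58,
    "Q4_K_M processing": 3369.24,
    "F16 processing": 4366.64,
}


def l40s_speedup(metric: str) -> float:
    """L40S throughput divided by the four-card throughput.

    Values above 1.0 favor the L40S; values below 1.0 favor the
    four-card configuration.
    """
    return L40S[metric] / RTX_X4[metric]


ratios = {metric: round(l40s_speedup(metric), 2) for metric in L40S}
for metric, ratio in ratios.items():
    winner = "L40S" if ratio > 1 else "RTX 4000 Ada x4"
    print(f"{metric}: {ratio:.2f}x ({winner} ahead)")
```

Keeping the ratio in one direction (L40S over the four-card setup) makes the single exception, F16 processing, stand out as the only value below 1.0.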

Comparison of NVIDIA L40S 48GB and NVIDIA RTX 4000 Ada 20GB x4 for Llama 3 70B

| Configuration | Q4_K_M Token Generation (tokens/sec) | F16 Token Generation (tokens/sec) | Q4_K_M Token Processing (tokens/sec) | F16 Token Processing (tokens/sec) |
|---|---|---|---|---|
| NVIDIA L40S 48GB | 15.31 | N/A | 649.08 | N/A |
| NVIDIA RTX 4000 Ada 20GB x4 | 7.33 | N/A | 306.44 | N/A |

Analysis:

- Token Generation: The L40S 48GB demonstrates its dominance once again, outperforming the RTX 4000 Ada 20GB x4 by 2.09x in Q4_K_M token generation. A likely explanation is that a single card avoids communication between GPUs and offers more memory bandwidth and compute than any one of the four smaller cards.

- Token Processing: The L40S 48GB also excels in token processing for the 70B model, running 2.12x faster than the multi-GPU setup.

Conclusion: The L40S 48GB emerges as the clear champion for the Llama 3 70B model in both token generation and processing with Q4_K_M quantization. The RTX 4000 Ada 20GB x4 struggles to keep up with the demands of the larger model. No F16 results are listed for either configuration at this size.
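To get a feel for what these throughput numbers mean in practice, you can turn them into a rough response time. The sketch below uses a deliberately simple model (prompt time plus generation time) and a hypothetical request of 1,000 prompt tokens and 500 output tokens. It ignores model loading, sampling overhead, and any slowdown at longer contexts, so treat the result as a ballpark figure only.

```python
def response_time_s(
    prompt_tokens: int,
    output_tokens: int,
    processing_tps: float,
    generation_tps: float,
) -> float:
    """Rough end-to-end time: read the prompt, then generate the reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Hypothetical request size, chosen only for illustration.
PROMPT_TOKENS = 1000
OUTPUT_TOKENS = 500

# Llama 3 70B Q4_K_M throughput from the table above (tokens/sec).
l40s_time = response_time_s(PROMPT_TOKENS, OUTPUT_TOKENS, 649.08, 15.31)
rtx_x4_time = response_time_s(PROMPT_TOKENS, OUTPUT_TOKENS, 306.44, 7.33)

print(f"L40S 48GB:              ~{l40s_time:.0f} s")
print(f"RTX 4000 Ada 20GB x4:   ~{rtx_x4_time:.0f} s")
```

For a request like this, almost all of the time goes into generation rather than prompt processing, so the generation column of the table is the one to watch for chat-style use.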

Practical Considerations for Choosing the Right GPU

NVIDIA L40S 48GB: The High-Performance Powerhouse

- Strengths:
  - Exceptional Performance: The L40S 48GB leads in token generation across every test above and in token processing for every test except F16 on Llama 3 8B.
  - High Memory Capacity: Its 48GB of GDDR6 memory is enough to hold Llama 3 70B with Q4_K_M quantization on a single card.
- Weaknesses:
  - High Cost: This GPU comes with a hefty price tag, which might be a barrier for budget-conscious users.
- Use Cases:
  - Running large LLMs (70B class): With 4-bit quantization, the L40S 48GB is well suited to researchers and developers working with large models.
  - High-performance applications: If you need the best possible performance for demanding tasks, this GPU is your go-to choice.

NVIDIA RTX 4000 Ada 20GB x4: The Affordable Multi-GPU Solution

- Strengths:
  - Cost-effective: The RTX 4000 Ada 20GB x4 configuration is a more budget-friendly option compared to a single L40S 48GB.
  - Scalability: The multi-GPU setup allows for potential scalability, offering flexibility to add more GPUs as your needs evolve.
- Weaknesses:
  - Performance Limitations with Larger Models: The RTX 4000 Ada 20GB x4 runs Llama 3 70B with Q4_K_M at roughly half the speed of the L40S 48GB.
  - Complexity: Managing a multi-GPU setup can be more complex than using a single GPU.
- Use Cases:
  - Running smaller LLMs (8B or less): This configuration is a good choice for working with smaller LLMs, particularly if you are starting with a limited budget.
  - Experimentation and prototyping: It's suitable for exploring different LLM models and techniques before committing to a high-end setup.

Conclusion

Choosing the right GPU for your LLM projects depends on your specific needs and budget.

If you prioritize performance and can afford the investment, the NVIDIA L40S 48GB is the ultimate choice, especially for handling larger models.

However, if budget is a concern, the NVIDIA RTX 4000 Ada 20GB x4 configuration provides a cost-effective solution, particularly for smaller LLMs. It's a good option to start with while you experiment and learn, but don't expect it to match the raw power of the L40S 48GB.

Frequently Asked Questions (FAQ)

What is Quantization?

Quantization is a technique used to reduce the size of LLM models by storing their weights with fewer bits. Imagine you have a large model, like a giant castle made of LEGO bricks. Quantization is like rebuilding the castle from a smaller set of standard brick sizes: it takes up less space and keeps its essential structure, at the cost of some fine detail. This makes the model more efficient, requiring less memory and allowing for faster processing.
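If you'd like to see the idea in code, here is a toy version: a block of weights is mapped to 4-bit signed integers that share one scale factor, then mapped back. This is a simplified symmetric scheme written for illustration; it is not the actual Q4_K_M format, which is more elaborate. The function names and sample weights are made up.

```python
def quantize_block(weights: list[float], bits: int = 4) -> tuple[list[int], float]:
    """Map floats to signed integer codes that share a single scale."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit codes
    peak = max(abs(w) for w in weights)
    scale = peak / qmax if peak > 0 else 1.0
    # Round to the nearest code and clamp to the representable range.
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return codes, scale


def dequantize_block(codes: list[int], scale: float) -> list[float]:
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


weights = [0.12, -0.53, 0.88, -0.07, 0.31, -0.95, 0.44, 0.02]
codes, scale = quantize_block(weights)
restored = dequantize_block(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:    ", codes)
print("restored: ", [round(r, 3) for r in restored])
print("max error:", round(max_error, 4))
```

Each weight now needs only 4 bits plus a share of the block's scale, and the rounding error per weight is at most half of that scale. That is the "fine detail" you give up in exchange for the smaller model.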

How Do I Choose the Right GPU for my LLM Project?

Consider these factors:

- Model size: If you're working with larger models (70B+), prioritize high memory and processing power (like the L40S 48GB).

- Budget: If you're on a budget, start with a more affordable option, such as the RTX 4000 Ada 20GB x4.

- Performance requirements: If you need the best possible performance, go for the L40S 48GB.
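The model-size factor can be turned into a quick sanity check before you buy. The sketch below compares approximate weight sizes against each configuration's total memory, leaving some headroom for the KV cache and runtime buffers. Everything here is an assumption for illustration: the weight sizes are rough estimates, the 90% headroom factor is arbitrary, and treating four 20GB cards as one 80GB pool ignores per-card overhead.

```python
# Total VRAM per configuration, in GB.
CONFIGS_GB = {
    "L40S 48GB": 48,
    "RTX 4000 Ada 20GB x4": 4 * 20,
}

# Rough weight sizes in GB (assumed: 16 bits per weight for F16,
# about 4.8 bits per weight for Q4_K_M).
MODELS_GB = {
    "Llama 3 8B Q4_K_M": 4.8,
    "Llama 3 8B F16": 16.0,
    "Llama 3 70B Q4_K_M": 42.0,
    "Llama 3 70B F16": 140.0,
}


def fits(model_gb: float, vram_gb: float, headroom: float = 0.9) -> bool:
    """True if the weights fit in the given fraction of total VRAM."""
    return model_gb <= vram_gb * headroom


for model, size in MODELS_GB.items():
    verdicts = ", ".join(
        f"{name}: {'fits' if fits(size, vram) else 'too large'}"
        for name, vram in CONFIGS_GB.items()
    )
    print(f"{model} (~{size} GB) -> {verdicts}")
```

Under these assumptions, Llama 3 70B in F16 fits neither configuration, which lines up with the N/A entries in the 70B table.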

What are the Other Options for Running LLMs Locally?

There are other GPU options available, like the NVIDIA A100 and H100, but these are even higher-end and more expensive than the L40S 48GB. You could also explore using multiple CPUs, but their performance generally falls short of dedicated GPUs.

Can I Use My Existing GPU for LLMs?

It depends on the size of the model and your GPU's specs, above all how much memory it has. A mid-range GPU like an RTX 3070 or 3080 might be sufficient for smaller quantized models and simple tasks. However, for larger models and demanding workflows, a high-end GPU with more memory is recommended.

Keywords

NVIDIA L40S 48GB, NVIDIA RTX 4000 Ada 20GB x4, LLM, Large Language Model, Llama 3, Token Generation, Token Processing, Quantization, Q4_K_M, F16, GPU, Benchmark Analysis, Performance Comparison, Local Inference, Deep Learning, AI, Machine Learning.