Which is Better for Running LLMs Locally: NVIDIA A100 PCIe 80GB or NVIDIA A100 SXM 80GB? Ultimate Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is exploding, and with it, the demand for powerful hardware to run them locally. Choosing the right hardware can make a big difference in how smooth and efficient your LLM development experience is. Today, we will be comparing two titans in the GPU world: the NVIDIA A100 PCIe 80GB and the NVIDIA A100 SXM 80GB. These GPUs are absolute beasts, offering phenomenal performance and 80 GB of HBM2e memory each, enough to hold models as large as Llama 3 70B once quantized.

But which one is the king of the hill for local LLM development? We will dive deep into their performance, analyze their strengths and weaknesses, and provide practical recommendations for various use cases. We'll be using real-world benchmarks and data, along with our own insights, to help you make the best choice for your needs. Let's get started!

Comparing the NVIDIA A100 PCIe 80GB and NVIDIA A100 SXM 80GB for LLM Performance

Both the A100 PCIe 80GB and A100 SXM 80GB are powerful GPUs, but they have some key differences that can impact their performance for LLMs. These differences come down to form factor, power limit, memory bandwidth, and how the GPUs connect to each other.

Understanding the GPU Differences: PCIe vs. SXM

The A100 PCIe 80GB and A100 SXM 80GB use the same NVIDIA Ampere GPU (GA100) with the same 80 GB of memory, but they are designed for different use cases.

A100 PCIe 80GB: A dual-slot PCIe Gen4 card with a 300 W power limit that plugs into standard servers and workstations. It is passively cooled, so the chassis must supply enough airflow.

A100 SXM 80GB: This GPU is optimized for high-performance computing (HPC) and uses NVIDIA's SXM4 module form factor, which mounts on a server baseboard (such as NVIDIA HGX) rather than in a PCIe slot. It runs at up to 400 W. SXM GPUs are specifically designed for server-grade systems where multiple GPUs are interconnected via NVLink.

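The key differences between the two lie in power, memory bandwidth, and connectivity. The SXM version has a 400 W power limit versus 300 W for PCIe, and slightly higher memory bandwidth (2,039 GB/s versus 1,935 GB/s). In HGX servers, SXM GPUs communicate over NVLink at 600 GB/s, while PCIe cards rely on the PCIe Gen4 bus (64 GB/s) unless two of them are paired with an NVLink bridge. SXM GPUs need dedicated high-powered server environments to deliver these benefits, which are more common in large data centers and academic research environments. For personal use or general development, the PCIe option is often the better choice.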

Performance Analysis: Llama 3 Model Benchmarks

Let's dive deep into the performance of these GPUs for running Llama 3 models, a popular choice for research and experimentation.

Understanding the Metrics: Token Generation and Token Processing

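Token Generation: This metric measures how quickly the GPU produces new output tokens, one at a time. It represents the core function of an LLM, as it directly determines the speed of text generation, conversation responses, and other tasks. Because every new token requires reading the model's weights from memory, generation speed is largely limited by memory bandwidth.

Token Processing: This metric (often called prompt processing) measures how quickly the GPU reads the input prompt before generation begins. All prompt tokens are handled in parallel, so this rate is far higher than the generation rate and is limited mainly by compute. It determines how long the model takes to start responding, especially with long prompts or documents.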
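To see how the two metrics combine, here is a rough sketch that estimates the time for one request as prompt tokens divided by the processing rate plus output tokens divided by the generation rate. It plugs in the A100 PCIe 80GB figures for Llama 3 8B Q4KM reported in the results below. The 512-token prompt and 256-token reply are arbitrary assumptions, and real engines slow down somewhat as the context grows, so treat the result as a ballpark.

```python
def estimate_latency_s(prompt_tokens: int, output_tokens: int,
                       pp_tokens_per_s: float, tg_tokens_per_s: float) -> float:
    """Rough time for one request, assuming constant per-token rates."""
    prefill_s = prompt_tokens / pp_tokens_per_s  # token processing: read the prompt
    decode_s = output_tokens / tg_tokens_per_s   # token generation: write the reply
    return prefill_s + decode_s


# A100 PCIe 80GB, Llama 3 8B Q4KM rates from the results table.
# The 512-token prompt and 256-token reply are assumed example sizes.
total_s = estimate_latency_s(prompt_tokens=512, output_tokens=256,
                             pp_tokens_per_s=5800.48, tg_tokens_per_s=138.31)
print(f"estimated request time: {total_s:.2f} s")
```

With these inputs, reading the prompt takes under 0.1 s while writing the reply takes about 1.85 s, which is why generation speed dominates interactive use.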

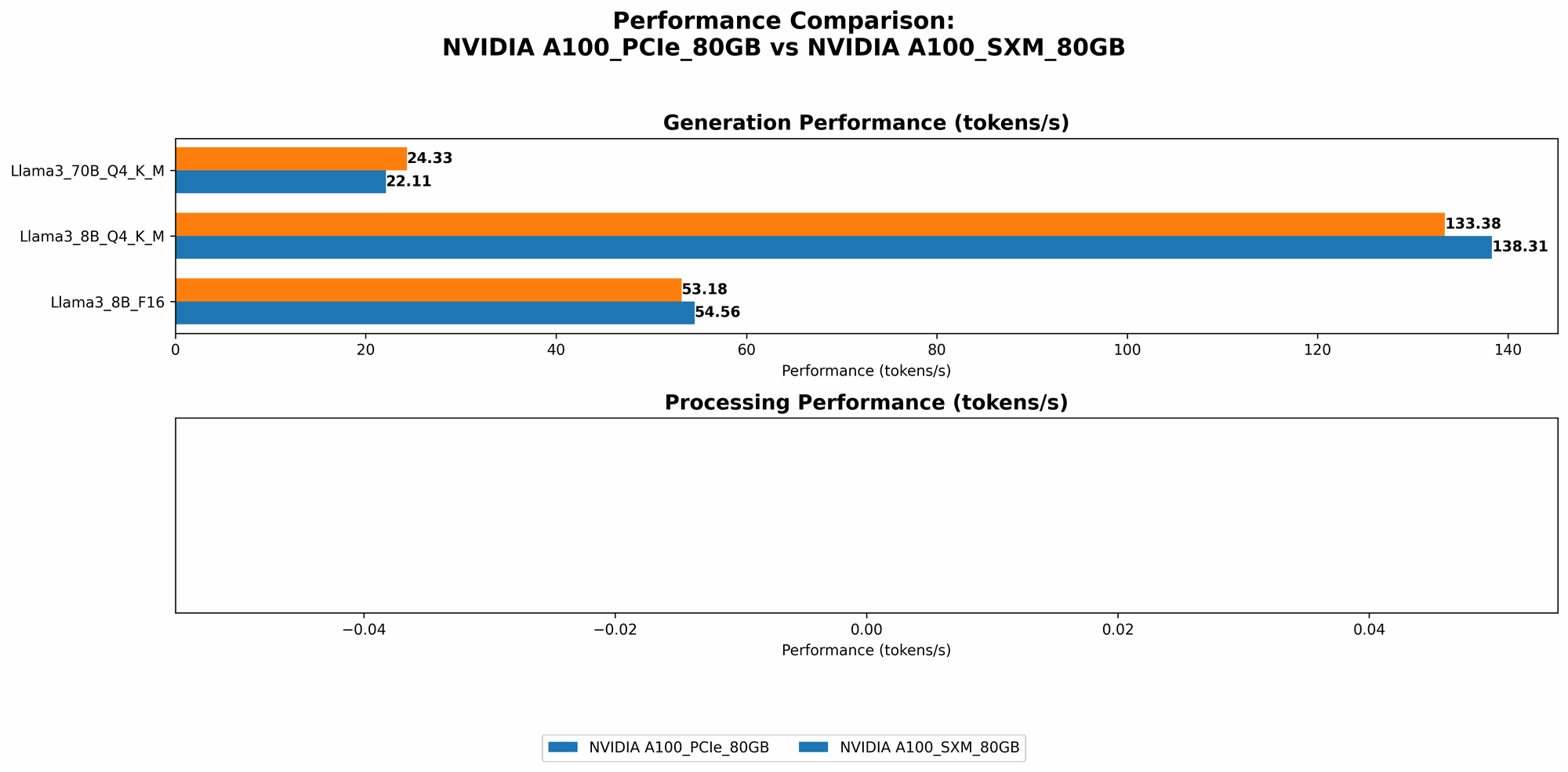

Results: A100 PCIe 80GB vs. A100 SXM 80GB

Here's a breakdown of how these GPUs compare in terms of token generation and processing for Llama 3 models using different quantization levels (Q4KM and F16).

| GPU | Model | Quantization | Token generation (tokens/s) | Token processing (tokens/s) |
|---|---|---|---|---|
| A100 PCIe 80GB | Llama 3 8B | Q4KM | 138.31 | 5800.48 |
| A100 PCIe 80GB | Llama 3 8B | F16 | 54.56 | 7504.24 |
| A100 PCIe 80GB | Llama 3 70B | Q4KM | 22.11 | 726.65 |
| A100 SXM 80GB | Llama 3 8B | Q4KM | 133.38 | n/a |
| A100 SXM 80GB | Llama 3 8B | F16 | 53.18 | n/a |
| A100 SXM 80GB | Llama 3 70B | Q4KM | 24.33 | n/a |

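- Note: There is no Llama 3 70B F16 result for either GPU. Its weights alone need roughly 141 GB, more than a single 80 GB card holds, which likely explains the gap. Token processing speeds for the A100 SXM 80GB are also missing, which indicates that either the testing wasn't conducted or the data is currently unavailable.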

Analysis: A Near Tie in Token Generation

Token generation speeds are within a few percent of each other on Llama 3 8B: the A100 PCIe 80GB is about 3.7% faster with Q4KM (138.31 vs. 133.38 tokens/s) and about 2.6% faster with F16 (54.56 vs. 53.18 tokens/s). Differences this small may come down to driver versions or system configuration rather than the GPUs themselves. On Llama 3 70B with Q4KM, the order flips: the A100 SXM 80GB is about 10% faster (24.33 vs. 22.11 tokens/s), which is consistent with its higher memory bandwidth and power limit mattering more for a larger model.

Token processing cannot be compared, because the table has no processing figures for the A100 SXM 80GB. The PCIe figures do show one notable pattern: on Llama 3 8B, F16 processes prompts faster than Q4KM (7504.24 vs. 5800.48 tokens/s), even though Q4KM generates tokens about 2.5 times faster. This is likely because the 4-bit weights must be unpacked before the compute-heavy matrix multiplications.
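The generation comparisons can be reproduced with a few lines of Python. This sketch only restates the token generation figures from the table and computes how much faster the winning card is for each model and quantization level; processing is left out because the SXM column is empty.

```python
# Token generation speeds (tokens/s) from the results table.
generation = {
    ("Llama 3 8B", "Q4KM"): {"PCIe": 138.31, "SXM": 133.38},
    ("Llama 3 8B", "F16"): {"PCIe": 54.56, "SXM": 53.18},
    ("Llama 3 70B", "Q4KM"): {"PCIe": 22.11, "SXM": 24.33},
}


def pct_faster(a: float, b: float) -> float:
    """How much faster rate a is than rate b, in percent."""
    return (a / b - 1.0) * 100.0


for (model, quant), speeds in generation.items():
    pcie, sxm = speeds["PCIe"], speeds["SXM"]
    winner = "PCIe" if pcie > sxm else "SXM"
    lead = pct_faster(max(pcie, sxm), min(pcie, sxm))
    print(f"{model} {quant}: {winner} ahead by {lead:.1f}%")
```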

Understanding Quantization: Bringing LLMs to the Masses

Quantization is like a magic trick for LLMs. It allows you to shrink the model size without sacrificing too much accuracy. Imagine squeezing a massive library into a smaller suitcase – that's what quantization does for LLMs.
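To make the idea concrete, here is a minimal sketch of 4-bit quantization for one small block of weights: each weight is divided by a shared scale, rounded to a signed 4-bit integer in [-8, 7], and multiplied back by the scale when used. This is a simplified absmax scheme chosen for illustration, the weight values are made up, and real formats such as llama.cpp's Q4_K_M use a more elaborate block layout.

```python
def quantize_block(weights: list[float]) -> tuple[float, list[int]]:
    """Simplified absmax 4-bit quantization of one block (illustrative only)."""
    amax = max(abs(w) for w in weights)
    scale = amax / 7 if amax > 0 else 1.0  # map [-amax, amax] onto [-7, 7]
    # Round to the nearest integer and clamp to the signed 4-bit range.
    q = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, q


def dequantize_block(scale: float, q: list[int]) -> list[float]:
    """Recover approximate weights from the 4-bit integers and the shared scale."""
    return [scale * v for v in q]


weights = [0.12, -0.50, 0.33, 0.07, -0.21, 0.44, -0.03, 0.29]  # made-up values
scale, q = quantize_block(weights)
restored = dequantize_block(scale, q)
max_err = max(abs(a - b) for a, b in zip(weights, restored))
print(q, f"max error {max_err:.3f}")
```

Each weight now needs only 4 bits plus a share of the block's scale, and the rounding error per weight is at most half the scale.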

Quantization Levels: F16 and Q4KM Explained

F16 (16-bit floating point, also written FP16): Each weight is stored in 16 bits, half the size of standard 32-bit floats. Llama 3's weights are distributed in a 16-bit format (BF16), so F16 is essentially the unquantized baseline: about 2 bytes per parameter, or roughly 16 GB for Llama 3 8B. Think of it like a high-resolution photo saved at full quality.

Q4KM (llama.cpp's Q4_K_M): A 4-bit "k-quant" format from the llama.cpp/GGUF ecosystem. Weights are grouped into blocks that share scale factors, and the "M" (medium) variant keeps some sensitive tensors at higher precision, so the average works out to a bit under 5 bits per weight. That cuts the memory footprint to roughly 30% of F16, about a 3.3x reduction. This is like compressing the same image to a much smaller size, sacrificing some detail but still retaining a recognizable image.
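A back-of-the-envelope way to check what fits on an 80 GB card is parameters × bits per weight ÷ 8. The sketch below assumes Meta's published parameter counts (about 8.03B and 70.6B) and an average of roughly 4.85 bits per weight for Q4KM; the real average varies by model, so check the actual file size. It counts weights only, and the KV cache and runtime buffers need additional memory.

```python
# ASSUMPTION: ~4.85 bits/weight is an approximate average for Q4_K_M,
# which mixes 4-bit blocks with some higher-precision tensors.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4KM": 4.85}
PARAMS = {"Llama 3 8B": 8.03e9, "Llama 3 70B": 70.6e9}  # approximate counts
GPU_MEMORY_GB = 80


def weight_gb(params: float, bits: float) -> float:
    """Approximate size of the weights alone, in gigabytes (1e9 bytes)."""
    return params * bits / 8 / 1e9


for model, n in PARAMS.items():
    for quant, bits in BITS_PER_WEIGHT.items():
        size = weight_gb(n, bits)
        verdict = "fit" if size < GPU_MEMORY_GB else "do NOT fit"
        print(f"{model} {quant}: ~{size:.0f} GB of weights, which {verdict} in 80 GB")
```

Under these assumptions, Llama 3 70B needs about 141 GB at F16 but about 43 GB at Q4KM, and the F16-to-Q4KM size ratio is about 3.3x.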

Benefits of Quantization

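Smaller Model Size: Lower memory requirements mean you can run bigger LLMs on less powerful hardware, even on your personal computer.

Faster Inference: Fewer bytes must be read from memory for each generated token, so token generation speeds up (about 2.5x for Llama 3 8B on the A100 PCIe 80GB). Token processing does not necessarily benefit: in the table above, F16 processes prompts faster than Q4KM.

Reduced Power Consumption: Moving less data per token generally lowers the energy spent per generated token.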

Performance Implications: Quantization and GPU Choice

F16 Quantization: Streamlining the Process

F16 offers the best accuracy of the two formats, since it is essentially the model's original precision. It's a good choice when accuracy is critical and the model fits in memory. The trade-off is size and generation speed: Llama 3 8B at F16 needs about 16 GB and generates 54.56 tokens/s on the A100 PCIe 80GB, although it posts the fastest token processing in the table (7504.24 tokens/s).

Q4KM Quantization: The Power of Efficiency

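Q4KM quantization takes efficiency to the next level. It shrinks the model to roughly a third of its F16 size, which lets Llama 3 70B fit on a single 80 GB GPU and makes token generation about 2.5 times faster on Llama 3 8B (138.31 vs. 54.56 tokens/s on the A100 PCIe 80GB). Token processing is slower than F16, however (5800.48 vs. 7504.24 tokens/s). This quantization level is ideal for resource-constrained environments or when generation speed is the top priority.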
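The trade-off between the two formats can be read straight off the A100 PCIe 80GB rows of the table. This short sketch divides the Q4KM rate by the F16 rate for each metric on Llama 3 8B; a ratio above 1 means Q4KM is faster.

```python
# Llama 3 8B on the A100 PCIe 80GB, from the results table (tokens/s).
pcie_8b = {
    "F16": {"generation": 54.56, "processing": 7504.24},
    "Q4KM": {"generation": 138.31, "processing": 5800.48},
}


def q4km_vs_f16(metric: str) -> float:
    """Q4KM rate divided by F16 rate for 'generation' or 'processing'."""
    return pcie_8b["Q4KM"][metric] / pcie_8b["F16"][metric]


for metric in ("generation", "processing"):
    print(f"{metric}: Q4KM runs at {q4km_vs_f16(metric):.2f}x the F16 rate")
```

The result is roughly 2.5x for generation but about 0.77x (around 23% slower) for token processing.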

Choosing the Right GPU: Recommendations

For users who prioritize token generation speed: Neither card has a clear edge. The A100 PCIe 80GB is a few percent faster on Llama 3 8B, while the A100 SXM 80GB is about 10% faster on Llama 3 70B Q4KM, so the SXM version has the advantage for larger models if you have a server that supports it.

For users running large models or planning multi-GPU setups: The A100 SXM 80GB might be the better choice. Its higher memory bandwidth and power limit likely explain its lead on Llama 3 70B, and in HGX servers it connects to other GPUs over NVLink. Both cards have the same 80 GB of memory, so neither holds larger models on its own.

For developers seeking maximum efficiency: Q4KM quantization is recommended on either GPU. Combined with the A100 PCIe 80GB, which fits into ordinary servers and workstations, it might offer the best blend of speed, efficiency, and model size reduction.

Performance Beyond Numbers: Other Factors to Consider

While the benchmarks provided above offer valuable insights, it's important to consider factors beyond raw token generation and processing speed.

Memory Bandwidth: The Information Superhighway

Memory bandwidth is essential for LLMs, as it determines how quickly the GPU can read the model's weights, which must be streamed from memory for every generated token. The A100 SXM 80GB has about 5% more memory bandwidth than the A100 PCIe 80GB (2,039 GB/s vs. 1,935 GB/s). This is separate from the GPU-to-GPU bandwidth that NVLink provides in multi-GPU servers. Quantization such as Q4KM complements high bandwidth: with fewer bytes per weight, each token requires less data to move, so generation gets faster.
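A common rule of thumb treats single-stream generation as memory-bound: each new token streams roughly all of the weights from memory once, so tokens/s cannot exceed bandwidth ÷ model size. The sketch below applies that ceiling to Llama 3 70B Q4KM, using NVIDIA's published bandwidth figures and an assumed model size of about 42.8 GB (70.6B parameters at roughly 4.85 bits per weight). It ignores KV-cache reads and compute, so the ceilings are loose upper bounds.

```python
BANDWIDTH_GB_S = {"A100 PCIe 80GB": 1935.0, "A100 SXM 80GB": 2039.0}
MEASURED_TG = {"A100 PCIe 80GB": 22.11, "A100 SXM 80GB": 24.33}  # Llama 3 70B Q4KM
MODEL_GB = 70.6e9 * 4.85 / 8 / 1e9  # ASSUMED ~42.8 GB of weights


def max_tokens_per_s(bandwidth_gb_s: float, model_gb: float) -> float:
    """Upper bound if every token must read all weights once from memory."""
    return bandwidth_gb_s / model_gb


for gpu, bw in BANDWIDTH_GB_S.items():
    ceiling = max_tokens_per_s(bw, MODEL_GB)
    share = MEASURED_TG[gpu] / ceiling
    print(f"{gpu}: ceiling ~{ceiling:.1f} tok/s, "
          f"measured {MEASURED_TG[gpu]} tok/s ({share:.0%} of ceiling)")

sxm_edge = BANDWIDTH_GB_S["A100 SXM 80GB"] / BANDWIDTH_GB_S["A100 PCIe 80GB"] - 1
print(f"SXM bandwidth advantage: {sxm_edge:.1%}")
```

Both cards reach roughly half of their ceiling. The SXM version's bandwidth advantage is only about 5.4%, so bandwidth alone may account for about half of its 10% lead on this model; the higher power limit and system differences could explain the rest.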

Driver Compatibility: Not All Drivers Are Created Equal

The performance of GPUs can be heavily influenced by the drivers. It's crucial to ensure that you are using the latest drivers optimized for your specific GPU and operating system. Check the NVIDIA website for the latest driver updates.
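To confirm which card and driver you are actually running, `nvidia-smi` can report them in CSV form through its `--query-gpu` and `--format` options. The sketch below wraps that call in Python. The `form_factor` field is a hypothetical heuristic based on NVIDIA's usual naming (SXM parts typically include "SXM" in the reported name), not an official API.

```python
import shutil
import subprocess

QUERY = ["nvidia-smi", "--query-gpu=name,driver_version,memory.total",
         "--format=csv,noheader"]


def parse_gpu_lines(csv_text: str) -> list[dict]:
    """Turn nvidia-smi CSV rows into dicts (form_factor is a naming heuristic)."""
    gpus = []
    for line in csv_text.strip().splitlines():
        name, driver, memory = (field.strip() for field in line.split(","))
        form_factor = "SXM" if "SXM" in name.upper() else "PCIe/other"
        gpus.append({"name": name, "driver": driver,
                     "memory": memory, "form_factor": form_factor})
    return gpus


def query_gpus() -> list[dict]:
    """Run nvidia-smi if it is installed; return an empty list otherwise."""
    if shutil.which("nvidia-smi") is None:
        return []  # no NVIDIA driver tools on this machine
    out = subprocess.run(QUERY, capture_output=True, text=True, check=True).stdout
    return parse_gpu_lines(out)


if __name__ == "__main__":
    for gpu in query_gpus():
        print(gpu)
```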

Multi-GPU Setup: Scaling Up Performance

If a model does not fit in 80 GB (Llama 3 70B at F16 needs about 141 GB for its weights alone), or you need more throughput, consider a multi-GPU setup. This involves splitting the model across several GPUs that work in parallel. It can significantly boost capacity and performance for large models but requires specialized equipment and expertise.
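One common approach is to split the model's layers across GPUs, with each card holding a contiguous share. The sketch below shows only the bookkeeping: a hypothetical `split_layers` helper assigns layers in proportion to each GPU's free memory. It assumes Llama 3 70B's 80 transformer layers, equally sized layers, and about 141 GB of F16 weights; real inference engines handle this (plus the KV cache and embeddings) for you.

```python
def split_layers(n_layers: int, free_gb: list[float]) -> list[int]:
    """Assign layers to GPUs in proportion to free memory (hypothetical helper)."""
    total = sum(free_gb)
    counts = [int(n_layers * gb / total) for gb in free_gb]  # round down first
    leftover = n_layers - sum(counts)  # fewer than len(free_gb) layers remain
    # Give the remaining layers to the GPUs with the most free memory.
    by_memory = sorted(range(len(free_gb)), key=lambda j: free_gb[j], reverse=True)
    for j in by_memory[:leftover]:
        counts[j] += 1
    return counts


LLAMA3_70B_LAYERS = 80   # Llama 3 70B transformer layer count
F16_WEIGHTS_GB = 141.2   # ASSUMED: 70.6B params x 2 bytes

plan = split_layers(LLAMA3_70B_LAYERS, [80.0, 80.0])
per_layer_gb = F16_WEIGHTS_GB / LLAMA3_70B_LAYERS
for gpu, n in enumerate(plan):
    print(f"GPU {gpu}: {n} layers, ~{n * per_layer_gb:.1f} GB of weights")
```

With two 80 GB cards, each holds 40 layers, roughly 70.6 GB of F16 weights per card, leaving under 10 GB each for the KV cache and buffers.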

FAQ: Unraveling the Mysteries of LLMs and GPUs

1. What are LLMs?

LLMs are powerful artificial intelligence models trained on massive datasets of text and code. They can perform various language-related tasks like text generation, translation, summarization, and code completion.

2. What is quantization?

Quantization is a technique that reduces the memory footprint of LLMs while still maintaining reasonable accuracy. It's like shrinking a large image while retaining the essential details.

3. What is the difference between token generation and token processing?

Token generation refers to the process of creating new text based on the input. Think of it as a language model writing a sentence based on your prompts.

Token processing refers to reading the text you provide: the model computes its internal representation of every prompt token, all in parallel, before it starts generating. Think of it as a language model reading a paragraph before it answers a question about it.

4. Which GPU should I choose for my LLM projects?

The optimal choice depends on your specific needs and budget.

A100 PCIe 80GB: Suitable for general-purpose LLM development, since it fits into standard servers and workstations. Slightly faster token generation on Llama 3 8B in the benchmarks above.

A100 SXM 80GB: Ideal for high-performance computing environments with NVLink interconnects and multi-GPU setups. Higher memory bandwidth and power limit, and about 10% faster token generation on Llama 3 70B Q4KM.

5. Is it possible to run LLMs locally without a powerful GPU?

Yes, but performance will be significantly limited. CPUs can be used, but they are much slower for LLM tasks. Consider using cloud services that offer GPUs for even more powerful capabilities.

Keywords

Large Language Models, LLMs, NVIDIA A100 PCIe 80GB, NVIDIA A100 SXM 80GB, GPU, Token Generation, Token Processing, Quantization, F16, Q4KM, Memory Bandwidth, Driver Compatibility, Multi-GPU, Performance, Benchmark, Llama 3, Local LLM Development, Deep Learning hardware, AI hardware, Server-grade GPU, Workstation GPU, HPC, LLM Inference, GPU Acceleration.