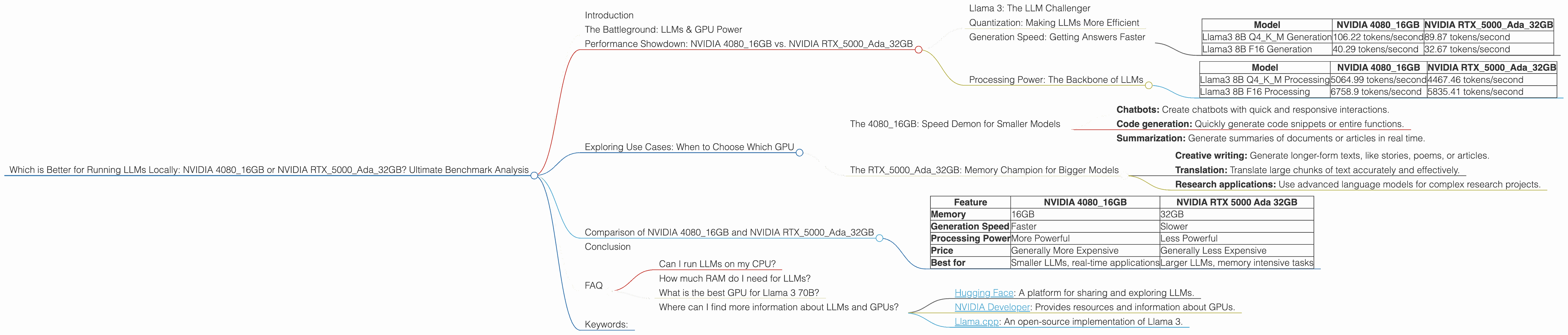

Which Is Better for Running LLMs Locally: NVIDIA RTX 4080 16GB or NVIDIA RTX 5000 Ada 32GB? Ultimate Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is buzzing! Imagine having a super-smart AI on your own computer, generating creative text, answering questions, and even translating languages. This magic comes from powerful GPUs, the specialized processors that excel at the massively parallel calculations LLMs require. But which GPU is best suited for running LLMs locally?

Today, we're diving into the head-to-head showdown between two titans: the NVIDIA GeForce RTX 4080 16GB and the NVIDIA RTX 5000 Ada 32GB. Buckle up, because we're about to explore their performance, weigh their strengths and weaknesses, and ultimately, determine the champion for your LLM adventures!

The Battleground: LLMs & GPU Power

Before we unleash the GPUs, let's understand the players. LLMs are like digital wizards, trained on massive datasets to understand and generate human-like text. But their computational hunger requires a powerful engine: your GPU.

Think of the CPU as a computer's general-purpose brain. A GPU is different: it is designed to run thousands of calculations in parallel. That makes it essential for tasks like image processing, video editing, and, you guessed it, running LLMs, whose core workload is enormous matrix multiplications.

Performance Showdown: NVIDIA RTX 4080 16GB vs. NVIDIA RTX 5000 Ada 32GB

Llama 3: The LLM Challenger

Our benchmark uses a popular and powerful open-source LLM: Llama 3. It is available in 8B and 70B parameter sizes. The speed results below were measured on Llama 3 8B, while the 70B model's memory needs help show which GPU suits larger LLMs.

Note: This comparison focuses on the NVIDIA GeForce RTX 4080 16GB and NVIDIA RTX 5000 Ada 32GB. Data for other models or devices is not included.

Quantization: Making LLMs More Efficient

Quantization is like a diet for LLMs. It stores the model's weights with fewer bits (for example, about 4 bits instead of 16), so the model needs much less memory and usually generates text faster.

Imagine you have a detailed map to a treasure. You could carry the entire map, heavy and cumbersome, or use a simpler, smaller version that still guides you to the treasure. Quantization is like using a simplified map for LLMs, allowing them to work efficiently with only a modest loss of quality.
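To make the idea concrete, here is a toy sketch of block-wise quantization. It assumes the simplest possible scheme: each block of 32 weights is rounded to 4-bit integers in the range -8 to 7 and shares one scale factor, counted as 16 bits of storage. Real formats such as the Q4_K_M type benchmarked below use a more elaborate layout, so treat this as an illustration of the principle, not the actual format. The block size and the helper names (`quantize`, `dequantize`, `bits_per_weight`) are hypothetical.

```python
import random

BLOCK_SIZE = 32  # weights that share one scale factor (assumption for illustration)


def quantize_block(weights):
    """Symmetric 4-bit quantization: map floats to ints in [-8, 7] with one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    q = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, q


def dequantize_block(scale, q):
    """Approximate the original floats from the 4-bit ints and the block scale."""
    return [scale * v for v in q]


def quantize(weights, block_size=BLOCK_SIZE):
    """Split the weights into blocks and quantize each block independently."""
    return [quantize_block(weights[i:i + block_size])
            for i in range(0, len(weights), block_size)]


def dequantize(blocks):
    """Rebuild a flat list of approximate weights from all blocks."""
    restored = []
    for scale, q in blocks:
        restored.extend(dequantize_block(scale, q))
    return restored


def bits_per_weight(block_size=BLOCK_SIZE, scale_bits=16):
    """Storage cost: 4 bits per weight plus one scale (assumed 16 bits) per block."""
    return 4 + scale_bits / block_size


if __name__ == "__main__":
    random.seed(0)
    weights = [random.gauss(0.0, 0.02) for _ in range(4096)]  # stand-in for real weights
    restored = dequantize(quantize(weights))
    max_err = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"bits per weight: {bits_per_weight():.2f} (vs 16 for F16)")
    print(f"largest reconstruction error: {max_err:.5f}")
```

Under these assumptions each weight costs 4.5 bits instead of 16, and no weight is ever off by more than half of its block's scale, which is why the quality loss stays modest.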

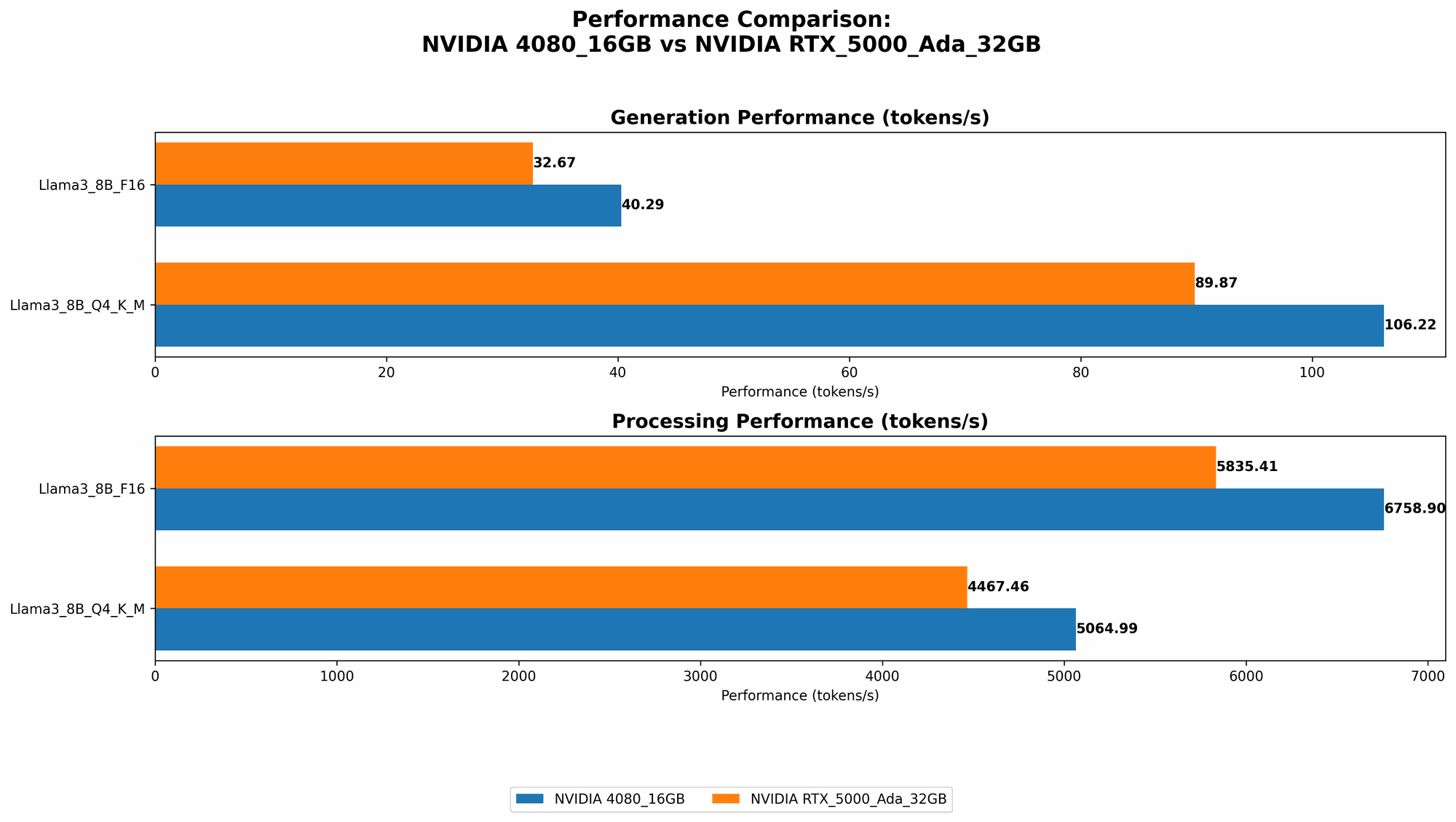

Generation Speed: Getting Answers Faster

One crucial aspect of running LLMs is their speed in generating text. How quickly can they create those witty responses, compelling stories, or accurate translations?

| Benchmark | NVIDIA RTX 4080 16GB | NVIDIA RTX 5000 Ada 32GB |
|---|---|---|
| Llama 3 8B Q4_K_M generation | 106.22 tokens/second | 89.87 tokens/second |
| Llama 3 8B F16 generation | 40.29 tokens/second | 32.67 tokens/second |

Analysis: The NVIDIA GeForce RTX 4080 16GB clearly outperforms the NVIDIA RTX 5000 Ada 32GB in text generation speed. It is about 18% faster with the Q4_K_M model (a mixed 4-bit quantization format from llama.cpp) and about 23% faster with the unquantized F16 model.
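Why does the RTX 4080 lead by this much? One plausible explanation: generating text one token at a time is usually limited by memory bandwidth, because every new token requires reading all of the model's weights from VRAM. The sketch below turns that idea into a rough speed ceiling. It assumes spec-sheet bandwidths of about 716.8 GB/s for the RTX 4080 and 576 GB/s for the RTX 5000 Ada, about 8.03 billion parameters for Llama 3 8B, and an average of roughly 4.9 bits per weight for Q4_K_M. All of these are approximations, not measurements from this benchmark.

```python
# Rough upper bound on single-stream generation speed, assuming decoding is
# memory-bandwidth-bound: each new token streams all model weights from VRAM once.

PARAMS_8B = 8.03e9  # approximate parameter count of Llama 3 8B (assumption)

# Assumed spec-sheet memory bandwidth, in bytes/second
BANDWIDTH = {
    "RTX 4080 16GB": 716.8e9,
    "RTX 5000 Ada 32GB": 576.0e9,
}

# Average bits per weight; the Q4_K_M figure is an approximation (assumption)
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.9}

# Measured generation speeds from the table above, in tokens/second
MEASURED = {
    ("RTX 4080 16GB", "Q4_K_M"): 106.22,
    ("RTX 4080 16GB", "F16"): 40.29,
    ("RTX 5000 Ada 32GB", "Q4_K_M"): 89.87,
    ("RTX 5000 Ada 32GB", "F16"): 32.67,
}


def ceiling_tokens_per_second(bandwidth, params, bits_per_weight):
    """Tokens/second if reading the weights were the only cost of each token."""
    weight_bytes = params * bits_per_weight / 8
    return bandwidth / weight_bytes


if __name__ == "__main__":
    for (gpu, quant), measured in MEASURED.items():
        ceiling = ceiling_tokens_per_second(BANDWIDTH[gpu], PARAMS_8B, BITS_PER_WEIGHT[quant])
        print(f"{gpu:18} {quant:7} ceiling ~{ceiling:6.1f} tok/s, "
              f"measured {measured:6.2f} ({measured / ceiling:.0%} of ceiling)")
    ratio = BANDWIDTH["RTX 4080 16GB"] / BANDWIDTH["RTX 5000 Ada 32GB"]
    print(f"bandwidth ratio: {ratio:.3f}")
```

If those assumptions hold, the RTX 4080's roughly 24% bandwidth advantage lines up closely with its 23% lead in F16, and both cards reach about 90% of their F16 ceilings. The quantized runs land further below their ceilings, presumably because other overheads matter more once the weights shrink.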

However, the NVIDIA GeForce RTX 4080 has only 16GB of memory, while the NVIDIA RTX 5000 Ada has 32GB. That difference matters for larger models such as Llama 3 70B: even at 4-bit quantization its weights take over 40GB, so neither card can hold it entirely, but the 32GB card keeps twice as much of it in fast GPU memory rather than offloading it to slower system RAM.
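A quick way to check which models fit is to estimate the size of the weights: parameter count times bits per weight, divided by 8. This sketch assumes approximate parameter counts (8.03 billion for Llama 3 8B, 70.6 billion for 70B) and a rough 4.9 bits per weight for Q4_K_M. It counts weights only, so a real run also needs headroom for the context and runtime buffers.

```python
GIB = 1024 ** 3

# Approximate parameter counts (assumption)
MODELS = {"Llama 3 8B": 8.03e9, "Llama 3 70B": 70.6e9}
# Average bits per weight; the Q4_K_M value is a rough approximation (assumption)
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.9}
# Advertised VRAM, treated as GiB
VRAM_GIB = {"RTX 4080 16GB": 16, "RTX 5000 Ada 32GB": 32}


def weight_gib(params, bits_per_weight):
    """Memory for the weights alone, in GiB; excludes the KV cache and runtime buffers."""
    return params * bits_per_weight / 8 / GIB


if __name__ == "__main__":
    for model, params in MODELS.items():
        for quant, bpw in BITS_PER_WEIGHT.items():
            need = weight_gib(params, bpw)
            verdicts = ", ".join(
                f"{gpu}: {'fits' if need < vram else 'too large'}"
                for gpu, vram in VRAM_GIB.items()
            )
            print(f"{model} {quant}: ~{need:.1f} GiB of weights -> {verdicts}")
```

Under these assumptions, Llama 3 8B fits on either card even in F16 (about 15 GiB, leaving little room on the 16GB card), while the 70B model needs about 40 GiB at Q4_K_M and exceeds both.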

Processing Power: The Backbone of LLMs

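Before an LLM can answer, it has to read your prompt. Prompt processing speed measures how many input tokens per second the GPU can ingest, which determines how long you wait before the first word of the reply appears. Here's how the two GPUs stack up: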

| Benchmark | NVIDIA RTX 4080 16GB | NVIDIA RTX 5000 Ada 32GB |
|---|---|---|
| Llama 3 8B Q4_K_M processing | 5064.99 tokens/second | 4467.46 tokens/second |
| Llama 3 8B F16 processing | 6758.9 tokens/second | 5835.41 tokens/second |

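Analysis: As with text generation, the NVIDIA GeForce RTX 4080 16GB comes out ahead, processing prompts about 13% faster with Q4_K_M and about 16% faster with F16. Interestingly, both cards process prompts faster in F16 than in Q4_K_M, likely because this stage is limited mainly by raw compute, where unpacking quantized weights adds work instead of saving time.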
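The percentage leads quoted for both benchmarks are simple ratios of the measured values. This small sketch reproduces them from the two tables; `advantage_percent` is a hypothetical helper name.

```python
# Benchmark figures from the generation and processing tables (tokens/second),
# stored as (RTX 4080 16GB, RTX 5000 Ada 32GB)
RESULTS = {
    "Q4_K_M generation": (106.22, 89.87),
    "F16 generation": (40.29, 32.67),
    "Q4_K_M processing": (5064.99, 4467.46),
    "F16 processing": (6758.9, 5835.41),
}


def advantage_percent(faster, slower):
    """How much faster the first result is than the second, in percent."""
    return (faster / slower - 1) * 100


if __name__ == "__main__":
    for name, (rtx4080, rtx5000ada) in RESULTS.items():
        print(f"{name:18} RTX 4080 ahead by {advantage_percent(rtx4080, rtx5000ada):4.1f}%")
```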

Exploring Use Cases: When to Choose Which GPU

The RTX 4080 16GB: Speed Demon for Smaller Models

The NVIDIA GeForce RTX 4080 16GB is the clear winner in terms of speed for smaller LLMs like Llama 3 8B. Its fast processing and generation capabilities make it ideal for applications requiring real-time interaction:

- Chatbots: Create chatbots with quick and responsive interactions.

- Code generation: Quickly generate code snippets or entire functions.

- Summarization: Generate summaries of documents or articles in real time.
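To see what these speeds mean for a chatbot, you can combine both benchmarks into a rough response time: the prompt is processed first, then the reply is generated token by token. This sketch assumes a hypothetical 1,000-token prompt and 300-token reply on Llama 3 8B Q4_K_M and ignores everything except the two measured rates; real throughput also drops as the conversation grows.

```python
# Hypothetical chat turn; these lengths are illustrative assumptions
PROMPT_TOKENS = 1000
REPLY_TOKENS = 300

# Llama 3 8B Q4_K_M rates from the benchmark tables, in tokens/second
RATES = {
    "RTX 4080 16GB": {"processing": 5064.99, "generation": 106.22},
    "RTX 5000 Ada 32GB": {"processing": 4467.46, "generation": 89.87},
}


def turn_latency(prompt_tokens, reply_tokens, processing_rate, generation_rate):
    """Return (seconds until generation starts, total seconds) for one chat turn."""
    prompt_seconds = prompt_tokens / processing_rate
    total_seconds = prompt_seconds + reply_tokens / generation_rate
    return prompt_seconds, total_seconds


if __name__ == "__main__":
    for gpu, rate in RATES.items():
        first, total = turn_latency(PROMPT_TOKENS, REPLY_TOKENS,
                                    rate["processing"], rate["generation"])
        print(f"{gpu}: prompt read in {first:.2f} s, full reply in {total:.2f} s")
```

With those assumptions the RTX 4080 finishes the reply in about 3.0 seconds versus about 3.6 seconds on the RTX 5000 Ada, and most of that time is spent generating, not reading the prompt.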

The RTX 5000 Ada 32GB: Memory Champion for Bigger Models

The NVIDIA RTX 5000 Ada 32GB shines at memory-intensive tasks. Its 32GB of VRAM makes it the better choice of the two for larger LLMs and for long inputs and outputs, which need extra memory to hold the model's context:

- Creative writing: Generate longer-form texts, like stories, poems, or articles.

- Translation: Translate large chunks of text accurately and effectively.

- Research applications: Use advanced language models for complex research projects.
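Long documents and long outputs cost memory because the model keeps a key/value (KV) cache for every token in its context. This sketch estimates that cache, assuming the published Llama 3 architecture values (32 layers for 8B and 80 for 70B, each with 8 key/value heads of dimension 128) and an unquantized F16 cache. `ModelShape` and `kv_cache_bytes` are hypothetical helpers.

```python
from dataclasses import dataclass

GIB = 1024 ** 3


@dataclass(frozen=True)
class ModelShape:
    layers: int
    kv_heads: int  # key/value heads (Llama 3 uses grouped-query attention)
    head_dim: int


# Assumed Llama 3 architecture values
LLAMA3_8B = ModelShape(layers=32, kv_heads=8, head_dim=128)
LLAMA3_70B = ModelShape(layers=80, kv_heads=8, head_dim=128)


def kv_cache_bytes(shape, context_tokens, bytes_per_element=2):
    """Memory for the K and V tensors of every layer for every cached token (F16 by default)."""
    per_token = 2 * shape.layers * shape.kv_heads * shape.head_dim * bytes_per_element
    return per_token * context_tokens


if __name__ == "__main__":
    for name, shape in [("Llama 3 8B", LLAMA3_8B), ("Llama 3 70B", LLAMA3_70B)]:
        for tokens in (2048, 8192):
            print(f"{name}, {tokens} tokens: {kv_cache_bytes(shape, tokens) / GIB:.2f} GiB")
```

Under these assumptions an 8,192-token context costs 1 GiB for Llama 3 8B and 2.5 GiB for 70B, on top of the weights, which is where the extra 16GB on the RTX 5000 Ada pays off.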

Comparison of NVIDIA RTX 4080 16GB and NVIDIA RTX 5000 Ada 32GB

Here's a concise breakdown of their strengths and weaknesses:

| Feature | NVIDIA RTX 4080 16GB | NVIDIA RTX 5000 Ada 32GB |
|---|---|---|
| Memory | 16GB | 32GB |
| Generation speed (Llama 3 8B) | Faster (about 18% to 23%) | Slower |
| Prompt processing (Llama 3 8B) | Faster (about 13% to 16%) | Slower |
| Price | Generally less expensive (consumer card) | Generally more expensive (workstation card) |
| Best for | Smaller LLMs, real-time applications | Larger LLMs, memory-intensive tasks |

Conclusion

The decision between the NVIDIA GeForce RTX 4080 16GB and NVIDIA RTX 5000 Ada 32GB boils down to your specific needs. If speed and responsiveness are paramount, the 4080 16GB is your champion. If handling large LLMs is your priority, the RTX 5000 Ada 32GB excels.

Remember, these are just two options, and the LLM landscape is constantly evolving. Stay tuned for updates and benchmarks for new models and GPUs!

FAQ

Can I run LLMs on my CPU?

While possible, CPUs are not as efficient as GPUs for running LLMs. The massive parallel processing power of GPUs significantly accelerates LLM operations.

How much RAM do I need for LLMs?

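It depends on the size of the model and on where it runs. On a GPU, what matters most is VRAM: the weights take roughly the parameter count times the bytes per weight (about 16GB for Llama 3 8B in F16, under 5GB at Q4_K_M), plus room for the context. System RAM matters mainly when part of the model is offloaded to the CPU; consider at least 16GB of RAM for good performance.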

What is the best GPU for Llama 3 70B?

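Llama 3 70B needs far more memory than the 8B model: over 40GB for the weights even at 4-bit quantization. Of these two cards, the NVIDIA RTX 5000 Ada 32GB is the better fit, since it can hold twice as much of the model in VRAM, but running it still requires either more aggressive quantization or offloading some layers to system RAM.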

Where can I find more information about LLMs and GPUs?

There are many online resources, such as:

- Hugging Face: A platform for sharing and exploring LLMs.

- NVIDIA Developer: Provides resources and information about GPUs.

- llama.cpp: An open-source C/C++ inference engine for running LLMs such as Llama 3 locally; Q4_K_M and F16 are among the model formats it supports.
