Which is Better for Running LLMs Locally: NVIDIA 4080 16GB or NVIDIA A100 PCIe 80GB? Ultimate Benchmark Analysis

Introduction

Large Language Models (LLMs) are revolutionizing the way we interact with technology. From generating creative text formats to translating languages, these powerful AI models are making waves across industries. But with their sheer size and computational demands, running LLMs locally has been a challenge. Fortunately, advancements in GPU technology are making local LLM execution more feasible.

This article dives deep into the performance of two popular GPU options for running LLMs: the NVIDIA 4080 16GB and the NVIDIA A100 PCIe 80GB. We'll analyze their strengths and weaknesses, focusing on token generation and processing speed for popular models like Llama 3 8B and 70B. Buckle up, because it's about to get technical!

Performance Comparison: NVIDIA 4080 16GB vs. NVIDIA A100 PCIe 80GB

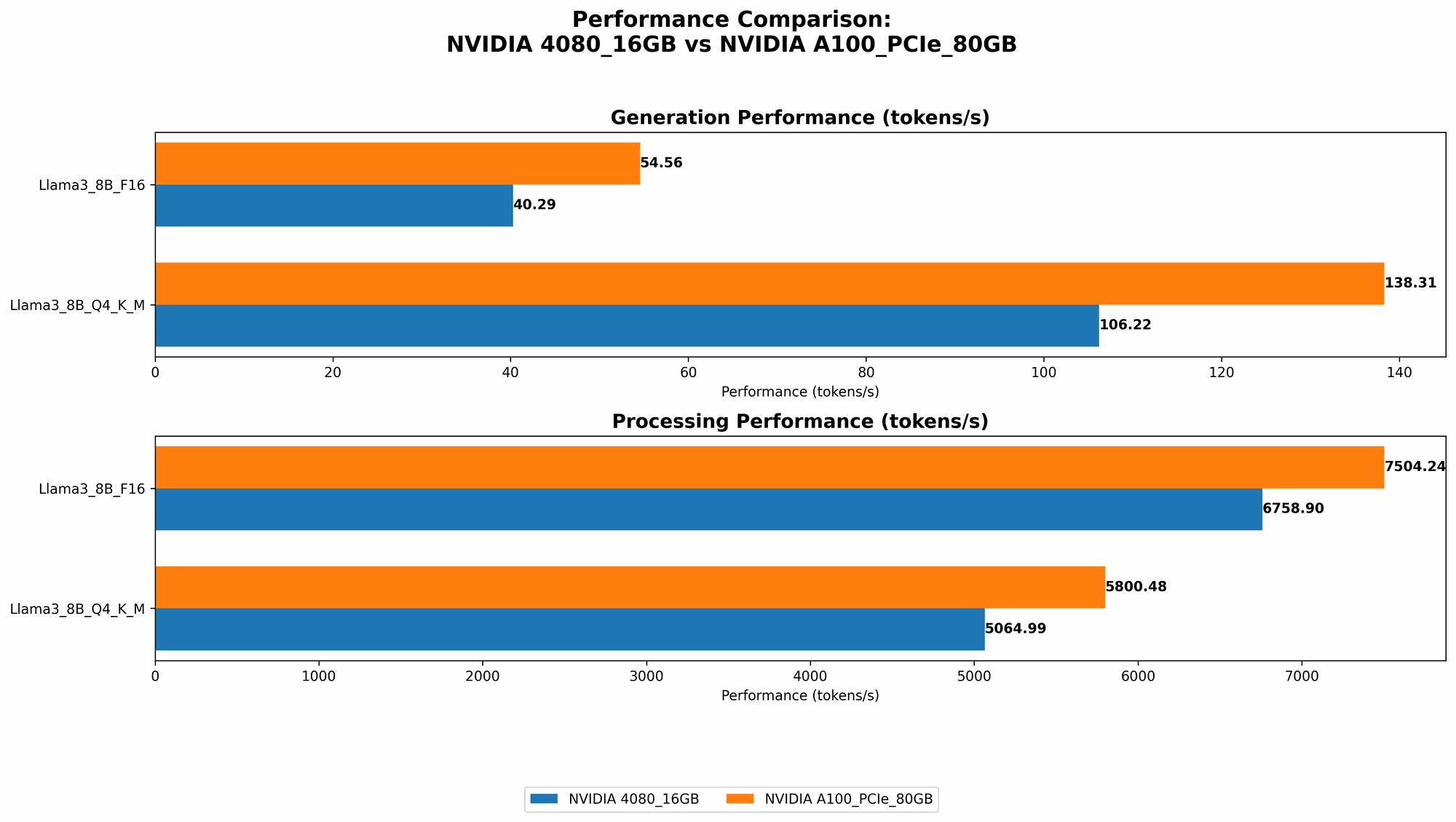

Llama 3 8B: Token Generation Speed

It's time for the showdown! Let's see how these GPUs perform with the Llama 3 8B model.

| Model | NVIDIA 4080 16GB (Tokens/second) | NVIDIA A100 PCIe 80GB (Tokens/second) |
|---|---|---|
| Llama 3 8B Q4_K_M, Generation | 106.22 | 138.31 |
| Llama 3 8B F16, Generation | 40.29 | 54.56 |

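The A100 PCIe 80GB takes the crown for token generation speed at both precision levels, running about 30% faster at Q4_K_M and about 35% faster at F16. The gap is best explained by memory rather than raw compute:

- CUDA Cores: Core count is not the explanation. The 4080 actually has more CUDA cores than the A100 (9,728 versus 6,912), yet it still generates tokens more slowly.

- Higher Memory Bandwidth: Token generation is largely limited by how quickly the model's weights can be read from memory for each new token. The A100's HBM2e memory offers well over twice the bandwidth of the 4080's GDDR6X, and that bandwidth, not the 80GB capacity itself, is what speeds up generation.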
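The bandwidth argument can be sanity-checked with a rough ceiling: if every weight must be read once per generated token, tokens/second cannot exceed memory bandwidth divided by model size. The sketch below is only an estimate. The bandwidth figures (716.8 GB/s and 1,935 GB/s) and the 8.03 billion parameter count are assumed nominal values, not measurements from this benchmark.

```python
# Rough bandwidth-bound ceiling for single-stream token generation.
# ASSUMPTIONS (not from the benchmark): nominal memory bandwidths and the
# Llama 3 8B parameter count. Real throughput is lower because of KV-cache
# reads, kernel overhead, and imperfect bandwidth utilization.

BANDWIDTH_GB_S = {
    "4080 16GB": 716.8,        # assumed nominal spec
    "A100 PCIe 80GB": 1935.0,  # assumed nominal spec
}
MEASURED_F16_TOK_S = {         # F16 generation row of the table above
    "4080 16GB": 40.29,
    "A100 PCIe 80GB": 54.56,
}
PARAMS = 8.03e9                # assumed parameter count for Llama 3 8B
BYTES_PER_PARAM_F16 = 2


def generation_ceiling(bandwidth_gb_s: float, weight_bytes: float) -> float:
    """Tokens/second if every weight is read exactly once per token."""
    return bandwidth_gb_s * 1e9 / weight_bytes


weight_bytes = PARAMS * BYTES_PER_PARAM_F16
ceilings = {
    gpu: generation_ceiling(bw, weight_bytes)
    for gpu, bw in BANDWIDTH_GB_S.items()
}

for gpu, ceiling in ceilings.items():
    measured = MEASURED_F16_TOK_S[gpu]
    print(f"{gpu}: ceiling ~{ceiling:.0f} tok/s, measured {measured} tok/s "
          f"({measured / ceiling:.0%} of ceiling)")
```

Under these assumptions the 4080 runs close to its ceiling while the A100 sits well below its own. That would be consistent with the A100 leading by only about a third despite having far more bandwidth.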

Llama 3 8B: Processing Speed

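Now let's shift gears to processing speed. This metric represents how quickly the GPU works through the input prompt before it starts generating. Prompt tokens are processed in parallel, while output tokens are produced one at a time, which is why these numbers are far higher than the generation figures.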

| Model | NVIDIA 4080 16GB (Tokens/second) | NVIDIA A100 PCIe 80GB (Tokens/second) |
|---|---|---|
| Llama 3 8B Q4_K_M, Processing | 5064.99 | 5800.48 |
| Llama 3 8B F16, Processing | 6758.9 | 7504.24 |

Similar to token generation, the A100 PCIe 80GB leads in processing speed, though by a smaller margin: about 15% at Q4_K_M and about 11% at F16. Note that on both cards F16 processes prompts faster than Q4_K_M, the reverse of the generation results.
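To see what the two metrics mean together, here is a small sketch that estimates the time for one request as prompt time plus generation time. It assumes "processing" means prompt processing and that the two phases run back to back. The 1,024-token prompt and 256-token reply are a hypothetical workload chosen for illustration.

```python
# Throughput figures (tokens/second) copied from the Llama 3 8B tables above.
SPEEDS = {
    ("4080 16GB", "Q4_K_M"): {"processing": 5064.99, "generation": 106.22},
    ("4080 16GB", "F16"): {"processing": 6758.9, "generation": 40.29},
    ("A100 PCIe 80GB", "Q4_K_M"): {"processing": 5800.48, "generation": 138.31},
    ("A100 PCIe 80GB", "F16"): {"processing": 7504.24, "generation": 54.56},
}


def request_seconds(prompt_tokens, output_tokens, processing, generation):
    """Estimated wall time: prompt phase followed by generation phase."""
    return prompt_tokens / processing + output_tokens / generation


# Hypothetical workload: 1,024-token prompt, 256-token reply.
estimates = {
    key: request_seconds(1024, 256, **speed)
    for key, speed in SPEEDS.items()
}

for (gpu, quant), seconds in estimates.items():
    print(f"{gpu} {quant}: ~{seconds:.2f} s")
```

For a workload like this, generation time dominates the total on both cards, so the generation table matters more than the processing table unless prompts are very long and replies very short.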

Llama 3 70B: Token Generation Speed

Let's ramp up the difficulty and see how these GPUs handle the larger Llama 3 70B model.

| Model | NVIDIA 4080 16GB (Tokens/second) | NVIDIA A100 PCIe 80GB (Tokens/second) |
|---|---|---|
| Llama 3 70B Q4_K_M, Generation | n/a | 22.11 |
| Llama 3 70B F16, Generation | n/a | n/a |

Here's the catch: the 4080 16GB simply doesn't have enough memory to hold the Llama 3 70B model, even in its quantized Q4_K_M form, whose weights alone take roughly 40GB. This limitation makes the 4080 unsuitable for running large LLMs like the 70B model.

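The A100 PCIe 80GB, on the other hand, fits the Q4_K_M version of the 70B model and achieves a respectable 22.11 tokens/second. The F16 version has no result on either card: at 16 bits per weight, a 70B model needs roughly 140GB for its weights alone, more than even the A100's 80GB.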
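The memory limits described here follow from simple arithmetic: weight size is roughly parameter count times bits per weight. The sketch below checks which combinations fit on each card. The parameter counts, the 4.85 bits-per-weight average for Q4_K_M, and the 0.5 GiB overhead allowance are all assumptions made for illustration, not values from the benchmark.

```python
GIB = 2**30

# ASSUMPTIONS: approximate parameter counts and average bits per weight.
PARAMS = {"Llama 3 8B": 8.03e9, "Llama 3 70B": 70.6e9}
BITS_PER_WEIGHT = {"Q4_K_M": 4.85, "F16": 16.0}
VRAM_GIB = {"4080 16GB": 16, "A100 PCIe 80GB": 80}
OVERHEAD_GIB = 0.5  # hypothetical allowance for KV cache and runtime buffers


def weights_gib(params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GiB."""
    return params * bits_per_weight / 8 / GIB


def fits(model: str, quant: str, gpu: str) -> bool:
    """True if weights plus the assumed overhead fit in the card's memory."""
    needed = weights_gib(PARAMS[model], BITS_PER_WEIGHT[quant]) + OVERHEAD_GIB
    return needed <= VRAM_GIB[gpu]


for model in PARAMS:
    for quant in BITS_PER_WEIGHT:
        size = weights_gib(PARAMS[model], BITS_PER_WEIGHT[quant])
        verdict = ", ".join(
            f"{gpu}: {'fits' if fits(model, quant, gpu) else 'too large'}"
            for gpu in VRAM_GIB
        )
        print(f"{model} {quant}: ~{size:.1f} GiB of weights ({verdict})")
```

With these assumptions the fit check reproduces the pattern in the tables: both 8B variants fit on both cards, 70B at Q4_K_M fits only on the A100, and 70B at F16 fits on neither. Long contexts need a larger overhead allowance than the one used here.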

Llama 3 70B: Processing Speed

| Model | NVIDIA 4080 16GB (Tokens/second) | NVIDIA A100 PCIe 80GB (Tokens/second) |
|---|---|---|
| Llama 3 70B Q4_K_M, Processing | n/a | 726.65 |
| Llama 3 70B F16, Processing | n/a | n/a |

The 4080 16GB, once again, falls short due to memory constraints. The A100 PCIe 80GB demonstrates its capabilities, achieving a processing speed of 726.65 tokens/second for the Llama 3 70B model in Q4_K_M format.

Performance Analysis: A Detailed Breakdown

NVIDIA 4080 16GB: Strengths and Weaknesses

Strengths:

- Solid Value for Smaller Models: The 4080 16GB is the far cheaper option for running smaller LLMs like Llama 3 8B. At Q4_K_M it generates 106.22 tokens/second, roughly 77% of the A100's rate.

- Power Efficiency: The 4080 16GB offers a balanced performance-to-power ratio for a desktop card, although power draw was not part of these benchmarks.

- Accessibility: The 4080 16GB is a consumer card that is widely available and easier to procure than the A100.

Weaknesses:

- Memory Limitations: With 16GB of memory, the 4080 cannot hold larger LLMs like Llama 3 70B, even at Q4_K_M, which restricts it to smaller models.

NVIDIA A100 PCIe 80GB: Strengths and Weaknesses

Strengths:

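- Higher Performance: The A100 PCIe 80GB leads in every test that has results on both cards: about 30% to 35% faster token generation and about 11% to 15% faster processing on Llama 3 8B. It is also the only one of the two cards that produced a Llama 3 70B result.

- Large Memory Capacity: The A100's 80GB of memory holds Llama 3 70B at Q4_K_M with room to spare. It is not unlimited, though: the 70B model at F16 has no result on this card either.

- Hardware Acceleration: The A100 uses Tensor Cores to accelerate the matrix math at the heart of LLM inference. The 4080 has Tensor Cores as well, so this is a shared feature rather than a differentiator.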

Weaknesses:

- Cost: The A100 PCIe 80GB is significantly more expensive than the 4080 16GB, making it a less attractive option for budget-conscious users.

- Availability: The A100 can be difficult to obtain due to its high demand and limited supply.

Practical Recommendations: Choosing the Right GPU for Your Needs

- Budget-Friendly Option for Smaller Models: If you're primarily working with smaller LLMs like Llama 3 8B and have a limited budget, the NVIDIA 4080 16GB is a viable choice.

- Powerhouse for Larger Models: If you plan to work with larger LLMs like Llama 3 70B, or need the best performance possible, the NVIDIA A100 PCIe 80GB is the clear winner. Of the two cards, it is the only one that can run the 70B model at all.

Quantization: A Key Factor to Consider

Quantization is a technique used to reduce the size of LLMs by storing their weights in smaller data types instead of the 16-bit or 32-bit floating-point numbers used in training. In the tables above, F16 is the 16-bit half-precision version of the model, while Q4_K_M is a quantized format that stores each weight in roughly 4 to 5 bits. This allows the model to fit in less memory, enabling it to run on GPUs with limited memory capacity.

Consider this analogy: imagine representing a mountain range with different levels of detail. A detailed model (full-precision floating point) uses many points to capture all the mountains' nuances. A quantized model (like Q4_K_M) uses fewer points, representing the mountain range with less precision, but still conveying the general shape.

Quantization introduces some accuracy trade-offs, but it sharply reduces memory requirements and speeds up token generation: on Llama 3 8B, Q4_K_M generates about 2.5 to 2.6 times faster than F16 on both cards. Prompt processing is the exception in these results, where F16 is faster. This makes quantization a valuable tool for running LLMs on GPUs with limited memory.
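To make the idea concrete, here is a minimal sketch of block-wise 4-bit quantization. It uses plain symmetric absmax scaling, which is a simplified stand-in and not the actual Q4_K_M scheme. The function names and sample weights are hypothetical.

```python
def quantize_block(values, bits=4):
    """Symmetric absmax quantization of one block to small signed integers."""
    qmax = 2 ** (bits - 1) - 1                      # 7 for 4-bit codes
    # One shared scale per block; fall back to 1.0 for an all-zero block.
    scale = max(abs(v) for v in values) / qmax or 1.0
    codes = [round(v / scale) for v in values]      # integers in [-qmax, qmax]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate weights from the shared scale and integer codes."""
    return [c * scale for c in codes]


# Hypothetical block of weights, for illustration only.
weights = [0.12, -0.53, 0.31, 0.07, -0.22, 0.49, -0.05, 0.18]

scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:    ", codes)
print("restored: ", [round(r, 3) for r in restored])
print(f"max error: {max_error:.4f} (bounded by scale / 2 = {scale / 2:.4f})")
```

Each weight is stored as a 4-bit integer plus one shared scale per block, so the rounding error per weight is at most half the scale. Production formats refine this basic idea with larger blocks and more careful scale handling.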

FAQ: Answers to Your Burning Questions

Q: How does quantization affect LLM performance?

- A: Quantization can decrease the model's accuracy slightly, but it reduces memory requirements and, in the results above, speeds up token generation considerably. For many use cases, the trade-off is acceptable.

Q: What is the recommended quantization level for each LLM?

- A: The ideal quantization level depends on the specific LLM and your desired accuracy. Experimentation is often required to find the optimal balance. Many developers recommend Q4_K_M as a good starting point for balanced performance and accuracy.

Q: Can I run LLMs on my CPU?

- A: While technically possible, CPUs are far less efficient than GPUs for running LLMs. The parallel processing capabilities of GPUs are essential for achieving decent performance.

Q: What are the benefits of running LLMs locally?

- A: Running LLMs locally offers advantages like faster response times, offline capabilities, and greater privacy.

Q: What other factors should I consider when choosing a GPU?

- A: Beyond performance, consider factors such as power consumption, noise levels, and compatibility with your system.

Keywords: NVIDIA 4080 16GB, NVIDIA A100 PCIe 80GB, LLM, Large Language Models, Llama 3, Token Generation, Processing Speed, Quantization, Q4_K_M, F16, GPU, GPU Benchmark, Local Inference, LLMs on GPUs, LLMs on NVIDIA, LLM Speed, LLM Performance.