Which Is Better for Running LLMs Locally: NVIDIA 4080 16GB or NVIDIA 4090 24GB? Ultimate Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is booming, and with it comes the demand for powerful hardware capable of running these complex models locally. If you're a developer, researcher, or simply an enthusiast looking to experiment with LLMs on your own machine, choosing the right GPU can be a daunting task.

This article compares two popular NVIDIA GPUs head to head, the GeForce RTX 4080 16GB and the GeForce RTX 4090 24GB, focusing on how well they run LLMs. We'll analyze their strengths and weaknesses, identify the best use cases for each, and provide practical guidance for your decision.

Comparing the NVIDIA GeForce RTX 4080 16GB and NVIDIA GeForce RTX 4090 24GB for LLM Inference

This comparison focuses on the primary task of LLM inference, which involves feeding a prompt to the LLM and receiving a generated text response. We'll evaluate the two cards based on their performance with Llama 3 models – a popular family of open-source LLMs.

Understanding Key Performance Factors

Before diving into the numbers, let's clarify some key terms:

- Quantization: Quantization is a technique used to reduce the size of LLM models without sacrificing much accuracy. It's like compressing an image – you lose some detail, but the overall picture remains recognizable.

- Q4_K_M (sometimes written without the underscores, as Q4KM): This is a specific quantization format from llama.cpp. Q4 stands for 4-bit quantization, a common way to reduce memory footprint and speed up text generation.

- F16: Weights stored as half-precision (16-bit) floating-point numbers. In this comparison it is the unquantized baseline: it needs half the memory of full 32-bit precision, but several times the memory of a 4-bit quantized model.

- Generation: The rate, in tokens per second, at which the model produces new output tokens.

- Processing: The rate, in tokens per second, at which the model reads the input prompt before it starts generating.

Remember: While we focus on Llama 3 models here, the key performance factors and general conclusions will apply to other LLMs as well.

Performance Analysis: NVIDIA GeForce RTX 4080 16GB vs. NVIDIA GeForce RTX 4090 24GB

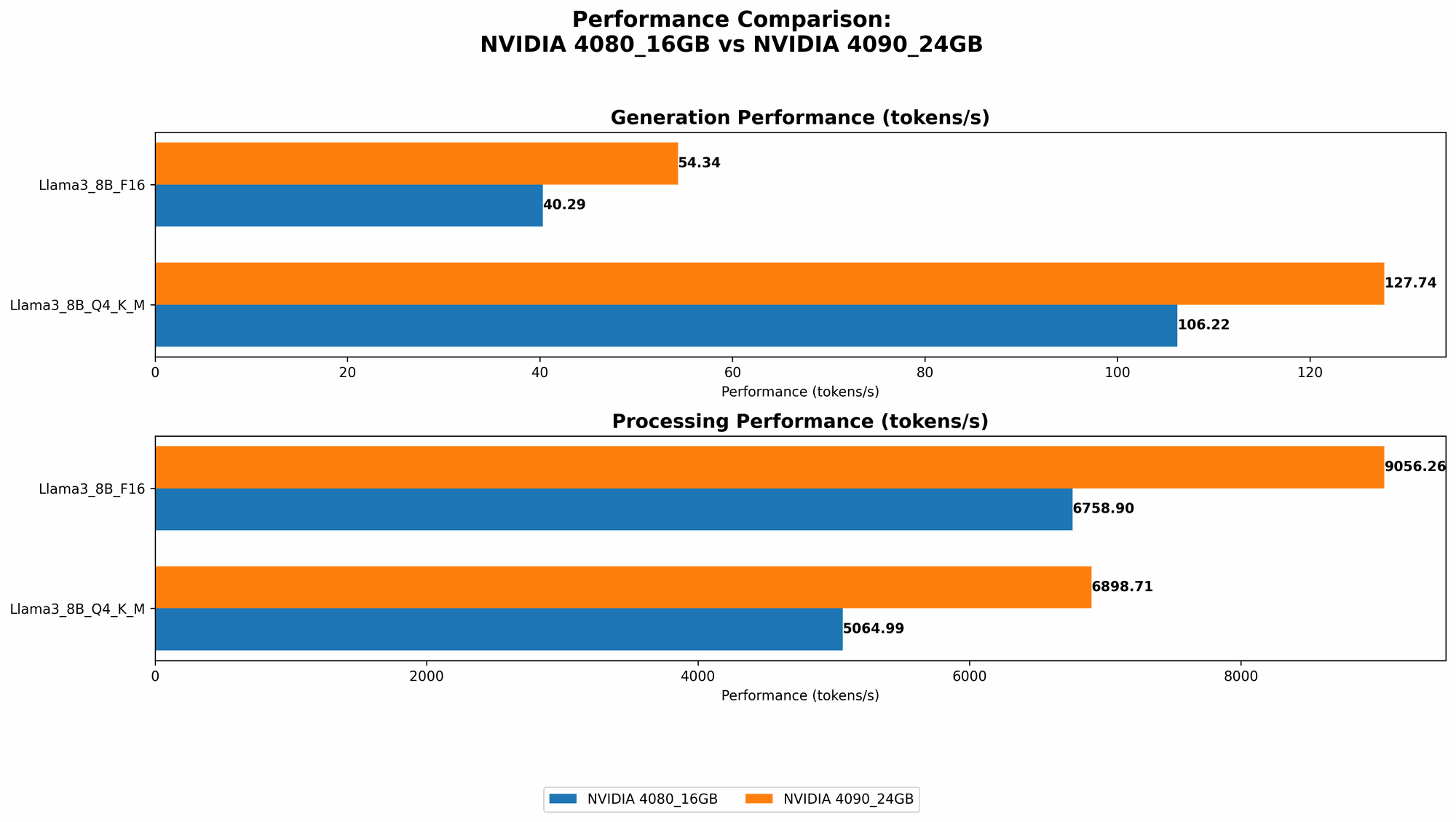

We'll compare the GPUs by token speed, measured in tokens per second: how quickly each card handles tokens, the building blocks of text that an LLM reads and writes. Higher token speeds mean faster LLM inference.

Llama 3 8B Token Speed Comparison

| Model | GPU | Generation (tokens/second) | Processing (tokens/second) |
|---|---|---|---|
| Llama 3 8B Q4_K_M | RTX 4080 16GB | 106.22 | 5064.99 |
| Llama 3 8B Q4_K_M | RTX 4090 24GB | 127.74 | 6898.71 |
| Llama 3 8B F16 | RTX 4080 16GB | 40.29 | 6758.90 |
| Llama 3 8B F16 | RTX 4090 24GB | 54.34 | 9056.26 |

Key Observations:

- Faster Generation: The 4090 outperforms the 4080 in both configurations, generating text about 20% faster with Q4_K_M (127.74 vs. 106.22 tokens/second) and about 35% faster with F16 (54.34 vs. 40.29). The larger F16 gap is likely due to memory bandwidth. Generating each token requires reading the model weights from VRAM, F16 weights are several times larger than 4-bit weights, and the 4090's memory bandwidth is roughly 40% higher than the 4080's (about 1,008 GB/s vs. 717 GB/s).

- Processing Advantage: The 4090 also processes prompts faster in both configurations, by about 36% with Q4_K_M and about 34% with F16.
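The relative gaps can be recomputed directly from the table. The snippet below is a small sketch for doing that; the dictionary layout and the function names `speedup_percent` and `summarize` are illustrative choices, not part of any benchmark tool.

```python
# Throughput in tokens/second, copied from the Llama 3 8B table above.
# Keys are (model format, phase); this layout is just an illustrative choice.
BENCHMARKS = {
    ("Q4_K_M", "generation"): {"4080": 106.22, "4090": 127.74},
    ("Q4_K_M", "processing"): {"4080": 5064.99, "4090": 6898.71},
    ("F16", "generation"): {"4080": 40.29, "4090": 54.34},
    ("F16", "processing"): {"4080": 6758.90, "4090": 9056.26},
}


def speedup_percent(baseline: float, candidate: float) -> float:
    """Return how much faster `candidate` is than `baseline`, in percent."""
    return (candidate / baseline - 1.0) * 100.0


def summarize(benchmarks: dict) -> dict:
    """Compute the 4090's advantage over the 4080 for each format and phase."""
    return {
        key: speedup_percent(rates["4080"], rates["4090"])
        for key, rates in benchmarks.items()
    }


if __name__ == "__main__":
    for (fmt, phase), pct in summarize(BENCHMARKS).items():
        print(f"{fmt:<7} {phase:<11} 4090 is {pct:.1f}% faster")
```

Run as a script, this prints one line per row pair. The gaps come out at roughly 20% for Q4_K_M generation and roughly 34% to 36% for the other three cases.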

Practical Implications:

On Llama 3 8B, the 4090 is roughly 20% to 36% faster in both text generation and prompt processing. In practice this means a shorter wait before the first token on long prompts and quicker responses overall. Whether the gap widens with larger models cannot be determined from this data.
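To turn token speeds into a rough wait time, a simple model is to add the time spent reading the prompt to the time spent generating the reply. The sketch below does this with the Q4_K_M rates from the table. The request size (2,000 prompt tokens, 500 output tokens) is a made-up example, and the model assumes both rates stay constant, which ignores model loading, sampling overhead, and any slowdown at longer context lengths.

```python
def response_time_seconds(
    prompt_tokens: int,
    output_tokens: int,
    processing_tps: float,
    generation_tps: float,
) -> float:
    """Estimate total time as prompt-processing time plus generation time."""
    prompt_time = prompt_tokens / processing_tps
    generation_time = output_tokens / generation_tps
    return prompt_time + generation_time


# Llama 3 8B Q4_K_M rates (tokens/second) from the table above.
RATES_Q4 = {
    "RTX 4080": {"processing": 5064.99, "generation": 106.22},
    "RTX 4090": {"processing": 6898.71, "generation": 127.74},
}

if __name__ == "__main__":
    # Hypothetical request size, chosen only for illustration.
    PROMPT_TOKENS = 2000
    OUTPUT_TOKENS = 500
    for gpu, rates in RATES_Q4.items():
        seconds = response_time_seconds(
            PROMPT_TOKENS, OUTPUT_TOKENS, rates["processing"], rates["generation"]
        )
        print(f"{gpu}: about {seconds:.1f} s")
```

Under these assumptions the estimate is about 5.1 seconds on the 4080 and about 4.2 seconds on the 4090. Nearly all of that is generation time, so for chat-style use the generation rate matters more than the processing rate.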

Llama 3 70B Token Speed: Missing Data & Insights

Important: Unfortunately, the available benchmark data doesn’t include performance metrics for the 4080 and 4090 with the Llama 3 70B model. This is a limitation of the available data, and we can't draw definitive conclusions about their performance with this specific model.

Considerations:

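- Memory Requirements: The 70B model is far larger than the 8B model. At 4 bits per weight, its weights alone need roughly 35GB, which exceeds both 16GB and 24GB. Neither GPU can hold the model entirely in VRAM, so running it means offloading part of the model to system RAM (at a substantial speed cost), using more aggressive quantization, or splitting the model across multiple GPUs.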

- Other GPUs: Benchmarking data for other GPUs (like the 4070 Ti) might provide insights into how these larger models perform on different hardware.
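The memory consideration above can be checked with a back-of-the-envelope estimate: weight size is approximately the parameter count times the bits per weight, divided by 8. The sketch below applies that formula. The function names are hypothetical, the parameter counts are rounded to 8 and 70 billion, and the result covers weights only; the KV cache and runtime buffers need additional memory, and real formats such as Q4_K_M use somewhat more than 4 bits per weight on average.

```python
def weight_bytes(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights alone, in bytes."""
    return params_billion * 1e9 * bits_per_weight / 8


def weights_fit(params_billion: float, bits_per_weight: float, vram_gib: float) -> bool:
    """Check whether the weights alone fit in VRAM.

    This is a lower bound on the real requirement: the KV cache and
    runtime buffers are not counted.
    """
    return weight_bytes(params_billion, bits_per_weight) <= vram_gib * 2**30


if __name__ == "__main__":
    # Nominal sizes; 4.0 bits per weight is an idealized 4-bit quantization.
    models = [("8B F16", 8, 16.0), ("8B 4-bit", 8, 4.0), ("70B 4-bit", 70, 4.0)]
    for name, params, bits in models:
        size_gb = weight_bytes(params, bits) / 1e9
        for gpu, vram in [("4080", 16), ("4090", 24)]:
            verdict = "fits" if weights_fit(params, bits, vram) else "does not fit"
            print(f"{name}: ~{size_gb:.0f} GB of weights, {verdict} on the {gpu}")
```

By this estimate the 8B model fits on both cards in either format, although F16 leaves very little room on the 4080, while the 70B model does not fit on either card even at 4 bits per weight.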

Moving forward: We encourage researchers and developers to provide more comprehensive benchmarks that cover various models and GPUs to fill the gaps in our understanding.

Performance Strengths and Weaknesses Comparison

NVIDIA GeForce RTX 4080 16GB

Strengths:

- Cost-Effective: Compared to the 4090, the 4080 offers a more affordable price point, making it attractive for budget-conscious users.

- Decent Performance: While not as fast as the 4090, the 4080 still delivers respectable performance for smaller LLM models like the 8B Llama 3.

Weaknesses:

- Limited with Larger Models: Its 16GB of VRAM leaves little headroom. The F16 version of Llama 3 8B already needs about 16GB for its weights, and larger models (e.g., Llama 3 70B) will not fit without offloading to system RAM.

- Slower than 4090: In the Llama 3 8B benchmarks above, the 4090 is roughly 20% to 36% faster, depending on the configuration.

NVIDIA GeForce RTX 4090 24GB

Strengths:

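- Superior Performance: Delivers the higher token speeds of the two GPUs in every benchmark here, with the largest generation gap on the F16 model.

- More Room for Large Models: Its 24GB of VRAM accommodates larger models, higher-precision weights, or longer contexts than the 4080 can hold. Very large models such as Llama 3 70B still exceed 24GB, even at 4-bit quantization.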

Weaknesses:

- High Price: The 4090 comes at a premium price, making it a less budget-friendly option.

- Energy Consumption: It draws more power than the 4080. NVIDIA rates the 4090 at 450 W of total graphics power, compared with 320 W for the 4080.

Practical Recommendations: Choosing the Right GPU

For Researchers & Developers:

- Prioritize Speed and Memory for Large Models (Llama 3 70B): The 4090 is the better choice for large models. Its extra 8GB of VRAM keeps more of the model on the GPU, although a 70B model still needs partial offloading or a second GPU even at 4-bit quantization. The added speed and memory make it well suited to intensive research and development work.

- Budget-Conscious User with 8B Llama 3 or Smaller: The 4080 is a solid choice if you're working with smaller models and have a limited budget. It can provide decent performance for a lower price.

For Enthusiasts and Casual Users:

- Fast and Efficient with Large Models: Of the two cards, the 4090 offers the better overall experience across model sizes. If you have the budget and want the faster of the two, it's the go-to option.

- Budget-Friendly, Good for Smaller Models: The 4080 is a great starting point for experimenting with LLMs on a budget. It can handle smaller models like the 8B Llama 3 with reasonable speed.

Remember: The best GPU for you depends on your specific needs, available budget, and the LLM models you plan to work with. Consider the size and complexity of the models, your performance expectations, and your budget constraints when making your decision.

FAQ: The Big Questions About LLMs and GPUs

What are the key differences between the NVIDIA RTX 4080 and RTX 4090?

The RTX 4090 is a significantly more powerful GPU than the RTX 4080. It has more CUDA cores (16,384 vs. 9,728), more VRAM (24GB vs. 16GB), and higher memory bandwidth, while boost clock speeds are roughly the same. This translates to noticeably faster performance, especially when dealing with larger and more complex workloads. However, this power comes at a higher price.

Which is Better for Me: RTX 4080 or RTX 4090?

The best choice depends on your needs and budget. If you want the most speed and VRAM for larger models, the RTX 4090 is the way to go, keeping in mind that a model as large as Llama 3 70B does not fit entirely in 24GB even at 4-bit quantization. If you're working with smaller models like the 8B Llama 3 and have a budget constraint, the RTX 4080 offers a solid balance of performance and affordability.

How does quantization affect GPU performance?

Quantization is a technique used to reduce the size and memory requirements of LLMs. By using fewer bits to represent each weight (e.g., 4-bit instead of 16-bit), it shrinks the memory footprint and means less data has to be read from VRAM for every generated token. In the Llama 3 8B results above, the Q4_K_M model generates roughly 2.4 to 2.6 times faster than the F16 model on the same GPU, although prompt processing is somewhat slower. Quantization sacrifices some accuracy, but the trade-off is often worth it, especially on hardware with limited memory.
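To make the idea concrete, here is a toy sketch of 4-bit quantization: each value is mapped to one of 16 integer codes using a single shared scale, then mapped back. This is a simplified illustration only, not the Q4_K_M algorithm, which groups weights into blocks with their own scales and uses a more elaborate scheme. The function names and sample values are made up for the example.

```python
def quantize_4bit(values: list[float]) -> tuple[list[int], float]:
    """Map floats to integer codes in [-8, 7] using one shared scale."""
    max_abs = max((abs(v) for v in values), default=0.0)
    # Choose the scale so the largest magnitude lands on code 7 (or -7).
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-8, min(7, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes: list[int], scale: float) -> list[float]:
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


if __name__ == "__main__":
    weights = [0.10, -0.52, 0.98, -0.03, 0.33]  # made-up sample values
    codes, scale = quantize_4bit(weights)
    restored = dequantize(codes, scale)
    for original, code, approx in zip(weights, codes, restored):
        print(f"{original:+.2f} -> code {code:+d} -> {approx:+.2f}")
```

Each code fits in 4 bits instead of 16, at the cost of a rounding error of at most half the scale per value. That rounding error is the accuracy loss the answer above refers to.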

What are the alternatives to NVIDIA GPUs for running LLMs locally?

While NVIDIA GPUs are currently the most popular choice for LLM inference, other alternatives exist. AMD GPUs like Radeon RX 7900 XTX are increasingly capable of running LLMs, although their performance might not match NVIDIA's top-of-the-line cards. You can also explore options like Google's Tensor Processing Units (TPUs) or custom silicon designs, but these often require more specialized knowledge and infrastructure.

What is the future of LLM inference on consumer hardware?

The future looks bright for running LLMs locally. As GPUs and other hardware continue to improve, we can expect faster and more efficient LLM inference on consumer devices. Research and development efforts are constantly exploring new techniques like quantization, model parallelism, and specialized hardware architectures to further optimize local LLM performance. The possibilities for running more advanced models with greater speed and accessibility are exciting.
