Which Is Better for Running LLMs Locally: NVIDIA 4070 Ti 12GB or NVIDIA RTX 5000 Ada 32GB? Ultimate Benchmark Analysis

Introduction

The world of large language models (LLMs) is buzzing with excitement! These sophisticated AI models can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But running these models locally can be a challenge, especially if you're looking for smooth performance and a seamless experience. That's where dedicated GPUs come into play.

In this article, we're diving deep into the performance of two popular NVIDIA GPUs: the 4070 Ti 12GB, a consumer GeForce card, and the RTX 5000 Ada 32GB, a professional workstation card. We'll analyze each card's strengths and weaknesses when it comes to running LLMs locally, and help you decide which is the best fit for your needs.

Comparing NVIDIA 4070 Ti 12GB and NVIDIA RTX 5000 Ada 32GB for LLM Inference

We'll compare the two GPUs on Llama 3 8B, a popular open-source LLM and the only model with benchmark results on both cards. We'll look at two metrics, token generation speed and prompt processing speed, across two weight formats: 4-bit Q4_K_M quantization and unquantized F16.

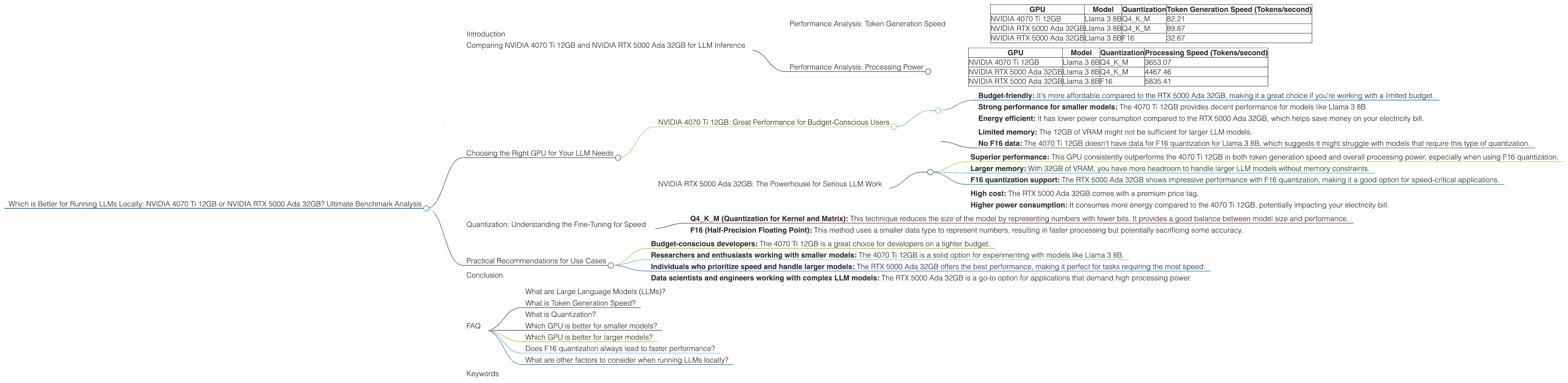

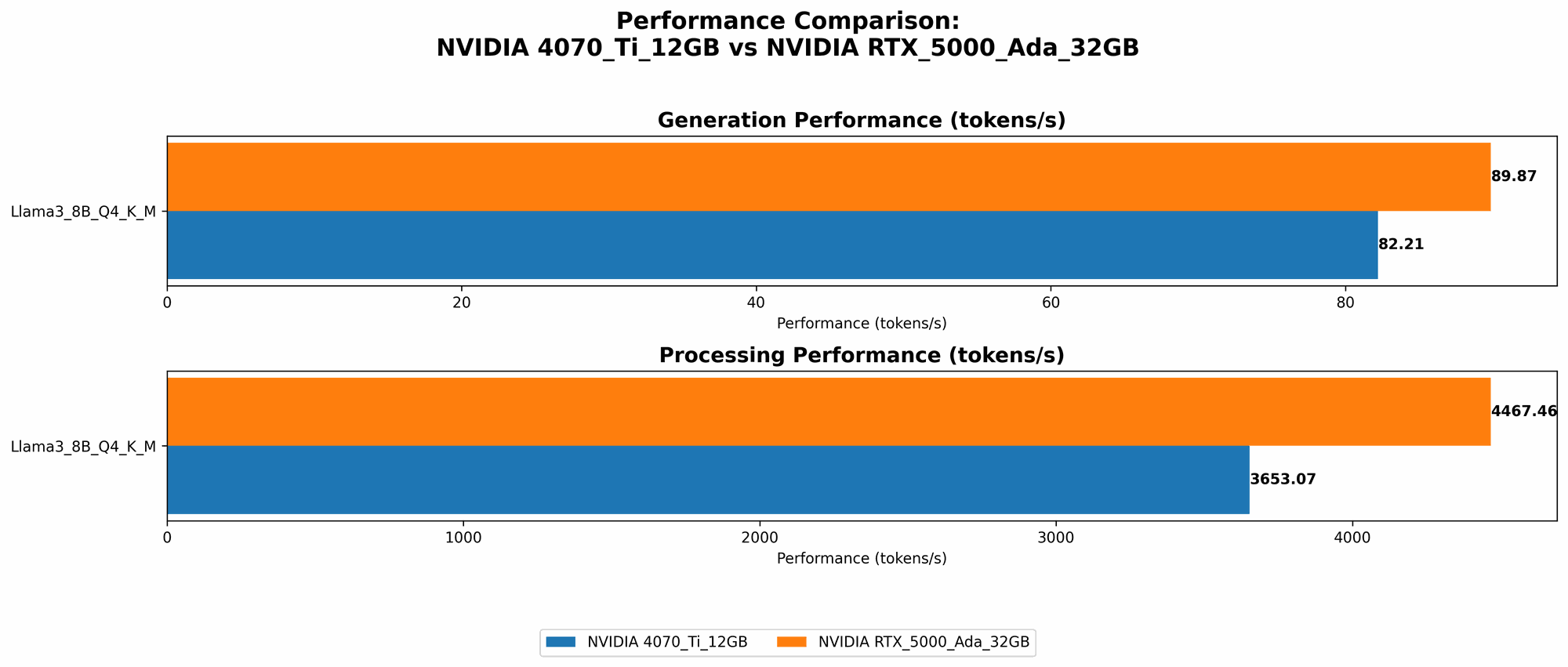

Performance Analysis: Token Generation Speed

Token generation speed measures how many output tokens per second the GPU produces once the model starts answering; it's what you experience as the speed of a streaming response. Each new token requires reading the model's weights from VRAM, so this metric depends heavily on memory bandwidth and on how many bytes the weights occupy. Here's how the two GPUs perform on Llama 3 8B:

| GPU | Model | Weight Format | Token Generation Speed (tokens/s) |
|---|---|---|---|
| NVIDIA 4070 Ti 12GB | Llama 3 8B | Q4_K_M | 82.21 |
| NVIDIA RTX 5000 Ada 32GB | Llama 3 8B | Q4_K_M | 89.87 |
| NVIDIA RTX 5000 Ada 32GB | Llama 3 8B | F16 | 32.67 |
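Raw tokens-per-second figures are easier to compare as ratios. This minimal Python sketch hard-codes the three generation results from the table; the dictionary layout and the `speedup` helper are illustrative names, not part of any benchmark tool.

```python
# Token generation results from the benchmark table (tokens/second).
results = {
    ("4070 Ti 12GB", "Q4_K_M"): 82.21,
    ("RTX 5000 Ada 32GB", "Q4_K_M"): 89.87,
    ("RTX 5000 Ada 32GB", "F16"): 32.67,
}


def speedup(a: tuple[str, str], b: tuple[str, str]) -> float:
    """Return how many times faster configuration a is than configuration b."""
    return results[a] / results[b]


# Same format (Q4_K_M), different GPUs: the only like-for-like GPU comparison.
gpu_gain = speedup(("RTX 5000 Ada 32GB", "Q4_K_M"), ("4070 Ti 12GB", "Q4_K_M"))
# Same GPU, different weight formats.
format_gain = speedup(("RTX 5000 Ada 32GB", "Q4_K_M"), ("RTX 5000 Ada 32GB", "F16"))

print(f"RTX 5000 Ada vs 4070 Ti (Q4_K_M): {gpu_gain:.2f}x ({(gpu_gain - 1) * 100:.0f}% faster)")
print(f"Q4_K_M vs F16 on RTX 5000 Ada: {format_gain:.2f}x")
```

The GPU gap is small (about 9%), while the format gap on a single card is close to 3x, which suggests the weight format matters more than the choice between these two cards for generation speed.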

Key Takeaways:

- RTX 5000 Ada 32GB takes the lead: With Q4_K_M, the only format benchmarked on both cards, the RTX 5000 Ada generates 89.87 tokens/s versus 82.21 tokens/s for the 4070 Ti, about 9% faster. That's a modest lead, in line with the cards' fairly similar memory bandwidth, which largely limits generation speed.

- F16 vs. Q4_K_M: On the RTX 5000 Ada, F16 generates 32.67 tokens/s, roughly 2.75x slower than Q4_K_M. Every generated token requires reading all of the weights, and F16 weights for Llama 3 8B (about 16GB) are more than three times the size of Q4_K_M weights (about 5GB). The 4070 Ti has no F16 result because 16GB of weights don't fit in its 12GB of VRAM.

- Consider your model size: Neither card has results for Llama 3 70B. Even at 4-bit quantization, the 70B weights need roughly 40GB or more, exceeding the VRAM of both cards.
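The VRAM explanation can be checked with back-of-the-envelope arithmetic: weight size is roughly parameters × bits per weight ÷ 8. The sketch below assumes approximate parameter counts (8.03B and 70.6B), treats Q4_K_M as about 4.85 bits per weight on average, uses decimal gigabytes, and adds a hypothetical `OVERHEAD_GB` allowance for the KV cache, activations, and CUDA context. None of these values come from the benchmarks.

```python
# Rough check of whether a model's weights fit in a GPU's VRAM.
# Assumptions (not from the benchmarks): parameter counts are approximate, Q4_K_M is
# treated as ~4.85 bits per weight on average, GB means 10^9 bytes, and OVERHEAD_GB
# is a hypothetical allowance for the KV cache, activations, and the CUDA context.
GB = 1e9
OVERHEAD_GB = 1.5

MODELS = {"Llama 3 8B": 8.03e9, "Llama 3 70B": 70.6e9}
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}
GPUS_GB = {"4070 Ti 12GB": 12, "RTX 5000 Ada 32GB": 32}


def weights_gb(params: float, bits: float) -> float:
    """Size of the weights alone: parameters x bits per weight / 8 bits per byte."""
    return params * bits / 8 / GB


def fits(params: float, bits: float, vram_gb: float) -> bool:
    """True if the weights plus the overhead allowance fit in VRAM."""
    return weights_gb(params, bits) + OVERHEAD_GB <= vram_gb


for model, params in MODELS.items():
    for fmt, bits in BITS_PER_WEIGHT.items():
        size = weights_gb(params, bits)
        verdicts = ", ".join(
            f"{gpu}: {'fits' if fits(params, bits, vram) else 'too big'}"
            for gpu, vram in GPUS_GB.items()
        )
        print(f"{model} {fmt}: ~{size:.1f} GB -> {verdicts}")
```

Under these assumptions the pattern matches the gaps in the benchmark data: Llama 3 8B F16 fits only on the 32GB card, and Llama 3 70B fits on neither, even at Q4_K_M.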

Performance Analysis: Prompt Processing Speed

Now let's look at prompt processing (prefill) speed: how fast the GPU reads your input prompt before it produces the first output token. Prefill handles many tokens in parallel, so it's limited mainly by the GPU's compute throughput rather than memory bandwidth, which is why these numbers are far higher than the generation speeds above.

| GPU | Model | Weight Format | Prompt Processing Speed (tokens/s) |
|---|---|---|---|
| NVIDIA 4070 Ti 12GB | Llama 3 8B | Q4_K_M | 3653.07 |
| NVIDIA RTX 5000 Ada 32GB | Llama 3 8B | Q4_K_M | 4467.46 |
| NVIDIA RTX 5000 Ada 32GB | Llama 3 8B | F16 | 5835.41 |

Key Takeaways:

- RTX 5000 Ada 32GB shines again: With Q4_K_M, the RTX 5000 Ada processes prompts about 22% faster than the 4070 Ti (4467.46 vs. 3653.07 tokens/s), a wider gap than in token generation.

- F16 speeds up prompt processing: On the RTX 5000 Ada, F16 processes prompts about 31% faster than Q4_K_M (5835.41 vs. 4467.46 tokens/s), likely because unquantized weights skip the dequantization work. The same F16 setup generates tokens about 2.75x slower, though, so F16 only pays off for workloads dominated by very long prompts and short outputs.

- Limitations for larger models: There are no Llama 3 70B results for either GPU, highlighting the challenge of running such large models locally.
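Because a real request involves both phases, a simple way to weigh F16 against Q4_K_M is to add prefill time and generation time. This sketch uses the RTX 5000 Ada results for Llama 3 8B from both tables; the 512-token prompt and 256-token output are hypothetical example lengths, and the model ignores everything except these two throughputs.

```python
# Rough end-to-end time for one request: prefill the prompt, then generate tokens.
# Speeds are the RTX 5000 Ada 32GB results for Llama 3 8B from the benchmark tables.
# The prompt and output lengths used below are hypothetical examples.
SPEEDS = {  # format: (prompt processing tok/s, token generation tok/s)
    "Q4_K_M": (4467.46, 89.87),
    "F16": (5835.41, 32.67),
}


def request_seconds(fmt: str, prompt_tokens: int, output_tokens: int) -> float:
    """Prefill time plus generation time, ignoring all other overheads."""
    pp, tg = SPEEDS[fmt]
    return prompt_tokens / pp + output_tokens / tg


def break_even_ratio() -> float:
    """Prompt/output length ratio above which F16 finishes sooner than Q4_K_M."""
    pp_q, tg_q = SPEEDS["Q4_K_M"]
    pp_f, tg_f = SPEEDS["F16"]
    # Solve P/pp_f + O/tg_f == P/pp_q + O/tg_q for P/O.
    return (1 / tg_f - 1 / tg_q) / (1 / pp_q - 1 / pp_f)


for fmt in SPEEDS:
    print(f"{fmt}: {request_seconds(fmt, 512, 256):.2f} s for 512 prompt + 256 output tokens")
print(f"F16 only wins when the prompt is ~{break_even_ratio():.0f}x longer than the output")
```

With these numbers, Q4_K_M finishes the example request in under 3 seconds versus nearly 8 for F16, and F16 only comes out ahead when the prompt is several hundred times longer than the answer.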

Choosing the Right GPU for Your LLM Needs

NVIDIA 4070 Ti 12GB: Great Performance for Budget-Conscious Users

The 4070 Ti 12GB offers solid performance, especially with Q4_K_M quantization for Llama 3 8B. It's a great option if you're looking for a balance between cost and performance. Here's a breakdown of its strengths:

- Budget-friendly: It costs far less than the RTX 5000 Ada 32GB, making it a great choice if you're working with a limited budget.

- Strong performance for smaller models: With Llama 3 8B at Q4_K_M, it generates 82.21 tokens/s, within about 9% of the RTX 5000 Ada.

However, consider these limitations:

- Limited memory: 12GB of VRAM isn't enough for larger LLMs, or even for Llama 3 8B in F16, whose weights alone take about 16GB.

- No F16 results: The benchmarks have no F16 numbers for Llama 3 8B on this card because the F16 weights don't fit in 12GB; running them would require offloading part of the model to the CPU.

- Power draw: NVIDIA rates the 4070 Ti at 285 W, slightly above the RTX 5000 Ada's 250 W, so don't count on lower electricity bills from the cheaper card.

NVIDIA RTX 5000 Ada 32GB: The Powerhouse for Serious LLM Work

For those who prioritize speed and want to handle larger models, the RTX 5000 Ada 32GB is the way to go. Let's break down its advantages:

- Superior performance: With Llama 3 8B at Q4_K_M, it generates tokens about 9% faster and processes prompts about 22% faster than the 4070 Ti 12GB.

- Larger memory: 32GB of VRAM holds Llama 3 8B at full F16 precision and leaves headroom for mid-size models and longer contexts. A 70B model still doesn't fit, even at 4-bit.

- F16 support: It can run Llama 3 8B unquantized in F16, which gave the fastest prompt processing in these benchmarks (5835.41 tokens/s), although F16 token generation is much slower than Q4_K_M.

- Lower rated power: At 250 W, it's rated slightly below the 4070 Ti's 285 W.

Keep this limitation in mind:

- High cost: The RTX 5000 Ada 32GB is a workstation card with a premium price tag, well above that of the 4070 Ti 12GB.
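Rated board power is a spec-sheet ceiling rather than a measurement, but it allows a rough efficiency comparison. This sketch divides the Q4_K_M generation speeds from the benchmark table by NVIDIA's rated power figures (285 W for the 4070 Ti, 250 W for the RTX 5000 Ada); the power values are assumptions, since the benchmarks didn't measure power, and actual draw during inference can be lower.

```python
# Generation efficiency estimate: tokens per joule = (tokens/s) / watts.
# Assumption: NVIDIA's rated board power stands in for real draw, because the
# benchmarks did not measure power; actual inference draw can be lower.
CARDS = {
    "4070 Ti 12GB": {"tg_q4": 82.21, "rated_watts": 285},
    "RTX 5000 Ada 32GB": {"tg_q4": 89.87, "rated_watts": 250},
}


def tokens_per_joule(tokens_per_second: float, watts: float) -> float:
    # One watt is one joule per second, so (tokens/s) / (J/s) = tokens/J.
    return tokens_per_second / watts


for name, card in CARDS.items():
    tpj = tokens_per_joule(card["tg_q4"], card["rated_watts"])
    # Energy for one million generated tokens, in kilowatt-hours (1 kWh = 3.6e6 J).
    kwh_per_million = 1e6 / tpj / 3.6e6
    print(f"{name}: {tpj:.3f} tokens/J, ~{kwh_per_million:.2f} kWh per million tokens")
```

On these rough assumptions the RTX 5000 Ada produces more tokens per joule, so its extra cost is mostly upfront rather than on the electricity bill.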

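Quantization: Trading Precision for Speed and Memory

Think of quantization as a way to "shrink" the model by storing its weights with fewer bits, without sacrificing too much accuracy. It's like compressing a high-resolution picture so it loads faster, at the cost of some quality.

Q4_K_M: A 4-bit "k-quant" format from the llama.cpp/GGUF ecosystem; "K" refers to the k-quant scheme and "M" to its medium size variant. Weights are stored in blocks that share scale factors, averaging just under 5 bits per weight, so Llama 3 8B shrinks to about 5GB. The smaller weights are why it generates tokens much faster than F16.

F16 (Half-Precision Floating Point): Each weight is stored as a 16-bit float, which is essentially the model's original precision rather than quantization in the usual sense. It preserves the most accuracy but needs about 16GB for Llama 3 8B. In these benchmarks, F16 processed prompts faster than Q4_K_M but generated tokens far more slowly.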
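To make "fewer bits per weight" concrete, here is a simplified symmetric 4-bit block quantizer in plain Python. It's an illustration of the general idea only, not the actual Q4_K_M layout, which uses super-blocks, quantized per-block scales and minimums, and keeps some tensors at 6 bits. The block size of 32, the random stand-in weights, and the 16-bit scale in the storage math are all assumptions for the example.

```python
# A simplified symmetric 4-bit block quantizer, for illustration only. It is NOT the
# real Q4_K_M layout (super-blocks, quantized scales and minimums, some 6-bit tensors).
import random

BLOCK = 32  # weights per block; each block gets its own scale factor


def quantize_block(xs: list[float]) -> tuple[float, list[int]]:
    """Map floats to integers in [-7, 7] that share one scale factor."""
    amax = max(abs(x) for x in xs)
    scale = amax / 7 if amax > 0 else 1.0
    qs = [max(-7, min(7, round(x / scale))) for x in xs]
    return scale, qs


def dequantize_block(scale: float, qs: list[int]) -> list[float]:
    """Recover approximate floats from the 4-bit integers."""
    return [q * scale for q in qs]


random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(4096)]  # stand-in weights

restored = []
for i in range(0, len(weights), BLOCK):
    scale, qs = quantize_block(weights[i : i + BLOCK])
    restored.extend(dequantize_block(scale, qs))

max_err = max(abs(a - b) for a, b in zip(weights, restored))
# Storage if each scale were kept as a 16-bit float: 4 bits + 16/32 bits per weight.
bits_per_weight = 4 + 16 / BLOCK
print(f"~{bits_per_weight} bits/weight vs 16 for F16, max abs error {max_err:.5f}")
```

Each weight's rounding error is at most half its block's scale, which is the accuracy being traded for a roughly 3.5x smaller footprint than F16 in this toy setup.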

Practical Recommendations for Use Cases

Here's a quick guide on how to choose the ideal GPU for your LLM projects:

- Budget-conscious developers: The 4070 Ti 12GB is a great choice for developers on a tighter budget.

- Researchers and enthusiasts working with smaller models: The 4070 Ti 12GB is a solid option for experimenting with quantized models like Llama 3 8B at Q4_K_M.

- Users who need more speed or larger models: The RTX 5000 Ada 32GB was faster in every benchmark here, and its 32GB of VRAM can hold models and formats, such as Llama 3 8B in F16, that the 4070 Ti cannot.

- Data scientists and engineers working with long prompts or full-precision models: The RTX 5000 Ada 32GB is the go-to option, since F16 gave it the highest prompt processing speed in these tests.

Conclusion

Choosing the right GPU for running LLMs locally is crucial for harnessing the full potential of these powerful AI models. The NVIDIA 4070 Ti 12GB provides a solid balance between performance and budget. The RTX 5000 Ada 32GB is faster, by about 9% in token generation and 22% in prompt processing with Llama 3 8B at Q4_K_M, but its biggest advantage is 32GB of VRAM, which lets it run larger models and unquantized F16 weights. Weigh your model sizes and budget carefully before making your decision.

FAQ

What are Large Language Models (LLMs)?

LLMs are sophisticated AI models trained on massive datasets of text and code. They can generate human-like text, translate languages, answer your questions, and perform many other tasks.

What is Token Generation Speed?

Token generation speed measures how many output tokens per second a GPU produces when running an LLM. It's essentially the speed at which the model "types" its answer, and it's separate from prompt processing speed, which measures how fast the input prompt is read.

What is Quantization?

Quantization reduces the memory footprint of an LLM by storing its weights with fewer bits, for example just under 5 bits per weight with Q4_K_M instead of 16 with F16. Smaller weights usually mean faster token generation, at the cost of some accuracy.

Which GPU is better for smaller models?

For smaller models like Llama 3 8B, the NVIDIA 4070 Ti 12GB offers decent performance, especially considering its affordability.

Which GPU is better for larger models?

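For models that need more than 12GB of VRAM, the NVIDIA RTX 5000 Ada 32GB is the better choice thanks to its larger memory and higher speed. Very large models such as Llama 3 70B exceed even its 32GB at 4-bit quantization.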

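Does F16 always lead to faster performance?

No. In these benchmarks, F16 on the RTX 5000 Ada processed prompts about 31% faster than Q4_K_M but generated tokens about 2.75x slower, because its weights are more than three times larger. F16's real advantage is accuracy, not generation speed.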

What are other factors to consider when running LLMs locally?

Besides GPU choice, other factors to consider include CPU performance and system RAM (both matter when part of a model is offloaded from the GPU), as well as software compatibility.
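When a model doesn't fit in VRAM, tools such as llama.cpp can keep only some layers on the GPU (its `--n-gpu-layers` option) and run the rest on the CPU. This hypothetical helper estimates how many layers fit; it crudely assumes weights are spread evenly across layers (real models also have embedding and output matrices), and the 1.5GB `reserved_gb` default is a guess, not a measured value.

```python
# Hypothetical estimate of how many transformer layers fit on the GPU when a model is
# too big for VRAM; the remaining layers would run from system RAM on the CPU.
# Crude assumption: weights are spread evenly across layers, so treat the result as
# approximate. reserved_gb is a guessed allowance for the KV cache and CUDA context.
def gpu_layers_that_fit(model_gb: float, n_layers: int, vram_gb: float,
                        reserved_gb: float = 1.5) -> int:
    per_layer_gb = model_gb / n_layers
    usable_gb = max(0.0, vram_gb - reserved_gb)
    return min(n_layers, int(usable_gb // per_layer_gb))


# Llama 3 8B in F16 (~16 GB of weights, 32 layers) on the 12GB 4070 Ti.
on_gpu = gpu_layers_that_fit(model_gb=16.1, n_layers=32, vram_gb=12)
print(f"{on_gpu} of 32 layers on the GPU, {32 - on_gpu} offloaded to the CPU")
```

Offloaded layers are read from system RAM, which is much slower than VRAM, so generation speed drops as more layers move to the CPU; that's why CPU and memory speed start to matter once a model outgrows the GPU.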
