Which is Better for Running LLMs Locally: NVIDIA 3090 24GB x2 or NVIDIA A40 48GB? Ultimate Benchmark Analysis

Introduction

Large language models (LLMs) offer powerful natural language processing capabilities, but running them locally is resource-intensive. This article compares two GPU configurations, the NVIDIA 3090_24GB_x2 (two RTX 3090 cards with 24GB each, 48GB in total) and the NVIDIA A40_48GB (a single 48GB card), on the Llama 3 family of LLMs. Beyond the raw numbers, we look at the strengths and weaknesses of each setup to help you pick the best device for your use case.

Comparison of NVIDIA 3090_24GB_x2 and NVIDIA A40_48GB for Llama 3 Model Inference

This section compares the two configurations, the NVIDIA 3090_24GB_x2 and the NVIDIA A40_48GB, across different sizes and precision levels of Llama 3 models. We look at two speeds, token generation (producing new output tokens) and token processing (reading the input prompt), and highlight where each configuration is stronger.

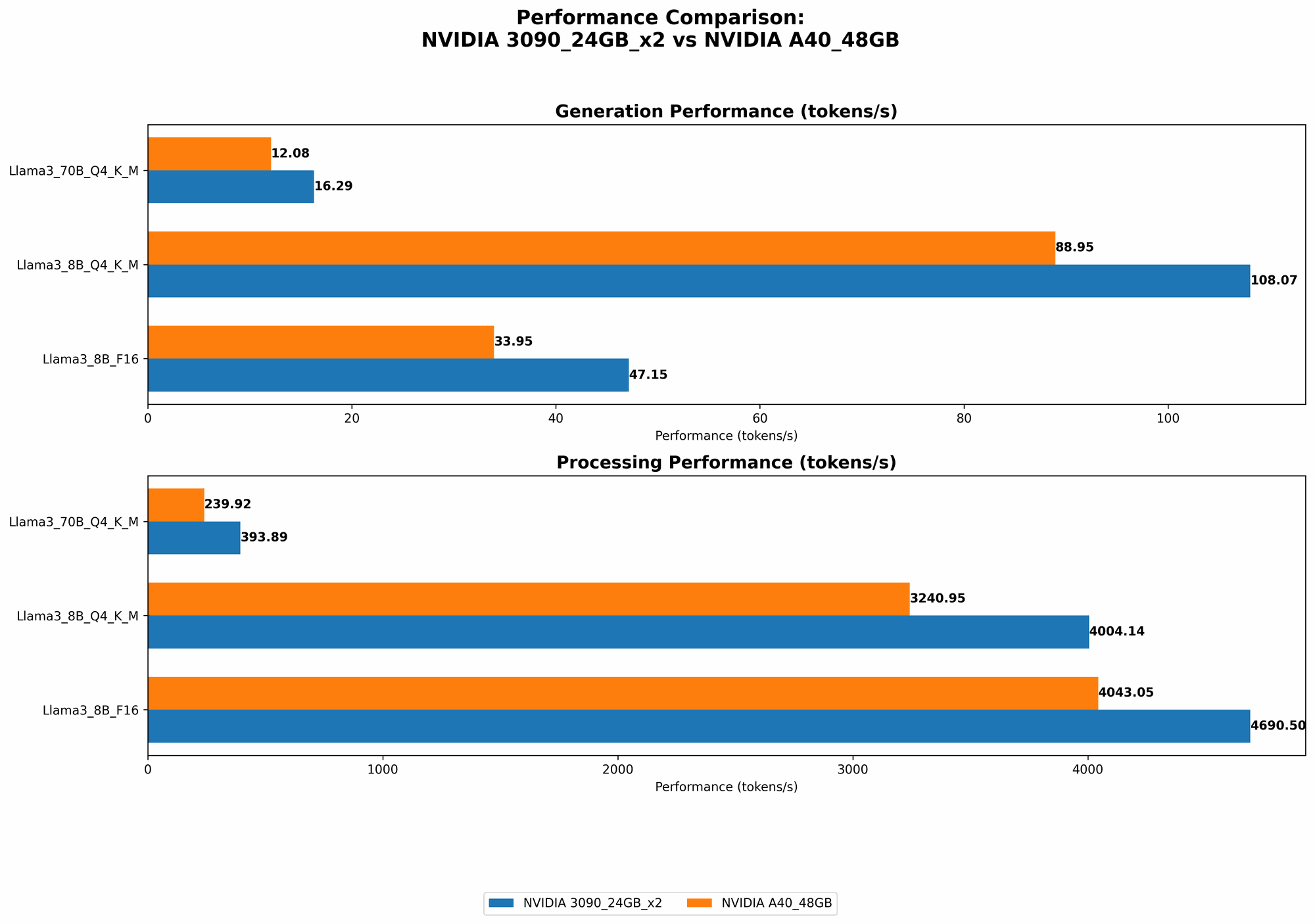

NVIDIA 3090_24GB_x2 vs. NVIDIA A40_48GB: Token Generation Performance

The table below shows the token generation speed (tokens per second) of each configuration for different Llama 3 variants.

| Model | NVIDIA 3090_24GB_x2 (tokens/s) | NVIDIA A40_48GB (tokens/s) |
|---|---|---|
| Llama 3 8B Q4_K_M | 108.07 | 88.95 |
| Llama 3 8B F16 | 47.15 | 33.95 |
| Llama 3 70B Q4_K_M | 16.29 | 12.08 |
| Llama 3 70B F16 | N/A | N/A |

Observations:

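- The NVIDIA 3090_24GB_x2 outperforms the NVIDIA A40_48GB in token generation for every model that could be run. SLI is unlikely to be the reason, since multi-GPU LLM inference typically splits the model's layers across the cards rather than relying on SLI. A more likely explanation is memory bandwidth: token generation is largely memory-bound, and each 3090's GDDR6X memory is rated at a higher bandwidth than the A40's GDDR6 (roughly 936 GB/s versus 696 GB/s on the spec sheets).

- The relative gap is about 21% for Llama 3 8B Q4_K_M, 39% for 8B F16, and 35% for 70B Q4_K_M, so it does not simply shrink as models grow. In absolute terms the gap is largest for 8B Q4_K_M (about 19 tokens/s), which matters most for latency-sensitive use.

- Llama 3 70B F16 is N/A on both configurations, presumably because its weights (roughly 140GB at 16 bits per weight) exceed the 48GB of VRAM available in either setup.

- The A40's data-center positioning does not translate into faster generation here. This underscores the importance of benchmarking the specific workload when choosing hardware.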
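To make the size of the gap concrete, the short sketch below computes how much faster the dual-3090 setup is for each row of the token generation table. The numbers are copied from the table; the dictionary and function names are only illustrative.

```python
# Token generation speeds (tokens/s) copied from the table above:
# (dual 3090 setup, single A40).
generation_tps = {
    "Llama 3 8B Q4_K_M": (108.07, 88.95),
    "Llama 3 8B F16": (47.15, 33.95),
    "Llama 3 70B Q4_K_M": (16.29, 12.08),
}


def relative_advantage(dual_3090: float, a40: float) -> float:
    """Return how much faster the dual-3090 setup is, in percent."""
    return (dual_3090 / a40 - 1.0) * 100.0


advantages = {
    model: relative_advantage(*speeds) for model, speeds in generation_tps.items()
}

for model, pct in advantages.items():
    print(f"{model}: dual 3090 is {pct:.1f}% faster")
```

The same function can be applied to any other pair of throughput figures, as long as both are in the same unit.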

NVIDIA 3090_24GB_x2 vs. NVIDIA A40_48GB: Token Processing Performance

Next is token processing: the speed, in tokens per second, at which the model reads the input prompt before it starts generating. It matters most for tasks with long inputs, such as summarization and translation.

| Model | NVIDIA 3090_24GB_x2 (tokens/s) | NVIDIA A40_48GB (tokens/s) |
|---|---|---|
| Llama 3 8B Q4_K_M | 4004.14 | 3240.95 |
| Llama 3 8B F16 | 4690.5 | 4043.05 |
| Llama 3 70B Q4_K_M | 393.89 | 239.92 |
| Llama 3 70B F16 | N/A | N/A |

Observations:

- The NVIDIA 3090_24GB_x2 again outperforms the NVIDIA A40_48GB in token processing, by about 24% on Llama 3 8B Q4_K_M, 16% on 8B F16, and 64% on 70B Q4_K_M.

- Compared with token generation, the gap is narrower for 8B F16 (16% versus 39%) but much wider for 70B Q4_K_M (64% versus 35%). On these numbers, the A40 does not gain an advantage for applications that process large amounts of input text.
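The two metrics combine into the time a user actually waits: the prompt is processed first, then the reply is generated. The sketch below estimates that total for Llama 3 70B Q4_K_M using the figures from the two tables. It assumes both speeds stay constant regardless of prompt length and ignores model loading and other overhead, and the request sizes are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Throughput:
    prompt_tps: float      # token (prompt) processing speed, tokens/s
    generation_tps: float  # token generation speed, tokens/s


# Llama 3 70B Q4_K_M figures from the two tables above.
DUAL_3090 = Throughput(prompt_tps=393.89, generation_tps=16.29)
A40 = Throughput(prompt_tps=239.92, generation_tps=12.08)


def estimate_latency_s(hw: Throughput, prompt_tokens: int, output_tokens: int) -> float:
    """Rough request time: read the whole prompt, then generate the reply."""
    return prompt_tokens / hw.prompt_tps + output_tokens / hw.generation_tps


# Hypothetical request: a 2000-token prompt and a 300-token reply.
for name, hw in (("3090_24GB_x2", DUAL_3090), ("A40_48GB", A40)):
    seconds = estimate_latency_s(hw, prompt_tokens=2000, output_tokens=300)
    print(f"{name}: about {seconds:.1f} s")
```

For chat-style requests with short prompts the generation term dominates, while for long documents with short answers the processing term does.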

Quantization: A Simple Explanation

Quantization stores numbers with fewer bits by mapping them onto a limited set of values. Imagine a drawing with a million colors: you can reduce the palette to just 16 colors and still get a recognizable image, at the cost of some detail. Quantization in LLMs does the same thing to the model's weights, making the model smaller and faster to run, though the quality of the generated output may drop slightly.

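Q4_K_M: A 4-bit "k-quant" format used by llama.cpp (GGUF files). Most weights are stored in about 4 bits, grouped into blocks that share scaling factors, and the M (medium) variant keeps some tensors at higher precision to preserve quality.

F16: Half-precision (16-bit) floating-point weights. This halves storage relative to 32-bit floats, but it is the largest and slowest format in these benchmarks, needing roughly three times the memory of Q4_K_M.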
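A back-of-the-envelope size estimate shows why format matters so much: weight size is roughly parameter count times bits per weight. The sketch below uses nominal parameter counts (8 and 70 billion) and an assumed average of 4.85 bits per weight for Q4_K_M, which is an approximation rather than a figure from this article. It counts weights only, not the KV cache or runtime overhead.

```python
GIB = 1024 ** 3

# Approximate bits stored per weight. The Q4_K_M value is an assumed average
# used for illustration; F16 is exactly 16 bits.
BITS_PER_WEIGHT = {"Q4_K_M": 4.85, "F16": 16.0}


def weight_size_gib(params_billion: float, fmt: str) -> float:
    """Estimate the size of the weights alone, in GiB."""
    total_bits = params_billion * 1e9 * BITS_PER_WEIGHT[fmt]
    return total_bits / 8 / GIB


def fits(params_billion: float, fmt: str, vram_gib: float = 48.0) -> bool:
    """True if the weights alone fit in the given VRAM (no KV cache, no overhead)."""
    return weight_size_gib(params_billion, fmt) < vram_gib


for params in (8, 70):
    for fmt in ("Q4_K_M", "F16"):
        size = weight_size_gib(params, fmt)
        verdict = "fits" if fits(params, fmt) else "does not fit"
        print(f"Llama 3 {params}B {fmt}: ~{size:.1f} GiB, {verdict} in 48 GiB")
```

Under these assumptions only the 70B F16 model exceeds 48GB, which is consistent with the N/A entries in the benchmark tables.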

Performance Analysis: NVIDIA 3090_24GB_x2 vs. NVIDIA A40_48GB

Strengths and Weaknesses of NVIDIA 3090_24GB_x2

Strengths:

- High token generation speed: The 3090_24GB_x2 configuration generates tokens faster in every benchmark above, which suits applications that prioritize fast responses, like chatbots or interactive dialogue systems.

- Overall faster performance: The 3090_24GB_x2 outperforms the A40_48GB in both token generation and token processing, by 16% to 64% depending on the model and metric.

- Lower cost: Two 3090s are typically cheaper than a single A40, which is also a passively cooled data-center card that needs a chassis with suitable airflow.

Weaknesses:

- Scalability: Going beyond two consumer cards is complex (slots, power, cooling, and interconnect), and adding GPUs does not always give linear performance improvements.

- Power consumption: Two 3090s draw more power than a single A40 (each 3090 is rated at about 350W, against about 300W for the A40), which may concern energy-conscious users.
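The power trade-off can be put in rough numbers by dividing rated power by generation speed. The sketch below does this for Llama 3 70B Q4_K_M. The wattages are nominal spec-sheet ratings assumed for illustration (350W per 3090, 300W for the A40), not measurements from these benchmarks, so the results are upper bounds on energy per token rather than observed values.

```python
# Rated board power in watts. These are nominal spec-sheet values assumed for
# illustration, not measured draw during the benchmarks.
RATED_POWER_W = {"3090_24GB_x2": 2 * 350, "A40_48GB": 300}

# Llama 3 70B Q4_K_M token generation speeds (tokens/s) from the table above.
GENERATION_TPS = {"3090_24GB_x2": 16.29, "A40_48GB": 12.08}


def joules_per_token(config: str) -> float:
    """Upper-bound energy per generated token: watts divided by tokens/s."""
    return RATED_POWER_W[config] / GENERATION_TPS[config]


for config in RATED_POWER_W:
    print(f"{config}: up to ~{joules_per_token(config):.0f} J per token")
```

Under these assumptions the dual-3090 setup is faster but spends more energy per token. Real draw during inference is often below rated power, so measuring at the wall is the only reliable comparison.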

Strengths and Weaknesses of NVIDIA A40_48GB

Strengths:

- High memory capacity in one device: The A40 provides 48GB on a single card, so a model does not have to be split across GPUs. Note that the two 3090s also total 48GB, and that Llama 3 70B F16 did not run on either configuration.

- Raw compute power: On paper a single A40 is roughly on par with a single 3090 in compute, and it adds data-center features such as ECC memory. That suits sustained, compute-heavy workloads such as scientific modeling, although it did not yield higher LLM throughput in the benchmarks above.

- Scalability: The A40 is designed for server environments and can be easily scaled to handle massive workloads.

Weaknesses:

- Lower token generation speed: The A40 trails the 3090_24GB_x2 in token generation (the dual-3090 setup is 21% to 39% faster), and it is also slower in token processing.

- Higher cost: A single A40 typically costs significantly more than the 3090_24GB_x2 configuration.

- Power and cooling: The A40 still draws up to about 300W and, as a passively cooled card, depends on chassis airflow, which can be a consideration for small-scale setups.

Practical Recommendations for Use Cases

Choose NVIDIA 3090_24GB_x2 for:

- Chatbots and conversational AI: The 3090_24GB_x2's speed makes it a good choice for applications that require fast responses and real-time interactions.

- Interactive dialogue systems: The 3090_24GB_x2 configuration delivered faster responses in these benchmarks, which is crucial for natural-feeling, responsive dialogue.

- Budget-conscious users: Two 3090s offer a compelling alternative for those who want high performance at a more affordable price.

Choose NVIDIA A40_48GB for:

- Large models on a single device: The A40's 48GB on one card avoids splitting a model across GPUs, which simplifies deploying models with large context windows, such as those used in document summarization or translation. Total capacity matches the two 3090s combined.

- High-performance computing: The A40’s exceptional horsepower makes it suitable for scientific research, machine learning training, and other computationally demanding tasks.

- Scalable deployments: The A40 is a perfect fit for server environments that need to handle massive workloads and scale easily to meet increasing demands.

Conclusion

Choosing between the NVIDIA 3090_24GB_x2 and the NVIDIA A40_48GB for running LLMs locally requires careful consideration of your needs. The 3090_24GB_x2 is faster in both token generation and token processing in every benchmark here and typically costs less, making it a strong fit for responsive, interactive applications. The A40 offers 48GB on a single card, lower power draw, and a data-center design suited to server deployments. Ultimately, the best choice depends on your application and the balance you prioritize between performance, cost, and power consumption.

FAQ

Q: What is the difference between token generation and token processing?

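A: Token generation is the process of producing new output tokens (words, subwords, or characters) one at a time. Token processing, often called prompt processing, is the earlier step in which the model reads the input tokens, largely in parallel, before generating anything. That is why the processing speeds in the tables are far higher than the generation speeds.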

Q: Can I use both NVIDIA 3090_24GB_x2 and NVIDIA A40_48GB together for even better performance?

A: It is possible to combine different GPUs, but it requires careful hardware and software configuration. The gain is not always linear, and a mixed setup can be held back by its slowest card.

Q: What are the latest developments in LLM hardware?

A: There are advancements in specialized AI accelerators like Google's TPU (Tensor Processing Unit) and Graphcore's IPUs (Intelligence Processing Units) designed to handle LLMs more efficiently.

Q: Are there alternatives to NVIDIA GPUs for running LLMs?

A: Yes, AMD GPUs and specialized AI accelerators from other companies are becoming increasingly competitive, offering alternatives to NVIDIA's offerings.

Keywords

NVIDIA 3090, NVIDIA A40, LLMs, Large Language Models, Token Generation, Token Processing, Quantization, Q4_K_M, F16, Inference, Performance, Benchmark, Comparison, Llama 3, GPU, AI, Deep Learning, Natural Language Processing, NLP, AI Hardware, AI Acceleration, GPU Performance, LLM Inference, LLM Hardware, AMD GPU, TPU, IPU