Which Is Better for Running LLMs Locally: NVIDIA 3090 24GB x2 or NVIDIA 4090 24GB x2? Ultimate Benchmark Analysis

Introduction

The world of large language models (LLMs) is exploding, and with it, the demand for powerful hardware to run them locally. Whether you're a developer experimenting with cutting-edge AI, a researcher pushing the boundaries of natural language processing, or just someone who wants to unleash the full potential of these amazing models on your own machine, the choice of hardware is crucial.

In this deep dive, we'll pit two titans of the GPU world against each other: dual NVIDIA 3090 24GB cards and dual NVIDIA 4090 24GB cards. We'll analyze how they perform with popular LLMs like Llama 3, looking at two key metrics: token generation speed and processing speed, both measured in tokens per second. By the end of this article, you'll be equipped to make an informed decision about which GPU setup is ideal for your LLM endeavors.

Performance Analysis: NVIDIA 3090 24GB x2 vs. NVIDIA 4090 24GB x2

Comparison of NVIDIA 3090 24GB x2 and NVIDIA 4090 24GB x2 for Llama 3 Models

Let's dive into the heart of the matter: how do these GPUs stack up against each other when it comes to running Llama 3 models? We'll focus on two key aspects: token generation speed and processing power.

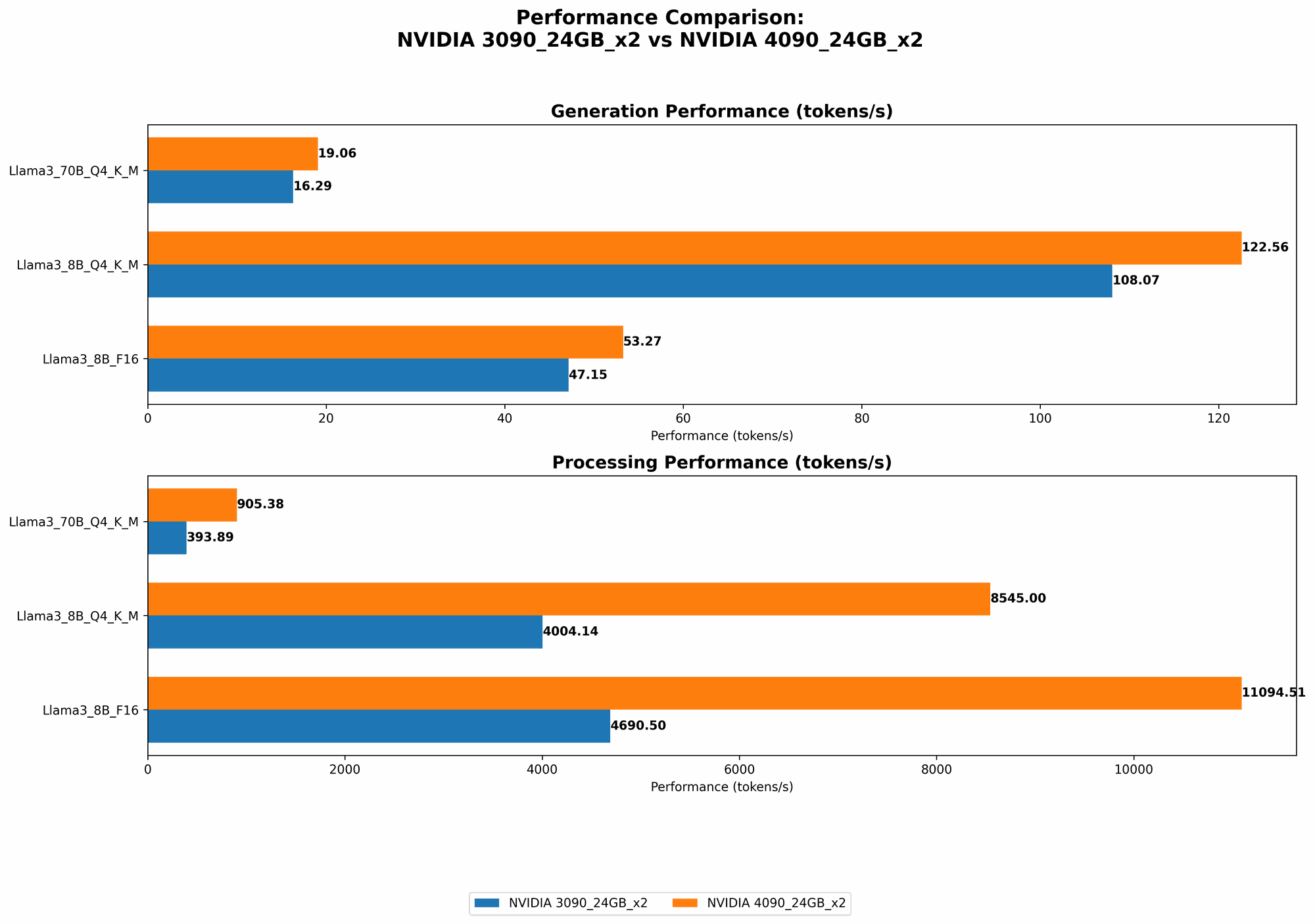

Token Generation Comparison

| Model | NVIDIA 3090 24GB x2 (Tokens/Second) | NVIDIA 4090 24GB x2 (Tokens/Second) |
|---|---|---|
| Llama 3 8B (Q4_K_M) | 108.07 | 122.56 |
| Llama 3 8B (F16) | 47.15 | 53.27 |
| Llama 3 70B (Q4_K_M) | 16.29 | 19.06 |

- Llama 3 8B: The NVIDIA 4090 24GB x2 generates tokens about 13% faster than the 3090 24GB x2 on the 8B Llama 3 model, whether the model is quantized (Q4_K_M) or run in half precision (F16).

- Llama 3 70B: Again, the 4090 24GB x2 pulls ahead, generating tokens about 17% faster than the 3090 24GB x2 on the 70B Llama 3 model.

Implications: The faster token generation of the 4090 24GB x2 means you'll get responses from your LLMs sooner, making it the better choice for real-time applications like interactive chatbots or text generation. The margin is modest, though: 13% to 17% across the models tested.
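To make the percentages above easy to verify, here is a minimal Python sketch that recomputes them from the table. The dictionary and the function name `percent_gain` are illustrative choices, not part of any benchmark tool.

```python
# Token generation rates (tokens/second) copied from the table above.
# Each tuple is (3090 24GB x2, 4090 24GB x2).
GENERATION_TPS = {
    "Llama 3 8B (Q4_K_M)": (108.07, 122.56),
    "Llama 3 8B (F16)": (47.15, 53.27),
    "Llama 3 70B (Q4_K_M)": (16.29, 19.06),
}


def percent_gain(baseline: float, candidate: float) -> float:
    """Relative improvement of candidate over baseline, in percent."""
    return (candidate / baseline - 1.0) * 100.0


for model, (tps_3090, tps_4090) in GENERATION_TPS.items():
    gain = percent_gain(tps_3090, tps_4090)
    print(f"{model}: {gain:.1f}% faster on the 4090 pair")
```

The same helper works for any pair of throughput figures, so you can reuse it with your own measurements.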

Processing Power Comparison

| Model | NVIDIA 3090 24GB x2 (Tokens/Second) | NVIDIA 4090 24GB x2 (Tokens/Second) |
|---|---|---|
| Llama 3 8B (Q4_K_M) | 4004.14 | 8545.0 |
| Llama 3 8B (F16) | 4690.5 | 11094.51 |
| Llama 3 70B (Q4_K_M) | 393.89 | 905.38 |

- Llama 3 8B: The 4090 24GB x2 processes tokens more than twice as fast as the 3090 24GB x2 on the 8B Llama 3 model: about 2.1x with Q4_K_M quantization and about 2.4x with F16 precision.

- Llama 3 70B: The gap holds for the larger model. The 4090 24GB x2 is about 2.3x faster than the 3090 24GB x2 on the 70B Llama 3 model (905.38 vs. 393.89 tokens/second).

Implications: The 4090 24GB x2 shines when it comes to processing large amounts of input text. This makes it the more suitable choice for tasks like summarization, translation, and code generation with long inputs, where the model has to read a substantial amount of text before it can respond.
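As a rough illustration of what the two tables mean together, the sketch below estimates how long one request takes on each setup. It assumes the "processing" figures are prompt-processing rates, that both rates stay constant regardless of context length, and that there is no other overhead. The workload (a 4,000-token document summarized in 300 tokens) and the names `Throughput` and `estimated_seconds` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Throughput:
    prompt_tps: float      # processing rate for input tokens, tokens/second
    generation_tps: float  # generation rate for output tokens, tokens/second


# Llama 3 70B (Q4_K_M) rows from the two tables above.
DUAL_3090 = Throughput(prompt_tps=393.89, generation_tps=16.29)
DUAL_4090 = Throughput(prompt_tps=905.38, generation_tps=19.06)


def estimated_seconds(t: Throughput, prompt_tokens: int, output_tokens: int) -> float:
    """Rough request time: read the whole prompt, then generate the reply."""
    return prompt_tokens / t.prompt_tps + output_tokens / t.generation_tps


# Hypothetical workload: summarize a 4,000-token document in 300 tokens.
for name, setup in (("3090 24GB x2", DUAL_3090), ("4090 24GB x2", DUAL_4090)):
    print(f"{name}: about {estimated_seconds(setup, 4000, 300):.1f} s")
```

Under these assumptions, generation time dominates for short prompts, so the large processing advantage of the 4090 pair matters most when inputs are long relative to outputs.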

Strengths and Weaknesses of Each Setup

NVIDIA 3090 24GB x2

Strengths:

- Value for Money: The 3090 24GB x2 is the more budget-friendly option, and the price difference counts twice because you are buying two cards.

- Sufficient for Smaller Models: While it falls short on processing speed, it handles smaller models like Llama 3 8B comfortably, generating 108.07 tokens/second with Q4_K_M quantization.

Weaknesses:

- Limited Processing Power: The 3090 24GB x2 is noticeably slower on larger models. On Llama 3 70B (Q4_K_M) it processes 393.89 tokens/second, less than half the rate of the 4090 24GB x2.

- Slower Performance: Overall, it's slower than the 4090 24GB x2 in both token generation and processing.

NVIDIA 4090 24GB x2

Strengths:

- Unmatched Performance: The 4090 24GB x2 leads in every benchmark above, for both the 8B and 70B Llama 3 models.

- Future-Proof: Its higher throughput leaves more headroom for future models and research. Note that both setups have the same 48GB of total VRAM, so the headroom is in speed rather than in the size of model that fits.

Weaknesses:

- High Cost: The 4090 24GB x2 comes with a hefty price tag, so you'll need to invest significantly more than for the 3090 24GB x2.

- Power Consumption: These GPUs are power-hungry, which translates to higher electricity bills.

Practical Recommendations

- For Developers and Researchers: The 4090 24GB x2 is the go-to choice, especially if you're working with larger LLMs or long inputs and are willing to invest in top-tier performance.

- Budget-Conscious Users: If you're on a tighter budget and don't need to work with the largest LLMs, the 3090 24GB x2 can still be a good option.

Conclusion

The choice between the NVIDIA 3090 24GB x2 and the NVIDIA 4090 24GB x2 for running LLMs locally depends on your specific needs and budget. For those seeking the ultimate performance and future-proofing, the 4090 24GB x2 reigns supreme. However, the 3090 24GB x2 can be a viable option for developers and researchers working with smaller LLMs or those seeking greater value for their investment.

FAQ

1. What is Quantization?

Imagine you have a book with a full alphabet, from A to Z. Quantization is like using a smaller alphabet, maybe only A to J. You lose some detail, but the book is much smaller and easier to carry around. In LLMs, quantization reduces the size of the model, making it faster and requiring less memory while sacrificing a bit of accuracy.
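To make the analogy concrete, here is a deliberately simplified Python sketch of 4-bit quantization: every weight is rounded to one of 16 integer levels that share a single scale factor. This is a toy scheme for illustration only, not the actual Q4_K_M algorithm, and the weight values are made up.

```python
def quantize_4bit(weights: list[float]) -> tuple[list[int], float]:
    """Map floats to integers in [-8, 7] using one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    # Round to the nearest level and clamp to the signed 4-bit range.
    codes = [max(-8, min(7, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes: list[int], scale: float) -> list[float]:
    """Recover approximate floats from the 4-bit codes."""
    return [c * scale for c in codes]


weights = [0.12, -0.53, 0.91, -0.07, 0.33]  # made-up values for illustration
codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)
worst_error = max(abs(w - r) for w, r in zip(weights, restored))

print("4-bit codes:", codes)
print(f"worst rounding error: {worst_error:.3f}")
```

Each weight now needs 4 bits instead of 16 or 32, at the cost of a small rounding error. Production formats reduce that error by using a separate scale for each small block of weights.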

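2. What are F16 and Q4_K_M?

F16 refers to "half-precision" floating-point numbers: each number uses 16 bits instead of 32, which halves the size of the model. Q4_K_M is a 4-bit quantization format (the name comes from llama.cpp's family of quantization types) that stores most weights in roughly 4 bits each, making the model several times smaller than F16. Each method trades some accuracy for speed and memory savings.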
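A quick back-of-the-envelope calculation shows why the format matters on a 48GB dual-GPU setup. The sketch below uses nominal parameter counts (8 and 70 billion) and assumes about 4.8 bits per weight for the 4-bit format. That figure is an approximation, and the estimate covers the weights only, not the KV cache or other runtime memory.

```python
def weight_gib(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights alone, in GiB."""
    return n_params * bits_per_weight / 8 / 2**30


TOTAL_VRAM_GIB = 2 * 24  # two 24GB cards

# Nominal parameter counts; 4.8 bits/weight for Q4_K_M is an ASSUMED average.
cases = {
    "Llama 3 8B (F16)": (8e9, 16),
    "Llama 3 8B (Q4_K_M)": (8e9, 4.8),
    "Llama 3 70B (F16)": (70e9, 16),
    "Llama 3 70B (Q4_K_M)": (70e9, 4.8),
}

for name, (params, bits) in cases.items():
    size = weight_gib(params, bits)
    verdict = "fits" if size < TOTAL_VRAM_GIB else "does not fit"
    print(f"{name}: ~{size:.1f} GiB of weights, {verdict} in {TOTAL_VRAM_GIB} GiB")
```

Under these assumptions, a 70B model in F16 needs well over 100 GiB for its weights and cannot fit on either setup, while the 4-bit version fits with some room left for context.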

3. What are the best LLMs for running locally?

The best LLMs for local running depend on your needs. Smaller models like Llama 3 8B are more manageable on less powerful hardware. Large models like Llama 3 70B require more powerful GPUs, but offer more capabilities. Consider your computational resources and the specific tasks you want to achieve.

Keywords

LLMs, Large Language Models, NVIDIA 3090 24GB x2, NVIDIA 4090 24GB x2, Llama 3 8B, Llama 3 70B, Token Generation, Processing Power, Quantization, F16, Q4_K_M, GPU, Performance Benchmark, AI, Natural Language Processing, Local Inference.