Which Is Better for Running LLMs Locally: NVIDIA 3090 24GB or NVIDIA 4090 24GB x2? Ultimate Benchmark Analysis

Introduction

The world of large language models (LLMs) is exploding, offering incredible capabilities like generating creative text, translating languages, and answering your questions in a comprehensive and informative way. But if you want to unleash the full potential of these LLMs, you need the right hardware.

This article dives deep into the performance of two popular GPU setups, a single NVIDIA GeForce RTX 3090 24GB and a pair of NVIDIA GeForce RTX 4090 24GB cards, on local LLM tasks. (The RTX 4090 has no NVLink/SLI connector, so the pair works as a plain multi-GPU setup rather than a true SLI configuration.) We'll analyze their speed, efficiency, and limitations when running various llama.cpp models. Buckle up, geeks, it's time to unleash the power of LLMs!

Comparing the NVIDIA 3090_24GB and NVIDIA 4090_24GB_x2 for LLM Performance

Both the NVIDIA 3090_24GB and the NVIDIA 4090_24GB_x2 are beasts in the GPU realm, offering tremendous processing power. But which one reigns supreme when it comes to running LLMs locally? Let's dissect their performance across different LLM models and configurations.

Performance Analysis

Note: We'll focus on Llama3 models with different quantization schemes, specifically Llama3 8B and Llama3 70B in Q4_K_M and F16 formats. For each one we'll look at two metrics, generation speed and prompt processing speed, both measured in tokens per second.

NVIDIA 3090_24GB Performance with Llama Models

| LLM Model | Quantization | Generation (tokens/second) | Processing (tokens/second) |
|---|---|---|---|
| Llama3 8B | Q4_K_M | 111.74 | 3865.39 |
| Llama3 8B | F16 | 46.51 | 4239.64 |
| Llama3 70B | Q4_K_M | N/A | N/A |
| Llama3 70B | F16 | N/A | N/A |

Key Insights:

- Llama3 8B: The 3090_24GB performs well with Llama3 8B models, generating 111.74 tokens/second in Q4_K_M, about 2.4x its F16 rate of 46.51. Interestingly, its processing speed in F16 (4239.64 tokens/second) is slightly higher than in Q4_K_M (3865.39 tokens/second).

- Llama3 70B: There are no available benchmarks for the 3090_24GB running the Llama3 70B model. The most likely reason is memory: even at 4-bit quantization, a 70B model's weights need roughly 40GB, well beyond this card's 24GB of VRAM.
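A rough back-of-the-envelope check supports the memory explanation. The sketch below estimates weight size as parameters × bits per weight ÷ 8. The parameter counts (about 8.0B and 70.6B) and the ~4.85 bits per weight for Q4_K_M are approximations assumed here, not figures from the benchmark, and the estimate ignores the KV cache and runtime overhead, which only add to the total.

```python
# Rough VRAM estimate for model weights only (KV cache and overhead excluded).
# Assumed, approximate values: parameter counts and bits-per-weight figures.
PARAMS_BILLIONS = {"Llama3 8B": 8.0, "Llama3 70B": 70.6}
BITS_PER_WEIGHT = {"Q4_K_M": 4.85, "F16": 16.0}


def weight_size_gb(params_billions: float, bits_per_weight: float) -> float:
    """Return the approximate weight size in GB (10^9 bytes)."""
    return params_billions * bits_per_weight / 8


def fits(params_billions: float, bits_per_weight: float, vram_gb: float) -> bool:
    """True if the weights alone fit in the given VRAM budget."""
    return weight_size_gb(params_billions, bits_per_weight) <= vram_gb


if __name__ == "__main__":
    for model, params in PARAMS_BILLIONS.items():
        for quant, bits in BITS_PER_WEIGHT.items():
            size = weight_size_gb(params, bits)
            print(f"{model} {quant}: ~{size:.1f} GB | "
                  f"fits 24 GB: {fits(params, bits, 24)} | "
                  f"fits 48 GB: {fits(params, bits, 48)}")
```

Under these assumptions, the 70B Q4_K_M weights come to roughly 43 GB, which fits in 48 GB across two cards but not in 24 GB, while the 70B F16 weights (about 141 GB) fit in neither. That lines up with the N/A entries in the tables.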

NVIDIA 4090_24GB_x2 Performance with Llama Models

| LLM Model | Quantization | Generation (tokens/second) | Processing (tokens/second) |
|---|---|---|---|
| Llama3 8B | Q4_K_M | 122.56 | 8545.0 |
| Llama3 8B | F16 | 53.27 | 11094.51 |
| Llama3 70B | Q4_K_M | 19.06 | 905.38 |
| Llama3 70B | F16 | N/A | N/A |

Key Insights:

- Llama3 8B: With the 4090_24GB_x2, generation is modestly faster than on the 3090_24GB (about 10% in Q4_K_M and about 15% in F16), while prompt processing more than doubles (roughly 2.2x in Q4_K_M and 2.6x in F16).

- Llama3 70B: The 4090_24GB_x2 can run the Llama3 70B model in Q4_K_M, although generation drops to 19.06 tokens/second, far below the 8B model. There is no 3090_24GB result to compare against, most likely because the model doesn't fit in a single card's 24GB.

- Processing Performance: The 4090_24GB_x2 shows significantly higher processing speeds than the 3090_24GB on every configuration both setups could run. This is unsurprising, as it pairs two cards from a newer GPU generation.

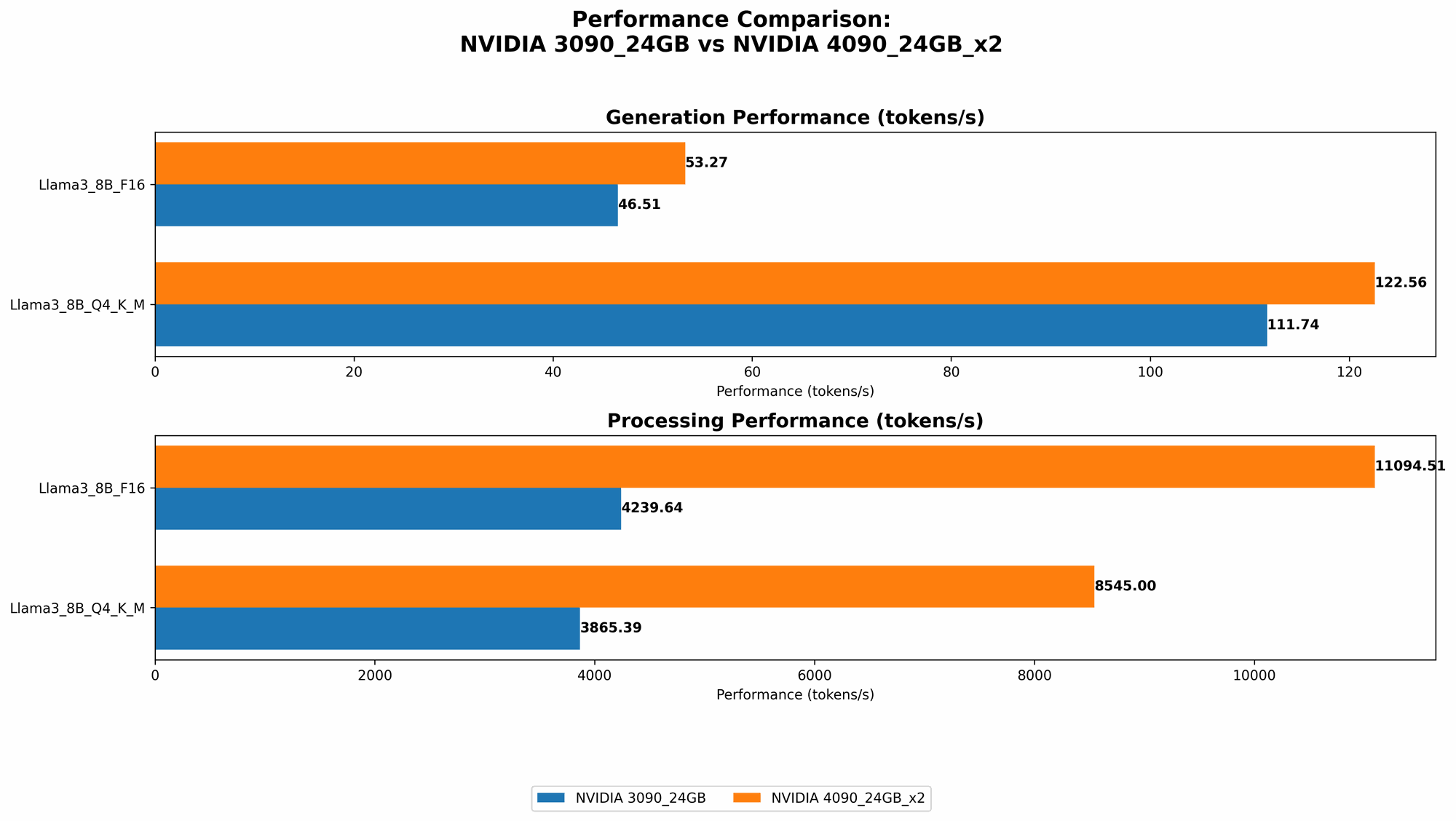

Comparison of NVIDIA 3090_24GB and NVIDIA 4090_24GB_x2 for LLM Models

Generation Speed:

- Llama3 8B (Q4_K_M): The 4090_24GB_x2 outperforms the 3090_24GB by about 10%, pushing out 122.56 tokens per second compared to 111.74 for the 3090_24GB.

- Llama3 70B (Q4_K_M): The 4090_24GB_x2 is the only setup with a result for the 70B model, at 19.06 tokens per second, more than 6x slower than the 8B model. This illustrates the limitations of even powerful GPUs when dealing with massive models.

Processing Speed:

- Llama3 8B (Q4_K_M): The 4090_24GB_x2 blows the 3090_24GB out of the water, achieving over 2x the processing speed (8545.0 tokens/second vs. 3865.39 tokens/second).

- Llama3 70B (Q4_K_M): The 4090_24GB_x2 processes the 70B model at 905.38 tokens/second, roughly a ninth of its 8B Q4_K_M rate (8545.0 tokens/second). There is no 3090_24GB figure to compare against.

Overall: The 4090_24GB_x2 is a clear winner in terms of raw performance. Its processing speed is more than double, and it can handle larger models like the Llama3 70B, for which a single 3090_24GB has no result at all.
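The ratios quoted in this comparison can be reproduced directly from the two tables. The following is a small sketch; the dictionary layout and the `speedups` helper are just one illustrative way to organize the numbers.

```python
# Benchmark figures copied from the tables above (tokens/second).
# Keys are (model, quantization); values are (generation, processing).
RTX_3090 = {
    ("Llama3 8B", "Q4_K_M"): (111.74, 3865.39),
    ("Llama3 8B", "F16"): (46.51, 4239.64),
}
RTX_4090_X2 = {
    ("Llama3 8B", "Q4_K_M"): (122.56, 8545.0),
    ("Llama3 8B", "F16"): (53.27, 11094.51),
    ("Llama3 70B", "Q4_K_M"): (19.06, 905.38),
}


def speedups(baseline, contender):
    """Return {config: (generation_ratio, processing_ratio)} for shared configs."""
    result = {}
    for config, (base_gen, base_proc) in baseline.items():
        if config in contender:
            gen, proc = contender[config]
            result[config] = (gen / base_gen, proc / base_proc)
    return result


if __name__ == "__main__":
    for (model, quant), (gen_x, proc_x) in speedups(RTX_3090, RTX_4090_X2).items():
        print(f"{model} {quant}: generation {gen_x:.2f}x, processing {proc_x:.2f}x")
```

For Llama3 8B this works out to generation gains of about 1.10x (Q4_K_M) and 1.15x (F16), and processing gains of about 2.21x and 2.62x. The 70B row is skipped because only one setup has a result for it.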

Practical Recommendations for Use Cases

For LLM Model Training: The benchmarks here cover inference only, so treat this as a rule of thumb rather than a measured result. If you're training or fine-tuning LLMs, the 4090_24GB_x2's extra compute and combined 48GB of VRAM make it the stronger choice of the two.

For LLM Inference: The 4090_24GB_x2 might be overkill if you're primarily running smaller quantized models like Llama3 8B in Q4_K_M, where its generation speed is only about 10% higher. The 3090_24GB might offer a more cost-effective solution for everyday tasks.

For Larger LLMs (Llama3 70B): The 4090_24GB_x2 is the only setup in this comparison that ran the Llama3 70B model (in Q4_K_M). If you're working with these behemoths, it's the one to pick of the two.

Exploring LLM Models and Quantization (Q4_K_M)

What's Quantization? Think of quantization as a way to make LLMs more manageable. Imagine an LLM as a giant house filled with rooms. Each room has a bunch of stuff, like furniture and gadgets. Now, imagine shrinking all those items to make them fit in a smaller house. Quantization does just that by compressing the LLM's data, allowing it to run on less powerful hardware.

Q4_K_M format: This is a specific kind of quantization, one of llama.cpp's 4-bit "K-quant" formats. Think of it like a highly compressed version of your favorite video game. It doesn't have all the cool details, but it's smaller and runs smoother on your computer.

Benefits of using Q4_K_M:

- Reduced Memory Requirements: Q4_K_M models need far less memory (VRAM on a GPU) than their F16 counterparts, meaning you can run them on more modest hardware.

- Faster Generation: Smaller weights mean less data to read for every token, so text comes out faster. In the tables above, Llama3 8B generates more than twice as fast in Q4_K_M as in F16, although prompt processing was actually a little faster in F16.

Example: Imagine trying to run a very high-resolution video game on a regular laptop. The game might not run smoothly because the laptop doesn't have the processing power or RAM. But if you compress the game files and lower the graphics settings, it might run much better, albeit with a slightly less-detailed visual experience. Similarly, Q4_K_M allows you to run large LLMs on less powerful hardware, even though there might be a slight reduction in the accuracy of the results.
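To make the idea concrete, here is a toy sketch of 4-bit block quantization. It is deliberately simplified and is not the actual Q4_K_M algorithm used by llama.cpp; the function names and the sample weights are made up for illustration. It only shows the general trade: store small integers plus a per-block scale and offset, and accept a small reconstruction error.

```python
# Toy 4-bit block quantization. This is NOT llama.cpp's Q4_K_M algorithm;
# it only illustrates the general idea of storing small integers plus a
# per-block scale and offset instead of full-precision floats.

def quantize_block(values, bits=4):
    """Map floats to integers in [0, 2**bits - 1] with a shared scale and minimum."""
    levels = (1 << bits) - 1
    lo, hi = min(values), max(values)
    scale = (hi - lo) / levels if hi > lo else 1.0
    codes = [round((v - lo) / scale) for v in values]
    return codes, scale, lo


def dequantize_block(codes, scale, lo):
    """Reconstruct approximate floats from the integer codes."""
    return [lo + c * scale for c in codes]


if __name__ == "__main__":
    weights = [0.12, -0.53, 0.91, 0.04, -0.27, 0.66, -0.88, 0.35]  # made-up values
    codes, scale, lo = quantize_block(weights)
    restored = dequantize_block(codes, scale, lo)
    max_error = max(abs(w - r) for w, r in zip(weights, restored))
    print("codes:", codes)
    print(f"max reconstruction error: {max_error:.4f} (bound: {scale / 2:.4f})")
```

Each weight now takes 4 bits instead of 16, at the cost of an error of at most half a quantization step per value. Real formats add refinements on top of this basic scheme.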

Conclusion

The NVIDIA 4090_24GB_x2 emerges as the king of LLM performance in this showdown. It offers a significant performance advantage, especially when working with larger models like Llama3 70B. However, the 3090_24GB remains a solid choice for smaller models and can be a more budget-friendly option. Ultimately, choosing the right GPU comes down to your specific needs and budget.

FAQ

What are LLMs?

LLMs are advanced AI systems that can understand and generate human-like text. Think of them as super-smart robots that can read, write, and even translate languages!

Why would I run LLMs locally?

Running LLMs locally gives you greater control and privacy over your data, since nothing has to leave your machine. It can also cut out network latency and allows for more customization.

What's the difference between generation and processing speed?

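- Generation Speed refers to the rate at which the LLM produces new output tokens (the text of its answer), one token at a time.

- Processing Speed refers to the rate at which the LLM reads the input prompt before it starts generating. Prompt tokens are handled in parallel, which is why these numbers are so much higher than the generation speeds.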
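The two numbers combine into a rough response-time estimate. The sketch below assumes processing speed is the prompt-ingestion rate, as in llama.cpp's benchmark, and that both rates stay constant regardless of context length, which is a simplification. The function name and the 1000-token prompt and 200-token answer are hypothetical values chosen for illustration.

```python
def estimate_latency_seconds(prompt_tokens: int, output_tokens: int,
                             processing_tps: float, generation_tps: float) -> float:
    """Estimate response time as prompt-ingestion time plus generation time."""
    if processing_tps <= 0 or generation_tps <= 0:
        raise ValueError("speeds must be positive")
    return prompt_tokens / processing_tps + output_tokens / generation_tps


if __name__ == "__main__":
    # Llama3 8B Q4_K_M figures from the tables (tokens/second).
    setups = {
        "3090_24GB": (3865.39, 111.74),
        "4090_24GB_x2": (8545.0, 122.56),
    }
    for name, (processing_tps, generation_tps) in setups.items():
        seconds = estimate_latency_seconds(1000, 200, processing_tps, generation_tps)
        print(f"{name}: ~{seconds:.2f} s for a 1000-token prompt and 200-token answer")
```

With these inputs the estimate is about 2.0 seconds on the 3090_24GB and about 1.7 seconds on the 4090_24GB_x2. Generation time dominates in both cases, so for short prompts the large gap in processing speed matters less than it first appears.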

Keywords:

NVIDIA GeForce RTX 3090, NVIDIA GeForce RTX 4090, multi-GPU, Llama3, llama.cpp, large language models, LLM, AI, GPU, performance, benchmarking, processing speed, inference, quantization, Q4_K_M, token speed, generation speed, memory, local, benchmark analysis, cost-effective, model training, model inference, practical recommendations, AI hardware, deep learning, performance comparison