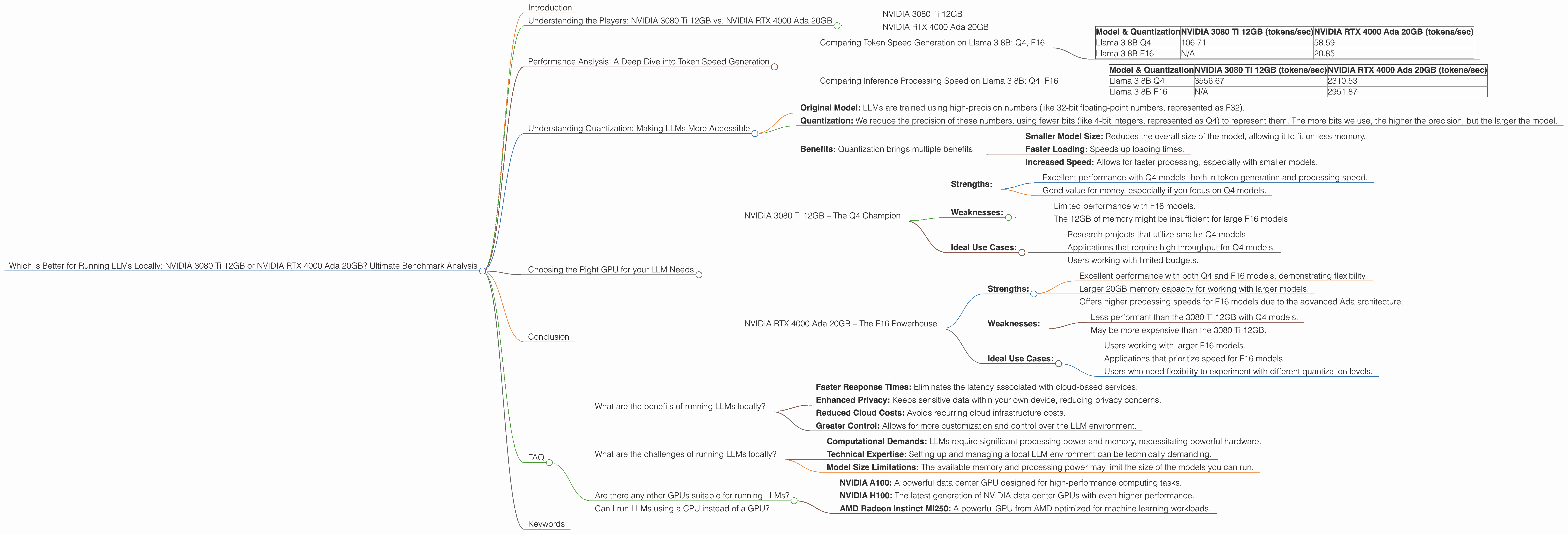

Which is Better for Running LLMs Locally: NVIDIA 3080 Ti 12GB or NVIDIA RTX 4000 Ada 20GB? Ultimate Benchmark Analysis

Introduction

The world of large language models (LLMs) is evolving rapidly, with new research and advancements appearing every day. One area of significant interest is running these models locally, which removes network round-trips, keeps data on your own machine, and reduces dependence on cloud infrastructure. Running LLMs locally is demanding, however: it needs a GPU with enough memory to hold the model and enough bandwidth and compute to run it quickly. This article compares two popular GPUs, the NVIDIA 3080 Ti 12GB and the NVIDIA RTX 4000 Ada 20GB, on Meta's Llama 3 8B model. We'll explore the strengths and weaknesses of each card to help you choose the right one for your local LLM needs.

Understanding the Players: NVIDIA 3080 Ti 12GB vs. NVIDIA RTX 4000 Ada 20GB

NVIDIA 3080 Ti 12GB

The NVIDIA 3080 Ti 12GB was released in 2021 as a top-of-the-line gaming GPU, and it remains a popular choice for both gaming and machine learning. It has 12GB of GDDR6X memory on a 384-bit bus (912 GB/s of bandwidth), 10,240 CUDA cores, and a boost clock of 1665 MHz.

NVIDIA RTX 4000 Ada 20GB

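The NVIDIA RTX 4000 Ada 20GB, released in 2023, is a workstation card built on NVIDIA's Ada Lovelace architecture. It carries 20GB of GDDR6 memory on a 160-bit bus (360 GB/s of bandwidth) and 6,144 CUDA cores in a 130 W single-slot design. Despite its newer architecture, it has fewer CUDA cores and far less memory bandwidth than the 3080 Ti; its main advantage is the extra 8GB of VRAM.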

Performance Analysis: A Deep Dive into Token Generation Speed

Comparing Token Generation Speed on Llama 3 8B: Q4 vs. F16

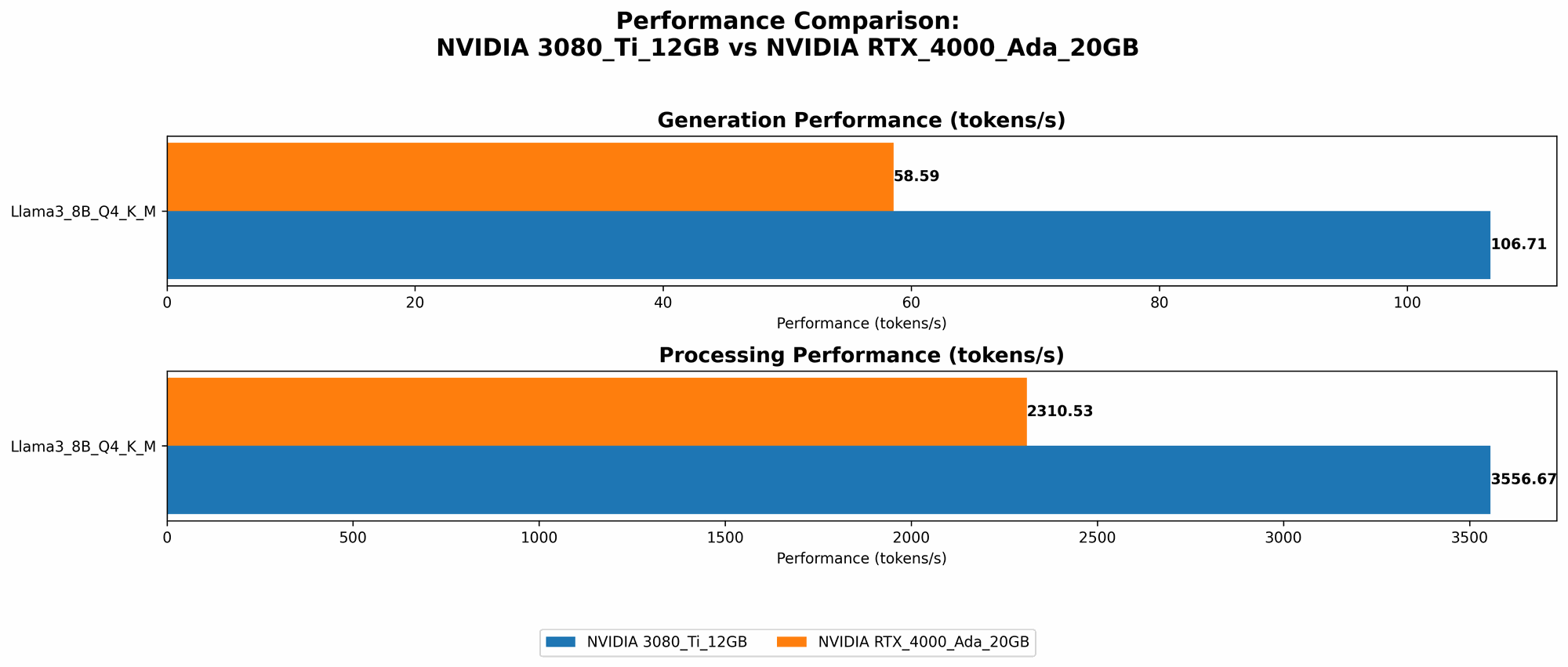

Let's start by examining the token generation speed of both GPUs on the Llama 3 8B model in two formats: Q4 (4-bit quantized weights) and F16 (16-bit half-precision weights, i.e. unquantized). These numbers show how many tokens per second each GPU can generate, which directly determines how responsive your LLM application feels.

| Model & Quantization | NVIDIA 3080 Ti 12GB (tokens/sec) | NVIDIA RTX 4000 Ada 20GB (tokens/sec) |
|---|---|---|
| Llama 3 8B Q4 | 106.71 | 58.59 |
| Llama 3 8B F16 | N/A | 20.85 |

Key Observations:

- 3080 Ti 12GB Dominates in Q4: The 3080 Ti 12GB generates Q4 tokens about 1.8x as fast as the RTX 4000 Ada 20GB (106.71 vs. 58.59 tokens/sec). Token generation is largely limited by memory bandwidth, and the older card has far more of it (912 GB/s vs. 360 GB/s).

- F16 Runs Only on the RTX 4000 Ada 20GB: The 3080 Ti 12GB has no F16 result because Llama 3 8B's F16 weights (about 16 GB) do not fit in 12GB of VRAM. The RTX 4000 Ada 20GB can run the F16 model, but at 20.85 tokens/sec, roughly a third of its own Q4 speed.
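Because generating each new token requires streaming essentially all of the model weights from VRAM, a common rule of thumb puts the ceiling for single-stream generation at memory bandwidth divided by model size. The sketch below applies that rule to the Q4 results. The bandwidths are the cards' published specs; the ~4.9 GB size for a Q4 Llama 3 8B file is an assumption, and real throughput stays below the ceiling because of KV-cache reads, kernel overhead, and imperfect bandwidth utilization.

```python
# Rough, bandwidth-bound ceiling for single-stream token generation.
# Assumption: each generated token reads the full weight file from VRAM once.

BANDWIDTH_GB_S = {           # spec-sheet memory bandwidth
    "RTX 3080 Ti": 912.0,
    "RTX 4000 Ada": 360.0,
}
MEASURED_Q4_TOK_S = {        # Llama 3 8B Q4 generation results from the table
    "RTX 3080 Ti": 106.71,
    "RTX 4000 Ada": 58.59,
}
Q4_MODEL_GB = 4.9            # assumed size of a Q4 Llama 3 8B weight file


def ceiling_tok_s(bandwidth_gb_s: float, model_gb: float) -> float:
    """Upper bound on tokens/s if every token reads all weights once."""
    return bandwidth_gb_s / model_gb


for gpu, bw in BANDWIDTH_GB_S.items():
    ceiling = ceiling_tok_s(bw, Q4_MODEL_GB)
    measured = MEASURED_Q4_TOK_S[gpu]
    print(f"{gpu}: ceiling ~{ceiling:.0f} tok/s, measured {measured:.2f} "
          f"({measured / ceiling:.0%} of ceiling)")

bw_ratio = BANDWIDTH_GB_S["RTX 3080 Ti"] / BANDWIDTH_GB_S["RTX 4000 Ada"]
measured_ratio = MEASURED_Q4_TOK_S["RTX 3080 Ti"] / MEASURED_Q4_TOK_S["RTX 4000 Ada"]
print(f"bandwidth ratio: {bw_ratio:.2f}x, measured ratio: {measured_ratio:.2f}x")
```

The 3080 Ti's measured lead (about 1.82x) is smaller than its bandwidth lead (about 2.53x), so bandwidth explains the direction of the gap but not its full size.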

Practical Implications:

- Q4 Speed for 3080 Ti 12GB: If Q4 models that fit in 12GB meet your quality needs, the 3080 Ti 12GB delivers the faster experience, making it a good fit for lightweight applications or research projects.

- F16 Flexibility for RTX 4000 Ada 20GB: The 4000 Ada 20GB runs Llama 3 8B in both Q4 and F16, and its extra memory leaves room for larger models or longer contexts. This makes it the better choice if you want to compare quantization levels or need more VRAM headroom.

Comparing Prompt Processing Speed on Llama 3 8B: Q4 vs. F16

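Next, let's look at prompt processing: the rate at which the GPU ingests the input prompt before it starts generating output. Because all prompt tokens can be processed in parallel, this stage is usually limited by compute rather than memory bandwidth, and its speeds are far higher than those for token generation. We'll again compare Q4 and F16 for the Llama 3 8B model.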

| Model & Quantization | NVIDIA 3080 Ti 12GB (tokens/sec) | NVIDIA RTX 4000 Ada 20GB (tokens/sec) |
|---|---|---|
| Llama 3 8B Q4 | 3556.67 | 2310.53 |
| Llama 3 8B F16 | N/A | 2951.87 |

Key Observations:

- 3080 Ti 12GB Dominates in Q4 Processing: The 3080 Ti 12GB processes Q4 prompts about 1.5x as fast as the 4000 Ada 20GB (3556.67 vs. 2310.53 tokens/sec), consistent with its larger CUDA core count (10,240 vs. 6,144) in this compute-bound stage.

- F16 Runs Only on the RTX 4000 Ada 20GB: Again, only the 4000 Ada 20GB can load the F16 model. Notably, its F16 prompt processing (2951.87 tokens/sec) is faster than its own Q4 result, likely because F16 avoids the work of unpacking quantized weights during this compute-heavy stage.

Practical Implications:

- 3080 Ti 12GB for High Q4 Throughput: If you work with Q4 models and prioritize fast processing of long inputs, the 3080 Ti 12GB is the stronger choice. This makes it suitable for applications with large input and output volumes.

- RTX 4000 Ada 20GB for F16 Flexibility: Because it can hold F16 weights, the 4000 Ada 20GB suits applications that need full 16-bit precision or models too large for 12GB.
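Prompt processing and token generation together make up the wait a user actually experiences. As a rough sketch, assuming request time is simply prefill time plus decode time (ignoring model loading, sampling, and the slowdown of generation as the context grows), the two tables can be combined into an end-to-end estimate. The 1,000-token prompt and 200-token reply are hypothetical; the speeds are the Llama 3 8B figures reported above.

```python
# Estimate wall-clock time for one request from the two benchmark tables.
# Assumption: latency ~= prompt processing time + generation time.

BENCH = {  # (prompt processing tok/s, generation tok/s), Llama 3 8B
    ("RTX 3080 Ti", "Q4"): (3556.67, 106.71),
    ("RTX 4000 Ada", "Q4"): (2310.53, 58.59),
    ("RTX 4000 Ada", "F16"): (2951.87, 20.85),
}


def request_seconds(pp_tok_s: float, tg_tok_s: float,
                    prompt_tokens: int, output_tokens: int) -> float:
    """Time to ingest the prompt plus time to generate the reply."""
    return prompt_tokens / pp_tok_s + output_tokens / tg_tok_s


# Hypothetical request shape: 1,000 prompt tokens, 200 generated tokens.
for (gpu, fmt), (pp, tg) in BENCH.items():
    t = request_seconds(pp, tg, prompt_tokens=1000, output_tokens=200)
    print(f"{gpu} {fmt}: {t:.2f} s")
```

For this request shape the estimates come to about 2.2 s (3080 Ti, Q4), 3.8 s (RTX 4000 Ada, Q4), and 9.9 s (RTX 4000 Ada, F16), with generation accounting for most of each total. Unless prompts are very long, the token generation table matters more for perceived responsiveness.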

Understanding Quantization: Making LLMs More Accessible

Quantization is a technique for reducing the size of an LLM without sacrificing too much accuracy. Imagine squeezing a giant encyclopedia into a pocket-sized book: that's what quantization does to an LLM.

Here's how it works:

- Original Model: LLM weights are typically trained and released as 16- or 32-bit floating-point numbers (F16/BF16 or F32).

- Quantization: We store the weights with fewer bits (for example, 4-bit integers, denoted Q4). More bits preserve more precision but make the model larger.

- Benefits: Quantization brings several benefits:

  - Smaller Model Size: The model fits in less memory; an 8B-parameter model shrinks from about 16 GB in F16 to roughly 5 GB in Q4.

  - Faster Loading: Less data has to be read from disk.

  - Faster Generation: Fewer bytes must be read from VRAM for each generated token, which speeds up the bandwidth-bound generation stage. Prompt processing does not necessarily get faster, as the RTX 4000 Ada's F16 result above shows.
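To make the idea concrete, here is a toy sketch of symmetric 4-bit quantization applied to one small block of weights: every value shares a single scale and is rounded to an integer in [-8, 7]. Real Q4 formats, such as those used by llama.cpp, also group weights into blocks with shared scales, but their block sizes and memory layouts differ from this illustration, and the weight values below are made up.

```python
# Toy symmetric 4-bit quantization of one block of weights.
# Illustrative only: real Q4 formats use their own block sizes and layouts.


def quantize_block(weights: list[float]) -> tuple[float, list[int]]:
    """Map floats to 4-bit signed integers in [-8, 7] sharing one scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    q = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, q


def dequantize_block(scale: float, q: list[int]) -> list[float]:
    """Recover approximate floats from the 4-bit codes."""
    return [scale * v for v in q]


block = [0.12, -0.53, 0.91, 0.04, -0.27, 0.66, -0.88, 0.31]  # made-up weights
scale, codes = quantize_block(block)
restored = dequantize_block(scale, codes)
max_err = max(abs(a - b) for a, b in zip(block, restored))

print("codes:   ", codes)
print("restored:", [round(x, 3) for x in restored])
print(f"max abs error: {max_err:.3f} (bounded by scale/2 = {scale / 2:.3f})")
```

Each weight now needs 4 bits plus a small share of the block's scale instead of 16 bits in F16, which is where the roughly fourfold size reduction comes from; the price is a rounding error of at most half a quantization step per weight.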

Analogy: Think of it like reducing the resolution of an image – it might not be as sharp, but it takes up less storage space and loads faster. Similarly, a quantized LLM might not perform as well as the full precision version, but it's smaller, faster, and more efficient.
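A back-of-the-envelope size calculation shows why memory capacity decides which formats each card can run: weight size is roughly the parameter count times the bits per weight, divided by 8. The sketch below assumes about 4.5 bits per weight for Q4 (to account for per-block scales) and reserves a guessed 1.5 GB for the KV cache, activations, and the CUDA context; both numbers are illustrative assumptions, not measurements.

```python
# Back-of-the-envelope check: do a model's weights fit in a card's VRAM?
# Assumptions: weights ~= parameters x bits / 8; headroom_gb is a guess.


def weights_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight size in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


def fits(params_billion: float, bits_per_weight: float,
         vram_gb: float, headroom_gb: float = 1.5) -> bool:
    """True if the weights plus an assumed headroom fit in VRAM."""
    return weights_gb(params_billion, bits_per_weight) + headroom_gb <= vram_gb


# Llama 3 8B has roughly 8 billion parameters; 4.5 bits/weight for Q4 is assumed.
for fmt, bits in [("Q4", 4.5), ("F16", 16)]:
    for gpu, vram in [("RTX 3080 Ti", 12), ("RTX 4000 Ada", 20)]:
        size = weights_gb(8, bits)
        print(f"{fmt} on {gpu}: ~{size:.1f} GB of weights, fits={fits(8, bits, vram)}")
```

Under these assumptions the F16 weights alone (16 GB) exceed the 3080 Ti's 12GB, consistent with the N/A entries in both benchmark tables, while the Q4 weights fit comfortably on either card.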

Choosing the Right GPU for your LLM Needs

NVIDIA 3080 Ti 12GB – The Q4 Champion

- Strengths:

  - The faster of the two cards with Q4 models, in both token generation and prompt processing.

  - Good value for money, especially if you focus on Q4 models.

- Weaknesses:

  - Cannot run Llama 3 8B in F16: the roughly 16 GB of weights exceed its 12GB of VRAM.

  - The 12GB limit also constrains larger models and long contexts, even when quantized.

- Ideal Use Cases:

  - Research projects that use smaller Q4 models.

  - Applications that require high throughput for Q4 models.

  - Users working with limited budgets.

NVIDIA RTX 4000 Ada 20GB – The F16 Powerhouse

- Strengths:

  - Runs Llama 3 8B in both Q4 and F16, giving flexibility across precision levels.

  - Larger 20GB memory capacity for working with larger models.

  - The only card of the two that can load F16 Llama 3 8B, and its F16 prompt processing is even faster than its own Q4 result.

- Weaknesses:

  - Slower than the 3080 Ti 12GB with Q4 models (about 45% lower generation speed and 35% lower prompt-processing speed).

  - May be more expensive than the 3080 Ti 12GB.

- Ideal Use Cases:

  - Users working with F16 models or models that do not fit in 12GB.

  - Applications that must run models in F16 on a single card.

  - Users who need flexibility to experiment with different quantization levels.
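The recommendations above boil down to a simple rule: among the cards that can load a given format, pick the one with the higher measured generation speed. A minimal sketch of that rule, hard-coding this article's Llama 3 8B generation numbers (the dictionary and function names are illustrative, and `None` stands for the N/A entry in the table):

```python
# Pick the fastest card that can actually run a given Llama 3 8B format,
# using the token-generation results reported in this article.
from typing import Optional

GEN_TOK_S = {
    "RTX 3080 Ti 12GB": {"Q4": 106.71, "F16": None},  # None = N/A (did not run)
    "RTX 4000 Ada 20GB": {"Q4": 58.59, "F16": 20.85},
}


def best_gpu(fmt: str) -> Optional[str]:
    """Return the GPU with the highest measured tok/s for fmt, if any ran it."""
    candidates = {gpu: r[fmt] for gpu, r in GEN_TOK_S.items()
                  if r.get(fmt) is not None}
    if not candidates:
        return None
    return max(candidates, key=candidates.get)


for fmt in ("Q4", "F16"):
    print(fmt, "->", best_gpu(fmt))
```

Capacity acts as a hard filter and speed only breaks ties among the cards that pass it, which is why the slower RTX 4000 Ada still wins whenever F16 is required.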

Conclusion

Both the NVIDIA 3080 Ti 12GB and the NVIDIA RTX 4000 Ada 20GB are capable options for running LLMs locally, and the right choice depends on your needs and priorities. The 3080 Ti 12GB is clearly faster on Q4 models (about 1.8x in token generation and 1.5x in prompt processing), making it a cost-effective choice for speed-sensitive applications with models that fit in 12GB. The RTX 4000 Ada 20GB is slower on Q4, but its 20GB of memory lets it run models the 3080 Ti cannot, such as Llama 3 8B in F16, making it better suited to larger models and to workloads that need more VRAM.

FAQ

What are the benefits of running LLMs locally?

Running LLMs locally offers several advantages:

- Lower Latency: Eliminates network round-trips to cloud-based services.

- Enhanced Privacy: Keeps sensitive data on your own device, reducing privacy concerns.

- Reduced Cloud Costs: Avoids recurring cloud infrastructure costs.

- Greater Control: Allows for more customization and control over the LLM environment.

What are the challenges of running LLMs locally?

Despite the benefits, there are challenges to running LLMs locally:

- Computational Demands: LLMs require significant processing power and memory, necessitating powerful hardware.

- Technical Expertise: Setting up and managing a local LLM environment can be technically demanding.

- Model Size Limitations: The available memory and processing power may limit the size of the models you can run.

Are there any other GPUs suitable for running LLMs?

Yes, several other GPUs are suitable for running LLMs, including data center accelerators such as:

- NVIDIA A100: A powerful data center GPU designed for high-performance computing tasks.

- NVIDIA H100: The Hopper-generation successor to the A100, with substantially higher performance.

- AMD Instinct MI250: A data center accelerator from AMD aimed at machine learning and high-performance computing workloads.

Can I run LLMs using a CPU instead of a GPU?

Yes, but it's significantly slower and less efficient than using a GPU. CPUs are better suited for general-purpose tasks, while GPUs are designed for intensive computations like those required by LLMs.
