Which Is Better for Running LLMs Locally: NVIDIA 3080 Ti 12GB or NVIDIA 4070 Ti 12GB? Ultimate Benchmark Analysis

Introduction

The world of large language models (LLMs) is booming, offering incredible potential for developers and businesses alike. But running these models locally is computationally demanding and calls for capable hardware. Two popular choices for tackling this challenge are the NVIDIA GeForce RTX 3080 Ti 12GB and NVIDIA GeForce RTX 4070 Ti 12GB graphics cards. Both pack impressive performance, but which one reigns supreme for LLM workloads? In this article, we’ll compare the two GPUs on the Llama3 8B model, looking at both token generation and prompt processing, and help you determine the best fit for your needs.

Performance Analysis: Comparing the NVIDIA 3080 Ti 12GB and NVIDIA 4070 Ti 12GB

Token Speed Comparison: Llama3 8B Model

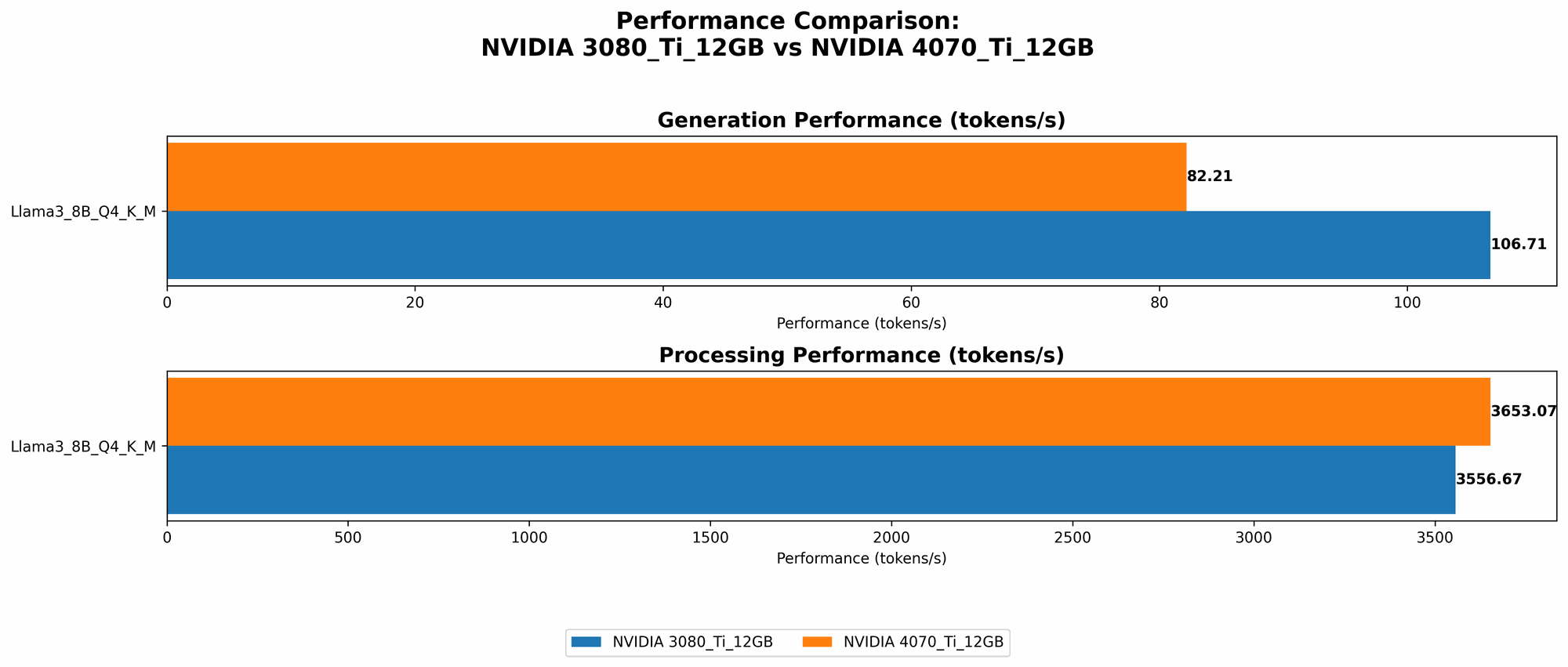

Let's kick things off with a familiar face: the Llama3 8B model. This model has become a popular choice for running LLMs locally, striking a balance between performance and resource requirements. We can quantify performance in terms of tokens per second, which indicates how many tokens a GPU can process during a given time frame.

Here’s what we see in our benchmark data:

| GPU | Llama3 8B - Q4_K_M - Generation (Tokens/second) |
|---|---|
| NVIDIA 3080 Ti 12GB | 106.71 |
| NVIDIA 4070 Ti 12GB | 82.21 |

Analysis: The NVIDIA 3080 Ti 12GB emerges as the clear winner for the Llama3 8B model, generating about 30% more tokens per second than the 4070 Ti 12GB (106.71 vs. 82.21).

How this relates to your work: If you're working with the Llama3 8B model, the 3080 Ti 12GB provides a noticeable advantage in generation speed, which translates directly into shorter waits for each response.
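
To make the gap concrete, here is a minimal sketch that turns the generation figures from the table into wait times. The 500-token reply length is a hypothetical value chosen for illustration, and the calculation assumes the benchmark rate holds steady for the whole reply.

```python
# Generation throughput from the table above (tokens/second).
GENERATION_TPS = {
    "NVIDIA 3080 Ti 12GB": 106.71,
    "NVIDIA 4070 Ti 12GB": 82.21,
}


def seconds_to_generate(num_tokens: int, tokens_per_second: float) -> float:
    """Time to emit num_tokens at a steady generation rate."""
    return num_tokens / tokens_per_second


def relative_speedup(fast: float, slow: float) -> float:
    """Fractional advantage of `fast` over `slow` (0.25 means 25% faster)."""
    return fast / slow - 1.0


reply_tokens = 500  # hypothetical reply length, not part of the benchmark
for gpu, tps in GENERATION_TPS.items():
    wait = seconds_to_generate(reply_tokens, tps)
    print(f"{gpu}: {wait:.2f} s for {reply_tokens} tokens")

gain = relative_speedup(
    GENERATION_TPS["NVIDIA 3080 Ti 12GB"],
    GENERATION_TPS["NVIDIA 4070 Ti 12GB"],
)
print(f"3080 Ti 12GB advantage: {gain:.1%}")
```

Under these assumptions, a 500-token reply takes roughly 4.7 seconds on the 3080 Ti 12GB and roughly 6.1 seconds on the 4070 Ti 12GB.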

Processing Performance with Quantized Models

The benchmark data doesn't cover F16 precision, so this comparison is limited to models quantized with the Q4_K_M method. Quantization shrinks a model by storing its weights with fewer bits. Here we look at prompt processing: how quickly the GPU reads the input before it starts generating a reply.

Performance data for Q4_K_M prompt processing:

| GPU | Llama3 8B - Q4_K_M - Processing (Tokens/second) |
|---|---|
| NVIDIA 3080 Ti 12GB | 3556.67 |
| NVIDIA 4070 Ti 12GB | 3653.07 |

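Analysis: For prompt processing, the 4070 Ti 12GB marginally outperforms the 3080 Ti 12GB, by roughly 2.7%. Both benchmarks in this article use the same Q4_K_M quantization, so the difference is between the two phases of inference, not between quantized and unquantized models: the 4070 Ti 12GB has a slight edge when reading the prompt, while the 3080 Ti 12GB is clearly faster when generating the reply.

Practical Implications: If your workload is dominated by very long prompts and short outputs, the 4070 Ti 12GB could be a slightly better choice for quantized Llama3 8B models. For typical chat-style use, generation speed matters more.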
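
A rough way to weigh the two results against each other is to estimate the time for a whole request as prompt time plus generation time. The sketch below does that with the figures from both tables. The `GpuBenchmark` class and the prompt and output lengths are hypothetical, and the model ignores real-world effects such as batching, context length, and startup overhead.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GpuBenchmark:
    name: str
    prompt_tps: float      # prompt processing, tokens/second
    generation_tps: float  # token generation, tokens/second

    def request_seconds(self, prompt_tokens: int, output_tokens: int) -> float:
        """Simple estimate: time to read the prompt plus time to write the reply."""
        return (prompt_tokens / self.prompt_tps
                + output_tokens / self.generation_tps)


# Figures taken from the two benchmark tables in this article.
GPUS = [
    GpuBenchmark("NVIDIA 3080 Ti 12GB", prompt_tps=3556.67, generation_tps=106.71),
    GpuBenchmark("NVIDIA 4070 Ti 12GB", prompt_tps=3653.07, generation_tps=82.21),
]

prompt_tokens, output_tokens = 2000, 300  # hypothetical request shape
for gpu in GPUS:
    total = gpu.request_seconds(prompt_tokens, output_tokens)
    print(f"{gpu.name}: {total:.2f} s")
```

With these example lengths the 3080 Ti 12GB still finishes first, because generation dominates the total. By this simple model, the 4070 Ti 12GB only pulls ahead when the prompt is hundreds of times longer than the output.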

Missing Data: Llama3 70B and Beyond

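Unfortunately, our benchmark data doesn't include information about the 4070 Ti 12GB's performance with larger models like Llama3 70B. Memory is the likely reason: even at roughly 4 bits per weight, a 70B model needs about 35 GB for its weights alone, well beyond the 12GB on either card. The 3080 Ti 12GB is subject to the same limit, so it can only run such models with part of the model held outside VRAM, at a large cost in speed.

What this means for you: If you intend to run models much larger than Llama3 8B, you’ll need to consider alternative strategies, such as model partitioning (splitting the model across several GPUs, or between GPU and system memory) or using cloud-based services with more powerful hardware.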
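
A back-of-the-envelope check shows why 12GB is the limiting factor. This sketch assumes round parameter counts (8 and 70 billion) and a nominal 4 bits per weight for the quantized case. Real Q4_K_M files are somewhat larger than the nominal figure, and the KV cache and activations need additional memory on top of the weights.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


VRAM_GB = 12  # both cards in this comparison

# Round parameter counts and nominal bit widths: assumptions for illustration.
models = [("Llama3 8B", 8e9), ("Llama3 70B", 70e9)]
formats = [("FP16", 16), ("4-bit (nominal)", 4)]

for model_name, params in models:
    for format_name, bits in formats:
        size = weight_memory_gb(params, bits)
        verdict = "fits" if size < VRAM_GB else "does not fit"
        print(f"{model_name} at {format_name}: ~{size:.0f} GB, {verdict} in {VRAM_GB} GB")
```

Only the 4-bit 8B model comes in under 12GB in this estimate, which matches the benchmark coverage in this article.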

Strengths and Weaknesses: A Detailed Comparison

NVIDIA 3080 Ti 12GB: The Powerhouse

Strengths:

- Higher Performance: While its performance with larger models might be limited, the 3080 Ti 12GB excels with models like Llama3 8B. It delivers a noticeably faster token generation speed, making it a strong contender for tasks requiring rapid response times.

- Solid Memory Capacity: The 12GB of GDDR6X memory on the 3080 Ti 12GB provides a decent amount of memory for running moderately sized models.

Weaknesses:

- Potential Memory Constraints: While sufficient for Llama3 8B, the 12GB memory might be a bottleneck for running larger models like Llama3 70B. This could result in performance degradation or memory-related errors.

- Higher Power Consumption: The 3080 Ti 12GB has a rated board power of 350 W, which can lead to increased electricity bills and asks more of your power supply and cooling.

NVIDIA 4070 Ti 12GB: The Value Champion

Strengths:

- Improved Efficiency: The 4070 Ti 12GB offers a balance of performance and power efficiency. Although it is slower than the 3080 Ti 12GB at token generation, its rated board power is lower (285 W versus 350 W), which can make it cheaper to run.

- Slightly Faster Prompt Processing: In our benchmarks, the 4070 Ti 12GB was slightly faster (about 2.7%) at processing prompts for the quantized Llama3 8B model. This matters most for workloads with long prompts and short outputs.

Weaknesses:

- Lower Performance on Llama3 8B: Compared to the 3080 Ti 12GB, the 4070 Ti 12GB falls behind in terms of token generation speed for the Llama3 8B model.

- Limited Memory Capacity: The 12GB of GDDR6X memory on the 4070 Ti 12GB might not be enough for running large models effectively.

User Recommendations: Choosing the Right GPU

For Llama3 8B and Below:

- If performance reigns supreme: The NVIDIA 3080 Ti 12GB is your best bet. It generated about 30% more tokens per second on the Llama3 8B model in our benchmark.

- If efficiency is key: The NVIDIA 4070 Ti 12GB provides a more cost-effective solution with lower power consumption.

For Llama3 70B and Above:

- Consider alternatives: Both the 3080 Ti 12GB and the 4070 Ti 12GB will likely struggle with models this large. You might want to consider model partitioning, using a GPU with more memory (like an RTX 4090 24GB), or exploring cloud-based solutions.

Understanding Key Concepts for LLM Performance

FP16 vs. Quantization: A Simplified Analogy

Imagine you're trying to describe a color to someone. FP16 is like a large palette: each weight is stored in 16 bits, which allows 65,536 distinct bit patterns, so fine nuances are preserved. Quantization swaps that for a much smaller palette (a 4-bit value can take only 16 levels), so each color is rounded to the nearest available shade. You lose some nuance, but the description becomes far more compact.

- FP16 (Float16): Uses 16 bits to represent numbers, offering a balance between accuracy and performance.

- Quantization: Reduces the number of bits used to represent weights and activations, leading to smaller model sizes and potential performance improvements.
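
To illustrate the idea, here is a minimal sketch of symmetric 4-bit quantization applied to one small block of weights. It is a simplified teaching example, not the actual Q4_K_M scheme, and the weight values are made up.

```python
def quantize_block(weights: list[float], bits: int = 4) -> tuple[list[int], float]:
    """Symmetric absmax quantization of one block of weights."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit signed integers
    absmax = max(abs(w) for w in weights)
    scale = absmax / qmax if absmax > 0 else 1.0
    # Round each weight to the nearest integer level and clamp to the valid range.
    quantized = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize_block(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate weights from integer levels and the shared scale."""
    return [q * scale for q in quantized]


block = [0.12, -0.53, 0.07, 0.91, -0.33, 0.00, 0.48, -0.77]  # made-up weights
q, scale = quantize_block(block)
restored = dequantize_block(q, scale)
max_error = max(abs(a - b) for a, b in zip(block, restored))

print("integer levels:", q)
print(f"scale={scale:.4f}, max abs error={max_error:.4f}")
```

Each weight is stored as a small integer plus one shared scale per block, so the rounding error per weight is at most half the scale. That is the trade being made: less memory in exchange for a bounded loss of precision.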

Tokens: The Building Blocks of Language

Tokens are the units of language that LLMs process. Think of them as the individual words or parts of words that make up a sentence. The more tokens a GPU can process per second, the faster the model can generate text or understand your requests.

FAQs: Addressing Your Questions

Q1: Are there other GPUs that could be better for LLMs?

A: Yes, there are other powerful GPUs available, such as the NVIDIA RTX 4090 24GB. This card has twice the memory capacity and higher performance but comes at a higher cost.

Q2: Is it worth running LLMs locally?

A: It depends on your needs and resources. If you need fast, private access and control over your data, local deployment can be beneficial. However, if you're working with massive models and require advanced capabilities, cloud-based services might be a better fit.

Q3: Can I run LLMs on a CPU?

A: While possible, CPUs are generally much slower than GPUs for running LLMs, because GPUs offer far more parallel processing capability and memory bandwidth. Expect noticeably fewer tokens per second on a CPU.
