Which is Better for Running LLMs locally: NVIDIA 3080 10GB or NVIDIA RTX A6000 48GB? Ultimate Benchmark Analysis

Introduction

The field of large language models (LLMs) is exploding, with new models like Llama 3 and its variations getting released all the time. But the question remains: how do you run these powerful LLMs on your own computer? While cloud services like Google Colab and Amazon SageMaker offer easy access, running LLMs locally allows for greater control, privacy, and potentially better performance.

In this article, we'll compare two popular GPUs head to head, the NVIDIA 3080 10GB and the NVIDIA RTX A6000 48GB, to see which one reigns supreme for running LLMs locally. We'll explore their performance in token processing and generation for different models and configurations, highlighting their strengths and weaknesses. Get ready for some serious tech talk with a dash of humor!

Performance Breakdown: Token Processing and Generation

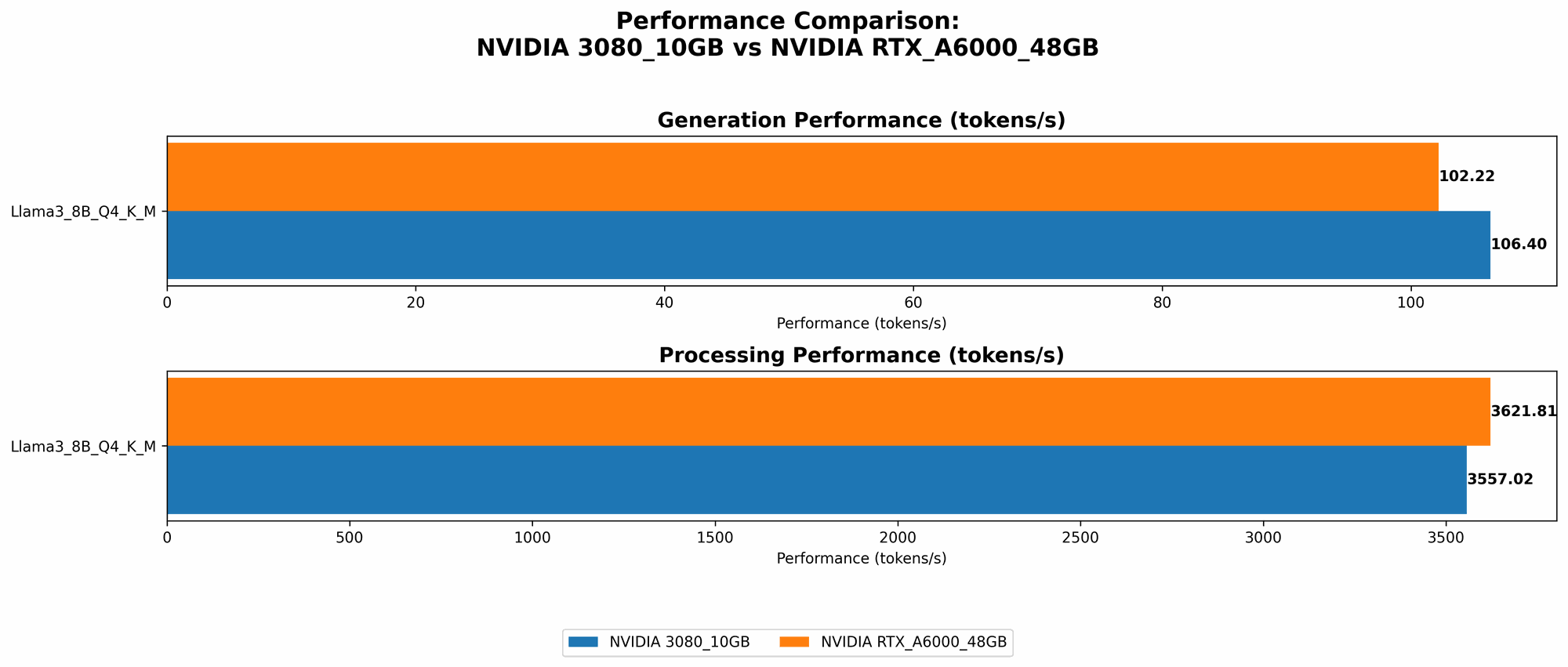

NVIDIA 3080 10GB vs. NVIDIA RTX A6000 48GB: Llama 3 8B

Let's start with the 8-billion-parameter Llama 3 model, a good choice for experimenting with LLMs without requiring a ton of computational resources. We'll analyze the performance of both GPUs in two key areas: token processing (how quickly the GPU reads the input prompt) and token generation (how quickly it produces new output tokens).

Token Processing:

- NVIDIA 3080 10GB: Achieves a processing speed of 3557.02 tokens per second with the Llama 3 8B model in Q4_K_M, a 4-bit "K-quant" format from llama.cpp (the M marks the medium-size variant). This is impressive performance!

- NVIDIA RTX A6000 48GB: Slightly faster than the 3080, it processes 3621.81 tokens per second when running the Llama 3 8B model in the same Q4_K_M format.

Token Generation:

- NVIDIA 3080 10GB: Delivers a solid generation speed of 106.4 tokens per second with the Llama 3 8B model in Q4_K_M.

- NVIDIA RTX A6000 48GB: Just behind the 3080, the RTX A6000 generates 102.22 tokens per second with the Llama 3 8B model in Q4_K_M.

Overall, both GPUs offer competitive performance with the Llama 3 8B model. The RTX A6000 has a minor edge in token processing (about 2% faster), while the 3080 is about 4% faster in token generation. Either difference is negligible in practice.
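To put the 8B numbers side by side, here is a minimal Python sketch that computes the relative gap for each task. The figures are the ones quoted in this section; the dictionary layout and the helper name `percent_faster` are just illustrative choices.

```python
# Benchmark figures quoted in this article (tokens per second, Llama 3 8B, Q4).
results = {
    "token_processing": {"3080_10GB": 3557.02, "A6000_48GB": 3621.81},
    "token_generation": {"3080_10GB": 106.4, "A6000_48GB": 102.22},
}


def percent_faster(a: float, b: float) -> float:
    """Return how much faster speed `a` is than speed `b`, in percent."""
    return (a - b) / b * 100.0


for task, speeds in results.items():
    winner = max(speeds, key=speeds.get)  # GPU with the higher tokens/s
    loser = min(speeds, key=speeds.get)   # GPU with the lower tokens/s
    gap = percent_faster(speeds[winner], speeds[loser])
    print(f"{task}: {winner} leads {loser} by {gap:.1f}%")
```

Both gaps come out in the low single digits, which is why the two cards are effectively tied on the 8B model.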

NVIDIA 3080 10GB vs. NVIDIA RTX A6000 48GB: Llama 3 70B

Now let's crank things up a notch and explore the performance differences for the significantly larger Llama 3 70B model. This behemoth requires more resources, so we need to see how the two GPUs handle the extra demands.

Token Processing:

- NVIDIA 3080 10GB: Data for the 3080 with the Llama 3 70B model is not available. The most likely reason is memory: at 4-bit quantization the 70B weights alone take roughly 40GB, about four times the card's 10GB of VRAM, so the model cannot be loaded fully onto the GPU.

- NVIDIA RTX A6000 48GB: The RTX A6000 demonstrates its muscle by processing 466.82 tokens per second with the Llama 3 70B model in Q4_K_M, roughly an eighth of its 8B throughput. Its 48GB of VRAM is what makes running this model on a single card possible.

Token Generation:

- NVIDIA 3080 10GB: Unfortunately, no generation data is available for the 3080 with the Llama 3 70B model either, so a direct comparison is not possible.

- NVIDIA RTX A6000 48GB: In terms of token generation, the RTX A6000 delivers a speed of 14.58 tokens per second with the Llama 3 70B model in Q4_K_M. That is about 7 times slower than with the 8B model, as expected: the 70B model has nearly nine times as many parameters, so far more weight data has to be read for every generated token.

For the Llama 3 70B model, the RTX A6000 clearly takes the lead: it runs the model, while the 3080 has no results to show and, with only 10GB of VRAM, is outmatched on memory alone.
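A rough memory estimate makes the 70B result easier to see. The sketch below multiplies parameter count by bits per weight and checks the total against each card's VRAM. The bits-per-weight value for Q4_K_M (about 4.85) and the 1.5GB allowance for the KV cache and buffers are assumptions chosen for illustration; real model files and runtime overhead vary.

```python
# Assumed average bits per weight. The Q4_K_M value is an approximation;
# actual GGUF files differ slightly depending on the model's tensor mix.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}


def weight_gb(params_billions: float, fmt: str) -> float:
    """Approximate size of the weights alone, in GB (10^9 bytes)."""
    return params_billions * BITS_PER_WEIGHT[fmt] / 8.0


def fits(params_billions: float, fmt: str, vram_gb: float,
         overhead_gb: float = 1.5) -> bool:
    """Rough check: weights plus an assumed allowance for KV cache and buffers."""
    return weight_gb(params_billions, fmt) + overhead_gb <= vram_gb


gpus = {"3080 10GB": 10.0, "RTX A6000 48GB": 48.0}
for params in (8, 70):
    size = weight_gb(params, "Q4_K_M")
    for name, vram in gpus.items():
        verdict = "fits" if fits(params, "Q4_K_M", vram) else "does not fit"
        print(f"Llama 3 {params}B Q4_K_M (~{size:.1f} GB) {verdict} on {name}")
```

Under these assumptions the 8B model fits on both cards, while the 70B model fits only on the RTX A6000.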

NVIDIA 3080 10GB vs. NVIDIA RTX A6000 48GB: Deeper Dive into Quantization

To understand the performance differences better, let's explore the impact of quantization, a technique that reduces model size and speeds up inference. Think of it like shrinking a giant building to fit in a smaller space but still maintaining its essential features.
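To make the idea concrete, here is a toy Python sketch of 4-bit quantization: a block of weights is stored as small integers plus one shared scale factor. This is a deliberately simplified scheme for illustration, not the K-quant format llama.cpp actually uses, and the function names and sample weights are made up.

```python
def quantize_block(values, bits=4):
    """Map floats to signed integers that share a single scale factor."""
    qmax = 2 ** (bits - 1) - 1                        # 7 for 4 bits
    scale = max(abs(v) for v in values) / qmax or 1.0  # avoid a zero scale
    # Round to the nearest step and clamp to the signed range [-8, 7].
    q = [max(-qmax - 1, min(qmax, round(v / scale))) for v in values]
    return q, scale


def dequantize_block(q, scale):
    """Recover approximate floats from the integers and the scale."""
    return [x * scale for x in q]


weights = [0.12, -0.50, 0.33, 0.07, -0.21, 0.44, -0.02, 0.29]
q, scale = quantize_block(weights)
restored = dequantize_block(q, scale)
worst = max(abs(a - b) for a, b in zip(weights, restored))
print("4-bit codes:", q)
print(f"scale: {scale:.4f}, worst-case error: {worst:.4f}")
```

Each weight now needs 4 bits instead of 16, at the cost of a rounding error of at most half a step (`scale / 2`).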

NVIDIA 3080 10GB: Quantization Performance

- Llama 3 8B: The 3080 shows a large gap between the Q4 (4-bit quantization) and F16 (16-bit floating point) configurations. Memory is part of the explanation: F16 weights for an 8B model take about 16GB, more than the card's 10GB of VRAM, whereas the Q4 weights fit comfortably. On this card the quantized model is the practical choice.

- Llama 3 70B: As mentioned earlier, we lack data for the 3080 with the 70B model, so we can't compare the impact of quantization in this case.

NVIDIA RTX A6000 48GB: Quantization Performance

- Llama 3 8B: The RTX A6000 also exhibits a significant performance boost when using Q4 quantization compared to F16 for the Llama 3 8B model. This is consistent with the 3080, where quantization leads to better inference speeds.

- Llama 3 70B: Only Q4 figures are quoted above for the 70B model; no F16 result is given. At F16 the weights alone would take roughly 140GB (70 billion parameters at 2 bytes each), well beyond 48GB, so a Q4-versus-F16 comparison on a single RTX A6000 is not practical.

Overall, quantization clearly improves performance for both GPUs with the smaller 8B model. For the 70B model, quantization is less an optimization than a requirement: the RTX A6000's 48GB of VRAM can hold the model at 4-bit precision, but not at F16.
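One common rule of thumb for why smaller weights generate faster: if every generated token requires reading all the weights once, memory bandwidth divided by weight size gives a ceiling on tokens per second. The sketch below applies that simplified model to the RTX A6000 figures quoted earlier. The bandwidth values come from NVIDIA's published specifications, and the weight sizes are approximations; both are assumptions here, not measurements from these benchmarks.

```python
# Peak memory bandwidth in GB/s, from NVIDIA's published specs (assumed here).
BANDWIDTH_GBPS = {"3080_10GB": 760.0, "A6000_48GB": 768.0}


def generation_ceiling(weight_gb: float, gpu: str) -> float:
    """Upper bound on tokens/s if each token reads all weights exactly once."""
    return BANDWIDTH_GBPS[gpu] / weight_gb


# (label, assumed weight size in GB, measured tokens/s quoted in this article)
cases = [
    ("Llama 3 8B Q4", 4.85, 102.22),
    ("Llama 3 70B Q4", 42.4, 14.58),
]
for label, size_gb, measured in cases:
    ceiling = generation_ceiling(size_gb, "A6000_48GB")
    print(f"{label}: ceiling ~{ceiling:.0f} tok/s, measured {measured} tok/s")
```

The measured speeds sit below these ceilings, as you would expect from a rough upper bound, and the bound shrinks in proportion to model size.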

Comparison Analysis: Choosing the Right GPU

NVIDIA 3080 10GB: When to Choose

- Budget-Conscious: If you're on a tighter budget, the 3080 10GB is a fantastic value for running smaller LLMs like the Llama 3 8B.

- Gaming and Other Tasks: The 3080 10GB is still an excellent choice for gaming and other GPU-intensive tasks, making it a versatile option.

- Experimenting with Smaller Models: For experimenting with smaller LLMs, or for light fine-tuning of small pre-trained models within the 10GB memory limit, the 3080 10GB can be a great starting point.

NVIDIA RTX A6000 48GB: When to Choose

- Larger Models: If you're working with larger LLMs like the Llama 3 70B, the RTX A6000 is the clear winner, mainly thanks to its 48GB of memory, which lets the quantized model fit on a single card.

- Professional Workloads: For professional workflows requiring significant processing power and memory, the RTX A6000 is a powerful and reliable choice.

- Training: If you fine-tune or train models yourself, the RTX A6000 is the stronger option, since its 48GB of memory leaves far more room for weights, gradients, and optimizer state.

Conclusion: The Verdict

Choosing between the NVIDIA 3080 10GB and the NVIDIA RTX A6000 48GB for running LLMs locally depends heavily on your specific needs and budget. If you're focused on smaller models and value affordability, the 3080 10GB is an excellent choice. However, if you're tackling larger models, require more memory capacity, or are engaged in professional workloads, the RTX A6000 is the clear winner.

Remember, the world of LLMs is constantly evolving, so these benchmarks are a snapshot in time. Keep up with the latest advancements to make the best decisions for your local LLM setup.

FAQ

What is quantization?

Quantization is a technique that stores a neural network's weights at lower numerical precision (for example, 4 bits instead of 16), making the model smaller and usually faster to run. Imagine taking a high-resolution photograph and converting it to a lower-resolution version: you lose some detail, but the overall image is still recognizable and the file is smaller.

Can I run other LLMs besides Llama 3?

These benchmarks are specific to Llama 3, but the same approach works for other LLMs. Expect performance to vary with the model's parameter count, its quantization format, and the context length you use.
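If you want to measure another model yourself, a small timing harness is enough to get a tokens-per-second figure. The Python sketch below is one way to do it, not the method used to produce the numbers in this article. The `generate` adapter, `fake_backend`, and the commented llama-cpp-python adapter are illustrative assumptions, and the model path is a placeholder.

```python
import time
from typing import Callable


def tokens_per_second(
    generate: Callable[[str, int], int],
    prompt: str,
    max_tokens: int = 128,
    clock: Callable[[], float] = time.perf_counter,
) -> float:
    """Time one call to `generate` and return generated tokens per second.

    `generate` is a hypothetical adapter: it takes (prompt, max_tokens) and
    returns the number of tokens the model actually produced.
    """
    start = clock()
    produced = generate(prompt, max_tokens)
    elapsed = clock() - start
    if elapsed <= 0:
        raise ValueError("elapsed time must be positive")
    return produced / elapsed


def fake_backend(prompt: str, max_tokens: int) -> int:
    """Stand-in model so the sketch runs without a GPU or a model file."""
    time.sleep(0.05)
    return max_tokens


# With llama-cpp-python installed, an adapter might look like this
# (an untested assumption; supply your own model path):
#   from llama_cpp import Llama
#   llm = Llama(model_path="path/to/model.gguf", n_gpu_layers=-1)
#   def llama_backend(prompt, max_tokens):
#       out = llm(prompt, max_tokens=max_tokens)
#       return out["usage"]["completion_tokens"]

if __name__ == "__main__":
    rate = tokens_per_second(fake_backend, "Hello", max_tokens=64)
    print(f"{rate:.1f} tokens/s (fake backend)")
```

Note that this times the whole call, so prompt processing is included in the result. Keep the prompt short, or time the two phases separately, if you want a number comparable to the generation speeds above.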

What are the major differences between the 3080 10GB and the RTX A6000 48GB?

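The RTX A6000 is a professional-grade GPU with far more memory than the 3080 10GB (48GB vs 10GB). On the Llama 3 8B benchmarks above the two cards perform almost identically, so the practical difference is capacity: the RTX A6000 can hold large models such as Llama 3 70B that do not fit on the 3080.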

How do I set up my LLM on my computer?

Setting up an LLM locally requires installing the necessary libraries and tools (like llama.cpp) and configuring the environment. There are numerous tutorials and resources available online to guide you through the process.

Keywords:

LLM, Llama 3, NVIDIA 3080 10GB, NVIDIA RTX A6000 48GB, GPU, Token processing, Token generation, Quantization, Local inference, AI, Machine learning, Deep learning, GPU benchmark, Performance comparison