Which is Better for Running LLMs locally: NVIDIA 3080 10GB or NVIDIA RTX 4000 Ada 20GB x4? Ultimate Benchmark Analysis

Introduction

The ability to run Large Language Models (LLMs) locally unlocks exciting possibilities for developers and researchers. Imagine having the power of ChatGPT, Bard, or other advanced AI models right on your machine, ready to respond to your queries and generate creative content on demand. But choosing the right hardware for this task can be challenging, considering the vast range of GPUs and their specifications.

This article compares two GPU setups for running LLMs locally: a single NVIDIA 3080 10GB and the NVIDIA RTX 4000 Ada 20GB x4 (four cards in a multi-GPU setup). We'll analyze their token generation speed, their prompt processing speed, and their suitability for two LLM sizes (Llama3 8B and 70B). Our benchmark analysis will equip you with the knowledge needed to make the best decision for your LLM endeavors.

Let's dive into the exciting world of local LLM deployment!

Comparison of NVIDIA 3080 10GB and NVIDIA RTX 4000 Ada 20GB x4 for LLM Inference

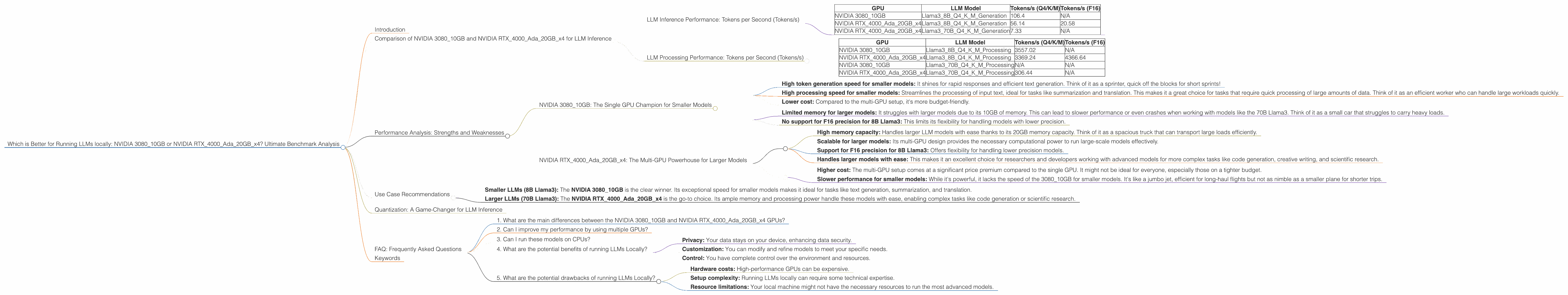

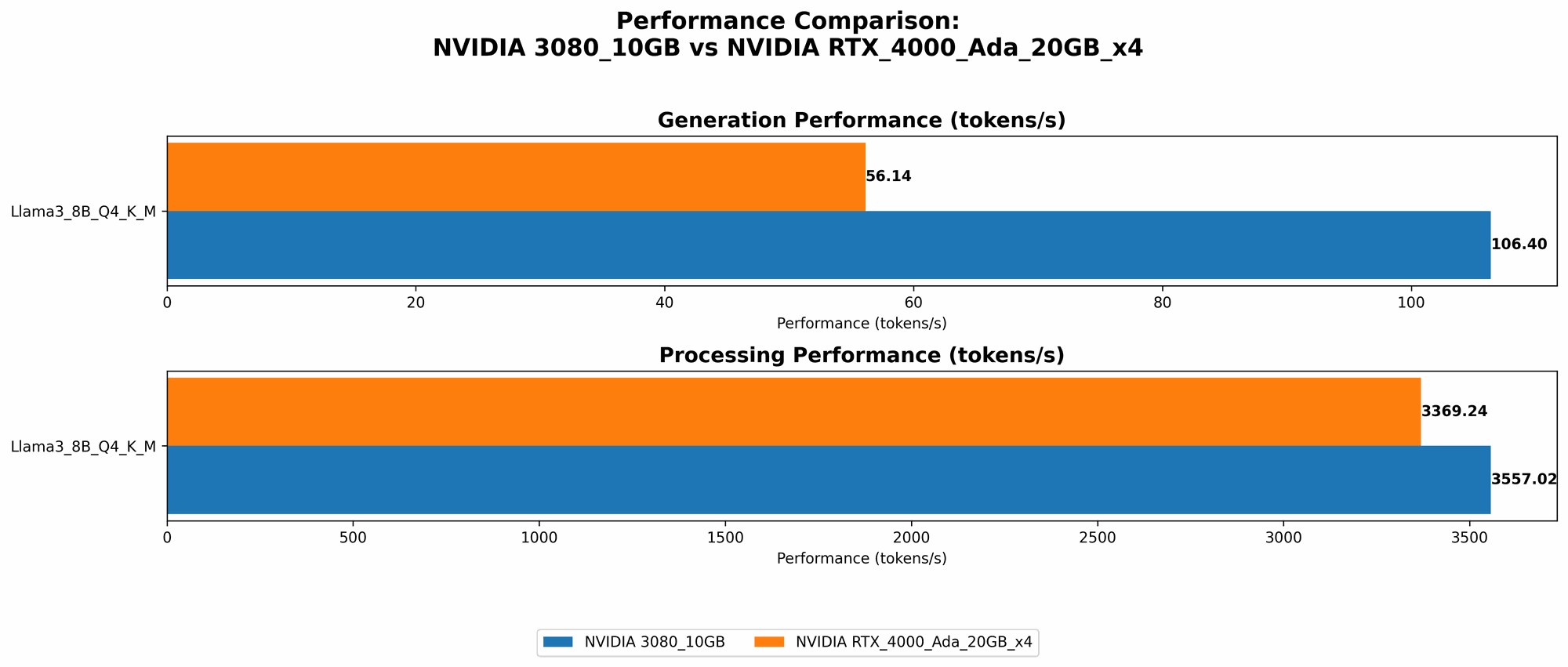

LLM Inference Performance: Tokens per Second (Tokens/s)

| GPU | LLM Model | Generation tokens/s (Q4_K_M) | Generation tokens/s (F16) |
|---|---|---|---|
| NVIDIA 3080 10GB | Llama3 8B | 106.4 | N/A |
| NVIDIA RTX 4000 Ada 20GB x4 | Llama3 8B | 56.14 | 20.58 |
| NVIDIA RTX 4000 Ada 20GB x4 | Llama3 70B | 7.33 | N/A |

Observations:

- The NVIDIA 3080 10GB is significantly faster at generating tokens with the 8B Llama3 model when using quantized weights (Q4_K_M). At 106.4 tokens/s against 56.14, it delivers almost double the performance of the multi-GPU setup.

- The NVIDIA RTX 4000 Ada 20GB x4 is the only setup with an F16 result for the 8B Llama3 model (20.58 tokens/s). That is well below its own Q4_K_M figure, so the higher-precision weights come at a clear cost in generation speed.

- For the larger 70B Llama3 model, the NVIDIA RTX 4000 Ada 20GB x4 is the only option with data, at 7.33 tokens/s. There is no 3080 10GB result at this model size, so no direct comparison is possible.
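
The ratios behind these observations can be checked with a few lines of Python. This is a minimal sketch that copies the generation figures from the table above; the dictionary layout and the `speedup` helper are illustrative names, not part of any benchmark tool.

```python
# Generation throughput in tokens/s, copied from the table above.
# Configurations without a measurement (N/A) are simply left out.
generation_tps = {
    ("3080 10GB", "Llama3 8B", "Q4_K_M"): 106.4,
    ("RTX 4000 Ada 20GB x4", "Llama3 8B", "Q4_K_M"): 56.14,
    ("RTX 4000 Ada 20GB x4", "Llama3 8B", "F16"): 20.58,
    ("RTX 4000 Ada 20GB x4", "Llama3 70B", "Q4_K_M"): 7.33,
}


def speedup(fast_key, slow_key, table=generation_tps):
    """Return how many times faster fast_key is than slow_key."""
    return table[fast_key] / table[slow_key]


# Single 3080 vs. the four-GPU setup on the same quantized 8B model.
single_vs_multi = speedup(
    ("3080 10GB", "Llama3 8B", "Q4_K_M"),
    ("RTX 4000 Ada 20GB x4", "Llama3 8B", "Q4_K_M"),
)

# Q4_K_M vs. F16 on the four-GPU setup, same 8B model.
q4_vs_f16 = speedup(
    ("RTX 4000 Ada 20GB x4", "Llama3 8B", "Q4_K_M"),
    ("RTX 4000 Ada 20GB x4", "Llama3 8B", "F16"),
)

print(f"3080 vs x4 (8B, Q4_K_M): {single_vs_multi:.2f}x")
print(f"Q4_K_M vs F16 (x4, 8B):  {q4_vs_f16:.2f}x")
```

Only pairs that both have a measurement can be compared this way, which is why the 70B row yields no ratio against the 3080.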

LLM Processing Performance: Tokens per Second (Tokens/s)

| GPU | LLM Model | Processing tokens/s (Q4_K_M) | Processing tokens/s (F16) |
|---|---|---|---|
| NVIDIA 3080 10GB | Llama3 8B | 3557.02 | N/A |
| NVIDIA RTX 4000 Ada 20GB x4 | Llama3 8B | 3369.24 | 4366.64 |
| NVIDIA 3080 10GB | Llama3 70B | N/A | N/A |
| NVIDIA RTX 4000 Ada 20GB x4 | Llama3 70B | 306.44 | N/A |

Observations:

- The NVIDIA 3080 10GB delivers slightly better results on the 8B Llama3 model when using quantized weights (Q4_K_M): 3557.02 tokens/s against 3369.24, a lead of roughly 6%.

- The NVIDIA RTX 4000 Ada 20GB x4 reaches 4366.64 tokens/s on the 8B Llama3 model at F16 precision. That is faster than its own Q4_K_M result and faster than the 3080's Q4_K_M result, though there is no F16 figure for the 3080 to compare against directly.

- The NVIDIA RTX 4000 Ada 20GB x4 is also the only setup with a processing result for the 70B Llama3 model (306.44 tokens/s). The 3080 10GB has no result at this model size.
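
To see how processing and generation speed combine, here is a rough latency estimate for a single request. It is a sketch under simplifying assumptions: throughput is treated as constant regardless of prompt length, and the workload of 2,000 prompt tokens and 200 output tokens is hypothetical. The function name `estimate_latency_s` is ours.

```python
def estimate_latency_s(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Rough request time: read the prompt, then generate the reply.

    Assumes both rates stay constant, which real runs only approximate.
    """
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Hypothetical workload, chosen only for illustration.
PROMPT_TOKENS = 2000
OUTPUT_TOKENS = 200

# (processing tokens/s, generation tokens/s) taken from the two tables.
setups = {
    "3080 10GB, 8B Q4_K_M": (3557.02, 106.4),
    "RTX 4000 Ada 20GB x4, 8B Q4_K_M": (3369.24, 56.14),
    "RTX 4000 Ada 20GB x4, 70B Q4_K_M": (306.44, 7.33),
}

latencies = {
    name: estimate_latency_s(PROMPT_TOKENS, OUTPUT_TOKENS, pp, tg)
    for name, (pp, tg) in setups.items()
}

for name, seconds in latencies.items():
    print(f"{name}: about {seconds:.1f} s")
```

For a workload like this one, generation time dominates the total, so the gap in generation speed matters more than the small gap in processing speed.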

Performance Analysis: Strengths and Weaknesses

NVIDIA 3080_10GB: The Single GPU Champion for Smaller Models

The NVIDIA 3080 10GB shines for running smaller LLM models, particularly the 8B Llama3. With quantized weights it reaches 106.4 tokens/s for generation and 3557.02 tokens/s for processing, the best Q4_K_M results in our data. This makes it a cost-effective choice for developers and researchers working with smaller models on tasks such as text generation, summarization, and translation.

Strengths:

- High token generation speed for smaller models: It shines for rapid responses and efficient text generation. Think of it as a sprinter, quick off the blocks for short sprints!

- High processing speed for smaller models: It reads input text quickly, which suits tasks with long inputs such as summarization and translation. Think of it as an efficient worker who can handle large workloads quickly.

- Lower cost: Compared to the multi-GPU setup, it's more budget-friendly.

Weaknesses:

- Limited memory for larger models: Its 10GB of memory is too small for models like the 70B Llama3, for which our data has no 3080 result at all. Models that do not fit either run much slower or fail to load. Think of it as a small car that struggles to carry heavy loads.

- No F16 result for 8B Llama3: At 16 bits per weight, an 8-billion-parameter model needs about 16GB for the weights alone, more than this card's 10GB, which would explain the missing result. In practice this limits the card to quantized versions of models at this size.
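
The memory arithmetic behind these weaknesses can be sketched as follows. The estimate covers the weights only and ignores the KV cache and runtime overhead, so passing the check is necessary but not sufficient. The figure of 4.8 bits per weight for Q4_K_M is an assumed average used for illustration, and the parameter counts are the nominal 8 and 70 billion.

```python
def weight_size_gb(params_billion, bits_per_weight):
    """Approximate size of the model weights alone, in GB (1 GB = 10^9 bytes)."""
    return params_billion * bits_per_weight / 8


# Average bits per weight. F16 is exactly 16; the Q4_K_M value is an
# assumption for illustration, and real model files vary.
BITS = {"F16": 16.0, "Q4_K_M": 4.8}

# GPU memory per setup, from the article: one 10GB card, four 20GB cards.
VRAM_GB = {"3080 10GB": 10, "RTX 4000 Ada 20GB x4": 4 * 20}


def weights_fit(params_billion, quant, setup):
    """Necessary (not sufficient) check: do the weights alone fit in GPU memory?"""
    return weight_size_gb(params_billion, BITS[quant]) < VRAM_GB[setup]


for params, quant in [(8, "Q4_K_M"), (8, "F16"), (70, "Q4_K_M")]:
    size = weight_size_gb(params, BITS[quant])
    for setup in VRAM_GB:
        verdict = "fits" if weights_fit(params, quant, setup) else "does not fit"
        print(f"{params}B {quant} (~{size:.1f} GB) {verdict} on {setup}")
```

Under these assumptions, the combinations that do not fit on the 3080 10GB are the same ones marked N/A for it in the benchmark tables.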

NVIDIA RTX 4000 Ada 20GB x4: The Multi-GPU Powerhouse for Larger Models

The NVIDIA RTX 4000 Ada 20GB x4 is a formidable option for handling larger LLMs, like the 70B Llama3. Its four 20GB cards provide 80GB of combined memory, enough to hold models that a single 10GB card cannot.

Strengths:

- High memory capacity: With 20GB per card and 80GB in total, it holds larger LLM models that a single card cannot. Think of it as a spacious truck that can transport large loads efficiently.

- Scalable for larger models: Its multi-GPU design provides the necessary computational power to run large-scale models effectively.

- F16 results for 8B Llama3: It has enough memory to run the 8B model at full 16-bit precision, which offers flexibility when quantization is not wanted.

- Suited to more demanding workloads: Access to 70B-class models makes it an excellent choice for researchers and developers working on more complex tasks like code generation, creative writing, and scientific research.

Weaknesses:

- Higher cost: The multi-GPU setup comes at a significant price premium compared to the single GPU. It might not be ideal for everyone, especially those on a tighter budget.

- Slower performance for smaller models: While it's powerful, it lacks the speed of the 3080_10GB for smaller models. It's like a jumbo jet, efficient for long-haul flights but not as nimble as a smaller plane for shorter trips.

Use Case Recommendations

Here's a breakdown of the best choices based on your specific use case:

- Smaller LLMs (8B Llama3): The NVIDIA 3080_10GB is the clear winner. Its exceptional speed for smaller models makes it ideal for tasks like text generation, summarization, and translation.

- Larger LLMs (70B Llama3): The NVIDIA RTX 4000 Ada 20GB x4 is the go-to choice, and the only setup with 70B results in our data. Its 80GB of combined memory holds these models, enabling complex tasks like code generation or scientific research.
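
The same recommendation can be derived mechanically from the measurements. The sketch below picks the setup with the highest Q4_K_M generation speed among those that have a result for a given model; the `recommend` helper and the data layout are illustrative, and the rule deliberately ignores cost.

```python
# Q4_K_M generation speed in tokens/s, per model and setup, from the tables.
# Setups with no measurement for a model are left out.
measured_generation_tps = {
    "Llama3 8B": {
        "NVIDIA 3080 10GB": 106.4,
        "NVIDIA RTX 4000 Ada 20GB x4": 56.14,
    },
    "Llama3 70B": {
        "NVIDIA RTX 4000 Ada 20GB x4": 7.33,
    },
}


def recommend(model):
    """Return the fastest measured setup for a model, or None if no data exists."""
    results = measured_generation_tps.get(model)
    if not results:
        return None
    return max(results, key=results.get)


for model in measured_generation_tps:
    print(f"{model}: {recommend(model)}")
```

A model with no measurements returns `None` rather than a guess, which mirrors how the N/A cells are treated in this article.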

Quantization: A Game-Changer for LLM Inference

Quantization is a technique that reduces the precision of LLM weights, lowering the memory footprint and often boosting inference speed. In our benchmark analysis, Q4_K_M quantization raised generation speed on the NVIDIA RTX 4000 Ada 20GB x4 from 20.58 to 56.14 tokens/s for the 8B Llama3 model, although prompt processing on that setup was faster at F16 (4366.64 vs. 3369.24 tokens/s). It's like squeezing data into a smaller container, resulting in more efficient use of resources.

Think of it like this: Imagine you have a library full of books. Quantization is like condensing those books into a smaller digital format, allowing you to store more books in the same space and access them more quickly.
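
To make the idea concrete, here is a toy 4-bit quantizer in plain Python. It is a deliberately simplified sketch: one scale per block and symmetric rounding. It is not the Q4_K_M scheme used in the benchmarks, and the function names and sample weights are made up for illustration.

```python
def quantize_block(values):
    """Symmetric 4-bit quantization of one block of floats.

    Returns (scale, codes), where each code is an integer in [-8, 7].
    """
    max_abs = max(abs(v) for v in values)
    # Map the largest magnitude to code 7; avoid dividing by zero.
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-8, min(7, round(v / scale))) for v in values]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from the stored scale and 4-bit codes."""
    return [scale * c for c in codes]


# Made-up weights standing in for one small block of a model.
weights = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.02, 0.64]

scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:   ", codes)
print("restored:", [round(r, 3) for r in restored])
print("largest rounding error:", round(max_error, 4))
```

Each weight now needs 4 bits instead of 16, plus one shared scale per block, at the price of a rounding error no larger than half the scale.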

FAQ: Frequently Asked Questions

1. What are the main differences between the NVIDIA 3080 10GB and NVIDIA RTX 4000 Ada 20GB x4 GPUs?

The NVIDIA 3080 10GB is a single GPU with a moderate amount of memory (10GB), while the NVIDIA RTX 4000 Ada 20GB x4 is a four-GPU setup with a significantly larger memory capacity (20GB per card, 80GB in total). The 3080 10GB is ideal for smaller LLMs, while the RTX 4000 Ada 20GB x4 is better suited for handling larger, more complex models.

2. Can I improve my performance by using multiple GPUs?

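It depends on the model. Multiple GPUs mainly help by pooling memory so that larger models fit: in our data, only the four-GPU setup has results for the 70B Llama3. For the 8B model, the single 3080 10GB generated tokens faster than the four-GPU setup, so more GPUs do not automatically mean more speed. Proper GPU selection and configuration remain crucial for achieving optimal results.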

3. Can I run these models on CPUs?

While it's possible to run LLMs on CPUs, it's generally much slower and less efficient than using GPUs. GPUs are designed for parallel processing, which makes them significantly faster for handling the massive computations involved in LLM inference.

4. What are the potential benefits of running LLMs locally?

- Privacy: Your data stays on your device, enhancing data security.

- Customization: You can modify and refine models to meet your specific needs.

- Control: You have complete control over the environment and resources.

5. What are the potential drawbacks of running LLMs locally?

- Hardware costs: High-performance GPUs can be expensive.

- Setup complexity: Running LLMs locally can require some technical expertise.

- Resource limitations: Your local machine might not have the necessary resources to run the most advanced models.

Keywords

LLM, Large Language Model, NVIDIA 3080 10GB, NVIDIA RTX 4000 Ada 20GB x4, GPU, token generation, processing speed, quantization, Llama3, 8B, 70B, inference, local deployment, performance, benchmark, comparison, AI, machine learning, deep learning, chatbots, text generation, summarization, translation, code generation, scientific research, data security, privacy, customization, control, resource limitations, hardware costs, setup complexity.