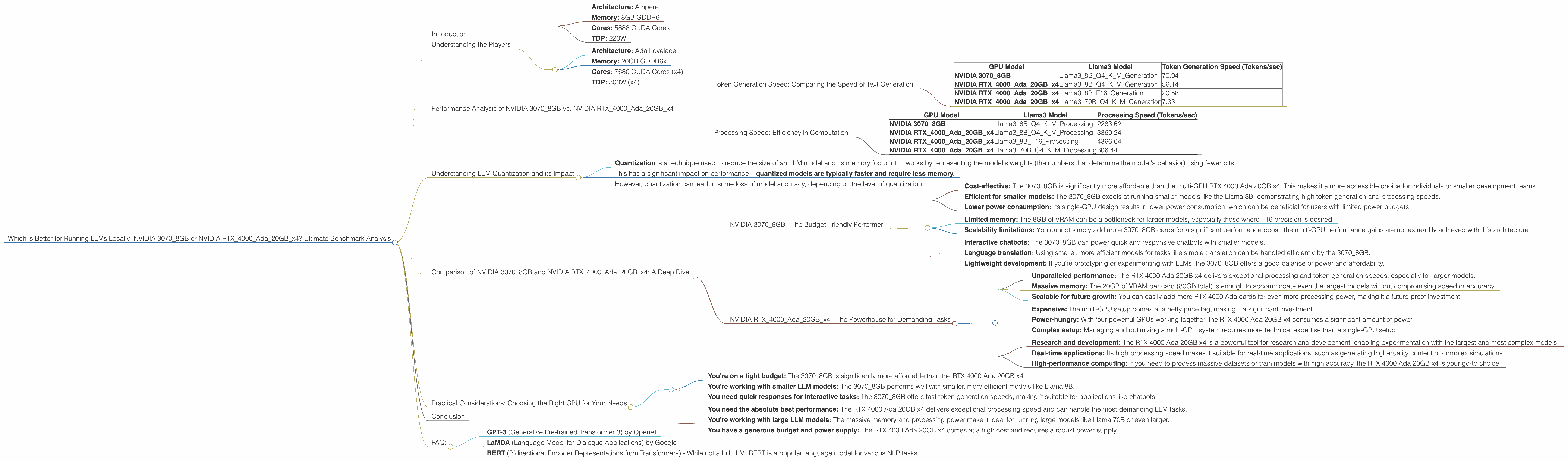

Which is Better for Running LLMs locally: NVIDIA 3070 8GB or NVIDIA RTX 4000 Ada 20GB x4? Ultimate Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is exploding, offering incredible capabilities for everything from generating creative text to translating languages. But running these powerful models locally can be resource-intensive, especially on older hardware.

Choosing the right hardware for local LLM inference is critical. In this article, we'll dive deep into comparing the performance of two GPU setups: a single NVIDIA GeForce RTX 3070 8GB and a four-card NVIDIA RTX 4000 Ada 20GB configuration (x4).

We'll analyze their speed, efficiency, and suitability for running different LLM models, all based on real-world benchmark data. Buckle up, because this is going to be a wild ride through the computational depths of LLMs!

Understanding the Players

Our gladiators in this GPU arena are the NVIDIA GeForce RTX 3070 8GB and the NVIDIA RTX 4000 Ada 20GB x4. Let's break down their key features:

NVIDIA GeForce RTX 3070 8GB:

- Architecture: Ampere

- Memory: 8GB GDDR6

- Cores: 5888 CUDA Cores

- TDP: 220W

NVIDIA RTX 4000 Ada 20GB x4:

- Architecture: Ada Lovelace

- Memory: 20GB GDDR6 per card (80GB across four cards)

- Cores: 6144 CUDA Cores per card (x4)

- TDP: 130W per card (x4); the SFF variant is rated at 70W

Important Note: The RTX 4000 Ada 20GB x4 is a multi-GPU setup using four RTX 4000 cards. This setup offers significantly more power and memory compared to the single-GPU 3070.

Performance Analysis of NVIDIA RTX 3070 8GB vs. NVIDIA RTX 4000 Ada 20GB x4

We'll be focusing on the performance of these GPUs with Llama 3 models, specifically the 8B and 70B variants. Our benchmark data will examine:

- Token Generation Speed: Measures how many new tokens (pieces of text) per second the GPU produces while writing a response

- Processing Speed: Measures how many prompt (input) tokens per second the GPU can work through before generation starts

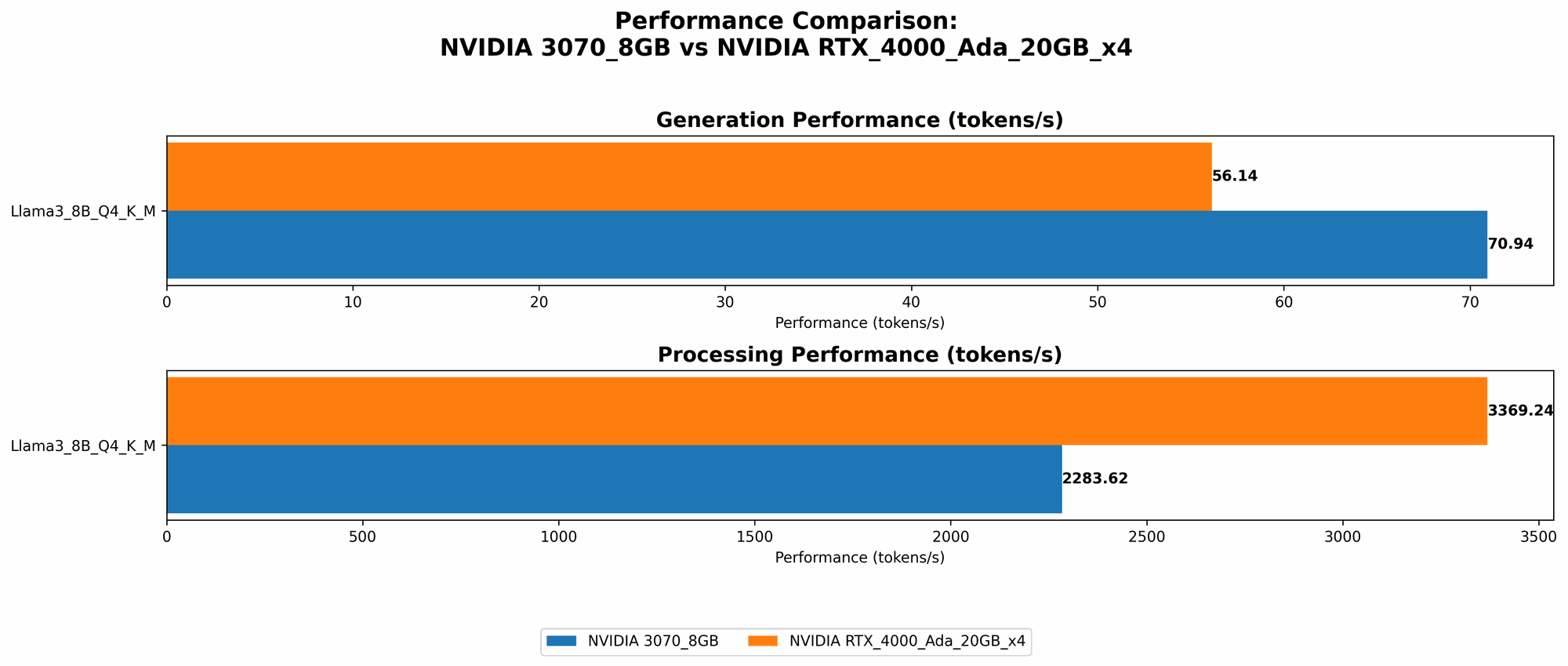

Token Generation Speed: Comparing the Speed of Text Generation

This benchmark measures how many tokens per second each GPU can generate for various Llama 3 configurations.

| GPU Model | Llama 3 Configuration | Token Generation Speed (tokens/sec) |
|---|---|---|
| NVIDIA RTX 3070 8GB | Llama 3 8B Q4_K_M | 70.94 |
| NVIDIA RTX 4000 Ada 20GB x4 | Llama 3 8B Q4_K_M | 56.14 |
| NVIDIA RTX 4000 Ada 20GB x4 | Llama 3 8B F16 | 20.58 |
| NVIDIA RTX 4000 Ada 20GB x4 | Llama 3 70B Q4_K_M | 7.33 |

Key Observations:

- The RTX 3070 8GB wins the token generation race for Llama 3 8B Q4_K_M: 70.94 vs. 56.14 tokens/sec, roughly 26% faster on this smaller, quantized model.

- The RTX 4000 Ada 20GB x4 is the only setup with results for the 8B F16 (half-precision) and 70B Q4_K_M configurations. The table has no RTX 3070 numbers for them, which is consistent with its 8GB of VRAM: the 8B model at F16 needs roughly 16GB for the weights alone.

- The multi-GPU setup of the RTX 4000 Ada 20GB x4 does not translate to a linear performance increase. On the 8B model, four cards are actually slower than the single 3070, which is not surprising given the coordination overhead of splitting a model across GPUs.
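To make these gaps more tangible, here is a minimal sketch that turns the generation speeds from the table above into relative speed and wall-clock time for a reply. The 300-token reply length and the helper names are assumptions chosen for illustration, and the estimate assumes a steady generation rate.

```python
# Generation speeds (tokens/sec) copied from the table above.
GENERATION_TPS = {
    ("RTX 3070 8GB", "Llama 3 8B Q4_K_M"): 70.94,
    ("RTX 4000 Ada 20GB x4", "Llama 3 8B Q4_K_M"): 56.14,
    ("RTX 4000 Ada 20GB x4", "Llama 3 8B F16"): 20.58,
    ("RTX 4000 Ada 20GB x4", "Llama 3 70B Q4_K_M"): 7.33,
}


def seconds_to_generate(tokens: int, tokens_per_sec: float) -> float:
    """Time to emit `tokens` new tokens at a steady generation rate."""
    return tokens / tokens_per_sec


def speed_ratio(a_tps: float, b_tps: float) -> float:
    """How many times faster setup A is than setup B."""
    return a_tps / b_tps


reply_tokens = 300  # hypothetical reply length, not part of the benchmark

for (gpu, model), tps in GENERATION_TPS.items():
    secs = seconds_to_generate(reply_tokens, tps)
    print(f"{gpu:22s} {model:20s} {secs:6.1f} s for {reply_tokens} tokens")

ratio = speed_ratio(
    GENERATION_TPS[("RTX 3070 8GB", "Llama 3 8B Q4_K_M")],
    GENERATION_TPS[("RTX 4000 Ada 20GB x4", "Llama 3 8B Q4_K_M")],
)
print(f"3070 vs. x4 on 8B Q4_K_M: {ratio:.2f}x")
```

Real replies will vary, since generation speed can drop as the context grows, so treat the output as a rough guide rather than a prediction.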

Practical Implications:

- For smaller models (Llama 3 8B) where speed is paramount, the RTX 3070 8GB takes the lead. This is ideal for interactive use cases where you need quick responses.

- For larger models (Llama 3 70B) or if you want higher precision (F16), the RTX 4000 Ada 20GB x4 is your best bet. Expect modest generation speeds, though: 20.58 tokens/sec for 8B F16 and 7.33 tokens/sec for 70B Q4_K_M. This configuration also comes at a higher cost and power consumption.

Processing Speed: Efficiency in Computation

This benchmark measures how quickly each GPU can process the input prompt, in tokens per second. It determines how long you wait before the first generated token appears, which matters most for long prompts and documents.

| GPU Model | Llama 3 Configuration | Processing Speed (tokens/sec) |
|---|---|---|
| NVIDIA RTX 3070 8GB | Llama 3 8B Q4_K_M | 2283.62 |
| NVIDIA RTX 4000 Ada 20GB x4 | Llama 3 8B Q4_K_M | 3369.24 |
| NVIDIA RTX 4000 Ada 20GB x4 | Llama 3 8B F16 | 4366.64 |
| NVIDIA RTX 4000 Ada 20GB x4 | Llama 3 70B Q4_K_M | 306.44 |

Key Observations:

- The RTX 4000 Ada 20GB x4 leads on the one configuration both setups ran: 3369.24 vs. 2283.62 tokens/sec on Llama 3 8B Q4_K_M, about 48% faster. Its 8B F16 run is faster still at 4366.64 tokens/sec, while 70B Q4_K_M drops to 306.44 tokens/sec.

- The RTX 3070 8GB stays within reach on the smaller 8B model, but the table contains no 3070 results for F16 or 70B, so there is no direct comparison as model size increases.
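Since one setup wins on processing and the other on generation, it helps to combine the two into a single response-time estimate. The sketch below is a simplified model under stated assumptions: total time is prompt tokens divided by processing speed plus output tokens divided by generation speed, and the 2000-token prompt and 300-token reply are hypothetical workload values, not benchmark inputs.

```python
def estimate_latency(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Return (prompt_seconds, generation_seconds, total_seconds)."""
    prompt_seconds = prompt_tokens / processing_tps
    generation_seconds = output_tokens / generation_tps
    return prompt_seconds, generation_seconds, prompt_seconds + generation_seconds


# Llama 3 8B Q4_K_M figures from the two benchmark tables:
# (processing tokens/sec, generation tokens/sec)
SETUPS = {
    "RTX 3070 8GB": (2283.62, 70.94),
    "RTX 4000 Ada 20GB x4": (3369.24, 56.14),
}

prompt_tokens, output_tokens = 2000, 300  # hypothetical workload

for name, (processing_tps, generation_tps) in SETUPS.items():
    prompt_s, gen_s, total_s = estimate_latency(
        prompt_tokens, output_tokens, processing_tps, generation_tps
    )
    print(f"{name:22s} prompt {prompt_s:.2f} s + generation {gen_s:.2f} s = {total_s:.2f} s")
```

With this particular workload, generation time dominates for both setups, so the faster prompt processing of the four-card configuration does not make up for its slower generation. A much longer prompt or a much shorter reply would shift the balance.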

Practical Implications:

- For tasks requiring fast and efficient computation, the RTX 4000 Ada 20GB x4 emerges as the clear winner. This is ideal for research or development workloads that demand maximum processing power.

- The 3070_8GB can still be a viable option if you're focused on small models and are on a tighter budget. However, its limitations become more apparent as model sizes grow.

Understanding LLM Quantization and its Impact

Before we dive further into the performance analysis, let's quickly understand LLM quantization.

- Quantization is a technique used to reduce the size of an LLM model and its memory footprint. It works by representing the model's weights (the numbers that determine the model's behavior) using fewer bits.

- This has a significant impact on performance – quantized models are typically faster and require less memory.

- However, quantization can lead to some loss of model accuracy, depending on the level of quantization.

Think of it like this: Imagine you're trying to describe a picture using only a limited number of colors. You can get the general idea across, but you lose some fine details. Quantization is similar, except it's applied to the model's weights.

In our benchmark results, the configurations labeled "Q4_K_M" (which may appear as "Q4KM") use a naming scheme from the GGUF/llama.cpp quantization formats: "Q4" is the nominal 4-bit weight precision, "K" denotes the k-quant family of methods, and "M" is the medium variant, which keeps some tensors at higher precision than the small variant. Quantization allows for considerable performance benefits while maintaining a decent level of accuracy.
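To illustrate the basic idea of storing weights in fewer bits, here is a toy sketch of symmetric 4-bit quantization for a single block of weights. It is deliberately simplified and is not the actual Q4_K_M scheme; the function names and the sample weights are made up for the example.

```python
def quantize_block(weights, bits=4):
    """Map floats to small signed integers that share one scale factor."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit signed codes
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round to the nearest code and clamp to the representable range.
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from the integer codes."""
    return [scale * c for c in codes]


weights = [0.12, -0.50, 0.33, 0.07, -0.21, 0.44, -0.05, 0.29]  # made-up values
scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:", codes)
print("restored:", [round(r, 3) for r in restored])
print(f"largest rounding error: {max_error:.4f}")
```

Each weight now needs only 4 bits plus a share of the per-block scale, at the cost of a small rounding error. That is the "fewer colors" trade-off in miniature.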

Comparison of NVIDIA RTX 3070 8GB and NVIDIA RTX 4000 Ada 20GB x4: A Deep Dive

NVIDIA 3070_8GB - The Budget-Friendly Performer

The NVIDIA 3070_8GB is a powerful single-GPU option that delivers impressive performance for smaller LLM models. While it may not have the brute force of the RTX 4000 Ada 20GB x4, it offers a compelling combination of affordability and speed.

Strengths:

- Cost-effective: The 3070_8GB is significantly more affordable than the multi-GPU RTX 4000 Ada 20GB x4. This makes it a more accessible choice for individuals or smaller development teams.

- Efficient for smaller models: The 3070_8GB excels at running smaller models like the Llama 8B, demonstrating high token generation and processing speeds.

- Lower power consumption: Its single-GPU design results in lower power consumption, which can be beneficial for users with limited power budgets.

Weaknesses:

- Limited memory: The 8GB of VRAM can be a bottleneck for larger models, especially those where F16 precision is desired.

- Scalability limitations: Adding more 3070 cards is not a simple path to higher speed. As the RTX 4000 Ada 20GB x4 results show, multi-GPU setups do not scale token generation linearly, and each extra 3070 contributes only 8GB of additional VRAM.
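The memory limit can be checked with back-of-the-envelope arithmetic: the weights take roughly parameters times bits per weight divided by 8 bytes. The sketch below uses that rule under a few assumptions: 4 and 16 bits are nominal figures (real Q4_K_M files come out somewhat larger than the 4-bit estimate), the 1GB headroom for the KV cache and runtime buffers is a placeholder, and multi-GPU VRAM is treated as one pool, which ignores per-card limits.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GB (10^9 bytes)."""
    return params_billion * bits_per_weight / 8


def fits(params_billion, bits_per_weight, vram_gb, headroom_gb=1.0):
    """Rough check: weights plus an assumed headroom must fit in VRAM."""
    return weight_memory_gb(params_billion, bits_per_weight) + headroom_gb <= vram_gb


CONFIGS = [
    ("Llama 3 8B, 4-bit", 8, 4),
    ("Llama 3 8B, F16", 8, 16),
    ("Llama 3 70B, 4-bit", 70, 4),
]

for label, params_billion, bits in CONFIGS:
    size = weight_memory_gb(params_billion, bits)
    on_3070 = fits(params_billion, bits, vram_gb=8)
    on_x4 = fits(params_billion, bits, vram_gb=80)
    print(f"{label:20s} ~{size:5.1f} GB  fits 8GB: {on_3070}  fits 80GB: {on_x4}")
```

Under these assumptions, only the 4-bit 8B model fits on the 3070, which matches the set of configurations that have 3070 results in the benchmark tables.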

Ideal Use Cases:

- Interactive chatbots: The 3070_8GB can power quick and responsive chatbots with smaller models.

- Language translation: Using smaller, more efficient models for tasks like simple translation can be handled efficiently by the 3070_8GB.

- Lightweight development: If you're prototyping or experimenting with LLMs, the 3070_8GB offers a good balance of power and affordability.

NVIDIA RTX4000Ada20GBx4 - The Powerhouse for Demanding Tasks

The NVIDIA RTX 4000 Ada 20GB x4 is a multi-GPU behemoth that redefines the boundaries of LLM performance. Its sheer power and massive memory are designed to handle the most demanding LLM applications with ease.

Strengths:

- Strong performance on large models: The RTX 4000 Ada 20GB x4 posts the highest processing speeds in this comparison and is the only setup here with results for Llama 3 70B. On the small 8B Q4_K_M model, though, its token generation trails the 3070.

- Massive memory: The 20GB of VRAM per card (80GB total) is enough to hold Llama 3 8B at F16 and Llama 3 70B at Q4_K_M, both of which appear in the benchmark.

- Room to grow: You can add more RTX 4000 Ada cards for additional memory, though the results above show that extra GPUs do not guarantee proportional speedups.

Weaknesses:

- Expensive: The multi-GPU setup comes at a hefty price tag, making it a significant investment.

- Power-hungry: With four powerful GPUs working together, the RTX 4000 Ada 20GB x4 consumes a significant amount of power.

- Complex setup: Managing and optimizing a multi-GPU system requires more technical expertise than a single-GPU setup.

Ideal Use Cases:

- Research and development: The RTX 4000 Ada 20GB x4 is a powerful tool for research and development, enabling experimentation with the largest and most complex models.

- Real-time applications: Its high processing speed makes it suitable for real-time applications, such as generating high-quality content or complex simulations.

- High-performance computing: If you need to process massive datasets or train models with high accuracy, the RTX 4000 Ada 20GB x4 is your go-to choice.

Practical Considerations: Choosing the Right GPU for Your Needs

So, how do you choose between the NVIDIA 3070_8GB and NVIDIA RTX 4000 Ada 20GB x4? It comes down to your specific needs and budget:

Choose the NVIDIA 3070_8GB if:

- You're on a tight budget: The 3070_8GB is significantly more affordable than the RTX 4000 Ada 20GB x4.

- You're working with smaller LLM models: The 3070_8GB performs well with smaller, more efficient models like Llama 8B.

- You need quick responses for interactive tasks: The 3070_8GB offers fast token generation speeds, making it suitable for applications like chatbots.

Choose the NVIDIA RTX4000Ada20GBx4 if:

- You need the absolute best performance: The RTX 4000 Ada 20GB x4 delivers exceptional processing speed and can handle the most demanding LLM tasks.

- You're working with large LLM models: The massive memory and processing power make it ideal for running large models like Llama 70B or even larger.

- You have a generous budget and power supply: The RTX 4000 Ada 20GB x4 comes at a high cost and requires a robust power supply.
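One way to encode this decision is to look up the benchmark rows for the model you care about and pick the fastest setup for the metric that matters to you. The sketch below does that with the numbers from this article; `fastest_setup` is a hypothetical helper, and it knows nothing about cost or power, which you still have to weigh yourself.

```python
from typing import Optional

# (setup, model configuration, generation tok/s, processing tok/s),
# copied from the two benchmark tables in this article.
BENCHMARKS = [
    ("RTX 3070 8GB", "Llama 3 8B Q4_K_M", 70.94, 2283.62),
    ("RTX 4000 Ada 20GB x4", "Llama 3 8B Q4_K_M", 56.14, 3369.24),
    ("RTX 4000 Ada 20GB x4", "Llama 3 8B F16", 20.58, 4366.64),
    ("RTX 4000 Ada 20GB x4", "Llama 3 70B Q4_K_M", 7.33, 306.44),
]


def fastest_setup(model: str, metric: str = "generation") -> Optional[str]:
    """Return the setup with the best benchmark result, or None if untested."""
    column = {"generation": 2, "processing": 3}[metric]
    rows = [row for row in BENCHMARKS if row[1] == model]
    if not rows:
        return None  # no benchmark data for this configuration
    return max(rows, key=lambda row: row[column])[0]


print(fastest_setup("Llama 3 8B Q4_K_M", "generation"))
print(fastest_setup("Llama 3 8B Q4_K_M", "processing"))
print(fastest_setup("Llama 3 70B Q4_K_M"))
```

A result of `None` means the article has no data for that configuration, not that the hardware cannot run it.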

Conclusion

The choice between the NVIDIA 3070_8GB and the NVIDIA RTX 4000 Ada 20GB x4 ultimately depends on your specific needs and resources.

The 3070_8GB is a solid performer for smaller models and those looking for a budget-friendly option. The RTX 4000 Ada 20GB x4 is a powerhouse for demanding tasks, high-performance computing, and larger models.

No matter which way you go, running LLMs locally opens up a world of possibilities for developers, researchers, and anyone seeking to harness the power of these incredible models.

FAQ:

Q: What are LLMs?

A: Large Language Models (LLMs) are a type of artificial intelligence that are trained on massive amounts of text data. They can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way.

Q: What is quantization and how does it affect performance?

A: Quantization is a technique that reduces the size of an LLM model by using fewer bits to represent the model's weights. This can dramatically improve performance, especially for running on devices with limited memory. However, it can lead to a small loss of accuracy.

Q: What are some popular LLM models?

A: Some popular LLMs include:

- GPT-3 (Generative Pre-trained Transformer 3) by OpenAI

- LaMDA (Language Model for Dialogue Applications) by Google

- BERT (Bidirectional Encoder Representations from Transformers) - While not a full LLM, BERT is a popular language model for various NLP tasks.

Q: What are CUDA cores and how do they relate to LLM performance?

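A: CUDA cores are the processing units on NVIDIA GPUs that perform calculations in parallel. More cores generally help compute-heavy stages such as prompt processing, but core count is not the whole story: in this benchmark the single 3070, with far fewer total cores, generates tokens faster on Llama 3 8B Q4_K_M than the four-card setup. Token generation also depends heavily on memory bandwidth and, in multi-GPU systems, on communication between cards.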

Q: Why is GPU memory important for running LLMs?

A: LLMs require a significant amount of memory to store their weights and internal calculations. A GPU with ample memory can handle larger models without bottlenecking performance.

Keywords: NVIDIA RTX 3070 8GB, NVIDIA RTX 4000 Ada 20GB x4, LLM, Large Language Model, token generation, processing speed, quantization, Llama 3, GPU, model inference, benchmark, performance, cost, power consumption, memory, CUDA cores, AI, machine learning, natural language processing, NLP