Which is Better for Running LLMs locally: NVIDIA 3070 8GB or NVIDIA 4090 24GB x2? Ultimate Benchmark Analysis

Introduction

The world of large language models (LLMs) is booming, with powerful AI models like GPT-3, LaMDA, and PaLM pushing the boundaries of what's possible with language technology. But running these models locally can be a challenge, demanding significant computational resources. If you're a developer looking to explore the world of LLMs on your personal machine, the choice of hardware becomes crucial.

This article compares two GPU setups for running LLMs locally: a single NVIDIA GeForce RTX 3070 8GB and a pair of NVIDIA GeForce RTX 4090 24GB cards (x2). We'll analyze their performance on Llama 3 models, highlight their strengths and weaknesses, and provide practical recommendations for different use cases.

Performance Analysis: A Deep Dive into the Numbers

Let's get down to the nitty-gritty. We'll dissect the performance of each GPU using the Llama 3 models as our test subjects. We'll be focusing on the following scenarios:

- Llama 3 8B Q4 K_M Generation: The tokens per second the 8B model generates when its weights are quantized to about 4 bits in the Q4 K_M format.

- Llama 3 8B F16 Generation: Similar to above, but with the model running using half-precision floating-point (F16) weights.

- Llama 3 70B Q4 K_M Generation: The same, but for the larger 70B model.

- Llama 3 70B F16 Generation: Again, same as above but using F16 weights.

- Llama 3 8B Q4 K_M Processing: How fast the model reads the input prompt, in tokens per second, before it starts generating. This is an inference measurement, not a training one.

- Llama 3 8B F16 Processing: Similar to above, but using F16 weights instead of Q4.

- Llama 3 70B Q4 K_M Processing: This measures the processing speed of the larger 70B model using Q4 weights.

- Llama 3 70B F16 Processing: Same as above, but using F16 weights.

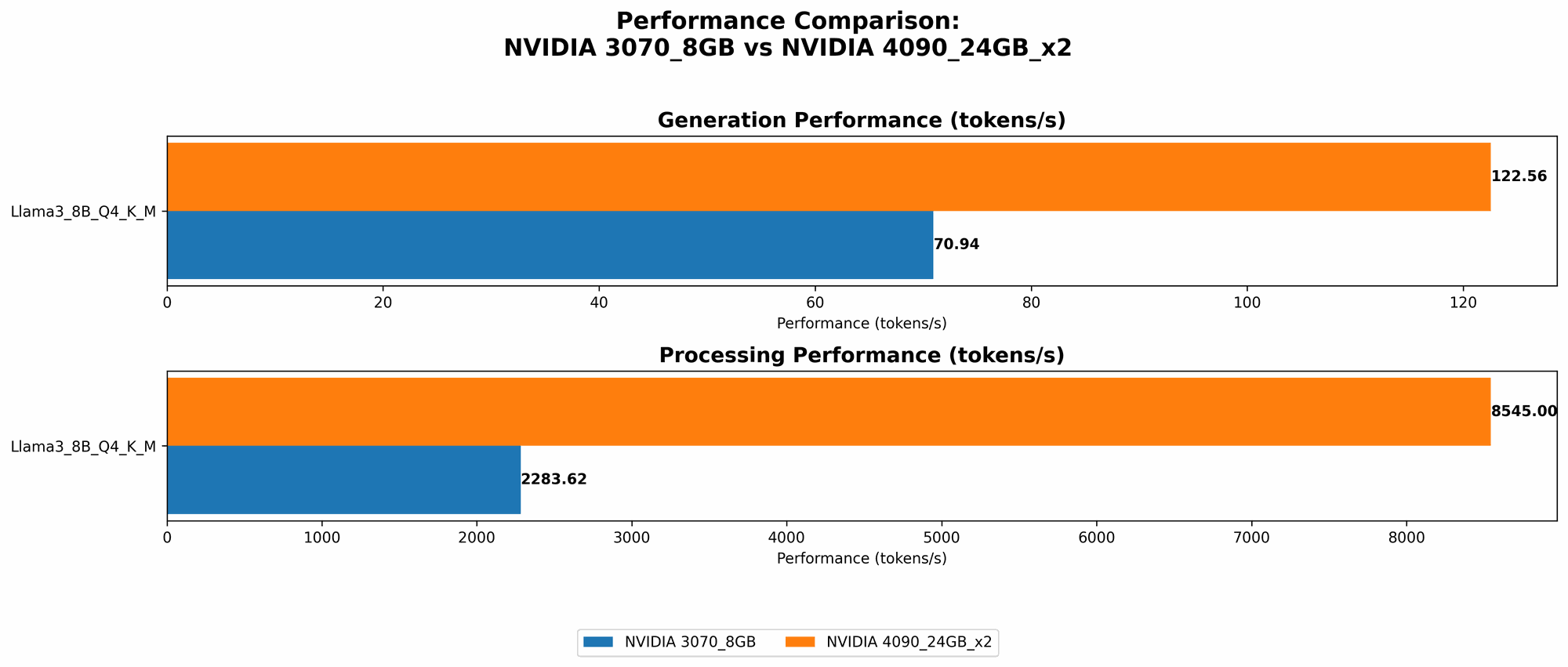

Comparison of NVIDIA 3070 8GB and NVIDIA 4090 24GB x2 for Llama 3 8B Model

| Scenario | NVIDIA 3070 8GB (tokens/second) | NVIDIA 4090 24GB x2 (tokens/second) |
|---|---|---|
| Llama 3 8B Q4 K_M Generation | 70.94 | 122.56 |
| Llama 3 8B F16 Generation | N/A | 53.27 |
| Llama 3 8B Q4 K_M Processing | 2283.62 | 8545.0 |
| Llama 3 8B F16 Processing | N/A | 11094.51 |

Analysis:

- Generation: With Q4 K_M weights, the 4090 x2 generates 122.56 tokens/second against 70.94 for the 3070, about 1.7x faster. No F16 comparison is possible: the 3070 has no F16 result, because the 8B F16 weights alone take about 16 GB, twice its 8 GB of VRAM. The 4090 x2 reaches 53.27 tokens/second with F16.

- Processing: This is where the 4090 x2 truly shines. With Q4 K_M weights it processes 8545.0 tokens/second against 2283.62, about 3.7x faster. Its F16 result of 11094.51 tokens/second is higher still, but the 3070 has no F16 figure to compare it with. Set against the 3070's Q4 K_M number, it is almost 4.9x.
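The ratios in this analysis can be recomputed from the table. The snippet below is a minimal sketch that copies the table's tokens/second figures into a dictionary and divides them; the names `RESULTS`, `speedup`, and `summarize` are illustrative, and `None` stands in for N/A.

```python
# Tokens/second figures copied from the Llama 3 8B table.
# Each value is (3070 8GB, 4090 24GB x2); None marks an N/A result.
RESULTS = {
    "Q4 K_M generation": (70.94, 122.56),
    "F16 generation": (None, 53.27),
    "Q4 K_M processing": (2283.62, 8545.0),
    "F16 processing": (None, 11094.51),
}


def speedup(baseline, candidate):
    """Return candidate / baseline, or None if either value is missing."""
    if baseline is None or candidate is None:
        return None
    return candidate / baseline


def summarize(results):
    """Map each scenario to its 4090 x2 over 3070 speedup (None if N/A)."""
    return {name: speedup(a, b) for name, (a, b) in results.items()}


if __name__ == "__main__":
    for name, ratio in summarize(RESULTS).items():
        label = "n/a" if ratio is None else f"{ratio:.2f}x"
        print(f"{name}: {label}")
```

Keeping N/A as `None` rather than zero avoids reporting a speedup for scenarios the 3070 could not run at all.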

Key takeaways:

- The 4090 x2 is the clear winner for performance, generating and processing text at a much faster rate than the 3070.

- If you're prioritizing speed, especially for larger models or long prompts, the 4090 x2 is the superior choice.
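To see what these throughput numbers mean for a single request, the two speeds can be combined into a rough response-time estimate. This is a sketch under simplifying assumptions: throughput is treated as constant regardless of context length, and model load time is ignored. The function name and the 1000-token prompt with a 200-token reply are hypothetical.

```python
def response_time(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Estimate seconds to read a prompt and then generate a reply.

    Assumes constant throughput; real speeds vary with context length.
    """
    if processing_tps <= 0 or generation_tps <= 0:
        raise ValueError("throughput must be positive")
    return prompt_tokens / processing_tps + output_tokens / generation_tps


if __name__ == "__main__":
    # Llama 3 8B Q4 K_M figures from the table; the request size is made up.
    t_3070 = response_time(1000, 200, 2283.62, 70.94)
    t_4090 = response_time(1000, 200, 8545.0, 122.56)
    print(f"3070:    {t_3070:.2f} s")
    print(f"4090 x2: {t_4090:.2f} s")
```

For short prompts, generation speed dominates the total, so the gap a user actually feels is closer to the 1.7x generation ratio than to the larger processing ratio.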

Comparison of NVIDIA 3070 8GB and NVIDIA 4090 24GB x2 for Llama 3 70B Model

| Scenario | NVIDIA 3070 8GB (tokens/second) | NVIDIA 4090 24GB x2 (tokens/second) |
|---|---|---|
| Llama 3 70B Q4 K_M Generation | N/A | 19.06 |
| Llama 3 70B F16 Generation | N/A | N/A |
| Llama 3 70B Q4 K_M Processing | N/A | 905.38 |
| Llama 3 70B F16 Processing | N/A | N/A |

Analysis:

- Generation: The 3070 simply lacks the memory capacity to handle the 70B model, so it's a no-go. The 4090 x2 runs the 70B model with Q4 K_M weights at 19.06 tokens/second but has no F16 result. Why? At 2 bytes per weight, 70 billion F16 weights need about 140 GB, far more than the 48GB of combined VRAM.

- Processing: The same pattern holds. The 3070 can't load the 70B model. The 4090 x2 processes it at 905.38 tokens/second with Q4 K_M weights, and there is no F16 result for the same memory reason.

Key takeaways:

- The 3070 is not a good match for the 70B model due to limitations in memory capacity.

- The 4090 x2 can handle the 70B model with Q4 K_M weights but cannot fit the F16 weights, highlighting the importance of checking memory requirements before choosing a larger model.

Understanding the Underlying Factors: Quantization, Memory, and Optimization Techniques

To further understand the performance differences, we need to delve into the underlying techniques and concepts:

Quantization: Making LLMs More Compact

Think of quantization as a diet for LLMs. It reduces the size of the model's weights by representing them using fewer bits. This makes the model smaller and faster, particularly important for GPUs with limited memory.

Imagine you're trying to write a story using only lowercase letters. This is like "Q4" quantization, using just 4 bits to represent each weight. You'll save space but sacrifice some detail and complexity.

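- Q4: This stores each weight in roughly 4 bits. It's great for saving memory but can lead to some accuracy loss. More aggressive 2-bit and 3-bit schemes exist, at a larger cost in accuracy.

- F16: This uses 16-bit (half-precision) floating-point numbers. It is the unquantized baseline in these benchmarks: it sacrifices less accuracy but needs roughly three to four times the memory of Q4.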
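To make the idea concrete, here is a deliberately simplified sketch of 4-bit quantization: a block of weights shares one scale factor, and each weight is rounded to an integer between -7 and 7. This is an assumption-laden illustration, not the Q4 K_M format itself, which uses a more elaborate block layout. The function names and the sample weights are hypothetical.

```python
def quantize_block(weights, bits=4):
    """Quantize a block of floats to signed integer codes sharing one scale.

    Simplified symmetric scheme for illustration only.
    """
    qmax = 2 ** (bits - 1) - 1  # 7 when bits=4
    largest = max(abs(w) for w in weights)
    scale = largest / qmax if largest > 0 else 1.0
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate float weights from the scale and integer codes."""
    return [scale * c for c in codes]


if __name__ == "__main__":
    block = [0.12, -0.5, 0.33, 0.07, -0.91, 0.64, 0.0, 0.28]  # made-up weights
    scale, codes = quantize_block(block)
    restored = dequantize_block(scale, codes)
    worst = max(abs(a - b) for a, b in zip(block, restored))
    print("codes:", codes)
    print(f"scale: {scale:.4f}, worst rounding error: {worst:.4f}")
```

The rounding error per weight is at most half the scale, which is the "lost detail" the lowercase-letters analogy describes.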

Memory: The Bottleneck for Large Models

Memory is the ultimate constraint for running LLMs. If you're trying to squeeze a 70B model onto an 8GB GPU, you're going to have a bad time.

Imagine trying to fit a massive library into a small closet. You're going to need a bigger closet, or you'll have to get rid of some books!
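A back-of-the-envelope check shows why the N/A entries in the tables line up with memory limits. The sketch below estimates weight size as parameters times bits per weight. Several values are assumptions rather than figures from the benchmarks: about 4.85 bits per weight for Q4 K_M, a flat 1.5 GB allowance for the context cache and buffers, and treating the two 4090s as one 48 GB pool.

```python
def weight_memory_gb(params_billion, bits_per_weight):
    """Approximate size of the weights alone, in gigabytes (10^9 bytes)."""
    return params_billion * bits_per_weight / 8


def fits(params_billion, bits_per_weight, vram_gb, overhead_gb=1.5):
    """Rough check: weights plus an assumed fixed overhead must fit in VRAM."""
    return weight_memory_gb(params_billion, bits_per_weight) + overhead_gb <= vram_gb


# Assumed average bits per weight; Q4 K_M is slightly above 4 bits.
Q4_K_M_BITS = 4.85
F16_BITS = 16

if __name__ == "__main__":
    for params in (8, 70):
        for label, bits in (("Q4 K_M", Q4_K_M_BITS), ("F16", F16_BITS)):
            size = weight_memory_gb(params, bits)
            print(
                f"{params}B {label}: ~{size:.1f} GB, "
                f"fits 8 GB: {fits(params, bits, 8)}, "
                f"fits 48 GB: {fits(params, bits, 48)}"
            )
```

Under these assumptions, only the 8B Q4 K_M model fits on the 3070, and everything except 70B F16 fits on the 4090 x2, which matches the pattern of results in the tables.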

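The K_M Suffix: A Quantization Variant

K_M is often described as a separate optimization technique, but in llama.cpp's naming it is part of the quantization format. Think of it as a packing strategy for the library's books: most go into compact boxes, while the ones you consult most often stay in roomier ones.

- K_M: The "K" marks the k-quant family, which quantizes weights in small blocks that each carry their own scale. The "M" stands for the medium variant (alongside S for small and L for large), which keeps some of the more sensitive tensors at higher precision.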

Practical Recommendations for Different Use Cases

Now that we've analyzed the performance and underlying factors, let's translate this into real-world recommendations:

- If you're on a budget and want to experiment with smaller LLMs: The NVIDIA GeForce RTX 3070 8GB is a solid choice. It can handle Llama 3 8B models efficiently, especially with Q4 weights.

- If you want to run the largest models with the fastest performance: The NVIDIA GeForce RTX 4090 24GB x2 is the champion. It offers exceptional speed and memory capacity for models like Llama 3 70B (using Q4 K_M weights), making it ideal for demanding inference tasks with long prompts.

- If you need high performance for a specific scenario: Consider the specific model and the level of accuracy you need. For the 70B model, Q4 K_M is the only option that ran on either setup tested here. For the 8B model on the 4090 x2, F16 weights are available if accuracy is crucial, at the cost of generation speed (53.27 versus 122.56 tokens/second).

The "Bigger is Better" Mindset: A Word of Caution

While a powerful GPU like the 4090 x2 might seem like the ultimate solution, it's important to remember that it's not always about "more is better." Consider these factors:

- Power Consumption: Two 4090s consume significantly more power than a single 3070. This means higher electricity bills and a potential need for better cooling and a larger power supply.

- Cost: The 4090 x2 is considerably more expensive than a 3070. Make sure the added performance justifies the investment for your specific use case.

- Environmental Impact: Higher power consumption means a larger carbon footprint. It's essential to be mindful of environmental sustainability when choosing hardware.
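The power and cost trade-off can be put in rough numbers by dividing power draw by generation speed. The sketch below rests on assumptions that are not part of the benchmarks: it uses rated board power (220 W for one RTX 3070, 450 W for each RTX 4090) as an upper bound rather than measured draw, ignores the rest of the system, and uses a placeholder electricity price of 0.15 per kWh.

```python
def joules_per_token(board_power_watts, tokens_per_second):
    """Upper-bound energy per generated token, assuming full board power."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return board_power_watts / tokens_per_second


def cost_per_million_tokens(board_power_watts, tokens_per_second, price_per_kwh):
    """Electricity cost of generating one million tokens."""
    joules = joules_per_token(board_power_watts, tokens_per_second) * 1_000_000
    kwh = joules / 3_600_000  # 1 kWh = 3.6 million joules
    return kwh * price_per_kwh


# Assumed rated board power, not measured draw during inference.
RTX_3070_WATTS = 220
RTX_4090_X2_WATTS = 2 * 450
PRICE_PER_KWH = 0.15  # placeholder price; substitute your own tariff

if __name__ == "__main__":
    # Generation speeds for Llama 3 8B Q4 K_M from the benchmark table.
    for name, watts, tps in (
        ("3070", RTX_3070_WATTS, 70.94),
        ("4090 x2", RTX_4090_X2_WATTS, 122.56),
    ):
        energy = joules_per_token(watts, tps)
        cost = cost_per_million_tokens(watts, tps, PRICE_PER_KWH)
        print(f"{name}: {energy:.2f} J/token, {cost:.3f} per million tokens")
```

Actual draw during inference is often well below rated board power, so treat the output as a ceiling for comparison rather than a measurement.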

FAQ

What are the advantages of running LLMs locally?

Running LLMs locally offers several advantages:

- Privacy: You have full control over your data and it doesn't leave your machine.

- Speed: Depending on your hardware, you can experience faster response times compared to cloud-based solutions.

- Flexibility: You're not limited by API calls or quotas.

How can I choose the right GPU for my needs?

Consider the following factors:

- Model size: Larger models require more memory.

- Desired performance: If speed is critical, you'll need a powerful GPU.

- Budget: Balance performance and cost.

- Power consumption: Be mindful of energy efficiency.

What are some alternatives to NVIDIA GPUs?

Other options include:

- AMD GPUs: AMD cards can be price-competitive with NVIDIA, though software support for LLM inference varies by card and framework, so check compatibility before buying.

- Apple M1/M2 chips: Apple's ARM-based chips offer impressive performance and efficiency.

Keywords

Local LLMs, NVIDIA GeForce RTX 3070 8GB, NVIDIA GeForce RTX 4090 24GB x2, Llama 3 8B, Llama 3 70B, Quantization, Q4, F16, K_M Optimization, Memory, Performance Comparison, Benchmark Analysis, Token Generation, Text Processing, Power Consumption, Cost, Environmental Impact, GPU, Deep Learning, AI, Large Language Model.