Which is Better for Running LLMs Locally: NVIDIA 3070 8GB or NVIDIA 4070 Ti 12GB? Ultimate Benchmark Analysis

Introduction

The world of large language models (LLMs) is evolving rapidly, and running them locally offers real potential for developers and researchers. Choosing the right hardware for the job, however, can be daunting. In this article, we'll dissect the performance of two popular NVIDIA GPUs, the 3070 8GB and the 4070 Ti 12GB, when running LLMs locally. We'll look at how each handles the Llama3 model and at what the available data says about different quantization formats and model sizes. Buckle up, it's time to unleash the power of these GPUs!

The Players: NVIDIA 3070 8GB vs. NVIDIA 4070 Ti 12GB

Imagine you're trying to build a spaceship for interstellar travel. You need a powerful engine, and the 3070 8GB and 4070 Ti 12GB are like two different engines with distinct capabilities.

The NVIDIA 3070 8GB is a solid performer, offering great value for its price. It's the workhorse of many gamers and creatives, and it's certainly capable of handling LLMs. The 4070 Ti 12GB, on the other hand, is a performance beast. Its larger memory capacity (12GB versus 8GB) and faster processing make it a top contender for resource-hungry LLMs.

Performance Analysis: Llama3 Model Benchmark

Let's cut to the chase – how do these GPUs fare when it comes to running the Llama3 model? We'll analyze the performance based on the benchmark data provided by the llama.cpp and GPU Benchmarks on LLM Inference projects.

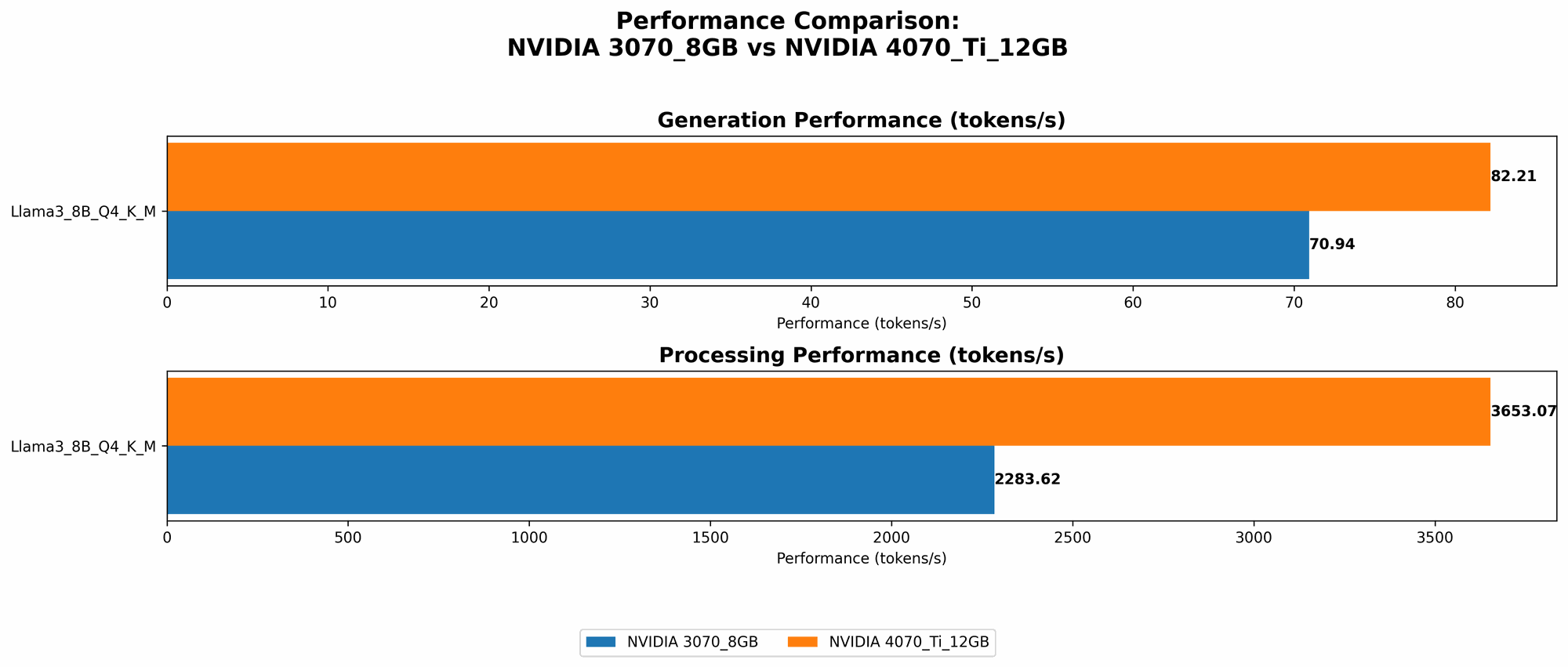

Llama3 8B: Token Generation Speed

The data reveals that the 4070 Ti 12GB outperforms the 3070 8GB in token generation speed for the Llama3 8B model.

| GPU | Llama3 8B Q4_K_M Generation (Tokens/Second) |
|---|---|
| NVIDIA 3070 8GB | 70.94 |
| NVIDIA 4070 Ti 12GB | 82.21 |

Here's what the numbers tell us:

- The 4070 Ti 12GB generates tokens roughly 16% faster than the 3070 8GB. This means you can generate more text in the same timeframe!

- Imagine a car race. The 4070 Ti 12GB is like a Formula 1 car speeding ahead, while the 3070 8GB is a sleek sports car that's still fast but slightly behind.
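If you want to check the percentage yourself, here is a minimal sketch that derives it from the two figures in the table above. The helper name `percent_faster` is just an illustrative choice, not something from the benchmark projects.

```python
# Generation throughput from the table above (tokens/second, Llama3 8B Q4_K_M).
generation_tps = {
    "3070 8GB": 70.94,
    "4070 Ti 12GB": 82.21,
}


def percent_faster(fast: float, slow: float) -> float:
    """Return how much faster `fast` is than `slow`, in percent."""
    return (fast / slow - 1.0) * 100.0


gain = percent_faster(generation_tps["4070 Ti 12GB"], generation_tps["3070 8GB"])
print(f"4070 Ti 12GB generation advantage: {gain:.1f}%")
```

The result lands just under 16%, which is where the rounded figure in the list comes from.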

Llama3 8B: Token Processing Speed

Just as important as generating tokens is processing them efficiently. The 4070 Ti 12GB continues to shine in this area, demonstrating a clear advantage over the 3070 8GB.

| GPU | Llama3 8B Q4_K_M Processing (Tokens/Second) |
|---|---|
| NVIDIA 3070 8GB | 2283.62 |
| NVIDIA 4070 Ti 12GB | 3653.07 |

Key takeaways:

- The 4070 Ti 12GB processes tokens almost 60% faster than the 3070 8GB. Faster processing mainly shortens the wait before the first output token appears, which makes interactions feel more responsive, especially with long prompts.

- Think of it like this: The 4070 Ti 12GB is a high-speed train, swiftly chugging through a vast amount of data. The 3070 8GB, while a capable commuter train, can't quite keep up with the train of thought.
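To see how the two figures combine in practice, here is a rough sketch that estimates the total time for a single request from the processing and generation rates in the tables above. It assumes the benchmark rates hold steady for the whole request and ignores model loading and sampling overhead. The function name and the 2000-token prompt / 300-token reply are made-up illustration values.

```python
# Throughput figures from the two tables in this article (tokens/second).
BENCH = {
    "3070 8GB": {"processing": 2283.62, "generation": 70.94},
    "4070 Ti 12GB": {"processing": 3653.07, "generation": 82.21},
}


def estimate_seconds(gpu: str, prompt_tokens: int, output_tokens: int) -> float:
    """Estimate request time as prompt-processing time plus generation time."""
    rates = BENCH[gpu]
    return prompt_tokens / rates["processing"] + output_tokens / rates["generation"]


# Hypothetical workload: a 2000-token prompt followed by a 300-token reply.
for gpu in BENCH:
    total = estimate_seconds(gpu, prompt_tokens=2000, output_tokens=300)
    print(f"{gpu}: ~{total:.2f} s")
```

With these inputs the estimate comes to roughly 5.1 s on the 3070 8GB and 4.2 s on the 4070 Ti 12GB. Generation dominates the total here, so the end-to-end gap sits closer to the generation advantage than to the 60% processing advantage.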

What About F16 and Larger Llama3 Models (70B)?

Unfortunately, the provided benchmark dataset doesn't include data for Llama3 models stored in F16 (16-bit floating point, which is strictly a precision format rather than a quantization) or for the larger 70B model. This means we can't compare the performance of these GPUs in those scenarios.
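A back-of-the-envelope memory estimate may help explain why those configurations are hard to run on these cards. The sketch below multiplies parameter count by bits per weight to approximate the size of the weights alone. The numbers are assumptions: nominal parameter counts of 8 and 70 billion, 16 bits per weight for F16, and roughly 4.85 bits per weight for Q4_K_M (an approximation, since the real figure varies by model). KV cache and runtime buffers are ignored, so actual usage is higher.

```python
GIB = 1024 ** 3


def weight_gib(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights alone, in GiB."""
    return params_billion * 1e9 * bits_per_weight / 8 / GIB


# VRAM of the two cards compared in this article.
VRAM_GIB = {"3070 8GB": 8, "4070 Ti 12GB": 12}

# (label, parameters in billions, assumed bits per weight)
CONFIGS = [
    ("Llama3 8B Q4_K_M", 8, 4.85),   # ~4.85 bits/weight is an assumed average
    ("Llama3 8B F16", 8, 16),
    ("Llama3 70B Q4_K_M", 70, 4.85),
    ("Llama3 70B F16", 70, 16),
]

for name, params, bits in CONFIGS:
    size = weight_gib(params, bits)
    fits = [gpu for gpu, vram in VRAM_GIB.items() if size < vram]
    print(f"{name}: ~{size:.1f} GiB of weights, fits on: {', '.join(fits) or 'neither card'}")
```

Under these assumptions only the 8B Q4_K_M weights (about 4.5 GiB) fit entirely on either card. The 8B model at F16 needs about 15 GiB, and the 70B model needs about 40 GiB even at Q4_K_M, so both would require offloading part of the model to system memory.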

Comparison of NVIDIA 3070 8GB and NVIDIA 4070 Ti 12GB for LLMs

Let's summarize the key points and offer some practical recommendations for using these GPUs.

- Overall Performance: The NVIDIA 4070 Ti 12GB outperforms the NVIDIA 3070 8GB in both token generation and processing for the Llama3 8B model with Q4_K_M quantization.

- Price Point: The 3070 8GB offers better value for money, especially if you're on a budget. However, if performance is your top priority, the 4070 Ti 12GB is the better choice.

- Memory Capacity: The 4070 Ti 12GB comes with 12GB of GDDR6X memory, versus 8GB of GDDR6 on the 3070 8GB, which is advantageous for running larger LLMs or models with higher memory requirements.

Practical Recommendations

Here's how you can make the best choice for your needs:

- Budget-conscious developers: The 3070 8GB is a solid option that still delivers decent performance for smaller LLMs, research projects, and exploration.

- Performance-focused developers: The 4070 Ti 12GB is the clear winner if you need to run larger LLMs, handle demanding tasks, or maximize token throughput for more responsive interactions.

- Large-scale deployments: If you're planning on running LLMs for production or high-performance applications, consider a more powerful GPU like the RTX 4090 or a specialized AI accelerator.

LLMs: A Glimpse into the Future

LLMs are revolutionizing the way we interact with technology, opening doors to new possibilities in natural language processing, code generation, and beyond. As these models grow in complexity and size, powerful hardware like the 4070 Ti 12GB will become increasingly essential for unlocking their full potential.

FAQ

What are LLMs?

LLMs are advanced artificial intelligence models trained on massive datasets of text and code. They can generate realistic and coherent text, translate languages, write different kinds of creative content, and answer your questions in an informative way.

What is quantization in LLMs?

Quantization is a technique that reduces the precision of an LLM's weights, making the model smaller and cheaper to run. It's like measuring with a ruler that has coarser markings: you lose some accuracy but gain significant benefits in memory usage and performance. Q4_K_M is a widely used quantization format for LLMs, achieving good performance with a reduced memory footprint.
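To make the idea concrete, here is a toy sketch of 4-bit quantization for one small block of weights. It uses plain symmetric absmax scaling, which is a simplification chosen for illustration and not the actual Q4_K_M scheme. The function names and the sample weights are made up.

```python
def quantize_block(weights: list[float], bits: int = 4) -> tuple[float, list[int]]:
    """Symmetric absmax quantization of one block of weights to signed integers."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit codes
    absmax = max(abs(w) for w in weights)
    scale = absmax / qmax if absmax > 0 else 1.0
    # Round each weight to the nearest integer step and clamp to the code range.
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale: float, codes: list[int]) -> list[float]:
    """Recover approximate weights from the stored scale and integer codes."""
    return [c * scale for c in codes]


# Made-up sample weights for illustration.
block = [0.12, -0.53, 0.07, 0.91, -0.33, 0.002, -0.78, 0.45]
scale, codes = quantize_block(block)
restored = dequantize_block(scale, codes)
max_error = max(abs(a - b) for a, b in zip(block, restored))

print("codes:", codes)
print(f"max round-trip error: {max_error:.4f}")
```

Each weight is stored as a small integer plus one shared scale per block, which is where the memory savings come from. The round-trip error is bounded by half a quantization step, which is the accuracy you trade away.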

What about CPU-based inference for LLMs?

While CPUs can run LLMs, they generally lag behind GPUs in terms of performance. GPUs are specifically designed for parallel processing, making them much better suited for the computationally intensive tasks involved in LLM inference.

Keywords

LLMs, large language models, NVIDIA 3070 8GB, NVIDIA 4070 Ti 12GB, GPU, performance, benchmark, Llama3, 8B, 70B, quantization, Q4_K_M, F16, token speed, token generation, token processing, local, inference, hardware, AI, machine learning, deep learning, developers, geeks, research, production, deployment, budget, memory, model size, practical recommendations, applications, future, natural language processing, code generation, translation, text generation, creative content, FAQ, CPU, parallel processing