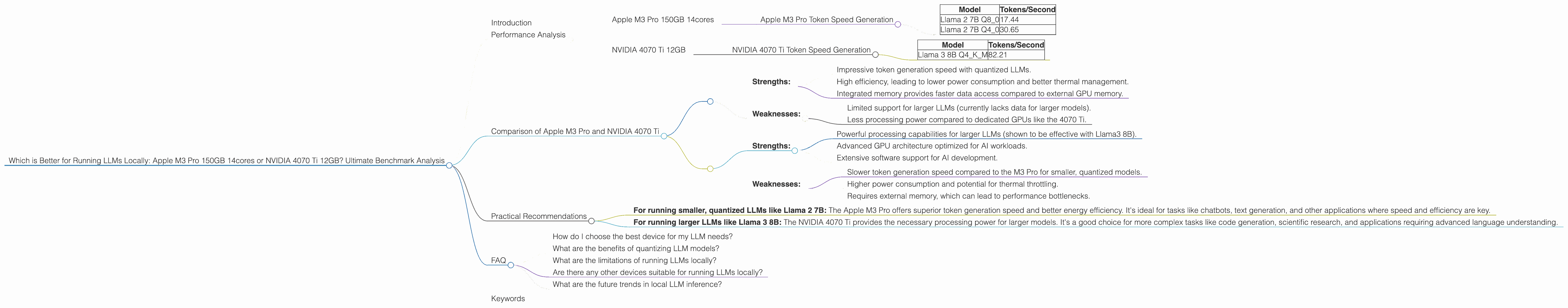

Which Is Better for Running LLMs Locally: Apple M3 Pro (150 GB/s, 14 GPU Cores) or NVIDIA 4070 Ti 12GB? Ultimate Benchmark Analysis

Introduction

Large language models (LLMs) are captivating, but their high resource demands make them challenging to run locally. Two popular options for powering them are the Apple M3 Pro chip and the NVIDIA 4070 Ti GPU. This article compares the two on LLM token generation speed and aims to determine which is the better fit for your local LLM needs.

We'll look at the performance of both devices on quantized Llama models; no F16 (non-quantized) results are available for either device. We'll also weigh factors like memory and power consumption to give a fuller picture.

Disclaimer: This benchmark analysis focuses on a limited number of popular models. The actual performance may vary depending on the specific LLM architecture, model size, and your individual configuration.

Performance Analysis

Apple M3 Pro (150 GB/s, 14 GPU cores)

The M3 Pro chip is known for its power efficiency and unified memory, which makes it an attractive option for running LLMs locally. The configuration analyzed here is the M3 Pro with a 14-core GPU and 150 GB/s of memory bandwidth. Note that 150 GB/s is a bandwidth figure, not a memory capacity.

Apple M3 Pro Token Generation Speed

On the M3 Pro, the 4-bit Q4_0 build of Llama 2 7B generates tokens markedly faster than the 8-bit Q8_0 build. Below are the token generation results for Llama 2 7B:

| Model | Tokens/Second |
|---|---|
| Llama 2 7B Q8_0 | 17.44 |
| Llama 2 7B Q4_0 | 30.65 |

Note: We do not have data for the F16 models on the M3 Pro.
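To put the two M3 Pro figures in proportion, a minimal Python sketch can compute the relative speedup. The dictionary and the `speedup` helper are illustrative names, not part of any benchmark tool; the numbers are copied from the table above.

```python
# Throughput figures copied from the M3 Pro table (tokens/second).
m3_pro_llama2_7b = {"Q8_0": 17.44, "Q4_0": 30.65}

def speedup(results, baseline, candidate):
    """Return how many times faster `candidate` is than `baseline`."""
    return results[candidate] / results[baseline]

ratio = speedup(m3_pro_llama2_7b, "Q8_0", "Q4_0")
print(f"Q4_0 is {ratio:.2f}x faster than Q8_0")
```

With these inputs, the ratio comes out at roughly 1.76.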

NVIDIA 4070 Ti 12GB

The NVIDIA 4070 Ti is a dedicated GPU with a massive amount of parallel processing power. It's a popular choice for AI workloads, including LLM inference.

NVIDIA 4070 Ti Token Generation Speed

The 4070 Ti generates 82.21 tokens/second on Llama 3 8B Q4_K_M. That is well above the M3 Pro's 30.65 tokens/second on Llama 2 7B Q4_0, although the two figures come from different models and quantization formats, so this is not a like-for-like comparison:

| Model | Tokens/Second |
|---|---|
| Llama 3 8B Q4_K_M | 82.21 |

Note: We do not have data for Llama 3 70B, Llama 3 8B F16, and Llama 2 models on the 4070 Ti.

Comparison of Apple M3 Pro and NVIDIA 4070 Ti

Both the Apple M3 Pro and NVIDIA 4070 Ti have their strengths and weaknesses. Here's a breakdown of their core differences:

Apple M3 Pro:

- Strengths:
  - Solid token generation speed with quantized LLMs (30.65 tokens/second on Llama 2 7B Q4_0).
  - High efficiency, leading to lower power consumption and better thermal management.
  - Unified memory shared by the CPU and GPU, so model weights do not have to be copied into separate video memory.
- Weaknesses:
  - No benchmark data here for larger models or F16 weights.
  - Less processing power than dedicated GPUs like the 4070 Ti.

NVIDIA 4070 Ti:

- Strengths:
  - The highest token generation speed measured here (82.21 tokens/second on Llama 3 8B Q4_K_M).
  - Advanced GPU architecture optimized for AI workloads.
  - Extensive software support for AI development.
- Weaknesses:
  - Only one benchmark result is available, so there is no like-for-like comparison against the M3 Pro on the same model.
  - Higher power consumption and potential for thermal throttling.
  - The 12GB of VRAM caps the model size; anything larger has to spill into system RAM, which can lead to performance bottlenecks.
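One way to reason about the memory points above: token generation is often limited by how fast the weights can be read from memory, so bandwidth divided by model size gives a rough ceiling on tokens/second. The sketch below assumes published bandwidth figures of 150 GB/s for this M3 Pro and about 504 GB/s for the 4070 Ti, and approximate model file sizes of 3.8 GB (Llama 2 7B Q4_0) and 4.9 GB (Llama 3 8B Q4_K_M). None of these inputs come from the benchmark itself; treat them as assumptions.

```python
# Assumed inputs (not from the benchmark): published memory bandwidth in GB/s
# and approximate model file sizes in GB. Measured figures are from the article.
DEVICES = {
    "M3 Pro": {"bandwidth_gb_s": 150.0, "model_gb": 3.8, "measured_tps": 30.65},
    "4070 Ti": {"bandwidth_gb_s": 504.0, "model_gb": 4.9, "measured_tps": 82.21},
}

def bandwidth_ceiling(bandwidth_gb_s, model_gb):
    """Rough upper bound on tokens/second if each token reads all weights once."""
    return bandwidth_gb_s / model_gb

report = {}
for name, d in DEVICES.items():
    ceiling = bandwidth_ceiling(d["bandwidth_gb_s"], d["model_gb"])
    report[name] = {"ceiling": ceiling, "fraction": d["measured_tps"] / ceiling}
    print(f"{name}: ceiling ~{ceiling:.0f} tok/s, measured {d['measured_tps']} "
          f"({report[name]['fraction']:.0%} of ceiling)")
```

Under these assumptions, both devices land at a similar fraction of their ceiling, which suggests that the gap between the measured figures largely tracks memory bandwidth.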

Practical Recommendations

The best choice between the Apple M3 Pro and NVIDIA 4070 Ti depends on your specific needs:

- For running smaller, quantized LLMs like Llama 2 7B with low power draw: The Apple M3 Pro reaches 30.65 tokens/second at Q4_0 with better energy efficiency. It suits chatbots, text generation, and other applications where efficiency matters as much as speed.

- For maximum throughput on models that fit in 12GB of VRAM, like Llama 3 8B Q4_K_M: The NVIDIA 4070 Ti delivers 82.21 tokens/second, the highest figure in this data. It's a good choice for heavier workloads like code generation, scientific research, and applications requiring advanced language understanding.

FAQ

How do I choose the best device for my LLM needs?

Consider your specific LLM model, the size of the data you'll be working with, the level of performance you require, and your budget. If power efficiency matters most and you run small, quantized models, the M3 Pro is a good option. If throughput matters most and your model fits in 12GB of VRAM, the 4070 Ti is the faster choice in this data.
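As a first filter when choosing, it helps to check whether a model's weights fit in the device's memory at all. The sketch below estimates weight size as parameters times bits per weight, using assumed values: about 8.03 billion parameters for Llama 3 8B, and 16, 8.5, and roughly 4.85 bits per weight for F16, Q8_0, and Q4_K_M. The 1.5 GB reserve for context and runtime overhead is a guess, not a measured value.

```python
def weights_gb(params_billion, bits_per_weight):
    """Approximate weight size in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8

def fits(params_billion, bits_per_weight, memory_gb, reserve_gb=1.5):
    """True if the weights plus an assumed reserve fit in memory_gb."""
    return weights_gb(params_billion, bits_per_weight) + reserve_gb <= memory_gb

# Assumed bits per weight for each format; Q4_K_M is an approximate average.
FORMATS = {"F16": 16.0, "Q8_0": 8.5, "Q4_K_M": 4.85}
VRAM_GB = 12.0  # NVIDIA 4070 Ti 12GB, as named in the article

results = {name: fits(8.03, bpw, VRAM_GB) for name, bpw in FORMATS.items()}
for name, ok in results.items():
    print(f"Llama 3 8B {name}: {'fits' if ok else 'does not fit'} in {VRAM_GB:.0f} GB")
```

The same function can be called with a different `memory_gb` to check a unified-memory machine, keeping in mind that the operating system and other applications share that pool.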

What are the benefits of quantizing LLM models?

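Quantization stores model weights at lower precision, for example 4 or 8 bits per weight instead of 16. This shrinks the model, which lowers memory requirements and usually speeds up token generation, typically at some cost in output quality. It's a technique commonly used for running LLMs on consumer and low-power devices.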
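The idea can be illustrated with a toy example. The sketch below applies symmetric 8-bit quantization to one small block of weights, storing a single floating-point scale plus small integers. It is a simplified illustration in the spirit of block formats like Q8_0, not the exact on-disk layout any particular library uses, and the weight values are made up.

```python
def quantize_block(weights):
    """Symmetric 8-bit quantization: one float scale plus integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [round(w / scale) for w in weights]
    return scale, quantized

def dequantize_block(scale, quantized):
    """Recover approximate weights from the scale and the stored integers."""
    return [scale * q for q in quantized]

# Made-up example weights for one block.
weights = [0.12, -0.5, 0.33, 0.02, -0.27, 0.41, -0.08, 0.19]
scale, quantized = quantize_block(weights)
restored = dequantize_block(scale, quantized)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(f"scale={scale:.6f}, max round-trip error={max_error:.6f}")
```

Each restored weight differs from the original by at most half a quantization step (`scale / 2`), which is the precision traded away for the smaller footprint.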

What are the limitations of running LLMs locally?

Local LLM inference can be resource-intensive, requiring powerful hardware and significant memory. The performance of local LLM inference may also vary depending on the model size, optimization techniques used, and your device's specifications.
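Because results vary with hardware and configuration, it can be worth measuring throughput on your own machine. Below is a minimal timing sketch: `measure_tokens_per_second` is a hypothetical helper, and `fake_generate` is a stand-in that merely sleeps. In practice you would replace it with a call into whatever inference runtime you use that returns the number of tokens produced.

```python
import time

def measure_tokens_per_second(generate, n_tokens):
    """Time a generate(n_tokens) call and return tokens produced per second."""
    start = time.perf_counter()
    produced = generate(n_tokens)
    elapsed = time.perf_counter() - start
    return produced / elapsed

def fake_generate(n_tokens):
    """Stand-in for a real model call: sleeps about 1 ms per token."""
    for _ in range(n_tokens):
        time.sleep(0.001)
    return n_tokens

rate = measure_tokens_per_second(fake_generate, 50)
print(f"{rate:.1f} tokens/second")
```

For a real model, averaging several runs and discarding the first (which often includes model loading and warm-up) tends to give steadier numbers.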

Are there any other devices suitable for running LLMs locally?

Yes, other devices, such as the Apple M1 Max and the NVIDIA RTX 4090, can also be used for running LLMs locally. However, the performance and efficiency they offer will vary depending on the specific device and the LLM model in use.

What are the future trends in local LLM inference?

The field of local LLM inference is constantly evolving. Future trends include advancements in hardware, software optimization techniques, and new LLM architectures designed for efficient local execution.

Keywords

Apple M3 Pro, NVIDIA 4070 Ti, LLM, large language model, local inference, benchmark analysis, quantized models, token generation speed, processing power, efficiency, memory, GPU, CPU, Llama 2, Llama 3, AI, machine learning, software development, future trends, practical recommendations.