Which is Better for Running LLMs Locally: Apple M3 Pro (150 GB/s, 14 GPU cores) or Apple M3 Max (400 GB/s, 40 GPU cores)? Ultimate Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is exploding, and running these powerful models locally has gone from a distant dream to a tangible reality. But with so many options for hardware, figuring out the best setup can be daunting. This article dives into the performance of two popular Apple chips, the M3 Pro and the M3 Max, when used to power LLMs. We'll analyze their strengths and weaknesses, compare their processing speeds, and provide practical recommendations to help you choose the ideal setup for your specific needs.

Think of LLMs as super-smart chatbots on steroids, capable of generating human-like text, translating languages, writing different kinds of creative content, and answering your questions in an informative way. Even though they are incredibly powerful, running them locally can be resource-intensive, requiring specialized hardware. This is where the M3 chips, specifically the M3 Pro and M3 Max, enter the picture.

Apple M3 Pro vs. M3 Max: A Spec Showdown

Before we dive into the benchmarks, let's take a look at the specs that make these processors tick.

- M3 Pro: The configuration tested here is a mid-range chip with a 14-core GPU and 150 GB/s of memory bandwidth, offering a balance between performance and cost.

- M3 Max: The configuration tested here is the top-of-the-line chip with a 40-core GPU and 400 GB/s of memory bandwidth, making it a beast for demanding tasks like running large LLMs.

For those who don't know, unified memory means the CPU and GPU share the same memory pool, so model weights do not have to be copied between separate CPU and GPU memory. For LLM inference, the amount of memory limits how large a model you can load, and the memory bandwidth (how quickly data can be read from that pool) strongly affects how fast tokens are generated.

Benchmark Analysis: LLM Performance on Different Devices

We'll use the following LLM models for our benchmarking:

- Llama 2 7B: A popular open-source LLM known for its efficiency and performance.

- Llama 3 8B: Another popular open-source LLM with even more impressive capabilities.

- Llama 3 70B: A larger and more complex LLM, demonstrating advanced capabilities.

We'll measure performance in tokens per second (tokens/s), for both prompt processing and text generation, at the following quantization levels:

- F16: 16-bit (half-precision) floating point. This is the unquantized baseline in this comparison, with the highest accuracy and the largest memory footprint of the four formats.

- Q8_0: A quantized version of the model that uses 8 bits to represent each weight. It roughly halves memory usage compared to F16 and speeds up generation, but can lead to a slight reduction in accuracy.

- Q4_0: A more aggressively quantized version that uses only 4 bits to represent each weight. It has the smallest memory footprint and the fastest generation of the formats measured on both chips, but it compromises accuracy slightly more than Q8_0.

- Q4_K_M: A 4-bit "k-quant" format from llama.cpp, where the "M" denotes the medium variant. It keeps some tensors at higher precision and generally aims for better accuracy than Q4_0 at a similar size.
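To make the memory side of these formats concrete, here is a rough sketch that estimates the size of the weights alone for each model. The bits-per-weight figures assume the usual block layout (32 weights sharing one 16-bit scale, so 8.5 and 4.5 bits per weight for Q8_0 and Q4_0), and the parameter counts are the nominal round numbers from the model names, so treat the output as ballpark values. Q4_K_M is left out because its effective bits per weight depends on the tensor mix.

```python
# Approximate bits per weight for each format (assumptions, see the text above).
BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q8_0": 8.5,  # 32 8-bit weights plus one 16-bit scale per block
    "Q4_0": 4.5,  # 32 4-bit weights plus one 16-bit scale per block
}

# Nominal parameter counts read off the model names (round figures, not exact).
PARAMS = {"Llama 2 7B": 7e9, "Llama 3 8B": 8e9, "Llama 3 70B": 70e9}


def weight_size_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GB (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


for model, n_params in PARAMS.items():
    sizes = ", ".join(
        f"{fmt}: {weight_size_gb(n_params, bits):.1f} GB"
        for fmt, bits in BITS_PER_WEIGHT.items()
    )
    print(f"{model}: {sizes}")
```

The estimate ignores the KV cache and runtime overhead, so the real memory requirement is somewhat higher than what this prints.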

Here's a breakdown of our key findings:

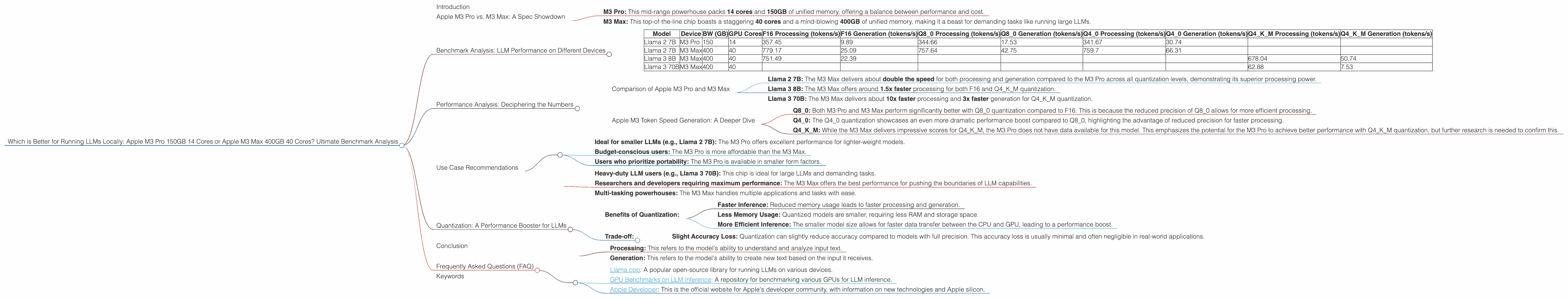

Table 1: M3 Pro vs. M3 Max LLM Performance Comparison

| Model | Device | BW (GB/s) | GPU Cores | F16 Processing (tokens/s) | F16 Generation (tokens/s) | Q8_0 Processing (tokens/s) | Q8_0 Generation (tokens/s) | Q4_0 Processing (tokens/s) | Q4_0 Generation (tokens/s) | Q4_K_M Processing (tokens/s) | Q4_K_M Generation (tokens/s) |
|---|---|---|---|---|---|---|---|---|---|---|---|
| Llama 2 7B | M3 Pro | 150 | 14 | 357.45 | 9.89 | 344.66 | 17.53 | 341.67 | 30.74 | | |
| Llama 2 7B | M3 Max | 400 | 40 | 779.17 | 25.09 | 757.64 | 42.75 | 759.7 | 66.31 | | |
| Llama 3 8B | M3 Max | 400 | 40 | 751.49 | 22.39 | | | | | 678.04 | 50.74 |
| Llama 3 70B | M3 Max | 400 | 40 | | | | | | | 62.88 | 7.53 |

Note: The "BW" column in Table 1 refers to the memory bandwidth, which is 150 GB/s for the M3 Pro and 400 GB/s for the M3 Max. Empty cells mean no measurement was available for that combination.

Performance Analysis: Deciphering the Numbers

Comparison of Apple M3 Pro and M3 Max

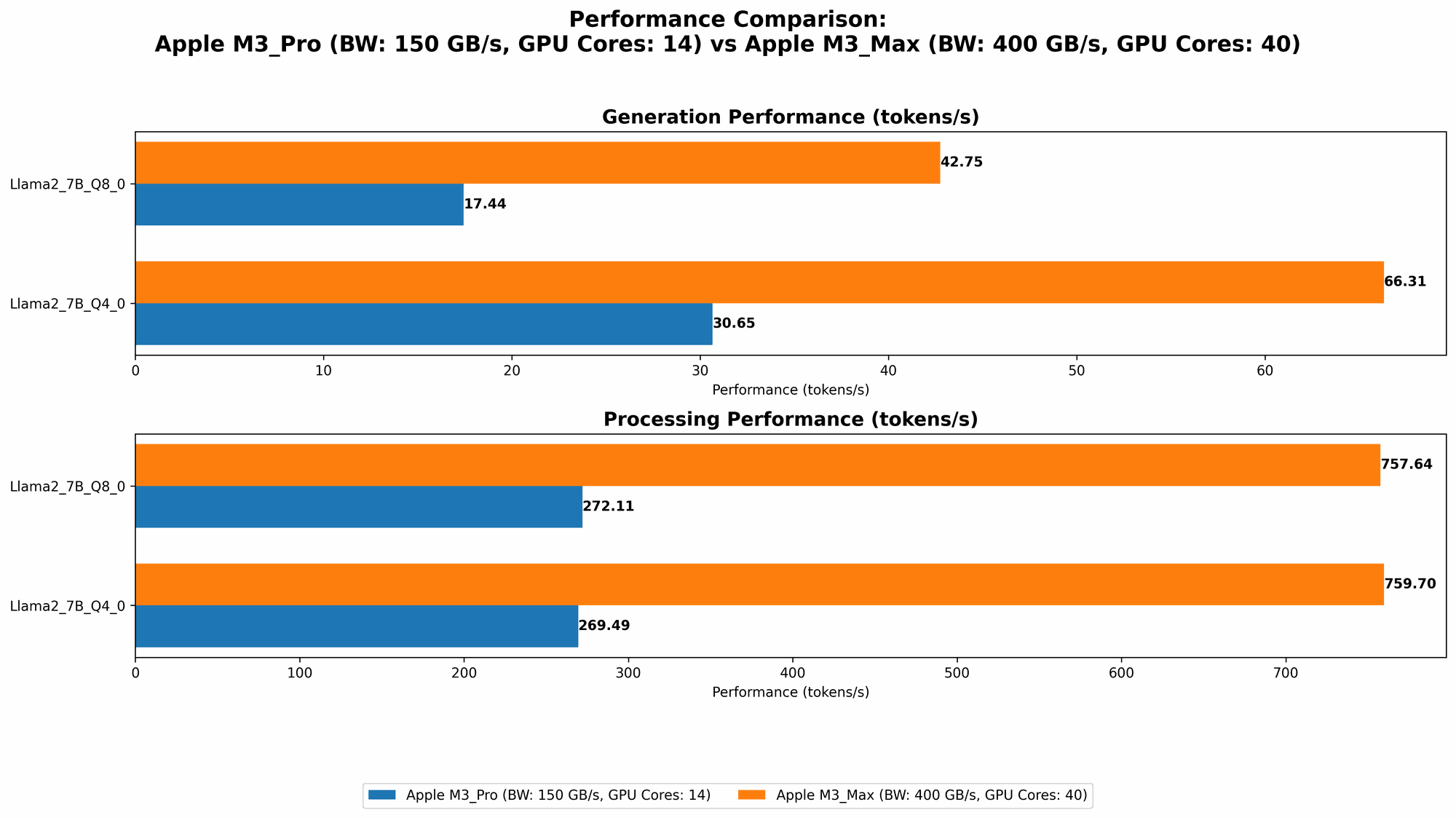

The results clearly show that the M3 Max, with its 40 GPU cores and 400 GB/s of memory bandwidth, significantly outperforms the M3 Pro in both prompt processing and token generation speed.

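- Llama 2 7B: The M3 Max is roughly 2.2x faster at processing and 2.2x to 2.5x faster at generation than the M3 Pro across F16, Q8_0, and Q4_0 (for example, 66.31 vs. 30.74 tokens/s generation at Q4_0).

- Llama 3 8B: Table 1 has results for the M3 Max only: 751.49 tokens/s processing and 22.39 tokens/s generation at F16, and 678.04 tokens/s processing and 50.74 tokens/s generation with 4-bit Q4_K_M quantization. Without M3 Pro figures, a direct speedup cannot be computed for this model.

- Llama 3 70B: Again only M3 Max results are available, with 4-bit Q4_K_M quantization: 62.88 tokens/s processing and 7.53 tokens/s generation. On the same chip and quantization, that is about 11x slower at processing and about 7x slower at generation than Llama 3 8B.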
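The Llama 2 7B comparison can be reproduced directly from Table 1. The short sketch below divides the M3 Max figures by the M3 Pro figures for each format; the dictionary names and the `speedups` helper are just illustrative.

```python
# (processing, generation) tokens/s for Llama 2 7B, copied from Table 1.
M3_PRO = {"F16": (357.45, 9.89), "Q8_0": (344.66, 17.53), "Q4_0": (341.67, 30.74)}
M3_MAX = {"F16": (779.17, 25.09), "Q8_0": (757.64, 42.75), "Q4_0": (759.7, 66.31)}


def speedups(slow, fast):
    """Return {format: (processing ratio, generation ratio)} of fast over slow."""
    return {
        fmt: (fast[fmt][0] / slow[fmt][0], fast[fmt][1] / slow[fmt][1])
        for fmt in slow
    }


for fmt, (processing, generation) in speedups(M3_PRO, M3_MAX).items():
    print(f"{fmt}: processing {processing:.2f}x, generation {generation:.2f}x")
```

Only formats measured on both chips are included, which is why the Llama 3 models do not appear here.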

Apple M3 Token Speed Generation: A Deeper Dive

Quantization mainly speeds up token generation on both chips. Prompt processing stays roughly flat, and in Table 1 it is even marginally slower than F16.

- Q8_0: Both M3 Pro and M3 Max generate about 1.7x to 1.8x faster with Q8_0 than with F16 (17.53 vs. 9.89 tokens/s on the M3 Pro, 42.75 vs. 25.09 tokens/s on the M3 Max). Generation is largely limited by how fast the weights can be read from memory, and Q8_0 weights are about half the size of F16 weights. Processing speed drops slightly (for example, 344.66 vs. 357.45 tokens/s on the M3 Pro).

- Q4_0: Generation improves again over Q8_0, by about 1.75x on the M3 Pro (30.74 tokens/s) and about 1.55x on the M3 Max (66.31 tokens/s), while processing speed is essentially unchanged.

- Q4_K_M: Table 1 has Q4_K_M results only for the M3 Max, and only for the Llama 3 models. No M3 Pro data is available for this format, so no conclusion can be drawn about the M3 Pro here. Further testing is needed.
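One common way to reason about generation speed is a memory-bandwidth ceiling: if every weight has to be read once per generated token, tokens/s cannot exceed bandwidth divided by the size of the weights. The sketch below applies that rule of thumb to the F16 results for Llama 2 7B. It assumes a nominal 7 billion parameters and ignores the KV cache and compute time, so it is a rough sanity check rather than a performance model.

```python
def generation_ceiling(bandwidth_gb_s: float, n_params: float, bits_per_weight: float) -> float:
    """Rough upper bound on tokens/s if every weight is read once per token."""
    weight_gb = n_params * bits_per_weight / 8 / 1e9
    return bandwidth_gb_s / weight_gb


N_PARAMS = 7e9  # nominal size of Llama 2 7B (assumption)

# (bandwidth in GB/s, measured F16 generation in tokens/s) from Table 1
DEVICES = {"M3 Pro": (150, 9.89), "M3 Max": (400, 25.09)}

for name, (bandwidth, measured) in DEVICES.items():
    ceiling = generation_ceiling(bandwidth, N_PARAMS, 16)
    print(
        f"{name}: ceiling {ceiling:.1f} tokens/s, measured {measured} "
        f"({measured / ceiling:.0%} of ceiling)"
    )
```

Under these assumptions the measured F16 generation speeds land close to the ceiling on both chips, which is consistent with generation being bandwidth-bound and with smaller quantized weights generating faster.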

Use Case Recommendations

Choosing between the M3 Pro and M3 Max depends primarily on your needs, budget, and the specific LLM you plan to run.

M3 Pro:

- Ideal for smaller LLMs (e.g., Llama 2 7B): The M3 Pro generates about 31 tokens/s with Llama 2 7B at Q4_0, which is comfortably usable for interactive chat.

- Budget-conscious users: The M3 Pro is more affordable than the M3 Max.

- Users who prioritize portability: The M3 Pro is available in smaller form factors.

M3 Max:

- Heavy-duty LLM users (e.g., Llama 3 70B): This chip is ideal for large LLMs and demanding tasks.

- Researchers and developers requiring maximum performance: The M3 Max offers the best performance for pushing the boundaries of LLM capabilities.

- Multi-tasking powerhouses: The M3 Max handles multiple applications and tasks with ease.

Quantization: A Performance Booster for LLMs

Quantization is a technique that reduces the memory footprint of a model by using lower precision data types. Think of it as taking a high-resolution image and compressing it to save space. This allows LLMs to run faster and with lower memory usage, making them suitable for more affordable hardware.

- Benefits of Quantization:

  - Faster Generation: Each generated token requires reading the model weights from memory, so smaller weights mean faster generation. In Table 1, Llama 2 7B generation on the M3 Pro rises from 9.89 tokens/s at F16 to 30.74 tokens/s at Q4_0. Prompt processing speed stays roughly the same.

  - Less Memory Usage: Quantized models are smaller, requiring less RAM and storage space.

  - More Efficient Use of Bandwidth: With unified memory there is no CPU-to-GPU copy to speed up. The gain comes from moving fewer bytes from memory to the compute units for every token.

- Trade-off:

  - Slight Accuracy Loss: Quantization can slightly reduce accuracy compared to models with full precision. This accuracy loss is usually small at 8 bits and grows as the bit width shrinks.
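To show where both the savings and the accuracy loss come from, here is a minimal sketch of symmetric 8-bit block quantization, similar in spirit to Q8_0 but not llama.cpp's actual implementation. A block of 32 floats is stored as 32 small integers plus one shared scale; the function names and the random test data are purely illustrative.

```python
import random

BLOCK_SIZE = 32  # number of values sharing one scale (assumption for this sketch)


def quantize_block(values):
    """Map floats to integer codes in [-127, 127] plus one shared scale."""
    amax = max(abs(v) for v in values)
    scale = amax / 127 if amax > 0 else 1.0
    codes = [round(v / scale) for v in values]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from the integer codes."""
    return [scale * c for c in codes]


random.seed(0)
weights = [random.uniform(-1, 1) for _ in range(BLOCK_SIZE)]
scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(f"scale={scale:.5f}, max round-trip error={max_error:.5f}")
```

The round-trip error is bounded by half the scale, which is the "slight accuracy loss" in miniature. A 4-bit format uses the same idea with far fewer integer levels, so the error per weight is larger.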

Conclusion

In the quest for optimal LLM performance on Apple silicon, the M3 Max emerges as the champion for those who demand the best possible speed and power. However, the M3 Pro holds its own when it comes to smaller LLMs, affordability, and portability.

Choosing the right device boils down to the specific needs of your project. If you're working with large LLMs, pushing the limits of AI development, or simply seeking the fastest experience possible, the M3 Max is the clear winner. However, if you're focused on budget, you'll find the M3 Pro a solid choice for smaller LLMs and everyday tasks.

Frequently Asked Questions (FAQ)

What are LLMs, and why should I care?

LLMs are basically super-intelligent chatbots that can understand and generate human-like text. They're revolutionizing industries from content creation and language translation to customer service and coding.

What's the difference between processing and generation in LLMs?

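- Processing: Also called prompt processing. This is how quickly the model reads the input text (the prompt) before it starts answering, measured in tokens/s.

- Generation: This is how quickly the model produces new output tokens, one at a time, after the prompt has been processed. It is also measured in tokens/s and is usually much lower than the processing speed.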
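The two numbers combine into a simple estimate of how long a full response takes: time to read the prompt plus time to write the reply. The sketch below uses the Llama 2 7B Q4_0 rates from Table 1; the 512-token prompt and 256-token reply are arbitrary example sizes, and real runs add some overhead for model loading and sampling.

```python
def response_time_s(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Estimated seconds: time to read the prompt plus time to write the reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Llama 2 7B at Q4_0: (processing, generation) tokens/s from Table 1
RATES = {"M3 Pro": (341.67, 30.74), "M3 Max": (759.7, 66.31)}

for device, (processing, generation) in RATES.items():
    seconds = response_time_s(
        prompt_tokens=512,
        output_tokens=256,
        processing_tps=processing,
        generation_tps=generation,
    )
    print(f"{device}: about {seconds:.1f} s for a 512-token prompt and a 256-token reply")
```

For chat-sized prompts, generation dominates the total, which is why the generation column is usually the one to watch when picking hardware.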

Are both the M3 Pro and M3 Max suitable for all LLMs?

While both chips are capable, larger LLMs need more memory, more memory bandwidth, and more compute. The M3 Max is better suited for larger models like Llama 3 70B, while the M3 Pro is a good fit for smaller models like Llama 2 7B.

How can I find more information about LLMs and Apple silicon?

You can delve deeper into the world of LLMs and Apple silicon by exploring resources like:

- Llama.cpp: A popular open-source library for running LLMs on various devices.

- GPU Benchmarks on LLM Inference: A repository for benchmarking various GPUs for LLM inference.

- Apple Developer: This is the official website for Apple's developer community, with information on new technologies and Apple silicon.

Keywords

LLM, Apple M3 Pro, Apple M3 Max, token speed, quantization, F16, Q8_0, Q4_0, Q4_K_M, Llama 2 7B, Llama 3 8B, Llama 3 70B, performance comparison, benchmark, inference, GPU, CPU, memory, bandwidth, processing, generation, use cases, recommendations, FAQ, Apple silicon, local LLMs, open-source, AI, machine learning, deep learning, natural language processing, conversational AI, chatbots.