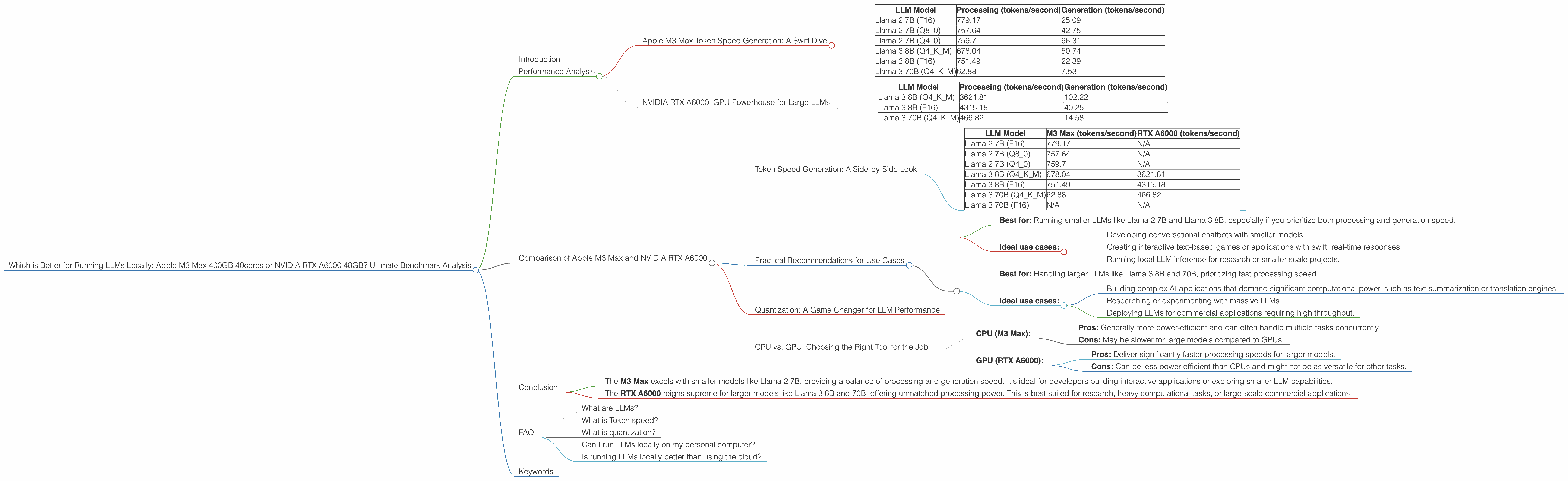

Which is Better for Running LLMs Locally: Apple M3 Max (400 GB/s, 40 GPU Cores) or NVIDIA RTX A6000 48GB? Ultimate Benchmark Analysis

Introduction

Running large language models (LLMs) locally is becoming increasingly popular, allowing users to enjoy the power of AI without relying on cloud services. This empowers developers to leverage LLMs for creative projects, research, and personalized applications. However, choosing the right hardware for efficient LLM execution can be tricky, especially considering the vast range of options available.

This article compares two popular hardware choices for local LLM deployment head to head: the Apple M3 Max (40-core GPU, 400 GB/s memory bandwidth) and the NVIDIA RTX A6000 48GB. We'll analyze their performance on several LLMs and look at their strengths and weaknesses. By the end, you'll have a clear picture of which device suits your specific LLM needs, whether you're experimenting with smaller models or tackling 70B-parameter behemoths.

Performance Analysis

Apple M3 Max Token Speed Generation: A Swift Dive

The Apple M3 Max is a fast and power-efficient system-on-chip. The configuration tested here has a 40-core GPU and 400 GB/s of unified memory bandwidth (the "40cores" and "400GB" in its label refer to these, not to CPU cores or memory capacity), which gives it a solid foundation for the demanding computations required by LLMs.

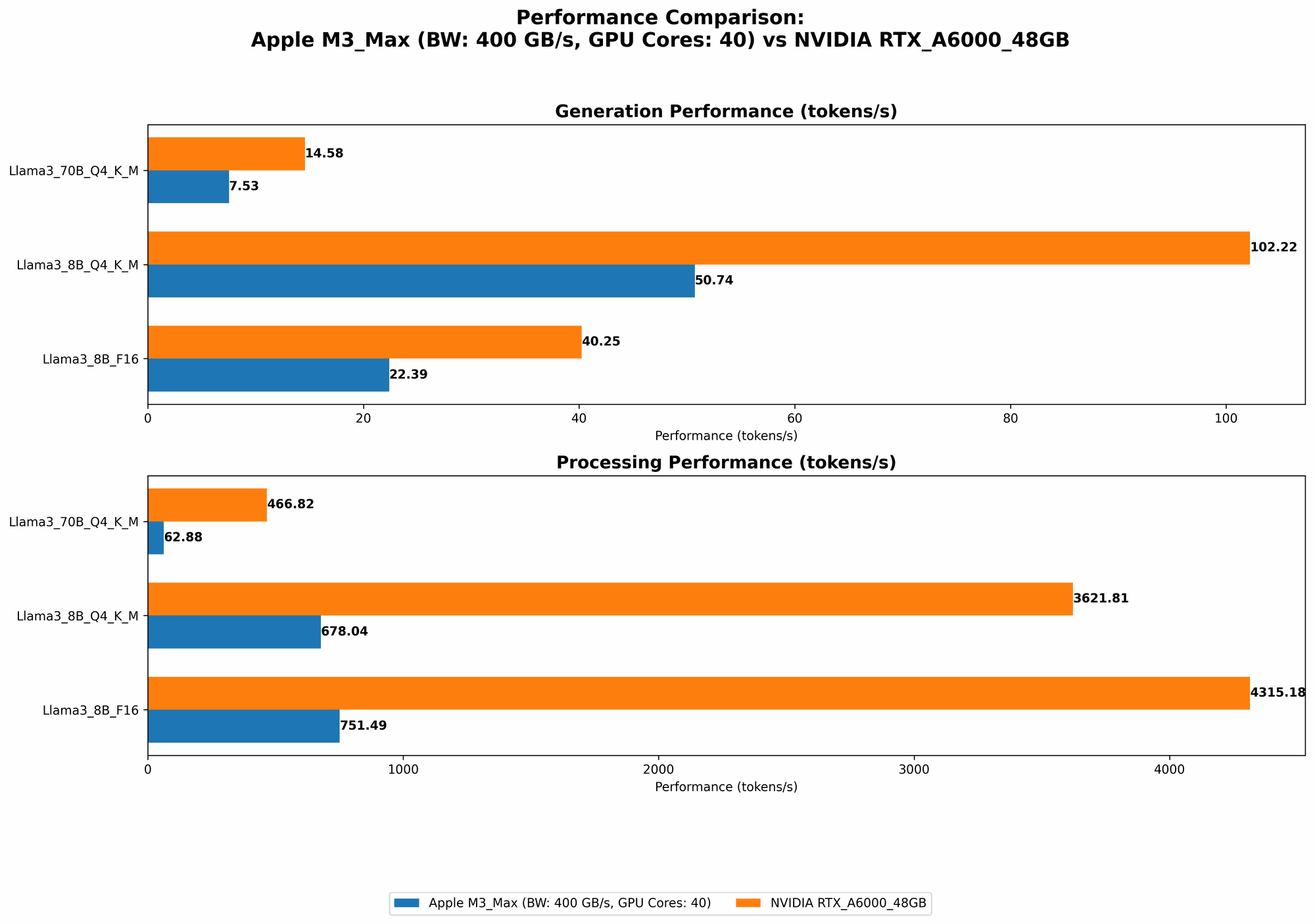

Let's delve into the token speed for different LLMs on the M3 Max, revealing its proficiency:

| LLM Model | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|
| Llama 2 7B (F16) | 779.17 | 25.09 |
| Llama 2 7B (Q8_0) | 757.64 | 42.75 |
| Llama 2 7B (Q4_0) | 759.7 | 66.31 |
| Llama 3 8B (Q4KM) | 678.04 | 50.74 |
| Llama 3 8B (F16) | 751.49 | 22.39 |
| Llama 3 70B (Q4KM) | 62.88 | 7.53 |

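As you can see, the M3 Max holds a steady prompt-processing speed of roughly 680-780 tokens per second on the smaller models (Llama 2 7B and Llama 3 8B), almost regardless of quantization. Generation speed, by contrast, depends heavily on quantization: for Llama 2 7B it rises from 25.09 tokens/second at F16 to 66.31 at Q4_0. On Llama 3 70B (Q4KM) both figures drop sharply, to 62.88 and 7.53 tokens/second. Its generation speeds are roughly half those of the RTX A6000 on every model measured on both devices.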
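To make the effect of quantization in the table concrete, here is a minimal sketch that computes processing and generation speed relative to F16 for Llama 2 7B. The numbers are copied from the table above; the dictionary and function names are illustrative only.

```python
# Llama 2 7B on the M3 Max: (processing, generation) in tokens/second,
# copied from the table above.
M3_MAX_LLAMA2_7B = {
    "F16": (779.17, 25.09),
    "Q8_0": (757.64, 42.75),
    "Q4_0": (759.70, 66.31),
}


def speedup_vs_f16(results):
    """Return {quant: (processing_ratio, generation_ratio)} relative to F16."""
    base_processing, base_generation = results["F16"]
    return {
        quant: (processing / base_processing, generation / base_generation)
        for quant, (processing, generation) in results.items()
    }


if __name__ == "__main__":
    for quant, (pp, tg) in speedup_vs_f16(M3_MAX_LLAMA2_7B).items():
        print(f"{quant}: processing x{pp:.2f}, generation x{tg:.2f}")
```

The processing ratio stays close to 1 while the generation ratio grows as the weights shrink, which is the pattern described above.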

NVIDIA RTX A6000: GPU Powerhouse for Large LLMs

The NVIDIA RTX A6000 48GB is a dedicated GPU designed for high-performance computing tasks, including machine learning and AI applications. Its powerful architecture and ample memory make it a compelling choice for running LLMs locally.

Here's how the RTX A6000 performs with various LLM models:

| LLM Model | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|
| Llama 3 8B (Q4KM) | 3621.81 | 102.22 |
| Llama 3 8B (F16) | 4315.18 | 40.25 |
| Llama 3 70B (Q4KM) | 466.82 | 14.58 |

As the table shows, the RTX A6000 is far faster at prompt processing than the M3 Max: it exceeds 4,000 tokens/second for Llama 3 8B at F16 precision and still reaches 466.82 tokens/second on Llama 3 70B (Q4KM). Its generation speeds are also roughly twice those of the M3 Max on the same models (for example, 102.22 versus 50.74 tokens/second for Llama 3 8B Q4KM). No Llama 2 7B results are available for this card.

Comparison of Apple M3 Max and NVIDIA RTX A6000

Token Speed Generation: A Side-by-Side Look

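| LLM Model | M3 Max processing (tokens/second) | M3 Max generation (tokens/second) | RTX A6000 processing (tokens/second) | RTX A6000 generation (tokens/second) |
|---|---|---|---|---|
| Llama 2 7B (F16) | 779.17 | 25.09 | N/A | N/A |
| Llama 2 7B (Q8_0) | 757.64 | 42.75 | N/A | N/A |
| Llama 2 7B (Q4_0) | 759.7 | 66.31 | N/A | N/A |
| Llama 3 8B (Q4KM) | 678.04 | 50.74 | 3621.81 | 102.22 |
| Llama 3 8B (F16) | 751.49 | 22.39 | 4315.18 | 40.25 |
| Llama 3 70B (Q4KM) | 62.88 | 7.53 | 466.82 | 14.58 |
| Llama 3 70B (F16) | N/A | N/A | N/A | N/A |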

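The benchmarks highlight the strengths and weaknesses of both devices. Wherever both were measured (Llama 3 8B and 70B), the RTX A6000 is faster: roughly 5 to 7 times faster at prompt processing and roughly twice as fast at generation. The M3 Max still delivers interactive generation speeds on 7B and 8B models, around 50 to 66 tokens/second at 4-bit quantization, which keeps it a practical choice for smaller LLMs. Note that no Llama 2 7B results are available for the RTX A6000, and neither device has a result for Llama 3 70B at F16.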
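The size of the gap can be checked directly from the benchmark figures. The sketch below divides each RTX A6000 result by the matching M3 Max result; the data is copied from the tables in this article, and the names used are illustrative.

```python
# (processing, generation) in tokens/second, copied from the tables above.
M3_MAX = {
    "Llama 3 8B (Q4KM)": (678.04, 50.74),
    "Llama 3 8B (F16)": (751.49, 22.39),
    "Llama 3 70B (Q4KM)": (62.88, 7.53),
}
RTX_A6000 = {
    "Llama 3 8B (Q4KM)": (3621.81, 102.22),
    "Llama 3 8B (F16)": (4315.18, 40.25),
    "Llama 3 70B (Q4KM)": (466.82, 14.58),
}


def a6000_advantage(model):
    """Return (processing_ratio, generation_ratio) of RTX A6000 over M3 Max."""
    m3_processing, m3_generation = M3_MAX[model]
    a6000_processing, a6000_generation = RTX_A6000[model]
    return a6000_processing / m3_processing, a6000_generation / m3_generation


if __name__ == "__main__":
    for model in M3_MAX:
        processing_ratio, generation_ratio = a6000_advantage(model)
        print(f"{model}: processing x{processing_ratio:.2f}, "
              f"generation x{generation_ratio:.2f}")
```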

Practical Recommendations for Use Cases

Apple M3 Max (40-core GPU, 400 GB/s):

- Best for: Running smaller LLMs like Llama 2 7B and Llama 3 8B, where its generation speed of roughly 50 to 66 tokens/second at 4-bit quantization is comfortably interactive.

- Ideal use cases:

  - Developing conversational chatbots with smaller models.

  - Creating interactive text-based games or applications with swift, real-time responses.

  - Running local LLM inference for research or smaller-scale projects.

NVIDIA RTX A6000 48GB:

- Best for: Handling larger LLMs like Llama 3 8B and 70B, and any workload where fast prompt processing is the priority.

- Ideal use cases:

  - Building complex AI applications that demand significant computational power, such as text summarization or translation engines.

  - Researching or experimenting with massive LLMs.

  - Deploying LLMs for commercial applications requiring high throughput.

Quantization: A Game Changer for LLM Performance

Quantization is a technique that reduces the size of an LLM and improves its performance on memory-constrained devices such as the M3 Max. It converts the model's weights from 16-bit floating-point numbers (F16) to lower-precision integer formats (for example, 8-bit Q8_0 or 4-bit Q4_0), trading a small amount of accuracy for a smaller memory footprint.

As the M3 Max results show, quantizing Llama 2 7B leaves processing speed almost unchanged (about 760-780 tokens/second) but raises generation speed from 25.09 tokens/second at F16 to 42.75 at Q8_0 and 66.31 at Q4_0. This illustrates how quantization can significantly improve the efficiency of LLMs on devices with limited memory.
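As an illustration of the idea, here is a minimal sketch of symmetric 8-bit block quantization in plain Python. It is loosely modeled on block formats such as Q8_0 (one scale per block of weights) but is not the exact on-disk layout of any particular runtime; the block size of 32 and all function names are assumptions made for this example.

```python
import math

BLOCK_SIZE = 32  # assumed block length for this sketch


def quantize_block(weights):
    """Map one block of floats to (scale, list of ints in [-127, 127])."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quants = [max(-127, min(127, round(w / scale))) for w in weights]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate floats from a quantized block."""
    return [scale * q for q in quants]


def quantize(weights, block_size=BLOCK_SIZE):
    """Quantize a flat list of weights block by block."""
    return [
        quantize_block(weights[start:start + block_size])
        for start in range(0, len(weights), block_size)
    ]


def dequantize(blocks):
    """Concatenate the dequantized blocks back into one flat list."""
    restored = []
    for scale, quants in blocks:
        restored.extend(dequantize_block(scale, quants))
    return restored


if __name__ == "__main__":
    weights = [math.sin(i) * 0.1 for i in range(64)]  # stand-in for real weights
    restored = dequantize(quantize(weights))
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"largest round-trip error: {worst:.6f}")
```

Each weight is stored as a small integer plus a shared per-block scale, so the round-trip error per weight is at most half of that block's scale. That bounded loss of precision is the price paid for the smaller footprint.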

CPU vs. GPU: Choosing the Right Tool for the Job

The two devices take different architectural approaches. The M3 Max is a system-on-chip whose CPU and integrated GPU share a single pool of unified memory, and common local-inference runtimes typically run the model on that integrated GPU. The RTX A6000 is a discrete GPU with its own 48 GB of dedicated memory. Each option has its advantages and disadvantages:

Integrated chip (M3 Max):

- Pros: Generally more power-efficient, and can often handle multiple tasks concurrently.

- Cons: Slower than a dedicated GPU, most noticeably in prompt processing and on large models.

Dedicated GPU (RTX A6000):

- Pros: Delivers significantly faster processing speeds, especially for larger models.

- Cons: Can be less power-efficient and might not be as versatile for other tasks.

Ultimately, the best choice between the two depends on your specific LLM model and use case.
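One rough way to reason about generation speed on either device is a memory-bandwidth bound: if producing each token requires reading every weight once, generation cannot exceed bandwidth divided by model size. The sketch below applies that simplification. The bandwidth figures (400 GB/s for the M3 Max, 768 GB/s for the RTX A6000) and the approximate 13.5 GB size of Llama 2 7B at F16 are assumptions taken from published specifications and simple arithmetic, not from the benchmarks in this article.

```python
# Assumed figures, not taken from the benchmark tables in this article.
M3_MAX_BANDWIDTH_GB_S = 400.0     # assumed memory bandwidth of the tested M3 Max
RTX_A6000_BANDWIDTH_GB_S = 768.0  # assumed memory bandwidth of the RTX A6000
LLAMA2_7B_F16_SIZE_GB = 13.5      # roughly 6.7B parameters x 2 bytes each


def bandwidth_bound_tokens_per_second(bandwidth_gb_s, model_size_gb):
    """Upper bound on generation speed if each token streams all weights once."""
    if model_size_gb <= 0:
        raise ValueError("model_size_gb must be positive")
    return bandwidth_gb_s / model_size_gb


if __name__ == "__main__":
    bound = bandwidth_bound_tokens_per_second(
        M3_MAX_BANDWIDTH_GB_S, LLAMA2_7B_F16_SIZE_GB
    )
    measured = 25.09  # M3 Max, Llama 2 7B (F16), from the table above
    print(f"M3 Max bound: {bound:.1f} tokens/second, measured: {measured}")
    print(f"fraction of bound reached: {measured / bound:.0%}")
```

Under these assumptions the measured M3 Max figure sits below the bound, as expected. Treat the result as a sanity check rather than a prediction, since real runtimes add overhead that this model ignores.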

Conclusion

The choice between the Apple M3 Max and the NVIDIA RTX A6000 for running LLMs locally hinges on your model size and performance priorities.

The M3 Max excels with smaller models like Llama 2 7B, providing a balance of processing and generation speed. It's ideal for developers building interactive applications or exploring smaller LLM capabilities.

The RTX A6000 reigns supreme for larger models like Llama 3 8B and 70B, offering unmatched processing power. This is best suited for research, heavy computational tasks, or large-scale commercial applications.

Remember, quantizing LLM models can drastically improve performance on devices with limited resources, making the M3 Max a viable option for a wider range of models when optimized correctly.

FAQ

What are LLMs?

LLMs, or large language models, are a type of artificial intelligence capable of understanding and generating human-like text. They are trained on massive datasets of text and code, enabling them to perform tasks like summarization, translation, and creative writing.

What is token speed?

Token speed is the number of tokens a device can process or generate per second. Tokens are the building blocks of text in LLMs, representing individual words or parts of words. Processing speed measures how quickly the device reads the input prompt, while generation speed measures how quickly it produces new output tokens. Both are crucial metrics for evaluating LLM hardware.
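To see how the two speeds combine into a response time, here is a minimal sketch that estimates the total time for one request as prompt tokens divided by processing speed plus output tokens divided by generation speed. The prompt and output lengths (1,000 and 200 tokens) are hypothetical, and the estimate ignores model loading and other overhead.

```python
def estimate_response_seconds(prompt_tokens, output_tokens,
                              processing_tps, generation_tps):
    """Estimate seconds to read a prompt and generate a reply."""
    prompt_time = prompt_tokens / processing_tps
    generation_time = output_tokens / generation_tps
    return prompt_time + generation_time


if __name__ == "__main__":
    # Llama 3 8B (Q4KM) speeds from the benchmark tables in this article.
    devices = {
        "M3 Max": (678.04, 50.74),
        "RTX A6000": (3621.81, 102.22),
    }
    for name, (processing_tps, generation_tps) in devices.items():
        seconds = estimate_response_seconds(1000, 200,
                                            processing_tps, generation_tps)
        print(f"{name}: about {seconds:.1f} s for 1000 prompt + 200 output tokens")
```

For short prompts the generation term dominates, so generation speed matters most in chat-style use. Long prompts shift the weight toward processing speed.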

What is quantization?

Quantization is a technique that reduces the size of LLM models by converting their weights from high-precision floating-point numbers to lower-precision integer formats. This allows models to run more efficiently on devices with limited memory, such as the M3 Max.

Can I run LLMs locally on my personal computer?

Yes, you can run LLMs locally on your computer, depending on its specifications. However, running larger models might require a powerful CPU or GPU and sufficient memory to provide optimal performance.
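A quick way to judge whether a model is likely to fit is to estimate its weight size as parameters times bits per weight. The sketch below does this. The bits-per-weight values (16 for F16, 8.5 for Q8_0, 4.5 for Q4_0) and the 10% allowance for context and runtime overhead are assumptions chosen for illustration, not measurements.

```python
# Assumed bits per weight, including per-block scale overhead for quantized formats.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def estimate_size_gb(params_billion, bits_per_weight):
    """Approximate weight size in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8.0


def fits_in_memory(params_billion, bits_per_weight, memory_gb, overhead=1.10):
    """Rough check; `overhead` is a hypothetical allowance for context and buffers."""
    return estimate_size_gb(params_billion, bits_per_weight) * overhead <= memory_gb


if __name__ == "__main__":
    for params in (7, 70):
        for quant, bits in BITS_PER_WEIGHT.items():
            size = estimate_size_gb(params, bits)
            verdict = "fits" if fits_in_memory(params, bits, 48.0) else "does not fit"
            print(f"{params}B {quant}: ~{size:.1f} GB, {verdict} in 48 GB")
```

Under these assumptions a 70B model at F16 needs about 140 GB for weights alone, far more than 48 GB, while a 4-bit version comes in under that limit.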

Is running LLMs locally better than using the cloud?

There are pros and cons to both approaches. Running LLMs locally offers greater control and privacy over your data, while using the cloud can provide access to more powerful infrastructure and resources. The best option depends on your specific needs and priorities.

Keywords

Apple M3 Max, NVIDIA RTX A6000, LLM, Large language model, Token speed, Performance, Generation, Processing, Quantization, Llama 2, Llama 3, Local Inference, AI, Machine Learning