Which is Better for Running LLMs Locally: Apple M3 Max (40-core GPU, 400 GB/s) or NVIDIA A40 48GB? Ultimate Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is booming, and with it, the demand for powerful hardware to run these models locally. Whether you're a developer, researcher, or just someone who wants to experiment with the latest AI capabilities, finding the right hardware setup can be a daunting task. Two top contenders are the Apple M3 Max (40-core GPU, 400 GB/s memory bandwidth) and the NVIDIA A40_48GB. Both offer impressive performance, but which one comes out on top for running LLMs locally? In this benchmark analysis, we compare their performance on popular models like Llama 2 and Llama 3 to help you make an informed decision for your specific use case.

Imagine having your own personal AI assistant, capable of generating creative text, translating languages, answering your questions, or even coding for you. This is the potential of LLMs, and with the right hardware, you can unlock this potential right on your desktop.

Performance Analysis: Apple M3 Max vs. NVIDIA A40_48GB

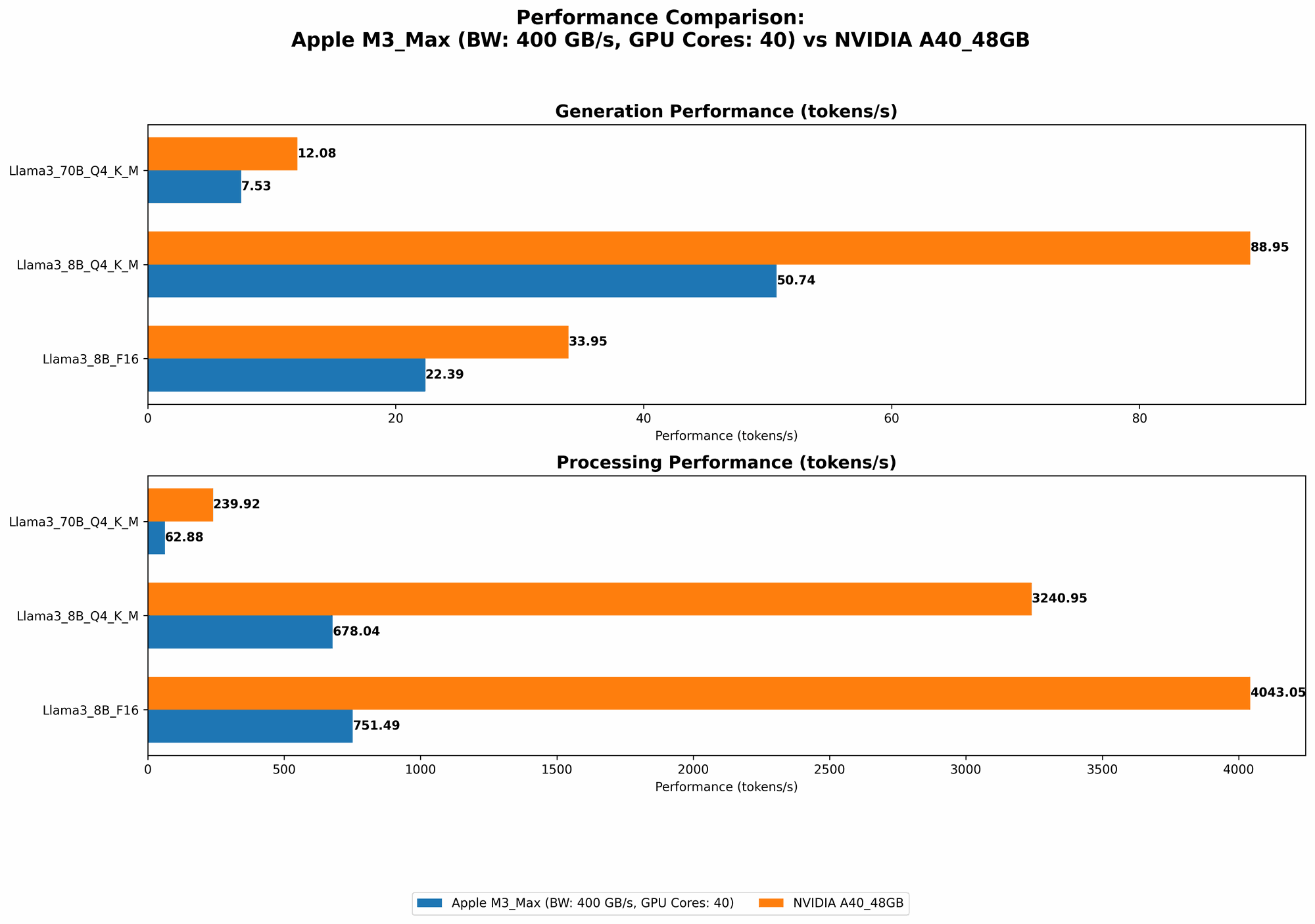

To provide a clear picture, let's break down the performance of both devices on various Llama models. We'll focus on two distinct measurements: token processing (how fast the device reads the prompt you give it) and token generation (how fast it produces new text, one token at a time). Both are reported in tokens per second.

Apple M3 Max Token Processing and Generation Speed

The Apple M3 Max configuration tested here has a 40-core GPU and 400 GB/s of memory bandwidth, with the CPU and GPU sharing a single pool of unified memory. Because the GPU reads model weights directly from that shared memory, memory bandwidth matters as much as core count for LLM workloads.

Let's look at the numbers:

| Model | Processing (tokens/s) | Generation (tokens/s) |
|---|---|---|
| Llama 2 7B (F16) | 779.17 | 25.09 |
| Llama 2 7B (Q8_0) | 757.64 | 42.75 |
| Llama 2 7B (Q4_0) | 759.7 | 66.31 |
| Llama 3 8B (F16) | 751.49 | 22.39 |
| Llama 3 8B (Q4_K_M) | 678.04 | 50.74 |
| Llama 3 70B (Q4_K_M) | 62.88 | 7.53 |

Key Takeaways:

- Fast Processing on Small Models: The M3 Max processes roughly 680 to 780 tokens per second on Llama 2 7B and Llama 3 8B, with little difference between quantization levels. That is quick enough for prompt-heavy tasks like text summarization or question answering. On Llama 3 70B (Q4_K_M) it drops to 62.88 tokens per second.

- Slower Generation: Generation is far slower than processing and depends heavily on quantization: Llama 2 7B goes from 25.09 tokens per second at F16 to 66.31 at Q4_0. With a larger model like Llama 3 70B (Q4_K_M), generation falls to 7.53 tokens per second, which is slow for long outputs.
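A common rule of thumb helps explain why generation is so much slower than processing: each newly generated token requires reading essentially all of the model's weights from memory, so memory bandwidth puts a ceiling on tokens per second. The sketch below applies that rule to Llama 2 7B (F16). The 400 GB/s bandwidth figure, the 6.74 billion parameter count, and the function name are assumptions brought in for illustration rather than values from the benchmark, and the estimate ignores the KV cache and compute time.

```python
# Rough ceiling on generation speed when decoding is memory-bandwidth bound:
# every generated token has to read all model weights once.
# Assumptions (not from the benchmark): 400 GB/s peak bandwidth for the
# 40-core M3 Max, ~6.74e9 parameters for Llama 2 7B, 2 bytes per F16 weight.

def bandwidth_bound_tokens_per_second(params: float,
                                      bytes_per_weight: float,
                                      bandwidth_gb_s: float) -> float:
    """Return the theoretical ceiling in tokens/s for a bandwidth-bound decoder."""
    model_bytes = params * bytes_per_weight
    return bandwidth_gb_s * 1e9 / model_bytes


ceiling = bandwidth_bound_tokens_per_second(6.74e9, 2.0, 400.0)
measured = 25.09  # Llama 2 7B (F16) generation on the M3 Max, from the table above

print(f"ceiling ~{ceiling:.1f} tok/s, measured {measured} tok/s "
      f"({measured / ceiling:.0%} of ceiling)")
```

Under these assumptions the measured figure lands at roughly 85% of the ceiling, which is consistent with generation being limited by memory bandwidth rather than raw compute.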

NVIDIA A40_48GB Token Processing and Generation Speed

The NVIDIA A40_48GB is a dedicated data-center GPU with 48 GB of memory, designed for high-performance computing and AI applications. In these benchmarks it is faster than the M3 Max at both processing and generating tokens.

Data:

| Model | Processing (tokens/s) | Generation (tokens/s) |
|---|---|---|
| Llama 3 8B (Q4_K_M) | 3240.95 | 88.95 |
| Llama 3 8B (F16) | 4043.05 | 33.95 |
| Llama 3 70B (Q4_K_M) | 239.92 | 12.08 |

Key Takeaways:

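- Champion of Generation: The A40_48GB generates tokens faster than the M3 Max in every configuration tested on both devices: 88.95 vs. 50.74 tokens per second on Llama 3 8B (Q4_K_M), 33.95 vs. 22.39 on Llama 3 8B (F16), and 12.08 vs. 7.53 on Llama 3 70B (Q4_K_M), a lead of roughly 1.5x to 1.75x. This makes it a strong choice for tasks like creative writing, code generation, or long-form text creation.

- Faster Processing Too: The gap is even wider for prompt processing, where the A40_48GB is about 3.8x to 5.4x faster than the M3 Max on the same three configurations.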

Important Note: Due to data limitations, we do not have results for Llama 2 7B on the A40_48GB, or for Llama 3 70B (F16) on either device, so direct comparisons are limited to the three Llama 3 configurations that appear in both tables.
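To make the comparison concrete, here is a small sketch that computes how many times faster the A40_48GB is than the M3 Max for each configuration present in both tables. The throughput numbers are copied from the tables; the dictionary layout and the `speedups` helper are illustrative choices, and the labels use the llama.cpp spelling `Q4_K_M`.

```python
# Throughput from the two tables: (processing, generation) in tokens/s.
m3_max = {
    "Llama 3 8B (Q4_K_M)": (678.04, 50.74),
    "Llama 3 8B (F16)": (751.49, 22.39),
    "Llama 3 70B (Q4_K_M)": (62.88, 7.53),
}
a40 = {
    "Llama 3 8B (Q4_K_M)": (3240.95, 88.95),
    "Llama 3 8B (F16)": (4043.05, 33.95),
    "Llama 3 70B (Q4_K_M)": (239.92, 12.08),
}


def speedups(baseline, candidate):
    """Return {model: (processing ratio, generation ratio)} for shared models."""
    return {
        model: (candidate[model][0] / baseline[model][0],
                candidate[model][1] / baseline[model][1])
        for model in baseline
        if model in candidate
    }


for model, (processing, generation) in speedups(m3_max, a40).items():
    print(f"{model}: processing {processing:.2f}x, generation {generation:.2f}x")
```

The ratios only cover these three configurations, so they say nothing about Llama 2 7B, for which no A40_48GB results are available.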

Comparing Apple M3 Max and NVIDIA A40_48GB: Strengths and Weaknesses

Apple M3 Max: The All-Around Workhorse

Strengths:

- Cost-Effective: The M3 Max provides high performance at a lower price than the A40_48GB. It's a great option for individuals and teams seeking a cost-effective way to run LLMs locally.

- Energy Efficiency: Apple silicon chips are known for their energy efficiency compared to traditional discrete GPUs. This can mean significant savings in power consumption, especially for long-running tasks.

- Versatile: The M3 Max is more than just an LLM engine. It's a powerful processor that can handle a wide range of tasks, including video editing, 3D rendering, and general productivity applications.

Weaknesses:

- Token Generation Limitations: As we saw earlier, it generates tokens more slowly than the A40_48GB. For applications where fast text generation is paramount, the A40_48GB might be a better fit.

- Limited GPU Power: While the M3 Max has a built-in GPU, it's not as powerful as the dedicated NVIDIA A40_48GB. This could limit its performance for tasks that heavily rely on GPU acceleration, such as training very large models.

NVIDIA A40_48GB: The Text Generation Powerhouse

Strengths:

- Token Generation King: The A40_48GB leads on token generation in every configuration tested on both devices, producing text roughly 1.5x to 1.75x faster than the M3 Max. That makes it well suited to creative writing, code generation, and other scenarios where text output is crucial.

- Specialized for AI: It's designed specifically for AI workloads and offers optimized hardware and software for running LLMs. This optimization translates to better performance and efficiency compared to a general-purpose processor.

- Scalability: The A40_48GB is often used in clusters, allowing LLM workloads to scale across multiple GPUs. This is beneficial for demanding applications that require a significant amount of compute power.

Weaknesses:

- Higher Cost: The A40_48GB is significantly more expensive than the M3 Max, making it less accessible for individuals and small teams. It's more suited for organizations with larger budgets.

- Limited Versatility: While it's excellent for AI and other GPU-accelerated workloads, the A40_48GB is an add-in card rather than a complete computer. It needs a host workstation or server, so it is less convenient than the M3 Max as an everyday, general-purpose machine.

- Energy Consumption: Dedicated GPUs typically consume more power than integrated GPUs. This is something to consider for those concerned about energy costs and environmental impact.

Practical Recommendations for Use Cases

M3 Max is your choice if:

- Cost is a major consideration: You're on a budget and need a good balance of performance and affordability.

- You need a versatile machine: You want to use your device for a wider range of tasks beyond just running LLMs.

- Energy efficiency is important: You're concerned about minimizing power consumption.

- You're running smaller models: You primarily work with smaller models like Llama 2 7B or Llama 3 8B, and token generation is not a primary concern.

A40_48GB is your choice if:

- You're prioritizing token generation: You need to generate text quickly and efficiently, especially for large models.

- You have a substantial budget: You're willing to invest in dedicated hardware for high-performance AI workloads.

- You're working with large models: You're dealing with large models like Llama 3 70B and require significant processing power.

- Scalability is a priority: You need a scalable solution that can handle increasing computational demands.

Understanding Quantization: How LLMs Are Made Smaller and Faster

Let's talk about quantization, which is a crucial concept when it comes to optimizing LLMs for local performance. Imagine a computer game that takes up a lot of space on your hard drive. Quantization is like compressing that game file, making it smaller without sacrificing too much quality.

In the LLM world, quantization means reducing the number of bits used to represent the weights of the model. This "compression" makes the model smaller and faster to run, especially on devices with limited memory or processing power.
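As a rough illustration of the idea, the sketch below quantizes a handful of made-up weights to 8-bit and 4-bit integers that share a single scale factor, then converts them back to see how much precision is lost. This is a simplified symmetric scheme written for illustration; it is not the exact layout used by formats such as Q8_0 or Q4_K_M, and the function names and sample weights are hypothetical.

```python
def quantize_block(weights, bits=8):
    """Symmetric quantization: store one float scale plus small signed integers."""
    qmax = 2 ** (bits - 1) - 1              # 127 for 8 bits, 7 for 4 bits
    largest = max(abs(w) for w in weights)
    scale = largest / qmax if largest else 1.0
    quants = [round(w / scale) for w in weights]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate floats from the stored integers."""
    return [q * scale for q in quants]


# Made-up example weights (not taken from any real model).
weights = [0.12, -0.57, 0.33, 0.91, -0.08, 0.44, -0.76, 0.05]

for bits in (8, 4):
    scale, quants = quantize_block(weights, bits)
    restored = dequantize_block(scale, quants)
    worst = max(abs(w - r) for w, r in zip(weights, restored))
    print(f"{bits}-bit: integers={quants}, worst error={worst:.4f}")
```

The 4-bit version needs half the storage of the 8-bit one but reconstructs the weights less accurately, which is the trade-off behind the different quantization levels in the benchmark tables.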

For instance, F16 stores each weight as a 16-bit floating-point number, half the size of a standard 32-bit float (F32). Q8_0 uses about 8 bits per weight and Q4_0 about 4, offering even greater compression at the cost of some accuracy.

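This is why you see different quantization levels in the performance data. Q4_0 and Q4_K_M are both roughly 4-bit formats; Q4_K_M is a newer scheme that aims to preserve more accuracy at a similar size. The levels listed for each device reflect which benchmark results were available, not what the hardware can run: the M3 Max results include both Q4_0 and Q4_K_M, while the A40_48GB results cover Q4_K_M and F16.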
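A quick back-of-the-envelope calculation shows what these levels mean for memory. The sketch below multiplies an approximate parameter count by an approximate number of bits per weight. The parameter counts and the Q4_K_M figure are assumptions for illustration (Q8_0 and Q4_0 come out at 8.5 and 4.5 bits because each block of 32 weights also stores a 16-bit scale), and the result covers the weights only, not the KV cache or runtime overhead.

```python
# Approximate bits per weight. F16 is exact; Q8_0 and Q4_0 include the per-block
# 16-bit scale; Q4_K_M is a rough average (assumption).
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5, "Q4_K_M": 4.85}

# Approximate parameter counts (assumptions, not from the benchmark tables).
PARAMS = {"Llama 2 7B": 6.74e9, "Llama 3 8B": 8.03e9, "Llama 3 70B": 70.6e9}


def weight_size_gb(model: str, quant: str) -> float:
    """Size of the weights alone in GB (1e9 bytes); excludes KV cache and overhead."""
    return PARAMS[model] * BITS_PER_WEIGHT[quant] / 8 / 1e9


for quant in BITS_PER_WEIGHT:
    size = weight_size_gb("Llama 3 70B", quant)
    print(f"Llama 3 70B {quant}: ~{size:.1f} GB")
```

Under these assumptions, Llama 3 70B needs roughly 141 GB at F16 but about 43 GB at Q4_K_M, which fits within the A40's 48 GB. That may be why no F16 results for the 70B model appear in the tables.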

Summary: Choosing Your LLM Powerhouse

The choice between the Apple M3 Max (40-core GPU, 400 GB/s) and the NVIDIA A40_48GB boils down to your specific needs and budget. The M3 Max is an excellent all-around performer, offering a good balance of performance and cost-effectiveness. The NVIDIA A40_48GB is the champion of token generation, particularly for larger models, but it comes with a premium price tag.

Ultimately, the best device for running LLMs locally is the one that provides the optimal balance of performance, affordability, and versatility for your specific use case.

FAQ

What are LLMs?

LLMs are Large Language Models, which are a type of artificial intelligence that can understand and generate human-like text. They are trained on massive datasets of text and code, which allows them to perform tasks like writing stories, translating languages, answering questions, and even coding.

What are the key benefits of running LLMs locally?

- Privacy: You don't have to send your data to the cloud, keeping it secure and private.

- Speed: Responses don't depend on a network round trip or a shared service's queue, although throughput is limited by your own hardware.

- Control: You have full control over the model and its configuration.

What are the differences between processing and generation?

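- Processing: How quickly the model reads the input prompt before it starts answering. The whole prompt can be handled in parallel, which is why processing speeds are much higher.

- Generation: How quickly the model produces new text. Tokens are generated one at a time, each depending on the ones before it, so this is the figure that determines how fast a reply appears.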
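Both numbers matter for how long a request takes end to end. As a simple sketch, total time can be approximated as prompt length divided by processing speed plus output length divided by generation speed. The throughput values are the Llama 3 8B (Q4_K_M) figures from the benchmark tables; the workload (a 2,000-token prompt and a 500-token reply) and the helper name are hypothetical, and model loading and other overheads are ignored.

```python
def estimated_seconds(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Prompt time plus generation time, ignoring model load and other overhead."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Llama 3 8B (Q4_K_M): (processing, generation) in tokens/s from the tables.
devices = {
    "M3 Max": (678.04, 50.74),
    "A40_48GB": (3240.95, 88.95),
}

# Hypothetical workload: a 2,000-token prompt and a 500-token reply.
for name, (processing, generation) in devices.items():
    total = estimated_seconds(2000, 500, processing, generation)
    print(f"{name}: ~{total:.1f} s")
```

For this workload most of the time goes to generation on both devices, which is why generation speed tends to dominate how responsive a chat-style application feels.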

Can I choose the quantization level for my model?

Yes. Most local LLM tools let you pick the quantization level (such as F16, Q8_0, or Q4_K_M), typically by choosing a model file that was saved in that format. This lets you trade accuracy against speed and memory use on your specific hardware.

Keywords

LLMs, Large Language Models, Apple M3 Max, NVIDIA A40_48GB, token processing, token generation, quantization, F16, Q8_0, Q4_K_M, performance benchmark, AI, machine learning, natural language processing, GPU, CPU, hardware, local, desktop, cost-effective, energy efficiency, versatility, scalability, privacy, speed, control, use cases, recommendations.