Which is Better for Running LLMs Locally: Apple M3 Max (400GB/s, 40 GPU Cores) or NVIDIA 4080 16GB? Ultimate Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is booming, with powerful models like Llama 2 and Llama 3 pushing the boundaries of natural language processing. But running these models locally often demands substantial hardware resources. Two popular contenders in this space are the Apple M3 Max, a system-on-chip with an integrated 40-core GPU and unified memory, and the NVIDIA 4080, a high-end discrete GPU with dedicated AI capabilities.

This article dives deep into the performance of these two devices when running LLMs locally. We'll analyze their strengths and weaknesses, comparing their token processing and generation speeds across different LLM models and quantization levels. By the end, you'll have a clear understanding of which device reigns supreme in the LLM local deployment arena.

Breakdown of the Devices:

Apple M3 Max

- A system-on-chip whose top configuration pairs a 40-core GPU with 400GB/s of memory bandwidth. Its unified memory (configurable up to 128GB) is shared between the CPU and GPU, which makes it suitable for running large models that demand significant RAM.

- Apple's silicon is known for its power efficiency, typically drawing far less power under load than a desktop with a discrete GPU.

- This device is a good fit for workloads where memory capacity matters more than raw parallel throughput for accelerated matrix operations.

NVIDIA 4080 16GB

- A top-of-the-line GPU with 16GB of VRAM. This GPU is designed for demanding applications like gaming and AI, excelling in parallel processing tasks.

- NVIDIA's GPUs are optimized for matrix operations, making them ideal for accelerating LLM computations and achieving faster processing and generation speeds.

- This device shines when the task at hand is highly parallel and requires a wide range of calculations to be performed simultaneously, such as training LLMs.

Performance Analysis: Token Per Second

Apple M3 Max Performance:

Table 1: Apple M3 Max Token Per Second Performance:

| LLM Model | Quantization | Processing (Tokens/second) | Generation (Tokens/second) |
|---|---|---|---|
| Llama 2 7B | F16 | 779.17 | 25.09 |
| Llama 2 7B | Q8_0 | 757.64 | 42.75 |
| Llama 2 7B | Q4_0 | 759.7 | 66.31 |
| Llama 3 8B | Q4KM | 678.04 | 50.74 |
| Llama 3 8B | F16 | 751.49 | 22.39 |
| Llama 3 70B | Q4KM | 62.88 | 7.53 |

Observations:

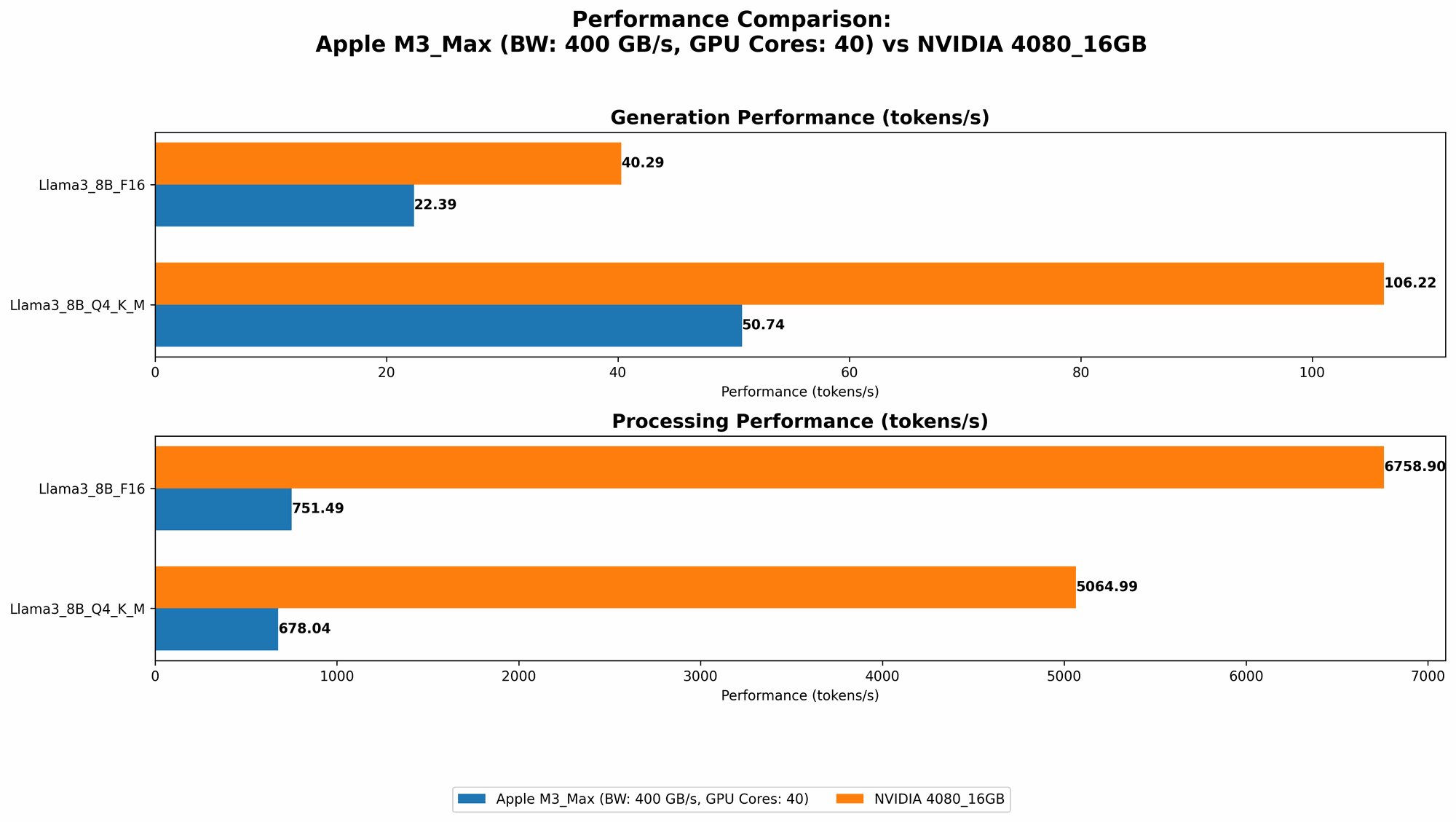

- The Apple M3 Max processes prompts at roughly 680 to 780 tokens per second for the Llama 2 7B and Llama 3 8B models, with little variation across quantization levels.

- Generation is far slower than processing and depends strongly on quantization: Llama 2 7B rises from 25.09 tokens per second at F16 to 66.31 at Q4_0, while Llama 3 70B at Q4KM reaches only 7.53.
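One common rule of thumb for the gap between processing and generation is that generating each token requires reading all model weights from memory once, so memory bandwidth caps generation speed. The sketch below applies that rule under stated assumptions: 400GB/s of bandwidth, a nominal 7 billion parameters, and 16 bits per weight for F16. The function name is hypothetical and the rule ignores the KV cache and other overheads, so treat the result as a rough ceiling rather than a prediction.

```python
def max_generation_tps(bandwidth_gb_s: float, params_billion: float,
                       bits_per_weight: float) -> float:
    """Rough ceiling on tokens/second if every token reads all weights once.

    Assumption: generation is limited purely by memory bandwidth.
    """
    # billions of parameters * bytes per parameter = model size in GB
    model_gb = params_billion * bits_per_weight / 8
    return bandwidth_gb_s / model_gb


# Assumed inputs: 400 GB/s bandwidth, ~7B parameters, F16 (16 bits per weight)
bound = max_generation_tps(400, 7, 16)
print(f"Estimated ceiling: {bound:.1f} tokens/s (Table 1 reports 25.09)")
```

Under these assumptions the ceiling lands a little above the measured F16 figure for Llama 2 7B, which is consistent with generation being limited by memory traffic rather than compute.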

NVIDIA 4080 16GB Performance:

Table 2: NVIDIA 4080 16GB Token Per Second Performance:

| LLM Model | Quantization | Processing (Tokens/second) | Generation (Tokens/second) |
|---|---|---|---|
| Llama 3 8B | Q4KM | 5064.99 | 106.22 |
| Llama 3 8B | F16 | 6758.9 | 40.29 |
| Llama 3 70B | Q4KM | N/A | N/A |
| Llama 3 70B | F16 | N/A | N/A |

Observations:

- The NVIDIA 4080 16GB shines in processing speed, achieving over 5000 tokens per second for Llama 3 8B with Q4KM quantization and over 6700 tokens per second at F16.

- It also surpasses the M3 Max in generation speed for Llama 3 8B (106.22 vs. 50.74 tokens per second at Q4KM, and 40.29 vs. 22.39 at F16), showcasing its strong parallel processing capabilities.

- Data for Llama 3 70B is not available, most likely because the model does not fit in 16GB of VRAM at either precision.
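To see what these figures mean for a single request, the two speeds can be combined into an approximate response time: prompt tokens divided by processing speed, plus generated tokens divided by generation speed. The sketch below uses the Llama 3 8B Q4KM rows from Tables 1 and 2; the function name and the 512-token prompt and 256-token reply are illustrative assumptions, not part of the benchmark.

```python
def response_seconds(prompt_tokens: int, generated_tokens: int,
                     processing_tps: float, generation_tps: float) -> float:
    """Approximate time to read a prompt and then generate a reply."""
    return prompt_tokens / processing_tps + generated_tokens / generation_tps


# (processing, generation) tokens/second for Llama 3 8B Q4KM, from the tables
RESULTS = {
    "Apple M3 Max": (678.04, 50.74),
    "NVIDIA 4080 16GB": (5064.99, 106.22),
}

# Hypothetical workload: 512 prompt tokens, 256 generated tokens
for device, (processing, generation) in RESULTS.items():
    seconds = response_seconds(512, 256, processing, generation)
    print(f"{device}: {seconds:.2f} s")
```

For a workload like this, generation dominates the total on both devices, so the generation column is usually the more relevant one for interactive use.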

Comparison of Apple M3 Max and NVIDIA 4080 16GB:

Apple M3 Max Token Speed Generation

The Apple M3 Max does not lead on raw speed. Its prompt processing stays between roughly 680 and 780 tokens per second for the 7B and 8B models, well below the 4080's figures, and its generation speed on Llama 3 8B is about half that of the 4080. Its advantage is reach: it is the only one of the two devices with results for Llama 3 70B (62.88 tokens per second for processing and 7.53 for generation). This makes it a solid choice for developers who need larger models or who want a balance between performance and power consumption.

NVIDIA 4080 16GB Token Speed Generation

The NVIDIA 4080 16GB emerges as the clear winner in speed for models that fit in its VRAM. On Llama 3 8B it processes prompts roughly 7.5 times faster than the M3 Max at Q4KM and about 9 times faster at F16, and it generates tokens roughly twice as fast. Its GPU architecture is built for parallel processing, which allows it to excel in computationally demanding tasks. This makes it an excellent option for developers who work with models of this size and require the highest possible throughput.

Memory Limitation

A notable difference between the two devices lies in their available memory. The M3 Max uses unified memory shared between the CPU and GPU, configurable up to 128GB; the 400 figure associated with this chip is its memory bandwidth in GB/s, not its capacity. On the other hand, the 4080 16GB has a limited 16GB of VRAM, which poses a challenge for running large models. While the 4080's processing speed is impressive, its memory constraints limit its applicability for models exceeding its capacity, as the missing Llama 3 70B results suggest.
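A rough way to check whether a model can fit is to estimate the size of its weights alone: parameter count times bits per weight. The sketch below does this under stated assumptions: nominal parameter counts of 8 and 70 billion, 16 bits per weight for F16, and about 4.8 bits per weight for Q4KM (an approximation, since the exact figure varies by model). The function name is hypothetical, and real usage is higher because the KV cache and runtime buffers also need memory.

```python
GIB = 2 ** 30  # bytes per GiB


def weights_gib(params_billion: float, bits_per_weight: float) -> float:
    """Estimated size of the model weights alone, in GiB."""
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / GIB


VRAM_GIB = 16  # NVIDIA 4080 16GB

# Assumed bits per weight: 16 for F16, ~4.8 for Q4KM (approximate)
MODELS = [
    ("Llama 3 8B", 8, "Q4KM", 4.8),
    ("Llama 3 8B", 8, "F16", 16),
    ("Llama 3 70B", 70, "Q4KM", 4.8),
    ("Llama 3 70B", 70, "F16", 16),
]

for name, params, quant, bits in MODELS:
    size = weights_gib(params, bits)
    verdict = "fits" if size < VRAM_GIB else "does not fit"
    print(f"{name} {quant}: ~{size:.1f} GiB of weights, {verdict} in {VRAM_GIB} GiB")
```

With these assumptions, both Llama 3 8B variants come in under 16 GiB while both 70B variants exceed it, matching the pattern of available and missing rows in Table 2.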

Cost Considerations

Both the M3 Max and the 4080 16GB are high-end hardware options, reflecting a significant investment. The comparison is not like-for-like: the 4080 is a single component that still needs a host PC, while the M3 Max is sold only as part of a complete Mac. Total cost therefore depends on the rest of the system and on running costs such as power consumption, where the M3 Max's efficiency could reduce expenses over time.

Practical Applications:

Apple M3 Max:

- Efficient development and testing: Suitable for rapid prototyping and experimenting with LLM models, including larger ones that exceed 16GB.

- Power-constrained environments: A plausible choice for users who are mindful of power consumption.

- General-purpose computing: Offers versatility in other tasks like video editing and game development.

NVIDIA 4080 16GB:

- Production deployments: Ideal for deploying models that fit in 16GB of VRAM in settings where performance is paramount.

- High-throughput applications: Best for tasks that demand a high volume of parallel computations, like large-scale training or inference.

- Specialized AI workloads: A powerful tool for research and development involving computationally intensive deep learning models.

Conclusion:

Choosing between the Apple M3 Max and NVIDIA 4080 16GB for running LLMs locally boils down to a careful evaluation of your needs. The M3 Max excels in efficiency and memory capacity, making it suitable for larger models and power-sensitive environments. Conversely, the 4080 reigns supreme in processing and generation speed for models that fit in its 16GB of VRAM, but that memory limit restricts it to smaller models or more aggressive quantization.

Ultimately, the ideal choice depends on your specific LLM model size, performance requirements, and budgetary constraints.

FAQ

What are LLMs?

Large Language Models (LLMs) are sophisticated artificial intelligence models trained on vast amounts of text data. They can understand and generate human-like text, making them valuable for tasks like language translation, text summarization, and chatbot development.

What is quantization?

Quantization is a technique used to reduce the memory footprint and computational requirements of LLM models. It involves converting the model's weights, which are typically stored as 16-bit or 32-bit floating-point numbers, to lower-precision formats such as 8-bit or 4-bit integers. In the tables above, F16 denotes unquantized 16-bit weights, Q8_0 is an 8-bit format, and Q4_0 and Q4KM are 4-bit formats. This reduction in precision often has only a small impact on output quality while significantly lowering storage and processing demands.
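The idea can be illustrated with a simplified absmax scheme: scale the weights so the largest magnitude maps to 127, round to integers, and multiply back by the scale when the values are needed. This is a minimal sketch with hypothetical function names and made-up sample weights, not the exact layout of the Q8_0, Q4_0, or Q4KM formats, which quantize weights in small blocks.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Map floats to integers in [-127, 127] using one shared scale."""
    amax = max(abs(w) for w in weights)
    scale = amax / 127 if amax > 0 else 1.0
    return [round(w / scale) for w in weights], scale


def dequantize(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate float values from the integers."""
    return [q * scale for q in quantized]


# Made-up sample weights, for illustration only
weights = [0.12, -0.5, 0.33, 0.0, 0.49]
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("quantized:", quantized)
print("max round-trip error:", max_error)
```

Each value is stored in 8 bits instead of 16 or 32, and the round-trip error is bounded by half of the scale, which is why a modest loss of precision buys a large reduction in memory.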

How do I choose the right device for my LLM needs?

Consider these factors:

- Model Size: If you're working with large models (e.g., Llama 3 70B), you need a device with enough memory to hold them; in this comparison only the Apple M3 Max produced results for the 70B model. For smaller models that fit in 16GB of VRAM, the NVIDIA 4080 is the faster option.

- Performance Requirements: If you prioritize high processing and generation speeds on models that fit in VRAM, the NVIDIA 4080's GPU capabilities deliver superior performance. However, if power efficiency or memory capacity is the key consideration, the Apple M3 Max could be a better choice.

- Budget: Both devices represent high-end hardware options, so carefully assess your budget and prioritize features that align with your specific needs.

Keywords:

LLM, Llama 2, Llama 3, Apple M3 Max, NVIDIA 4080, Token Processing, Token Generation, Quantization, F16, Q8_0, Q4_0, Q4KM, GPU, CPU, Memory, Performance, Benchmark, Local Deployment, Inference, AI, Deep Learning, Machine Learning, Natural Language Processing, NLP.