Which Is Better for Running LLMs Locally: Apple M3 Max (400 GB/s, 40 GPU Cores) or NVIDIA 3090 24GB? Ultimate Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is evolving rapidly, with new models and applications emerging constantly. But before you can unleash the power of these AI marvels, you need the right hardware. This article compares two popular choices for running LLMs locally: the Apple M3 Max (40 GPU cores, 400 GB/s of memory bandwidth) and the NVIDIA 3090 with 24 GB of VRAM. We'll analyze their strengths and weaknesses by benchmarking prompt processing speed and token generation speed across several models and quantization formats.

Think of it like this: imagine you're picking an animal for a race. You can choose a lean, efficient greyhound (M3 Max) or a mighty, power-hungry lion (3090). Which one wins? Let's find out!

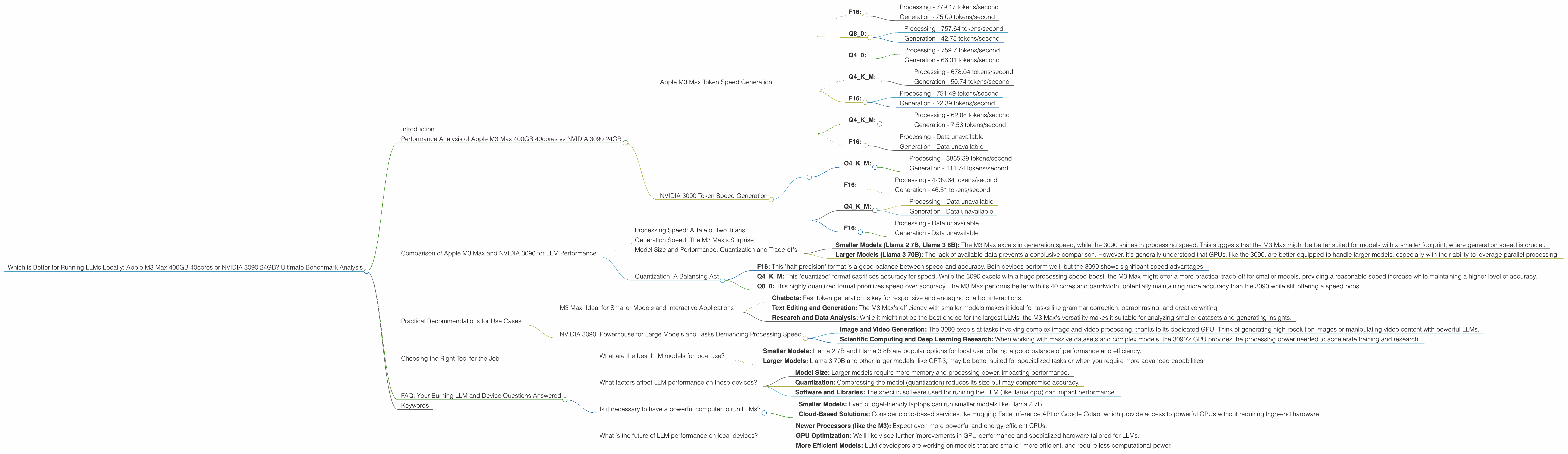

Performance Analysis of Apple M3 Max (400 GB/s, 40 GPU Cores) vs NVIDIA 3090 24GB

Apple M3 Max Token Generation Speed

The M3 Max combines a 40-core GPU with 400 GB/s of unified memory bandwidth. This makes it a formidable machine for general-purpose computing, including running LLMs. Here are its prompt processing and token generation speeds for different models:

Llama 2 7B:

- F16:
  - Processing: 779.17 tokens/second
  - Generation: 25.09 tokens/second
- Q8_0:
  - Processing: 757.64 tokens/second
  - Generation: 42.75 tokens/second
- Q4_0:
  - Processing: 759.7 tokens/second
  - Generation: 66.31 tokens/second

Llama 3 8B:

- Q4KM:
  - Processing: 678.04 tokens/second
  - Generation: 50.74 tokens/second
- F16:
  - Processing: 751.49 tokens/second
  - Generation: 22.39 tokens/second

Llama 3 70B:

- Q4KM:
  - Processing: 62.88 tokens/second
  - Generation: 7.53 tokens/second
- F16:
  - Processing: Data unavailable
  - Generation: Data unavailable

NVIDIA 3090 Token Generation Speed

The NVIDIA 3090, known for its prowess in graphics and machine learning, is a dedicated GPU with 24 GB of VRAM. Its memory bandwidth (about 936 GB/s) and compute throughput both exceed those of the M3 Max, but its 24 GB of VRAM limits the size of the models it can hold.

Llama 3 8B:

- Q4KM:
  - Processing: 3865.39 tokens/second
  - Generation: 111.74 tokens/second
- F16:
  - Processing: 4239.64 tokens/second
  - Generation: 46.51 tokens/second

Llama 3 70B:

- Q4KM:
  - Processing: Data unavailable
  - Generation: Data unavailable
- F16:
  - Processing: Data unavailable
  - Generation: Data unavailable

Comparison of Apple M3 Max and NVIDIA 3090 for LLM Performance

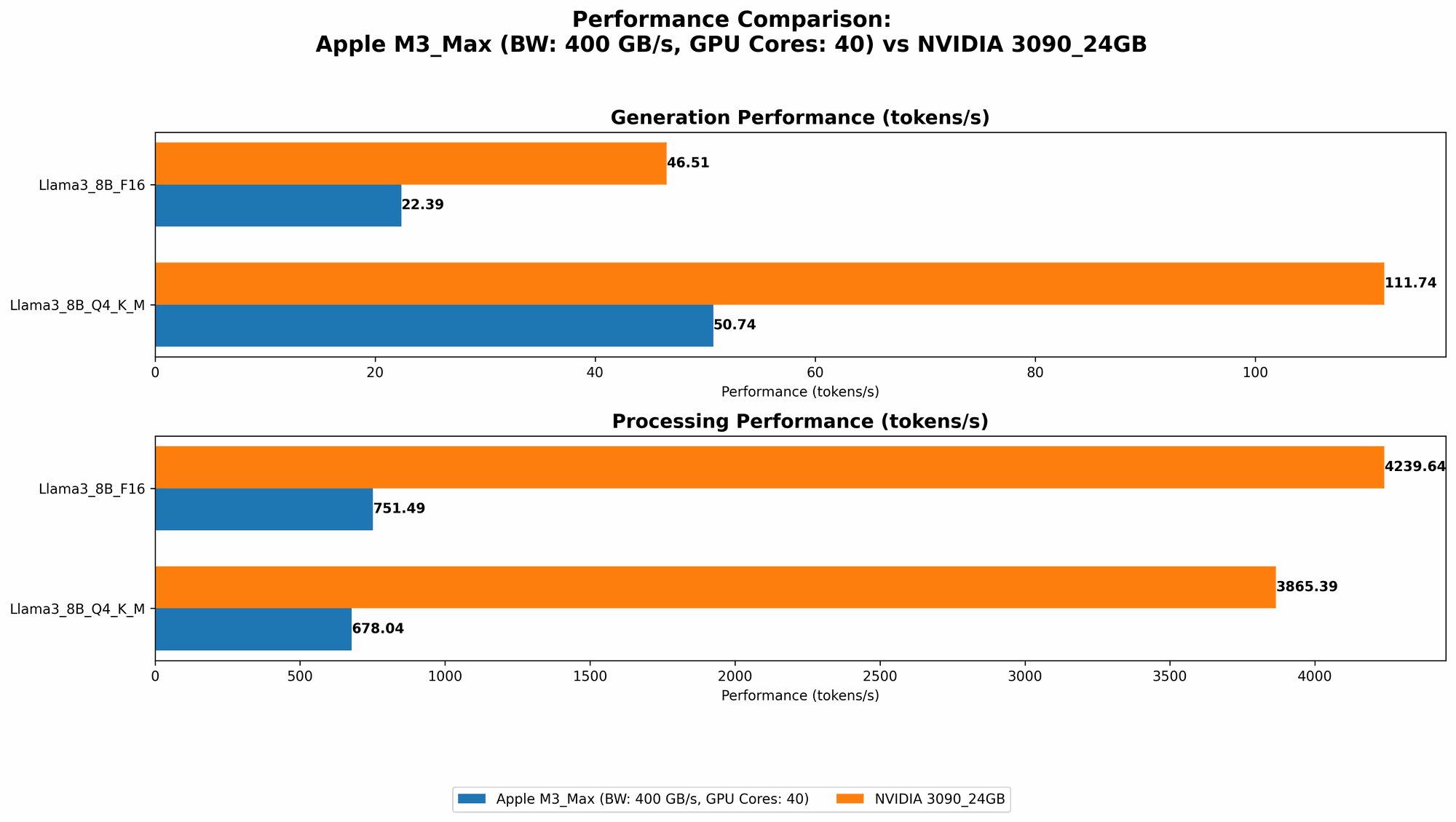

Processing Speed: A Tale of Two Titans

When it comes to prompt processing speed, the NVIDIA 3090 clearly dominates. It processes tokens significantly faster than the M3 Max for both Llama 3 8B variants: roughly 5.7 times faster for Q4KM (3865.39 vs. 678.04 tokens/second) and about 5.6 times faster for F16 (4239.64 vs. 751.49 tokens/second). Prompt processing handles many tokens in parallel and is largely limited by raw compute, which is where the 3090's dedicated GPU has the edge.
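
The processing-speed ratios can be recomputed directly from the figures listed earlier. The snippet below is only a small arithmetic sketch; the dictionary layout and function names are illustrative choices, not part of any benchmark tool.

```python
# Prompt processing speeds (tokens/second) copied from the benchmark lists above.
PROMPT_PROCESSING = {
    "Llama 3 8B Q4KM": {"M3 Max": 678.04, "3090": 3865.39},
    "Llama 3 8B F16": {"M3 Max": 751.49, "3090": 4239.64},
}


def speedup(slower: float, faster: float) -> float:
    """Return how many times faster `faster` is than `slower`."""
    return faster / slower


def processing_speedups() -> dict[str, float]:
    """3090-over-M3-Max processing speedup per model, rounded to 2 decimals."""
    return {
        model: round(speedup(speeds["M3 Max"], speeds["3090"]), 2)
        for model, speeds in PROMPT_PROCESSING.items()
    }


if __name__ == "__main__":
    for model, ratio in processing_speedups().items():
        print(f"{model}: 3090 is {ratio}x faster at prompt processing")
```

Both ratios land between 5.6x and 5.7x.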

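Generation Speed: A Narrower Gap

The 3090 also leads in generation speed wherever both devices have data, but by a much smaller margin. For Llama 3 8B, the 3090 generates 111.74 tokens/second at Q4KM against 50.74 for the M3 Max, and 46.51 against 22.39 at F16, a lead of roughly 2x compared with more than 5x in processing. No Llama 2 7B results are available for the 3090, so that model cannot be compared. For Llama 3 70B, only the M3 Max has a result (7.53 tokens/second at Q4KM); the 3090 has no data, making a direct comparison impossible.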
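
A common rule of thumb helps put generation speeds in context: producing each token requires reading every model weight from memory once, so memory bandwidth divided by model size gives a rough ceiling on tokens/second. The sketch below applies that to Llama 3 8B at F16. The bandwidth figures (400 GB/s for the M3 Max, 936 GB/s for the 3090) and the 8.03 billion parameter count are assumptions brought in for illustration; they are not part of the benchmark data.

```python
# Assumed hardware figures (not stated in the benchmark lists above).
MEMORY_BANDWIDTH_GB_S = {
    "M3 Max": 400.0,  # assumption: the "400GB" in the title read as 400 GB/s
    "3090": 936.0,    # assumption: NVIDIA's published figure for the RTX 3090
}

# Measured Llama 3 8B F16 generation speeds from the lists above (tokens/second).
MEASURED_TPS = {"M3 Max": 22.39, "3090": 46.51}

LLAMA3_8B_PARAMS = 8.03e9  # assumption: approximate parameter count
F16_BYTES_PER_WEIGHT = 2   # 16 bits per weight


def generation_ceiling_tps(bandwidth_gb_s: float, model_bytes: float) -> float:
    """Upper bound on tokens/second if every weight is read once per token."""
    return bandwidth_gb_s * 1e9 / model_bytes


def ceilings() -> dict[str, float]:
    """Bandwidth-bound generation ceiling per device for Llama 3 8B F16."""
    model_bytes = LLAMA3_8B_PARAMS * F16_BYTES_PER_WEIGHT
    return {
        device: generation_ceiling_tps(bandwidth, model_bytes)
        for device, bandwidth in MEMORY_BANDWIDTH_GB_S.items()
    }


if __name__ == "__main__":
    for device, ceiling in ceilings().items():
        measured = MEASURED_TPS[device]
        print(
            f"{device}: ceiling {ceiling:.1f} tok/s, measured {measured} tok/s "
            f"({measured / ceiling:.0%} of ceiling)"
        )
```

Under these assumptions both measured speeds sit below their ceilings, and the ratio between the two ceilings is close to the observed gap of about 2x, which suggests that generation on both devices is governed mainly by memory bandwidth rather than core count.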

Model Size and Performance: Quantization and Trade-offs

The performance of these devices varies significantly depending on the size and quantization level of the LLM model.

- Smaller Models (Llama 2 7B, Llama 3 8B): On Llama 3 8B, the 3090 leads in both processing and generation speed. The M3 Max still generates 50.74 tokens/second at Q4KM, which is fast enough for interactive use.

- Larger Models (Llama 3 70B): Only the M3 Max has a result here (Q4KM: 62.88 tokens/second processing, 7.53 tokens/second generation), so no conclusive comparison is possible. GPUs like the 3090 are strong at parallel processing, but a model has to fit in memory first. The 3090's 24 GB of VRAM is a hard limit, and a 4-bit 70B model needs considerably more than that for its weights alone, which likely explains the missing data.
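
Whether a model fits in memory can be estimated with a back-of-the-envelope calculation: parameter count times bits per weight, plus some headroom for the context cache and runtime buffers. The sketch below is hedged on several assumptions: the parameter counts (8.03B and 70.6B), an average of about 4.8 bits per weight for Q4KM, a flat 2 GB of overhead, and a 64 GB M3 Max configuration (the benchmark data does not state how much memory the tested machine had).

```python
def weights_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


def fits(
    params_billion: float,
    bits_per_weight: float,
    memory_gb: float,
    overhead_gb: float = 2.0,  # assumption: flat allowance for cache and buffers
) -> bool:
    """True if weights plus the assumed overhead fit in the given memory."""
    return weights_gb(params_billion, bits_per_weight) + overhead_gb <= memory_gb


# All values below are illustrative assumptions, not benchmark data.
MODELS = {"Llama 3 8B": 8.03, "Llama 3 70B": 70.6}     # billions of parameters
FORMATS = {"F16": 16.0, "Q4KM": 4.8}                   # average bits per weight
DEVICES = {"3090": 24.0, "M3 Max (assumed 64 GB)": 64.0}

if __name__ == "__main__":
    for device, memory_gb in DEVICES.items():
        for model, params in MODELS.items():
            for fmt, bits in FORMATS.items():
                size = weights_gb(params, bits)
                verdict = "fits" if fits(params, bits, memory_gb) else "does not fit"
                print(f"{device}: {model} {fmt} (~{size:.1f} GB) {verdict}")
```

With these numbers, the pattern of fits and misses lines up with where the benchmark lists show "Data unavailable": neither 70B variant fits on the 3090, and 70B F16 fits on neither device.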

Quantization: A Balancing Act

Quantization is like compressing a large file. It reduces the size of the model by storing its weights with fewer bits, at some cost in accuracy. It's a common technique for optimizing local model performance. Accuracy depends on the format, not on the device: the same quantized model produces output of essentially the same quality on either machine.

- F16: This "half-precision" format stores each weight in 16 bits. It is the largest and most accurate of the formats tested here, and the slowest to generate with. Both devices handle it well for Llama 3 8B, with the 3090 roughly 2x faster at generation and more than 5x faster at processing.

- Q4KM: This roughly 4-bit format (Q4_K_M in llama.cpp naming) trades more accuracy for a much smaller footprint. It gives the fastest Llama 3 8B generation on both devices: 111.74 tokens/second on the 3090 and 50.74 on the M3 Max.

- Q8_0: This 8-bit format is a milder form of quantization, with about half the footprint of F16 and typically only a small loss in accuracy. Data is available only for the M3 Max (Llama 2 7B), where generation rises from 25.09 tokens/second at F16 to 42.75 at Q8_0.
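
To see how closely generation speed follows model size, the M3 Max's Llama 2 7B results can be set against the size reduction each format provides. This is a sketch under one assumption: the bits-per-weight figures for Q8_0 (8.5) and Q4_0 (4.5) are taken from llama.cpp's block layouts, in which each block of 32 weights carries one 16-bit scale.

```python
# Llama 2 7B generation speeds on the M3 Max (tokens/second), from the lists above.
GENERATION_TPS = {"F16": 25.09, "Q8_0": 42.75, "Q4_0": 66.31}

# Assumption: effective bits per weight, including the per-block scale.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def compare_to_f16() -> dict[str, tuple[float, float]]:
    """For each format, return (size reduction, generation speedup) relative to F16."""
    rows = {}
    for fmt, tps in GENERATION_TPS.items():
        size_ratio = BITS_PER_WEIGHT["F16"] / BITS_PER_WEIGHT[fmt]
        speed_ratio = tps / GENERATION_TPS["F16"]
        rows[fmt] = (round(size_ratio, 2), round(speed_ratio, 2))
    return rows


if __name__ == "__main__":
    for fmt, (size_ratio, speed_ratio) in compare_to_f16().items():
        print(f"{fmt}: {size_ratio}x smaller, {speed_ratio}x faster generation")
```

Generation speeds up as the model shrinks, though by less than the full size reduction, so the returns from more aggressive quantization diminish somewhat.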

Practical Recommendations for Use Cases

M3 Max: Ideal for Smaller Models and Interactive Applications

If you want responsive generation on smaller models for interactive applications like chatbots, or need to run a model as large as Llama 3 70B locally, the M3 Max is a solid choice:

- Chatbots: Fast token generation is key for responsive and engaging chatbot interactions.

- Text Editing and Generation: The M3 Max's efficiency with smaller models makes it ideal for tasks like grammar correction, paraphrasing, and creative writing.

- Research and Data Analysis: While it might not be the best choice for the largest LLMs, the M3 Max's versatility makes it suitable for analyzing smaller datasets and generating insights.

NVIDIA 3090: Powerhouse for Tasks Demanding Processing Speed

For models that fit in its 24 GB of VRAM and tasks that rely heavily on raw processing power, the 3090 reigns supreme:

- Image and Video Generation: The 3090 excels at tasks involving complex image and video processing, thanks to its dedicated GPU. Think of generating high-resolution images or manipulating video content with generative models.

- Scientific Computing and Deep Learning Research: When working with massive datasets and complex models, the 3090's GPU provides the processing power needed to accelerate training and research.

Choosing the Right Tool for the Job

The choice between the M3 Max and the 3090 ultimately depends on your specific needs. In these benchmarks the 3090 is faster wherever both devices have data: about 2x in generation and more than 5x in processing on Llama 3 8B. If your models fit in 24 GB of VRAM and speed is the priority, the 3090's dedicated GPU provides the horsepower. The M3 Max is slower but still quick enough for interactive use with smaller models, and it is the only device here with a Llama 3 70B result, which makes it the more flexible option for larger models.
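
To translate tokens/second into something you would feel in a chat session, the time for one response can be approximated as prompt tokens divided by processing speed plus output tokens divided by generation speed. The sketch below uses the Llama 3 8B Q4KM figures from this article; the 1000-token prompt and 200-token reply are hypothetical workload sizes chosen only for illustration.

```python
# Llama 3 8B Q4KM speeds (tokens/second) from the benchmark lists above.
SPEEDS = {
    "M3 Max": {"processing": 678.04, "generation": 50.74},
    "3090": {"processing": 3865.39, "generation": 111.74},
}


def response_time_s(
    prompt_tokens: int,
    output_tokens: int,
    processing_tps: float,
    generation_tps: float,
) -> float:
    """Approximate seconds to read the prompt and then generate the reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


def chat_turn_times(prompt_tokens: int, output_tokens: int) -> dict[str, float]:
    """Estimated response time per device for one chat turn."""
    return {
        device: response_time_s(
            prompt_tokens, output_tokens, s["processing"], s["generation"]
        )
        for device, s in SPEEDS.items()
    }


if __name__ == "__main__":
    # Hypothetical workload: a 1000-token prompt and a 200-token reply.
    for device, seconds in chat_turn_times(1000, 200).items():
        print(f"{device}: about {seconds:.1f} s per response")
```

For this hypothetical turn the estimate comes out at a little over 5 seconds on the M3 Max and about 2 seconds on the 3090. Longer prompts widen the gap, since processing speed is where the two devices differ most.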

FAQ: Your Burning LLM and Device Questions Answered

What are the best LLM models for local use?

The "best" model depends on your use case.

- Smaller Models: Llama 2 7B and Llama 3 8B are popular options for local use, offering a good balance of performance and efficiency.

- Larger Models: Llama 3 70B and other large open-weight models may be better suited for specialized tasks or when you require more advanced capabilities, provided your hardware has enough memory to hold them.

What factors affect LLM performance on these devices?

Besides the device itself, other factors play a vital role:

- Model Size: Larger models require more memory and processing power, impacting performance.

- Quantization: Compressing the model (quantization) reduces its size but may compromise accuracy.

- Software and Libraries: The specific software used for running the LLM (like llama.cpp) can impact performance.

Is it necessary to have a powerful computer to run LLMs?

While powerful hardware is generally beneficial, it's possible to run LLMs on less powerful computers:

- Smaller Models: Even budget-friendly laptops can run quantized versions of smaller models like Llama 2 7B, though at lower speeds.

- Cloud-Based Solutions: Consider cloud-based services like Hugging Face Inference API or Google Colab, which provide access to powerful GPUs without requiring high-end hardware.

What is the future of LLM performance on local devices?

The future is bright! With the constant advancement of computing technology, we can expect even more efficient and powerful local LLM solutions:

- Newer Processors (like the M3): Expect even more powerful and energy-efficient CPUs.

- GPU Optimization: We'll likely see further improvements in GPU performance and specialized hardware tailored for LLMs.

- More Efficient Models: LLM developers are working on models that are smaller, more efficient, and require less computational power.

Keywords

Large Language Model, LLM, Apple M3 Max, NVIDIA 3090, Token Speed, Generation, Processing, Llama 2, Llama 3, Quantization, F16, Q4KM, Q8_0, Performance Benchmark, Local Inference, GPU, CPU, Bandwidth, Model Size, Chatbot, Image Generation, Deep Learning, Cloud-Based Solutions