Which is Better for Running LLMs Locally: Apple M3 Max (400 GB/s, 40 GPU Cores) or NVIDIA RTX 3080 Ti 12GB? Ultimate Benchmark Analysis

Introduction

The world of large language models (LLMs) is exploding, offering incredible potential for various applications, from creative writing and code generation to translation and research. However, running these powerful models often requires a significant amount of computational resources, making it challenging to do so locally.

This article dives deep into the performance of two popular hardware choices for running LLMs locally: the Apple M3 Max with 400 GB/s of memory bandwidth and a 40-core GPU, and the NVIDIA GeForce RTX 3080 Ti with 12GB of VRAM. We'll analyze their performance on Llama 2 and Llama 3 models at different precision and quantization levels, and provide practical recommendations for choosing the right device for your specific needs.

Setting the Stage: Understanding LLMs and Their Requirements

Imagine LLMs as incredibly complex brains that can process and generate human-quality text. These models are trained on massive amounts of data, enabling them to perform various tasks, such as:

- Text generation: Creating engaging stories, poems, and articles.

- Translation: Translating text between languages.

- Summarization: Condensing large amounts of text into shorter summaries.

- Code generation: Writing code in multiple programming languages.

However, running these "brains" requires a lot of computational power: a fast processor plus enough high-bandwidth memory to hold the model's weights and feed them to that processor quickly.

Comparing Apple M3 Max and NVIDIA 3080 Ti: A Head-to-Head Showdown

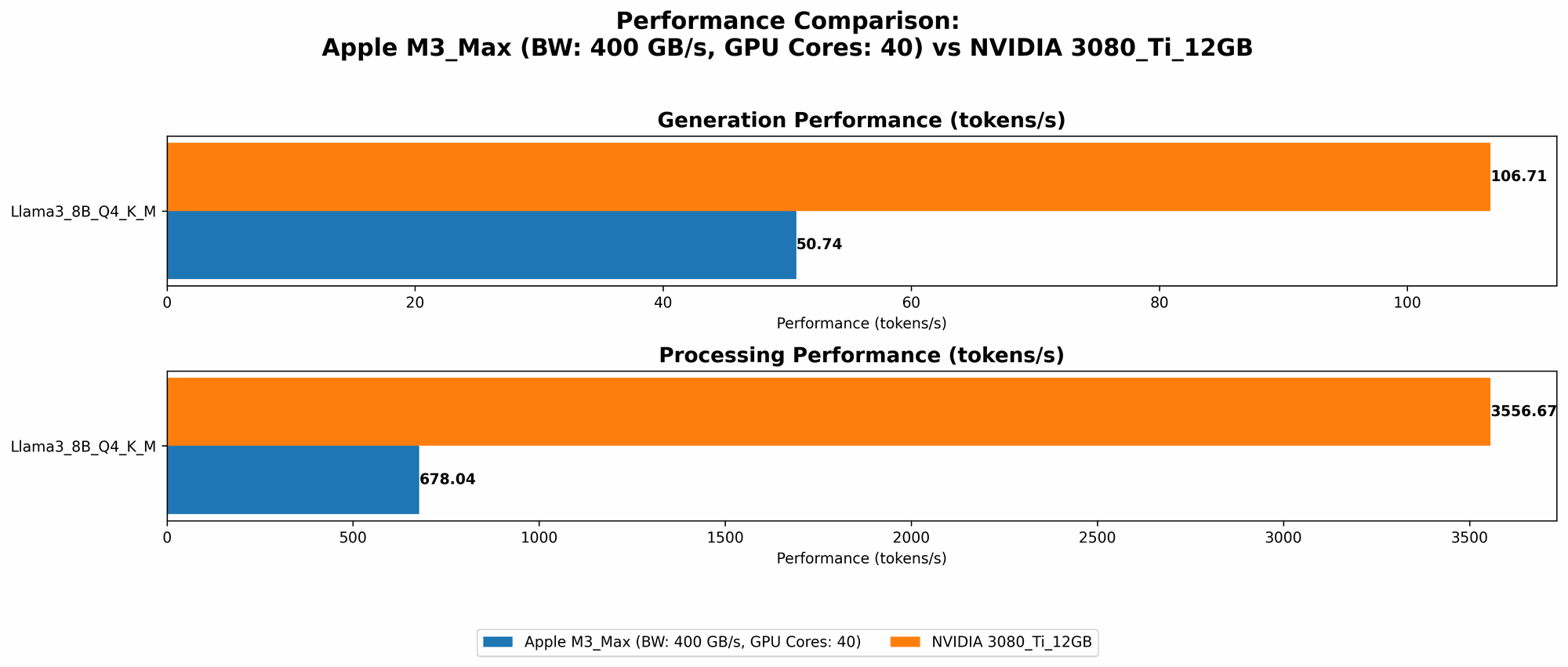

To understand which device reigns supreme for local LLM execution, we'll analyze their performance on several popular models: Llama 2 (7B) and Llama 3 (8B and 70B). We'll consider different quantization levels, which significantly impact performance. Quantization is like simplifying the model's "brain": it stores the model's weights at lower numerical precision (for example 4 or 8 bits instead of 16), so the model takes up less memory and can run on less powerful devices, at some cost in accuracy.
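To make the idea concrete, here is a minimal sketch of quantization in plain Python. It is a simplified illustration, not the scheme any particular runtime uses: the names `quantize_absmax` and `dequantize` and the sample weights are made up for this example, and it applies a single scale to the whole list, whereas formats such as Q4_0 and Q8_0 store a separate scale for each small block of weights.

```python
def quantize_absmax(weights, bits=8):
    """Map floats to signed integers using one shared scale (hypothetical helper)."""
    qmax = 2 ** (bits - 1) - 1              # 127 for 8 bits, 7 for 4 bits
    largest = max(abs(w) for w in weights)
    scale = largest / qmax if largest > 0 else 1.0
    quantized = [round(w / scale) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate floats from the stored integers."""
    return [q * scale for q in quantized]


def max_error(original, restored):
    """Largest absolute difference between original and restored weights."""
    return max(abs(a - b) for a, b in zip(original, restored))


# Made-up sample weights, for illustration only.
weights = [0.12, -0.53, 0.98, -0.07]

q8, s8 = quantize_absmax(weights, bits=8)
q4, s4 = quantize_absmax(weights, bits=4)

err8 = max_error(weights, dequantize(q8, s8))
err4 = max_error(weights, dequantize(q4, s4))

print("8-bit integers:", q8, "max error:", err8)
print("4-bit integers:", q4, "max error:", err4)
```

Fewer bits mean a coarser grid of representable values, so the 4-bit round trip loses more detail than the 8-bit one. That is the memory-versus-accuracy trade-off behind the quantization levels compared in this article.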

Performance Analysis: Tokens Per Second (TPS) - The Key to Speed

Imagine tokens as building blocks of text. The higher the TPS (tokens per second), the faster your chosen device can process and generate text.
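As a rough sketch of how a TPS figure can be measured, the snippet below times a generation call and divides the token count by the elapsed time. The `fake_generate` function is a hypothetical stand-in for a real model call, and `measure_tps` is a name invented for this example; it is not how the benchmark numbers in this article were produced.

```python
import time


def tokens_per_second(num_tokens, elapsed_seconds):
    """TPS is simply the number of tokens divided by the seconds taken."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return num_tokens / elapsed_seconds


def measure_tps(generate, prompt):
    """Time one call to `generate` and report its throughput."""
    start = time.perf_counter()
    tokens = generate(prompt)
    elapsed = time.perf_counter() - start
    return tokens_per_second(len(tokens), elapsed)


def fake_generate(prompt):
    """Hypothetical stand-in for a model: sleeps briefly, returns the words."""
    time.sleep(0.01)
    return prompt.split()


print(tokens_per_second(512, 2.0))   # 512 tokens in 2 seconds
print(measure_tps(fake_generate, "the quick brown fox jumps"))
```

When you benchmark a real model, it helps to time prompt processing and text generation separately, because the two phases can run at very different speeds.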

Table 1: Performance Comparison of M3 Max and 3080 Ti on Various LLMs

| LLM Model | Quantization Level | M3 Max (TPS) | 3080 Ti (TPS) |
|---|---|---|---|
| Llama 2 7B | F16 | 779.17 | N/A |
| Llama 2 7B | Q8_0 | 757.64 | N/A |
| Llama 2 7B | Q4_0 | 759.7 | N/A |
| Llama 3 8B | Q4_K_M | 678.04 | 3556.67 |
| Llama 3 8B | F16 | 751.49 | N/A |
| Llama 3 70B | Q4_K_M | 62.88 | N/A |
| Llama 3 70B | F16 | N/A | N/A |

Note: Some data is missing. This is because there isn't enough information available for certain LLM model and device combinations.
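Only one row of Table 1 has figures for both devices, so that is the only place a direct comparison is possible. The short sketch below copies the table's values and computes the ratio wherever both numbers exist; the `TABLE_1` list and the `speedup` helper are names introduced here for illustration.

```python
# Values copied from Table 1 (tokens per second); None marks missing data.
TABLE_1 = [
    ("Llama 2 7B", "F16", 779.17, None),
    ("Llama 2 7B", "Q8_0", 757.64, None),
    ("Llama 2 7B", "Q4_0", 759.7, None),
    ("Llama 3 8B", "Q4_K_M", 678.04, 3556.67),
    ("Llama 3 8B", "F16", 751.49, None),
    ("Llama 3 70B", "Q4_K_M", 62.88, None),
    ("Llama 3 70B", "F16", None, None),
]


def speedup(row):
    """Return 3080 Ti TPS divided by M3 Max TPS, or None if either is missing."""
    _, _, m3_max, rtx_3080_ti = row
    if m3_max is None or rtx_3080_ti is None:
        return None
    return rtx_3080_ti / m3_max


for row in TABLE_1:
    ratio = speedup(row)
    if ratio is not None:
        print(f"{row[0]} {row[1]}: 3080 Ti is {ratio:.2f}x the M3 Max")
```

On the one comparable row, Llama 3 8B at Q4_K_M, the 3080 Ti comes out a little over five times faster. Every other comparison in this article should be read with the missing cells in mind.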

Apple M3 Max: Unleashing the Power of Apple Silicon

The Apple M3 Max configuration tested here pairs a 40-core GPU with 400 GB/s of unified memory bandwidth shared between the CPU and GPU. This muscle translates into impressive token speed, particularly for smaller LLMs like Llama 2 7B.

Apple M3 Max Token Generation Speed: A Closer Look

- llama.cpp: The M3 Max shines with Llama 2 7B, achieving speeds up to 779.17 tokens per second (TPS) at F16 (unquantized half precision), making it a fantastic choice for running smaller LLMs locally.

- Q8_0 and Q4_0: The M3 Max continues to deliver high performance at the Q8_0 and Q4_0 quantization levels, reaching 757.64 and 759.7 TPS on the Llama 2 7B model. This highlights the device's ability to efficiently handle various quantization levels, ensuring flexibility for different model sizes and resource constraints.

The Power of the Beast: M3 Max Strengths

- Excellent performance on smaller LLMs: The M3 Max excels with smaller models like Llama 2 7B, thanks to its 40-core GPU and fast unified memory.

- Good performance on larger LLMs: Performance drops for larger models (62.88 TPS on Llama 3 70B Q4_K_M versus 678.04 TPS on Llama 3 8B), but the M3 Max can still run them. Its unified memory, configurable up to 128 GB, gives the GPU access to far more memory than the 3080 Ti's 12 GB of VRAM, so large models can be loaded in full.

- Energy Efficiency: Apple chips are known for their energy efficiency. Running LLMs on the M3 Max might consume less power compared to a high-powered GPU, translating to lower electricity bills.

M3 Max: Where it Falls Short

- Lower Raw GPU Throughput: The M3 Max's integrated GPU cannot match a high-end discrete GPU on models that fit in VRAM. On Llama 3 8B Q4_K_M, it reaches 678.04 TPS versus 3556.67 TPS on the NVIDIA 3080 Ti, making it roughly 5x slower.

NVIDIA GeForce RTX 3080 Ti: The GPU Powerhouse

The 3080 Ti is designed to handle complex graphics and compute-intensive tasks, making it a suitable candidate for running LLMs. Its powerful GPU cores and fast dedicated VRAM offer significant advantages for models that fit in its 12 GB of memory, such as a quantized Llama 3 8B.

3080 Ti Performance: Dominating Models That Fit in VRAM

- Llama 3 8B: The 3080 Ti shines on Llama 3 8B, achieving 3556.67 TPS with Q4_K_M quantization. That is about 5.2x the M3 Max's 678.04 TPS on the same model, highlighting the GPU's strength in handling LLM computations.

- Larger LLMs: Table 1 has no 3080 Ti results for larger models. The card's 12 GB of VRAM is the limiting factor: a model whose weights exceed it, such as Llama 3 70B, must be partly offloaded to system RAM, which gives up much of the GPU's speed advantage.

The King of GPU Power: 3080 Ti Strengths

- Superior performance on models that fit in VRAM: The 3080 Ti outperforms the M3 Max by roughly 5x on Llama 3 8B Q4_K_M, thanks to its dedicated GPU power.

- Optimized for LLMs: NVIDIA GPUs are widely used for running LLMs, meaning you can leverage existing libraries, tools, and optimization techniques for better performance and efficiency.

3080 Ti: Where it Struggles

- Energy Consumption: GPUs are power-hungry. Running large LLMs on a 3080 Ti will consume significantly more power compared to the M3 Max, potentially leading to higher electricity costs.

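- Limited Versatility and VRAM: The 3080 Ti is a discrete graphics card that needs a host PC around it, whereas the M3 Max is a complete system-on-chip in a machine that also handles tasks like video editing and software development. Its 12 GB of VRAM also caps the size of model it can hold entirely on the GPU.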
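Energy use is easier to compare per token than per hour, because a faster device finishes sooner. The sketch below shows the arithmetic only. The power figures are placeholders chosen for illustration, not measurements of either device, and the function names are invented for this example; the TPS values are the Llama 3 8B Q4_K_M figures from Table 1.

```python
def joules_per_token(power_watts, tokens_per_second):
    """Energy per token: watts are joules per second, so divide by TPS."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return power_watts / tokens_per_second


def cost_per_million_tokens(power_watts, tokens_per_second, price_per_kwh):
    """Electricity cost for one million tokens at a given price per kWh."""
    joules = joules_per_token(power_watts, tokens_per_second) * 1_000_000
    kwh = joules / 3_600_000          # 1 kWh = 3.6 million joules
    return kwh * price_per_kwh


# PLACEHOLDER power draws in watts: illustrative guesses, NOT measurements.
PLACEHOLDER_POWER_W = {"M3 Max": 60.0, "3080 Ti": 350.0}

# Llama 3 8B Q4_K_M throughput from Table 1.
TPS = {"M3 Max": 678.04, "3080 Ti": 3556.67}

for device, watts in PLACEHOLDER_POWER_W.items():
    energy = joules_per_token(watts, TPS[device])
    print(f"{device}: {energy:.3f} J per token (with placeholder wattage)")
```

Replace the placeholder wattages with readings from your own system before drawing conclusions. A device that draws more power can still use less energy per token if it is proportionally faster.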

Use Case Recommendations: Choosing the Right Weapon for your LLM Battles

Now that we've analyzed the strengths and weaknesses of both devices, let's break down the ideal use cases for each:

Apple M3 Max:

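- Small and Medium-Sized LLMs: If you're primarily working with smaller LLMs like Llama 2 7B, the M3 Max is an excellent choice. Its GPU, unified memory, and energy efficiency provide a cost-effective solution for local LLM execution. Its memory capacity also makes it the only one of the two devices with a Llama 3 70B result in Table 1.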

- General-Purpose Computing: The M3 Max is a versatile device that can handle various tasks, including video editing, software development, and running smaller LLMs.

NVIDIA GeForce RTX 3080 Ti:

- Models That Fit in 12 GB of VRAM: If you're running models like a quantized Llama 3 8B, the 3080 Ti's GPU power is hard to beat. It delivers about 5x the M3 Max's throughput on that model, providing lightning-fast token processing.

- Dedicated LLM Workflows: If you primarily focus on running LLMs, the 3080 Ti offers the best performance and is a worthwhile investment for dedicated workflows.

Key Takeaways and Final Verdict: Who Wins the LLM Showdown?

The verdict on which device reigns supreme depends entirely on your specific needs and use case.

Apple M3 Max:

- Pros: Excellent performance on smaller LLMs, energy efficiency, versatility for other tasks.

- Cons: Lower raw GPU throughput than the 3080 Ti on models that fit in VRAM.

NVIDIA GeForce RTX 3080 Ti:

- Pros: Superior performance on models that fit in its 12 GB of VRAM, optimized for LLM workflows.

- Cons: High energy consumption, a 12 GB VRAM ceiling, limited versatility for other tasks.

Choosing the right weapon depends on your LLM battles! If you value energy efficiency, versatility, and the memory headroom to load models as large as Llama 3 70B, the M3 Max is a strong contender. However, if you need the absolute fastest speeds on models that fit in 12 GB of VRAM and are willing to sacrifice energy efficiency and versatility, the 3080 Ti is a powerhouse for your computational needs.

FAQ: Busting LLM Myths and Demystifying Hardware

Q1: Can I run Llama 3 70B on my gaming laptop with an RTX 2080? A: Not comfortably. The RTX 2080 has 8 GB of VRAM, while Llama 3 70B needs roughly 40 GB for its weights even at 4-bit quantization, so most of the model would have to be offloaded to system RAM and would run slowly. A smaller model such as Llama 3 8B at 4-bit quantization is a much better fit. Keep in mind that the 3080 Ti (12 GB) and even the RTX 4090 (24 GB) cannot hold a 4-bit 70B model entirely in VRAM either.
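A rough way to sanity-check whether a model's weights fit in a given amount of memory is to multiply the parameter count by the bits stored per weight. The sketch below does that under stated assumptions: it uses nominal parameter counts (8 and 70 billion), 4.5 bits per weight (what Q4_0's layout of 32 four-bit weights plus a 16-bit scale per block works out to), and a hypothetical `headroom` fraction to leave room for the context cache and other buffers. The function names are invented for this example.

```python
GB = 1e9  # decimal gigabytes


def weight_size_gb(num_params, bits_per_weight):
    """Approximate size of the weights alone, in GB."""
    return num_params * bits_per_weight / 8 / GB


def fits(num_params, bits_per_weight, memory_gb, headroom=0.8):
    """True if the weights fit in `headroom` of the memory (assumed fraction)."""
    return weight_size_gb(num_params, bits_per_weight) <= memory_gb * headroom


MODELS = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}          # nominal sizes
DEVICES_GB = {"RTX 2080": 8, "RTX 3080 Ti": 12, "RTX 4090": 24}

for model, params in MODELS.items():
    size = weight_size_gb(params, 4.5)
    for device, memory in DEVICES_GB.items():
        verdict = "fits" if fits(params, 4.5, memory) else "does not fit"
        print(f"{model} (~{size:.1f} GB at 4.5 bits) {verdict} in {device}")
```

This is an estimate of the weights only. Real memory use is higher and depends on the runtime and context length, so treat a borderline "fits" as a maybe.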

Q2: What are the benefits of using quantization for LLMs? A: Quantization is like simplifying the model's "brain." By storing the model's weights at lower precision (for example 4 bits instead of 16), it shrinks the model's memory footprint and lets it run faster on devices with limited resources, usually at the cost of a small loss in output quality. Think of it like using a smaller, less detailed map to navigate a city instead of carrying a massive, complex map.

Q3: Will I need even more powerful hardware in the future for larger LLMs? A: Absolutely! The field of LLMs is rapidly evolving. As models become even larger and more complex, the demand for computational power will continue to increase. This means that we'll see new hardware innovations emerge to meet these growing needs.

Keywords:

Large Language Model, LLM, Llama2, Llama3, Apple M3 Max, NVIDIA GeForce RTX 3080 Ti, Performance Benchmark, Tokens Per Second (TPS), Quantization, GPU, CPU, Local Inference