Which Is Better for Running LLMs Locally: Apple M3 Max (400 GB/s, 40-Core GPU) or NVIDIA 3080 10GB? Ultimate Benchmark Analysis

Introduction

The world of large language models (LLMs) is booming, and running these powerful models locally is becoming increasingly popular. Whether you're a developer experimenting with new models or a researcher fine-tuning your own, having a powerful machine to handle the processing can make a big difference.

This article presents a benchmark analysis of two popular options for running LLMs locally: the Apple M3 Max (the variant with a 40-core GPU and 400 GB/s of memory bandwidth) and the NVIDIA 3080 10GB. We'll compare their performance on key LLM benchmarks, analyze their strengths and weaknesses, and help you decide which is the best fit for your needs.

Apple M3 Max Token Speed Generation: A Closer Look

The Apple M3 Max benchmarked here is a powerful chip, with a 40-core GPU and 400 GB/s of unified memory bandwidth. But how does it stack up against the NVIDIA 3080 10GB in terms of LLM processing power? Let's break it down.

Llama 2 7B: A Comparative Analysis

The Apple M3 Max shines when it comes to processing the Llama 2 7B model.

- Processing Speed: The M3 Max achieves an impressive 779.17 tokens per second for F16 processing and 757.64 tokens per second for Q8_0 processing.

- Generation Speed: The M3 Max also excels in generation speed, achieving 25.09 tokens per second for F16 and 42.75 tokens per second for Q8_0.

Prompt processing is nearly identical at both precisions, but Q8_0 generates tokens about 1.7 times faster than F16, so the quantized model is the better choice when response time matters.
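To make these rates more concrete, here is a minimal sketch that turns them into a rough response time. The 1,000-token prompt and 200-token reply are a hypothetical workload chosen for illustration, and the helper name `estimate_latency_s` is not from any library. It ignores model load time and other overhead.

```python
def estimate_latency_s(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Rough end-to-end time: prompt processing plus token-by-token generation."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Llama 2 7B on the M3 Max, using the tokens-per-second figures quoted above.
M3_MAX_LLAMA2_7B = {
    "F16": {"processing": 779.17, "generation": 25.09},
    "Q8_0": {"processing": 757.64, "generation": 42.75},
}

# Hypothetical workload (an assumption): 1,000-token prompt, 200-token reply.
latencies = {
    quant: estimate_latency_s(1000, 200, tps["processing"], tps["generation"])
    for quant, tps in M3_MAX_LLAMA2_7B.items()
}

for quant, seconds in latencies.items():
    print(f"{quant}: {seconds:.1f} s")
```

For a workload like this, generation dominates the total, which is why the generation rate matters more than the processing rate for interactive use.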

Llama 3 8B: Exploring the Q4KM Advantage

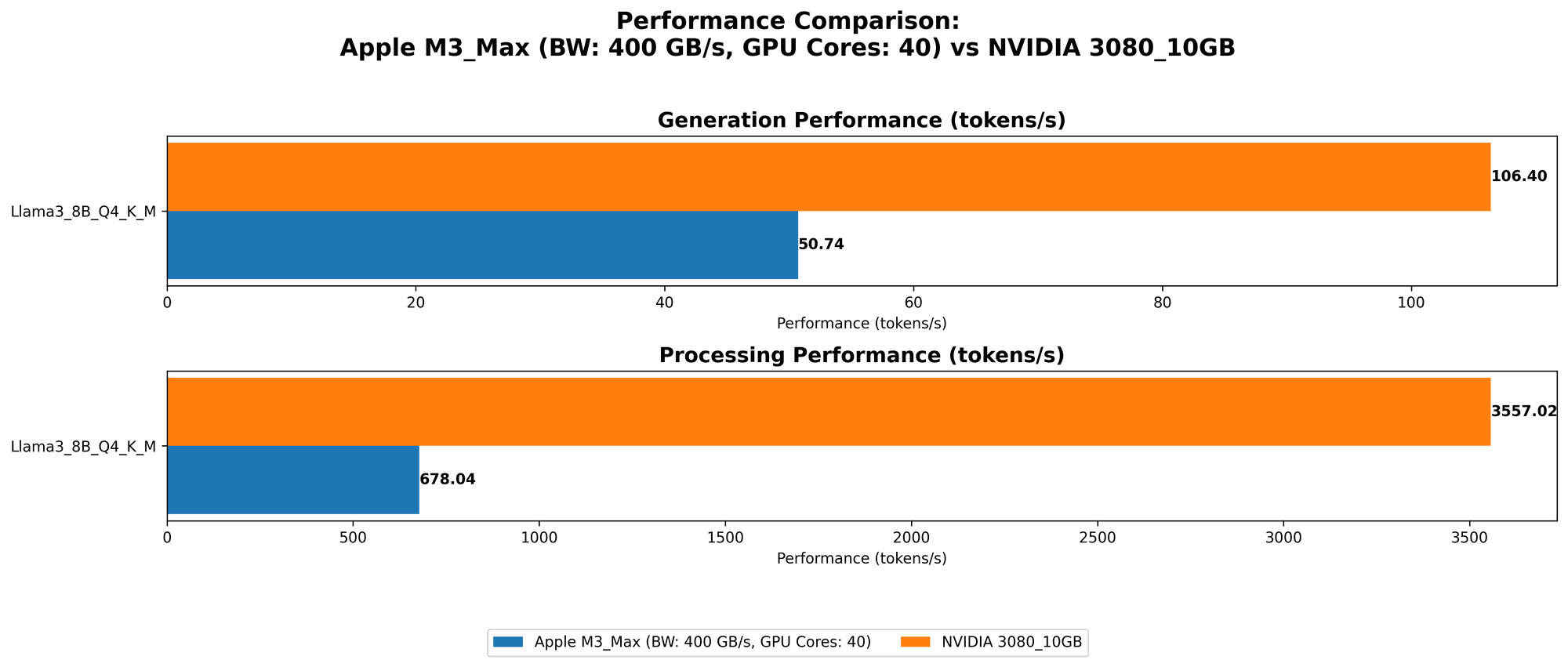

The Apple M3 Max's performance on the Llama 3 8B model is remarkable, particularly with Q4KM quantization.

- Processing Speed: The M3 Max processes Llama 3 8B prompts at 678.04 tokens per second using Q4KM quantization.

- Generation Speed: The M3 Max's generation speed with Q4KM is 50.74 tokens per second.

F16 Performance on Llama 3 8B

On Llama 3 8B with F16, the M3 Max trades slightly faster prompt processing for much slower generation:

- Processing Speed: With F16, the M3 Max achieves 751.49 tokens per second for processing, about 11% faster than its Q4KM result of 678.04.

- Generation Speed: The generation speed for F16 is 22.39 tokens per second, less than half the 50.74 tokens per second it reaches with Q4KM.

Llama 3 70B: A Look at the Limitations

No data is available for the Apple M3 Max running Llama 3 70B with either F16 or Q4KM. Missing results are not evidence of poor performance by themselves, but memory is a plausible reason for the F16 gap: 70 billion weights at 16 bits each need roughly 140 GB, more than the 128 GB maximum unified memory of an M3 Max.
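A quick way to check whether a model can fit at all is to estimate the size of its weights. The sketch below is a back-of-the-envelope calculation, not a measurement. It assumes a round 70 billion parameters, an effective 4.85 bits per weight for Q4KM, and 128 GB of unified memory (the largest M3 Max configuration; the source does not state the benchmarked machine's memory size). It ignores the KV cache and runtime overhead, so real requirements are higher.

```python
def weight_footprint_gb(n_params_billion, bits_per_weight):
    """Approximate size of the weights alone, in GB (10^9 bytes)."""
    return n_params_billion * bits_per_weight / 8


def fits(n_params_billion, bits_per_weight, memory_gb):
    """True if the weights alone fit in the given amount of memory."""
    return weight_footprint_gb(n_params_billion, bits_per_weight) <= memory_gb


ASSUMED_MEMORY_GB = 128    # assumption: largest M3 Max unified-memory option
ASSUMED_Q4KM_BITS = 4.85   # assumption: effective bits per weight for Q4KM

f16_gb = weight_footprint_gb(70, 16)
q4km_gb = weight_footprint_gb(70, ASSUMED_Q4KM_BITS)

print(f"70B F16:  {f16_gb:.0f} GB, fits: {fits(70, 16, ASSUMED_MEMORY_GB)}")
print(f"70B Q4KM: {q4km_gb:.0f} GB, fits: {fits(70, ASSUMED_Q4KM_BITS, ASSUMED_MEMORY_GB)}")
```

Under these assumptions the F16 weights do not fit, while the Q4KM weights would, so the missing Q4KM result is not explained by capacity alone.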

NVIDIA 3080 10GB: GPU Power for LLMs

The NVIDIA 3080 10GB is a powerhouse graphics card designed for demanding tasks like gaming and video editing. But how does it fare in the realm of LLMs?

Llama 3 8B: A Notable Performance with Q4KM

The NVIDIA 3080 10GB shines when it comes to Llama 3 8B with Q4KM, where it beats the M3 Max on both processing and generation:

- Processing Speed: The NVIDIA 3080 10GB achieves 3557.02 tokens per second for Q4KM processing, roughly 5.2 times the M3 Max's 678.04.

- Generation Speed: The NVIDIA 3080 10GB generates text at 106.4 tokens per second, roughly 2.1 times the M3 Max's 50.74.
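The gap between the two devices on this benchmark is easy to quantify. The following sketch computes the speedup ratios from the figures quoted in this article; the dictionary layout and the `speedup` helper are illustrative choices, not part of any benchmark tool.

```python
# Llama 3 8B with Q4KM, tokens per second, as quoted in this article.
LLAMA3_8B_Q4KM = {
    "Apple M3 Max": {"processing": 678.04, "generation": 50.74},
    "NVIDIA 3080 10GB": {"processing": 3557.02, "generation": 106.4},
}


def speedup(results, fast, slow, metric):
    """How many times faster `fast` is than `slow` on the given metric."""
    return results[fast][metric] / results[slow][metric]


ratios = {
    metric: speedup(LLAMA3_8B_Q4KM, "NVIDIA 3080 10GB", "Apple M3 Max", metric)
    for metric in ("processing", "generation")
}

for metric, ratio in ratios.items():
    print(f"{metric}: {ratio:.2f}x")
```

The 3080's advantage is much larger for prompt processing than for generation, which suggests the two phases are limited by different resources.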

The Missing Data: Limitations of the NVIDIA 3080

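No data is available for the NVIDIA 3080 10GB on Llama 3 8B with F16, Llama 3 70B with either Q4KM or F16, or Llama 2 7B. Memory capacity likely explains some of these gaps: the F16 weights of an 8B model alone take roughly 16 GB, which exceeds the card's 10 GB of VRAM, and a 70B model is larger still at either precision. The 3080's performance outside Llama 3 8B with Q4KM therefore remains unknown.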

Performance Analysis: Strengths and Weaknesses

Apple M3 Max: Unified Memory Advantage

The Apple M3 Max's unified memory architecture is a key strength. The CPU and GPU share one memory pool, so model weights do not have to be copied into a separate pool of video memory, and models larger than a typical consumer GPU's VRAM can still run on the GPU. This likely explains why the M3 Max has F16 results for Llama 2 7B and Llama 3 8B while the 10 GB 3080 does not.

However, the M3 Max is slower on the one benchmark where the two devices can be compared directly, and it has no results for Llama 3 70B. Token generation is largely limited by memory bandwidth, and the M3 Max's 400 GB/s is below that of the 3080's dedicated GDDR6X memory (about 760 GB/s), which is consistent with its lower generation speed on Llama 3 8B with Q4KM.
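One way to sanity-check the memory-bandwidth point is a simple ceiling estimate: if generating each token requires reading every weight once, generation speed cannot exceed bandwidth divided by model size. The sketch below applies that simplified model to the M3 Max's F16 result for Llama 3 8B. It assumes the "400" in the device's label is its memory bandwidth in GB/s and rounds the model to 8 billion weights at 2 bytes each; real runtimes have additional overhead.

```python
def generation_ceiling_tps(bandwidth_gb_s, model_size_gb):
    """Upper bound on tokens/s if each token requires reading all weights once."""
    return bandwidth_gb_s / model_size_gb


M3_MAX_BANDWIDTH_GB_S = 400   # assumption: the "400" in the label is GB/s
LLAMA3_8B_F16_GB = 8 * 2      # assumption: ~8 billion weights x 2 bytes each

ceiling = generation_ceiling_tps(M3_MAX_BANDWIDTH_GB_S, LLAMA3_8B_F16_GB)
measured = 22.39              # F16 generation speed quoted in this article

print(f"ceiling {ceiling:.1f} tok/s, measured {measured} tok/s "
      f"({measured / ceiling:.0%} of ceiling)")
```

The measured F16 generation speed lands close to this ceiling, which supports the view that generation on the M3 Max is bound by memory bandwidth rather than compute.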

NVIDIA 3080: GPU Processing Power

The NVIDIA 3080 10GB excels in raw GPU throughput. On Llama 3 8B with Q4KM it processes prompts about 5.2 times faster than the M3 Max and generates tokens about 2.1 times faster.

However, the 3080's 10 GB of VRAM is its main constraint. A model has to fit in VRAM to run fully on the GPU, which rules out F16 weights for an 8B model (roughly 16 GB) and any 70B configuration. This is the likely reason those results are missing.

Practical Recommendations and Use Cases

When to Choose the Apple M3 Max

- Smaller Models: If you primarily work with smaller LLM models like Llama 2 7B and Llama 3 8B, the Apple M3 Max's unified memory and Q4KM performance make it a solid choice.

- Experimentation: The M3 Max is perfect for experimenting with different model sizes, quantization methods, and workflows, thanks to its fast processing and generation capabilities.

- Budget-Conscious: The M3 Max generally offers a better price-to-performance ratio compared to some dedicated AI accelerators.

When to Choose the NVIDIA 3080

- High-Performance Processing: If you need super-fast processing speeds for Llama 3 8B with Q4KM quantization, the NVIDIA 3080 10GB is the clear winner.

- GPU-Accelerated Applications: The 3080's GPU architecture makes it suitable for applications that leverage GPU acceleration, such as machine learning and deep learning tasks.

Conclusion

The choice between the Apple M3 Max (40-core GPU, 400 GB/s) and the NVIDIA 3080 10GB ultimately depends on your specific needs. On the one benchmark both devices share, Llama 3 8B with Q4KM, the 3080 is clearly faster at both processing and generation. The M3 Max is slower but also has results at F16 and Q8_0, which makes it the more flexible option for models and precisions that do not fit in 10 GB of VRAM.

For developers and researchers working with LLMs, understanding the strengths and limitations of each device can help you make informed decisions to optimize your workflow and leverage their capabilities.

FAQ

What are LLMs?

Large language models (LLMs) are artificial intelligence systems trained on massive amounts of text data, allowing them to generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way.

What is quantization in the context of LLMs?

Quantization is a technique that reduces the size of an LLM by representing its weights (and sometimes activations) using fewer bits. A smaller model uses less memory and usually generates tokens faster, because less data has to be read for each token, but it can also lose some accuracy.
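To illustrate the idea, here is a minimal sketch of symmetric 8-bit quantization for a single block of weights. It is a simplified teaching example, not the exact storage format used by any particular runtime, and the weight values are made up.

```python
def quantize_q8(weights):
    """Symmetric 8-bit quantization of one block: a float scale plus integer codes."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs else 1.0
    codes = [round(w / scale) for w in weights]  # integers in [-127, 127]
    return scale, codes


def dequantize_q8(scale, codes):
    """Recover approximate weights from the scale and integer codes."""
    return [scale * c for c in codes]


block = [0.12, -0.5, 0.33, 0.0, 0.25, -0.07, 0.41, -0.29]  # made-up weights
scale, codes = quantize_q8(block)
restored = dequantize_q8(scale, codes)
max_error = max(abs(a - b) for a, b in zip(block, restored))

print(codes)
print(f"max round-trip error: {max_error:.4f}")
```

Each weight is stored as a small integer instead of a 16-bit float, and the round-trip error stays within half of one quantization step. Lower-bit formats follow the same principle with coarser steps, which is where the accuracy loss comes from.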

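What do F16, Q8, and Q4KM mean?

- F16: Float16, a data type that uses 16 bits to represent a floating-point number. In these benchmarks it is the unquantized baseline.

- Q8: Quantization with about 8 bits per weight (listed as Q8_0 in the results above), roughly halving memory usage relative to F16 with little loss of accuracy.

- Q4KM: Shorthand for Q4_K_M, a llama.cpp quantization format that stores weights at about 4 bits each. The "K" refers to the k-quant family, which quantizes weights in small blocks with their own scale factors, and the "M" is the medium variant, which keeps some tensors at higher precision to preserve accuracy.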

Keywords

LLMs, Large Language Models, Apple M3 Max, NVIDIA 3080, GPU, CPU, unified memory, token speed, processing speed, generation speed, Llama2, Llama3, F16, Q8, Q4KM, quantization, performance comparison, benchmark analysis, local inference, AI, machine learning, deep learning