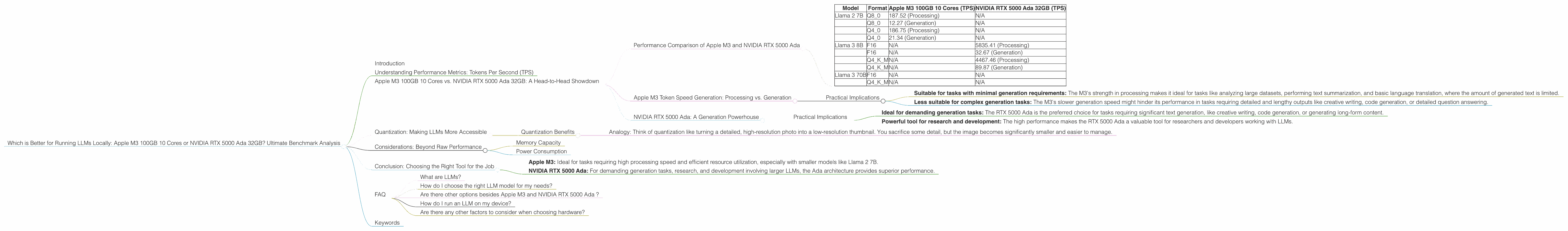

Which Is Better for Running LLMs Locally: Apple M3 100GB 10 Cores or NVIDIA RTX 5000 Ada 32GB? Ultimate Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is booming, and developers are constantly looking for ways to run these powerful models locally. While cloud services like Google Colab offer powerful options, running LLMs on your own machine provides greater control, faster access, and reduced latency. But choosing the right hardware for the job can be a daunting task.

This article will dive into the performance comparison of two popular options for local LLM execution: the Apple M3 processor with 100GB of memory and 10 cores, and the NVIDIA RTX 5000 Ada graphics card with 32GB of memory. We'll analyze their strengths and weaknesses, evaluate their performance, and provide practical recommendations for different use cases.

Buckle up, as we embark on a data-driven journey to discover the ultimate champion for local LLM execution.

Understanding Performance Metrics: Tokens Per Second (TPS)

Before we jump into the comparison, let's clarify the main performance metric we'll be using: Tokens per second (TPS).

TPS measures the speed at which a device can process and generate text tokens. A higher TPS generally indicates better performance. Think of it like this: the more tokens a device can process per second, the faster it can generate responses, translate text, or perform other language-based tasks.
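As a rough illustration of the metric, TPS is simply a token count divided by elapsed wall-clock time. The sketch below is a minimal example, not how any particular benchmark tool measures it; `produce_tokens` is a hypothetical stand-in for whatever produces tokens on your machine.

```python
import time
from typing import Callable, Sequence


def tokens_per_second(token_count: int, elapsed_seconds: float) -> float:
    """Return throughput in tokens per second."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return token_count / elapsed_seconds


def measure_tps(produce_tokens: Callable[[], Sequence[str]]) -> float:
    """Time one call to `produce_tokens` and return its TPS.

    `produce_tokens` is a hypothetical callable (for example, a wrapper
    around a local model); it is not part of any real library.
    """
    start = time.perf_counter()
    tokens = produce_tokens()
    elapsed = time.perf_counter() - start
    return tokens_per_second(len(tokens), elapsed)


if __name__ == "__main__":
    # 256 tokens in 12 seconds is about 21.3 TPS.
    print(round(tokens_per_second(256, 12.0), 1))
```

Benchmarks usually report two such figures separately: one for processing the prompt and one for generating new tokens.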

Apple M3 100GB 10 Cores vs. NVIDIA RTX 5000 Ada 32GB: A Head-to-Head Showdown

Performance Comparison of Apple M3 and NVIDIA RTX 5000 Ada

| Model | Format | Apple M3 100GB 10 Cores (TPS) | NVIDIA RTX 5000 Ada 32GB (TPS) |
|---|---|---|---|
| Llama 2 7B | Q8_0 | 187.52 (Processing) | N/A |
| Llama 2 7B | Q8_0 | 12.27 (Generation) | N/A |
| Llama 2 7B | Q4_0 | 186.75 (Processing) | N/A |
| Llama 2 7B | Q4_0 | 21.34 (Generation) | N/A |
| Llama 3 8B | F16 | N/A | 5835.41 (Processing) |
| Llama 3 8B | F16 | N/A | 32.67 (Generation) |
| Llama 3 8B | Q4_K_M | N/A | 4467.46 (Processing) |
| Llama 3 8B | Q4_K_M | N/A | 89.87 (Generation) |
| Llama 3 70B | F16 | N/A | N/A |
| Llama 3 70B | Q4_K_M | N/A | N/A |

Key Observations:

- Limited Data Availability: The benchmark data for the Apple M3 covers only Llama 2 7B, and the NVIDIA RTX 5000 Ada data covers only Llama 3 8B. No model was measured on both devices, so a direct comparison is difficult.

- Apple M3 Performance: On Llama 2 7B, the Apple M3 processes prompts at about 187 TPS in both Q8_0 and Q4_0 formats. Generation is much slower, at 12.27 TPS (Q8_0) and 21.34 TPS (Q4_0), so long outputs will take noticeably more time than long prompts.

- NVIDIA RTX 5000 Ada Dominates: On Llama 3 8B, the RTX 5000 Ada processes prompts at 5835.41 TPS in F16 and 4467.46 TPS in Q4_K_M, roughly 24 to 31 times the M3's Llama 2 7B figure, although the two were measured on different models. Generation is again far slower than processing, at 32.67 TPS (F16) and 89.87 TPS (Q4_K_M).

- No Comparative Data for Several Model and Device Pairs: No results are available for Llama 3 70B on either device, for Llama 2 7B on the RTX 5000 Ada, or for Llama 3 8B on the M3, highlighting the need for more comprehensive benchmarks.

Let's delve deeper into the analysis of each device's performance and compare their strengths and weaknesses.

Apple M3 Token Speed Generation: Processing vs. Generation

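The Apple M3 shows a clear gap between its processing and generation speeds: about 187 TPS for processing versus 12 to 21 TPS for generation. A gap of this kind is expected on any hardware. Prompt tokens can be processed in parallel as a batch, while output tokens are generated one at a time, and each new token requires reading the model weights from memory again. Generation therefore tends to be limited by memory bandwidth rather than raw compute, and it says nothing about the quality or coherence of the output.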

Think of it like this: skimming a whole page at a glance is quick, but writing a reply one word at a time is slow, no matter how fast you can read.
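To see what the two speeds mean for a single request, total response time can be approximated as prompt tokens divided by processing TPS plus output tokens divided by generation TPS. The sketch below applies that to the M3's Q4_0 figures from the table; the request size (512 prompt tokens, 128 output tokens) is an assumption chosen for illustration, and the estimate ignores model loading and other overhead.

```python
def response_time_seconds(
    prompt_tokens: int,
    output_tokens: int,
    processing_tps: float,
    generation_tps: float,
) -> float:
    """Estimate end-to-end time as prompt processing time plus generation time."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Apple M3, Llama 2 7B, Q4_0 figures from the table above.
M3_PROCESSING_TPS = 186.75
M3_GENERATION_TPS = 21.34

# Hypothetical request size, chosen only for illustration.
PROMPT_TOKENS = 512
OUTPUT_TOKENS = 128

total = response_time_seconds(
    PROMPT_TOKENS, OUTPUT_TOKENS, M3_PROCESSING_TPS, M3_GENERATION_TPS
)
generation_share = (OUTPUT_TOKENS / M3_GENERATION_TPS) / total

print(f"estimated total: {total:.2f} s")            # about 8.74 s
print(f"share spent generating: {generation_share:.0%}")  # about 69%
```

Even though the prompt is four times longer than the output in this example, most of the time goes to generation, which is why generation TPS usually matters more for interactive use.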

Practical Implications

- Suitable for tasks with minimal generation requirements: The M3's strength in processing makes it ideal for tasks like analyzing large datasets, performing text summarization, and basic language translation, where the amount of generated text is limited.

- Less suitable for complex generation tasks: The M3's slower generation speed might hinder its performance in tasks requiring detailed and lengthy outputs like creative writing, code generation, or detailed question answering.

NVIDIA RTX 5000 Ada: A Generation Powerhouse

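The NVIDIA RTX 5000 Ada posts the highest figures in the table for both processing and generation, all measured on Llama 3 8B: up to 5835.41 TPS for processing (F16) and up to 89.87 TPS for generation (Q4_K_M). Because these numbers come from a different model than the M3's, they suggest rather than prove that the Ada architecture is better suited to the heavy computation LLMs require.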

Practical Implications

- Ideal for demanding generation tasks: The RTX 5000 Ada is the preferred choice for tasks requiring significant text generation, like creative writing, code generation, or generating long-form content.

- Powerful tool for research and development: The high performance makes the RTX 5000 Ada a valuable tool for researchers and developers working with LLMs.

Quantization: Making LLMs More Accessible

Both the Apple M3 and the NVIDIA RTX 5000 Ada support quantization, a technique that reduces the size of LLM models by storing their weights at lower numeric precision, for example 8 or 4 bits per weight instead of 16. This results in smaller, more manageable models that require less memory and less memory bandwidth.

Quantization Benefits

- Reduced memory footprint: Quantized models can occupy significantly less memory, making them suitable for devices with limited RAM.

- Faster loading times: Smaller models load and run faster, reducing the time required for tasks.

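- Faster generation: Quantization can reduce accuracy, but it often speeds up generation. In the table above, moving from Q8_0 to Q4_0 raises the M3's generation speed from 12.27 to 21.34 TPS, and moving from F16 to Q4_K_M raises the RTX 5000 Ada's from 32.67 to 89.87 TPS. Processing speed does not always benefit: the RTX 5000 Ada processes prompts faster in F16 (5835.41 TPS) than in Q4_K_M (4467.46 TPS).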

Analogy: Think of quantization like turning a detailed, high-resolution photo into a low-resolution thumbnail. You sacrifice some detail, but the image becomes significantly smaller and easier to manage.
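The idea can be sketched in a few lines. The example below is a simplified symmetric scheme in which a block of weights shares one scale factor; it is meant only to show the principle and is not the exact Q8_0, Q4_0, or Q4_K_M layout used by any particular tool.

```python
from typing import List, Tuple


def quantize_block(weights: List[float], bits: int = 8) -> Tuple[float, List[int]]:
    """Map floats to signed integers that share a single scale factor."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    largest = max(abs(w) for w in weights)
    scale = largest / qmax if largest > 0 else 1.0
    # Round to the nearest integer step and clamp to the representable range.
    quants = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, quants


def dequantize_block(scale: float, quants: List[int]) -> List[float]:
    """Recover approximate float weights from the integers and the scale."""
    return [q * scale for q in quants]


def max_error(weights: List[float], bits: int) -> float:
    """Largest absolute difference between original and round-tripped weights."""
    scale, quants = quantize_block(weights, bits)
    restored = dequantize_block(scale, quants)
    return max(abs(w - r) for w, r in zip(weights, restored))


if __name__ == "__main__":
    block = [0.5, -1.0, 0.25, 0.0]
    print("8-bit max error:", max_error(block, 8))
    print("4-bit max error:", max_error(block, 4))
```

Fewer bits mean coarser steps between representable values, so the 4-bit round trip loses more detail than the 8-bit one. That is the accuracy cost paid for the smaller size.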

Considerations: Beyond Raw Performance

While raw performance metrics are crucial, other factors can influence your choice of hardware for running LLMs locally.

Memory Capacity

The memory capacity of your device determines which models you can load at all. The Apple M3 configuration discussed here offers 100GB of RAM, while the NVIDIA RTX 5000 Ada has 32GB. For larger models like Llama 3 70B, the extra capacity matters: at roughly 4.5 bits per weight, 70 billion weights take about 40GB before any working memory is counted, which exceeds the RTX 5000 Ada's 32GB but fits within 100GB.
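A back-of-the-envelope check makes this concrete. The sketch below estimates the size of the weights alone as parameter count times bits per weight. The bits-per-weight values are assumptions based on the usual llama.cpp block layouts (32 weights plus one 16-bit scale per block, giving 8.5 bits for Q8_0 and 4.5 bits for Q4_0); Q4_K_M is somewhat larger per weight than Q4_0, so Q4_0 serves here as a lower bound. The estimate ignores the KV cache and runtime overhead, and the capacities are the ones quoted in this article.

```python
# Approximate bits stored per weight for each format (assumed values).
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

# Memory capacities in GB as quoted in this article.
DEVICES_GB = {"Apple M3": 100.0, "NVIDIA RTX 5000 Ada": 32.0}


def weights_size_gb(params_billions: float, fmt: str) -> float:
    """Size of the weights alone in GB (1 GB = 10**9 bytes)."""
    return params_billions * BITS_PER_WEIGHT[fmt] / 8


def fits(params_billions: float, fmt: str, capacity_gb: float) -> bool:
    """True if the weights alone fit; real usage needs extra headroom."""
    return weights_size_gb(params_billions, fmt) <= capacity_gb


if __name__ == "__main__":
    for fmt in ("F16", "Q8_0", "Q4_0"):
        size = weights_size_gb(70, fmt)
        verdict = {name: fits(70, fmt, cap) for name, cap in DEVICES_GB.items()}
        print(f"Llama 3 70B {fmt}: {size:.1f} GB -> {verdict}")
```

Under these assumptions, a 70B model in F16 (about 140GB) fits on neither device, while the 8-bit and 4-bit versions fit only within the larger memory pool.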

Power Consumption

Power draw differs considerably between the two. The Apple M3 is a system-on-chip used in laptops and is generally far more power-efficient than a dedicated workstation GPU such as the RTX 5000 Ada, which also needs a host system around it. If you're concerned about energy consumption, the M3 has the advantage.

Conclusion: Choosing the Right Tool for the Job

Both the Apple M3 and the NVIDIA RTX 5000 Ada offer distinct advantages for running LLMs locally. The Apple M3 delivers usable speeds on Llama 2 7B with low power draw, while the NVIDIA RTX 5000 Ada posts far higher processing and generation throughput on Llama 3 8B.

Ultimately, the best choice depends on your specific needs and use cases.

- Apple M3: A reasonable fit for smaller quantized models like Llama 2 7B when power efficiency and memory capacity matter more than peak throughput.

- NVIDIA RTX 5000 Ada: For demanding generation tasks, research, and development with models that fit in its 32GB of memory, the Ada architecture provides superior throughput.

Important Note: The available data is limited, and further benchmark studies are necessary to provide a more comprehensive comparison.

FAQ

What are LLMs?

LLMs are complex artificial intelligence models trained on massive datasets of text and code. They can perform various natural language tasks like generating creative content, translating languages, summarizing text, and answering questions.

How do I choose the right LLM model for my needs?

The choice of LLM model depends on the specific task you want to perform. If you need a model for simple tasks like text summarization or basic translation, a smaller model like Llama 2 7B might suffice. For more complex tasks like generating creative content or code, a larger model like Llama 3 70B might be necessary.

Are there other options besides Apple M3 and NVIDIA RTX 5000 Ada?

Yes, several other devices can run LLMs locally, including GPUs from AMD, dedicated AI accelerators like Google's TPU, and even some high-end CPUs. This comparison focuses on the Apple M3 and the NVIDIA RTX 5000 Ada because of their availability and performance.

How do I run an LLM on my device?

Several open-source tools and libraries allow you to run LLMs locally. One popular option is llama.cpp, which provides a simple interface for running these models on various hardware platforms.
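If you want to collect processing and generation figures like the ones in this article on your own hardware, llama.cpp ships a benchmarking tool called `llama-bench`. The sketch below only assembles a command line for it; it assumes `llama-bench` is already built and on your PATH, and the model path is a hypothetical placeholder for a GGUF file you have downloaded.

```python
import shlex
from typing import List


def llama_bench_command(
    model_path: str,
    prompt_tokens: int = 512,
    gen_tokens: int = 128,
    gpu_layers: int = 99,
) -> List[str]:
    """Build an argument list for llama.cpp's llama-bench tool.

    -m   path to the GGUF model file
    -p   number of prompt tokens to process (processing speed)
    -n   number of tokens to generate (generation speed)
    -ngl number of layers to offload to the GPU
    """
    return [
        "llama-bench",
        "-m", model_path,
        "-p", str(prompt_tokens),
        "-n", str(gen_tokens),
        "-ngl", str(gpu_layers),
    ]


if __name__ == "__main__":
    # Hypothetical model path; replace it with a file that exists locally.
    cmd = llama_bench_command("models/llama-2-7b.Q4_0.gguf")
    print(shlex.join(cmd))
    # To actually run it: subprocess.run(cmd, check=True)
```

Building the command as a list rather than a single string avoids shell quoting problems if the model path contains spaces.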

Are there any other factors to consider when choosing hardware?

Besides performance and memory capacity, you might also consider factors like noise level, power consumption, and cost when selecting hardware for running LLMs locally.

Keywords

LLMs, Apple M3, NVIDIA RTX 5000 Ada, Tokens per second (TPS), Processing, Generation, Quantization, GPU, CPU, Llama 2, Llama 3, Benchmark, Performance, Local, Hardware, Memory, Inference, Development, Research