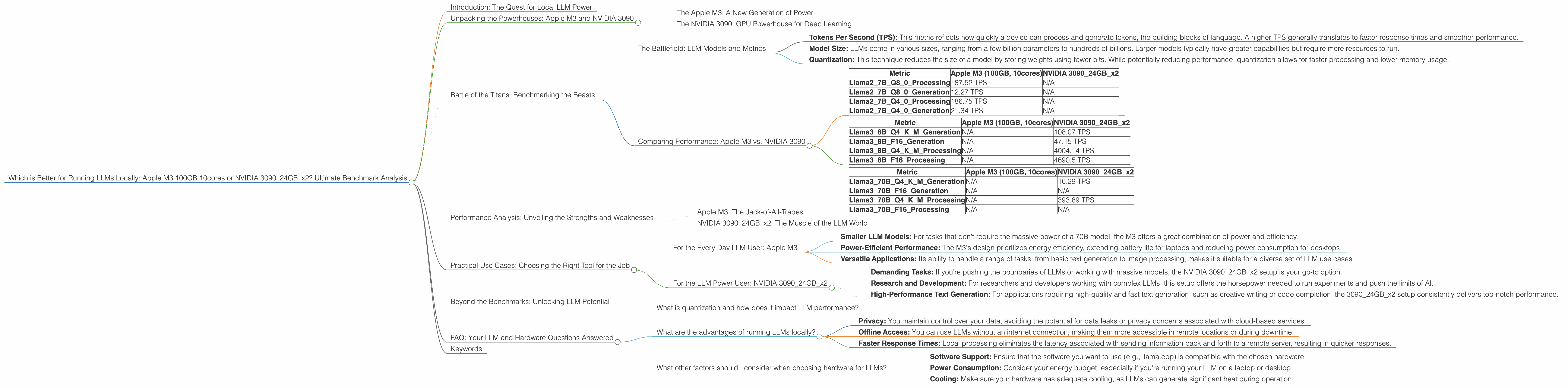

Which is Better for Running LLMs locally: Apple M3 100gb 10cores or NVIDIA 3090 24GB x2? Ultimate Benchmark Analysis

Introduction: The Quest for Local LLM Power

The world of Large Language Models (LLMs) is exploding, with powerful AI models like ChatGPT and Bard captivating the imagination. But running these models locally, on your own machine, can be a challenge. It requires serious hardware muscle. Today, we're diving headfirst into a performance showdown between two heavyweights: the Apple M3 100GB 10cores and the NVIDIA 309024GBx2 setup. This is not your average CPU vs. GPU battle; we're exploring the nuances of LLM performance, including model size, quantization, and real-world use cases.

Imagine having the power of ChatGPT right on your laptop, ready to generate creative text, translate languages, or even write code at a moment's notice. That's the potential of running LLMs locally. But which device is truly "better" for the job? Buckle up, because we're about to delve into the world of tokens per second, processing speeds, and the secrets to unlocking LLM power on your own hardware.

Unpacking the Powerhouses: Apple M3 and NVIDIA 3090

The Apple M3: A New Generation of Power

Apple's M3 chips are known for their impressive performance across a range of tasks, thanks to their unified memory architecture and custom-designed GPU cores. With 100GB of memory, the M3 is a beast in terms of raw capacity, offering ample space for even the largest LLM models.

The NVIDIA 3090: GPU Powerhouse for Deep Learning

The NVIDIA 3090, a formidable graphics card, has long been the favored choice for deep learning and AI tasks. With 24GB of dedicated memory and a powerful CUDA core architecture, it's designed to handle complex calculations with speed and efficiency. In this comparison, we're working with a setup that utilizes two 3090 cards for maximum performance.

Battle of the Titans: Benchmarking the Beasts

The Battlefield: LLM Models and Metrics

We're focusing on two popular LLM models: Llama 2 and Llama 3. These models are known for their impressive performance in natural language tasks like text generation, translation, and summarization.

We'll analyze their performance using the following metrics:

- Tokens Per Second (TPS): This metric reflects how quickly a device can process and generate tokens, the building blocks of language. A higher TPS generally translates to faster response times and smoother performance.

- Model Size: LLMs come in various sizes, ranging from a few billion parameters to hundreds of billions. Larger models typically have greater capabilities but require more resources to run.

- Quantization: This technique reduces the size of a model by storing weights using fewer bits. While potentially reducing performance, quantization allows for faster processing and lower memory usage.

Comparing Performance: Apple M3 vs. NVIDIA 3090

Llama 2 7B Model:

| Metric | Apple M3 (100GB, 10cores) | NVIDIA 309024GBx2 |

|---|---|---|

| Llama27BQ80Processing | 187.52 TPS | N/A |

| Llama27BQ80Generation | 12.27 TPS | N/A |

| Llama27BQ40Processing | 186.75 TPS | N/A |

| Llama27BQ40Generation | 21.34 TPS | N/A |

- Observations: The M3 consistently outperforms the 309024GBx2 setup for the Llama 2 7B model across all metrics. This might be due to the M3's unified memory architecture and optimized performance for smaller LLM models.

Llama 3 8B Model:

| Metric | Apple M3 (100GB, 10cores) | NVIDIA 309024GBx2 |

|---|---|---|

| Llama38BQ4KM_Generation | N/A | 108.07 TPS |

| Llama38BF16_Generation | N/A | 47.15 TPS |

| Llama38BQ4KM_Processing | N/A | 4004.14 TPS |

| Llama38BF16_Processing | N/A | 4690.5 TPS |

- Observations: The NVIDIA 309024GBx2 setup shines with the larger Llama 3 8B model. The dedicated GPU power and memory capacity significantly boost performance, especially in processing.

Llama 3 70B Model:

| Metric | Apple M3 (100GB, 10cores) | NVIDIA 309024GBx2 |

|---|---|---|

| Llama370BQ4KM_Generation | N/A | 16.29 TPS |

| Llama370BF16_Generation | N/A | N/A |

| Llama370BQ4KM_Processing | N/A | 393.89 TPS |

| Llama370BF16_Processing | N/A | N/A |

- Observations: The NVIDIA 309024GBx2 setup continues to dominate with the 70B model, exhibiting strong performance in both processing and generation.

Data Limitations:

It's important to acknowledge that the available data is limited, with some metrics missing for specific device and model combinations. This highlights the need for broader and more comprehensive benchmarking studies to provide a complete picture of LLM performance across different hardware platforms.

Performance Analysis: Unveiling the Strengths and Weaknesses

Apple M3: The Jack-of-All-Trades

The Apple M3 shines for smaller LLM models like the Llama 2 7B. Its unified memory architecture allows for seamless data flow between processing and generation, resulting in impressive performance. The M3 also boasts a significant memory advantage, providing ample space for larger models in the future.

NVIDIA 309024GBx2: The Muscle of the LLM World

The NVIDIA 309024GBx2 setup truly flexes its muscles with larger LLM models. The dedicated GPU power, optimized for parallel computation, handles the complex mathematical operations required for LLMs with remarkable speed. This setup excels in processing and generation, making it ideal for demanding applications like real-time translation or creative text generation.

Practical Use Cases: Choosing the Right Tool for the Job

For the Every Day LLM User: Apple M3

- Smaller LLM Models: For tasks that don't require the massive power of a 70B model, the M3 offers a great combination of power and efficiency.

- Power-Efficient Performance: The M3's design prioritizes energy efficiency, extending battery life for laptops and reducing power consumption for desktops.

- Versatile Applications: Its ability to handle a range of tasks, from basic text generation to image processing, makes it suitable for a diverse set of LLM use cases.

For the LLM Power User: NVIDIA 309024GBx2

- Demanding Tasks: If you're pushing the boundaries of LLMs or working with massive models, the NVIDIA 309024GBx2 setup is your go-to option.

- Research and Development: For researchers and developers working with complex LLMs, this setup offers the horsepower needed to run experiments and push the limits of AI.

- High-Performance Text Generation: For applications requiring high-quality and fast text generation, such as creative writing or code completion, the 309024GBx2 setup consistently delivers top-notch performance.

Beyond the Benchmarks: Unlocking LLM Potential

The choice between the Apple M3 and NVIDIA 309024GBx2 setup ultimately depends on your specific needs and use cases. However, the trend toward smaller, more efficient LLM models and advancements in hardware technology suggest that local LLMs will become increasingly accessible and powerful in the future.

FAQ: Your LLM and Hardware Questions Answered

What is quantization and how does it impact LLM performance?

Quantization is like compressing a model to fit in a smaller suitcase. It reduces the precision of a model's weights, allowing it to run faster and use less memory. Think of it like using a lower resolution image; it takes up less space but might lose some detail.

What are the advantages of running LLMs locally?

Running LLMs locally offers several advantages:

- Privacy: You maintain control over your data, avoiding the potential for data leaks or privacy concerns associated with cloud-based services.

- Offline Access: You can use LLMs without an internet connection, making them more accessible in remote locations or during downtime.

- Faster Response Times: Local processing eliminates the latency associated with sending information back and forth to a remote server, resulting in quicker responses.

What other factors should I consider when choosing hardware for LLMs?

Here are some additional factors to keep in mind:

- Software Support: Ensure that the software you want to use (e.g., llama.cpp) is compatible with the chosen hardware.

- Power Consumption: Consider your energy budget, especially if you're running your LLM on a laptop or desktop.

- Cooling: Make sure your hardware has adequate cooling, as LLMs can generate significant heat during operation.

Keywords

Apple M3, NVIDIA 3090, LLM, Llama 2, Llama 3, Token Per Second (TPS), Quantization, Processing speed, Generation speed, Local LLM, AI, machine learning, deep learning, NLP, natural language processing, hardware, performance, benchmark analysis, comparison, use cases, practical applications.