Which Is Better for Running LLMs Locally: Apple M2 Ultra (800 GB/s, 60-Core GPU) or NVIDIA L40S 48GB? Ultimate Benchmark Analysis

Introduction

Large language models (LLMs) are evolving rapidly, and running them locally is becoming increasingly popular. Local execution offers more control, better privacy, and potential cost savings compared to cloud-based solutions. But which device is best suited for it? This article compares two popular choices: Apple's M2 Ultra (the 60-core GPU configuration with 800 GB/s of memory bandwidth) and NVIDIA's L40S with 48 GB of memory. We will compare their throughput on several popular LLMs, analyze their strengths and weaknesses, and provide practical recommendations for your use cases.

Imagine you're building a chatbot for your website. You want it to be snappy and responsive, providing near-instant answers to user queries. Or maybe you're a researcher experimenting with different LLM architectures to push the boundaries of AI. Either way, choosing the right hardware is crucial to unlocking the full potential of LLMs.

Performance Analysis: A Head-to-Head Comparison

Let's see how the two devices stack up against each other. We'll look at their throughput across different LLMs and quantization levels (F16, Q8_0, Q4_0, Q4_K_M), which affect both memory usage and speed.

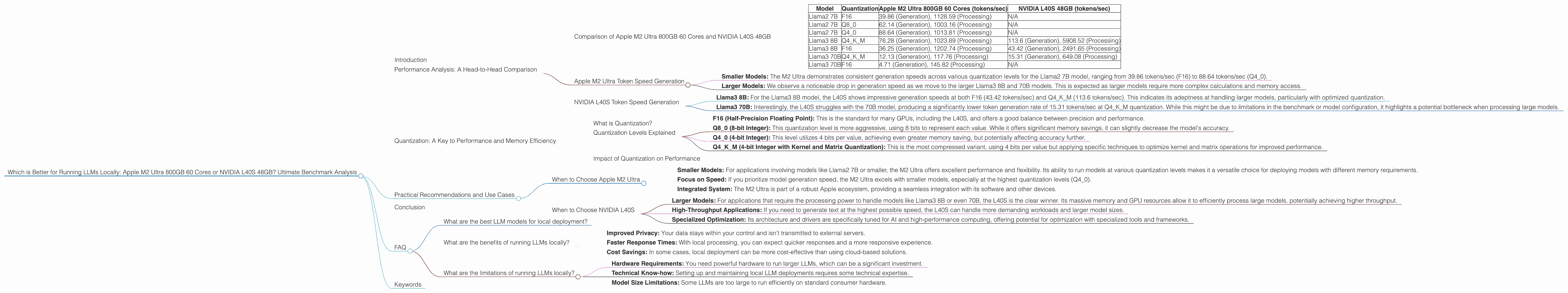

Comparison of Apple M2 Ultra (800 GB/s, 60-Core GPU) and NVIDIA L40S 48GB

To make the data easier to digest, the table below lists throughput in tokens per second (tokens/sec) for each model and quantization level. Generation is the rate at which new output tokens are produced; Processing is the rate at which the input prompt is consumed. Some combinations have no L40S result and are marked N/A.

| Model | Quantization | M2 Ultra Generation (tokens/sec) | M2 Ultra Processing (tokens/sec) | L40S Generation (tokens/sec) | L40S Processing (tokens/sec) |
|---|---|---|---|---|---|
| Llama2 7B | F16 | 39.86 | 1128.59 | N/A | N/A |
| Llama2 7B | Q8_0 | 62.14 | 1003.16 | N/A | N/A |
| Llama2 7B | Q4_0 | 88.64 | 1013.81 | N/A | N/A |
| Llama3 8B | Q4_K_M | 76.28 | 1023.89 | 113.6 | 5908.52 |
| Llama3 8B | F16 | 36.25 | 1202.74 | 43.42 | 2491.65 |
| Llama3 70B | Q4_K_M | 12.13 | 117.76 | 15.31 | 649.08 |
| Llama3 70B | F16 | 4.71 | 145.82 | N/A | N/A |

Observations & Insights:

- NVIDIA L40S: The L40S is faster in every configuration where both devices have results, and the gap is widest in prompt processing: about 5.8x on Llama3 8B Q4_K_M, 2.1x on Llama3 8B F16, and 5.5x on Llama3 70B Q4_K_M. This is likely due to its dedicated GPU architecture, which is optimized for highly parallel compute. Its 48 GB of GDDR6 memory is enough to hold the 4-bit 70B model.

- Apple M2 Ultra: While it doesn't match the L40S in raw throughput, the M2 Ultra has results for every configuration in the table, including Llama2 7B at F16, Q8_0, and Q4_0 and Llama3 70B at F16, none of which have L40S figures. Running a 70B model at F16 needs roughly 140 GB for the weights alone (70 billion parameters at 2 bytes each), which is possible here only because of the M2 Ultra's large unified memory pool.

- Generation Speed: The gap in generation is much smaller than in processing. The L40S leads by about 1.5x on Llama3 8B Q4_K_M (113.6 vs 76.28 tokens/sec), 1.2x on Llama3 8B F16 (43.42 vs 36.25), and 1.3x on Llama3 70B Q4_K_M (15.31 vs 12.13). No Llama2 7B comparison is possible because the L40S has no results for that model.

- Missing Data: The data does not contain L40S figures for Llama2 7B or for Llama3 70B at F16. The Llama2 7B gap most likely reflects which configurations were benchmarked, while the 70B F16 gap is consistent with the F16 weights being far larger than 48 GB.
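The ratios behind these observations can be reproduced directly from the table. The sketch below is only illustrative: the dictionary layout and the function name `l40s_speedups` are assumptions made for this example, while the numbers are copied from the table above.

```python
# Tokens/sec copied from the table above, limited to the configurations
# for which both devices report results.
# Each value: (m2_generation, m2_processing, l40s_generation, l40s_processing)
SHARED_RESULTS = {
    ("Llama3 8B", "Q4_K_M"): (76.28, 1023.89, 113.6, 5908.52),
    ("Llama3 8B", "F16"): (36.25, 1202.74, 43.42, 2491.65),
    ("Llama3 70B", "Q4_K_M"): (12.13, 117.76, 15.31, 649.08),
}


def l40s_speedups(results):
    """Return {config: (generation_ratio, processing_ratio)} as L40S / M2 Ultra."""
    ratios = {}
    for config, (m2_gen, m2_proc, l40s_gen, l40s_proc) in results.items():
        ratios[config] = (l40s_gen / m2_gen, l40s_proc / m2_proc)
    return ratios


if __name__ == "__main__":
    for (model, quant), (gen, proc) in l40s_speedups(SHARED_RESULTS).items():
        print(f"{model} {quant}: generation x{gen:.2f}, processing x{proc:.2f}")
```

A ratio above 1 means the L40S is faster for that configuration. Rows with N/A entries are left out on purpose, since no ratio can be computed for them.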

Apple M2 Ultra Token Speed Generation

Let's zoom in on the M2 Ultra's performance and explore how it handles different models and quantization levels.

- Smaller Models: On Llama2 7B, the M2 Ultra's generation speed rises steadily as precision drops, from 39.86 tokens/sec (F16) to 62.14 (Q8_0) and 88.64 (Q4_0), about 2.2x overall.

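- Larger Models: Llama3 8B performs close to Llama2 7B at the same precision (36.25 vs 39.86 tokens/sec at F16), but the 70B model is far slower: 12.13 tokens/sec at Q4_K_M and 4.71 at F16. This is expected, since generating each token requires reading all of the model's weights, and a 70B model has roughly nine times as many as an 8B one.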

NVIDIA L40S Token Speed Generation

Now, let's delve into the L40S's generation capabilities.

- Llama3 8B: The L40S reaches 43.42 tokens/sec at F16 and 113.6 tokens/sec at Q4_K_M, so 4-bit quantization makes generation about 2.6x faster on this model.

- Llama3 70B: Generation drops to 15.31 tokens/sec at Q4_K_M, about 7.4x slower than the 8B model at the same quantization. That is roughly in line with the increase in model size and is still ahead of the M2 Ultra's 12.13 tokens/sec, so it reflects the cost of a larger model rather than a device-specific bottleneck. No F16 result is available for the 70B model on the L40S.
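To relate these tokens/sec figures to what a user would experience, processing and generation speed can be combined into a rough per-request latency. The following is a minimal sketch under stated assumptions: the function name, the 512-token prompt, and the 256-token reply are hypothetical values chosen for illustration, and the model ignores startup cost, batching, and any slowdown at long context lengths. The throughput numbers are the Llama3 8B Q4_K_M rows from the table.

```python
def estimated_latency_seconds(prompt_tokens, output_tokens,
                              processing_tps, generation_tps):
    """Rough latency: time to consume the prompt plus time to generate the reply."""
    if processing_tps <= 0 or generation_tps <= 0:
        raise ValueError("throughput values must be positive")
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Llama3 8B Q4_K_M throughput (tokens/sec) from the comparison table.
M2_ULTRA = {"processing_tps": 1023.89, "generation_tps": 76.28}
L40S = {"processing_tps": 5908.52, "generation_tps": 113.6}

# Hypothetical request size, chosen only for illustration.
PROMPT_TOKENS = 512
OUTPUT_TOKENS = 256

if __name__ == "__main__":
    for name, device in (("M2 Ultra", M2_ULTRA), ("L40S", L40S)):
        seconds = estimated_latency_seconds(PROMPT_TOKENS, OUTPUT_TOKENS, **device)
        print(f"{name}: about {seconds:.2f} s per request")
```

With short prompts the generation term dominates, so the two devices end up closer together than the processing numbers alone would suggest. With long prompts the processing term grows and the L40S's advantage widens.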

Quantization: A Key to Performance and Memory Efficiency

What is Quantization?

Quantization is a technique used to reduce the memory footprint of LLMs, making them more efficient to run on devices with limited resources. Imagine compressing a high-definition video into a smaller file size without sacrificing too much visual quality. Quantization does something similar for LLMs, reducing the precision of numbers representing weights and activations in the model.

Think of quantization as putting numbers on a diet. Instead of using a full-fledged 32-bit number for each value, we can use smaller versions like 16-bit or 8-bit, sacrificing some precision but drastically decreasing the memory required to load and process the model.
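The idea of trading precision for size can be shown with a deliberately simplified round trip. This sketch assumes a single shared scale for all values and symmetric 8-bit integers. It is not the block-wise scheme used by the formats benchmarked in this article, and the function names and sample weights are made up for the example.

```python
def quantize_int8(values):
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [round(v / scale) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate floats from the integers and the scale."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    weights = [0.12, -0.5, 0.33, 0.9, -0.07]  # made-up example values
    q, scale = quantize_int8(weights)
    restored = dequantize(q, scale)
    for original, approx in zip(weights, restored):
        print(f"{original:+.4f} -> {approx:+.4f} (error {abs(original - approx):.4f})")
```

Each restored value differs from the original by at most half the scale factor, which is the precision given up in exchange for storing one byte per value instead of two or four.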

Quantization Levels Explained

- F16 (Half-Precision Floating Point): Each weight is stored as a 16-bit floating-point number. It is the highest-precision format in this comparison and serves as the unquantized baseline.

- Q8_0 (8-bit Integer): Each weight is stored as an 8-bit integer, with a shared scale factor per small block of weights. This roughly halves memory use relative to F16, usually with only a slight decrease in accuracy.

- Q4_0 (4-bit Integer): This level uses 4 bits per weight, cutting memory to a little over a quarter of F16, but potentially affecting accuracy further.

- Q4_K_M (4-bit k-quant, medium variant): A 4-bit format from the k-quant family that keeps some tensors at higher precision. It is slightly larger than Q4_0 but generally preserves accuracy better.
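These formats translate into very different memory footprints. The sketch below estimates the size of the weights alone; the bits-per-weight values are approximations assumed for illustration (block formats carry a small overhead for scale factors, and the Q4_K_M figure in particular varies by model), and the parameter counts are the nominal 7B, 8B, and 70B sizes rather than exact counts.

```python
# Approximate bits per weight, assumed for illustration only.
# Q8_0 and Q4_0 include a 16-bit scale per block of 32 weights;
# the Q4_K_M value is a rough average and varies by model.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5, "Q4_K_M": 4.85}


def weight_size_gb(params_billions, quant):
    """Estimate weight storage in GB (10^9 bytes).

    Ignores the KV cache, activations, and runtime overhead, so real
    memory use is higher than this figure.
    """
    return params_billions * BITS_PER_WEIGHT[quant] / 8


if __name__ == "__main__":
    for model, params in (("Llama2 7B", 7), ("Llama3 8B", 8), ("Llama3 70B", 70)):
        sizes = ", ".join(
            f"{quant}: {weight_size_gb(params, quant):.1f} GB"
            for quant in BITS_PER_WEIGHT
        )
        print(f"{model} -> {sizes}")
```

Under these assumptions a 70B model needs about 140 GB at F16 but only a little over 42 GB at Q4_K_M, which is consistent with the L40S having a 70B result at Q4_K_M and none at F16.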

Impact of Quantization on Performance

The chosen quantization level directly influences performance on both devices. In this data, lower-precision formats are not only smaller but also faster at generation: the M2 Ultra goes from 39.86 tokens/sec at F16 to 88.64 at Q4_0 on Llama2 7B, and the L40S goes from 43.42 at F16 to 113.6 at Q4_K_M on Llama3 8B. The real trade-off is therefore between memory footprint and speed on one side and accuracy on the other. Prompt processing behaves differently. On the M2 Ultra it stays in a narrow band of about 1,000 to 1,200 tokens/sec for the 7B and 8B models regardless of quantization, while on the L40S it is more than twice as fast at Q4_K_M as at F16 for Llama3 8B (5908.52 vs 2491.65 tokens/sec).
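The generation-speed gain from quantization can be expressed as a ratio against the F16 baseline for each device and model. This is a small sketch using the generation figures from the comparison table; the data layout and the function name `speedup_over_f16` are assumptions made for this example, and only device/model pairs with an F16 result are included.

```python
# Generation tokens/sec from the comparison table, grouped by (device, model).
GENERATION_TPS = {
    ("M2 Ultra", "Llama2 7B"): {"F16": 39.86, "Q8_0": 62.14, "Q4_0": 88.64},
    ("M2 Ultra", "Llama3 8B"): {"F16": 36.25, "Q4_K_M": 76.28},
    ("M2 Ultra", "Llama3 70B"): {"F16": 4.71, "Q4_K_M": 12.13},
    ("L40S", "Llama3 8B"): {"F16": 43.42, "Q4_K_M": 113.6},
}


def speedup_over_f16(results):
    """Return {(device, model): {quant: tokens/sec relative to F16}}."""
    speedups = {}
    for key, by_quant in results.items():
        baseline = by_quant["F16"]
        speedups[key] = {
            quant: tps / baseline
            for quant, tps in by_quant.items()
            if quant != "F16"
        }
    return speedups


if __name__ == "__main__":
    for (device, model), ratios in speedup_over_f16(GENERATION_TPS).items():
        for quant, ratio in ratios.items():
            print(f"{device}, {model}, {quant}: x{ratio:.2f} vs F16")
```

Every ratio comes out above 1, so in this data set moving to a lower-precision format always increases generation speed.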

Practical Recommendations and Use Cases

When to Choose Apple M2 Ultra

- Smaller Models: For models in the 7B to 8B range, the M2 Ultra generates between about 36 and 89 tokens/sec depending on quantization, which is fast enough for interactive use. It has results at every quantization level tested, so you can pick the precision that fits your memory and accuracy needs.

- Very Large Models at High Precision: The M2 Ultra is the only device in this comparison with a Llama3 70B F16 result (4.71 tokens/sec generation). If you need to run models whose weights exceed 48 GB, its unified memory is the deciding factor.

- Integrated System: The M2 Ultra is part of a robust Apple ecosystem, providing a seamless integration with its software and other devices.

When to Choose NVIDIA L40S

- Quantized Models up to 70B: For Llama3 8B at either precision and for Llama3 70B at Q4_K_M, the L40S is faster than the M2 Ultra in both generation and processing.

- High-Throughput Applications: If prompt processing dominates your workload, for example with long prompts or large input documents, the L40S's 2x to 5.8x processing advantage matters far more than its 1.2x to 1.5x generation lead.

- Specialized Optimization: Its architecture and drivers are specifically tuned for AI and high-performance computing, offering potential for optimization with specialized tools and frameworks.

Conclusion

Choosing the right device for running LLMs locally affects both performance and cost. In these benchmarks, the NVIDIA L40S 48GB is faster wherever both devices have results, modestly in generation (about 1.2x to 1.5x) and substantially in prompt processing (about 2x to 5.8x). The Apple M2 Ultra (800 GB/s, 60-core GPU) is slower but can run models that do not fit in 48 GB, such as Llama3 70B at F16. Ultimately, the best choice depends on your model size, your quantization level, and whether your workload is dominated by prompt processing or by generation.

FAQ

What are the best LLM models for local deployment?

The choice of LLM depends on your use case. For resource-constrained applications, 7B to 8B models such as Llama2 7B or Llama3 8B are good choices, especially when quantized to 4 bits. If you need to handle more complex tasks, a larger model like Llama3 70B may be more suitable, though it requires considerably more memory and generates tokens several times more slowly.

What are the benefits of running LLMs locally?

Running LLMs locally offers several advantages:

- Improved Privacy: Your data stays within your control and isn't transmitted to external servers.

- Lower Latency: Local processing avoids network round trips, which can make responses feel quicker, provided your hardware is fast enough for the model you run.

- Cost Savings: In some cases, local deployment can be more cost-effective than using cloud-based solutions.

What are the limitations of running LLMs locally?

While running LLMs locally is becoming more accessible, it's not without its challenges:

- Hardware Requirements: You need powerful hardware to run larger LLMs, which can be a significant investment.

- Technical Know-how: Setting up and maintaining local LLM deployments requires some technical expertise.

- Model Size Limitations: Some LLMs are too large to run efficiently on standard consumer hardware.

Keywords

Apple M2 Ultra, NVIDIA L40S, LLM, Large Language Model, Llama2, Llama3, Token Speed, Quantization, F16, Q8_0, Q4_0, Q4_K_M, Local Deployment, Performance Benchmark, Processing, Generation, GPU, CPU, Memory, AI, Machine Learning, Deep Learning, Natural Language Processing, NLP.