Which Is Better for Running LLMs Locally: Apple M2 Ultra (800 GB/s, 60-Core GPU) or NVIDIA A100 SXM 80GB? Ultimate Benchmark Analysis

Introduction

The world of large language models (LLMs) is exploding, and running these models locally is becoming increasingly popular. While cloud-based solutions are still the dominant force, a dedicated LLM setup on your own machine offers privacy, lower latency, and more control over your data. But with the mind-boggling variety of hardware available, choosing the right setup for your LLM needs can feel like navigating a maze.

This article pits two powerhouses against each other: the Apple M2 Ultra (800 GB/s memory bandwidth, 60-core GPU) and the NVIDIA A100 SXM 80GB, both known for their computational prowess. We'll dive deep into their performance, comparing their strengths and weaknesses when running popular models like Llama 2 and Llama 3. This analysis will help you determine which powerhouse is best suited for your LLM adventures.

Performance Analysis of M2 Ultra & A100 SXM 80GB for LLMs

To get a clear picture, we'll analyze each device's performance at the most common precision and quantization levels for Llama 2 and Llama 3:

- F16: 16-bit (half-precision) floating point. This is the unquantized baseline here, with the highest accuracy of the formats tested and the largest model size.

- Q8_0: An 8-bit quantization format in which each block of 32 weights shares one scale. It roughly halves model size compared with F16, with little accuracy loss and typically faster generation.

- Q4_K_M: A 4-bit "k-quant" format from llama.cpp that shrinks the model to roughly a third of its F16 size, dramatically reducing its memory footprint.

- Q4_0: A simpler 4-bit format, used in the Llama 2 7B results below, in which each block of 32 weights shares one scale.
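To make the size differences concrete, here is a rough calculator for how much memory the weights need in each format. It is a sketch under stated assumptions: the Q8_0 and Q4_0 figures follow from their 32-weight blocks with one 16-bit scale each, while the 4.85 bits per weight assumed for Q4_K_M and the 8.03 billion parameters assumed for Llama 3 8B are approximations, not measured values.

```python
# Rough weight-storage cost per format. Q8_0 and Q4_0 follow llama.cpp's
# block layout (32 weights share one 16-bit scale); the Q4_K_M value is an
# assumed average because that format mixes 4-bit and 6-bit tensors.

BLOCK = 32        # weights per block in Q8_0 / Q4_0
SCALE_BITS = 16   # one fp16 scale per block


def block_bpw(quant_bits: int) -> float:
    """Bits per weight for a format with one fp16 scale per 32-weight block."""
    return (BLOCK * quant_bits + SCALE_BITS) / BLOCK


BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q8_0": block_bpw(8),   # 8.5 bits per weight
    "Q4_0": block_bpw(4),   # 4.5 bits per weight
    "Q4_K_M": 4.85,         # assumption: approximate average for the mixed format
}


def weight_gb(n_params: float, fmt: str) -> float:
    """Approximate size of the weights in GB (1 GB = 1e9 bytes)."""
    return n_params * BITS_PER_WEIGHT[fmt] / 8 / 1e9


if __name__ == "__main__":
    n = 8.03e9  # assumption: approximate parameter count of Llama 3 8B
    for fmt, bpw in BITS_PER_WEIGHT.items():
        print(f"{fmt:7s} {bpw:5.2f} bits/weight  ~{weight_gb(n, fmt):5.2f} GB")
```

Under these assumptions, Llama 3 8B needs roughly 16 GB at F16, about 8.5 GB at Q8_0, and under 5 GB at either 4-bit format, before counting the KV cache.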

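Note: Data on the A100 SXM 80GB is limited. It covers only Llama 3 models, reports generation speed only (no prompt-processing figures), and includes no F16 result for Llama 3 70B. We will highlight missing data points throughout the analysis.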

Apple M2 Ultra (800 GB/s, 60-Core GPU): Token Generation Speed Showdown

The M2 Ultra is a beastly chip: up to 192GB of unified memory shared by the CPU and GPU, 800 GB/s of memory bandwidth (think a superhighway for data), a 24-core CPU, and, in the configuration tested here, a 60-core GPU, all well suited to demanding tasks like LLM inference. Let's see how it stacks up against the A100 SXM 80GB.

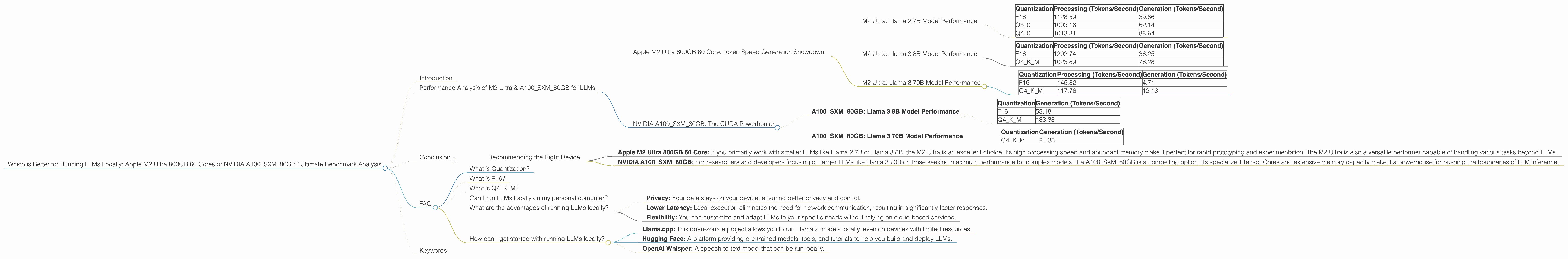

M2 Ultra: Llama 2 7B Model Performance

| Quantization | Prompt Processing (tokens/s) | Generation (tokens/s) |
|---|---|---|
| F16 | 1128.59 | 39.86 |
| Q8_0 | 1003.16 | 62.14 |
| Q4_0 | 1013.81 | 88.64 |

Key Observations:

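- Prompt Processing: The M2 Ultra processes prompts at over 1,000 tokens/s in every format, with F16 the fastest (1128.59 tokens/s). Prompt processing handles many tokens at once and is mostly compute-bound, so it draws on the 60-core GPU rather than benefiting from smaller weights; the quantized formats are slightly slower here, plausibly because their weights must be dequantized during the matrix multiplications.

- Generation Speed: Token generation is a different story. Producing each new token requires reading essentially all of the model's weights from memory, so it is limited mainly by memory bandwidth. For comparison, the M2 Ultra trails the A100 SXM 80GB by roughly 1.5x to 1.75x on Llama 3 8B and by about 2x on Llama 3 70B (see the sections below).

- Quantization: Q4_0 delivers the fastest generation on the M2 Ultra, 88.64 tokens/s versus 39.86 tokens/s for F16 (about 2.2x), because far fewer bytes must be read from memory for each token.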
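Prompt processing handles many tokens per pass and tends to be compute-bound, while generation tends to be limited by memory bandwidth; the table itself offers a rough check of that split. This short sketch recomputes each format's speedup over F16 and compares it with how many fewer bytes per weight the format stores (assuming 8.5 and 4.5 bits per weight for Q8_0 and Q4_0).

```python
# M2 Ultra results for Llama 2 7B, copied from the table above:
# format -> (prompt processing tokens/s, generation tokens/s)
RESULTS = {
    "F16": (1128.59, 39.86),
    "Q8_0": (1003.16, 62.14),
    "Q4_0": (1013.81, 88.64),
}
# Assumed bits per weight (32-weight blocks with one fp16 scale for Q8_0/Q4_0).
BPW = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def relative_to_f16(fmt: str) -> dict:
    """Speedups over F16, plus the reduction in bytes stored per weight."""
    pp, tg = RESULTS[fmt]
    pp_f16, tg_f16 = RESULTS["F16"]
    return {
        "pp_speedup": pp / pp_f16,
        "tg_speedup": tg / tg_f16,
        # if generation were purely bandwidth-bound, tg_speedup would approach this
        "bytes_ratio": BPW["F16"] / BPW[fmt],
    }


if __name__ == "__main__":
    for fmt in ("Q8_0", "Q4_0"):
        r = relative_to_f16(fmt)
        print(f"{fmt}: prompt x{r['pp_speedup']:.2f}, "
              f"generation x{r['tg_speedup']:.2f}, bytes / {r['bytes_ratio']:.2f}")
```

Prompt processing gets slightly slower with quantization, while generation gets about 1.6x (Q8_0) and 2.2x (Q4_0) faster. Both generation speedups fall short of the byte savings (about 1.9x and 3.6x), a hint that bandwidth is the main limit but not the only one.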

M2 Ultra: Llama 3 8B Model Performance

| Quantization | Prompt Processing (tokens/s) | Generation (tokens/s) |
|---|---|---|
| F16 | 1202.74 | 36.25 |
| Q4_K_M | 1023.89 | 76.28 |

Key Observations:

- F16 vs. Q4_K_M: F16 gives the M2 Ultra its best prompt-processing throughput on Llama 3 8B, but Q4_K_M roughly doubles generation speed (76.28 vs. 36.25 tokens/s), highlighting how effective Q4_K_M is for interactive inference on this model.

- Comparison with A100 SXM 80GB: The M2 Ultra's generation speed for Llama 3 8B is noticeably lower than the A100's: 36.25 vs. 53.18 tokens/s at F16 and 76.28 vs. 133.38 tokens/s at Q4_K_M. Since single-stream generation is bandwidth-bound, the most likely reason is memory bandwidth (about 2 TB/s for the A100 versus 800 GB/s for the M2 Ultra). The A100's Tensor Cores, built for matrix multiplication, matter most for compute-heavy work such as prompt processing and batched inference.
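A quick way to sanity-check the bandwidth explanation is to compare the observed A100-to-M2-Ultra speedups (using the A100 figures from its own section) with the ratio of the two chips' peak memory bandwidths. The sketch below assumes published peaks of 800 GB/s and 2,039 GB/s; it is a back-of-the-envelope check, not a performance model.

```python
# Llama 3 8B generation speed in tokens/s, copied from this article's tables.
M2_ULTRA = {"F16": 36.25, "Q4_K_M": 76.28}
A100_SXM_80GB = {"F16": 53.18, "Q4_K_M": 133.38}

# Assumed published peak memory bandwidth in GB/s.
BANDWIDTH_GB_S = {"M2 Ultra": 800.0, "A100 SXM 80GB": 2039.0}

bandwidth_ratio = BANDWIDTH_GB_S["A100 SXM 80GB"] / BANDWIDTH_GB_S["M2 Ultra"]


def a100_speedup(fmt: str) -> float:
    """How many times faster the A100 generates tokens than the M2 Ultra."""
    return A100_SXM_80GB[fmt] / M2_ULTRA[fmt]


if __name__ == "__main__":
    print(f"peak bandwidth ratio: {bandwidth_ratio:.2f}x")
    for fmt in M2_ULTRA:
        print(f"{fmt}: observed A100 speedup {a100_speedup(fmt):.2f}x")
```

The observed speedups (about 1.47x at F16 and 1.75x at Q4_K_M) sit below the roughly 2.55x bandwidth ratio, which suggests bandwidth explains much, but not all, of the gap; how efficiently each software backend uses its hardware likely matters too.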

M2 Ultra: Llama 3 70B Model Performance

| Quantization | Prompt Processing (tokens/s) | Generation (tokens/s) |
|---|---|---|
| F16 | 145.82 | 4.71 |
| Q4_K_M | 117.76 | 12.13 |

Key Observations:

- Scaling Up: Performance drops sharply on the much larger Llama 3 70B. Prompt processing falls about eightfold (1202.74 to 145.82 tokens/s at F16), in line with the roughly ninefold increase in parameters. At F16 the weights take up roughly 141 GB, and because every generated token has to stream them from memory, 800 GB/s caps generation at under 6 tokens/s; the measured 4.71 tokens/s is not far below that ceiling. Q4_K_M shrinks the weights to a little over 40 GB, which lifts generation to 12.13 tokens/s.

- A100 Advantage: The A100 SXM 80GB generates about twice as fast as the M2 Ultra on Llama 3 70B with Q4_K_M (24.33 vs. 12.13 tokens/s), consistent with its roughly 2.5x higher memory bandwidth.
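Because generating each token requires streaming essentially all of the weights from memory, one can estimate an upper bound: tokens per second cannot exceed bandwidth divided by weight size. The sketch assumes about 70.6 billion parameters for Llama 3 70B and an average of 4.85 bits per weight for Q4_K_M, and it ignores KV-cache reads and compute time, so real results should land below the bound.

```python
# Bandwidth ceiling for single-stream generation:
#   tokens/s <= memory bandwidth / bytes of weights read per token.
# Assumptions: ~70.6e9 parameters for Llama 3 70B, ~4.85 bits/weight for Q4_K_M;
# KV-cache reads and compute time are ignored, so this is an upper bound.


def generation_ceiling(bandwidth_gb_s: float, n_params: float, bits_per_weight: float) -> float:
    """Maximum tokens/s if each token must read every weight exactly once."""
    weight_gb = n_params * bits_per_weight / 8 / 1e9
    return bandwidth_gb_s / weight_gb


M2_ULTRA_BW_GB_S = 800.0
N_PARAMS = 70.6e9
# format -> (assumed bits per weight, measured generation tokens/s from the table)
MEASURED = {"F16": (16.0, 4.71), "Q4_K_M": (4.85, 12.13)}

if __name__ == "__main__":
    for fmt, (bpw, measured) in MEASURED.items():
        ceiling = generation_ceiling(M2_ULTRA_BW_GB_S, N_PARAMS, bpw)
        print(f"{fmt}: ceiling ~{ceiling:.1f} tok/s, measured {measured} "
              f"({measured / ceiling:.0%} of ceiling)")
```

With these assumptions the ceilings come out near 5.7 tokens/s for F16 and 18.7 tokens/s for Q4_K_M, so the measured 4.71 and 12.13 tokens/s reach roughly 83% and 65% of them.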

NVIDIA A100 SXM 80GB: The CUDA Powerhouse

The NVIDIA A100 SXM 80GB is a data-center GPU designed for high-performance computing, with 80GB of HBM2e memory and about 2 TB/s (2,039 GB/s) of memory bandwidth. It is renowned for handling complex computational tasks at high speed thanks to its dedicated Tensor Cores, which accelerate the matrix operations at the heart of LLM inference.

Let's analyze its performance in detail, comparing it to the M2 Ultra where data allows.

A100 SXM 80GB: Llama 3 8B Model Performance

| Quantization | Generation (tokens/s) |
|---|---|
| F16 | 53.18 |
| Q4_K_M | 133.38 |

Key Observations:

- Quantization Gains: The A100 SXM 80GB performs exceptionally well with Q4_K_M, generating 133.38 tokens/s, about 2.5x its F16 speed (53.18 tokens/s). Shrinking the weights cuts the bytes that must be read for every token, which is why the gain is so large.

- Higher Generation Speed: At F16 the A100 generates 53.18 tokens/s versus 36.25 tokens/s on the M2 Ultra, and at Q4_K_M 133.38 versus 76.28 tokens/s. Note, however, that the A100 at F16 is still slower than the M2 Ultra at Q4_K_M, so on this model the choice of quantization matters as much as the choice of hardware.

A100 SXM 80GB: Llama 3 70B Model Performance

| Quantization | Generation (tokens/s) |
|---|---|
| Q4_K_M | 24.33 |

Key Observations:

- Larger Model Performance: The A100 SXM 80GB reaches 24.33 tokens/s on Llama 3 70B with Q4_K_M, about double the M2 Ultra's 12.13 tokens/s. No F16 figure is available, most likely because the F16 weights (roughly 141 GB) do not fit in the A100's 80GB of memory.

- Efficiency in Large Models: The A100's strong 70B result most likely comes from its high memory bandwidth, with its Tensor Cores helping wherever computation dominates. Memory capacity, by contrast, favors the M2 Ultra: a single A100's 80GB holds the 70B model only in quantized form, while the M2 Ultra's unified memory (up to 192GB) can also hold it at F16.
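Memory capacity decides whether a model runs on a device at all. This sketch estimates the weight size of Llama 3 70B and checks it against each device's capacity. The parameter count (about 70.6 billion), the 4.85 bits per weight for Q4_K_M, and the 192GB M2 Ultra configuration are assumptions, and the check ignores the KV cache and any memory reserved by the system, so it is optimistic.

```python
# Rough check of whether a model's weights fit in device memory.
# Assumptions: ~70.6e9 parameters for Llama 3 70B, ~4.85 bits/weight for
# Q4_K_M, a 192 GB M2 Ultra, and the full capacity being usable (the KV cache,
# activations, and OS reservations are ignored, so this is optimistic).

CAPACITY_GB = {"A100 SXM 80GB": 80.0, "M2 Ultra (192GB)": 192.0}
BPW = {"F16": 16.0, "Q4_K_M": 4.85}


def weights_gb(n_params: float, fmt: str) -> float:
    """Approximate weight size in GB (1 GB = 1e9 bytes)."""
    return n_params * BPW[fmt] / 8 / 1e9


def fits(device: str, n_params: float, fmt: str) -> bool:
    """True if the estimated weights are no larger than the device's memory."""
    return weights_gb(n_params, fmt) <= CAPACITY_GB[device]


if __name__ == "__main__":
    n = 70.6e9
    for device in CAPACITY_GB:
        for fmt in BPW:
            verdict = "fits" if fits(device, n, fmt) else "does not fit"
            print(f"{device:17s} {fmt:7s} ~{weights_gb(n, fmt):6.1f} GB: {verdict}")
```

The F16 weights (about 141 GB) overflow a single A100's 80GB but fit in a 192GB M2 Ultra, while the roughly 43 GB Q4_K_M version fits on both.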

Conclusion

Both the Apple M2 Ultra (800 GB/s, 60-core GPU) and NVIDIA A100 SXM 80GB are powerful contenders for running LLMs locally. The M2 Ultra offers strong prompt-processing throughput (over 1,000 tokens/s on the 7B and 8B models, though no comparable A100 figures are available) and a very large pool of unified memory, which lets it run models that do not fit on a single A100. However, the A100 SXM 80GB leads on generation speed, by roughly 1.5x to 2x across the results above, especially with larger models and Q4_K_M quantization. This is most likely due to its much higher memory bandwidth, with its dedicated Tensor Cores adding headroom for compute-heavy workloads.

Recommending the Right Device

- Apple M2 Ultra (800 GB/s, 60-core GPU): If you primarily work with smaller LLMs like Llama 2 7B or Llama 3 8B, the M2 Ultra is an excellent choice. Its fast prompt processing and abundant memory make it well suited to rapid prototyping and experimentation, and its large unified memory can hold models (such as Llama 3 70B at F16) that exceed a single A100's 80GB. The M2 Ultra is also a versatile machine capable of handling many tasks beyond LLMs.

- NVIDIA A100 SXM 80GB: For researchers and developers focusing on larger LLMs like Llama 3 70B, or those seeking maximum generation speed, the A100 SXM 80GB is a compelling option. Its high memory bandwidth and specialized Tensor Cores make it a powerhouse for pushing the boundaries of LLM inference, as long as the model (quantized if necessary) fits in its 80GB.

FAQ

What is Quantization?

Quantization is a technique for reducing the size and memory footprint of LLMs while maintaining reasonable accuracy. Think of it like compressing a high-resolution image into a smaller file without sacrificing too much detail. By using fewer bits to represent each weight, LLMs need less memory and, because token generation is limited by how fast weights can be read, often run faster, especially on devices with limited memory like personal computers.
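To show what "using fewer bits" means in practice, here is a simplified sketch of 8-bit block quantization in the spirit of llama.cpp's Q8_0: each block of 32 weights is scaled by its largest absolute value and rounded to integers between -127 and 127. It is an illustration, not llama.cpp's actual code; for instance, the real format stores each scale as a 16-bit float.

```python
# Simplified sketch of 8-bit block quantization in the spirit of llama.cpp's
# Q8_0: each block of 32 weights keeps one scale plus 32 signed 8-bit integers.
# (The real format stores the scale as fp16; here it stays a Python float.)
import random

BLOCK_SIZE = 32


def quantize_block(block):
    """Map a block of floats to (scale, ints) with ints in [-127, 127]."""
    amax = max(abs(x) for x in block)
    scale = amax / 127 if amax > 0 else 1.0  # avoid dividing by zero on all-zero blocks
    ints = [round(x / scale) for x in block]
    return scale, ints


def dequantize_block(scale, ints):
    """Recover approximate floats from the stored scale and integers."""
    return [q * scale for q in ints]


def roundtrip(weights):
    """Quantize and dequantize a weight list block by block."""
    out = []
    for start in range(0, len(weights), BLOCK_SIZE):
        scale, ints = quantize_block(weights[start:start + BLOCK_SIZE])
        out.extend(dequantize_block(scale, ints))
    return out


if __name__ == "__main__":
    random.seed(0)
    w = [random.gauss(0.0, 0.02) for _ in range(64)]  # toy "weights"
    w_hat = roundtrip(w)
    print("max abs error:", max(abs(a - b) for a, b in zip(w, w_hat)))
```

Each restored weight is off by at most half a quantization step (the block's scale divided by two), which is why 8-bit quantization usually costs very little accuracy.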

What is F16?

F16 (16-bit floating point, or half precision) stores each weight using 16 bits. Strictly speaking it is not a quantization level but the unquantized baseline for these models, giving the best accuracy at the largest size. It is half the size of 32-bit floating point (F32), but far less compact than formats like Q8_0 or Q4_K_M.
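Python's standard `struct` module can store a number in IEEE 754 half precision (the `e` format code), which makes the precision trade-off of F16 easy to see. This is only an illustration of the number format, not of how model files are written.

```python
import struct


def to_f16_and_back(x: float) -> float:
    """Store x as an IEEE 754 half-precision (16-bit) float, then read it back."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


if __name__ == "__main__":
    print("bytes per F16 value:", len(struct.pack("<e", 0.1)))  # 2
    for x in (0.1, 1 / 3, 1234.567):
        print(f"{x!r} -> {to_f16_and_back(x)!r}")
```

Each value takes 2 bytes. For example, 0.1 comes back as 0.0999755859375 and 1234.567 as 1235.0: F16 keeps only about three significant decimal digits, which is usually enough for model weights.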

What is Q4_K_M?

Q4_K_M is a 4-bit quantization format from llama.cpp's family of "k-quants". Weights are split into small blocks, each storing 4-bit values together with its own scale and minimum, which sharply reduces the model's size and memory demand and makes it well suited to devices with limited resources. The "K" refers to the k-quant family, and the "M" denotes the medium-size variant, which stores some of the more sensitive tensors at higher precision than the small ("S") variant does.
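The block-wise idea behind k-quants can be sketched in a few lines: each block of 32 weights stores a scale and a minimum, and every weight becomes a 4-bit code from 0 to 15, so two weights pack into one byte. This is a deliberately simplified illustration and not the real Q4_K_M layout, which groups blocks into 256-weight super-blocks, quantizes the scales and minimums themselves, and keeps some tensors at higher precision.

```python
# Simplified sketch of 4-bit block quantization with a per-block scale and
# minimum, the basic idea behind llama.cpp's k-quants. It is NOT the real
# Q4_K_M layout: llama.cpp groups blocks into 256-weight super-blocks, quantizes
# the scales and minimums themselves, and Q4_K_M keeps some tensors at 6 bits.

BLOCK_SIZE = 32
MAX_CODE = 15  # 4 bits -> integer codes 0..15


def quantize_block_4bit(block):
    """Return (scale, minimum, codes) with each code in 0..15."""
    lo, hi = min(block), max(block)
    scale = (hi - lo) / MAX_CODE if hi > lo else 1.0
    codes = [min(MAX_CODE, max(0, round((x - lo) / scale))) for x in block]
    return scale, lo, codes


def dequantize_block_4bit(scale, lo, codes):
    """Reconstruct approximate weights as minimum + code * scale."""
    return [lo + c * scale for c in codes]


def pack_nibbles(codes):
    """Pack two 4-bit codes into each byte (assumes an even number of codes)."""
    return bytes(codes[i] | (codes[i + 1] << 4) for i in range(0, len(codes), 2))


if __name__ == "__main__":
    block = [((i * 7) % 32 - 16) / 100 for i in range(BLOCK_SIZE)]  # toy weights
    scale, lo, codes = quantize_block_4bit(block)
    restored = dequantize_block_4bit(scale, lo, codes)
    print("packed bytes:", len(pack_nibbles(codes)), "for", len(block), "weights")
    print("max abs error:", max(abs(a - b) for a, b in zip(block, restored)))
```

The 32 codes pack into 16 bytes, versus 64 bytes for the same weights in F16; the per-block scale and minimum add a little on top, which is why 4-bit formats end up slightly above 4 bits per weight.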

Can I run LLMs locally on my personal computer?

Yes! Running LLMs locally is becoming increasingly accessible. While high-end hardware like the M2 Ultra or A100 SXM 80GB provides superior performance, you can still achieve reasonable results with a consumer-grade GPU, especially with smaller, quantized models.

What are the advantages of running LLMs locally?

Running LLMs locally offers several benefits:

- Privacy: Your data stays on your device, ensuring better privacy and control.

- Lower Latency: Local execution removes the network round trip, which can make responses noticeably faster, although overall speed still depends on your hardware.

- Flexibility: You can customize and adapt LLMs to your specific needs without relying on cloud-based services.

How can I get started with running LLMs locally?

There are several resources available to help you get started:

- llama.cpp: This open-source project lets you run Llama 2, Llama 3, and many other models locally, even on devices with limited resources, using quantized formats such as Q8_0 and Q4_K_M.

- Hugging Face: A platform providing pre-trained models, tools, and tutorials to help you build and deploy LLMs.

- OpenAI Whisper: A speech-to-text model that can be run locally.
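Once one of these tools is running, you can measure the two throughput figures used in this article's tables yourself (llama.cpp also ships a `llama-bench` tool that reports them directly). The sketch below times any streaming generator; `stream` is a hypothetical callable you would wire to your own runtime, and treating time-to-first-token as prompt processing is an approximation.

```python
import time
from typing import Callable, Iterable


def measure_throughput(prompt_tokens: int, stream: Callable[[], Iterable[str]]) -> dict:
    """Approximate prompt-processing and generation speed in tokens/s.

    `stream` is a hypothetical zero-argument callable that processes the prompt
    and then yields generated tokens one at a time. Time to the first token is
    counted as prompt processing, which slightly overstates it because it also
    includes producing that first token.
    """
    start = time.perf_counter()
    first_token_at = None
    generated = 0
    for _ in stream():
        if first_token_at is None:
            first_token_at = time.perf_counter()
        generated += 1
    end = time.perf_counter()
    if first_token_at is None:
        raise ValueError("stream produced no tokens")

    prefill_s = first_token_at - start
    decode_s = end - first_token_at  # covers tokens 2..N
    return {
        "prompt_tokens_per_s": prompt_tokens / prefill_s if prefill_s > 0 else float("inf"),
        "gen_tokens_per_s": (generated - 1) / decode_s if decode_s > 0 else float("inf"),
        "generated": generated,
    }


if __name__ == "__main__":
    def fake_stream():
        time.sleep(0.05)        # stand-in for processing the prompt
        for i in range(20):
            time.sleep(0.01)    # stand-in for ~10 ms per generated token
            yield f"tok{i}"

    print(measure_throughput(512, fake_stream))
```

For example, calling `measure_throughput(512, my_stream)` with a hypothetical `my_stream` wrapped around your runtime returns prompt-processing and generation rates for a 512-token prompt.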

Keywords

LLMs, large language models, Apple M2 Ultra, NVIDIA A100 SXM 80GB, Llama 2, Llama 3, token generation, processing speed, inference, quantization, F16, Q8_0, Q4_K_M, Tensor Cores, local execution, memory bandwidth, GPU, CPU, performance, benchmark analysis, deep learning, artificial intelligence.