Which Is Better for Running LLMs Locally: Apple M2 Pro (200 GB/s, 16 GPU Cores) or Apple M3 Max (400 GB/s, 40 GPU Cores)? Ultimate Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is booming. These powerful AI models are changing the way we interact with technology, making it possible to generate text, translate languages, write creative content, and answer questions informatively. But one question remains: how do you run these models locally on your own machine?

With the rise of powerful Apple silicon chips like the M2 Pro and the M3 Max, local LLM execution is no longer a distant dream. In this comprehensive analysis, we'll put these two chips to the test, comparing their performance in handling various LLM models. Get ready to dive deep into the world of benchmarks, token speeds, and the ultimate answer to the question: which chip reigns supreme for local LLM deployment?

Benchmarking the Titans: M2 Pro vs M3 Max

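For this epic showdown, we'll be pitting the Apple M2 Pro with 200 GB/s of memory bandwidth and 16 GPU cores against the mighty Apple M3 Max with 400 GB/s of memory bandwidth and 40 GPU cores. (The 200 and 400 figures describe memory bandwidth, not memory capacity; neither chip ships with that much RAM.) We'll be examining their performance across various LLM models, including the popular Llama 2 and the newer Llama 3.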

We'll dissect the performance of these chips through the lens of:

- Token Speed: This measures the rate at which the chip can process and generate tokens, the building blocks of text. Higher token speeds translate to faster model execution and response times.

- Quantization: This technique stores the model's weights at lower precision (for example, 8 or 4 bits instead of 16). It shrinks the LLM while maintaining decent output quality, making it more suitable for devices with limited memory.

- Model Support: We'll look at which model sizes and configurations have results on each chip, giving you a clear picture of their capabilities.
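To make the token speed metric concrete, here is a minimal sketch of how tokens per second can be measured: count the tokens a model emits and divide by the elapsed time. The `fake_model` generator is a hypothetical stand-in for a real inference call, not part of any library, and it is not how the figures in this article were collected.

```python
import time

def tokens_per_second(token_stream):
    """Consume an iterable of tokens and return (token_count, tokens_per_second)."""
    start = time.perf_counter()
    count = sum(1 for _ in token_stream)
    elapsed = time.perf_counter() - start
    rate = count / elapsed if elapsed > 0 else float("inf")
    return count, rate

def fake_model(n_tokens, delay_s=0.001):
    """Hypothetical stand-in for a real model: yields one dummy token per delay_s seconds."""
    for i in range(n_tokens):
        time.sleep(delay_s)
        yield f"tok{i}"

if __name__ == "__main__":
    count, rate = tokens_per_second(fake_model(50))
    print(f"{count} tokens at {rate:.1f} tokens/second")
```

With a real model, you would pass its streaming output in place of `fake_model` and time prompt processing and generation separately.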

Performance Analysis: Comparing the Champions

Let's get down to brass tacks and break down the performance of the M2 Pro and M3 Max across different LLM models. Before we dive into the numbers, two factors are key to reading them:

- Processing vs. Generation: Processing is the stage where the LLM reads and encodes the prompt, while generation is the stage where it outputs its response one token at a time. The two differ sharply in speed: for the 7B and 8B models below, processing runs at hundreds of tokens per second while generation runs at tens.

- Quantization Levels: F16 stores weights as 16-bit floats and is the unquantized baseline here. Q8_0 stores them at roughly 8 bits and Q4_0 at roughly 4 bits, trading some output quality for a smaller model and faster generation. Q4_K_M, used for the Llama 3 runs, is another roughly 4-bit scheme.

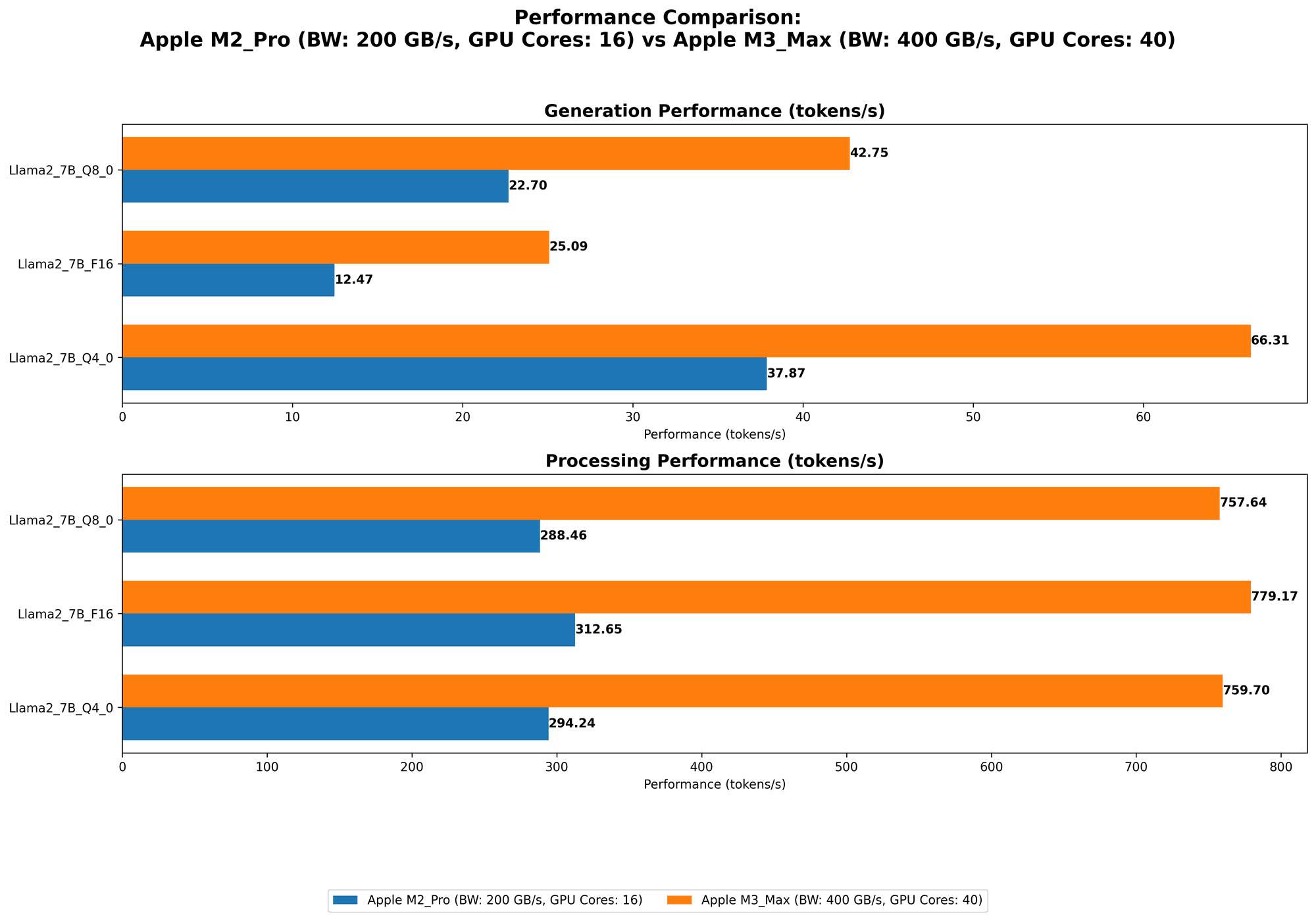

Here's a breakdown of the token speeds (tokens/second) for different LLM models and quantization levels:

| Model | M2 Pro (200 GB/s, 16 GPU Cores) | M3 Max (400 GB/s, 40 GPU Cores) |
|---|---|---|
| Llama 2 7B F16 Processing | 312.65 | 779.17 |
| Llama 2 7B F16 Generation | 12.47 | 25.09 |
| Llama 2 7B Q8_0 Processing | 288.46 | 757.64 |
| Llama 2 7B Q8_0 Generation | 22.7 | 42.75 |
| Llama 2 7B Q4_0 Processing | 294.24 | 759.7 |
| Llama 2 7B Q4_0 Generation | 37.87 | 66.31 |
| Llama 3 8B Q4_K_M Processing | N/A | 678.04 |
| Llama 3 8B Q4_K_M Generation | N/A | 50.74 |
| Llama 3 8B F16 Processing | N/A | 751.49 |
| Llama 3 8B F16 Generation | N/A | 22.39 |
| Llama 3 70B Q4_K_M Processing | N/A | 62.88 |
| Llama 3 70B Q4_K_M Generation | N/A | 7.53 |
| Llama 3 70B F16 Processing | N/A | N/A |
| Llama 3 70B F16 Generation | N/A | N/A |
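Since only the Llama 2 7B rows have numbers for both chips, those are the rows that allow a direct comparison. The short sketch below computes the M3 Max to M2 Pro ratio for each of them. The figures are copied from the table above; the dictionary layout and function name are just illustrative choices.

```python
# Token speeds (tokens/second) copied from the table above.
# Each value is (M2 Pro, M3 Max).
LLAMA2_7B = {
    ("F16", "processing"): (312.65, 779.17),
    ("F16", "generation"): (12.47, 25.09),
    ("Q8_0", "processing"): (288.46, 757.64),
    ("Q8_0", "generation"): (22.7, 42.75),
    ("Q4_0", "processing"): (294.24, 759.7),
    ("Q4_0", "generation"): (37.87, 66.31),
}

def speedups(table):
    """Return the M3 Max / M2 Pro speed ratio per row, rounded to two decimals."""
    return {key: round(m3 / m2, 2) for key, (m2, m3) in table.items()}

if __name__ == "__main__":
    for (quant, stage), ratio in speedups(LLAMA2_7B).items():
        print(f"Llama 2 7B {quant:5s} {stage:10s}: M3 Max is {ratio:.2f}x the M2 Pro")
```

The ratios land at roughly 2.5x to 2.6x for processing and roughly 1.75x to 2x for generation.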

Let's dive into the details of each model and how these chips perform:

Llama 2 7B: A Tale of Two Chips

The Llama 2 7B model is the foundation of our analysis, and it shines a light on the differences between these chips.

- Processing Prowess: In the processing stage, the M3 Max demonstrates a clear advantage. It's roughly 2.5x to 2.6x faster than the M2 Pro at every quantization level (for example, 779.17 vs. 312.65 tokens/second at F16). While the M2 Pro can confidently handle the Llama 2 7B model, the M3 Max provides a significant boost when prompts get long.

- Generation Showdown: In the generation stage, where the LLM outputs text, the gap narrows. The M3 Max is about 2x faster at F16 (25.09 vs. 12.47 tokens/second), about 1.9x faster at Q8_0, and about 1.75x faster at Q4_0. At 37.87 tokens/second with Q4_0, the M2 Pro still generates text faster than most people read.

Overall: For the Llama 2 7B model, the M3 Max delivers a more robust and efficient performance, especially in the processing stage. However, the M2 Pro still holds its own, making it a viable option for smaller datasets and less complex tasks.
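To see what these speeds mean for a single request, here is a rough sketch that combines the processing and generation rates into an estimated response time. The speeds are the Llama 2 7B Q4_0 figures from the table; the 512-token prompt and 256-token reply are a hypothetical workload chosen for illustration, and real latency will also depend on context length and software overhead.

```python
def response_time_seconds(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Estimate wall-clock time: the prompt is processed first, then tokens are generated."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps

# Llama 2 7B Q4_0 speeds from the table (tokens/second).
CHIPS = {
    "M2 Pro": {"processing": 294.24, "generation": 37.87},
    "M3 Max": {"processing": 759.7, "generation": 66.31},
}

if __name__ == "__main__":
    prompt_tokens, output_tokens = 512, 256  # hypothetical workload
    for name, tps in CHIPS.items():
        seconds = response_time_seconds(
            prompt_tokens, output_tokens, tps["processing"], tps["generation"]
        )
        print(f"{name}: about {seconds:.1f} s for the full response")
```

Under these assumptions the M2 Pro needs about 8.5 seconds and the M3 Max about 4.5 seconds, and in both cases most of the time goes to generation rather than prompt processing.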

Llama 3 8B: The M3 Max Takes the Lead

The Llama 3 8B model, a step up in complexity from the 7B model, reveals the true power of the M3 Max.

- Processing Juggernaut: The M3 Max processes prompts at 678.04 tokens/second with Q4_K_M and 751.49 tokens/second with F16, close to its Llama 2 7B speeds. There are no M2 Pro results for this model in our data, so a direct comparison isn't possible here.

- Generation Gains: The M3 Max generates 50.74 tokens/second with Q4_K_M and 22.39 tokens/second with F16. Both are somewhat lower than its Llama 2 7B figures, which is what you'd expect from a slightly larger model.

Overall: The M3 Max is the only chip with Llama 3 8B results in this comparison, and it runs the model comfortably at both precisions. Based on the Llama 2 7B gap, the M2 Pro would likely be noticeably slower, but we have no numbers to confirm that.

Llama 3 70B: A Test of Might

The Llama 3 70B model is the heavy hitter. This behemoth requires substantial computing power to run effectively, and the M3 Max rises to the challenge.

- The Bigger Picture: We see a steep reduction in speed for the M3 Max as we move to the Llama 3 70B model: 62.88 tokens/second for processing and 7.53 tokens/second for generation with Q4_K_M. This is no surprise, as the model is nearly nine times larger than the 8B.

- Power Play: The M3 Max can process and generate tokens on this model with Q4_K_M, though much more slowly than on the 7B and 8B models. The F16 version has no result on either chip. The M2 Pro has no results for the 70B at all, most likely because a model of this size exceeds the memory available on that chip.

Overall: The M3 Max proves its mettle by successfully executing the Llama 3 70B model at 4-bit quantization. That makes it a capable tool for researchers and developers who want to run very large models locally, as long as they can live with single-digit generation speeds.
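A quick back-of-the-envelope check shows why the 70B model is so demanding. The sketch below estimates the size of the weights and tests whether they fit in a given amount of RAM. Several inputs are assumptions not taken from this article: the nominal parameter counts, the roughly 4.8 bits per weight for Q4_K_M, the 32 GB and 128 GB RAM configurations, and the 75% usable fraction left over after the OS and other apps.

```python
def weight_size_gb(n_params, bits_per_weight):
    """Approximate size of the model weights in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9

def fits_in_memory(n_params, bits_per_weight, ram_gb, usable_fraction=0.75):
    """True if the weights fit in the usable share of RAM (usable_fraction is an assumption)."""
    return weight_size_gb(n_params, bits_per_weight) <= ram_gb * usable_fraction

# Nominal parameter counts and approximate bits per weight (assumptions).
MODELS = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}
FORMATS = {"F16": 16.0, "Q4_K_M": 4.8}
# Hypothetical RAM configurations, not stated in the article.
MACHINES = {"M2 Pro (32 GB)": 32, "M3 Max (128 GB)": 128}

if __name__ == "__main__":
    for model, n_params in MODELS.items():
        for fmt, bits in FORMATS.items():
            size = weight_size_gb(n_params, bits)
            for machine, ram_gb in MACHINES.items():
                verdict = "fits" if fits_in_memory(n_params, bits, ram_gb) else "does not fit"
                print(f"{model} {fmt} (~{size:.0f} GB) {verdict} on {machine}")
```

Under these assumptions, the 70B model at F16 needs about 140 GB and fits on neither machine, which is consistent with the N/A entries in the table, while the Q4_K_M version at about 42 GB fits only on the larger configuration.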

The Role of Quantization: Balancing Speed and Accuracy

Quantization is a property of the model file rather than the chip, so the same quantized models run on both the M2 Pro and the M3 Max. What differs is how much speed each chip gets out of them.

- The Trade-Off: Quantization is like squeezing a giant LLM into a smaller jar. You lose some information, but the model takes less memory and generates faster. On the M2 Pro, Llama 2 7B generation rises from 12.47 tokens/second at F16 to 37.87 at Q4_0, about 3x. On the M3 Max it rises from 25.09 to 66.31, about 2.6x. Processing speed barely changes and is actually slightly lower for the quantized models.

- M3 Max Magic: The M3 Max delivers significantly better token speeds at all quantization levels. This reinforces the importance of memory bandwidth and GPU core count for maximizing performance.

Overall: The M3 Max is faster at every quantization level tested, and it can run larger models, which gives you more room to choose where to sit on the speed-accuracy balance.
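Why does quantization speed up generation so much? A common rule of thumb is that generating each token requires reading all the model weights from memory once, so memory bandwidth divided by model size gives a rough upper bound on generation speed. The sketch below applies that rule to Llama 2 7B. It assumes the 200 and 400 figures in the chip names are memory bandwidth in GB/s and uses an approximate parameter count of 6.74 billion; the bits-per-weight values include the per-block scale factors stored by the Q8_0 and Q4_0 formats. Treat it as an estimate, not a precise model.

```python
# Bits stored per weight, including per-block scale factors for quantized formats.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}
LLAMA2_7B_PARAMS = 6.74e9  # approximate parameter count (assumption)

# Assumption: the 200 / 400 figures in the chip names are memory bandwidth in GB/s.
BANDWIDTH_GBPS = {"M2 Pro": 200, "M3 Max": 400}

# Measured generation speeds (tokens/second) from the table in this article.
MEASURED = {
    "M2 Pro": {"F16": 12.47, "Q8_0": 22.7, "Q4_0": 37.87},
    "M3 Max": {"F16": 25.09, "Q8_0": 42.75, "Q4_0": 66.31},
}

def generation_ceiling_tps(bandwidth_gbps, quant, n_params=LLAMA2_7B_PARAMS):
    """Rough upper bound on tokens/second if every token reads all weights once."""
    weight_bytes = n_params * BITS_PER_WEIGHT[quant] / 8
    return bandwidth_gbps * 1e9 / weight_bytes

if __name__ == "__main__":
    for chip, bandwidth in BANDWIDTH_GBPS.items():
        for quant in BITS_PER_WEIGHT:
            ceiling = generation_ceiling_tps(bandwidth, quant)
            measured = MEASURED[chip][quant]
            print(f"{chip} {quant:5s}: measured {measured:6.2f}, "
                  f"ceiling ~{ceiling:6.1f} ({measured / ceiling:.0%} of ceiling)")
```

Every measured figure sits below its estimated ceiling, and the ceiling roughly doubles each time the bits per weight are halved, which matches the pattern in the table.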

Practical Recommendations: Choosing the Right Weapon

Now that we've analyzed the data, let's translate the performance into practical recommendations for your LLM needs.

- The M2 Pro is your reliable sidekick: It's a solid choice for tasks involving smaller LLM models (e.g., Llama 2 7B) or if you are on a budget. It's also ideal when memory requirements are less demanding, or you're running a simple LLM chatbot.

- The M3 Max is your power-play weapon: It excels at handling large LLM models (e.g., Llama 3 8B and 70B) and is best for researchers or developers who need to maximize performance. It's also your go-to choice when you need to process massive datasets or run sophisticated LLM applications.

- Think about it like a toolbox: The M2 Pro is like a reliable hammer, while the M3 Max is like a powerful jackhammer. Choosing the right tool for the job is key to success.

Apple M2 Pro vs M3 Max: A Summary

The Apple M2 Pro and M3 Max are both impressive chips, but for local LLM execution, the M3 Max emerges as the clear victor. Its higher memory bandwidth, larger GPU, and strong results across every model tested make it a formidable force in the world of AI.

- M3 Max: The M3 Max shines for demanding LLM tasks, offering impressive speed and support for larger models.

- M2 Pro: The M2 Pro remains a solid choice for smaller LLMs and less demanding tasks.

But don't forget, the choice depends on your needs. If you're working with smaller models and have a limited budget, the M2 Pro is a great option. For those pushing the boundaries of LLM development, the M3 Max is the powerhouse that can handle the most complex tasks.

FAQ: Busting Those LLM Myths

Q: Can I run LLMs on my Mac without a powerful chip?

A: While it's possible to run smaller LLMs on a Mac with less powerful hardware, you might experience slower performance and limited model compatibility. The M2 Pro and M3 Max are designed for the heavy lifting required by modern LLMs.

Q: What is quantization, and why should I care?

A: Quantization is a technique for reducing the size of an LLM while preserving its functionality. Think of it like compressing a large file, but for AI models. It's a useful trick for devices with limited memory or when you need to speed up the model.

Q: Are there any other devices that can handle LLMs locally?

A: Yes! Several GPUs, including the NVIDIA RTX 40 series, can run LLMs locally. However, the M3 Max is a strong contender due to its combination of CPU and GPU power.

Q: What's the future of local LLM execution?

A: The future looks bright! With advancements in hardware and software, we'll likely see even more powerful devices capable of running even larger and more complex LLMs locally. This opens up exciting possibilities for developers and enthusiasts alike.

Keywords:

Large Language Model, LLM, Apple M2 Pro, Apple M3 Max, Token Speed, Quantization, F16, Q8_0, Q4_0, Q4_K_M, Llama 2, Llama 3, Local Execution, AI, Performance, Benchmarking, Hardware, GPU, CPU, Memory, Cores, Trade-offs, Speed, Accuracy, Development, Research, Applications.