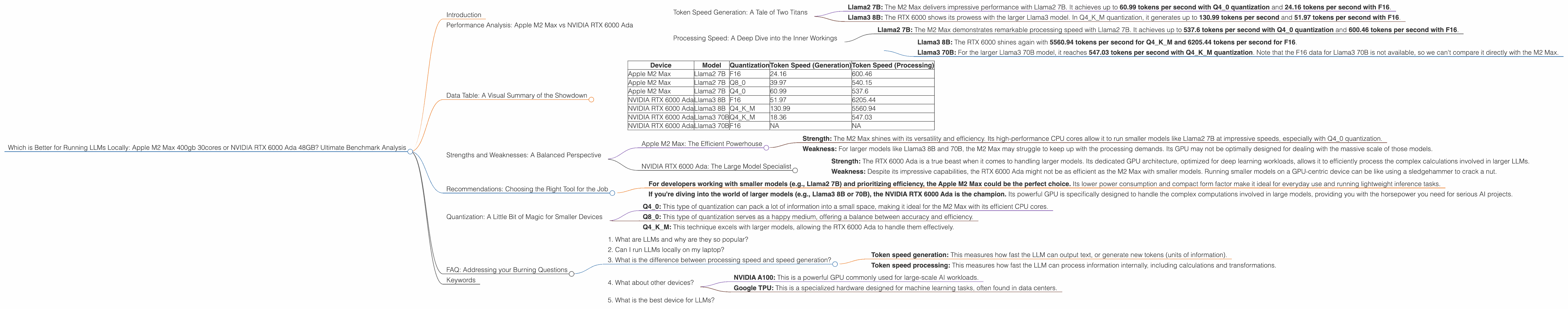

Which Is Better for Running LLMs Locally: Apple M2 Max (400 GB/s, 30-Core GPU) or NVIDIA RTX 6000 Ada 48GB? Ultimate Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is exploding, and developers are constantly looking for ways to run these powerful models locally. One of the key factors influencing performance is the hardware used. Today, we'll compare two high-end devices, the Apple M2 Max (400 GB/s memory bandwidth, 30-core GPU) and the NVIDIA RTX 6000 Ada with 48 GB of memory, to see which reigns supreme for running LLMs locally.

Think of these devices as powerful brains for your LLM projects. We'll dive into the benchmarks, analyze their strengths, and help you choose the right weapon for your AI battles.

Performance Analysis: Apple M2 Max vs NVIDIA RTX 6000 Ada

Token Speed Generation: A Tale of Two Titans

Let's kick things off with token generation speed: how many new tokens per second the model produces once it starts answering. Imagine your LLM as an eloquent storyteller; this is the speed at which it spins out its words.

Apple M2 Max:

- Llama2 7B: The M2 Max delivers impressive performance with Llama2 7B. It achieves up to 60.99 tokens per second with Q4_0 quantization and 24.16 tokens per second with F16.

NVIDIA RTX 6000 Ada:

- Llama3 8B: The RTX 6000 Ada shows its prowess with the slightly larger Llama3 8B model. With Q4_K_M quantization it generates up to 130.99 tokens per second, and 51.97 tokens per second with F16.

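Result: The RTX 6000 Ada is the clear winner for token generation, producing roughly twice as many tokens per second as the M2 Max at both 4-bit (130.99 vs 60.99) and F16 (51.97 vs 24.16), even though it ran a slightly larger model. Keep in mind that the two devices were benchmarked on different models (Llama3 8B vs Llama2 7B), so this is not a strict apples-to-apples comparison. The M2 Max still delivers very usable speeds with Llama2 7B, especially at Q4_0.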
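Tokens per second can feel abstract, so it can help to convert them into waiting time. The short Python sketch below uses only the two 4-bit generation speeds reported above; the 500-token reply length is a hypothetical value chosen for illustration, and the function names are our own.

```python
# Generation speeds (tokens/s) at 4-bit quantization, from the benchmarks above.
# The devices were measured on different models (Llama2 7B vs Llama3 8B).
M2_MAX_LLAMA2_7B_Q4_0 = 60.99
RTX_6000_ADA_LLAMA3_8B_Q4_K_M = 130.99


def ms_per_token(tokens_per_second: float) -> float:
    """Average time between generated tokens, in milliseconds."""
    return 1000.0 / tokens_per_second


def seconds_for_reply(tokens_per_second: float, reply_tokens: int) -> float:
    """Time to stream a reply of `reply_tokens` tokens at a constant rate."""
    return reply_tokens / tokens_per_second


if __name__ == "__main__":
    REPLY_TOKENS = 500  # hypothetical reply length, chosen for illustration
    for name, tps in [
        ("M2 Max, Llama2 7B Q4_0", M2_MAX_LLAMA2_7B_Q4_0),
        ("RTX 6000 Ada, Llama3 8B Q4_K_M", RTX_6000_ADA_LLAMA3_8B_Q4_K_M),
    ]:
        print(f"{name}: {ms_per_token(tps):.1f} ms/token, "
              f"{seconds_for_reply(tps, REPLY_TOKENS):.1f} s per {REPLY_TOKENS}-token reply")
    ratio = RTX_6000_ADA_LLAMA3_8B_Q4_K_M / M2_MAX_LLAMA2_7B_Q4_0
    print(f"RTX 6000 Ada / M2 Max generation speed: {ratio:.2f}x")
```

Converted this way, the M2 Max spends about 16 ms per token and the RTX 6000 Ada about 8 ms, so a 500-token reply takes roughly 8 s versus 4 s, assuming the rate stays constant for the whole reply.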

Processing Speed: A Deep Dive into the Inner Workings

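Now, let's look at processing speed: how fast each device reads and processes the input prompt before it starts generating a reply. Because the whole prompt can be processed in parallel, these numbers are far higher than generation speeds. We'll compare the tokens per second achieved for specific models and quantization levels.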

Apple M2 Max:

- Llama2 7B: The M2 Max processes prompts at 600.46 tokens per second with F16 and 537.6 tokens per second with Q4_0. Unlike generation, prompt processing is slightly faster at F16 than with quantized weights.

NVIDIA RTX 6000 Ada:

- Llama3 8B: The RTX 6000 Ada shines again, with 5560.94 tokens per second at Q4_K_M and 6205.44 tokens per second at F16.

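- Llama3 70B: With the much larger Llama3 70B model, it reaches 547.03 tokens per second at Q4_K_M. No F16 result is available for Llama3 70B; at 16 bits per weight, its roughly 140 GB of weights would not fit in the card's 48 GB of memory. The benchmarks also include no Llama3 results for the M2 Max, so this model cannot be compared across the two devices.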

Result: The RTX 6000 Ada takes a decisive lead in processing speed, running roughly ten times faster than the M2 Max (5560.94 vs 537.6 tokens per second at 4-bit, 6205.44 vs 600.46 at F16), again on slightly different models. It even processes prompts for Llama3 70B (547.03 tokens per second) at about the rate the M2 Max manages for Llama2 7B.

Data Table: A Visual Summary of the Showdown

To make the numbers sing, here is a table summarizing the key results for each device (all speeds in tokens per second):

| Device | Model | Quantization | Generation (tokens/s) | Prompt processing (tokens/s) |
|---|---|---|---|---|
| Apple M2 Max | Llama2 7B | F16 | 24.16 | 600.46 |
| Apple M2 Max | Llama2 7B | Q8_0 | 39.97 | 540.15 |
| Apple M2 Max | Llama2 7B | Q4_0 | 60.99 | 537.6 |
| NVIDIA RTX 6000 Ada | Llama3 8B | F16 | 51.97 | 6205.44 |
| NVIDIA RTX 6000 Ada | Llama3 8B | Q4_K_M | 130.99 | 5560.94 |
| NVIDIA RTX 6000 Ada | Llama3 70B | Q4_K_M | 18.36 | 547.03 |
| NVIDIA RTX 6000 Ada | Llama3 70B | F16 | NA | NA |

Key:

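- BW: memory bandwidth, in GB/s (400 GB/s for the M2 Max tested here)

- GPUCores: number of GPU cores (30 for the M2 Max tested here)

- F16: 16-bit floating point (half precision); the weights are not quantized

- Q8_0: llama.cpp's 8-bit format; weights are stored in blocks of 32, each with a single scale factor and no offset

- Q4_0: llama.cpp's 4-bit format, using the same block-plus-scale layout

- Q4_K_M: llama.cpp's 4-bit "k-quant", medium variant; it groups weights into larger super-blocks with quantized per-block scales and minimums, and keeps some tensors at higher precision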

Note: No result is available for Llama3 70B at F16, so that row is marked NA.
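Because the table mixes devices, models, and quantization formats, comparisons are cleanest within a single device and model. The Python sketch below loads the table as data and divides each quantized result by the F16 result for the same device and model. The `Result` class and helper functions are hypothetical names written for this illustration; the numbers are copied from the table.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class Result:
    device: str
    model: str
    quant: str
    generation_tps: Optional[float]  # tokens/s; None where the table says NA
    processing_tps: Optional[float]  # tokens/s; None where the table says NA


RESULTS = [
    Result("Apple M2 Max", "Llama2 7B", "F16", 24.16, 600.46),
    Result("Apple M2 Max", "Llama2 7B", "Q8_0", 39.97, 540.15),
    Result("Apple M2 Max", "Llama2 7B", "Q4_0", 60.99, 537.6),
    Result("NVIDIA RTX 6000 Ada", "Llama3 8B", "F16", 51.97, 6205.44),
    Result("NVIDIA RTX 6000 Ada", "Llama3 8B", "Q4_K_M", 130.99, 5560.94),
    Result("NVIDIA RTX 6000 Ada", "Llama3 70B", "Q4_K_M", 18.36, 547.03),
    Result("NVIDIA RTX 6000 Ada", "Llama3 70B", "F16", None, None),
]


def lookup(device: str, model: str, quant: str) -> Result:
    """Return the table row matching device, model, and quantization."""
    return next(r for r in RESULTS if (r.device, r.model, r.quant) == (device, model, quant))


def ratio_vs_f16(device: str, model: str, quant: str) -> Tuple[float, float]:
    """(generation, processing) speed of `quant` divided by F16 on the same device and model."""
    q = lookup(device, model, quant)
    f16 = lookup(device, model, "F16")
    return q.generation_tps / f16.generation_tps, q.processing_tps / f16.processing_tps


if __name__ == "__main__":
    for device, model, quant in [
        ("Apple M2 Max", "Llama2 7B", "Q8_0"),
        ("Apple M2 Max", "Llama2 7B", "Q4_0"),
        ("NVIDIA RTX 6000 Ada", "Llama3 8B", "Q4_K_M"),
    ]:
        gen, proc = ratio_vs_f16(device, model, quant)
        print(f"{device} {model} {quant}: generation x{gen:.2f}, processing x{proc:.2f}")
```

On both devices, the 4-bit formats generate about 2.5 times faster than F16, while prompt processing is about 10 percent slower, so quantization mainly pays off during generation.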

Strengths and Weaknesses: A Balanced Perspective

Apple M2 Max: The Efficient Powerhouse

- Strength: The M2 Max shines with its versatility and efficiency. Its 30-core GPU and unified memory, shared between the CPU and GPU, run smaller models like Llama2 7B at very usable speeds, especially with Q4_0 quantization.

- Weakness: Its prompt processing is roughly ten times slower than the RTX 6000 Ada's in these results, which matters most for long prompts and larger models. The benchmarks include no Llama3 8B or 70B results for the M2 Max, so how it handles those models cannot be judged from this data.
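The 400 GB/s memory bandwidth in the M2 Max's name helps explain its generation speeds. A widely used rule of thumb (a heuristic, not a measurement) is that generating each token requires reading roughly all model weights from memory once, so bandwidth divided by model size gives an upper bound on tokens per second. The sketch below assumes a nominal 7 billion parameters (Llama2 7B actually has about 6.7 billion), bits-per-weight values derived from llama.cpp's block layouts (a 2-byte scale per 32 weights), and ignores KV-cache reads and compute time.

```python
M2_MAX_BANDWIDTH_GB_S = 400.0  # memory bandwidth from the device name
PARAMS = 7e9                   # assumption: nominal Llama2 7B parameter count

# Approximate bits stored per weight: F16 = 16; Q8_0 = 34 bytes / 32 weights;
# Q4_0 = 18 bytes / 32 weights. Ignores any tensors kept at other precisions.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

# Measured M2 Max generation speeds from the benchmark table (tokens/s).
MEASURED_TPS = {"F16": 24.16, "Q8_0": 39.97, "Q4_0": 60.99}


def weight_bytes(params: float, bits_per_weight: float) -> float:
    """Total size of the model weights in bytes."""
    return params * bits_per_weight / 8


def bandwidth_bound_tps(bandwidth_gb_s: float, model_bytes: float) -> float:
    """Upper bound on tokens/s if every generated token streams all weights once."""
    return bandwidth_gb_s * 1e9 / model_bytes


if __name__ == "__main__":
    for quant, bits in BITS_PER_WEIGHT.items():
        bound = bandwidth_bound_tps(M2_MAX_BANDWIDTH_GB_S, weight_bytes(PARAMS, bits))
        measured = MEASURED_TPS[quant]
        print(f"{quant}: bound ~{bound:.0f} tok/s, measured {measured} tok/s "
              f"({measured / bound:.0%} of bound)")
```

Under these assumptions the M2 Max reaches roughly 60 to 85 percent of its bandwidth bound across the three formats, which suggests its generation speed is largely limited by memory bandwidth rather than by GPU cores.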

NVIDIA RTX 6000 Ada: The Large Model Specialist

- Strength: The RTX 6000 Ada is a true beast when it comes to handling larger models. Its GPU architecture is optimized for deep learning workloads, and its 48 GB of memory is enough to run Llama3 70B at Q4_K_M quantization, at 18.36 tokens per second in these benchmarks.

- Weakness: Power consumption was not measured in these benchmarks, but as a dedicated workstation GPU the RTX 6000 Ada likely draws considerably more power than the M2 Max, so it may be less efficient with smaller models. Running a 7B model on it can be like using a sledgehammer to crack a nut.

Recommendations: Choosing the Right Tool for the Job

- For developers working with smaller models (e.g., Llama2 7B) and prioritizing efficiency, the Apple M2 Max could be the perfect choice. Its lower power consumption and compact form factor make it ideal for everyday use and running lightweight inference tasks.

- If you're diving into larger models such as Llama3 70B, or simply need the fastest generation and prompt processing, the NVIDIA RTX 6000 Ada is the champion. Its powerful GPU is designed for the heavy computations large models require, and its 48 GB of memory can hold a 4-bit 70B model.

Quantization: A Little Bit of Magic for Smaller Devices

Think of quantization as a clever trick for compressing the information within your LLM: each model weight is stored with fewer bits, for example 8 or 4 instead of 16. It's like converting a detailed painting into a pixelated version without losing too much detail. This makes LLMs smaller, so they fit in less memory and, as the benchmarks show, generate tokens faster.
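To make the "compression" concrete, here is a simplified Python sketch in the spirit of llama.cpp's Q8_0 format: weights are split into blocks of 32, and each block stores one scale plus 32 signed 8-bit integers. It is an illustration, not llama.cpp's actual code; the real format is implemented in C, stores the scale as a 16-bit float, and its rounding of exact halves may differ from Python's. The function names are hypothetical.

```python
import random
from typing import List, Tuple

BLOCK_SIZE = 32  # weights per block, as in llama.cpp's Q8_0 format


def quantize_block(weights: List[float]) -> Tuple[float, List[int]]:
    """Quantize one block to a single scale plus signed 8-bit integers.

    The scale maps the largest absolute weight to +/-127. There is no offset,
    so a weight of 0.0 always becomes 0.
    """
    if len(weights) != BLOCK_SIZE:
        raise ValueError(f"expected {BLOCK_SIZE} weights, got {len(weights)}")
    amax = max(abs(w) for w in weights)
    scale = amax / 127.0
    inv_scale = 1.0 / scale if scale else 0.0  # all-zero block: every value stays 0
    # Python's round() sends exact halves to the nearest even integer.
    return scale, [round(w * inv_scale) for w in weights]


def dequantize_block(scale: float, quants: List[int]) -> List[float]:
    """Approximate the original weights: each value is quant * scale."""
    return [q * scale for q in quants]


if __name__ == "__main__":
    random.seed(0)
    block = [random.gauss(0.0, 0.02) for _ in range(BLOCK_SIZE)]
    scale, quants = quantize_block(block)
    restored = dequantize_block(scale, quants)
    worst = max(abs(a - b) for a, b in zip(block, restored))
    # Rounding error is at most half a quantization step (scale / 2).
    print(f"scale={scale:.6f}  worst error={worst:.6f}  bound={scale / 2:.6f}")
```

With 32 one-byte values plus a 2-byte scale, each block takes 34 bytes, or 8.5 bits per weight instead of 16 at F16; Q4_0 applies the same idea with 4-bit values, for 18 bytes per block.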

Key takeaways:

- Q4_0: This 4-bit format packs a lot of information into a small space; on the M2 Max it gave the fastest generation speed in these benchmarks (60.99 tokens per second).

- Q8_0: This type of quantization serves as a happy medium, offering a balance between accuracy and efficiency.

- Q4_K_M: This 4-bit k-quant excels with larger models, shrinking them enough to let the RTX 6000 Ada run Llama3 70B, which at F16 would be far too large for its 48 GB of memory.
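The memory argument can be checked with simple arithmetic: weight size in bytes is the parameter count times bits per weight, divided by 8. The Python sketch below assumes nominal parameter counts (8 and 70 billion), an average of about 4.85 bits per weight for Q4_K_M (an approximation, since that format mixes 4-bit and higher-precision tensors), and counts weights only, ignoring the KV cache and runtime buffers. The helper names are hypothetical.

```python
# Memory check for the RTX 6000 Ada, counting model weights only.
VRAM_GB = 48.0  # the card's memory, treated as 48 * 10**9 bytes for simplicity

# Bits stored per weight. F16 is exact; the Q4_K_M figure is an assumed average,
# since that format mixes 4-bit tensors with some higher-precision ones.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}


def weights_gb(params: float, bits_per_weight: float) -> float:
    """Size of the weights in GB (10**9 bytes); ignores KV cache and runtime buffers."""
    return params * bits_per_weight / 8 / 1e9


def fits_in_vram(params: float, quant: str, vram_gb: float = VRAM_GB) -> bool:
    """True if the weights alone fit in the given amount of GPU memory."""
    return weights_gb(params, BITS_PER_WEIGHT[quant]) <= vram_gb


if __name__ == "__main__":
    for params, label in [(8e9, "Llama3 8B"), (70e9, "Llama3 70B")]:
        for quant, bits in BITS_PER_WEIGHT.items():
            size = weights_gb(params, bits)
            verdict = "fits" if fits_in_vram(params, quant) else "does not fit"
            print(f"{label} {quant}: ~{size:.1f} GB of weights, {verdict} in {VRAM_GB:.0f} GB")
```

Under these assumptions, Llama3 70B needs about 140 GB at F16 but only about 42 GB at Q4_K_M, leaving a few gigabytes for the KV cache, which grows with context length.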

FAQ: Addressing your Burning Questions

1. What are LLMs and why are they so popular?

Large Language Models (LLMs) are advanced AI systems trained on massive datasets of text and code. They can understand and generate human-like text, making them useful for various tasks like writing, translation, and even programming.

2. Can I run LLMs locally on my laptop?

Very likely, but it depends on your laptop's memory and the size of the LLM you're running. Smaller models like Llama2 7B can run even on mid-range laptops, especially when quantized, while larger models like Llama3 70B need a more powerful machine like the ones we discussed.

3. What is the difference between processing speed and generation speed?

- Token generation speed: how fast the LLM outputs new tokens (small units of text, such as words or word fragments) one at a time while writing its reply.

- Prompt processing speed: how fast the LLM reads the input prompt before it starts replying. All prompt tokens can be processed in parallel, which is why these numbers are much higher than generation speeds.
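The two speeds combine into the time you actually wait for an answer: first the prompt is processed, then the reply is generated token by token. The Python sketch below estimates that total from the benchmark table, assuming both rates stay constant (in practice they vary with context length). The 2,000-token prompt and 300-token reply are hypothetical request sizes, and `response_time_s` is a name introduced for this illustration.

```python
def response_time_s(prompt_tokens: int, output_tokens: int,
                    processing_tps: float, generation_tps: float) -> float:
    """Rough wall-clock estimate for one request: read the prompt, then write the reply."""
    prefill_s = prompt_tokens / processing_tps  # approximately the wait for the first token
    decode_s = output_tokens / generation_tps   # time to stream the reply
    return prefill_s + decode_s


# (processing tokens/s, generation tokens/s) from the benchmark table.
CONFIGS = {
    "M2 Max, Llama2 7B Q4_0": (537.6, 60.99),
    "RTX 6000 Ada, Llama3 8B Q4_K_M": (5560.94, 130.99),
    "RTX 6000 Ada, Llama3 70B Q4_K_M": (547.03, 18.36),
}

if __name__ == "__main__":
    PROMPT_TOKENS, OUTPUT_TOKENS = 2000, 300  # hypothetical request size
    for name, (pp, tg) in CONFIGS.items():
        t = response_time_s(PROMPT_TOKENS, OUTPUT_TOKENS, pp, tg)
        print(f"{name}: ~{t:.1f} s")
```

With these request sizes the estimates come out to about 8.6 s, 2.6 s, and 20 s respectively. For short chat turns the generation term dominates; when long documents are pasted into the prompt, processing speed matters more.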

4. What about other devices?

The M2 Max and RTX 6000 Ada are just two examples of powerful hardware for running LLMs. Other options include:

- NVIDIA A100: a powerful GPU commonly used for large-scale AI workloads.

- Google TPU: specialized hardware designed for machine learning tasks, found in Google's data centers and typically accessed through Google Cloud rather than run locally.

5. What is the best device for LLMs?

There's no definitive "best" device. The ideal choice depends on your specific needs, including the size of the model you want to use, your budget, and your power consumption requirements.

Keywords

LLM, LLM model, GPU, CPU, Apple M2 Max, NVIDIA RTX 6000 Ada, Llama2, Llama3, token generation speed, prompt processing speed, quantization, F16, Q8_0, Q4_0, Q4_K_M, performance comparison, benchmark analysis, AI, deep learning, local inference, machine learning