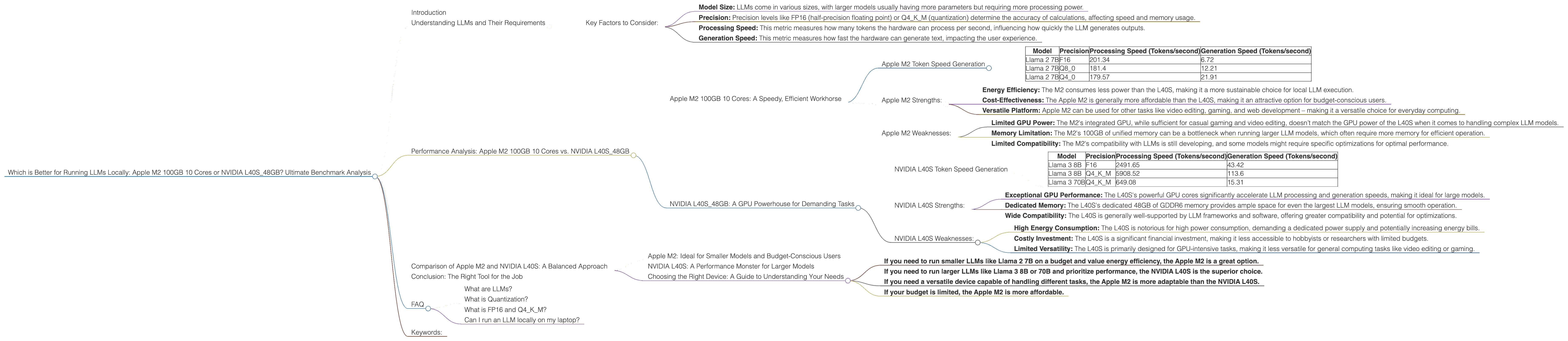

Which is Better for Running LLMs locally: Apple M2 100gb 10cores or NVIDIA L40S 48GB? Ultimate Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is exploding, with new models being released almost daily. These models are capable of some amazing things, from generating realistic text to translating languages. However, running these models locally can be challenging, requiring significant processing power and memory.

This article explores the performance of two popular hardware options for running LLMs locally: the Apple M2 100GB 10-core processor and the NVIDIA L40S_48GB GPU. We’ll dissect their performance, compare strengths and weaknesses, and provide practical recommendations for use cases. Think of it like a tech showdown – we'll analyze the differences in their processing speeds, memory capacities, and their abilities to handle the ever-growing size and complexity of LLMs.

Understanding LLMs and Their Requirements

Imagine LLMs as incredibly complex and sophisticated brains. These brains learn patterns, make predictions, and generate outputs – but they need a lot of power and memory to function properly. That's where the hardware comes in. Our chosen hardware options are like different types of engines for these powerful brains, and we need to analyze which engine is best for specific tasks.

Key Factors to Consider:

- Model Size: LLMs come in various sizes, with larger models usually having more parameters but requiring more processing power.

- Precision: Precision levels like FP16 (half-precision floating point) or Q4KM (quantization) determine the accuracy of calculations, affecting speed and memory usage.

- Processing Speed: This metric measures how many tokens the hardware can process per second, influencing how quickly the LLM generates outputs.

- Generation Speed: This metric measures how fast the hardware can generate text, impacting the user experience.

Performance Analysis: Apple M2 100GB 10 Cores vs. NVIDIA L40S_48GB

Let's dive into the benchmark results and understand how these two hardware options perform with different LLM models and precision levels.

Apple M2 100GB 10 Cores: A Speedy, Efficient Workhorse

The Apple M2 is known for its high-performance capabilities, impressive energy efficiency, and the ability to handle demanding tasks. Let’s see how it shines with these LLMs:

Apple M2 Token Speed Generation

| Model | Precision | Processing Speed (Tokens/second) | Generation Speed (Tokens/second) |

|---|---|---|---|

| Llama 2 7B | F16 | 201.34 | 6.72 |

| Llama 2 7B | Q8_0 | 181.4 | 12.21 |

| Llama 2 7B | Q4_0 | 179.57 | 21.91 |

Observations:

- The Apple M2 delivers impressive processing speeds for the Llama 2 7B model across various precision levels, showcasing its power.

- The Apple M2 excels at processing speed, but the generation speed is relatively lower, particularly with FP16 precision.

Apple M2 Strengths:

- Energy Efficiency: The M2 consumes less power than the L40S, making it a more sustainable choice for local LLM execution.

- Cost-Effectiveness: The Apple M2 is generally more affordable than the L40S, making it an attractive option for budget-conscious users.

- Versatile Platform: Apple M2 can be used for other tasks like video editing, gaming, and web development – making it a versatile choice for everyday computing.

Apple M2 Weaknesses:

- Limited GPU Power: The M2's integrated GPU, while sufficient for casual gaming and video editing, doesn’t match the GPU power of the L40S when it comes to handling complex LLM models.

- Memory Limitation: The M2's 100GB of unified memory can be a bottleneck when running larger LLM models, which often require more memory for efficient operation.

- Limited Compatibility: The M2's compatibility with LLMs is still developing, and some models might require specific optimizations for optimal performance.

NVIDIA L40S_48GB: A GPU Powerhouse for Demanding Tasks

The L40S is a high-end GPU designed to tackle computing-intensive tasks, making it a natural choice for demanding applications like LLM inference.

NVIDIA L40S Token Speed Generation

| Model | Precision | Processing Speed (Tokens/second) | Generation Speed (Tokens/second) |

|---|---|---|---|

| Llama 3 8B | F16 | 2491.65 | 43.42 |

| Llama 3 8B | Q4KM | 5908.52 | 113.6 |

| Llama 3 70B | Q4KM | 649.08 | 15.31 |

Observations:

- The L40S delivers exceptionally high processing speeds for the Llama 3 8B model, showing its prowess with larger models.

- The L40S also boasts impressive generation speeds, especially for the Llama 3 8B model with Q4KM precision.

- It's important to note that the L40S data lacks certain combinations like Llama 3 70B with F16 precision. The lack of data highlights the ongoing development of LLM compatibility with various hardware platforms.

NVIDIA L40S Strengths:

- Exceptional GPU Performance: The L40S's powerful GPU cores significantly accelerate LLM processing and generation speeds, making it ideal for large models.

- Dedicated Memory: The L40S's dedicated 48GB of GDDR6 memory provides ample space for even the largest LLM models, ensuring smooth operation.

- Wide Compatibility: The L40S is generally well-supported by LLM frameworks and software, offering greater compatibility and potential for optimizations.

NVIDIA L40S Weaknesses:

- High Energy Consumption: The L40S is notorious for high power consumption, demanding a dedicated power supply and potentially increasing energy bills.

- Costly Investment: The L40S is a significant financial investment, making it less accessible to hobbyists or researchers with limited budgets.

- Limited Versatility: The L40S is primarily designed for GPU-intensive tasks, making it less versatile for general computing tasks like video editing or gaming.

Comparison of Apple M2 and NVIDIA L40S: A Balanced Approach

Apple M2: Ideal for Smaller Models and Budget-Conscious Users

The Apple M2 makes sense for those seeking an efficient and affordable solution for running smaller LLMs, especially if you are using a Mac for other tasks. It excels at processing speed but lags in generation speed compared to the L40S. The M2 is an excellent choice for those working with Llama 2 7B or similar models and prioritizing energy efficiency.

NVIDIA L40S: A Performance Monster for Larger Models

The L40S is a powerhouse when it comes to performance, especially for larger LLMs like Llama 3 8B and 70B. It excels in both processing and generation speeds, showcasing its raw power. However, its high cost and energy consumption might be prohibitive for some users.

Choosing the Right Device: A Guide to Understanding Your Needs

Here's a quick guide to help you choose the right device for your needs:

- If you need to run smaller LLMs like Llama 2 7B on a budget and value energy efficiency, the Apple M2 is a great option.

- If you need to run larger LLMs like Llama 3 8B or 70B and prioritize performance, the NVIDIA L40S is the superior choice.

- If you need a versatile device capable of handling different tasks, the Apple M2 is more adaptable than the NVIDIA L40S.

- If your budget is limited, the Apple M2 is more affordable.

Conclusion: The Right Tool for the Job

The choice between the Apple M2 and the NVIDIA L40S depends on your specific needs and priorities. The Apple M2 offers a balanced approach with acceptable performance and energy efficiency for smaller LLMs, while the NVIDIA L40S is a performance beast for larger models. The right device depends on your needs, budget, and the complexity of the LLM models you intend to use!

FAQ

What are LLMs?

LLMs are machine learning models trained on massive datasets of text and code. They can understand and generate human-like text, translate languages, write different creative text formats, and answer questions in an informative way.

What is Quantization?

Quantization is a technique used to reduce the size of LLM models without significantly impacting performance. Think of it like compressing a large image to reduce file size without sacrificing too much quality. It helps to save memory and speeds up processing.

What is FP16 and Q4KM?

FP16 and Q4KM are two different precision levels used in LLMs. FP16 (half-precision floating point) uses less memory but can reduce accuracy. Q4KM (quantization) is a more aggressive form of quantization, further reducing memory usage and speeding up processing, but with potential loss of accuracy.

Can I run an LLM locally on my laptop?

While it's possible to run smaller LLMs on a laptop with a powerful CPU and GPU, it might be slow or require significant RAM. For larger models, a dedicated GPU or cloud computing services are recommended.

Keywords:

Apple M2, NVIDIA L40S, LLM, Llama 2, Llama 3, Local Inference, GPU, Token Speed, Processing Speed, Generation Speed, Precision, Quantization, FP16, Q4KM, Performance Benchmark, Cost-Effective, Energy Efficiency, Compatibility, Use Cases, Hardware Recommendations