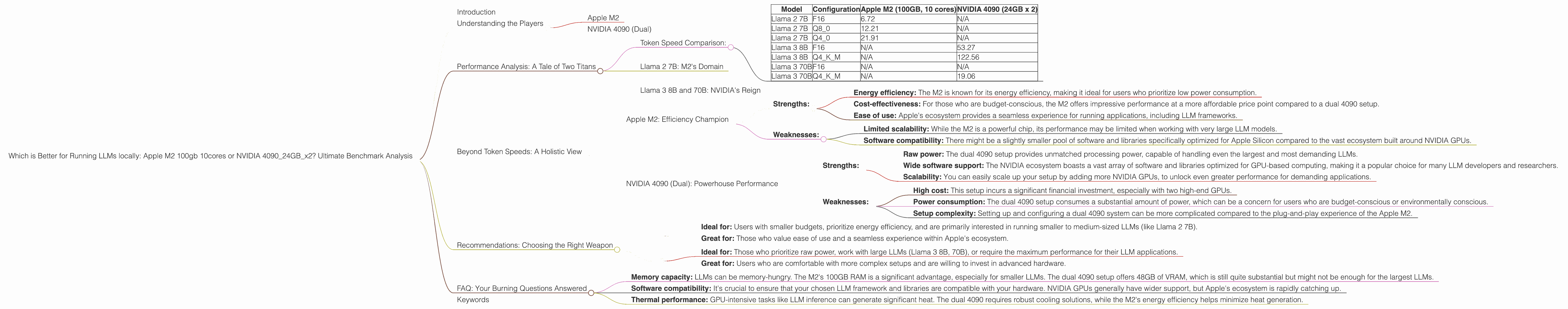

Which Is Better for Running LLMs Locally: Apple M2 (100GB, 10 Cores) or Dual NVIDIA 4090 (24GB x 2)? Ultimate Benchmark Analysis

Introduction

The world of large language models (LLMs) is evolving rapidly, with new models and techniques emerging constantly. While cloud-based solutions dominate the LLM landscape, running LLMs locally on your own machine offers several advantages: enhanced privacy, greater control, and potentially lower latency, since there is no network round trip. But choosing the right hardware can feel daunting. Should you opt for the power of a high-end NVIDIA GPU or the efficiency of an Apple M2 chip? This article presents a benchmark analysis of the Apple M2 (100GB, 10 cores) and a dual NVIDIA 4090 setup (24GB each) for running LLMs locally. We'll look at the token speeds available for each setup across Llama-family models and explore the strengths and weaknesses of both.

Understanding the Players

Apple M2

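The Apple M2 is a powerful, energy-efficient system-on-a-chip designed specifically for Apple's ecosystem. We're focusing on the 10-core GPU configuration. Note that the "100GB" in the configuration label most likely refers to the chip's 100 GB/s memory bandwidth rather than RAM capacity, since the base M2 supports at most 24GB of unified memory. That memory is shared between the CPU and GPU, which is what makes LLM inference on the chip practical.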

NVIDIA 4090 (Dual)

The NVIDIA 4090 is NVIDIA's flagship consumer GPU of its generation. We're looking at a setup with two of these cards, which gives us a combined 48GB of VRAM (24GB per card) alongside a large amount of compute. This setup is designed to handle the computationally intensive task of running large language models.

Performance Analysis: A Tale of Two Titans

To compare the performance of the Apple M2 and the dual NVIDIA 4090, we'll analyze their speeds in tokens/second for several LLM models. Tokens, the building blocks of text, represent words or parts of words, which makes tokens/second a practical measure of LLM inference speed: the higher the number, the faster the model writes its answer.
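To make the metric concrete, here is a minimal sketch of how tokens/second could be measured around a single generation call. The `fake_generate` function is a hypothetical stand-in for a real model, not part of any LLM library, and the benchmark figures in this article were not produced with this code.

```python
import time
from typing import Callable, List


def tokens_per_second(generate: Callable[[str], List[str]], prompt: str) -> float:
    """Time one generation call and return generated tokens per second."""
    start = time.perf_counter()
    tokens = generate(prompt)
    elapsed = time.perf_counter() - start
    return len(tokens) / elapsed


def fake_generate(prompt: str) -> List[str]:
    # Hypothetical stand-in for a real model: emits 20 tokens,
    # sleeping about 10 ms per token to simulate work.
    tokens = []
    for i in range(20):
        time.sleep(0.01)
        tokens.append(f"tok{i}")
    return tokens


rate = tokens_per_second(fake_generate, "Hello")
print(f"{rate:.1f} tokens/second")
```

With a real model you would replace `fake_generate` with your framework's generation call and average over several runs, since a single measurement can be noisy.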

Note: Our dataset for this comparison covers the Llama 2 and Llama 3 families only. We will not compare other models such as GPT-3, as they are not included in the available data.

Token Speed Comparison:

| Model | Precision / Quantization | Apple M2 (100GB, 10 cores), tokens/second | NVIDIA 4090 (24GB x 2), tokens/second |
|---|---|---|---|
| Llama 2 7B | F16 | 6.72 | N/A |
| Llama 2 7B | Q8_0 | 12.21 | N/A |
| Llama 2 7B | Q4_0 | 21.91 | N/A |
| Llama 3 8B | F16 | N/A | 53.27 |
| Llama 3 8B | Q4_K_M | N/A | 122.56 |
| Llama 3 70B | F16 | N/A | N/A |
| Llama 3 70B | Q4_K_M | N/A | 19.06 |

Key Observations:

- Smaller models: The only Apple M2 results in the dataset are for Llama 2 7B, where it reaches 6.72 tokens/second at F16, 12.21 at Q8_0, and 21.91 at Q4_0. The NVIDIA 4090 data is unavailable for this model.

- Larger models: The only dual 4090 results are for Llama 3, where the setup reaches 53.27 tokens/second on 8B at F16, 122.56 on 8B with Q4_K_M quantization, and 19.06 on 70B with Q4_K_M. The M2 data is unavailable for these models, so the dataset contains no like-for-like comparison on the same model.

- Quantization: Both devices generate tokens faster at lower precision. Moving from F16 to 4-bit quantization speeds up the M2 by about 3.3x (6.72 to 21.91) and the dual 4090 by about 2.3x (53.27 to 122.56). Quantization, like a clever compression algorithm, reduces the size of the model's weights, leading to faster inference. However, quantization can sacrifice some accuracy, which you'll need to weigh against speed.
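The quantization effect can be checked directly from the table. The sketch below copies the tokens/second figures from the table above and computes the speedup of each quantized format relative to F16 on the same device and model. The dictionary layout and function name are illustrative choices, not part of any benchmark tool.

```python
# Tokens/second figures copied from the comparison table.
results = {
    ("Apple M2", "Llama 2 7B"): {"F16": 6.72, "Q8_0": 12.21, "Q4_0": 21.91},
    ("Dual NVIDIA 4090", "Llama 3 8B"): {"F16": 53.27, "Q4_K_M": 122.56},
}


def speedups_over_f16(speeds: dict) -> dict:
    """Return each quantized format's speed as a multiple of the F16 speed."""
    base = speeds["F16"]
    return {fmt: round(value / base, 2) for fmt, value in speeds.items() if fmt != "F16"}


for (device, model), speeds in results.items():
    print(device, model, speedups_over_f16(speeds))
```

Because the two devices were measured on different models, these ratios only describe how each device responds to quantization. They do not say which device is faster on the same workload.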

Llama 2 7B: M2's Domain

The Apple M2 excels with the Llama 2 7B model. It delivers impressive token generation speeds, particularly with Q4_0 quantization, generating over 21 tokens per second. This makes the M2 a compelling choice if you're working with smaller, resource-efficient models.

Think of it this way: Imagine an LLM as a car. Smaller models (like the Llama 2 7B) are like a compact car: efficient, nimble, and perfect for navigating city streets. The M2 is like a powerful yet fuel-efficient engine, well suited to drive this compact car.

Llama 3 8B and 70B: NVIDIA's Reign

The NVIDIA 4090 demonstrates its power with the Llama 3 8B and 70B models. The dual 4090 setup reaches 122.56 tokens/second on Llama 3 8B with Q4_K_M quantization and still manages 19.06 tokens/second on the much larger 70B model. It's like driving a high-performance sports car on the open highway!

A little analogy: Larger LLMs (like the Llama 3 8B and 70B) are like luxury SUVs. The NVIDIA 4090 is like a powerful V8 engine, enabling this SUV to effortlessly conquer any terrain and handle demanding tasks.

Beyond Token Speeds: A Holistic View

While token speeds are a crucial metric, they don’t tell the whole story. Let's dive into a broader analysis of the M2 and NVIDIA 4090 to understand their strengths and weaknesses for running LLMs locally:

Apple M2: Efficiency Champion

Strengths:

- Energy efficiency: The M2 is known for its energy efficiency, making it ideal for users who prioritize low power consumption.

- Cost-effectiveness: For those who are budget-conscious, the M2 offers impressive performance at a more affordable price point compared to a dual 4090 setup.

- Ease of use: Apple's ecosystem provides a seamless experience for running applications, including LLM frameworks.

Weaknesses:

- Limited scalability: While the M2 is a powerful chip, its performance may be limited when working with very large LLMs, and its memory and GPU cannot be upgraded after purchase.

- Software compatibility: There might be a slightly smaller pool of software and libraries specifically optimized for Apple Silicon compared to the vast ecosystem built around NVIDIA GPUs.

NVIDIA 4090 (Dual): Powerhouse Performance

Strengths:

- Raw power: The dual 4090 setup provides a large amount of processing power, enough in our data to run a model as large as Llama 3 70B with Q4_K_M quantization at 19.06 tokens/second.

- Wide software support: The NVIDIA ecosystem boasts a vast array of software and libraries optimized for GPU-based computing, making it a popular choice for many LLM developers and researchers.

- Scalability: You can scale up your setup by adding more NVIDIA GPUs to unlock greater performance for demanding applications, provided your motherboard, power supply, and case can accommodate them.

Weaknesses:

- High cost: This setup incurs a significant financial investment, especially with two high-end GPUs.

- Power consumption: The dual 4090 setup consumes a substantial amount of power, which can be a concern for users who are budget-conscious or environmentally conscious.

- Setup complexity: Setting up and configuring a dual 4090 system is more complicated than the plug-and-play experience of the Apple M2.

Recommendations: Choosing the Right Weapon

So, who wins the battle of the LLMs? Unfortunately, there isn't a single "best" device. The ideal choice depends on your specific needs and priorities.

Here's a breakdown to help you decide:

Apple M2:

- Ideal for: Users who have smaller budgets, prioritize energy efficiency, and are primarily interested in running smaller LLMs (like Llama 2 7B).

- Great for: Those who value ease of use and a seamless experience within Apple's ecosystem.

NVIDIA 4090 (Dual):

- Ideal for: Those who prioritize raw power, work with large LLMs (Llama 3 8B, 70B), or require the maximum performance for their LLM applications.

- Great for: Users who are comfortable with more complex setups and are willing to invest in advanced hardware.

FAQ: Your Burning Questions Answered

Q: What is quantization, and how does it affect LLM performance?

A: Quantization is like a clever compression technique for LLMs. Imagine a big, detailed painting. To make it easier to store and transmit, you could reduce the number of colors used in the painting (the "bits" representing the data). Quantization does something similar to LLMs, reducing the precision (number of bits) used to represent the model's weights. This results in a smaller model, which loads faster and usually runs more quickly because fewer bytes have to be read from memory for each generated token. The trade-off is a small loss of precision in the weights, which can reduce output quality.
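As a rough illustration of the idea, here is a simplified sketch of 8-bit quantization for one small block of weights: the block is stored as small integers plus a single scale factor, and multiplying back recovers an approximation of the original values. This is similar in spirit to block-wise 8-bit formats such as Q8_0, but it is an illustrative assumption and not the exact implementation used by any inference library. The example weights are made up.

```python
from typing import List, Tuple


def quantize_block(weights: List[float]) -> Tuple[float, List[int]]:
    """Quantize one block of floats to signed 8-bit integers plus one scale."""
    max_abs = max(abs(w) for w in weights)
    # Map the largest magnitude in the block to 127; avoid dividing by zero.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quants = [round(w / scale) for w in weights]
    return scale, quants


def dequantize_block(scale: float, quants: List[int]) -> List[float]:
    """Recover approximate float weights from the integers and the scale."""
    return [q * scale for q in quants]


# Made-up example weights for illustration only.
weights = [0.12, -0.50, 0.33, 0.0, 0.91, -0.77, 0.05, -0.02]
scale, quants = quantize_block(weights)
restored = dequantize_block(scale, quants)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print(quants)
print(f"largest rounding error: {max_error:.4f}")
```

Each weight now needs one byte instead of two (F16), at the cost of a rounding error of at most half the scale. Lower-bit formats shrink the model further and accept larger errors.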

Q: Are there any other factors to consider besides token speeds?

A: Absolutely! Here are some other crucial considerations:

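- Memory capacity: LLMs can be memory-hungry, and a model's weights need to fit in memory to run at full speed. The dual 4090 setup offers 48GB of VRAM in total (24GB per card), which is substantial but not enough for the largest LLMs at full precision. The M2 uses unified memory shared between the CPU and GPU, and the base M2 supports up to 24GB, so the "100GB" in its label is most likely its 100 GB/s memory bandwidth rather than RAM capacity.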

- Software compatibility: It's crucial to ensure that your chosen LLM framework and libraries are compatible with your hardware. NVIDIA GPUs generally have wider support, but Apple's ecosystem is rapidly catching up.

- Thermal performance: GPU-intensive tasks like LLM inference can generate significant heat. The dual 4090 requires robust cooling solutions, while the M2's energy efficiency helps minimize heat generation.
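The memory point above lends itself to a quick back-of-the-envelope check. The sketch below estimates the size of a model's weights as parameters times bits per weight, then compares it with the dual 4090's 48GB of VRAM. The bits-per-weight values are assumptions: 16 for F16, 8.5 and 4.5 for Q8_0 and Q4_0 (which store a small per-block scale on top of the raw bits), and roughly 4.8 for Q4_K_M as an approximation. The estimate covers weights only and ignores the extra memory needed for the context cache and runtime overhead.

```python
# Approximate bits stored per weight for each format (assumed values;
# Q4_K_M in particular is a rough average, not an exact figure).
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5, "Q4_K_M": 4.8}


def weight_size_gb(params_billion: float, fmt: str) -> float:
    """Estimate the size of the model weights in GB (1 GB = 1e9 bytes)."""
    return params_billion * BITS_PER_WEIGHT[fmt] / 8


def fits(params_billion: float, fmt: str, memory_gb: float) -> bool:
    """Check whether the weights alone fit in the given amount of memory."""
    return weight_size_gb(params_billion, fmt) <= memory_gb


DUAL_4090_VRAM_GB = 48  # 2 x 24GB, from the article

for params, fmt in [(8, "F16"), (8, "Q4_K_M"), (70, "F16"), (70, "Q4_K_M")]:
    size = weight_size_gb(params, fmt)
    verdict = "fits" if fits(params, fmt, DUAL_4090_VRAM_GB) else "does not fit"
    print(f"{params}B {fmt}: ~{size:.0f} GB, {verdict} in {DUAL_4090_VRAM_GB} GB")
```

Under these assumptions, Llama 3 70B needs about 140GB at F16 but only about 42GB with Q4_K_M. That would be consistent with the benchmark table listing a dual 4090 result for 70B Q4_K_M and N/A for 70B F16, although the source data does not state the reason for the missing entry.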

Q: What about using cloud-based solutions?

A: Cloud services like Google Colab or AWS offer powerful compute resources, and you can utilize them to run and experiment with various LLMs. However, cloud-based solutions can be more expensive in the long run, might not offer the same level of privacy control, and can be slower for users with poor internet connectivity.

Keywords

Apple M2, NVIDIA 4090, LLM, Large Language Model, Llama 2, Llama 3, Token Speed, Quantization, F16, Q8_0, Q4_0, Q4_K_M, Generation, Processing, Inference, Performance, Benchmark, Local, Hardware, GPU, CPU, Memory, Cost, Efficiency, Power, Software, Compatibility.