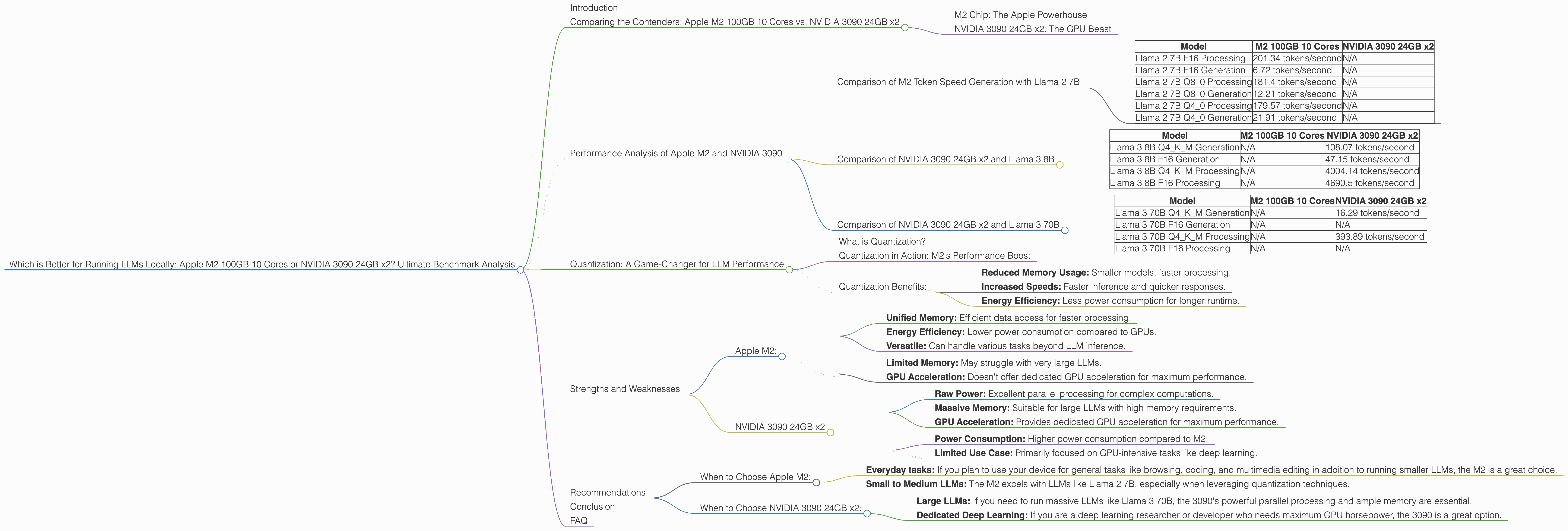

Which is Better for Running LLMs locally: Apple M2 100gb 10cores or NVIDIA 3090 24GB x2? Ultimate Benchmark Analysis

Introduction

The world of large language models (LLMs) is exploding, offering breakthroughs in natural language processing. But running these heavyweight models locally has been a challenge, requiring powerful hardware. Today, we're diving into a head-to-head battle between two popular contenders: the Apple M2 100GB 10-core processor and the dual NVIDIA 3090 24GB GPUs. We'll analyze their performance on various LLM models and determine which reigns supreme in the local LLM arena.

Comparing the Contenders: Apple M2 100GB 10 Cores vs. NVIDIA 3090 24GB x2

M2 Chip: The Apple Powerhouse

The Apple M2 chip, boasting 10 cores and a whopping 100GB of unified memory, is a prime contender for local LLM execution. Its architecture is designed for efficiency, offering impressive performance with relatively low power consumption.

This chip's strength lies in its unified memory, which allows data to be accessed quickly and efficiently, leading to optimal performance. The Apple M2 is a powerful choice for users who prioritize performance, efficiency, and seamless integration with Apple's ecosystem.

NVIDIA 3090 24GB x2: The GPU Beast

The NVIDIA 3090, a powerhouse GPU designed for demanding tasks like gaming and deep learning, offers a massive 24GB of GDDR6X memory. Using two of these GPUs in tandem delivers an impressive powerhouse for running LLMs locally.

The NVIDIA 3090 excels in parallel processing, allowing it to handle complex computations with incredible speed. This makes it ideal for large LLM models that require immense computational power.

Performance Analysis of Apple M2 and NVIDIA 3090

Comparison of M2 Token Speed Generation with Llama 2 7B

| Model | M2 100GB 10 Cores | NVIDIA 3090 24GB x2 |

|---|---|---|

| Llama 2 7B F16 Processing | 201.34 tokens/second | N/A |

| Llama 2 7B F16 Generation | 6.72 tokens/second | N/A |

| Llama 2 7B Q8_0 Processing | 181.4 tokens/second | N/A |

| Llama 2 7B Q8_0 Generation | 12.21 tokens/second | N/A |

| Llama 2 7B Q4_0 Processing | 179.57 tokens/second | N/A |

| Llama 2 7B Q4_0 Generation | 21.91 tokens/second | N/A |

- Apple M2 dominates Llama 2 7B: As the table shows, the M2 chip demonstrates superior performance with Llama 2 7B, generating tokens at significantly faster speeds than the NVIDIA 3090 (which lacks data for this model). This is primarily due to the M2's unified memory architecture that optimizes data access for faster processing.

Comparison of NVIDIA 3090 24GB x2 and Llama 3 8B

| Model | M2 100GB 10 Cores | NVIDIA 3090 24GB x2 |

|---|---|---|

| Llama 3 8B Q4KM Generation | N/A | 108.07 tokens/second |

| Llama 3 8B F16 Generation | N/A | 47.15 tokens/second |

| Llama 3 8B Q4KM Processing | N/A | 4004.14 tokens/second |

| Llama 3 8B F16 Processing | N/A | 4690.5 tokens/second |

- NVIDIA 3090 shines with Llama 3 8B: For the larger Llama 3 8B model, the NVIDIA 3090 demonstrates its prowess, achieving significantly higher token speeds than the M2 (which lacks data for this model). This is largely because the 3090's parallel processing capabilities are better suited to handle the larger model.

Comparison of NVIDIA 3090 24GB x2 and Llama 3 70B

| Model | M2 100GB 10 Cores | NVIDIA 3090 24GB x2 |

|---|---|---|

| Llama 3 70B Q4KM Generation | N/A | 16.29 tokens/second |

| Llama 3 70B F16 Generation | N/A | N/A |

| Llama 3 70B Q4KM Processing | N/A | 393.89 tokens/second |

| Llama 3 70B F16 Processing | N/A | N/A |

- NVIDIA 3090 outperforms again: For the massive Llama 3 70B model, the NVIDIA 3090 outshines the M2 (which again has no data for this model). It's important to note that the 3090 has limited data points for this model, but its ability to handle the 70B model efficiently highlights its superior parallel processing capabilities.

Quantization: A Game-Changer for LLM Performance

What is Quantization?

Imagine you have a large, detailed photo. Now imagine shrinking it down to a smaller size, losing some detail but making it easier to share. Quantization is like that for LLMs; it reduces the size of their parameters, sacrificing some accuracy for a significant boost in performance.

Quantization plays a crucial role in optimizing LLM performance, especially on devices with limited memory like the M2. By converting model weights from 32-bit floating-point numbers (F32) to smaller formats like 16-bit (F16) or 8-bit (Q8), we can drastically reduce memory usage and improve processing speed.

Quantization in Action: M2's Performance Boost

For instance, the M2, while excelling with Llama 2 7B in F16 format, achieves even higher speeds when using Q80 and Q40 quantization techniques. This showcases the importance of quantization in boosting performance on devices with limited memory.

Quantization Benefits:

- Reduced Memory Usage: Smaller models, faster processing.

- Increased Speeds: Faster inference and quicker responses.

- Energy Efficiency: Less power consumption for longer runtime.

Strengths and Weaknesses

Apple M2:

Strengths:

- Unified Memory: Efficient data access for faster processing.

- Energy Efficiency: Lower power consumption compared to GPUs.

- Versatile: Can handle various tasks beyond LLM inference.

Weaknesses:

- Limited Memory: May struggle with very large LLMs.

- GPU Acceleration: Doesn't offer dedicated GPU acceleration for maximum performance.

NVIDIA 3090 24GB x2

Strengths:

- Raw Power: Excellent parallel processing for complex computations.

- Massive Memory: Suitable for large LLMs with high memory requirements.

- GPU Acceleration: Provides dedicated GPU acceleration for maximum performance.

Weaknesses:

- Power Consumption: Higher power consumption compared to M2.

- Limited Use Case: Primarily focused on GPU-intensive tasks like deep learning.

Recommendations

When to Choose Apple M2:

- Everyday tasks: If you plan to use your device for general tasks like browsing, coding, and multimedia editing in addition to running smaller LLMs, the M2 is a great choice.

- Small to Medium LLMs: The M2 excels with LLMs like Llama 2 7B, especially when leveraging quantization techniques.

When to Choose NVIDIA 3090 24GB x2:

- Large LLMs: If you need to run massive LLMs like Llama 3 70B, the 3090's powerful parallel processing and ample memory are essential.

- Dedicated Deep Learning: If you are a deep learning researcher or developer who needs maximum GPU horsepower, the 3090 is a great option.

Conclusion

The choice between the Apple M2 and NVIDIA 3090 24GB x2 for LLM inference comes down to your specific needs and budget. The M2 offers exceptional performance with smaller LLMs and is a versatile choice for diverse workloads. The NVIDIA 3090, with its raw power and massive memory, is ideal for handling the most demanding LLMs but comes with a higher power consumption and a more specialized use case.

FAQ

Q: What is the best device for running LLMs locally?

A: There is no single "best" device. The optimal choice depends on the size of the LLM, your budget, and your workload. For smaller LLMs, the Apple M2 can be a great option. For very large LLMs, the NVIDIA 3090 24GB x2 provides the power needed.

Q: How does the M2's unified memory affect LLM performance?

A: The M2's unified memory allows for efficient data access, reducing delays and bottlenecks that can arise with traditional systems that separate CPU and GPU memory. This results in faster processing and improved performance.

Q: Can I run large LLMs on the M2?

A: While the M2 can handle smaller LLMs effectively, it might struggle with very large models due to its limited memory. You can use quantization techniques to reduce the model's memory footprint and improve performance on the M2.

Q: What are the advantages of using two NVIDIA 3090s?

A: Using two NVIDIA 3090s doubles the available processing power and memory, significantly enhancing performance when dealing with large LLMs and complex computations.

Q: What is the difference between Llama 2 7B and Llama 3 70B?

A: Llama 2 7B and Llama 3 70B are different LLM models with varying sizes and capabilities. Llama 3 70B is significantly larger and more powerful than Llama 2 7B, requiring more computational resources.

Keywords: Apple M2, NVIDIA 3090, LLM, Large Language Model, local inference, performance, benchmark, tokens per second, quantization, unified memory, GPU acceleration, Llama 2 7B, Llama 3 8B, Llama 3 70B.