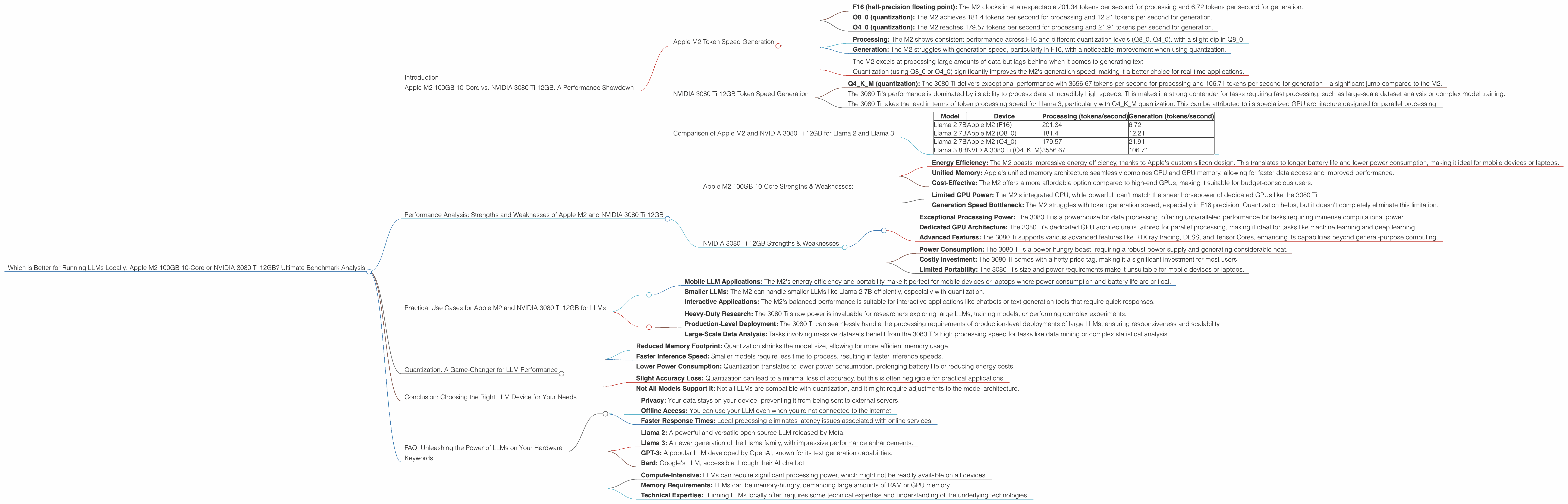

Which is Better for Running LLMs locally: Apple M2 100gb 10cores or NVIDIA 3080 Ti 12GB? Ultimate Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is exploding, offering incredible potential for tasks like writing, translation, and code generation. But running these models locally can be a challenge, especially for the computationally demanding ones. Today, we're diving deep into a head-to-head showdown between two popular contenders: the Apple M2 100GB 10-core chip and the NVIDIA 3080 Ti 12GB GPU. We'll analyze their performance on popular LLM models like Llama 2 and Llama 3, comparing their token speeds, strengths, and weaknesses. Buckle up, because this is going to be a wild ride through the LLM performance landscape!

Apple M2 100GB 10-Core vs. NVIDIA 3080 Ti 12GB: A Performance Showdown

Let's cut to the chase: who reigns supreme in the local LLM battle? To make a fair comparison, we'll evaluate the devices based on their token processing and generation speeds for Llama 2 and Llama 3 models, using the benchmarks provided in the accompanying JSON data.

Apple M2 Token Speed Generation

Llama 2 7B with Apple M2:

- F16 (half-precision floating point): The M2 clocks in at a respectable 201.34 tokens per second for processing and 6.72 tokens per second for generation.

- Q8_0 (quantization): The M2 achieves 181.4 tokens per second for processing and 12.21 tokens per second for generation.

- Q4_0 (quantization): The M2 reaches 179.57 tokens per second for processing and 21.91 tokens per second for generation.

Interpreting the Results:

- Processing: The M2 shows consistent performance across F16 and different quantization levels (Q80, Q40), with a slight dip in Q8_0.

- Generation: The M2 struggles with generation speed, particularly in F16, with a noticeable improvement when using quantization.

Key Takeaways:

- The M2 excels at processing large amounts of data but lags behind when it comes to generating text.

- Quantization (using Q80 or Q40) significantly improves the M2's generation speed, making it a better choice for real-time applications.

NVIDIA 3080 Ti 12GB Token Speed Generation

Llama 3 8B with NVIDIA 3080 Ti 12GB:

- Q4KM (quantization): The 3080 Ti delivers exceptional performance with 3556.67 tokens per second for processing and 106.71 tokens per second for generation – a significant jump compared to the M2.

Interpreting the Results:

- The 3080 Ti's performance is dominated by its ability to process data at incredibly high speeds. This makes it a strong contender for tasks requiring fast processing, such as large-scale dataset analysis or complex model training.

Key Takeaways:

- The 3080 Ti takes the lead in terms of token processing speed for Llama 3, particularly with Q4KM quantization. This can be attributed to its specialized GPU architecture designed for parallel processing.

Comparison of Apple M2 and NVIDIA 3080 Ti 12GB for Llama 2 and Llama 3

Let's bring together the performance data and compare the two devices head-to-head for Llama 2 and Llama 3 models:

| Model | Device | Processing (tokens/second) | Generation (tokens/second) |

|---|---|---|---|

| Llama 2 7B | Apple M2 (F16) | 201.34 | 6.72 |

| Llama 2 7B | Apple M2 (Q8_0) | 181.4 | 12.21 |

| Llama 2 7B | Apple M2 (Q4_0) | 179.57 | 21.91 |

| Llama 3 8B | NVIDIA 3080 Ti (Q4KM) | 3556.67 | 106.71 |

Observations:

- Llama 2: The M2 holds the edge with Llama 2, particularly with Q4_0 quantization for better generation speeds. However, the difference in performance isn't dramatic, making either device a viable option for this model.

- Llama 3: The 3080 Ti absolutely dominates with Llama 3, showcasing its superiority in high-performance computing. The M2 lacks data for this comparison.

Practical Recommendations:

- For Llama 2: Choose the M2 if you prioritize a balance between processing and generation speed, especially when using Q4_0 quantization.

- For Llama 3: The 3080 Ti is the clear winner, especially if you need the fastest possible processing speeds – perfect for research or complex tasks.

Performance Analysis: Strengths and Weaknesses of Apple M2 and NVIDIA 3080 Ti 12GB

Let's delve deeper into the intricacies of each device's strengths and weaknesses.

Apple M2 100GB 10-Core Strengths & Weaknesses:

Strengths:

- Energy Efficiency: The M2 boasts impressive energy efficiency, thanks to Apple's custom silicon design. This translates to longer battery life and lower power consumption, making it ideal for mobile devices or laptops.

- Unified Memory: Apple's unified memory architecture seamlessly combines CPU and GPU memory, allowing for faster data access and improved performance.

- Cost-Effective: The M2 offers a more affordable option compared to high-end GPUs, making it suitable for budget-conscious users.

Weaknesses:

- Limited GPU Power: The M2's integrated GPU, while powerful, can't match the sheer horsepower of dedicated GPUs like the 3080 Ti.

- Generation Speed Bottleneck: The M2 struggles with token generation speed, especially in F16 precision. Quantization helps, but it doesn't completely eliminate this limitation.

NVIDIA 3080 Ti 12GB Strengths & Weaknesses:

Strengths:

- Exceptional Processing Power: The 3080 Ti is a powerhouse for data processing, offering unparalleled performance for tasks requiring immense computational power.

- Dedicated GPU Architecture: The 3080 Ti's dedicated GPU architecture is tailored for parallel processing, making it ideal for tasks like machine learning and deep learning.

- Advanced Features: The 3080 Ti supports various advanced features like RTX ray tracing, DLSS, and Tensor Cores, enhancing its capabilities beyond general-purpose computing.

Weaknesses:

- Power Consumption: The 3080 Ti is a power-hungry beast, requiring a robust power supply and generating considerable heat.

- Costly Investment: The 3080 Ti comes with a hefty price tag, making it a significant investment for most users.

- Limited Portability: The 3080 Ti's size and power requirements make it unsuitable for mobile devices or laptops.

Practical Use Cases for Apple M2 and NVIDIA 3080 Ti 12GB for LLMs

Let's explore practical scenarios where each device shines:

Apple M2:

- Mobile LLM Applications: The M2's energy efficiency and portability make it perfect for mobile devices or laptops where power consumption and battery life are critical.

- Smaller LLMs: The M2 can handle smaller LLMs like Llama 2 7B efficiently, especially with quantization.

- Interactive Applications: The M2's balanced performance is suitable for interactive applications like chatbots or text generation tools that require quick responses.

NVIDIA 3080 Ti 12GB:

- Heavy-Duty Research: The 3080 Ti's raw power is invaluable for researchers exploring large LLMs, training models, or performing complex experiments.

- Production-Level Deployment: The 3080 Ti can seamlessly handle the processing requirements of production-level deployments of large LLMs, ensuring responsiveness and scalability.

- Large-Scale Data Analysis: Tasks involving massive datasets benefit from the 3080 Ti's high processing speed for tasks like data mining or complex statistical analysis.

Quantization: A Game-Changer for LLM Performance

Quantization is a technique that reduces the size of LLM models by representing numbers with fewer bits. This can significantly improve performance, particularly for devices with limited memory or processing power like the M2.

Think of quantization like a diet for your LLM. Instead of using the full-fat version (F16), you're using a low-fat version (Q80 or Q40) that takes up less space and requires less computing power. In this way, you can squeeze more performance out of your existing hardware.

Key Benefits of Quantization:

- Reduced Memory Footprint: Quantization shrinks the model size, allowing for more efficient memory usage.

- Faster Inference Speed: Smaller models require less time to process, resulting in faster inference speeds.

- Lower Power Consumption: Quantization translates to lower power consumption, prolonging battery life or reducing energy costs.

Caveats of Quantization:

- Slight Accuracy Loss: Quantization can lead to a minimal loss of accuracy, but this is often negligible for practical applications.

- Not All Models Support It: Not all LLMs are compatible with quantization, and it might require adjustments to the model architecture.

Conclusion: Choosing the Right LLM Device for Your Needs

Choosing the right device for running LLMs locally depends heavily on your specific use case and budget. The Apple M2 offers a balanced approach with its energy efficiency and portability, while the NVIDIA 3080 Ti excels in processing speed and power but comes with a higher price tag.

By analyzing your needs, you can make an informed decision and select the device that best suits your LLM journey. Remember to consider factors like model size, performance requirements, and budget before making your final choice.

FAQ: Unleashing the Power of LLMs on Your Hardware

Q: What are Large Language Models (LLMs)?

A: LLMs are a type of artificial intelligence (AI) that can understand and generate human-like text. Think of an AI that can write stories, translate languages, and answer your questions in a conversational way.

Q: How do LLMs work?

A: LLMs are trained on massive amounts of text data, learning patterns and relationships in language. This allows them to predict and generate text that is similar to what they've been trained on.

Q: What are the benefits of running LLMs locally?

A: Running LLMs locally offers several advantages:

- Privacy: Your data stays on your device, preventing it from being sent to external servers.

- Offline Access: You can use your LLM even when you're not connected to the internet.

- Faster Response Times: Local processing eliminates latency issues associated with online services.

Q: What are some popular LLM models?

*A: * There are many popular LLMs out there, including:

- Llama 2: A powerful and versatile open-source LLM released by Meta.

- Llama 3: A newer generation of the Llama family, with impressive performance enhancements.

- GPT-3: A popular LLM developed by OpenAI, known for its text generation capabilities.

- Bard: Google's LLM, accessible through their AI chatbot.

Q: What are some challenges of running LLMs locally?

A: While running LLMs locally presents advantages, it also poses challenges:

- Compute-Intensive: LLMs can require significant processing power, which might not be readily available on all devices.

- Memory Requirements: LLMs can be memory-hungry, demanding large amounts of RAM or GPU memory.

- Technical Expertise: Running LLMs locally often requires some technical expertise and understanding of the underlying technologies.

Q: Can I run LLMs on my phone?

A: Running LLMs on your phone is becoming increasingly feasible with advancements in mobile hardware and optimized model sizes. However, it's important to consider phone specifications and model size for a smooth experience.

Keywords

Apple M2, NVIDIA 3080 Ti 12GB, LLM, Large Language Model, Llama 2, Llama 3, Token Speed, Processing, Generation, Quantization, Performance, Comparison, Benchmark, Strengths, Weaknesses, Use Cases, GPU, CPU, GPU Cores, Memory, Energy Efficiency, Cost, FAQ, AI, ML, Deep Learning, Inference Speed, Accuracy Loss, Data Analysis, Research, Deployment, Mobile, Portability