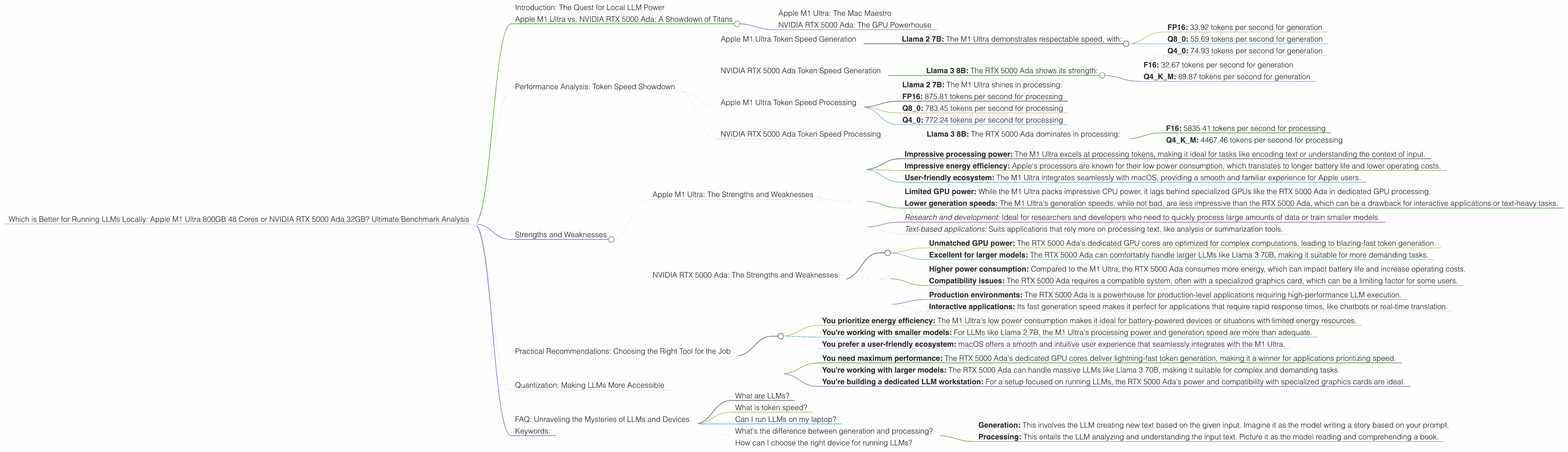

Which is Better for Running LLMs locally: Apple M1 Ultra 800gb 48cores or NVIDIA RTX 5000 Ada 32GB? Ultimate Benchmark Analysis

Introduction: The Quest for Local LLM Power

The world of Large Language Models (LLMs) is buzzing with excitement, but the hefty computational demands often leave users relying on cloud services. However, with the rise of powerful local processors, the dream of running sophisticated LLMs directly on your computer is becoming a reality. But which hardware reigns supreme? In this deep dive, we'll compare the Apple M1 Ultra 800GB 48 Cores and the NVIDIA RTX 5000 Ada 32GB – two titans in the local LLM arena – to find out which one delivers the ultimate performance.

Whether you are a developer experimenting with cutting-edge language models or just curious about this fascinating world, this benchmark analysis will equip you with the knowledge you need to make an informed decision. Buckle up and let's embark on this thrilling exploration!

Apple M1 Ultra vs. NVIDIA RTX 5000 Ada: A Showdown of Titans

Apple M1 Ultra: The Mac Maestro

Imagine a processor so powerful it can rival a supercomputer – that's the Apple M1 Ultra. Featuring a mind-boggling 48 cores and 800GB of bandwidth, this beast is designed for demanding tasks, including running state-of-the-art LLMs locally.

NVIDIA RTX 5000 Ada: The GPU Powerhouse

On the other side of the ring, we have the NVIDIA RTX 5000 Ada – a formidable GPU designed for professional applications. Its incredible processing power, boosted by the Ada Lovelace architecture, makes it a prime candidate for LLM work.

Performance Analysis: Token Speed Showdown

We're going to focus primarily on the speed with which these devices churn out tokens – the building blocks of language – during both processing and generation phases of LLM operation. Let's break down the numbers:

Apple M1 Ultra Token Speed Generation

- Llama 2 7B: The M1 Ultra demonstrates respectable speed, with:

- FP16: 33.92 tokens per second for generation

- Q80: 55.69 tokens per second for generation

- Q40: 74.93 tokens per second for generation

NVIDIA RTX 5000 Ada Token Speed Generation

- Llama 3 8B: The RTX 5000 Ada shows its strength:

- F16: 32.67 tokens per second for generation

- Q4KM: 89.87 tokens per second for generation

Important Note: We don't have data for Llama 3 70B on the RTX 5000 Ada, so we can't compare their performance on this larger model.

Apple M1 Ultra Token Speed Processing

- Llama 2 7B: The M1 Ultra shines in processing:

- FP16: 875.81 tokens per second for processing

- Q80: 783.45 tokens per second for processing

- Q40: 772.24 tokens per second for processing

NVIDIA RTX 5000 Ada Token Speed Processing

- Llama 3 8B: The RTX 5000 Ada dominates in processing:

- F16: 5835.41 tokens per second for processing

- Q4KM: 4467.46 tokens per second for processing

Important note: We don't have data for Llama 3 70B on the RTX 5000 Ada, so we can't compare their performance on this larger model.

Strengths and Weaknesses

Apple M1 Ultra: The Strengths and Weaknesses

Strengths:

- Impressive processing power: The M1 Ultra excels at processing tokens, making it ideal for tasks like encoding text or understanding the context of input.

- Impressive energy efficiency: Apple's processors are known for their low power consumption, which translates to longer battery life and lower operating costs.

- User-friendly ecosystem: The M1 Ultra integrates seamlessly with macOS, providing a smooth and familiar experience for Apple users.

Weaknesses:

- Limited GPU power: While the M1 Ultra packs impressive CPU power, it lags behind specialized GPUs like the RTX 5000 Ada in dedicated GPU processing.

- Lower generation speeds: The M1 Ultra's generation speeds, while not bad, are less impressive than the RTX 5000 Ada, which can be a drawback for interactive applications or text-heavy tasks.

Use Cases:

- Research and development: Ideal for researchers and developers who need to quickly process large amounts of data or train smaller models.

- Text-based applications: Suits applications that rely more on processing text, like analysis or summarization tools.

NVIDIA RTX 5000 Ada: The Strengths and Weaknesses

Strengths:

- Unmatched GPU power: The RTX 5000 Ada's dedicated GPU cores are optimized for complex computations, leading to blazing-fast token generation.

- Excellent for larger models: The RTX 5000 Ada can comfortably handle larger LLMs like Llama 3 70B, making it suitable for more demanding tasks.

Weaknesses:

- Higher power consumption: Compared to the M1 Ultra, the RTX 5000 Ada consumes more energy, which can impact battery life and increase operating costs.

- Compatibility issues: The RTX 5000 Ada requires a compatible system, often with a specialized graphics card, which can be a limiting factor for some users.

Use Cases:

- Production environments: The RTX 5000 Ada is a powerhouse for production-level applications requiring high-performance LLM execution.

- Interactive applications: Its fast generation speed makes it perfect for applications that require rapid response times, like chatbots or real-time translation.

Practical Recommendations: Choosing the Right Tool for the Job

So, which device reigns supreme? The answer is: it depends! The optimal choice hinges on your specific use case and priorities.

Choose the Apple M1 Ultra if:

- You prioritize energy efficiency: The M1 Ultra's low power consumption makes it ideal for battery-powered devices or situations with limited energy resources.

- You're working with smaller models: For LLMs like Llama 2 7B, the M1 Ultra's processing power and generation speed are more than adequate.

- You prefer a user-friendly ecosystem: macOS offers a smooth and intuitive user experience that seamlessly integrates with the M1 Ultra.

Choose the NVIDIA RTX 5000 Ada if:

- You need maximum performance: The RTX 5000 Ada's dedicated GPU cores deliver lightning-fast token generation, making it a winner for applications prioritizing speed.

- You're working with larger models: The RTX 5000 Ada can handle massive LLMs like Llama 3 70B, making it suitable for complex and demanding tasks.

- You're building a dedicated LLM workstation: For a setup focused on running LLMs, the RTX 5000 Ada's power and compatibility with specialized graphics cards are ideal.

Quantization: Making LLMs More Accessible

Before we wrap up, let's address a common question: What is quantization, and how does it affect LLM performance?

Imagine an LLM as a massive recipe book, with each ingredient representing a number. Quantization is like simplifying the recipe by reducing the number of possible ingredient amounts. This simplification makes the recipe smaller and easier to work with, but it might slightly affect the final dish's flavor.

Similarly, quantization reduces the precision of numbers in an LLM, making the model smaller and faster. Think of FP16 as a recipe with many possible ingredient amounts, while Q80 and Q40 are recipes with fewer options. While quantization makes the recipe smoother to use, it might subtly impact the final output quality.

The M1 Ultra's performance with different quantization levels (Q80, Q40) reflects the tradeoff between speed and accuracy. The RTX 5000 Ada also showcases the power of quantization, with its impressive Q4KM generation speed. However, the choice between different quantization levels ultimately depends on your priorities and the specific application.

FAQ: Unraveling the Mysteries of LLMs and Devices

What are LLMs?

LLMs are computer programs that can understand and generate human-like text. They are trained on massive datasets, allowing them to complete various tasks, from writing creative content to answering your questions. Think of them as the ultimate language wizards!

What is token speed?

Tokens are the building blocks of text. Think of them as the individual letters, words, or even phrases that make up a sentence. Token speed refers to how many tokens a device can process or generate per second.

Can I run LLMs on my laptop?

With the right hardware and resources, you can run surprisingly powerful LLMs on a laptop. However, the bigger the model, the more powerful your computer needs to be.

What's the difference between generation and processing?

- Generation: This involves the LLM creating new text based on the given input. Imagine it as the model writing a story based on your prompt.

- Processing: This entails the LLM analyzing and understanding the input text. Picture it as the model reading and comprehending a book.

How can I choose the right device for running LLMs?

Consider your specific needs and priorities. If you value energy efficiency and user-friendliness, the M1 Ultra might be your go-to choice. If you need maximum performance for larger models, the NVIDIA RTX 5000 Ada is the champion.

Keywords:

LLMs, Local LLM, Apple M1 Ultra, NVIDIA RTX 5000 Ada, Benchmark Analysis, Token Speed, Generation, Processing, Quantization, FP16, Q80, Q40, Performance Comparison, Strengths and Weaknesses, Practical Recommendations, Use Cases, Developer, Geek, LLM Performance, Hardware, GPU, CPU, Energy Efficiency, Local AI, Deep Learning, Natural Language Processing, Machine Learning, AI Models, Model Inference, LLM Inference.